
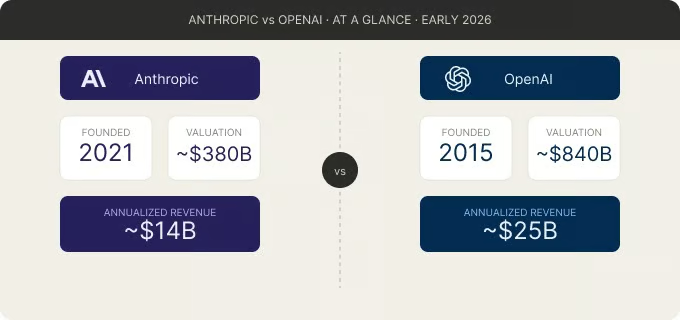

If you work with large language models (LLMs), you have almost certainly used a product from OpenAI, Anthropic, or both. OpenAI popularized the category with ChatGPT, which reportedly has hundreds of millions of weekly active users. Anthropic, founded in 2021 by former OpenAI researchers, has grown into the second-largest AI company by revenue, with analysts estimating its annual revenue in the tens of billions.

But this is more than a model comparison. Anthropic and OpenAI represent two different approaches to building AI. OpenAI focuses on broad consumer reach and multimodal integration. Anthropic focuses on enterprise trust, safety research, and deep-context processing.

What is OpenAI?

OpenAI is the company behind ChatGPT, the GPT model family, DALL-E, and Sora. It was founded in San Francisco in 2015, originally as a non-profit. In October 2025 it completed a restructuring into a Public Benefit Corporation (PBC), dissolving the old capped-profit model. The non-profit arm was renamed the OpenAI Foundation, which retains the power to appoint the board and holds a stake of roughly 26%.

OpenAI's partnership with Microsoft is central to how the company operates. Azure is the exclusive cloud provider for OpenAI's API calls, and OpenAI has committed to $250 billion in Azure services over the term of the agreement. The partnership was publicly confirmed in February 2026, as OpenAI raised $110 billion in its most recent funding round, reportedly one of the largest private rounds ever.

On the product side, OpenAI follows a strategy of rapid iteration and aggressive model deprecation. In February 2026 the company removed several legacy models from ChatGPT, including GPT-4o and GPT-4.1. In March 2026 the interim GPT-5.1 series was retired as well. This keeps the product line focused, but it requires developers to track migration timelines closely.

The GPT-5 family is OpenAI's current generation. It launched in August 2025 with a "router" architecture that automatically switches between fast (Instant) and deep (Thinking) reasoning modes depending on the complexity of the query. The key models to know are:

- GPT-5.4 (released in March 2026, the newest flagship, with a context window of 1.05 million tokens),

- GPT-5.3 Codex (the agentic coding specialist), and

- GPT-5.2 (the previous flagship, now being phased out).

Also released in March 2026 are GPT-5.4 mini and GPT-5.4 nano, smaller variants built for speed and lower cost. GPT-5.4 mini retains most of GPT-5.4's coding, tool-use, and multimodal strength and runs more than twice as fast. GPT-5.4 nano targets simpler, high-volume tasks.

OpenAI has also released open-weight models (gpt-oss-120b and gpt-oss-20b under the Apache 2.0 license), a notable change from its historically closed approach.

What is Anthropic?

Anthropic is the company behind the Claude model family. It was founded in 2021 by Dario Amodei (CEO), Daniela Amodei (President), and about ten other former OpenAI researchers who left over disagreements about safety and commercialization priorities.

The company's technical identity centers on Constitutional AI, a training approach in which the model critiques its own output against a written set of ethical principles rather than relying solely on human feedback. In January 2026 Anthropic published a revision of Claude's constitution that shifts from rule-based to reasoning-based alignment: instead of only telling the model which rules to follow, the constitution explains why the rules exist, with the aim of enabling more nuanced judgment in novel situations.
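To make the self-critique idea concrete, here is a toy loop. It is only a sketch of the critique-and-revise pattern described above, not Anthropic's training pipeline: the `generate`, `critique`, and `revise` callables and the two example principles are hypothetical stand-ins for model calls.

```python
from typing import Callable, List

# Hypothetical principles for illustration; not quoted from Claude's constitution.
PRINCIPLES: List[str] = [
    "Do not reveal personal data.",
    "Explain refusals instead of refusing silently.",
]

def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    critique: Callable[[str, str], bool],
    revise: Callable[[str, str], str],
    principles: List[str] = PRINCIPLES,
) -> str:
    """Draft a response, then check it against each principle and revise on violation."""
    response = generate(prompt)
    for principle in principles:
        # critique() returns True when the current draft violates the principle
        if critique(response, principle):
            response = revise(response, principle)
    return response

# Stub "model" functions so the sketch runs without any API access.
def fake_generate(prompt: str) -> str:
    return "Sure, her phone number is 555-0100."

def fake_critique(response: str, principle: str) -> bool:
    return "personal data" in principle and "555-" in response

def fake_revise(response: str, principle: str) -> str:
    return "I can't share personal contact details, but I can point you to public channels."

final = critique_and_revise("What is Jane's number?", fake_generate, fake_critique, fake_revise)
print(final)
```

In a real setup all three callables would be calls to the model itself, and the revised outputs would feed back into training rather than being returned directly to a user.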

Anthropic's primary cloud and training partner is Amazon Web Services (AWS), with Amazon's total investment at $8 billion. Claude models are also available on Google Cloud Vertex AI (Google owns about 14% of Anthropic) and, since March 2026, on Microsoft Foundry.

The Claude 4 family is the current generation. The naming has three tiers: Opus (flagship, most capable), Sonnet (balanced), and Haiku (fast and cost-efficient). The latest models are:

- Claude Opus 4.6 (released in February 2026, the top reasoning model, with a 1-million-token context window and 128K maximum output),

- Claude Sonnet 4.6 (also February 2026, the default for free and paid users, with a 1M context window), and

- Claude Haiku 4.5 (released in October 2025, the budget option).

Beyond the core models, Anthropic has released Claude Code (a terminal-based coding agent), desktop-style agents for non-technical knowledge work, and agent SDKs for Python and TypeScript for building custom agentic workflows.

Anthropic vs. OpenAI: modelmogelijkheden vergeleken

Beide platforms zijn capabel over een breed scala aan taken, maar hun sterke punten verschillen. Er is geen enkel beste model voor alles. Laten we het per categorie uitsplitsen.

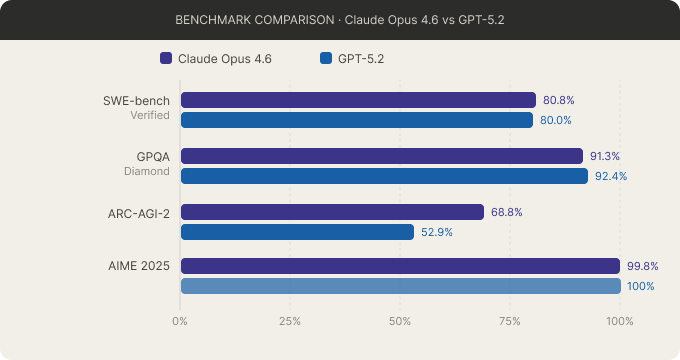

Benchmarkscores op vier belangrijke tests. Afbeelding door de auteur.

Reasoning and problem solving

Both companies now offer hybrid reasoning, in which a single model can switch between fast answers and extended chain-of-thought reasoning. Anthropic introduced this first with Claude 3.7 Sonnet in February 2025. OpenAI followed with GPT-5's unified router in August 2025.

On benchmarks, the picture is mixed:

- AIME 2025 (competition math): GPT-5.2 leads, including a perfect score in some test configurations.

- GPQA Diamond (graduate-level science): GPT-5.2 stays narrowly ahead of Claude.

- ARC-AGI-2 (novel visual logic that is hard to memorize from training data): independent analyses place Claude Opus 4.6 at around 68–69% versus GPT-5.2 at around 52–54%.

- Humanity's Last Exam (expert-level synthesis): Claude leads based on available comparisons.

Based on benchmark results, OpenAI appears to perform better on deterministic logic and factual retrieval, while Anthropic scores better on contextual synthesis and qualitative analysis. Neither approach is universally better. It depends on what you want the model to do.

For a closer look at how GPT-5.4 and Claude Opus 4.6 compare on these benchmarks specifically, see our GPT-5.4 vs. Claude Opus 4.6 explainer.

Coding and developer use

Both companies have moved well beyond simple code generation and now offer agentic coding tools, but they take very different approaches.

Claude Code runs locally, indexes your codebase, and asks for approval before modifying files. Claude Opus 4.5 scored 80.9% on SWE-bench Verified, putting it ahead of GPT-5 series models in most independent analyses.

OpenAI Codex runs in the cloud, clones your repo into a sandbox, and works autonomously. It scores 77.3% on Terminal-Bench 2.0 compared with 65.4% for Claude, and according to OpenAI it uses significantly fewer tokens for comparable tasks.

In practice, Claude Code is often preferred for complex refactors and production-ready output. Codex tends to be the favorite for rapid prototyping and delegated background tasks. GPT-5.4 mini and nano extend this further by acting as subagents that handle narrower tasks while a larger model handles planning and review.

For fine-tuning, OpenAI supports multiple methods (SFT, DPO, RFT) for the GPT-4.1 family. Fine-tuning at Anthropic is limited to Claude 3 Haiku on Bedrock, with nothing available for Claude 4.x models as of early 2026.

Handling long context

Both platforms now support context windows on the order of 1 million tokens, which works out to roughly 750,000 words or 150,000 lines of code.
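Those round numbers imply about 0.75 words per token and about 6.7 tokens per line of code. The sketch below turns them into a quick sizing check. The ratios are derived only from the figures above, real tokenizers vary by language and content, and the function names are my own.

```python
# Ratios implied by the figures above (1M tokens ~ 750,000 words ~ 150,000 lines of code).
# They are rough averages, not properties of any specific tokenizer.
WORDS_PER_TOKEN = 750_000 / 1_000_000        # 0.75
TOKENS_PER_CODE_LINE = 1_000_000 / 150_000   # about 6.67

def estimate_tokens_from_words(word_count: int) -> int:
    """Rough token estimate for prose."""
    return round(word_count / WORDS_PER_TOKEN)

def estimate_tokens_from_code_lines(line_count: int) -> int:
    """Rough token estimate for source code."""
    return round(line_count * TOKENS_PER_CODE_LINE)

def fits_in_context(tokens: int, context_window: int = 1_000_000) -> bool:
    """Check whether an estimated token count fits in a given context window."""
    return tokens <= context_window

manuscript_tokens = estimate_tokens_from_words(90_000)      # a 90,000-word manuscript
repo_tokens = estimate_tokens_from_code_lines(200_000)      # a 200,000-line codebase
print(manuscript_tokens, fits_in_context(manuscript_tokens))
print(repo_tokens, fits_in_context(repo_tokens))
```

Under these assumptions the manuscript comes to about 120,000 tokens and fits easily, while the 200,000-line codebase lands around 1.33 million tokens and would need to be split or filtered.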

Claude Opus 4.6 and Sonnet 4.6 offer 1M-token context windows with flat pricing, so there is no surcharge for using the full window. GPT-5.4 supports a 1.05M-token context window but applies a surcharge (2x input, 1.5x output) to prompts above 272K tokens. The older GPT-5.2 has a smaller 400K-token window.

On long-context quality, Claude Opus 4.6 scored 78.3% on MRCR v2 (a multi-needle retrieval benchmark at 1M tokens), the top result among the models tested on that benchmark. For workflows involving large documents, full codebases, or multi-document legal and research reviews, both context window size and retrieval accuracy matter, and Anthropic currently has the edge here.

What one million tokens looks like. Image by author.

Multimodal capabilities

This is where the difference between the two platforms is clearest. OpenAI supports text, images, audio, and video natively across its model family. The ecosystem includes image generation (GPT Image 1), video generation (Sora 2), a realtime voice API, and an advanced voice mode for conversational audio. GPT-5.4 also offers computer use through a preview feature.

Claude processes text and image inputs and handles document analysis (PDFs, charts, tables) quite well. It also supports computer use through a visual approach (take a screenshot, then act) in both Claude Opus 4.6 and Sonnet 4.6. Claude does not generate images, audio, or video.

One thing worth noting: since March 12, 2026, Claude can create interactive charts, diagrams, and visualizations directly in the chat. These are not static images but dynamic visuals that update as the conversation evolves. The feature is available on all plans, including the free one. This is different from image generation and does not close that gap with OpenAI, but it is a meaningful addition for data and analysis tasks.

If your work requires image generation, audio, or video, OpenAI is still the only option here. If you mainly need text, documents, and data analysis, Claude now covers more ground than it used to.

Anthropic vs. OpenAI: veiligheids- en alignmentfilosofie

Veiligheid is een van de duidelijkste verschillen tussen deze twee bedrijven, en het is de moeite waard te kijken naar wat elk bedrijf daadwerkelijk doet in plaats van alleen wat ze zeggen.

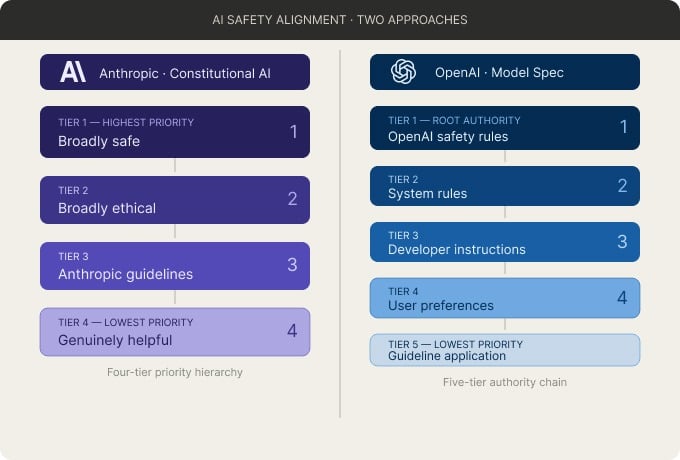

Twee benaderingen van AI-veiligheidsalignment. Afbeelding door de auteur.

How each company approaches safety

Anthropic's core method is Constitutional AI with Reinforcement Learning from AI Feedback (RLAIF): the model critiques its own output against a written set of principles drawn from sources such as the UN Universal Declaration of Human Rights and academic safety research. The January 2026 revision introduced a hierarchy of four priorities (broadly safe, broadly ethical, compliant with Anthropic's guidelines, and then genuinely helpful) and formally acknowledged the possibility of AI consciousness, which was notable at the time.

OpenAI uses Reinforcement Learning from Human Feedback (RLHF) alongside a governance document called the Model Spec, last updated in December 2025. This document lays out a chain of authority from OpenAI's base rules down to user preferences. GPT-5 introduced "safe completions," which aim to give high-level answers to sensitive questions instead of refusing outright, and the model was trained to be less sycophantic and to push back more often.

The clearest difference between the two is transparency. OpenAI hides the model's internal chain of thought from users, arguing that exposing it could be exploited. Anthropic publishes interpretability research and attribution graphs that map internal reasoning. In practical terms, Anthropic's Constitutional Classifiers withstood more than 3,000 hours of red teaming without a universal jailbreak. Independent tests put the prompt injection success rate against Claude Opus 4.5 at about 4.7%, lower than what was reported for other platforms.


Safety changes to know about

Both companies revised their safety commitments in 2025 and 2026. Critics argue that these revisions weaken earlier promises. Anthropic's Responsible Scaling Policy v3.0 (February 2026) replaced hard pauses with publicly assessed goals, moving away from what earlier versions treated as an unconditional deployment safeguard. Analyses of OpenAI's Preparedness Framework v2.0 (April 2025) argue that some risk categories, such as mass manipulation and disinformation, receive less explicit pre-deployment focus than before.

These shifts drew criticism from the AI safety community. Both companies cite competitive pressure as a factor. Safety positioning is still evolving at both, so it is worth checking their latest policies rather than relying on reputation alone.

API ecosystem and developer experience

Anthropic offers a focused API surface through the Messages API. SDKs are available in seven languages (Python, TypeScript, Java, Go, Ruby, C#, and PHP). The API supports tool use and function calling, structured JSON output, vision inputs, extended thinking with a configurable token budget, and streaming. Anthropic also introduced the Model Context Protocol (MCP), an open standard for connecting AI agents to external tools and data sources, which a growing number of tools and platforms are adopting.
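As an illustration of what a Messages API call with a thinking budget looks like, the sketch below assembles the request without sending it. The helper name, the default model ID `claude-sonnet-4-6`, and the token values are assumptions for illustration; check Anthropic's documentation for current model IDs and for the thinking options your model supports.

```python
import json
from typing import Any, Dict

ANTHROPIC_MESSAGES_URL = "https://api.anthropic.com/v1/messages"

def build_messages_request(
    api_key: str,
    user_text: str,
    model: str = "claude-sonnet-4-6",  # assumed model ID; verify against the model list
    max_tokens: int = 4096,
    thinking_budget: int = 2048,
) -> Dict[str, Any]:
    """Assemble URL, headers, and JSON body for a Messages API call with extended thinking."""
    # The thinking budget counts toward max_tokens, so it has to be smaller.
    if thinking_budget >= max_tokens:
        raise ValueError("thinking_budget must be smaller than max_tokens")
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": thinking_budget},
        "messages": [{"role": "user", "content": user_text}],
    }
    return {"url": ANTHROPIC_MESSAGES_URL, "headers": headers, "body": body}

request = build_messages_request("YOUR_API_KEY", "Summarize this contract in five bullets.")
print(json.dumps(request["body"], indent=2))
```

The resulting dictionary can be passed to any HTTP client, or you can skip the manual step entirely and use one of the official SDKs, which wrap the same endpoint.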

OpenAI's API is broader. It includes the Responses API (which replaces the Assistants API), the Chat Completions API, a Realtime API for audio and voice, and SDKs for Python, TypeScript, Go, and Java. Beyond the standard features (function calling, structured outputs, streaming, batch mode), OpenAI also offers an image generation API, text-to-speech, transcription, and embeddings.
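The practical difference between the Responses API and the Chat Completions API shows up in the request body. The sketch below builds a minimal body for each without sending anything. The helper names and the model ID `gpt-5.4` are assumptions for illustration; both endpoints expect an `Authorization: Bearer` header with your API key.

```python
from typing import Any, Dict

RESPONSES_URL = "https://api.openai.com/v1/responses"
CHAT_COMPLETIONS_URL = "https://api.openai.com/v1/chat/completions"

def build_responses_body(user_text: str, model: str = "gpt-5.4") -> Dict[str, Any]:
    """Minimal Responses API body: the prompt goes in a single `input` field."""
    return {"model": model, "input": user_text}

def build_chat_completions_body(user_text: str, model: str = "gpt-5.4") -> Dict[str, Any]:
    """Minimal Chat Completions body: the prompt is wrapped in a list of role-tagged messages."""
    return {"model": model, "messages": [{"role": "user", "content": user_text}]}

prompt = "List three risks of model deprecation."
responses_body = build_responses_body(prompt)
chat_body = build_chat_completions_body(prompt)
print(responses_body)
print(chat_body)
```

Keeping the body construction in small functions like these makes it easier to migrate between endpoints when a model or API is deprecated.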

| Feature | Claude | OpenAI |
| --- | --- | --- |
| Tool and function calling | Yes | Yes |
| Structured JSON output | Yes | Yes |
| Streaming | Yes | Yes |
| Batch API (50% discount) | Yes | Yes |
| Prompt caching | Yes | Yes |
| Built-in web search | Yes | Yes |
| Code execution | Yes | Yes |
| Computer use | Yes (Opus 4.6, Sonnet 4.6) | Yes (preview) |
| Fine-tuning | Limited (Claude 3 Haiku on Bedrock only) | Yes (SFT, DPO, RFT for the GPT-4.1 family) |
| Image generation | No | Yes |
| Audio input and output | No | Yes (Realtime API, TTS) |
| Agent SDK | Yes (Python, TypeScript) | Yes (open source, Python, TypeScript) |

As I mentioned in the coding section, the fine-tuning gap is significant. OpenAI supports multiple fine-tuning methods across several model tiers through its direct API and Azure. Anthropic limits fine-tuning to a single model on a single cloud platform.

Enterprise adoption and partnerships

API specifications are one thing. How companies actually use these platforms is another.

OpenAI and Microsoft

OpenAI has the larger enterprise footprint, with roughly 78% production penetration and about 56% of total enterprise LLM spending, according to industry research.

The Microsoft integration runs deep. Azure OpenAI Service provides direct access to all GPT-5.x models. Microsoft 365 Copilot (powered by OpenAI models) has been adopted across the vast majority of paid enterprise licenses in surveyed organizations, and GitHub Copilot holds about 70% market share in enterprise coding tools.

OpenAI leads in chatbot deployments, enterprise knowledge management, and customer service use cases.

Anthropic and Amazon

Anthropic's enterprise penetration stands at 44%, up 25 percentage points since May 2025, which survey data identify as the largest growth of any AI provider in that period. The company generates about 80% of its revenue from enterprise customers and counts 8 of the Fortune 10 as Claude customers. Notable deployments include Deloitte (470,000 employees) and Cognizant (350,000 employees).

The AWS partnership gives Anthropic access to custom Amazon Trainium and Inferentia chips for training and inference, which provides cost advantages at the hardware level. Claude is also available on Google Cloud Vertex AI and, since March 2026, on Microsoft Foundry.

Anthropic leads in enterprise use cases for software development and data analysis. A survey of enterprise CIOs found that the majority of companies now use three or more model families, which suggests that multi-model deployment is becoming the norm.

Pricing and accessibility

Pricing structures differ between the consumer chat products and the APIs. I cover both, but because prices change often, I focus on structure rather than exact amounts. Check Anthropic's pricing page and OpenAI's pricing page for the latest figures.

Consumer subscriptions

Both companies offer free tiers with limited access. ChatGPT's free tier gives access to GPT models with a rolling limit of about 10 messages per 5 hours, after which it falls back to a lower-quality model. Claude's free tier offers access to Sonnet 4.6 with daily message limits, but without extended thinking.

In January 2026 OpenAI introduced a new entry tier: ChatGPT Go at $8 per month, positioned between the free and Plus ($20 per month) plans. Notably, the Go plan is ad-supported, making OpenAI one of the first major AI labs to introduce advertising in its consumer product. Claude Pro costs $20 per month and includes access to all models, Claude Code, and extended thinking.

At the top end, OpenAI's Pro plan costs $200 per month for unlimited access. Anthropic's Max plans range from $100 to $200 per month and provide 5x to 20x the usage of Pro.

API pricing structure

OpenAI has a clear price advantage at the budget end. GPT-4.1 nano starts at $0.10 per million input tokens, compared with $1.00 for Claude Haiku 4.5. Even GPT-4.1 mini at $0.40 is noticeably cheaper than Haiku.

At the flagship level the gap is smaller. Claude Sonnet 4.6 is priced at $3/$15 (input/output per million tokens) versus $1.75/$14 for GPT-5.2. Claude Opus 4.6 costs $5/$25 versus $2.50/$15 for GPT-5.4. OpenAI is still generally cheaper per token at every comparable tier, but Anthropic offsets this for heavy context users by dropping long-context surcharges on its latest models. GPT-5.4, by contrast, charges a premium for prompts above 272K tokens.
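To see how the long-context premium shifts the comparison, here is a small cost sketch using the flagship prices and the 2x input, 1.5x output multipliers quoted in this article. It assumes the multipliers apply to the whole request once the prompt exceeds 272K tokens, which is my reading rather than a confirmed billing rule, and the class and function names are illustrative.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    input_per_m: float                 # USD per million input tokens
    output_per_m: float                # USD per million output tokens
    long_context_threshold: int = 0    # 0 means no long-context surcharge
    long_input_multiplier: float = 1.0
    long_output_multiplier: float = 1.0

# Prices as quoted in this article; they change often.
GPT_5_4 = Pricing(2.50, 15.00, 272_000, 2.0, 1.5)
CLAUDE_OPUS_4_6 = Pricing(5.00, 25.00)

def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one request.

    Assumption: once the prompt exceeds the threshold, the multipliers
    apply to the whole request, not only to the tokens above it.
    """
    in_rate, out_rate = p.input_per_m, p.output_per_m
    if p.long_context_threshold and input_tokens > p.long_context_threshold:
        in_rate *= p.long_input_multiplier
        out_rate *= p.long_output_multiplier
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000

for tokens_in in (100_000, 800_000):
    gpt = request_cost(GPT_5_4, tokens_in, 4_000)
    opus = request_cost(CLAUDE_OPUS_4_6, tokens_in, 4_000)
    print(f"{tokens_in:>7} input tokens: GPT-5.4 ${gpt:.2f}, Opus 4.6 ${opus:.2f}")
```

Under these assumptions, a 100K-token prompt with 4K output tokens costs about $0.31 on GPT-5.4 versus $0.60 on Opus 4.6, while an 800K-token prompt comes to about $4.09 versus $4.10, so the per-token advantage largely disappears at long context.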

Both providers offer batch API discounts and prompt caching to lower costs for production workloads.

Anthropic vs. OpenAI: pros and cons

Here is how the two platforms compare on the factors that matter most.

| Factor | OpenAI | Anthropic |
| --- | --- | --- |
| Multimodal | Text, image, video, and voice | Text and image only |
| Budget pricing | GPT-4.1 nano at $0.10/M input tokens | Haiku 4.5 at $1.00/M input tokens |
| Context window | 1.05M tokens; surcharge above 272K | 1M tokens at a flat rate |
| Fine-tuning | SFT, DPO, RFT for the GPT-4.1 family | Limited to Claude 3 Haiku on Bedrock |
| Coding tools | Codex for autonomous cloud tasks | Claude Code for complex refactors and production output |
| Enterprise reach | ~78% production penetration | ~44%, +25 points since May 2025 |
| Ecosystem | Azure, GitHub Copilot, GPT Store | AWS Bedrock, Google Vertex AI, Microsoft Foundry |
| Safety transparency | Model Spec public; internal reasoning hidden | Constitutional AI and constitution both published |
| Release cadence | Fast-moving; frequent model deprecations | More stable, fewer breaking changes |

Wanneer kies je Anthropic of OpenAI

De juiste keuze hangt af van je specifieke usecase. Dit zijn de scenario’s waarin elk platform meestal beter past.

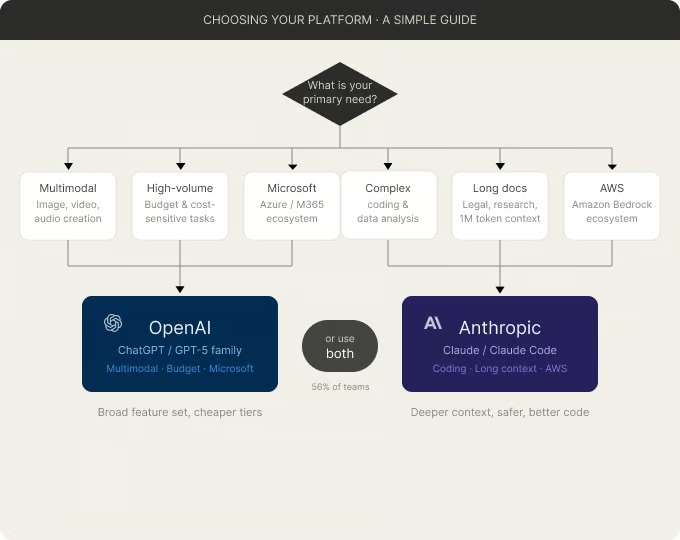

Een eenvoudige gids om je platform te kiezen. Afbeelding door de auteur.

Choose OpenAI if you need multimodal support for text, image, audio, and video. If your organization runs on Microsoft and Azure, the OpenAI integration is well established. For large-scale, cost-sensitive processing, OpenAI's budget models are much cheaper than Anthropic's counterparts. If fine-tuning on proprietary data is a requirement, OpenAI is ahead here.

Choose Anthropic if your work involves complex software development or data analysis. If you need to process long documents, such as legal reviews, research papers, or full-codebase audits, Anthropic's flat-rate 1M-token context window is practical. For safety-critical deployments, Anthropic's Constitutional AI approach has a more detailed public track record. If you build on AWS, Claude is natively integrated through Bedrock.

For many teams, the answer is both. Market data show that the majority of enterprises now use three or more model families, and a large share of paying OpenAI users also pay for Anthropic. Routing different tasks to different models based on their strengths is becoming increasingly common.
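A multi-model setup can start as little more than a lookup table. The sketch below encodes the recommendations from this section as a simple router. The task labels and the default provider are hypothetical choices for illustration, and a production router would also weigh cost, latency, and availability.

```python
from typing import Dict

# Routing table distilled from the recommendations in this section.
# Task labels are hypothetical; a real system would define its own taxonomy.
ROUTES: Dict[str, str] = {
    "image_generation": "openai",
    "audio": "openai",
    "video": "openai",
    "bulk_classification": "openai",      # budget-tier pricing
    "fine_tuning": "openai",
    "complex_refactor": "anthropic",
    "long_document_review": "anthropic",  # flat-rate 1M-token context
    "data_analysis": "anthropic",
}

def route(task_type: str, default: str = "openai") -> str:
    """Return the provider for a task type, falling back to a default for unknown tasks."""
    return ROUTES.get(task_type, default)

for task in ("long_document_review", "image_generation", "translation"):
    print(task, "->", route(task))
```

Keeping the mapping in data rather than in branching code makes it easy to adjust as models are released or retired.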

Conclusion: Anthropic vs. OpenAI in 2026

OpenAI has greater reach, deeper multimodal support, and lower prices in the budget segment. Anthropic offers stronger long-context performance, a more transparent safety record, and tools built around complex coding and analysis tasks.

The right choice depends on what you are building and which ecosystem you are already in. Given OpenAI's rapid deprecation cycle, it is worth checking both pricing pages and model lists before you commit.

Are you specifically interested in how the two companies' coding tools compare? Read our Codex vs. Claude Code article for a deeper look at that dimension.

Also check out our recommended resources:

- Our Understanding ChatGPT course explains how to write effective prompts.

- Our ChatGPT Fundamentals track combines ChatGPT with Python and data analysis.

- Our Introduction to Claude Models course teaches you how to use the Anthropic API.

I am a data engineer and community builder who works on data pipelines, cloud, and AI tools while writing practical, high-impact tutorials for DataCamp and beginner developers.

FAQs

Can I use Claude and ChatGPT at the same time?

Yes, and many professionals do. Many report using Claude and ChatGPT side by side and routing different tasks to each. The two platforms have different strengths, so running both is common in practice, even though the double subscription costs add up.

Which AI is better for learning data science?

Both work well, but they have different styles. Claude often gives more structured, step-by-step explanations and handles long documents well, so it is useful for working through textbooks or research papers. ChatGPT has a broader ecosystem, with a free tier that gives quick access to capable models. I would start with the free tier you feel most comfortable with and then upgrade based on what you use most.

Is Anthropic "safer" than OpenAI?

Anthropic has built its brand around safety-first AI, and the Constitutional AI approach is more transparent than most. But both companies revised their safety commitments in 2025 and 2026 under competitive pressure. Safety is less a binary and more a moving target. The article covers the specific policy changes for both.

Does Claude support image and video generation?

No. As of early 2026, Claude processes text and image inputs but does not generate images, video, or audio. OpenAI has a broader multimodal suite, including image generation, video via Sora, and a realtime voice API. If your workflow depends on generating visual or audio content, OpenAI is the better fit.

Which is cheaper for API use?

It depends on the tier. OpenAI has much cheaper budget models: GPT-4.1 nano starts at $0.10 per million input tokens versus $1.00 for Claude Haiku 4.5. At the flagship level, prices are closer together, and Anthropic has dropped long-context surcharges on its latest models. The right answer depends on your volume and which model tier you actually need.