
Some equations simply don't have a clean algebraic solution.

You can factor and substitute all you like, but some equations have no closed-form solution. For example, there is no general formula in radicals for the roots of polynomials of degree five or higher. Equations that mix exponentials with polynomials, such as e^x = 3x, fall into the same category. In these cases you need a different approach.

Newton's method is that approach. It finds roots numerically by making better and better guesses, each one guided by the tangent line to the function at the current estimate.

In this article, I'll walk you through the formula behind Newton's method, how it works step by step, and when it converges and when it doesn't, with concrete examples to ground the theory.

Looking for other math topics that matter to a data scientist? Read our article Geometric Series: Formula, Convergence, and Examples to see how they apply in finance, physics, and computer science.

What is Newton's method?

Newton's method is an iterative technique for finding the roots of a function. Roots are the input values at which the function equals zero.

You start with an initial guess. The method then uses the geometry of the function at that point to produce a better guess. You repeat the process, and under the right conditions each iteration brings you closer to the true root.

That is the whole idea. All you need is a smart, repeatable update rule that converges to the answer.

The formula for Newton's method

The core of Newton's method is a single update rule that you apply repeatedly until you are close enough to the root.

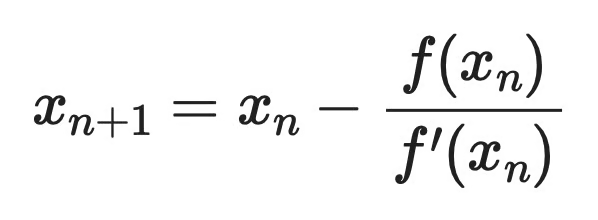

Here is the formula:

x_{n+1} = x_n - f(x_n) / f'(x_n)

Each iteration takes the current estimate x_n and produces a better one, x_{n+1}. You keep updating until f(x_n) is close enough to zero.

The formula has three components:

- x_n: your current estimate of the root
- f(x_n): the value of the function at that estimate
- f'(x_n): the derivative of the function at that estimate, which gives the slope of the tangent line

If f(x_n) is large, you are far from zero and need a bigger correction. If f'(x_n) is steep, the function changes quickly, so a smaller step is enough to reach zero. The ratio f(x_n) / f'(x_n) tells you how far to move, and you subtract it from the current guess to get closer.

If f'(x_n) is zero, the formula breaks down: you would be dividing by zero, so the method cannot produce a next estimate. If f'(x_n) is merely close to zero, the step becomes very large and unreliable. I'll cover this in more detail in the section on limitations.
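The update rule and its division-by-zero caveat can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the helper name `newton_step` and the test function f(x) = x^2 - 2 are choices made here for demonstration.

```python
def newton_step(f, df, x):
    """Return the next Newton estimate x - f(x) / f'(x).

    `newton_step` is a hypothetical helper name used for illustration.
    """
    slope = df(x)
    if slope == 0:
        # The tangent is horizontal, so it never crosses the x-axis.
        raise ZeroDivisionError("f'(x) is zero; the Newton step is undefined")
    return x - f(x) / slope


# Illustrative function: f(x) = x^2 - 2, with derivative f'(x) = 2x
f = lambda x: x**2 - 2
df = lambda x: 2 * x

x1 = newton_step(f, df, 2.5)
print(x1)  # approximately 1.65
```

One call performs one iteration; feeding the result back in as the new `x` gives the next estimate.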

How Newton's method works

Newton's method follows the same four steps at every iteration.

1. Choose an initial guess: Pick a starting value x_0 somewhere near the root. It doesn't have to be exact, only close enough that the function behaves predictably around that point. I'll explain what "close enough" means in the section on convergence.
2. Compute the function value: Evaluate f(x_0). This tells you how far the function is from zero at the current estimate. If f(x_0) = 0, you're done: you have found the root.
3. Compute the derivative: Evaluate f'(x_0). This gives you the slope of the function at x_0, which is the slope of the tangent line at that point.
4. Update the estimate: Apply the update rule from the previous section to get x_1 = x_0 - f(x_0) / f'(x_0).

That completes one iteration.

The new value x_1 is where the tangent line crosses the x-axis. Geometrically, you draw a straight line that touches the curve at x_0 and follow it until it reaches zero. The crossing point is your next, better estimate.

Then you repeat. Plug x_1 into steps 2 through 4 to get x_2, then x_3, and so on. Each iteration draws a new tangent at the updated point and finds where it crosses the x-axis.

The process stops when f(x_n) is close enough to zero, usually when its absolute value falls below a small threshold chosen in advance.
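The four steps and the stopping rule above can be put together in a short loop. The sketch below is one possible arrangement under stated assumptions: the function name `newton`, the tolerance `tol`, and the iteration cap `max_iter` are illustrative choices, not something prescribed by the method itself.

```python
def newton(f, df, x0, tol=1e-10, max_iter=50):
    """Iterate the Newton update until |f(x)| < tol.

    Returns the estimate and the number of updates performed.
    `tol` and `max_iter` are illustrative defaults.
    """
    x = x0                          # step 1: initial guess
    for n in range(max_iter):
        fx = f(x)                   # step 2: function value
        if abs(fx) < tol:           # stop once f(x) is close enough to zero
            return x, n
        dfx = df(x)                 # step 3: derivative (assumed nonzero here)
        x = x - fx / dfx            # step 4: update the estimate
    raise RuntimeError("no convergence within max_iter iterations")


root, iterations = newton(lambda x: x**2 - 2, lambda x: 2 * x, x0=2.5)
print(root, iterations)
```

The iteration cap is there so that a bad starting point ends in an explicit error rather than an endless loop.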

Geometric interpretation of Newton's method

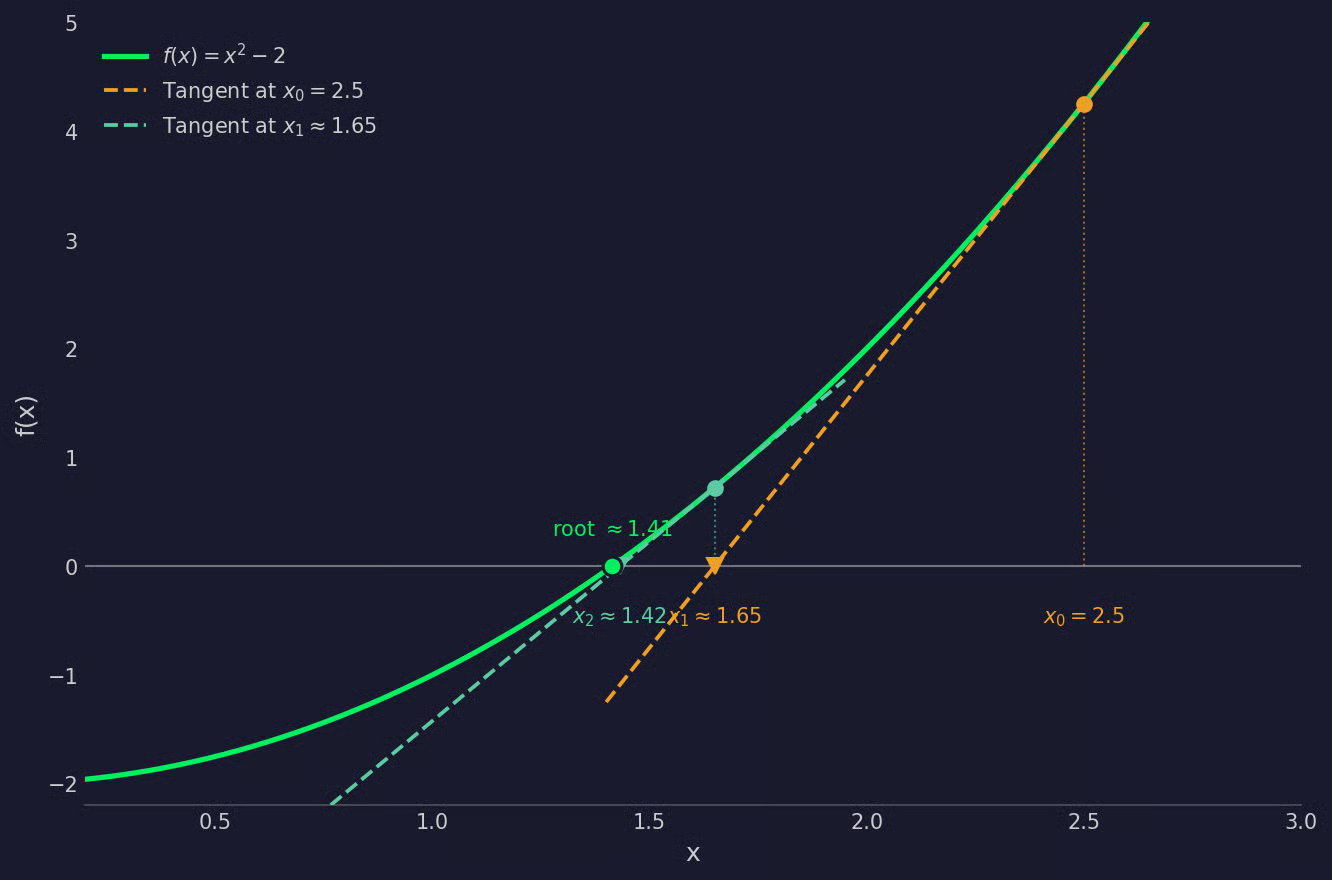

Picture a curve on a graph: this is your function f(x). The root is where the curve crosses the x-axis. You don't know yet where that crossing is, so you start with a guess x_0 somewhere on the x-axis.

At each step, you mark the point (x_0, f(x_0)) on the curve and draw the tangent line there, a straight line that touches the curve at that point and follows its slope. As long as f'(x_0) is not zero, that tangent is not horizontal. It is tilted, and if you follow it, it crosses the x-axis at some point. That crossing is the next estimate, x_1.

Then you repeat. At x_1, you draw a new tangent and find where it crosses the x-axis. That gives you x_2. Each tangent is a local linear approximation of the curve, and near the root each crossing point lands closer to the true root.

The plot below shows two iterations of Newton's method applied to f(x) = x^2 - 2, starting from x_0 = 2.5:

Geometric interpretation plot

This works because the tangent line is the best linear approximation of a curve at a given point. The closer you are to the root, the more the tangent resembles the curve itself, and the more accurate the next step becomes.
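The geometric picture leads directly to the update rule. The short derivation below writes the tangent line at the current estimate and solves for the point where it crosses the x-axis; it assumes f'(x_n) is nonzero.

```latex
\begin{aligned}
y &= f(x_n) + f'(x_n)\,(x - x_n) && \text{tangent line at } (x_n, f(x_n)) \\
0 &= f(x_n) + f'(x_n)\,(x_{n+1} - x_n) && \text{set } y = 0 \text{ at } x = x_{n+1} \\
x_{n+1} &= x_n - \frac{f(x_n)}{f'(x_n)} && \text{assuming } f'(x_n) \neq 0
\end{aligned}
```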

In practice, the estimates don't just crawl toward the root. They get there quickly, often doubling the number of correct digits at every iteration.

A step-by-step example of Newton's method

Let's apply Newton's method to f(x) = x^2 - 2. The positive root of this function is x = sqrt(2) ≈ 1.4142. In other words, we are computing the square root of 2.

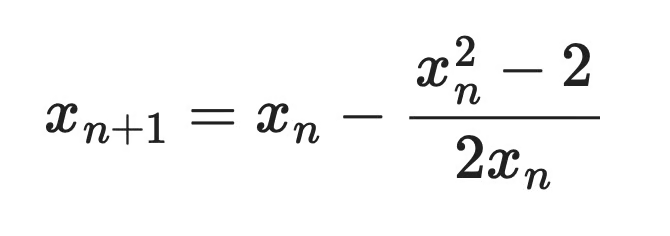

The derivative is f'(x) = 2x, so the update rule becomes:

x_{n+1} = x_n - (x_n^2 - 2) / (2 x_n)

Let's start with an initial guess x_0 = 2.5.

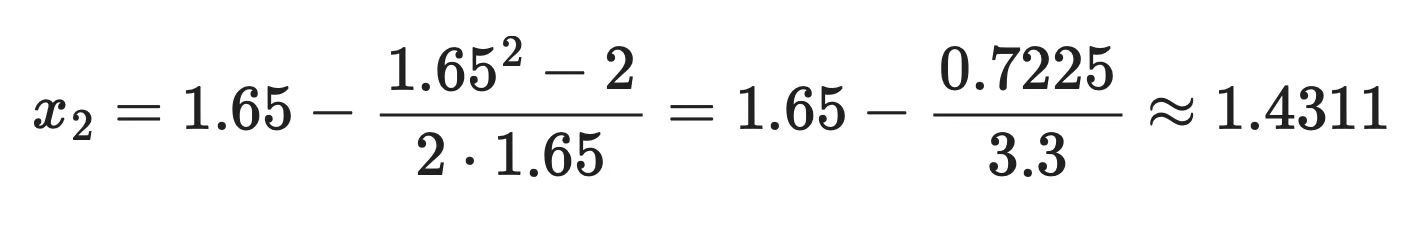

Iteration 1:

x_1 = 2.5 - (2.5^2 - 2) / (2 * 2.5) = 2.5 - 4.25 / 5 = 1.65

Iteration 2:

x_2 = 1.65 - (1.65^2 - 2) / (2 * 1.65) = 1.65 - 0.7225 / 3.3 ≈ 1.4311

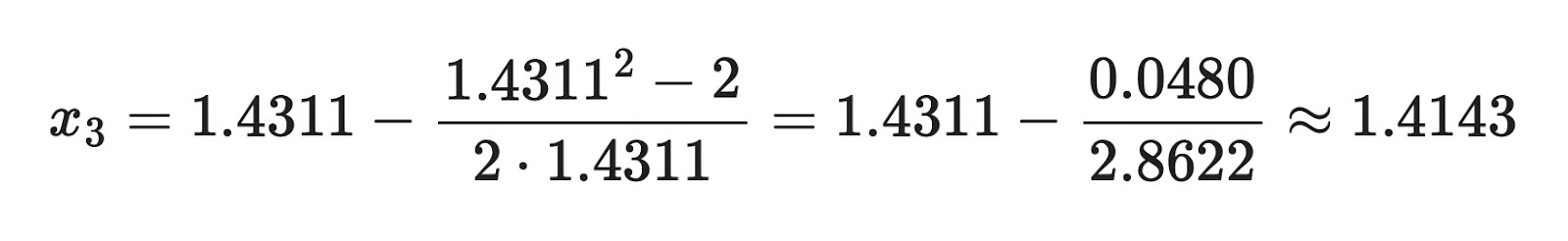

Iteration 3:

x_3 = 1.4311 - (1.4311^2 - 2) / (2 * 1.4311) ≈ 1.4143

After just three iterations, the estimate is within about 0.0001 of the true root. The error dropped from 1.086 at x_0 to about 0.0001 at x_3, and it keeps shrinking with every step.
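The three iterations are easy to reproduce in code. The following sketch runs the same update from x_0 = 2.5 and prints each estimate together with its distance from sqrt(2); the variable names are illustrative.

```python
import math

f = lambda x: x**2 - 2      # function from the example
df = lambda x: 2 * x        # its derivative

x = 2.5                     # initial guess x_0
estimates = [x]
for _ in range(3):          # three Newton iterations
    x = x - f(x) / df(x)
    estimates.append(x)

for n, x_n in enumerate(estimates):
    error = abs(x_n - math.sqrt(2))
    print(f"x_{n} = {x_n:.6f}, error = {error:.6f}")
```

Increasing the loop count to five or six shows the error reaching the limits of floating-point precision.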

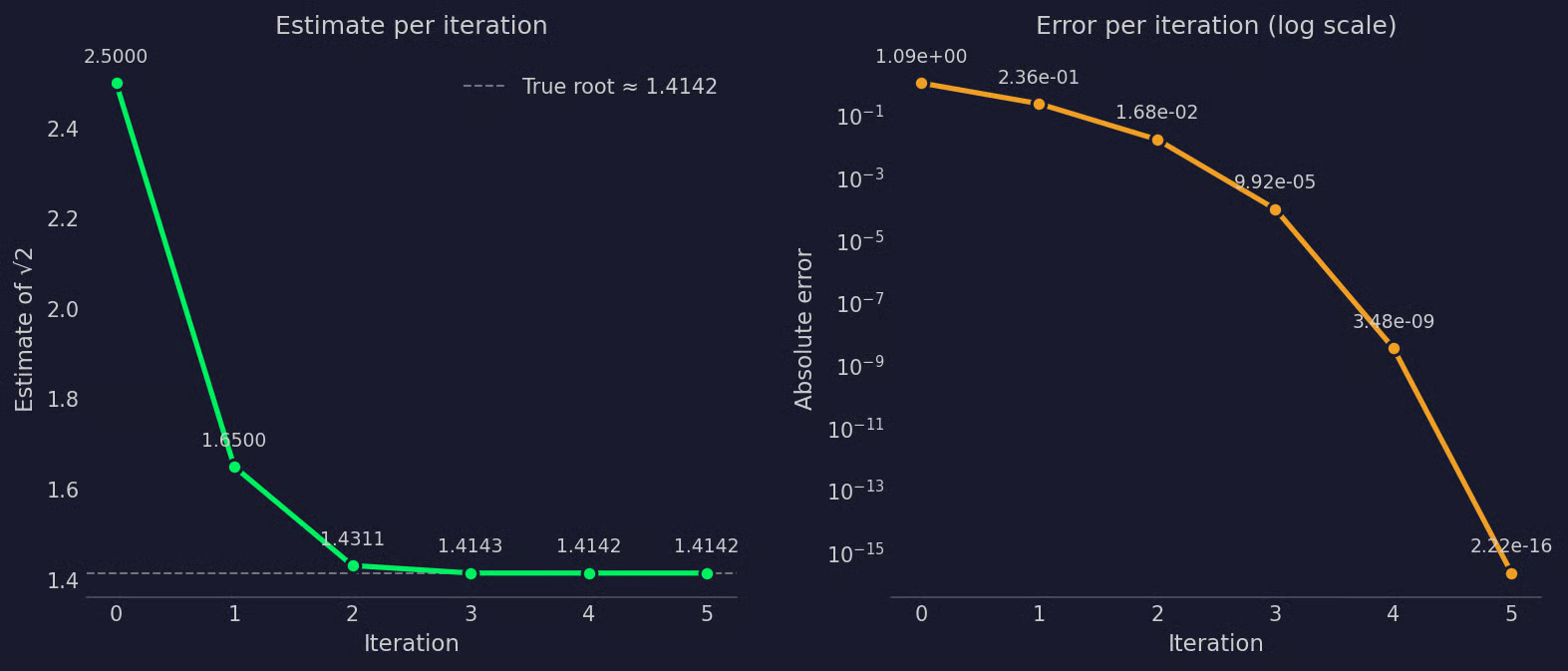

Here is how the estimates and the error values look visually:

Visual overview of the estimates and errors

The left panel shows each estimate moving closer to sqrt(2) ≈ 1.4142, while the right panel shows the error shrinking on a logarithmic scale: each iteration roughly doubles the number of correct digits.

Convergence of Newton's method

Newton's method can converge quickly, but only under the right conditions.

When the initial guess is close to the root and the function is smooth in that region, the method has quadratic convergence. That is the technical term for what you saw in the example: the error at each iteration is roughly proportional to the square of the previous error. Two correct digits become four, four become eight, and so on.
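Quadratic convergence can be stated as a formula. The relation below is the standard error estimate for a simple root, written with symbols that are introduced here for illustration: r is the true root and e_n = x_n - r is the error at step n. It assumes f is twice continuously differentiable near r and f'(r) is nonzero.

```latex
e_{n+1} \approx \frac{f''(r)}{2 f'(r)}\, e_n^2, \qquad e_n = x_n - r
```

For f(x) = x^2 - 2 and r = sqrt(2), the constant is 1 / (2 sqrt(2)) ≈ 0.354. An error of about 0.0168 at x_2 then predicts an error of about 0.354 * 0.0168^2 ≈ 0.0001 at x_3, which agrees with the worked example.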

For this to work, two conditions must hold:

- A good initial guess: The closer x_0 is to the true root, the faster the method converges. If you start too far away, the tangent at that point can send you in the wrong direction.
- A well-behaved function: The function must be smooth and differentiable near the root. Sharp corners or flat regions can break the tangent-line approximation.

The most common failure mode is a derivative close to zero.

If f'(x_n) is close to zero, the update rule divides by a very small number, which throws the next estimate far away from the root. In the worst case, f'(x_n) = 0 and the update is undefined because you cannot divide by zero.

A poor starting point can also make the method oscillate or diverge. Instead of approaching the root, the estimates jump back and forth or move farther away at every iteration.
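Oscillation is easy to reproduce. The sketch below uses f(x) = x^3 - 2x + 2, a textbook example chosen here for illustration (it is not from the article). The function has a real root near x ≈ -1.77, but starting from x_0 = 0 the iterates bounce between 0 and 1 forever.

```python
f = lambda x: x**3 - 2 * x + 2     # illustrative function with a root near -1.77
df = lambda x: 3 * x**2 - 2        # its derivative

x = 0.0                            # a poor starting point for this function
trajectory = [x]
for _ in range(6):
    x = x - f(x) / df(x)           # plain Newton update, no safeguards
    trajectory.append(x)

print(trajectory)  # [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0]
```

Starting closer to the root, for example at x_0 = -2, avoids the cycle, which is why a plot of the function is a useful first step.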

Newton's method rewards a good setup. A reasonable initial guess and a smooth function are usually all it needs to converge, and to converge fast.

Advantages of Newton's method

When the conditions are right, Newton's method is hard to beat.

Its biggest advantage is quadratic convergence. Simpler methods such as bisection converge linearly, which means each iteration reduces the error by a fixed factor. Newton's method instead roughly squares the error at each step, so it becomes accurate in very few iterations.

It is also general. You can apply it to a wide range of differentiable functions (polynomial, trigonometric, exponential) without changing the algorithm. That is why it shows up in so many fields, from engineering simulations to training machine learning models.

Limitations of Newton's method

Newton's method asks a lot in exchange for its speed. Here are some limitations to keep in mind:

- It requires a derivative: You need an expression for f'(x) before you can run a single iteration. For functions whose derivative is hard to compute (or doesn't exist), you need a different approach.
- It is sensitive to the initial guess: If you start too far from the root, the method can send you in the wrong direction.
- It may not converge: If the function has flat regions or sharp corners, the tangent-line approximation breaks down.
- It can diverge or oscillate: In unfavorable cases, the estimates fail to converge and either move away from the root or jump back and forth indefinitely.

So before reaching for Newton's method, make sure you understand the function.

Newton's method vs. other root-finding methods

Newton's method is not the only way to find roots, and it is not always the right one for you.

Two other methods come up often: the bisection method and the secant method. Let me explain them briefly.

The bisection method

The bisection method is the simplest of the three. You start with an interval [a, b] on which the function changes sign, which means, for a continuous function, that there must be a root somewhere inside. Then you repeatedly cut the interval in half, keeping the half where the sign change still occurs.

It works, but it is slow. The error bound is halved at each iteration, which means linear convergence. On the other hand, it is guaranteed to work as long as the function is continuous and the initial interval brackets a root. No derivatives are needed.
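For comparison, here is a minimal bisection sketch. The function name `bisect_root` and the parameters `tol` and `max_iter` are illustrative choices; the logic simply keeps the half-interval that still contains a sign change.

```python
def bisect_root(f, a, b, tol=1e-10, max_iter=200):
    """Find a root of a continuous f on [a, b], given a sign change on [a, b].

    `bisect_root`, `tol`, and `max_iter` are hypothetical names for this sketch.
    """
    fa, fb = f(a), f(b)
    if fa == 0:
        return a
    if fb == 0:
        return b
    if fa * fb > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    for _ in range(max_iter):
        mid = (a + b) / 2
        fm = f(mid)
        if fm == 0 or (b - a) / 2 < tol:
            return mid
        if fa * fm < 0:
            b, fb = mid, fm        # sign change is in the left half
        else:
            a, fa = mid, fm        # sign change is in the right half
    return (a + b) / 2


root = bisect_root(lambda x: x**2 - 2, 1.0, 2.0)
print(root)
```

Because each pass only halves the interval, reaching a tolerance of 1e-10 from an interval of width 1 takes a few dozen iterations, compared with a handful for Newton's method on the same function.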

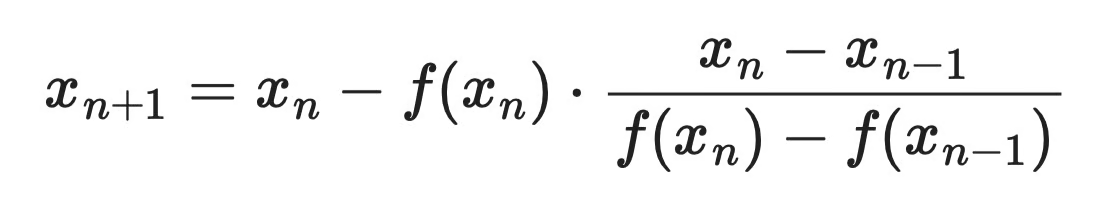

The secant method

The secant method is a close relative of Newton's method. Instead of computing the derivative analytically, it approximates it using the two most recent estimates:

x_{n+1} = x_n - f(x_n) * (x_n - x_{n-1}) / (f(x_n) - f(x_{n-1}))

This is a good approach when the derivative is hard to compute. You pay for it in convergence speed: the secant method is faster than bisection but slower than Newton's method, with a convergence order of about 1.618 instead of 2.
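The secant update can be sketched as follows. The function name `secant`, the tolerance, and the iteration cap are illustrative; note that the method needs two starting values and no derivative.

```python
def secant(f, x0, x1, tol=1e-10, max_iter=50):
    """Secant iteration: replaces f'(x) with the slope through the last two points.

    `secant`, `tol`, and `max_iter` are hypothetical names for this sketch.
    """
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if abs(f1) < tol:
            return x1
        denom = f1 - f0
        if denom == 0:
            # The secant line is horizontal, so the update is undefined.
            raise ZeroDivisionError("f(x_n) equals f(x_{n-1}); cannot continue")
        x2 = x1 - f1 * (x1 - x0) / denom
        x0, f0 = x1, f1            # shift the window of two estimates
        x1, f1 = x2, f(x2)
    raise RuntimeError("no convergence within max_iter iterations")


root = secant(lambda x: x**2 - 2, 2.5, 2.0)
print(root)
```

Each iteration costs one new function evaluation, which can make the secant method competitive when evaluating the derivative is expensive.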

Applications of Newton's method

Newton's method shows up across science, engineering, and machine learning. Here is how, concretely.

Solving equations numerically

This is the most direct application. When an equation has no closed-form solution, Newton's method can find the root numerically. This comes up constantly in scientific computing, for example when finding equilibrium points in chemical reactions or solving transcendental equations in signal processing.
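As a small illustration, the equation e^x = 3x from the introduction can be solved by rewriting it as f(x) = e^x - 3x = 0. The starting point x = 0 and the stopping threshold are assumptions made for this sketch; the equation has two real solutions, and this start converges to the smaller one.

```python
import math

def f(x):
    return math.exp(x) - 3 * x     # f(x) = 0 exactly when e^x = 3x

def df(x):
    return math.exp(x) - 3         # derivative of f

x = 0.0                            # illustrative starting point
for _ in range(20):
    step = f(x) / df(x)
    x -= step
    if abs(step) < 1e-12:          # stop when the update becomes negligible
        break

print(x, f(x))
```

Starting at x = 2 instead leads to the larger solution, near 1.51, which shows how the initial guess selects which root is found.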

Optimization

Finding a minimum or maximum of a function f(x) means finding where its derivative f'(x) = 0. That is a root-finding problem, so Newton's method applies. You simply run the algorithm on f'(x) instead of f(x), using the second derivative f''(x) in place of the first. The update becomes x_{n+1} = x_n - f'(x_n) / f''(x_n).

This variant is called Newton's method for optimization, and on smooth, well-behaved functions it typically converges in fewer iterations than gradient descent.
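The sketch below applies this idea to g(x) = e^x - 3x, an illustrative function chosen here because its minimum is known exactly: g'(x) = e^x - 3 vanishes at x = ln 3, and g''(x) = e^x is positive everywhere. The names `newton_minimize`, `g_prime`, and `g_second` are hypothetical.

```python
import math

def g_prime(x):
    return math.exp(x) - 3         # first derivative of g(x) = e^x - 3x

def g_second(x):
    return math.exp(x)             # second derivative of g

def newton_minimize(d1, d2, x0, tol=1e-12, max_iter=50):
    """Run Newton's method on the derivative d1, using d2 as its slope."""
    x = x0
    for _ in range(max_iter):
        step = d1(x) / d2(x)
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


x_min = newton_minimize(g_prime, g_second, x0=0.0)
print(x_min, math.log(3))
```

A zero of the derivative can also be a maximum or a saddle point, so in general the sign of the second derivative at the result is worth checking.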

Machine learning

In machine learning, training a model means minimizing a loss function. Newton's method and its variants show up in a few places here.

L-BFGS (Limited-memory Broyden-Fletcher-Goldfarb-Shanno) is a quasi-Newton optimizer that approximates second-derivative information instead of computing it directly. It is a standard choice for logistic regression and other convex problems. Newton's method also underlies the Newton-Raphson updates used to fit statistical models such as generalized linear models.

Physics and engineering

Newton's method is everywhere in simulation and design. Engineers use it to solve the nonlinear systems of equations that describe physical systems, such as structural stress analysis and fluid dynamics. In each case, the underlying problem comes down to finding where a set of equations equals zero.

Common mistakes when using Newton's method

Most errors with Newton's method come down to the same four mistakes. Here they are:

- Starting too far from the root: A poor initial guess is the most common reason the method diverges or oscillates. If you don't have a good intuition for where the root is, plot the function first. That will show you where to start.
- Using the wrong derivative: The update rule depends on f'(x). An incorrect derivative, whether from a calculation error or a coding mistake, produces wrong estimates from the very first iteration, and the error compounds as you iterate.
- Not checking for division by zero: If f'(x_n) equals zero or gets very close to it, the update step cannot work. Add a guard to your implementation: if the absolute value of the derivative falls below a small threshold, stop and report the failure instead of producing a meaningless result.
- Stopping too early: Cutting the iterations short before the estimate has converged leaves you with an answer that looks close but isn't. Base the stopping condition on the actual error, requiring either |f(x_n)| or |x_{n+1} - x_n| to fall below a deliberately chosen threshold, not just on a fixed number of iterations.
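The last two safeguards can be built into the iteration itself. The sketch below is one way to do it, with illustrative names and thresholds (`newton_safe`, `tol`, `deriv_eps`, `max_iter`): it refuses to divide by a near-zero derivative and stops on the residual or the step size, with the iteration cap kept only as a safety net.

```python
def newton_safe(f, df, x0, tol=1e-12, deriv_eps=1e-14, max_iter=50):
    """Newton iteration with a derivative guard and error-based stopping.

    All names and default thresholds are assumptions made for this sketch.
    """
    x = x0
    for _ in range(max_iter):
        fx, dfx = f(x), df(x)
        if abs(fx) < tol:                      # residual small enough
            return x
        if abs(dfx) < deriv_eps:               # guard against division by ~0
            raise ZeroDivisionError(f"derivative too small at x = {x}")
        x_new = x - fx / dfx
        if abs(x_new - x) < tol:               # step small enough
            return x_new
        x = x_new
    raise RuntimeError("no convergence within max_iter iterations")


f = lambda x: x**2 - 2
df = lambda x: 2 * x

print(newton_safe(f, df, 2.5))     # converges to sqrt(2)

try:
    newton_safe(f, df, 0.0)        # f'(0) = 0, so the guard triggers
except ZeroDivisionError as err:
    print("stopped:", err)
```

Raising an exception, rather than returning the last estimate, makes a failed run impossible to mistake for a converged one.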

Conclusion

Newton's method is one of the most useful tools in numerical computing. A single update rule, applied repeatedly, can find roots to high precision in just a few iterations.

You pay for that speed with certain conditions. You need a good initial guess, a function without flat regions or sharp corners near the root, and a nonzero derivative to get fast convergence. Understand these conditions and you'll know when to reach for Newton's method and when to use something else (such as the bisection or secant methods).

The best way to build this intuition is to practice on simple examples. Start with f(x) = x^2 - 2, try different starting points, and watch what happens. Then move on to functions with multiple roots or flat regions and see where the method breaks down.

If you like the idea of optimization through iteration, you should get to know gradient descent. Read Gradient Descent in Machine Learning: A Deep Dive to learn how it optimizes machine learning models.

Frequently asked questions

What is Newton's method used for?

Newton's method is a numerical technique for finding the roots of a function, the values of x for which f(x) = 0. It is used in science, engineering, and machine learning whenever an equation has no clean algebraic solution. Common applications include solving nonlinear equations and fitting statistical models, and its ideas underlie quasi-Newton optimizers such as L-BFGS.

How many iterations does Newton's method need to converge?

It depends on the function and the initial guess, but Newton's method usually converges in very few iterations when the conditions are right. Thanks to quadratic convergence, the number of correct digits roughly doubles at every step. In practice, a handful of iterations is often enough to reach machine precision.

What happens if Newton's method does not converge?

If the initial guess is too far from the root, or if the function has a flat region near the starting point, the method can diverge or oscillate instead of converging. A derivative close to zero is a common cause: it sends the next estimate far off target. In these cases, switching to a more stable method such as bisection, or improving the initial guess, usually solves the problem.

What is the difference between Newton's method and the secant method?

Both methods use the same basic update idea, but Newton's method requires the derivative f'(x), while the secant method approximates it using the two most recent estimates. The secant method works well when the derivative is difficult to compute, but it converges somewhat more slowly than Newton's method.

What does quadratic convergence mean in Newton's method?

Quadratic convergence means that the error at each iteration is roughly proportional to the square of the error at the previous iteration. In short, if you have two correct digits, the next iteration gives you about four, then eight, and so on. This is why Newton's method is so fast compared with methods like bisection, which only halve the error each time.