Kurs

Modern BBO’lar daha derin, daha geniş ve daha fazla hesaplama gücü talep eder hale geldi; ancak daha fazla Transformer katmanı yığmak her zaman orantılı kazançlar getirmiyor. Bunun bir nedeni, standart artık bağlantıların katman çıktılarının sabit birim ağırlıklarla toplanması; böylece her katman, kendisinden önce gelen her şeyin uniform toplamını devralıyor. Bu, erken temsilcileri seyreltebilir, gizli durum büyüklüğünü artırabilir ve ağın en faydalı ara özellikleri seçici biçimde yeniden kullanmasını zorlaştırabilir.

Derinliği sabit bir toplamsal yineleme olarak ele almak yerine, Attention Residuals Kimi ekibinin makalesi her katmanın, öğrenilmiş softmax ağırlıklarıyla daha önceki katman çıktıları üzerine dikkat uygulamasına izin veriyor. Bu yazıda, standart artık toplamının neden darboğaza dönüştüğünü, Attention Residuals’ın nasıl çalıştığını, blok varyantının neden önemli olduğunu ve sonuçların daha derin dil modellerini ölçeklendirme hakkında gerçekte ne söylediğini anlayacağız.

Modern Transformer mimarilerinin ardındaki bazı fikirler hakkında daha fazla bilgi edinmek istiyorsanız, DataCamp kursu olan PyTorch ile Transformer Modelleri Eğitimina göz atmanızı öneririm.

Sorun: Boğulan Sinyal

Artık bağlantılar yıllardır derin öğrenmenin temel taşlarından biridir. Transformer’larda standart artık güncellemesi şöyledir:

hl=hl−1+fl−1(hl−1)

Bu, gradyanların çok derin ağlar boyunca akmasına yardımcı olur. Ancak artıklar sadece bir gradyan hilesi değildir. Aynı zamanda bilginin derinlik boyunca nasıl toplandığını da tanımlarlar. Yinelemeyi açarsak şunu elde ederiz:

hl=h1+∑i=1l−1fi(hi)

Bu, l’inci katmandaki gizli durumun sadece gömme (embedding) ve önceki tüm katman çıktılarının uniform ağırlıklı bir toplamı olduğu anlamına gelir. Yani her katkıya fiilen aynı ağırlık atanır.

Bu, ölçekte sorun hâline gelir. Makale PreNorm mimarilerinde, ağırlıksız artık birikiminin gizli durum büyüklüklerinin derinlikle birlikte yaklaşık O(L) olarak arttığını gösteriyor.

Artık akışı büyüdükçe, erken katman çıktıları giderek daha büyük bir koşan toplam içinde seyreliyor. Erken katman sinyali bu birikime karıştığında, daha derin katmanlar onu seçici olarak geri kazanamıyor. Dolayısıyla sadece toplanmış durum üzerinde çalışıyorlar.

Bu, makalenin “boğulan sinyal” etkisi dediği şeye yol açıyor. Bu verimsizliğin güçlü bir ampirik göstergesi, katman budama çalışmalarından geliyor; yani, eğitilmiş modellerdeki katmanların önemli bir bölümü, performansı asgari düzeyde etkileyerek kaldırılabiliyor. Bu da derinliği artırmaya devam ederken, modellerin bunu tam olarak kullanacak etkili bir mekanizmadan yoksun olduğunu düşündürüyor. Hiyerarşik bir akıl yürütme zinciri oluşturmak yerine, ağ daha çok, erken sinyallerin giderek seyreltildiği, gereksiz bir aktarıcı gibi davranıyor.

İleri geçişte de bir ödünleşim var. Artık akışı büyüdükçe, sonraki katmanlar, birikmiş durumu anlamlı biçimde etkileyebilmek için daha yüksek büyüklükte çıktılar üretmek zorunda kalabilir. Makale bunu, standart artık toplamı altında gizli durum büyüklüklerinin derinlikle tekdüze biçimde arttığı PreNorm davranışıyla ilişkilendiriyor.

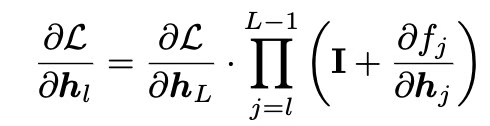

Artık bağlantılar gradyan akışına yardımcı olur ve ara bir gizli duruma göre gradyan şöyle yazılabilir:

Buradaki özdeşlik terimi, doğrudan bir gradyan yolunu korur. Ancak artıklar, ileri toplama yolunu yine de her önceki katmanı 1,0 sabit ağırlıkla ele almaya zorlar. AttnRes’in düzeltmeye çalıştığı yapısal sınırlama budur.

Toplamsal Artıklardan Derinlik Boyunca Dikkate

Makalenin en ilginç kavramsal hamlesi zaman–derinlik ikiliğidir. Artık bağlantılar, yinelenen ağların bilgiyi zaman boyunca sıkıştırmasına benzer biçimde, bilgiyi derinlik boyunca sıkıştırır. Dizi modellemede, dikkat mekanizması, her konumun önceki konumlara seçici biçimde erişmesine olanak tanıyarak yinelemeyi ikame etti. AttnRes aynı dönüşümü ağ derinliğine uygular. Bir sonraki gizli durumu, önceki katmanlar üzerindeki sabit bir toplam olarak tanımlamak yerine, AttnRes her katmanın önceki katman çıktıları üzerine dikkat uygulamasına izin verir:

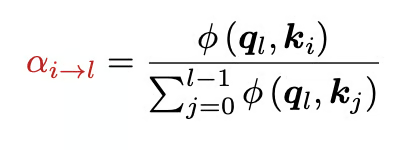

burada αi→l ağırlıkları, derinlik boyunca softmax dikkat ağırlıklarıdır ve toplamları 1’dir. Bu ağırlıklar şöyle hesaplanır:

makalede ise şu kullanılır:

![]()

Her katman, öğrenilen bir sahte-sorgu vektörü wl alır ve bu sorgu, önceki katman çıktılarından oluşturulan anahtarlar ve değerler üzerine dikkat uygular. RMSNorm, doğası gereği daha büyük çıktı büyüklüklerine sahip katmanların yalnızca ölçek olarak daha büyük oldukları için softmax’ta baskın hâle gelmemesi için anahtarlara uygulanır.

Makaledeki temel değişim, standart artıkları bir tür derinlik boyu doğrusal dikkat olarak ele almak; AttnRes’in ise bunu derinlik boyu softmax dikkate yükseltmesidir. Uniform birikim yerine, derinlik boyunca seçici geri getirim elde ederiz.

Küçük ama önemli bir uygulama detayı, başlatmadır. Yazarlar, tüm sahte-sorgu vektörlerinin sıfıra başlatılmasını önerir; bu, başlangıçtaki dikkat ağırlıklarının uniform olmasını sağlar; böylece model, rastgele önyargılı bir dikkat mekanizması yerine eşit ağırlıklı bir ortalama olarak eğitime başlar.

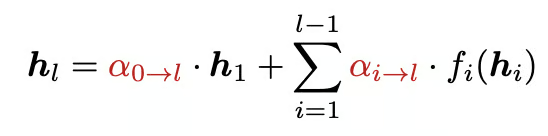

Şekil 1: Attention Residuals’ın genel görünümü (Attention Residuals makalesi)

Tam Attention Residuals

Tam Attention Residuals’ta her katman, önceki tüm katman çıktıları üzerine dikkat uygular; bu da bilgiyi derinlik boyunca seçici biçimde toplamaya olanak tanır. Bu yaklaşım, modele azami esneklik sağlar; böylece daha derin bir katman, eğer en faydalı sinyal oradaysa, doğrudan bir önceki katmana, ilk gömmeye veya herhangi bir önceki katmana vurgu yapabilir.

Standart eğitimde, Tam AttnRes’in bellek yükü ilk bakışta göründüğünden daha küçüktür; çünkü pek çok katman çıktısı zaten geri yayılım için tutulur. Ancak büyük ölçekli eğitim tabloyu değiştirir. Aktivasyon yeniden hesaplama ve boru hattı paralelliği devreye girdiğinde, bu önceki çıktılar, sonraki katmanların üzerlerine dikkat uygulayabilmesi için açıkça saklanmalı ve iletilmelidir; bu da maliyetli hâle gelir.

Dolayısıyla Tam AttnRes, temel fikri anlamanın en iyi yolu olsa da, ölçekli dağıtımda çoğu ekibin tercih edeceği sürüm değildir.

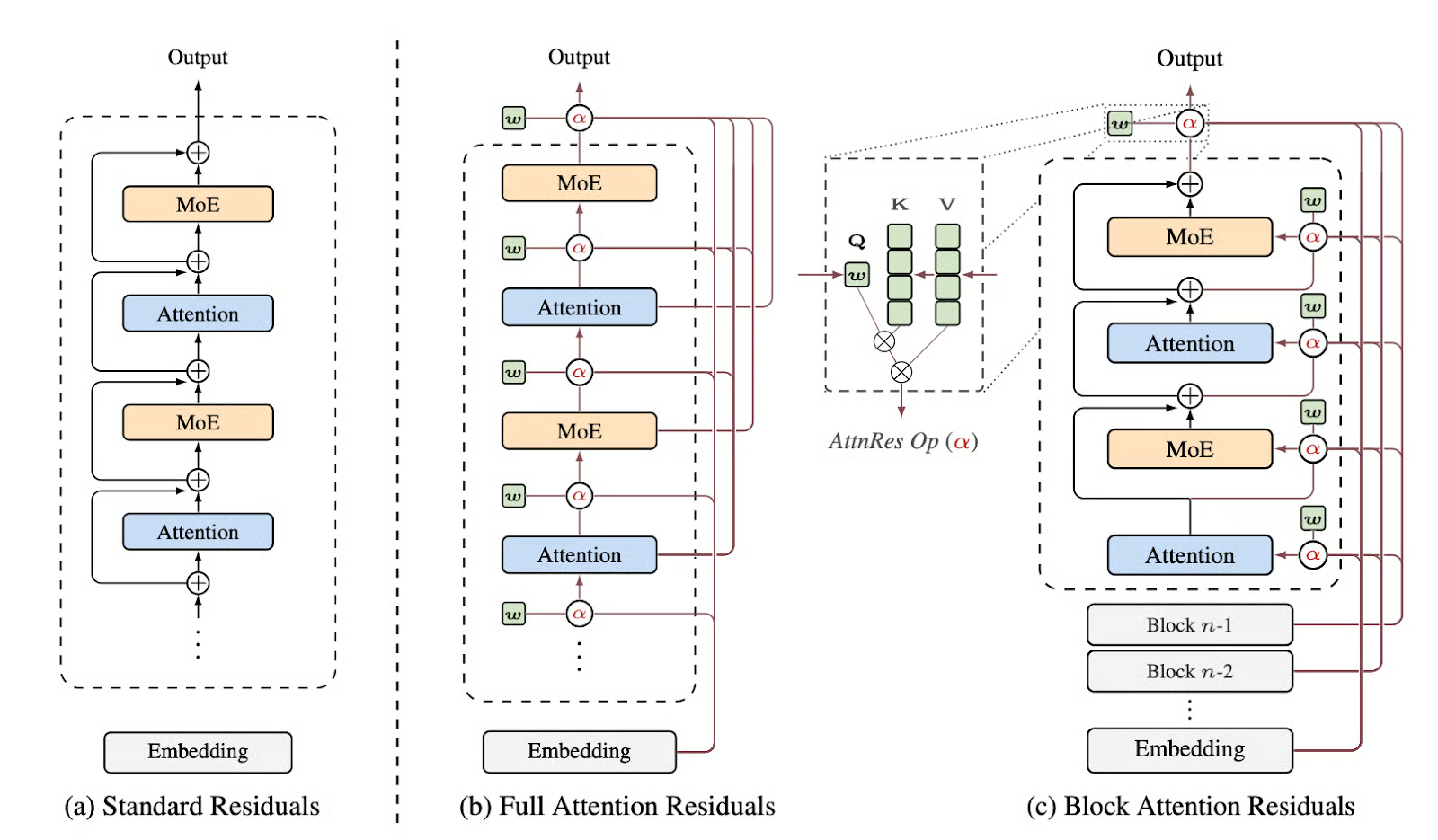

Blok Attention Residuals

Makale, bellek ve iletişim yükünü azaltmak için Blok Attention Residuals fikrini tanıttı. Modelin katmanları bloklara bölünür; her blok içinde çıktılar standart toplamsal artık birikimiyle birleştirilir; ancak bloklar arasında, model, tek tek önceki her katman yerine blok düzeyi özetler üzerinde dikkat uygular.

Eğer Bn blok n içindeki katmanlar kümesi ise, blok temsili şöyle verilir:

Ardından model, yerleştirme b0=h1 daha önceki blok özetlerine ve hesaplama ilerledikçe mevcut bloğun kısmi toplamına dikkat uygular. Bu, bellek ve iletişimi O(Ld)’den O(Nd)’ye düşürür; burada N blok sayısıdır.

Makale, yaklaşık sekiz blok kullanmanın tam sürümün faydasının çoğunu geri kazandırdığını ve Tam AttnRes ile Blok AttnRes arasındaki performans farkının ölçek büyüdükçe daraldığını bildiriyor. S=2,4,8 gibi blok boyutları tam sürüme oldukça yakın kalırken, çok daha kaba gruplamalar temel (baseline) davranışa geri yöneliyor.

Makaledeki en faydalı çıkarım, kazancın çoğunu elde etmek için her katman üzerinde tam derinlik boyu dikkate ihtiyacınız olmadığıdır. Blok AttnRes derinlik boyu dikkati hesaplamalı olarak mümkün kılsa da, bunu ölçekli biçimde verimli dağıtmak hâlâ dikkatli sistem tasarımı gerektirir. Makale ayrıca AttnRes’i gerçek dünya eğitimi ve çıkarımı için pratik kılan çeşitli optimizasyonlar sunar.

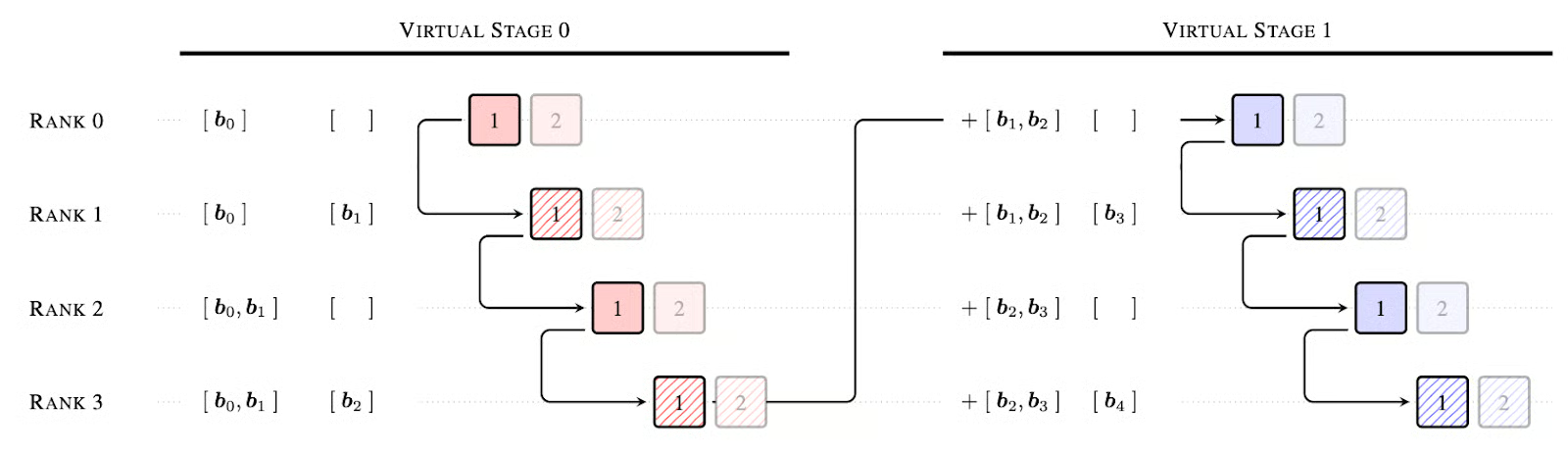

Şekil 2: 4 fiziksel derece ve derece başına 2 sanal aşama ile önbellek tabanlı boru hattı iletişimi örneği; taralı kutular AttnRes bloklarının sonunu gösterir (Attention Residuals makalesi)

İşte AttnRes’i standart artıkların yerine tak-çalıştır bir çözüm hâline getiren üç temel sistem optimizasyonu:

- İki aşamalı hesaplama: Sahte sorguyu gizli durumdan ayırarak, bir blok içindeki tüm katmanlar için sorguları toplu hâlde işleyebiliriz. 1. Aşama, bloklar arası dikkati paralel hesaplar; 2. Aşama ise Çevrimiçi Softmax Birleştirme kullanarak ardışık blok içi bağımlılıkları ele alır. Bu, mHC gibi önceki çok akışlı yöntemlere kıyasla katman başına G/Ç’yi önemli ölçüde azaltır.

- Aşama-ötesi önbellekleme: Boru hattı paralelliğinde, daha önce alınan blokları yerelde önbelleğe alarak gereksiz veri aktarımlarını ortadan kaldırıyoruz. Bu, Şekil 2’de gösterildiği gibi V sanal aşama sayısı olmak üzere, geçiş başına tepe maliyette V× iyileştirme sağlar ve iletişimin hesaplamayla tam örtüşmesine olanak tanır.

- Bellek verimli ön doldurma: Blok temsillerini tensör-paralel aygıtlar arasında parçalayarak, uzun bağlamlı (128K+) diziler için bellek ayak izi katlar mertebesinde azaltılır (ör. aygıt başına 15 GB’ten 0,3 GB’a).

Sonuçlar ve Performans Analizi

Makale, AttnRes’i ölçek yasaları, ablation’lar, eğitim dinamikleri ve aşağı akış kıyasları gibi birden çok düzeyde doğruluyor.

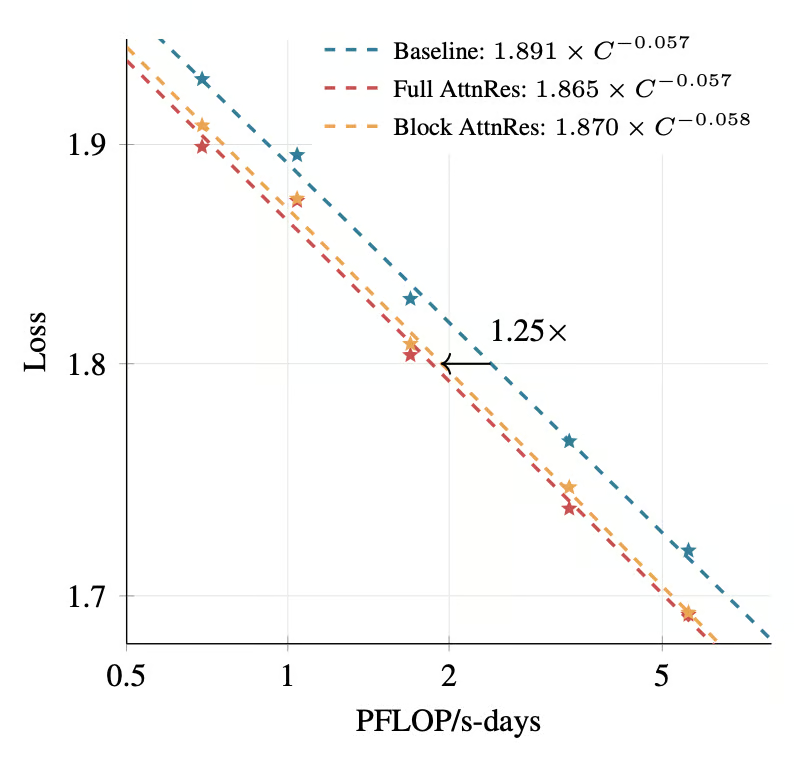

Şekil 3: Attention Residuals için ölçek yasası eğrileri. (Attention Residuals makalesi)

Bununla birlikte, en çarpıcı sonuç, ölçek yasası deneylerinden gelir. Beş model boyutu boyunca, hem Tam AttnRes hem de Blok AttnRes tutarlı biçimde temelden (baseline) daha düşük doğrulama kaybı elde eder. Uydurulan eğrilere göre, Blok AttnRes, temel modele kıyasla yaklaşık 1,25× daha fazla hesaplama ile eğitilmiş bir modelin kaybına aynı seviyede ulaşır. Bu, AttnRes’in sadece teoride daha iyi olmadığını, aynı zamanda daha hesaplama verimli olduğunu gösterir.

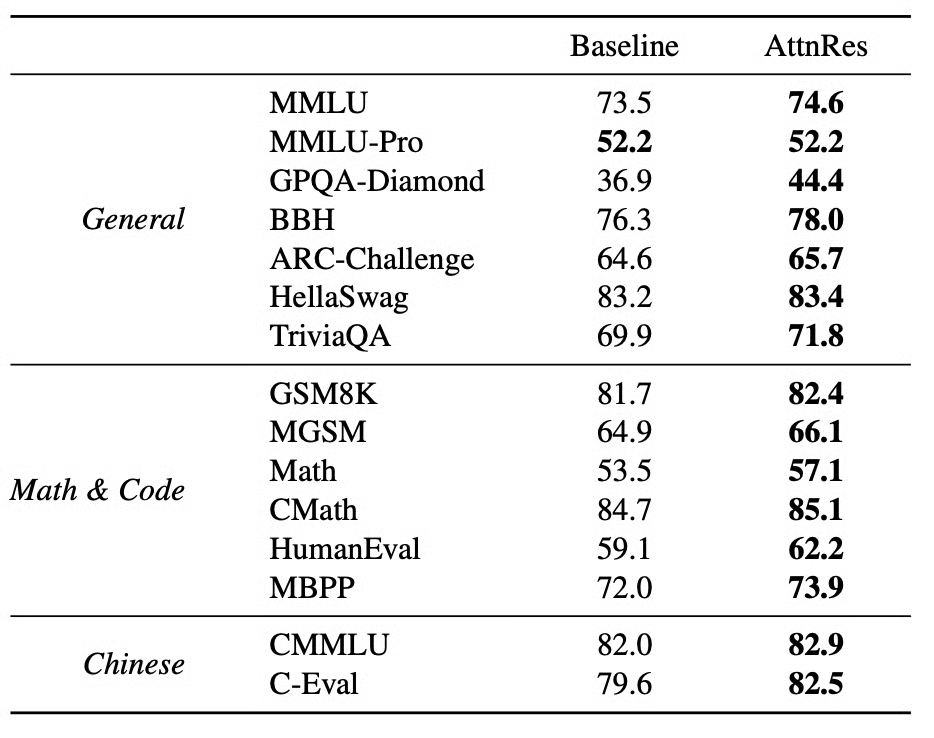

Aşağı akış görevlerinde, AttnRes değerlendirilen tüm kıyaslarda temel modele göre iyileşme sağlar. En büyük kazanımların bazıları, Tablo 1’de gösterildiği gibi, akıl yürütme yoğun görevlerdedir:

Tablo 1: AttnRes’in, aynı ön eğitim tarifi sonrası temel modelle performans karşılaştırması (Attention Residuals makalesi)

Kazançlar özellikle çok adımlı akıl yürütme, matematik ve kod görevlerinde güçlüdür; bu da, daha sonraki katmanların, bulanık bir toplamı devralmak yerine önceki temsilleri seçici olarak geri getirebilmesi durumunda, bileşimsel akıl yürütmenin iyileşeceği yönündeki makalenin temel hipoteziyle uyumludur.

Temel modele kıyasla, AttnRes eğitim boyunca daha düşük doğrulama kaybı, derinlik boyunca daha sınırlı çıktı büyüklükleri ve katmanlar arasında daha uniform gradyan büyüklükleri gösterir. Temel model, gizli durum büyüklüklerinin derinlikle tekdüze biçimde arttığı tipik PreNorm seyrelmesi deseninden muzdariptir; buna karşılık Blok AttnRes, blok sınırlarındaki seçici toplama sayesinde daha kontrollü, sınırlı bir desen üretir.

Ayrıca, sabit hesaplama ve sabit parametre taramasında, AttnRes için en uygun yapılandırmanın, temel modele kıyasla daha derin ve daha dar bir modele kaydığına dair daha geniş bir mimari bulgu da vardır. Bu, AttnRes’in standart artık toplamı altında olduğundan daha fazla derinliği faydalı kılabileceğini düşündürüyor.

Sonuç

Artıklar sıklıkla, yani gradyanları canlı tutan bir özdeşlik kestirmesi olarak, gerekli bir eğitim hilesi gibi ele alınır. AttnRes bu bakışın fazla dar olduğunu öne sürüyor. Artık yolları aynı zamanda bilginin derinlik boyunca yönlendirildiği mekanizmadır ve sabit toplamsal birikim, çok derin modeller için fazlasıyla ilkel kalabilir.

Attention Residuals, bilinen bir sorunu yamalamaktan ziyade, göz ardı edilmiş bir tasarım tercihinin yükseltilmesiyle ilgilidir. Dizi modelleme, sabit yineleme çok kısıtlayıcı olduğundan, yinelemeden dikkate evrildi. AttnRes, derinliğin de artık aynı dönüşüme hazır olabileceğini savunuyor.

Model hâlâ güçlü bir yerellik sergiliyor; katmanlar sıklıkla en çok yakın ardıllara dikkat ediyor; ancak aynı zamanda kayda değer atlama desenleri öğreniyor, gömme üzerinde kalıcı bir ağırlığı koruyor ve dikkat öncesi ile MLP öncesi katmanlar arasında farklı davranışı sürdürüyor.

Pratik çıkarım, tüm modellerin artıkların yerine tam derinlik boyu dikkati koyması gerektiği değildir. Bunun yerine, artık toplamını temel bir mimari tasarım alanı olarak yeniden çerçeveler.

Attention Residuals SSS

AttnRes, standart artık bağlantılardan nasıl farklıdır?

Standart artıklar, önceki katman çıktılarının sabit birim ağırlıklarla toplamıdır. AttnRes bunu, önceki katmanlar üzerinde öğrenilmiş softmax ağırlıklandırmasıyla değiştirir; böylece bir katman en ilgili önceki temsilleri seçici olarak geri getirebilir.

AttnRes hangi sorunu çözmeye çalışıyor?

AttnRes, derinlik altında uniform artık birikiminin sınırlılıklarını hedefler; özellikle gizli durum büyümesi, önceki katmanlara seçici erişimin kaybı ve daha geniş PreNorm seyrelmesi etkisi.

Tam AttnRes ile Blok AttnRes arasındaki fark nedir?

Tam AttnRes tüm önceki katman çıktıları üzerine dikkat uygular. Blok AttnRes ise katmanları bloklara ayırır ve bellek ile iletişimi O(Ld)’den O(Nd)’ye düşürerek blok özetleri ve kısmi toplamlar üzerine dikkat uygular.

AttnRes çıkarım gecikmesini artırır mı?

Evet, ancak makale, optimize edilmiş iki aşamalı uygulamanın, tipik çıkarım iş yüklerinde uçtan uca çıkarım gecikmesi ek yükünü %2’nin altında tuttuğunu bildiriyor.

En büyük bildirilen kazanımlar nelerdir?

Büyük Kimi Linear deneyinde, AttnRes değerlendirilen tüm görevlerde temel modele göre iyileşir; GPQA-Diamond, Math ve HumanEval kıyaslarında güçlü kazanımlar sağlar.

ML (Üretken Yapay Zekâ) alanında Google Developers Uzmanıyım, Kaggle 3x Expert unvanına sahibim ve 3+ yıllık teknoloji deneyimiyle Women Techmakers Elçisiyim. 2020'de bir sağlık teknolojileri girişiminin kurucu ortağı oldum ve Georgia Tech'te makine öğrenmesi alanında uzmanlaşarak bilgisayar bilimleri yüksek lisansı yapıyorum.