In 2026, 82% of enterprise leaders say their organization provides some form of AI training. And yet, 59% report an AI skills gap. If AI training is widely available, why isn’t it translating into workforce capability?

The answer isn’t lack of interest. It’s how training is designed.

In our 2026 survey of 500+ US and UK enterprise leaders, conducted with YouGov, leaders consistently identified structural flaws in corporate AI training programs, particularly around relevance, application, and measurement.

The problem is effectiveness, not access

Of the leaders surveyed:

- 82% offer some kind of AI training

- 68% say employees have access to AI learning resources

- 46% provide basic AI literacy training

But only 35% say they have a mature, organization-wide AI upskilling program.

Training exists; capability at scale does not. So what’s breaking down?

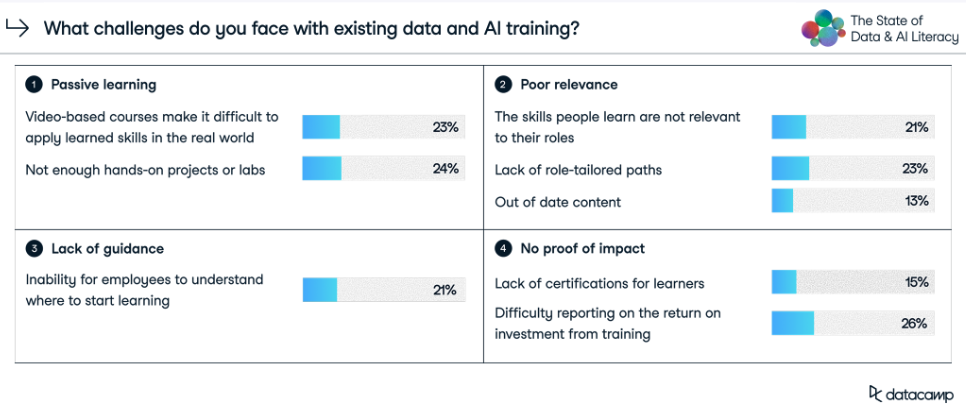

1. Passive learning dominates corporate AI training

The most common AI training format (40%) combines online learning with occasional instructor-led sessions. Leaders report that these formats struggle to build applied capability:

- 23% say video-based courses make it difficult to apply learned skills in the real world

- 24% cite a lack of hands-on projects or labs

Watching AI explained is not the same as using AI effectively. Without structured practice, employees may understand AI concepts but struggle to apply them in real workflows. The result: familiarity without fluency.

2. AI training isn’t role-relevant

Another consistent complaint is a lack of role tailoring. When asked about challenges with online learning, nearly a quarter (23%) of leaders say learning paths are not tailored to specific roles. Another 21% say employees struggle to understand where to start.

Generic AI literacy sessions often fail to connect to day-to-day responsibilities. An HR leader, a finance manager, and a marketing analyst use AI differently, yet many AI training programs treat them the same.

When AI learning isn’t mapped to real use cases, adoption becomes fragmented and inconsistent.

3. Organizations struggle to measure ROI from training

Traditional AI training also lacks clear performance measurement. Common complaints from leaders include:

- Difficulty reporting on the ROI of training programs (26%)

- Lack of certification or proof of skill (15%)

If organizations can’t measure whether AI training improves performance, it becomes difficult to justify sustained investment.

This is particularly problematic when:

- 35% of leaders cite time constraints as the top barrier to improving AI skills

- 31% cite lack of budget

Without clear ROI, AI training competes with, and often loses to, operational priorities.

4. One-off training can’t keep pace with AI’s evolution

AI tools evolve rapidly, yet many organizations still rely on one-time workshops or short-term learning initiatives.

AI literacy is not a static competency. It requires:

- Ongoing reinforcement

- Contextual practice

- Feedback loops

- Continuous adaptation

Traditional training models were built for slower-moving skills. AI capability demands a different system.

The consequence: Awareness without application

When AI training is passive, generic, and difficult to measure, the outcome is predictable:

- Employees experiment with AI but lack confidence

- Use cases remain superficial

- Risk increases due to overreliance or misunderstanding

- ROI from AI investments stagnates

Organizations with mature, workforce-wide upskilling programs are nearly twice as likely to report significant positive AI ROI. The full breakdown of how training maturity correlates with AI ROI is available in the 2026 State of Data & AI Literacy Report.

What effective AI training looks like instead

Leaders who report stronger workforce capability tend to move beyond traditional course-based models. Effective AI training programs are:

- Hands-on, emphasizing applied practice, not passive consumption

- Role-specific, mapped to real workflows

- Structured, with clear progression pathways

- Reinforced over time, not one-off sessions

- Measurable, tied to skill benchmarks and performance outcomes

In other words, they function as capability systems, not content libraries.

As a practical example, Bayer built a multi-tier Data Academy that strengthened enterprise-wide digital and AI fluency, with more than 90% of learners reporting innovation or process improvements.

When Bayer explored learning partners for its Data Academy, DataCamp stood out for both range and relevance. Its extensive course catalog, spanning fields like data analytics, statistics, machine learning, SQL, and generative AI, supports multiple learner personas through a single solution.

To move learners from theory to practice, Bayer pairs DataCamp learning with capstone projects. After completing courses, employees apply what they've learned to real Bayer use cases, ranging from greenhouse research to building neural networks, demonstrating their proficiency and identifying opportunities to create business value.

These outcomes weren’t driven by more AI tools. They were driven by better training design.

From content delivery to capability systems

The future of AI training is not more content. It’s better integration between learning and work.

Organizations that treat AI literacy as core infrastructure (embedded into workflows, reinforced over time, and measured against outcomes) are far more likely to close the AI skills gap and improve ROI.

DataCamp for Business is designed around this model, combining role-based learning paths, hands-on exercises, assessments, and workforce benchmarking to build applied AI capability at scale.

If you’re evaluating how to move beyond passive AI training, explore how DataCamp for Business supports enterprise AI upskilling.