Course

Функции активации решают, какие сигналы проходят через нейронную сеть, а какие нет. Если выбрать неправильную, модель будет учиться слишком медленно или не сможет обобщать. В течение многих лет разумным выбором по умолчанию была ReLU — она быстрая и достаточно хорошая для большинства задач.

GELU (Gaussian Error Linear Unit) это изменил. Сейчас это функция активации, стоящая за одними из самых мощных моделей, включая BERT и GPT.

В этой статье я разберу интуицию GELU, его формулу, сравнение с другими функциями активации и то, где его действительно стоит применять на практике.

Если вы совсем новичок в функциях активации в машинном обучении, прочитайте наш Гид для начинающих по выпрямленной линейной единице (ReLU).

Что такое функция активации GELU?

GELU, или Gaussian Error Linear Unit, — это функция активации, которая взвешивает входы по их величине с помощью плавного и вероятностного подхода.

Большинство функций активации принимают решение либо пропустить сигнал, либо заблокировать его. Например, ReLU обнуляет всё отрицательное и пропускает остальное без изменений. GELU работает иначе. Вместо жёсткого порога он плавно масштабирует входы в зависимости от их величины, то есть даже небольшие отрицательные значения всё ещё могут вносить вклад в выход.

Отличие от ReLU в том, что GELU гладкая и непрерывная повсюду. Нет резкого излома в нуле и никаких внезапных переходов. Эта гладкость важна при обучении, потому что даёт оптимизатору более чистую информацию о градиентах.

Интуиция GELU

Думайте о GELU как о фильтре, который не относится ко всем входам одинаково.

ReLU прямолинейна — всё отрицательное обнуляется всегда. А GELU задаётся вопросом: «насколько вероятно, что это входное значение будет полезным?» Явно большие положительные значения проходят почти без изменений. Малые или отрицательные — масштабируются вниз, а не отсекаются полностью.

В результате получается плавная кривая, которая подавляет менее релевантные сигналы, не отбрасывая их полностью.

Представьте, что вы просматриваете стопку резюме. Жёсткий фильтр исключит всех без диплома без исключений. Более умный фильтр всё же рассмотрит кандидатов «на грани», ведь у них может быть релевантный опыт, компенсирующий это. GELU работает как такой умный фильтр. Он не делает строгих отсечек, а взвешивает каждый вход по его величине и решает, сколько из него пропустить.

Именно такое постепенное и вероятностное масштабирование отличает GELU. Нет резких переходов и «мёртвых» нейронов — лишь плавное решение «пропустить или подавить» для каждого входного значения.

Формула GELU

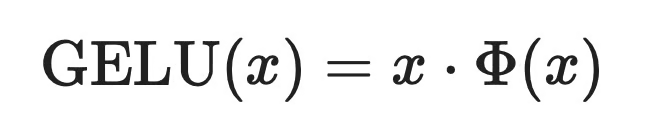

Точная формула GELU основана на функции распределения (CDF) нормального закона и записывается так:

Функция распределения нормального закона

где x — входное значение, а Φ(x) — это вероятность того, что случайная величина из стандартного нормального распределения меньше или равна x. Проще говоря, Φ(x) показывает, насколько «нормально» или ожидаемо входное значение — и именно эту вероятность GELU использует для масштабирования входа.

Чем выше вход, тем ближе Φ(x) к 1 — вход проходит почти без изменений. Чем ниже — тем ближе Φ(x) к 0 — вход подавляется.

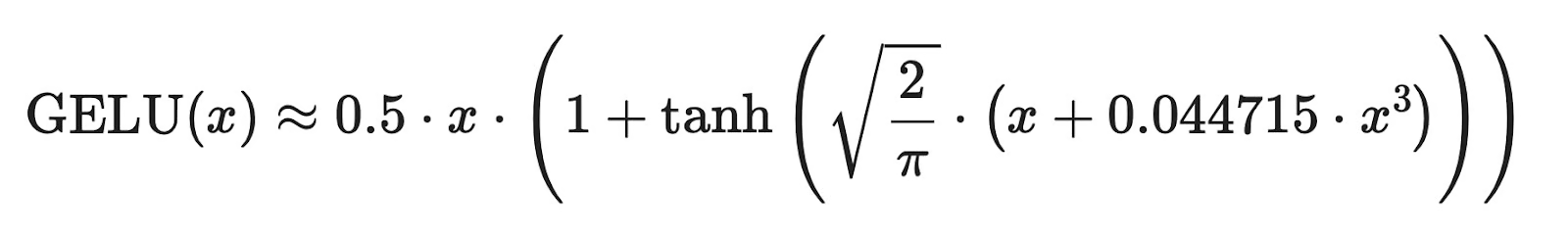

Аппроксимация, используемая на практике

Проблема точной формулы в том, что вычисление Φ(x) затратное. Оно включает функцию ошибок, у которой нет простого аналитического вида и которая медленно считается в больших масштабах.

Фреймворки глубокого обучения вместо этого используют такую аппроксимацию:

Аппроксимация формулы GELU

Эта аппроксимация использует tanh, которая быстра и хорошо поддерживается современным «железом». Результат практически идентичен точной формуле в диапазоне входов, который важен на практике, поэтому такие фреймворки, как PyTorch и TensorFlow, применяют её по умолчанию.

Разумеется, запоминать формулы не обязательно. Но полезно знать, что аппроксимация существует — и почему — чтобы понимать, что происходит при вызове GELU в вашем коде.

GELU и другие функции активации

Каждая функция активации по-своему обрабатывает входы, и эти различия сказываются на обучении модели.

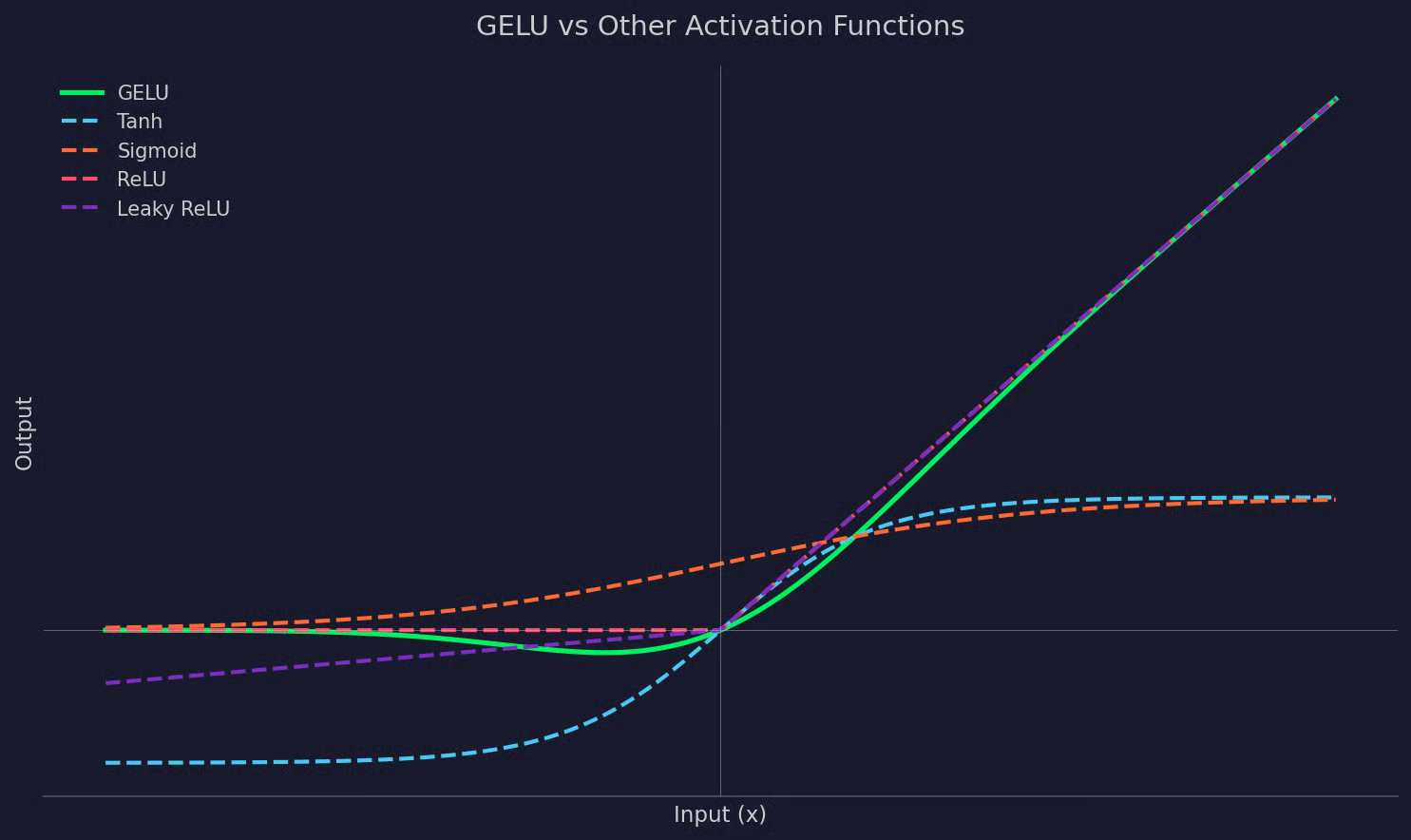

Вот как различия выглядят на графике перед текстовым объяснением:

График: GELU и другие функции активации

Sigmoid

Sigmoid сжимает все входы в диапазон от 0 до 1. Она гладкая, но имеет известную проблему: затухающие градиенты. Для очень больших или очень малых входов градиент стремится к нулю, из‑за чего глубокие слои перестают учиться. У GELU этой проблемы нет, потому что его градиент остаётся информативным на более широком диапазоне входов.

Tanh

Tanh похожа на Sigmoid, но центрирована в нуле, с выходами между -1 и 1. Она лучше обрабатывает отрицательные входы, чем Sigmoid, но всё равно страдает от затухающих градиентов на краях. GELU даёт более плавную выходную кривую и лучший поток градиентов в глубоких сетях.

ReLU

ReLU проста и быстра: положительные входы проходят без изменений, отрицательные обнуляются. Резкий порог в нуле приводит к проблеме «умирающих нейронов»: нейроны, которые долго получают отрицательные входы, полностью перестают обновляться. GELU избегает этого, не отсекая отрицательные входы, а масштабируя их.

Leaky ReLU

Leaky ReLU решает проблему умирающих нейронов, пропуская небольшую долю отрицательных входов. Это шаг вперёд по сравнению с ReLU, но переход в нуле остаётся резким. GELU даёт более плавную кривую в целом, что обычно лучше работает в глубоких архитектурах, где качество градиента особенно важно.

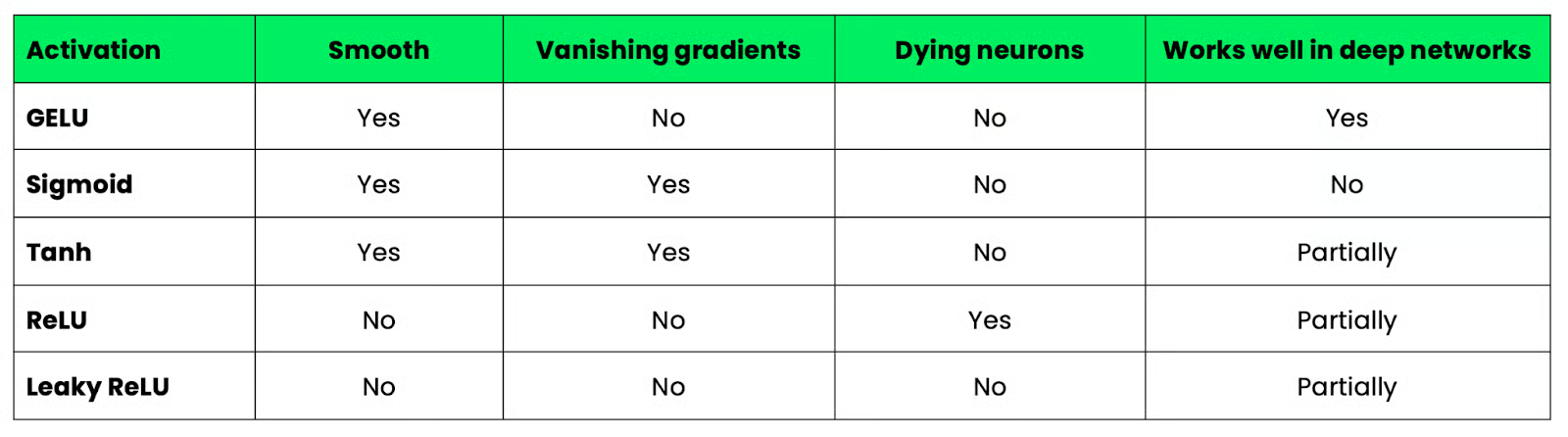

Итак, подытожим различия между этими пятью функциями активации:

Таблица: GELU и другие функции активации

Почему GELU используется в трансформерах

Трансформеры — это просто глубокие нейронные сети. И чем глубже сеть, тем больше значение имеет качество градиента.

Модели вроде BERT и GPT накладывают десятки слоёв друг на друга. На такой глубине небольшие проблемы с потоком градиентов накапливаются. Если ваша функция активации даёт нестабильные или почти нулевые градиенты в некоторых областях, ранние слои почти не обновляются при обучении, то есть мало чему учатся.

GELU избегает этого, сохраняя градиенты плавными и отличными от нуля на более широком диапазоне входов. Нет отсечки вроде нулевой границы ReLU, поэтому оптимизатор получает более чистый сигнал на каждом слое, а не только рядом с выходом.

Есть ещё одна причина, почему GELU хорошо вписывается в архитектуры трансформеров.

Трансформеры обрабатывают входы через механизмы внимания, которые порождают широкий диапазон значений активации — как положительных, так и отрицательных. Плавная функция активации лучше справляется с таким диапазоном, чем функция с резкими переходами.

Когда вышла оригинальная статья о BERT, авторы выбрали GELU вместо ReLU и сообщили о лучших результатах на своих бенчмарках. GPT пошёл тем же путём. С тех пор GELU стал функцией активации по умолчанию в большинстве архитектур на основе трансформеров — не потому, что это новинка, а потому, что он лучше работает в масштабах, характерных для этих моделей.

GELU на практике

Использовать GELU в ваших моделях так же просто, как и любые другие функции активации. И PyTorch, и TensorFlow имеют встроенную поддержку.

PyTorch

В PyTorch вы можете применять GELU как отдельный модуль или встроить в определение модели. Вот простой блочный модуль прямого распространения с GELU:

import torch

import torch.nn as nn

class FeedForwardBlock(nn.Module):

def __init__(self, input_dim, hidden_dim):

super().__init__()

self.fc1 = nn.Linear(input_dim, hidden_dim)

self.act = nn.GELU()

self.fc2 = nn.Linear(hidden_dim, input_dim)

def forward(self, x):

return self.fc2(self.act(self.fc1(x)))

block = FeedForwardBlock(input_dim=512, hidden_dim=2048)

x = torch.randn(8, 512)

output = block(x)nn.GELU() расположен между двумя линейными слоями — именно там он стоит в подслое прямого распространения трансформера. Активация выполняется после первой проекции и перед второй.

TensorFlow

В TensorFlow GELU доступен через API Keras:

import tensorflow as tf

from tensorflow import keras

model = keras.Sequential([

keras.layers.Dense(2048, input_shape=(512,)),

keras.layers.Activation("gelu"),

keras.layers.Dense(512)

])

x = tf.random.normal((8, 512))

output = model(x)Его также можно передать напрямую как строковый аргумент слою Dense:

keras.layers.Dense(2048, activation="gelu")Оба подхода дают один и тот же результат.

Где GELU уместен в сети

GELU располагается там же, где и любая другая функция активации — сразу после линейного преобразования и перед следующим слоем. В архитектурах трансформеров это внутри подслоя прямого распространения, между двумя плотными проекциями. В других глубоких сетях размещайте его после линейного или сверточного слоя, чтобы масштабировать выход перед передачей дальше.

Преимущества GELU

Если вы всё ещё читаете, вы уже знаете ключевые преимущества GELU по сравнению с другими функциями активации. Краткое резюме:

- Плавная активация: GELU даёт непрерывную, дифференцируемую кривую без резких переходов, что обеспечивает оптимизатор чистой информацией на каждом шаге.

- Лучший поток градиентов: GELU не обнуляет отрицательные входы, поэтому градиенты могут распространяться через нейроны, получающие отрицательные значения. Это снижает риск «смерти» нейронов во время обучения.

- Лучшая эффективность в глубоких моделях: В глубоких архитектурах, таких как трансформеры, совокупный эффект более плавных градиентов обычно приводит к лучшим результатам обучения по сравнению с простыми функциями активации.

Ограничения GELU

GELU подходит не всегда. Вот пара ограничений, о которых стоит помнить:

-

Дороже в вычислении, чем ReLU: GELU включает либо функцию ошибок, либо аппроксимацию на основе

tanh— обе дороже простого порогового оператора ReLU. В больших моделях с множеством слоёв это может накапливаться. -

Менее интуитивен: Про функции вроде ReLU легко рассуждать — положительные значения проходят, отрицательные — нет. Вероятностное масштабирование GELU сложнее интерпретировать.

-

Не всегда необходимо: Для неглубоких сетей или простых задач GELU не даёт значимых преимуществ. ReLU или Leaky ReLU часто справляются так же хорошо при меньших вычислительных затратах.

В итоге, если вы строите трансформер или другую глубокую архитектуру, GELU — крепкий выбор по умолчанию. Во всех остальных случаях оценивайте на бенчмарках, прежде чем останавливаться на нём.

Заключение

GELU — не универсальное улучшение и не «один размер для всех», который заменяет ReLU. Это осознанный конструкторский выбор, оправданный в конкретных контекстах — в глубоких сетях и моделях‑трансформерах.

Если вы работаете с BERT, GPT или любой моделью на основе трансформеров, вы уже используете GELU — осознанно или нет. Теперь вы знаете, почему он там.

Во всём остальном выбор функции активации — это баланс компромиссов. Нет функции, которая побеждает всегда, и понимание работы каждой позволяет принимать решение уверенно, а не по привычке.

Если различия между функциями активации всё ещё кажутся запутанными, запишитесь на наш трек «Machine Learning Engineer», чтобы получить карьерные навыки в ML и MLOps.

FAQs

Что такое функция активации GELU?

GELU (Gaussian Error Linear Unit) — это функция активации, используемая в нейронных сетях. В отличие от ReLU, GELU плавно масштабирует входы в зависимости от их величины, используя вероятностный подход. Это делает её более подходящей для глубоких архитектур, где качество градиента критично.

Чем GELU отличается от ReLU?

ReLU с жёстким порогом в нуле обнуляет все отрицательные входы, из‑за чего нейроны могут перестать обучаться — это проблема «умирающих нейронов». GELU избегает этого, постепенно масштабируя отрицательные входы вместо их отсечения. В итоге вы получаете более плавный поток градиентов и лучшую работу глубоких сетей.

Когда стоит использовать GELU вместо других функций активации?

Лучше всего GELU работает в глубоких архитектурах, особенно в трансформерах, таких как BERT и GPT. Для неглубоких сетей или простых задач вычислительные накладные расходы редко оправдывают отказ от ReLU. Начните с ReLU как с бенчмарка — при необходимости всегда можно протестировать другие варианты.

В чём разница между точной формулой GELU и аппроксимацией?

Точная формула GELU использует функцию распределения нормального закона, для вычисления которой требуется функция ошибок — операция, медленная в больших масштабах. В аппроксимации она заменена выражением на основе tanh, которое быстрее и хорошо поддерживается современным «железом». На практике оба варианта дают почти идентичные результаты, поэтому большинство фреймворков по умолчанию используют аппроксимацию.

Работает ли GELU и в PyTorch, и в TensorFlow?

Да, оба фреймворка поддерживают GELU. В PyTorch вы можете использовать nn.GELU() как модуль внутри определения модели. В TensorFlow можно передать строку "gelu" в аргумент activation любого слоя или использовать keras.layers.Activation("gelu").