
Most AI tool comparisons treat this as a spec sheet contest: who has more context, who scores higher on benchmarks, who costs less per month. That framing misses the more useful question, which is what kind of work you actually do, and whether the tool fits it.

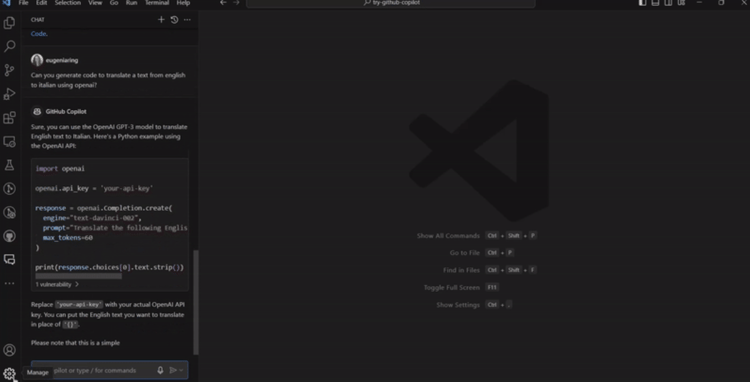

GitHub Copilot and Claude Code represent different answers to that question. Copilot started as an IDE assistant and grew into a multi-surface platform. Claude Code was built as an autonomous agent from the start and has never pretended to be anything else. That difference in origin still shapes how each tool feels on a normal workday, and it is a better frame for this comparison than any benchmark number.

One thing worth flagging: Cursor is a legitimate third option, building a full IDE around AI rather than adding AI to an existing one. This article does not cover it, but if you are evaluating the space, it belongs in the conversation.

What Are Claude Code and GitHub Copilot?

Before comparing them directly, it helps to look at each tool on its own.

What is Claude Code?

Claude Code is Anthropic's agentic coding tool. It runs as a command-line interface (CLI): you install it, navigate to your project, run the claude command, and give it tasks in plain English. It reads your codebase, edits files across multiple locations, runs shell commands, checks test output, and adjusts based on what it learns along the way.

It works through what Anthropic calls an agentic loop: gather context, take action, verify results, then repeat. You might say "fix the failing tests in the auth module," and Claude Code will run the tests, read the error output, find the relevant files, make edits, run the tests again, and keep going until it finishes or hits a blocker. You can interrupt and redirect at any point.
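To make the loop concrete, here is a toy Python sketch of the gather-act-verify cycle. It is not how Claude Code is implemented: ToyProject, run_tests, and edit are hypothetical stand-ins, and "fixing" a file simply removes it from a set of known bugs. The point is the control flow: each test run gathers context for the next edit and verifies the previous one, and a step limit plays the role of a blocker.

```python
from dataclasses import dataclass, field


@dataclass
class RunResult:
    passed: bool
    failing: list  # paths of files whose tests fail


@dataclass
class ToyProject:
    """Hypothetical stand-in for a repo whose tests fail until each bug is fixed."""
    bugs: set = field(default_factory=lambda: {"auth/session.py", "auth/token.py"})

    def run_tests(self) -> RunResult:
        failing = sorted(self.bugs)
        return RunResult(passed=not failing, failing=failing)

    def edit(self, path: str) -> None:
        # Pretend the edit fixes the bug in this file.
        self.bugs.discard(path)


def agentic_loop(project: ToyProject, max_steps: int = 10) -> list:
    """Gather context, take action, verify, repeat until done or out of steps."""
    log = []
    for step in range(max_steps):
        # Gather context: run the tests and read which files fail.
        result = project.run_tests()
        # Verify: stop as soon as the latest run is green.
        if result.passed:
            log.append(f"step {step}: tests pass, done")
            return log
        # Take action: edit the first failing file; the next pass re-runs the tests.
        target = result.failing[0]
        log.append(f"step {step}: editing {target}")
        project.edit(target)
    log.append("stopped: step limit reached, needs human input")
    return log


for line in agentic_loop(ToyProject()):
    print(line)
```

Running it logs two edits followed by a passing test run; with max_steps=1 it stops early and hands control back, which is roughly what "hits a blocker" looks like from the outside.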

As of April 2026, Claude Code is available beyond the terminal. There is a VS Code extension, a JetBrains plugin (still in Beta), a rebuilt desktop app, a web version at claude.ai/code, and an iOS app. "Terminal-only" is no longer accurate, though the terminal is still where it is most complete.

You describe a task, it works on it, you review the result.

Two tools, two different starting points. Image by Author.

What is GitHub Copilot?

GitHub Copilot is harder to describe because it is now many things at once. It started as inline code completion and has since expanded into IDE extensions, a terminal CLI, a cloud agent, a code review tool, and Copilot Spaces for persistent project context.

The most used part is still the inline experience. As you type, Copilot suggests the next line or block of code, and you accept or ignore it. Agent mode, available in VS Code since April 2025 and in JetBrains since March 2026, lets it edit across multiple files at once. The cloud agent goes further: it works asynchronously on GitHub itself, opening pull requests after you have logged off.

The model flexibility is worth noting. In a single session, you can switch between GPT-4.1, GPT-5.4, Claude Sonnet 4.6, Claude Opus 4.7, or Gemini 3.1 Pro, depending on your plan.

Claude Code vs. GitHub Copilot: Key Differences

The biggest difference is execution model. Claude Code is agent-first: every interaction goes through a plan-execute-verify loop. Copilot is platform-first, offering inline autocomplete, chat, agent mode, and cloud agents as separate surfaces.

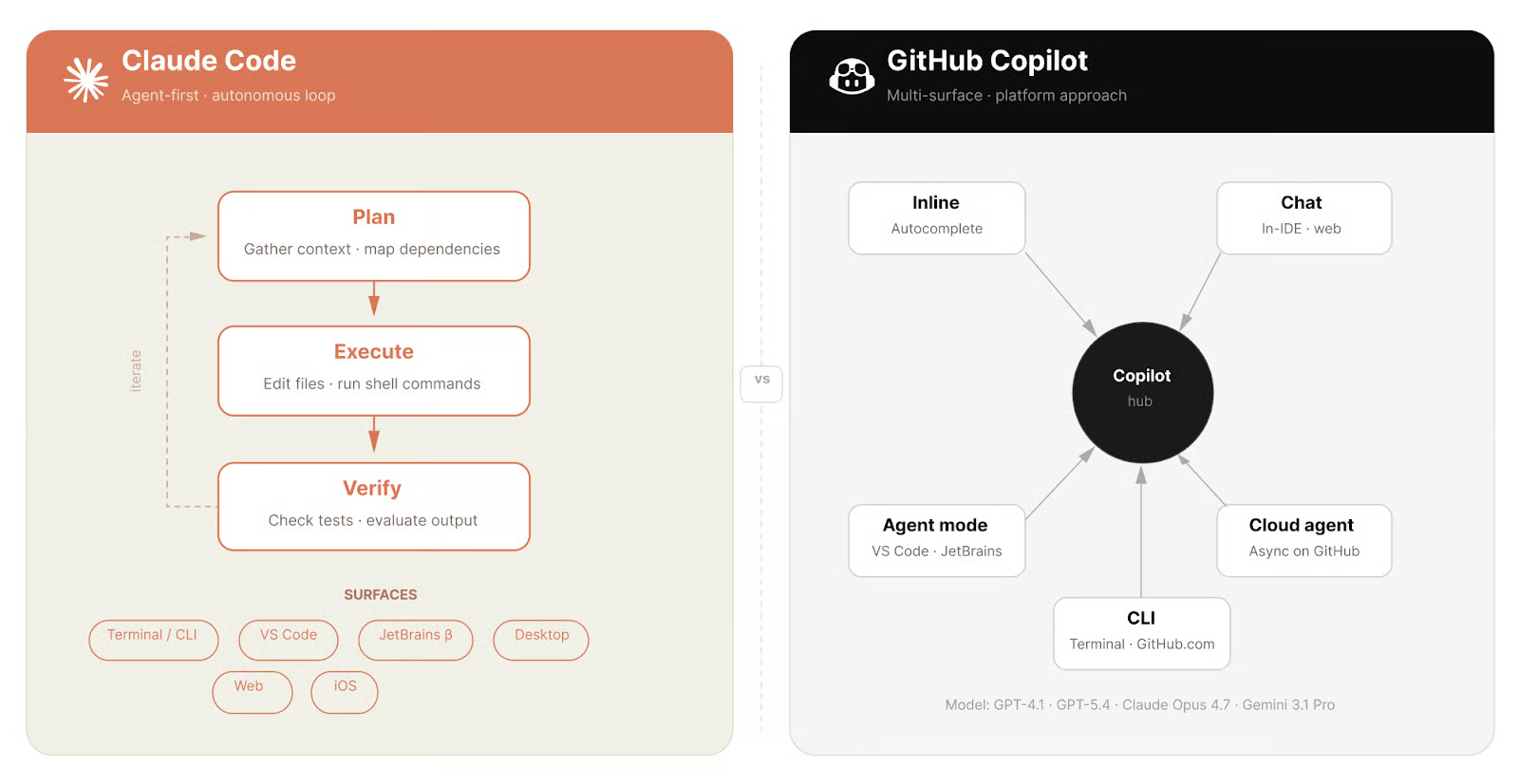

Context works differently too. Claude Code holds your codebase, project rules, and session history in a single context window of up to 1 million tokens with the Opus and Sonnet 4.6 models. Copilot assembles context through retrieval: workspace indexing, Copilot Spaces, and repository instructions. The difference shows up most on large refactors where cross-file dependencies are not obvious upfront.

And then there is the most noticeable thing: Claude Code has no inline autocomplete. Copilot does. If code appearing as you type is central to how you work, that is the comparison that matters most.

| Dimension | Claude Code | GitHub Copilot |
|---|---|---|
| Execution model | Agent-first loop (plan, execute, verify) | Multi-surface platform (inline, chat, agent, cloud) |
| Inline autocomplete | No | Yes |
| Context model | Up to 1M tokens in-session | Retrieval-based (indexing, Spaces, repo instructions) |
| Multi-agent | Agent Teams (experimental) | /fleet command (April 2026) |
| IDE support | VS Code, JetBrains (Beta), desktop, web | 8+ editors natively, plus GitHub.com |
| GitHub.com integration | Via MCP server | Native (cloud agent works on PRs and issues directly) |
| Model choice | Claude models only | GPT-4.1, GPT-5.4, Claude Opus 4.7, Gemini 3.1 Pro, others |
| Free tier | No | Yes |
| Starting price | $20/month | $10/month, or $0 on Free |

Claude Code vs. GitHub Copilot for Developer Workflows

The right tool often depends on which part of the development cycle you are in.

Writing new code

Copilot is faster. The inline suggestions are context-aware, and it can predict your next edit before you make it. If you are writing a lot of new code in an established repo, Copilot keeps you moving.

Claude Code takes longer to start because it plans before it writes. For a simple function, that overhead is not worth it. For code with complex dependencies or architecture constraints, the output tends to be more coherent.

Refactoring large codebases

This is where the context window difference matters in practice. Claude Code can hold the entire relevant codebase in its context window and make changes across it without losing track of what it already modified.

Copilot's agent mode handles multi-file edits well for targeted refactors. Context assembled through retrieval can miss cross-file dependencies in very large repositories, which is where the gap shows. For an extended autonomous refactor across dozens of files, Claude Code is the more reliable choice. That said, Copilot's agent mode has improved enough that for many day-to-day refactors, you may not notice a difference.

Debugging

Claude Code can trace errors across file boundaries, reproduce the issue in the terminal, and iterate until the bug is resolved without you switching context. Copilot is better for in-file debugging: inline fixes and Chat reasoning across open files. If the bug is isolated, Copilot is faster. If it spans several modules, Claude Code is usually the right tool.

Code Quality and Reasoning

Claude Code leads on SWE-bench Verified, the standard benchmark for coding agents on real GitHub issues. Claude Opus 4.7, generally available since April 16, 2026, scores 87.6%. Earlier Claude models scored around 80.8 to 80.9%.

GitHub Copilot does not publish a unified benchmark score as a product, because performance depends on which model is selected. With Claude Opus 4.7 selected inside Copilot, you are using the same model that drives Claude Code's top tier. What differs is the scaffolding: how context gets assembled and how errors are handled. The same model performs differently depending on what wraps it. Running Opus 4.7 inside Copilot also has a pricing implication I will get to.

Both tools can reason through complex problems. In Copilot Chat, particularly with Opus 4.7 selected, that happens through the chat interface when you ask for it. In Claude Code, it is chain-of-thought reasoning that runs automatically before every task: you can watch it work through an approach in the terminal before it writes a line.

Multi-File and Codebase Awareness

Claude Code loads your project directory, session history, and project-level instructions into a single context window. At up to 1 million tokens, most real codebases fit, though very large monorepos are a different story.
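Whether a project fits is easy to ballpark. The sketch below assumes roughly four characters per token, a common rule of thumb that can be noticeably off for code, plus an illustrative list of file extensions and skipped directories; exact counts need the model's own tokenizer. It also holds back a hypothetical 300,000-token reserve for instructions, session history, and tool output.

```python
import os

CHARS_PER_TOKEN = 4            # rough rule of thumb; real tokenizers vary, especially on code
CONTEXT_WINDOW = 1_000_000     # "up to 1 million tokens"
RESERVE_TOKENS = 300_000       # hypothetical headroom for instructions, history, tool output
SOURCE_EXTENSIONS = {".py", ".ts", ".js", ".go", ".java", ".md"}  # illustrative filter
SKIP_DIRS = {".git", "node_modules", "dist", "build"}              # illustrative filter


def estimate_tokens(root: str) -> int:
    """Approximate token count of source files under root (chars // CHARS_PER_TOKEN)."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune vendored and generated directories in place so os.walk skips them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total_chars += len(f.read())
    return total_chars // CHARS_PER_TOKEN


def fits_in_window(root: str) -> bool:
    # True if the estimate leaves the reserved headroom free.
    return estimate_tokens(root) <= CONTEXT_WINDOW - RESERVE_TOKENS
```

Calling fits_in_window on a project root gives a quick yes or no; a monorepo that comes back well over budget is the case where retrieval-based context starts to look attractive.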

Copilot handles this through retrieval. Copilot Spaces let you pull together repositories and documentation as a reusable context source. Copilot Memory, currently in preview, stores learnings across sessions. This approach scales to codebases too large for any single context window.
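A toy example shows the trade-off retrieval makes. This is a deliberately simple keyword-overlap ranker, not Copilot's actual indexing, and the mini-repo is made up: the query mentions login and tokens, so config/keys.py, which defines the SECRET that auth/token.py depends on, never makes the top two.

```python
import re
from collections import Counter


def tokenize(text: str) -> list:
    # Lowercase letter runs only; identifiers like issue_token split into "issue", "token".
    return re.findall(r"[a-z]+", text.lower())


def retrieve(query: str, files: dict, k: int = 2) -> list:
    """Rank files by how often query words appear in them; return the top k paths."""
    query_words = set(tokenize(query))
    scores = {}
    for path, text in files.items():
        counts = Counter(tokenize(text))
        scores[path] = sum(counts[w] for w in query_words)
    # Highest score first; ties broken by path so the output is deterministic.
    ranked = sorted(scores, key=lambda p: (-scores[p], p))
    return ranked[:k]


# Hypothetical mini-repo: auth/token.py depends on SECRET defined in config/keys.py.
files = {
    "auth/login.py": "def login(user): token = issue_token(user); return token",
    "auth/token.py": "def issue_token(user): return sign(user, SECRET)",
    "config/keys.py": "SECRET = load_from_env()",
    "billing/invoice.py": "def invoice(order): return order.total",
}

print(retrieve("fix login token bug", files))
```

Real retrieval systems are far more sophisticated, but the failure shape is the same: a file that matters only through an indirect dependency can rank below the cutoff.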

Context size is not the story. Image by Author.

Developer Experience and Integration

The day-to-day feel is where they diverge most.

GitHub Copilot

Copilot is built around not changing how you work. The extension installs in one click, suggestions appear as you type, and the chat interface has no learning curve. It runs natively across eight editors and on GitHub.com. The Copilot CLI, generally available since February 2026, adds terminal interaction. You can also define project-specific rules in a repository instructions file that loads automatically.

Agent HQ, available to Pro and Business subscribers since February 2026, lets Claude and Codex act as first-party coding agents inside GitHub. You can run Claude-powered tasks directly from GitHub.com through Copilot's billing.

Claude Code

Claude Code requires a more deliberate setup. You install it from the command line, navigate to your project, and start a session. The VS Code extension integrates the CLI into the editor with inline diffs and a conversation sidebar. The rebuilt desktop app, released April 14, 2026, adds parallel sessions, a diff viewer, and SSH support.

The JetBrains plugin is still in Beta as of April 2026. Visual Studio 2026 is not yet supported. If either is where you spend most of your time, you can skip Claude Code for now.

The learning curve is real. There are slash commands, permission modes, and project configuration files that take time to learn. Claude Code also uses a CLAUDE.md file in your project root to carry conventions and rules across sessions, so the agent does not start fresh every time. None of it is difficult, but there is more upfront investment than Copilot asks for.

Pricing and Cost Structure

Both tools have moved away from flat-rate pricing, and the details matter more than they appear to.

GitHub Copilot's plans pair a monthly price with a premium-request allowance:

| Plan | Monthly Price | Premium Requests/Month |
|---|---|---|
| Free | $0 | 50 (plus 2,000 completions) |
| Pro | $10 | 300 |
| Pro+ | $39 | 1,500 |
| Business | $19/user | 300/user |
| Enterprise | $39/user | 1,000/user |

Standard models like GPT-4.1 and GPT-5 mini do not consume premium requests on paid plans. Higher-end models apply multipliers. Claude Opus 4.7 applies a 7.5x multiplier on a promotional basis until April 30, 2026, meaning one query consumes 7.5 premium requests from your monthly allowance. Requests beyond your limit cost $0.04 each.

If you use Claude Opus 4.7 on a Pro plan, your 300 monthly requests run out after 40 queries (300 / 7.5). Pro+ stretches the same model to 200 queries a month.
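The multiplier arithmetic is plain division. A minimal sketch, using the Pro and Pro+ allowances from the table and the promotional 7.5x rate, which may change after April 30, 2026:

```python
# Monthly premium-request allowances from the Copilot table (Pro and Pro+ only).
ALLOWANCES = {"Pro": 300, "Pro+": 1_500}
OPUS_MULTIPLIER = 7.5  # promotional rate; may change after April 30, 2026


def premium_queries(allowance: int, multiplier: float) -> int:
    # Each query on a premium model consumes `multiplier` premium requests,
    # so the allowance covers allowance / multiplier whole queries.
    return int(allowance // multiplier)


for plan, allowance in ALLOWANCES.items():
    print(f"{plan}: {premium_queries(allowance, OPUS_MULTIPLIER)} Opus 4.7 queries/month")
```

Swapping in a different multiplier is the quickest way to re-check the numbers once the promotional rate ends.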

Claude Code uses subscription tiers with no additional metered costs within each plan:

| Plan | Monthly Price | Access |
|---|---|---|
| Pro | $20 | Claude Code with Sonnet 4.6; limited Opus access |
| Max 5x | $100 | 5x Pro usage limits, full Opus 4.6/4.7 access |
| Max 20x | $200 | 20x Pro usage limits, Agent Teams |

Claude Code has no free tier. The API is available for pay-per-token usage; see Anthropic's platform documentation for current rates.

For occasional agentic work, Copilot Pro at $10/month is the better starting point. For heavy autonomous use, Claude Code Max at $100/month is often more predictable because you are not managing per-request multipliers.
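One way to put a number on "heavy" is to find where Copilot Pro+ overage charges pass Claude Max 5x's flat $100. The sketch assumes every query goes to Opus 4.7, that overage is billed at $0.04 per premium request with the same 7.5x multiplier applied, and it ignores Claude Code's own usage limits, so treat the result as a rough threshold rather than a quote.

```python
COPILOT_PRO_PLUS = 39.00     # $/month
PRO_PLUS_ALLOWANCE = 1_500   # premium requests/month
OVERAGE_PER_REQUEST = 0.04   # $ per premium request beyond the allowance
OPUS_MULTIPLIER = 7.5        # promotional rate; assumed to apply to overage too
CLAUDE_MAX_5X = 100.00       # $/month, flat


def copilot_monthly_cost(opus_queries: int) -> float:
    """Pro+ bill for a month of Opus 4.7 queries, including overage."""
    requests_used = opus_queries * OPUS_MULTIPLIER
    overage = max(0.0, requests_used - PRO_PLUS_ALLOWANCE)
    return round(COPILOT_PRO_PLUS + overage * OVERAGE_PER_REQUEST, 2)


def break_even_queries() -> int:
    """Smallest monthly Opus query count at which Pro+ costs more than Max 5x."""
    n = 0
    while copilot_monthly_cost(n) <= CLAUDE_MAX_5X:
        n += 1
    return n


print(copilot_monthly_cost(300), break_even_queries())
```

Under those assumptions, Pro+ stays at or under $100 up to roughly 400 Opus queries a month; past that point, the flat subscription is both cheaper and easier to predict.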

When to Use Claude Code vs. GitHub Copilot

Choose Copilot if inline autocomplete is central to how you work, if you want minimal disruption to your current setup, or if your team is on GitHub Enterprise and needs native PR and code review integration. It also wins on model flexibility and cost: you can switch between GPT-4.1, Claude Opus 4.7, and others in a single session, and the free tier is a real on-ramp that Claude Code does not offer.

Choose Claude Code if you regularly work across large codebases where multi-file reasoning matters, if you prefer handing off a task and reviewing the result rather than co-piloting line by line, or if you work heavily in the terminal or over SSH. It is also the better fit for longer autonomous sessions and for building agent workflows with MCP integrations and sub-agents.

Use both if your work splits between fast in-editor coding and longer autonomous tasks. The typical setup is Copilot in the IDE for day-to-day work, quick edits, and PR reviews, with Claude Code in the terminal for the heavier jobs. And as mentioned earlier, Agent HQ lets Claude act as a coding agent directly inside GitHub Copilot, so the two are less separate than they look on paper.

Conclusion

Copilot fits alongside how you already work; Claude Code changes how you work. Neither is the better tool in absolute terms. They are built for different things, and the right choice comes down to how you actually spend your time writing code.

Both tools are still moving fast. Claude Code's rate-limit problems in March 2026 frustrated a lot of users, and Copilot's premium-request multipliers make cost planning harder than it should be. This comparison will look different in six months.

If you want to go deeper on the model side, our Introduction to Claude Models course covers working with the Anthropic API directly.

I’m a data engineer and community builder who works across data pipelines, cloud, and AI tooling while writing practical, high-impact tutorials for DataCamp and emerging developers.

FAQs

Does Claude Code work inside VS Code and JetBrains, or is it terminal-only?

Claude Code runs in both, though the experience differs. The VS Code extension handles inline diffs and plan review well, which makes it good for shorter tasks where you want to stay in the editor. For longer autonomous runs across many files, the terminal is easier to monitor. The JetBrains plugin works but is still in Beta as of April 2026, so rough edges are possible.

Can GitHub Copilot use Claude models?

Yes. Claude Sonnet 4.6 and Claude Opus 4.7 are both selectable inside Copilot. Opus 4.7 applies a 7.5x premium request multiplier on a promotional basis until April 30, 2026. The post-promotion rate had not been announced at the time of writing, so check current rates before counting on Opus 4.7 fitting your monthly allowance.

Which tool is better for a solo developer versus a team?

For solo developers, it mostly comes down to workflow: Copilot if you live in an IDE, Claude Code if you prefer terminal-based work. For teams, Copilot has a bigger head start on admin tooling: policy management, audit logs, and seat management at the Business and Enterprise tiers. Claude Code's team plans cover shared billing and SSO, but the organizational controls are thinner.

How do I decide which plan to start with?

For Copilot, the free tier is a real starting point: 50 premium requests and 2,000 completions is enough to evaluate both inline suggestions and basic agent use. For Claude Code, there is no free tier; Pro at $20/month is the entry point. If you mostly use standard models, Copilot Pro at $10/month stretches further than the price suggests. The cost math changes once you start using Opus 4.7 on either platform.