
Google has just introduced Gemma 4, describing it as its most intelligent open model family so far, built for strong reasoning and agentic workflows. Gemma models are designed to be flexible across environments, with official support and tooling for local development, cloud deployment, and model customization, which makes them a strong choice for fine-tuning projects.

In this tutorial, we will fine-tune Gemma 4 E4B-it on a human emotion classification dataset from Hugging Face. We will set up a 3090 GPU environment, load and inspect the dataset, prepare and format the data for supervised fine-tuning, load the base model, run baseline evaluation before training, fine-tune the model, and then evaluate its performance again after training.

1. Setting Up the Environment

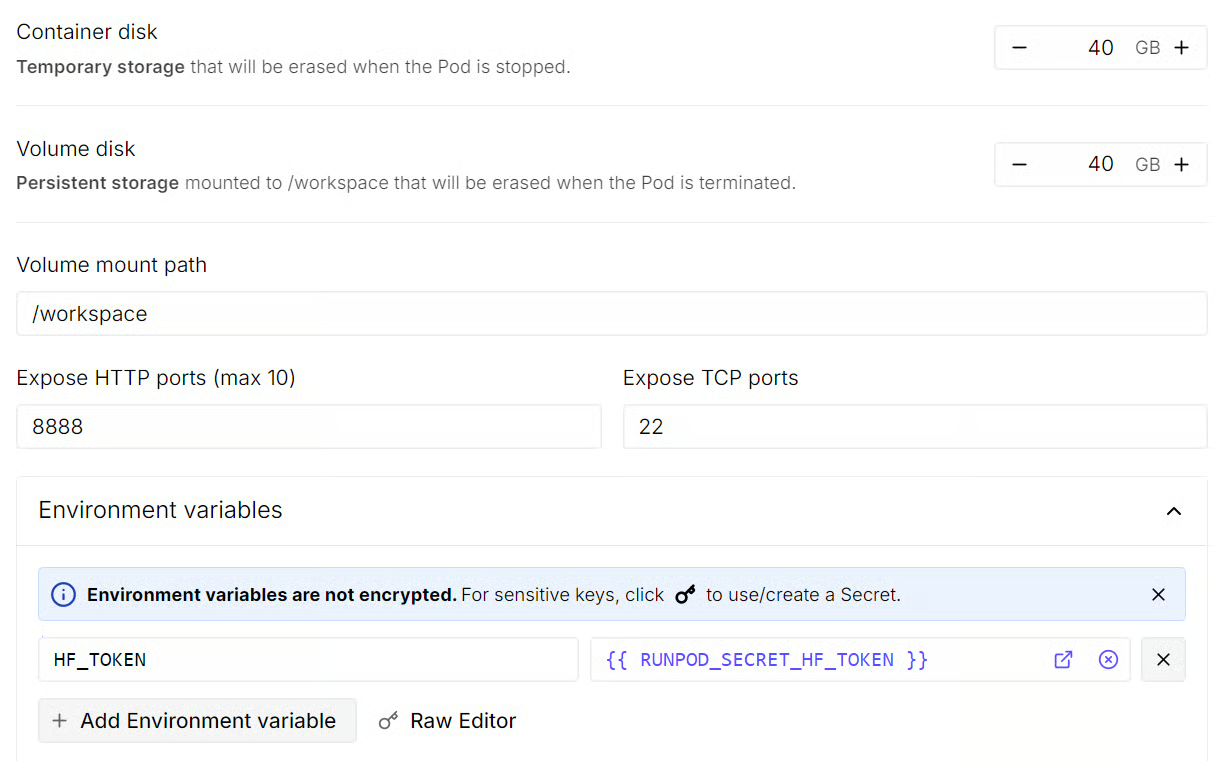

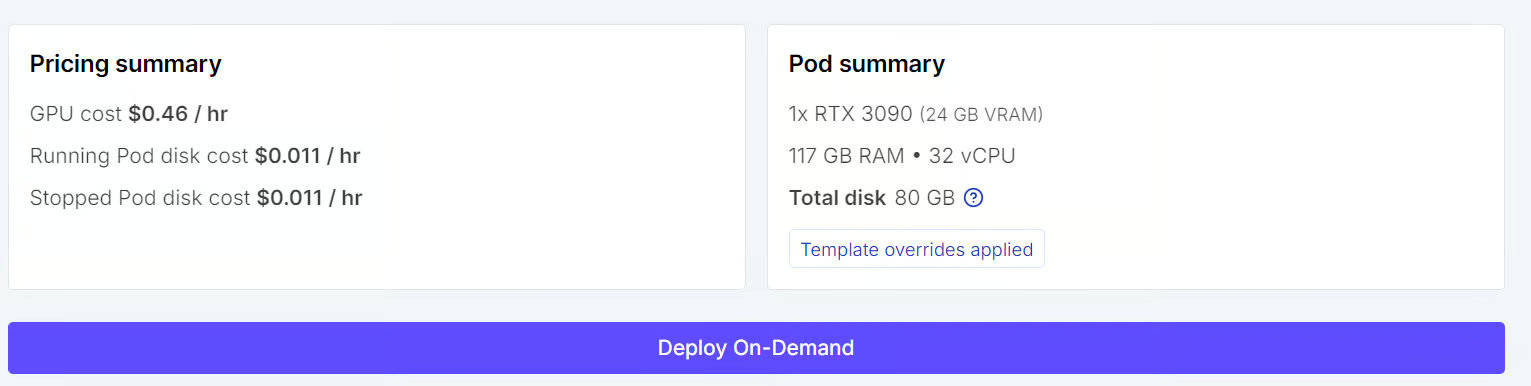

Start by launching a new Runpod instance, and make sure your account has at least $5 in credit before you begin. For this tutorial, choose a 3090 GPU pod and select the latest PyTorch template.

Before deploying, open the template settings and make a few updates. Increase both the container disk and volume disk to 40 GB so you have enough space for the model, dataset, cached files, and training checkpoints.

You should also add your Hugging Face token as an environment variable. You can generate this token from Settings > Access Tokens in your Hugging Face account.

Once these settings are in place, go ahead and deploy the pod. It may take a minute or two for the instance to start. After it is ready, open the JupyterLab interface so you can begin working inside the environment.

The first thing to do in JupyterLab is to create a new Python notebook and install the required Python packages. Run the following command in a notebook cell:

    %%capture
    !pip install -U transformers accelerate datasets trl peft bitsandbytes scikit-learn huggingface_hub

These packages will cover the full workflow, including loading the dataset, preparing the model, fine-tuning, and evaluation.

The last step is to sign in to the Hugging Face Hub using your saved token. This gives you access to the gated model and also makes it easier to upload files, create repositories, and push your fine-tuned model later.

    import os
    from huggingface_hub import login

    hf_token = os.environ.get("HF_TOKEN")
    if not hf_token:
        raise ValueError("Set HF_TOKEN in the RunPod environment before running this notebook.")

    login(token=hf_token)
    print("Logged in to Hugging Face.")

2. Load and Prepare the Emotion Dataset

Now that the environment is ready, the next step is to load the emotion dataset from Hugging Face and prepare smaller splits for training and evaluation.

For this tutorial, we are not using the full dataset. Instead, we create limited train, validation, and test splits so the fine-tuning process stays faster and easier to run on a single GPU.
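The subsetting step amounts to a seeded shuffle followed by truncation. As a minimal, library-free sketch of that idea (the helper `limit_examples` and the toy list are hypothetical stand-ins for a dataset split, not the `datasets` API):

```python
import random

def limit_examples(examples, limit, seed=42):
    """Shuffle a copy of `examples` with a fixed seed, then keep at most `limit` items."""
    shuffled = list(examples)              # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)  # seeded, so the subset is reproducible
    if limit is None:
        return shuffled
    return shuffled[:min(limit, len(shuffled))]

toy_split = [f"example-{i}" for i in range(10)]  # made-up data for illustration
subset = limit_examples(toy_split, limit=4)
print(len(subset))
print(subset == limit_examples(toy_split, limit=4))  # same seed, same subset
```

Shuffling before truncating matters because taking the first rows of an unshuffled split could give a subset whose label mix differs from the full data.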

    from datasets import load_dataset, DatasetDict

    # Maximum number of examples to keep per split (None keeps the whole split).
    TRAIN_LIMIT = 4000
    VALIDATION_LIMIT = 400
    TEST_LIMIT = 400
    EVAL_LIMIT = 400

    raw_dataset = load_dataset("dair-ai/emotion")

    def maybe_limit(split, limit):
        # Shuffle with a fixed seed so the subset is reproducible.
        split = split.shuffle(seed=42)
        if limit is None:
            return split
        return split.select(range(min(limit, len(split))))

    dataset = DatasetDict({
        "train": maybe_limit(raw_dataset["train"], TRAIN_LIMIT),
        "validation": maybe_limit(raw_dataset["validation"], VALIDATION_LIMIT),
        "test": maybe_limit(raw_dataset["test"], TEST_LIMIT),
    })

    dataset

The final dataset contains 4,000 training examples, 400 validation examples, and 400 test examples.

    DatasetDict({
        train: Dataset({
            features: ['text', 'label'],
            num_rows: 4000
        })
        validation: Dataset({
            features: ['text', 'label'],
            num_rows: 400
        })
        test: Dataset({
            features: ['text', 'label'],
            num_rows: 400
        })
    })

Next, we look at the label names stored in the dataset. These are the emotion classes the model will learn to predict.

    label_names = dataset["train"].features["label"].names
    label_names

This shows that the task has six emotion categories: sadness, joy, love, anger, fear, and surprise.

    ['sadness', 'joy', 'love', 'anger', 'fear', 'surprise']

We can also inspect an example from the training split to see how the data is structured.

    dataset["train"][0]

Each example contains a piece of text and a numeric label. In this case, the label 4 maps to fear based on the label list above.

    {'text': 'while cycling in the country', 'label': 4}

3. Formatting Data for Gemma 4 Fine-Tuning

Before we can fine-tune the model, we need to convert the dataset into the format Gemma 4 will use during training.

Instead of passing only raw text and labels, we structure each example as a short chat interaction with a system message, a user message, and the expected assistant response.

The system prompt tells the model exactly what task it should perform. In this case, we want the model to act as an emotion classification assistant and return only one of the six allowed labels.

    SYSTEM_PROMPT = """You are an emotion classification assistant.
    Read the user's text and answer with exactly one label.
    Only choose from: sadness, joy, love, anger, fear, surprise.
    Return only the label and nothing else."""

In this setup, the user message contains the input text we want to classify, and the assistant message contains the correct label. This is the format used for supervised fine-tuning, where the model learns to generate the right response for each training example.

    def to_prompt_completion(example):
        text = example["text"]
        label = label_names[example["label"]]
        return {
            "prompt": [
                {
                    "role": "system",
                    "content": SYSTEM_PROMPT,
                },
                {
                    "role": "user",
                    "content": f"Classify the emotion of this text:\n\n{text}",
                },
            ],
            "completion": [
                {
                    "role": "assistant",
                    "content": label,
                }
            ],
        }

    sft_dataset = dataset.map(to_prompt_completion, remove_columns=dataset["train"].column_names)

After applying this formatting function, the original text and label columns are replaced with structured prompt and completion fields.
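A quick structural check on the formatted records can catch formatting mistakes before any GPU time is spent. The sketch below is only an illustration: `validate_record` and `ALLOWED_LABELS` are hypothetical names, and the sample record is written by hand to mirror the prompt and completion structure described above.

```python
ALLOWED_LABELS = {"sadness", "joy", "love", "anger", "fear", "surprise"}

def validate_record(record):
    """Return a list of problems found in one prompt/completion record."""
    problems = []
    prompt_roles = [message["role"] for message in record.get("prompt", [])]
    if prompt_roles != ["system", "user"]:
        problems.append(f"unexpected prompt roles: {prompt_roles}")
    completion = record.get("completion", [])
    if len(completion) != 1 or completion[0].get("role") != "assistant":
        problems.append("completion must be a single assistant message")
    elif completion[0].get("content") not in ALLOWED_LABELS:
        problems.append(f"label not allowed: {completion[0].get('content')!r}")
    return problems

# Hand-written sample record (hypothetical, for illustration only).
sample = {
    "prompt": [
        {"role": "system", "content": "You are an emotion classification assistant."},
        {"role": "user", "content": "Classify the emotion of this text:\n\nwhile cycling in the country"},
    ],
    "completion": [{"role": "assistant", "content": "fear"}],
}
print(validate_record(sample))  # an empty list means no problems were found
```

The same function could be applied to every row of the formatted training split, on the assumption that iterating the split yields plain dictionaries with these two fields.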

We can inspect one example to confirm that the dataset has been formatted correctly.

    sft_dataset["train"][0]

The output shows the full training structure clearly. The model sees the instruction, reads the input text, and learns to produce the correct emotion label as the answer.

    {'prompt': [{'content': "You are an emotion classification assistant.\nRead the user's text and answer with exactly one label.\nOnly choose from: sadness, joy, love, anger, fear, surprise.\nReturn only the label and nothing else.",
       'role': 'system'},
      {'content': 'Classify the emotion of this text:\n\nwhile cycling in the country',
       'role': 'user'}],
     'completion': [{'content': 'fear', 'role': 'assistant'}]}

4. Load Gemma 4 E4B-it With 4-Bit Quantization

Now we can load Gemma 4 E4B-it and prepare it for fine-tuning. Since this is a relatively large model, we load it with 4-bit quantization to reduce memory usage and make it easier to run on a 3090 GPU. We also use bfloat16 as the compute type, which helps keep the setup efficient.
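As a rough back-of-the-envelope sketch of why 4-bit loading helps, weight memory scales with the number of bits stored per parameter. The parameter count below is a hypothetical placeholder, not the actual size of Gemma 4 E4B-it, and the estimate ignores quantization metadata, activations, LoRA weights, and optimizer state.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Approximate memory needed to hold the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3

# Placeholder value, an assumption for illustration only.
HYPOTHETICAL_PARAMS = 8_000_000_000

bf16_gib = weight_memory_gib(HYPOTHETICAL_PARAMS, 16)
nf4_gib = weight_memory_gib(HYPOTHETICAL_PARAMS, 4)
print(f"bf16 weights:  {bf16_gib:.1f} GiB")
print(f"4-bit weights: {nf4_gib:.1f} GiB")
```

Whatever the real parameter count is, 4-bit storage needs about a quarter of the space that bf16 weights would, which leaves more of the 3090's 24 GB for activations and training state.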

We start by importing the required libraries and defining the main model settings.

    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    MODEL_ID = "google/gemma-4-E4B-it"
    MODEL_DTYPE = torch.bfloat16
    USE_4BIT = True

Next, we enable a few CUDA optimizations and load the tokenizer.

    if torch.cuda.is_available():
        torch.backends.cuda.matmul.allow_tf32 = True
        torch.backends.cudnn.allow_tf32 = True

    processor = AutoTokenizer.from_pretrained(MODEL_ID, use_fast=True)
    if processor.pad_token is None:
        processor.pad_token = processor.eos_token

Now we prepare the quantization settings and model loading arguments.

    bnb_config = None
    model_kwargs = {
        "device_map": "auto",
    }

    if USE_4BIT:
        bnb_config = BitsAndBytesConfig(
            load_in_4bit=True,
            bnb_4bit_quant_type="nf4",
            bnb_4bit_compute_dtype=MODEL_DTYPE,
        )
        model_kwargs["quantization_config"] = bnb_config
    else:
        model_kwargs["torch_dtype"] = MODEL_DTYPE

Finally, we load the model and align its configuration with the tokenizer.

    base_model = AutoModelForCausalLM.from_pretrained(MODEL_ID, **model_kwargs)

    base_model.config.use_cache = False
    base_model.config.pad_token_id = processor.pad_token_id
    base_model.config.bos_token_id = processor.bos_token_id
    base_model.config.eos_token_id = processor.eos_token_id

    base_model.generation_config.pad_token_id = processor.pad_token_id
    base_model.generation_config.bos_token_id = processor.bos_token_id
    base_model.generation_config.eos_token_id = processor.eos_token_id

    print(f"Base model loaded with 4-bit={USE_4BIT} and dtype={MODEL_DTYPE}.")

This loads the base model onto the available device, disables caching for training, and makes sure the special token IDs are set correctly for both the model config and generation config.

    Base model loaded with 4-bit=True and dtype=torch.bfloat16.

5. Evaluate the Base Model

Before fine-tuning, it is useful to evaluate the base model first so we have a clear baseline to compare against later.

In this section, we define a few helper functions that generate predictions, extract valid emotion labels, and run evaluation on the test split.

We start by creating a simple label extraction pattern and helper functions for prediction.

These functions handle the full prediction flow. The model receives the input in chat format, generates a short response, and then we extract the predicted label. If the model returns extra text, the helper function tries to recover the first valid emotion label.
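To make the fallback behavior concrete, here is a small model-free sketch of the extraction step run on made-up outputs. It mirrors the logic of the extraction helper used in this section; the function name `first_valid_label` and the sample strings are hypothetical.

```python
import re

LABEL_PATTERN = re.compile(r"\b(sadness|joy|love|anger|fear|surprise)\b", re.IGNORECASE)

def first_valid_label(raw_text):
    """Return the first allowed label found in the text, else the first cleaned token."""
    raw_text = raw_text.strip().lower()
    match = LABEL_PATTERN.search(raw_text)
    if match:
        return match.group(1)
    tokens = raw_text.split()
    # No allowed label found: fall back to the first token without punctuation.
    return tokens[0].strip(".,!?:;\"'()[]{}") if tokens else ""

print(first_valid_label("Joy."))                             # joy
print(first_valid_label("The emotion is anger, not fear."))  # anger
print(first_valid_label("happy!"))                           # happy (not a valid label)
print(repr(first_valid_label("")))                           # ''
```

Outputs such as `happy` are not in the label set, so an evaluation loop that checks membership afterwards would count them as invalid predictions.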

    import re

    # Match any of the six allowed labels as a whole word, ignoring case.
    LABEL_PATTERN = re.compile(r"\b(sadness|joy|love|anger|fear|surprise)\b", re.IGNORECASE)

    def extract_label(raw_text: str) -> str:
        raw_text = raw_text.strip().lower()
        match = LABEL_PATTERN.search(raw_text)
        if match:
            return match.group(1)
        # No valid label found: fall back to the first token, stripped of punctuation.
        first_token = raw_text.split()[0].strip(".,!?:;\"'()[]{}") if raw_text.split() else ""
        return first_token

    def generate_label(model, processor, user_text, system_prompt, max_new_tokens=4):
        messages = [
            {
                "role": "system",
                "content": system_prompt,
            },
            {
                "role": "user",
                "content": f"Classify the emotion of this text:\n\n{user_text}",
            },
        ]

        device = next(model.parameters()).device
        inputs = processor.apply_chat_template(
            messages,
            tokenize=True,
            add_generation_prompt=True,
            return_dict=True,
            return_tensors="pt",
        ).to(device)
        input_len = inputs["input_ids"].shape[-1]

        # Greedy decoding keeps the predictions deterministic.
        with torch.no_grad():
            outputs = model.generate(
                **inputs,
                max_new_tokens=max_new_tokens,
                do_sample=False,
                pad_token_id=processor.pad_token_id,
                eos_token_id=processor.eos_token_id,
            )

        # Decode only the newly generated tokens, not the prompt.
        raw_pred = processor.decode(outputs[0][input_len:], skip_special_tokens=True).strip()
        return extract_label(raw_pred)

    def predict_emotion(user_text: str, model=None, proc=None) -> str:
        model = model or base_model
        proc = proc or processor
        return generate_label(model, proc, user_text, SYSTEM_PROMPT)

Now we can test the setup on a single example before running the full evaluation.

    predict_emotion("I feel so happy and excited today!")

The sample prediction looks correct, so we can move on to evaluating the model across the test split.

    'joy'

This code evaluates the model on the test split and collects several useful outputs. It stores the true and predicted labels, tracks whether each prediction is correct, and returns summary metrics, a classification report, and a dataframe with all predictions.

    from sklearn.metrics import accuracy_score, classification_report, confusion_matrix, f1_score
    import pandas as pd
    from tqdm.auto import tqdm

    VALID_LABELS = set(label_names)
    ALL_EVAL_LABELS = label_names + ["INVALID"]

    def evaluate_model(model, processor, split="test", limit=EVAL_LIMIT):
        y_true, y_pred, rows = [], [], []

        raw_source = dataset[split]
        if limit is not None:
            raw_source = raw_source.select(range(min(limit, len(raw_source))))

        model.eval()
        for ex in tqdm(raw_source, desc=f"Evaluating {split}", leave=False):
            true_label = label_names[ex["label"]]
            raw_pred_label = generate_label(model, processor, ex["text"], SYSTEM_PROMPT)
            # Anything outside the six allowed labels is counted as INVALID.
            pred_label = raw_pred_label if raw_pred_label in VALID_LABELS else "INVALID"

            y_true.append(true_label)
            y_pred.append(pred_label)
            rows.append({
                "text": ex["text"],
                "true_label": true_label,
                "pred_label": pred_label,
                "raw_pred_label": raw_pred_label,
                "correct": true_label == pred_label,
            })

        # Macro F1 is averaged over the six real labels only, not INVALID.
        metrics = {
            "accuracy": accuracy_score(y_true, y_pred),
            "macro_f1": f1_score(y_true, y_pred, labels=label_names, average="macro", zero_division=0),
            "invalid_predictions": sum(1 for p in y_pred if p == "INVALID"),
            "evaluated_examples": len(y_true),
        }

        report = classification_report(
            y_true,
            y_pred,
            labels=label_names,
            output_dict=True,
            zero_division=0,
        )

        df = pd.DataFrame(rows)
        return metrics, report, df

    def confusion_matrix_df(pred_df):
        return pd.DataFrame(
            confusion_matrix(pred_df["true_label"], pred_df["pred_label"], labels=ALL_EVAL_LABELS),
            index=ALL_EVAL_LABELS,
            columns=ALL_EVAL_LABELS,
        )

Now we can run the full baseline evaluation on the base model.

    pre_metrics, pre_report, pre_preds = evaluate_model(base_model, processor, "test")
    pre_metrics

These baseline results show that the untuned model already performs reasonably well, but there is still room for improvement.

The accuracy is around 58.25%, the macro F1 score is around 0.42, and the model produced 33 invalid predictions, which means it sometimes returned something outside the expected label set.

{'accuracy': 0.5825,

'macro_f1': 0.42112912841373906,

'invalid_predictions': 33,

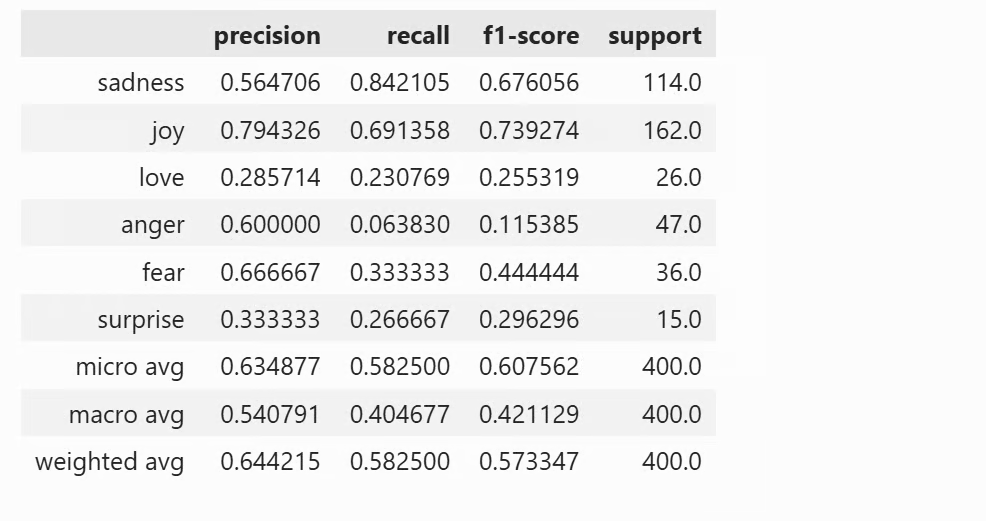

'evaluated_examples': 400}Next, we can look at the full classification report for each emotion category.

pd.DataFrame(pre_report).transpose()This gives us the precision, recall, F1 score, and support for each class. It helps us see which emotions the model handles well and which ones are more difficult before fine-tuning.

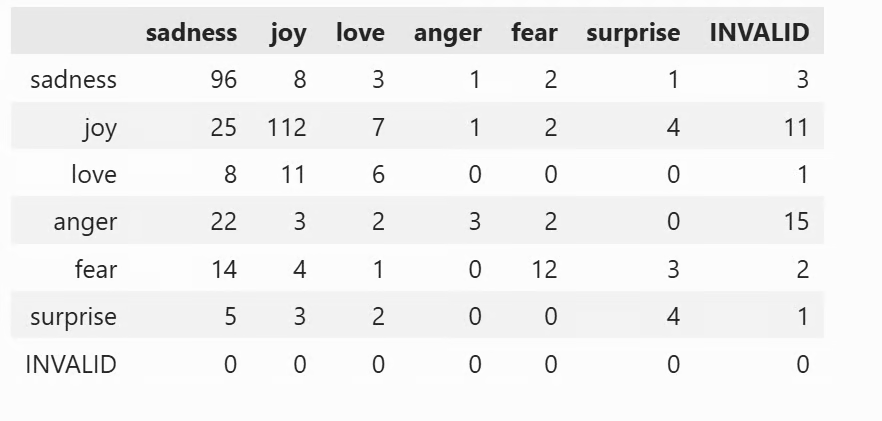
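The per-class numbers in such a report come from simple counts of true positives, false positives, and false negatives. The following sketch recomputes them by hand on a tiny made-up example (the labels and predictions are hypothetical, and `per_class_scores` is an illustrative helper, not part of scikit-learn). It also suggests why macro F1 can sit well below accuracy when a rare class is missed entirely.

```python
def per_class_scores(y_true, y_pred, labels):
    """Compute precision, recall, F1, and support for each label from raw counts."""
    scores = {}
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[label] = {"precision": precision, "recall": recall, "f1": f1, "support": tp + fn}
    return scores

# Made-up data: INVALID appears as a prediction but is not a scored label.
labels = ["joy", "fear", "surprise"]
y_true = ["joy", "joy", "joy", "joy", "fear", "surprise"]
y_pred = ["joy", "joy", "joy", "fear", "fear", "INVALID"]

scores = per_class_scores(y_true, y_pred, labels)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
macro_f1 = sum(s["f1"] for s in scores.values()) / len(scores)
print(f"accuracy: {accuracy:.3f}")
print(f"macro F1: {macro_f1:.3f}")
```

In this toy case, the single `surprise` example is predicted as INVALID, so that class scores an F1 of zero and pulls the macro average down even though most predictions are correct.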

Finally, we can inspect the confusion matrix.

    confusion_matrix_df(pre_preds)

The confusion matrix shows how the predictions are distributed across the different classes.

In the notebook, this is displayed as a table, making it easier to spot which emotions are being confused with each other and where the base model struggles the most.
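For readers who want to see what the table contains, a confusion matrix is just a count of (true label, predicted label) pairs. Here is a minimal standard-library sketch on made-up data; `confusion_counts` is a hypothetical helper, and the extra `INVALID` column follows the same idea as the evaluation code above.

```python
from collections import Counter

def confusion_counts(y_true, y_pred, labels):
    """Build a nested dict: rows are true labels, columns are predicted labels."""
    pair_counts = Counter(zip(y_true, y_pred))
    return {t: {p: pair_counts[(t, p)] for p in labels} for t in labels}

# Made-up labels and predictions for illustration.
labels = ["joy", "love", "INVALID"]
y_true = ["joy", "joy", "love", "love", "love"]
y_pred = ["joy", "love", "joy", "love", "INVALID"]

matrix = confusion_counts(y_true, y_pred, labels)
for true_label in labels:
    print(true_label, [matrix[true_label][pred] for pred in labels])
```

The diagonal holds correct predictions, off-diagonal cells show which classes get confused, and the `INVALID` row stays at zero because no true label is ever `INVALID`.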

6. Fine-Tune Gemma 4 With LoRA

Now that we have the baseline results, we can fine-tune Gemma 4 using LoRA.

LoRA is a parameter-efficient fine-tuning method, which means we do not update the full model. Instead, we attach a small number of trainable adapter weights on top of the base model. This makes training much lighter and more practical on a single GPU.
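To give a feel for how small the adapters are, the sketch below counts the weights LoRA adds to a single linear layer. LoRA represents the update to a weight matrix as the product of two low-rank matrices, so the added parameter count grows with the rank rather than with the full matrix size. The layer dimensions are hypothetical and chosen only for illustration; the rank and alpha match the values used in this tutorial's configuration.

```python
def lora_param_count(d_in, d_out, rank):
    """Trainable weights added to one linear layer: A is (rank x d_in), B is (d_out x rank)."""
    return rank * d_in + d_out * rank

D_IN, D_OUT = 2048, 2048  # hypothetical layer size, not taken from Gemma 4
RANK, ALPHA = 16, 32      # values used in this tutorial's LoRA configuration

full = D_IN * D_OUT
added = lora_param_count(D_IN, D_OUT, RANK)
scaling = ALPHA / RANK    # factor applied to the low-rank update in standard LoRA

print(f"full layer:   {full:,} weights")
print(f"LoRA adapter: {added:,} trainable weights ({added / full:.2%} of the layer)")
print(f"update scaling (alpha / r): {scaling}")
```

For this made-up layer, the adapter is under 2% of the layer's size, which is why the frozen base model can stay quantized while only the adapters receive gradients.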

We start by defining the LoRA configuration.

These settings control how the LoRA adapters are attached to the model. Here, we use a rank of 16, a dropout of 0.05, and apply LoRA to all linear layers, which is a common setup for efficient fine-tuning.

    from peft import LoraConfig

    lora_config = LoraConfig(
        r=16,
        lora_alpha=32,
        lora_dropout=0.05,
        bias="none",
        task_type="CAUSAL_LM",
        target_modules="all-linear",
    )

Next, we define the training configuration and set up the trainer.

This training setup is designed to keep memory usage manageable while still giving the model enough room to learn from the dataset. We train for one epoch, use gradient accumulation to simulate a larger batch size, and enable options like gradient checkpointing and 8-bit optimization to make training more efficient.
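The arithmetic behind these settings can be sketched without any training library. Using the values from the configuration in this section (4,000 training examples, batch size 8, 2 accumulation steps, 50 warmup steps, learning rate 1e-4) and assuming a single GPU, the snippet estimates the effective batch size, the number of optimizer steps, and the learning rate under linear warmup followed by linear decay. The helper names are hypothetical, and the trainer's exact step counting may differ slightly.

```python
import math

def steps_per_epoch(num_examples, per_device_batch, grad_accum, num_devices=1):
    """Return (effective batch size, optimizer steps per epoch)."""
    effective_batch = per_device_batch * grad_accum * num_devices
    return effective_batch, math.ceil(num_examples / effective_batch)

def linear_warmup_decay_lr(step, base_lr, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))

effective_batch, total_steps = steps_per_epoch(4000, per_device_batch=8, grad_accum=2)
print(f"effective batch size: {effective_batch}")
print(f"optimizer steps in one epoch: {total_steps}")

for step in (0, 25, 50, 150, 250):
    lr = linear_warmup_decay_lr(step, base_lr=1e-4, warmup_steps=50, total_steps=total_steps)
    print(f"step {step:>3}: lr = {lr:.2e}")
```

Under these assumptions, warmup covers the first fifth of the run, and logging every 50 steps yields only a handful of loss readings per epoch.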

    from trl import SFTConfig, SFTTrainer

    training_args = SFTConfig(
        output_dir="./gemma4-emotion-lora",
        per_device_train_batch_size=8,
        per_device_eval_batch_size=8,
        gradient_accumulation_steps=2,
        learning_rate=1e-4,
        weight_decay=0.01,
        lr_scheduler_type="linear",
        warmup_steps=50,
        num_train_epochs=1,
        logging_steps=50,
        eval_strategy="steps",
        metric_for_best_model="eval_loss",
        greater_is_better=False,
        gradient_checkpointing=True,
        bf16=True,
        fp16=False,
        tf32=True,
        max_length=256,
        packing=False,
        completion_only_loss=True,
        remove_unused_columns=False,
        dataloader_num_workers=2,
        optim="paged_adamw_8bit",
        report_to="none",
    )

Now we make sure the base model is ready and initialize the trainer. This step attaches the LoRA adapters to the base model and prepares the supervised fine-tuning trainer using our formatted training and validation splits.

    from peft import PeftModel

    if isinstance(base_model, PeftModel):
        base_model = base_model.unload()

    base_model.config.use_cache = False

    trainer = SFTTrainer(
        model=base_model,
        train_dataset=sft_dataset["train"],
        eval_dataset=sft_dataset["validation"],
        peft_config=lora_config,
        args=training_args,
        processing_class=processor,
    )

Before starting training, it is a good idea to confirm that LoRA parameters were attached correctly.

This counts the number of trainable parameters and raises an error if no LoRA layers were added.

After that, training begins.

trainable_params = 0

for param in trainer.model.parameters():

if param.requires_grad:

trainable_params += param.numel()

if trainable_params == 0:

raise RuntimeError("No trainable LoRA parameters were attached. Check target_modules before training.")

print(f"Trainable LoRA parameters: {trainable_params:,}")

train_result = trainer.train()

trainer.model.eval()

trainer.model.config.use_cache = True

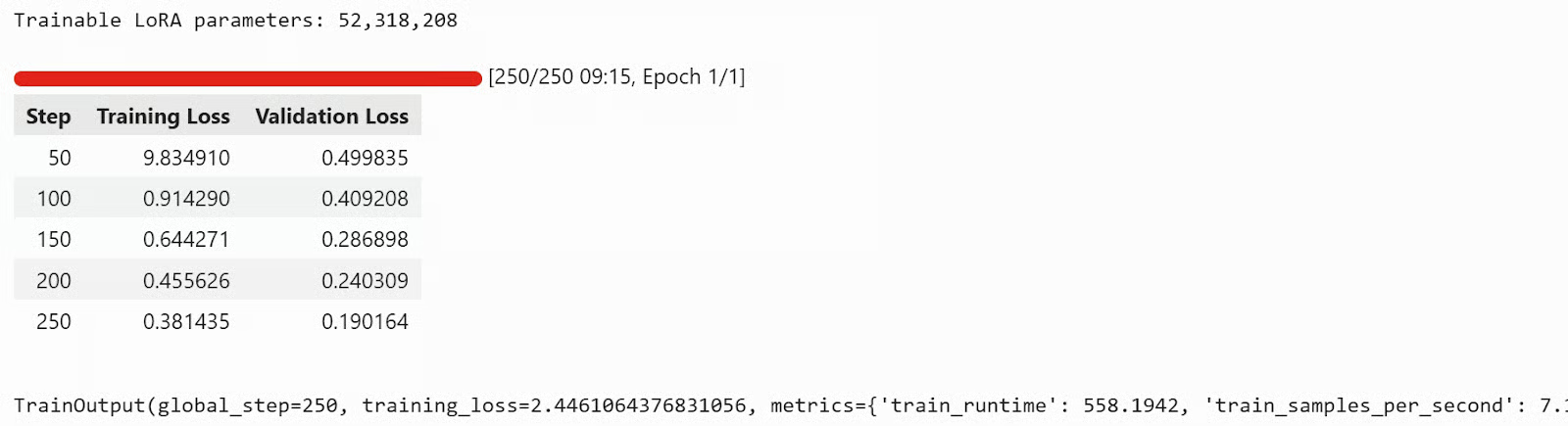

train_resultIn this run, training took almost 9 minutes, and both the training loss and validation loss kept decreasing over time, which is a good sign that the model was learning from the dataset.

Once training is complete, we can save the adapter and tokenizer locally.

    trainer.model.save_pretrained("./gemma4-emotion-lora")
    processor.save_pretrained("./gemma4-emotion-lora")

Finally, we can push the model to the Hugging Face Hub.

This uploads the fine-tuned adapter and tokenizer to the Hub so you can access them from anywhere, share them with others, or load them directly into another notebook or application.

    repo_id = "kingabzpro/gemma4-emotion-lora"

    # Push adapter + processor to the Hub
    trainer.model.push_to_hub(
        repo_id,
        private=False,
    )

    processor.push_to_hub(
        repo_id,
        private=False,
    )

You can now view kingabzpro/gemma4-emotion-lora on Hugging Face and try it yourself. The repository includes the model files, usage instructions, and the fine-tuning results.

Source: kingabzpro/gemma4-emotion-lora · Hugging Face

7. Evaluate the Fine-Tuned Model

Now that training is complete, the final step is to evaluate the fine-tuned model on the same test split and compare the results with the base model. This helps us see whether LoRA fine-tuning improved the model’s ability to classify emotions more accurately.

We start by loading the fine-tuned model from the trainer and running the evaluation.

    ft_model = trainer.model
    ft_model.eval()
    ft_model.config.use_cache = True

    post_metrics, post_report, post_preds = evaluate_model(ft_model, processor, "test")
    post_metrics

This gives us the main evaluation metrics for the fine-tuned model.

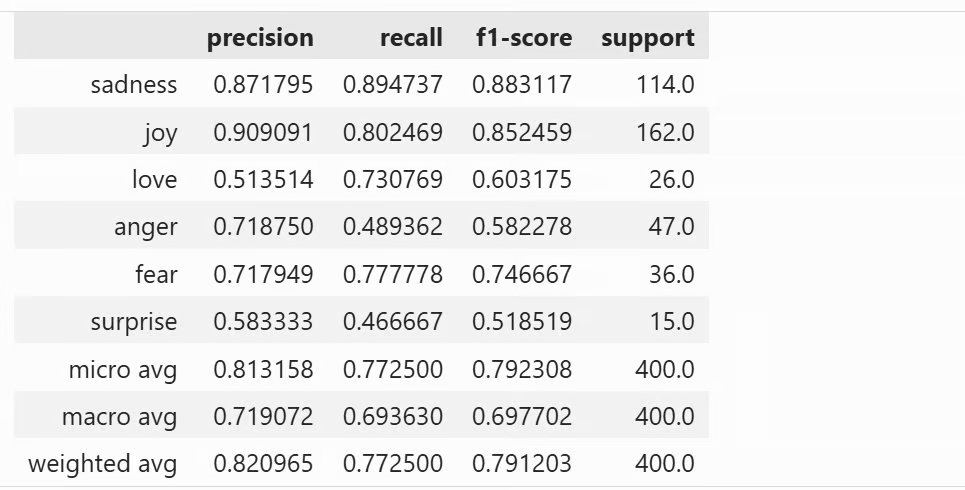

These results are clearly stronger than the baseline. After fine-tuning, the model reaches 77.25% accuracy and a macro F1 score of 0.698. The number of invalid predictions also drops from 33 to 20, which shows that the fine-tuned model is not only more accurate, but also more consistent in returning valid labels.

    {'accuracy': 0.7725,
     'macro_f1': 0.697702361480462,
     'invalid_predictions': 20,
     'evaluated_examples': 400}

Next, we can view the full classification report.

This displays the classification report as a pandas DataFrame directly in the notebook. It includes the precision, recall, F1 score, and support for each emotion class, making it easier to see which categories improved the most after fine-tuning.

pd.DataFrame(post_report).transpose()

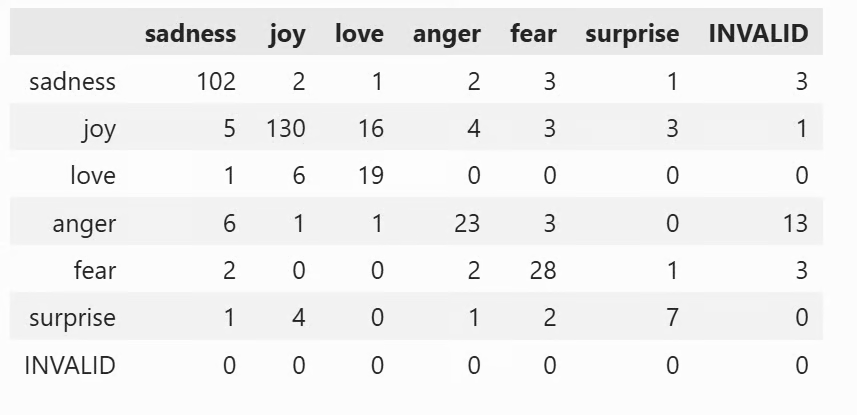

This is also displayed in the notebook as a table. It helps you see where the fine-tuned model is still making mistakes and which emotion categories are most often confused with one another.

confusion_matrix_df(post_preds)

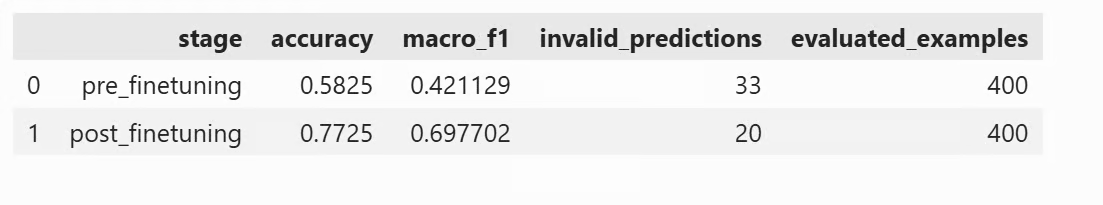

To make the comparison clearer, we can place the pre-fine-tuning and post-fine-tuning metrics side by side.

    comparison_df = pd.DataFrame([
        {"stage": "pre_finetuning", **pre_metrics},
        {"stage": "post_finetuning", **post_metrics},
    ])

    comparison_df

It gives a quick summary of how much the model improved after training.
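The size of the improvement can also be computed directly from the two metric dictionaries reported above. This is a small standard-library sketch; the numbers are copied from this run's outputs, and `metric_deltas` is a hypothetical helper.

```python
# Metrics copied from the baseline and fine-tuned evaluation outputs above.
pre = {"accuracy": 0.5825, "macro_f1": 0.42112912841373906,
       "invalid_predictions": 33, "evaluated_examples": 400}
post = {"accuracy": 0.7725, "macro_f1": 0.697702361480462,
        "invalid_predictions": 20, "evaluated_examples": 400}

def metric_deltas(before, after, keys):
    """Absolute change (after minus before) for each selected metric."""
    return {key: after[key] - before[key] for key in keys}

deltas = metric_deltas(pre, post, ["accuracy", "macro_f1", "invalid_predictions"])
invalid_rate_pre = pre["invalid_predictions"] / pre["evaluated_examples"]
invalid_rate_post = post["invalid_predictions"] / post["evaluated_examples"]

print(f"accuracy change: {deltas['accuracy']:+.4f}")
print(f"macro F1 change: {deltas['macro_f1']:+.4f}")
print(f"invalid predictions change: {deltas['invalid_predictions']:+d}")
print(f"invalid rate: {invalid_rate_pre:.2%} -> {invalid_rate_post:.2%}")
```

Read this way, accuracy rises by 19 percentage points and macro F1 by roughly 0.28, while the share of invalid outputs falls from 8.25% to 5%.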

Note: If you run into any issues while running the code, you can refer to the full Jupyter notebook here: fine-tune-gemma-4-on-emotions_final.ipynb

Final Thoughts

Fine-tuning Gemma 4 is very sensitive to setup, especially the prompt structure and training arguments. If the prompt format is wrong, or you do not use the proper template consistently, the model may go through training without actually learning the task well. The same applies to training settings. These are usually the main reasons loss does not decrease, or why the loss decreases but evaluation results barely improve.

Another important lesson is max_length. If you reduce it too much, especially below around 125, the model may not learn the pattern properly at all. I ran into several issues during this process, but they were resolved one by one, and most of them came back to the same two areas: prompt formatting and training configuration.
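One plausible explanation, offered here as an assumption rather than something measured in this run, is that a small `max_length` truncates examples before the label tokens, leaving nothing for the completion loss to learn from. A minimal sketch of that check follows. The token counts are made-up numbers, not measurements from the Gemma tokenizer, and `truncated_fraction` is a hypothetical helper.

```python
def truncated_fraction(examples, max_length):
    """Fraction of examples whose prompt plus completion exceeds max_length tokens."""
    too_long = sum(
        1 for ex in examples
        if ex["prompt_tokens"] + ex["completion_tokens"] > max_length
    )
    return too_long / len(examples)

# Hypothetical token counts for four formatted examples.
examples = [
    {"prompt_tokens": 95, "completion_tokens": 2},
    {"prompt_tokens": 110, "completion_tokens": 2},
    {"prompt_tokens": 130, "completion_tokens": 2},
    {"prompt_tokens": 160, "completion_tokens": 2},
]

for max_length in (100, 125, 256):
    share = truncated_fraction(examples, max_length)
    print(f"max_length={max_length}: {share:.0%} of examples would be truncated")
```

In practice the counts would come from tokenizing each formatted example with the chat template applied, so the real threshold depends on the system prompt and the length of the input texts.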

To improve the results further, a good next step would be to fine-tune on the full dataset and train for at least 3 epochs instead of just one. That would give the model more examples to learn from and more time to adapt, which should lead to stronger accuracy and F1 scores.
