Courses

I've been following Mistral AI's product launches from the Mistral 3 model family to Le Chat to Vibe 2.0. Mistral Forge, if you are catching up to speed, isn't a model release. It's Mistral's play for enterprise infrastructure.

Nvidia GTC announced on March 17, 2026, Forge lets enterprises build AI models trained entirely on internal data. Not fine-tuned on public models. Actually trained from scratch on decades of engineering docs, proprietary codebases, compliance frameworks, and operational logs.

In this article, I’ll cover what Mistral Forge is, how it works, when custom model training makes sense versus using APIs, and what it costs. I'll use examples from early adopters like ASML and Ericsson to show where Forge fits.

What Is Mistral Forge?

Mistral Forge is an enterprise platform for building AI models using your organization's proprietary data. Unlike API-based services where you send prompts to someone else's model, Forge gives you control over the entire training pipeline.

Traditional AI APIs (OpenAI, Anthropic):

- Send prompts → get responses

- Models trained on public internet data

- Optional fine-tuning on limited datasets

- Your data never becomes part of the model's core knowledge

Mistral Forge:

- Train models from scratch on internal data

- Full lifecycle control: pre-training, fine-tuning, reinforcement learning

- Your company's knowledge is embedded at every layer

- Models understand your domain terminology, workflows, and context

The problem I keep seeing: generic models trained on public web data don't understand your company's institutional knowledge. They hallucinate domain-specific terminology. They don't know your engineering standards, compliance policies, or operational procedures. Forge solves this by letting you build models that internalize your organization's knowledge from the ground up.

Early adopters include ASML (semiconductor manufacturing), Ericsson (telecommunications), the European Space Agency, and Singapore's DSO National Laboratories. These aren't companies experimenting with chatbots. They're building AI for mission-critical operations where generic models won't cut it.

How Mistral Forge Works

Here's the complete workflow, from data ingestion to deployment:

Model selection

You start by choosing a base architecture from Mistral's open-weight models: Mistral Large 3 for complex reasoning, Mistral Small 4 for faster inference and lower costs, or custom MoE (Mixture-of-Experts) configurations that balance performance with efficiency.

The choice between dense and MoE matters for operational costs. Dense models provide strong general capability. MoE models can match performance while cutting latency and compute costs, think comparable results at 40-60% of the infrastructure spend.

Data integration

This is where your proprietary data comes in. Forge supports unstructured text (documentation, reports, emails), codebases with repository structure preserved, structured data (databases, spreadsheets), and multimodal inputs (text plus images where relevant).

For regulated industries where real data can't always be used, Forge includes synthetic data generation. You can create policy-compliant training examples, edge-case scenarios, and long-tail situations that don't appear frequently in real data but matter in production.

Pre-training

This is the expensive part where institutional knowledge gets embedded into neural weights. Pre-training from scratch costs millions in compute, which is why most organizations skip it. But it's what makes the model actually understand your domain.

Pre-training on large internal datasets can take weeks on enterprise GPU clusters. Companies like ASML and Ericsson allocate months-long timelines for initial training runs, with costs running into millions depending on data volume and model size. This isn't something you run on a local machine.

Supervised fine-tuning

After pre-training, you refine the model for specific tasks through supervised fine-tuning (SFT). This involves training on instruction-response pairs specific to your domain like reviewing engineering change requests for compliance violations or analyzing financial instruments according to your internal risk taxonomy.

Reinforcement learning

For agentic workloads models that need to use tools, make decisions, and complete multi-step tasks, Reinforcement Learning (RL) is how you optimize for actual task completion, not just generating plausible text. According to early adopter reports, RL-trained models handling customer escalations achieved task completion rates above 85%.

Evaluation and deployment

Forge includes evaluation frameworks aligned to enterprise KPIs, not just generic benchmarks. You define custom metrics like compliance accuracy, domain vocabulary coverage, and latency thresholds. Throughout this process, Forge tracks everything: models, datasets, training runs, configs. Version control is first-class. When regulators ask "how did the model make this decision," you have complete lineage.

Mistral Forge vs. Traditional AI APIs

|

Aspect |

Mistral Forge |

OpenAI/Anthropic APIs |

RAG + API |

|

Training Depth |

Full pre-training on your data |

Fine-tuning on top of public models |

No training, retrieval only |

|

Domain Knowledge |

Embedded in model weights |

Thin layer on generic foundation |

External context at inference |

|

Data Privacy |

On-premises deployment, zero external access |

Data sent to OpenAI/Anthropic servers |

Documents stored externally |

|

Customization |

Complete control (architecture, training, RL) |

Limited (instruction pairs, examples) |

Minimal (document selection) |

|

Setup Complexity |

Very high (months, ML team required) |

Low (API key, days) |

Medium (vector DB, weeks) |

|

Cost |

$2-5M+ for training + ongoing inference |

$10-50K/month typically |

$5-20K/month typically |

|

Vendor Lock-in |

Low (you own model weights) |

High (API dependency) |

Medium (can switch LLMs) |

Why Mistral Forge Exists

I've talked to enough data teams to know the pattern: companies deploy AI, get impressive demos, then hit a wall when models encounter domain-specific terminology or need to operate within strict compliance constraints.

RAG helps. Fine-tuning helps. But neither solves the fundamental problem: generic models weren't trained on your organization's knowledge.

Mistral is betting that as AI moves from "cool demo" to "core infrastructure," enterprises will need models that understand their domain at a foundational level. Here's why:

- Data sovereignty: In regulated industries (pharma, defense, finance), hosting proprietary data in third-party systems creates compliance nightmares. Training on your own infrastructure keeps data under your governance. This is especially important in Europe, where GDPR enforcement is real.

- Agentic systems: Enterprise agents need to do more than answer questions. They navigate internal systems, use tools correctly, and make decisions within organizational constraints. Generic models struggle here because they don't understand the environment. I've seen this with Vibe 2.0 agents: they work better when the underlying model is trained on your codebase.

- Model ownership: API dependencies create business risk. If OpenAI updates GPT-5 and breaks your workflows, you're stuck. If Anthropic discontinues a model version, you rebuild. With Forge, you own the weights. You control updates. You decide when to deploy new versions.

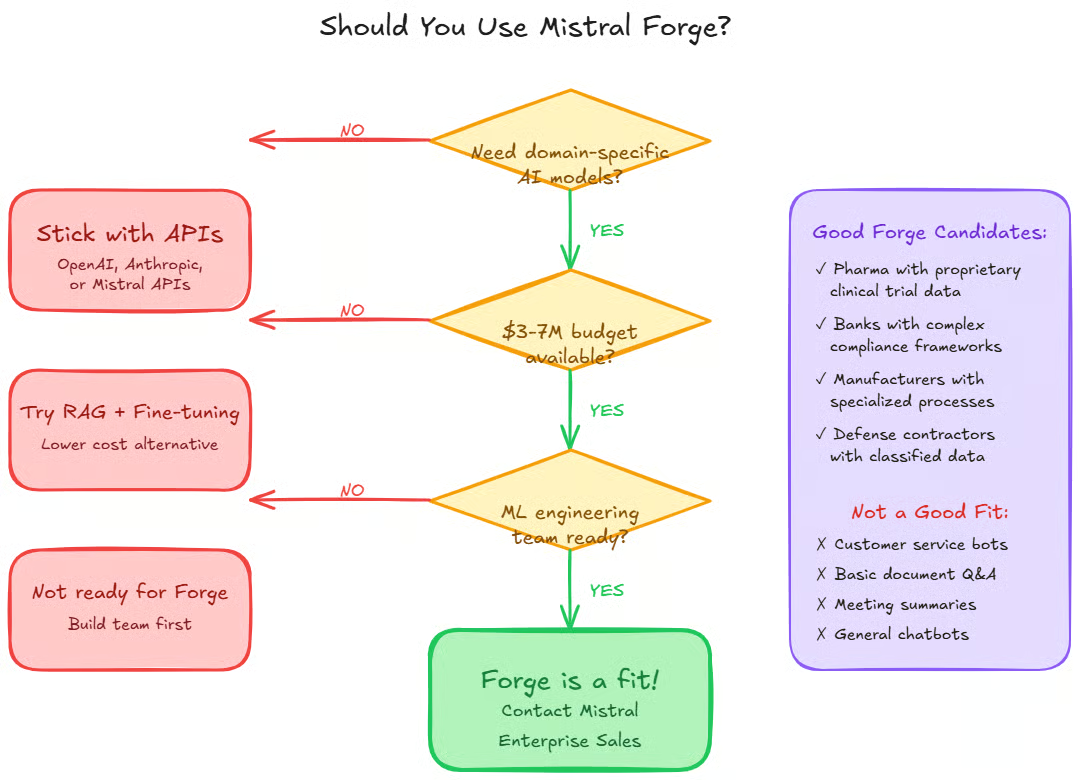

But here's the contrarian take: most companies don't need custom models. Customer service automation, basic document Q&A, and meeting summarization work fine with general-purpose models and good prompting. Forge targets organizations where domain specificity is a genuine competitive advantage, not a nice-to-have.

Key Features of Mistral Forge

- Agent-First Design: Forge was built with autonomous agents as primary users, not humans. Mistral's own Vibe agent can independently fine-tune models, optimize hyperparameters, schedule training jobs, and generate synthetic data through plain English instructions. As agentic systems become more common, they need platforms designed for programmatic access.

- Production-Grade Evaluation: Generic benchmarks (MMLU, HumanEval) don't tell you if a model works for your use case. Forge lets you define custom evaluation criteria aligned to enterprise KPIs: regression suites to detect performance drops when data or prompts change, drift detection to monitor behavioral changes over time, and A/B testing infrastructure to compare model versions in production.

- Version Control: Models, datasets, training runs, configs—tracked as first-class assets. For regulated industries, this audit trail is table stakes. When regulators ask "why did the model recommend this treatment" or "how did the fraud detection system flag this transaction," you need answers.

- Flexible Deployment: You can run trained models on Mistral's managed infrastructure, on Mistral Compute (dedicated clusters), entirely on-premises (zero data leaving your environment), or at the edge (quantized models for local inference). For defense contractors handling classified data or healthcare organizations with HIPAA requirements, on-premises deployment with zero external data access is non-negotiable.

Future of Platforms Like Mistral Forge

The early enterprise AI playbook was buy API access, hire prompt engineers, and layer on RAG. This works until it doesn't. As AI becomes infrastructure rather than a feature, companies will want ownership of their models the same way they want ownership of their databases. Not everyone. But enough to make custom training a viable market.

Enterprise agents must do more than generate answers. They need to navigate internal systems, use tools correctly, and make decisions within organizational constraints. Custom models make this possible. I'm already seeing this with Vibe 2.0. The agents that work best are built on models trained on the codebases they're operating in.

Enterprises increasingly face compliance requirements that make hosting proprietary business data in third-party systems untenable. EU AI Act. GDPR. Industry-specific regulations in finance and healthcare. Platforms offering on-premises training with complete data isolation have a structural advantage.

Mistral announced Forge at Nvidia GTC for a reason. NVIDIA is building enterprise AI infrastructure with cheaper GPUs, better distributed training tools, and optimized inference. As training costs drop, custom models become viable for a wider range of use cases.

But the counterargument: most enterprises don't need this complexity yet. Customer service automation doesn't require custom models. Neither does basic document processing. The market will split—most companies stick with APIs, a smaller segment builds custom infrastructure. That smaller segment includes some of the world's largest, most profitable companies. That's Mistral's bet.

Getting Started with Mistral Forge

Prerequisites

Before contacting Mistral's enterprise sales team, you need significant proprietary text or code (enterprise deployments typically handle terabytes of domain-specific data), a data governance framework (who can access what, retention policies, PII handling), and clear domain-specific evaluation criteria.

For infrastructure: either a GPU cluster (8-64 H100s recommended for training) or willingness to use Mistral's managed infrastructure. You also need ML engineers familiar with distributed training, domain experts who can evaluate model outputs, and legal/compliance review for data usage.

Initial setup

Forge isn't self-serve. You go through Mistral's enterprise team, discuss use cases, data requirements, and, deployment constraints. Start narrow—don't try to train on all your data immediately. Pick one high-value use case like compliance document analysis or code review automation.

Data preparation takes longer than you think. Cleaning, formatting, removing PII, organizing by domain budget 2-3 months here. Before training anything, test prompt engineering with Mistral Large 3 to ensure acceptable performance. You might not need custom training at all.

First training run

Start with supervised fine-tuning, not full pre-training. It's cheaper and faster. See if task-specific tuning on a base model gets you 80% of the way there. If fine-tuning isn't enough, move to pre-training. But understand you're committing significant compute resources and time.

Common pitfalls

Garbage in, garbage out. If your internal docs are inconsistent, outdated, or poorly structured, the model will learn those patterns. Custom training doesn't magically solve AI problems. If your use case doesn't need domain-specific knowledge, you're wasting resources. Running your own training infrastructure is hard. Unless you have serious ML engineering capacity, stick with Mistral's managed offering.

Common Use Cases for Mistral Forge

1. Financial services: Compliance analysis

A large investment bank needed models that understand proprietary risk frameworks—internal rating systems, compliance procedures, regulatory interpretations specific to their business. Generic models couldn't distinguish between similar-sounding financial instruments with very different risk profiles, as defined by the bank's internal classification system.

They pre-trained on decades of internal risk documentation, compliance memos, and regulatory filings. The model internalized the bank's risk taxonomy. Result: 94% accuracy on compliance review tasks, down from 3-day human review to 2-hour automated analysis with human verification.

2. Semiconductor manufacturing: Design optimization

ASML builds the machines that make computer chips. Their engineering documentation spans decades, multiple languages, and highly specialized terminology. Engineers were spending hours searching through documentation to understand why certain design decisions were made.

They trained on proprietary design docs, CAD files, manufacturing logs, and engineering change requests. Now engineers can query the model in natural language and get contextually relevant answers citing specific documentation sections. This isn't public knowledge—it's institutional intelligence built up over decades.

3. Software development: Legacy code migration

A Fortune 500 company needed to migrate a massive C++ codebase to modern frameworks. Decades of custom libraries, internal abstractions, undocumented dependencies. Generic code models suggested changes that broke internal conventions or violated architectural standards.

They trained on the company's entire code history—commits, code reviews, architectural decision records, style guides. The model now generates refactoring suggestions that respect internal patterns. Similar to how Mistral Vibe 2.0 works better when it understands your repository structure.

Pricing and Business Model

Forge's pricing isn't publicly listed. Based on enterprise sales conversations:

- Platform license fees: $500K-$2M annually depending on scale (for organizations training on their own GPU clusters).

- Managed infrastructure: If you use Mistral's cloud, you pay for GPU hours on top of platform fees. Roughly $2-3 per H100-hour for training, $0.50-1.00 per inference hour.

- Forward-deployed scientists: This is the interesting part. Mistral offers embedded AI researchers who work alongside your team. They help with training recipes, hyperparameter tuning, evaluation design. Think Palantir's forward-deployed engineers model. Costs $300K-$500K per scientist annually.

- Example deployment: For pre-training on substantial internal data, supervised fine-tuning, and RL optimization, total first-year cost runs around $3.7M (platform license $750K, compute $2.5M, data pipeline support $200K, forward-deployed scientist for 6 months $250K).

Forge isn't for everyone. You need a use case where domain accuracy is worth millions. Most companies don't.

Conclusion

Mistral Forge trades ease of use for complete model ownership and deep domain customization. For most enterprises, I would stil think the answer is more simple: stick with APIs. And general-purpose models with effective prompting or RAGs still work well for common things: customer service, document processing, and summarizing meetings.

But for organizations where domain specificity is a genuine competitive advantage (I'm thinking like pharmaceutical research analyzing proprietary compound data, semiconductor manufacturers optimizing fabrication processes, and financial institutions with their regulatory frameworks), Forge could make sense. The question isn't whether custom training is better in theory, but whether the improvement justifies millions in training costs.

If you're evaluating Forge, try this progression: Start with prompt engineering using Mistral Large 3 . Add RAG if needed. Try API-based fine-tuning. Only think about what Mistral Forge has to offer if those approaches fail.

Tech writer specializing in AI, ML, and data science, making complex ideas clear and accessible.

Mistral Forge FAQs

What is Mistral Forge?

Mistral Forge is an enterprise platform that lets you train custom AI models on your organization's proprietary data. Unlike fine-tuning APIs that adapt existing models, Forge supports full pre-training from scratch, supervised fine-tuning, and reinforcement learning to build models that understand your domain at a foundational level.

How is Mistral Forge different from fine-tuning?

Fine-tuning adjusts an existing model's behavior for specific tasks by training on instruction-response pairs. Forge goes deeper—it can pre-train models from scratch on your proprietary corpus, meaning your data becomes the model's foundational knowledge rather than a thin layer on top of someone else's training data.

What data do I need to use Mistral Forge?

You need substantial domain-specific text or code for meaningful pre-training. This could include internal documentation, codebases, compliance policies, operational logs, engineering standards, or historical decisions. The data should be structured, cleaned, and governed according to your compliance requirements.

How much does Mistral Forge cost?

Pricing isn't publicly listed. Based on enterprise sales, expect $500K-$2M annually for platform licenses, plus $2-5M for initial training compute (if pre-training from scratch), plus optional fees for data pipeline services and forward-deployed scientists. Total first-year costs typically run $3-7M, depending on scale.

Can I run Mistral Forge on my own infrastructure?

Yes. Forge supports on-premises deployment where training runs entirely on your GPU clusters with zero data leaving your environment. This is critical for regulated industries with strict data sovereignty requirements. You can also use Mistral's managed infrastructure or Mistral Compute (dedicated clusters).