course

Most AI benchmarks follow the same formula: give the model a question, get an answer, check if it is right. For years, this approach worked well enough. But as frontier models started scoring above 90% on nearly every major evaluation, a familiar problem emerged. The benchmarks started losing their ability to separate one model from another.

ARC-AGI-3 takes a different approach. Instead of presenting static puzzles with clear input-output pairs, it drops AI agents into interactive environments with no instructions, no stated goals, and no explicit rules. The agent has to figure out everything on its own through trial and observation, the same way a person would when handed a game they have never seen before.

Launched on March 25, 2026, by the ARC Prize Foundation, ARC-AGI-3 produced a notable result at release: every frontier AI model scored below 1%, while humans solved all of the benchmark's environments.

What Is ARC-AGI-3

ARC-AGI-3 is an interactive reasoning benchmark created by the ARC Prize Foundation, co-founded by AI researcher François Chollet (creator of Keras) and Mike Knoop (co-founder of Zapier). The benchmark is built around what the ARC Prize team sees as a core principle: true intelligence is not about how much a system knows, but how efficiently it can learn something entirely new.

Where earlier AI evaluations test crystallized knowledge (retrieving memorized facts, applying trained heuristics), ARC-AGI-3 targets fluid intelligence, the ability to reason through novel problems and adapt to new situations rather than rely on what was learned during training. It measures whether an AI agent can explore an unfamiliar environment, figure out the rules, identify what "winning" looks like, and then act on that understanding efficiently.

The benchmark includes 135 environments across public, semi-private, and fully private sets. Each environment is a turn-based, game-like scenario built by an in-house design team. The agent receives nothing to go on. It simply observes the state of the world, takes an action, sees what happens, and repeats.

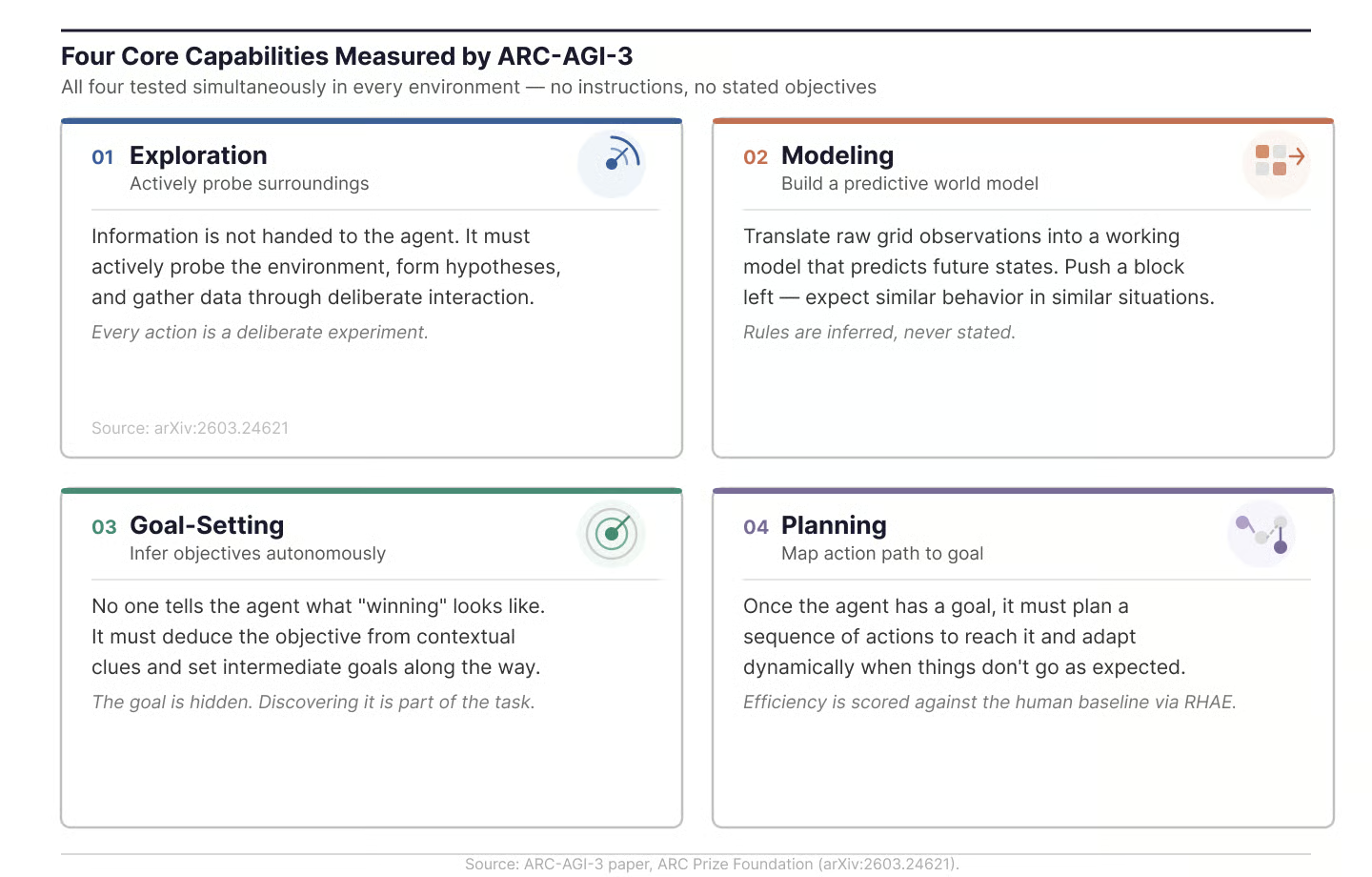

The technical paper describes the benchmark as testing four core capabilities: exploration, modeling, goal-setting, and planning.

The core knowledge priors

To make sure the benchmark measures reasoning rather than training data recall, ARC-AGI-3 environments avoid language, numbers, letters, cultural symbols, or recognizable real-world objects. Instead, they rely only on what the ARC Prize team calls "Core Knowledge priors," basic cognitive abilities shared by all humans.

These priors include objectness (understanding that things are persistent entities that can move or collide), basic geometry and topology (symmetry, rotation, "inside" versus "outside"), basic physics (momentum, gravity, bouncing), and agentness (recognizing when something in the environment is acting with intent). If an environment required knowing that a key opens a lock, it would test training data, not reasoning.

How ARC-AGI-3 Works

The shift from static evaluation to interactive environments changes what the benchmark can actually measure.

What agents see and do

Each ARC-AGI-3 environment presents a 64×64 grid where each cell displays one of 16 possible colors. The agent receives a visual state (called a "frame") as a JSON object, then submits a single action per turn. The action space is small: typically five directional or key-based commands, plus an optional coordinate-based "click" action for selecting specific grid cells.

The environments are strictly turn-based. Nothing changes until the agent acts, which means the benchmark rewards careful reasoning over fast reflexes. Importantly, internal operations like reasoning steps, retries, or tool calls do not count as actions. Only commands that actually change the environment state are counted toward the agent's score.

How levels and environments fit together

Each environment contains multiple levels arranged in order of increasing difficulty. The first level acts as a tutorial, introducing basic mechanics with minimal complexity. Later levels layer on new rules and interactions, so the agent has to carry what it learned forward and apply it in more complex situations.

Difficulty in ARC-AGI-3 does not come from obscurity or scale. It comes from the composition of multiple mechanics learned across levels. A single-mechanic environment would be too easy to brute-force. The real test is combining several learned dynamics to solve a problem the agent has never seen before.

The benchmark runs on a custom Python-based engine designed to meet a 1,000 FPS performance threshold in headless mode. Developers can get started with pip install arc-agi and interact with environments through the SDK or a REST API. You can also play the public environments in your browser to get a feel for what these games look like.

How Models Are Performing on ARC-AGI-3

One thing to note upfront: these scores reflect the state of the leaderboard as of late March 2026 and will almost certainly change as researchers develop new approaches.

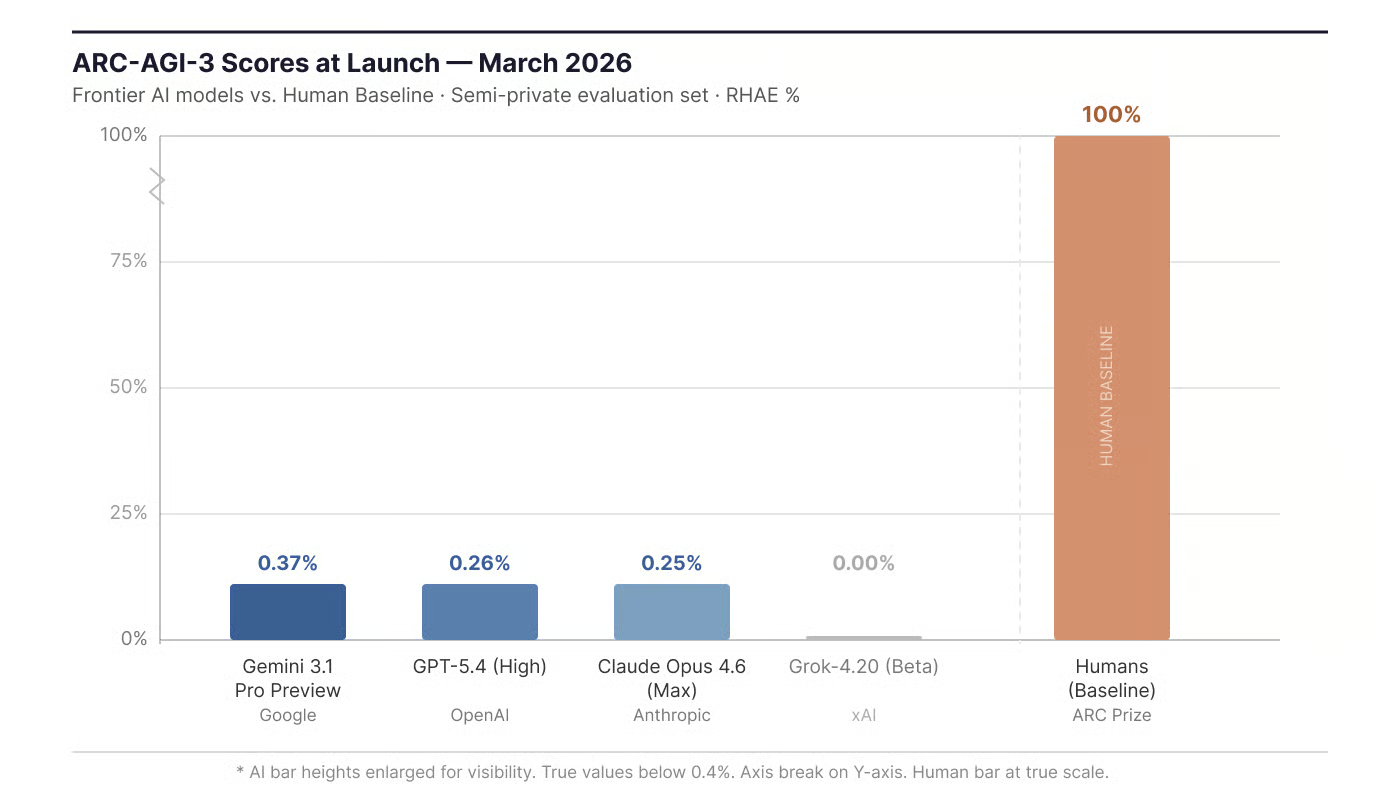

At launch, the ARC Prize Foundation tested several frontier models on the semi-private evaluation set.

Launch-time scores (March 2026): frontier AI versus human baseline. Image by Author.

For context, these same frontier models dominate most other AI benchmarks. ARC-AGI-1 is approaching saturation, with top models now scoring well above 90%. The drop to sub-1% on ARC-AGI-3 suggests that the capabilities being tested here are different from what current models do well.

The 0% score for Grok-4.20 does not mean the model never took any actions. It means the model exceeded the benchmark's action cutoff on every level. I will explain how that cutoff works in the scoring section.

Early signs from non-LLM approaches

The top-scoring agents during the preview period were not language models. During the preview competition (which used 3 public environments and 3 private ones as a hidden evaluation set), a system called StochasticGoose from Tufa Labs reached 12.58% using a reinforcement learning approach with convolutional neural networks.

When StochasticGoose was evaluated on the full official benchmark at launch, however, its score dropped to 0.25%, roughly the same level as frontier LLMs. That result is telling: an approach that worked well on a handful of known environments did much worse when faced with the full range of unseen ones, reinforcing how much ARC-AGI-3 depends on general adaptability rather than task-specific optimization.

This suggests the interactive format may work better with different architectures, since a language model cannot reason its way through a problem when the necessary information only appears after each action.

You can check the latest scores on the ARC-AGI-3 leaderboard.

ARC-AGI-3 vs. ARC-AGI-1 and ARC-AGI-2

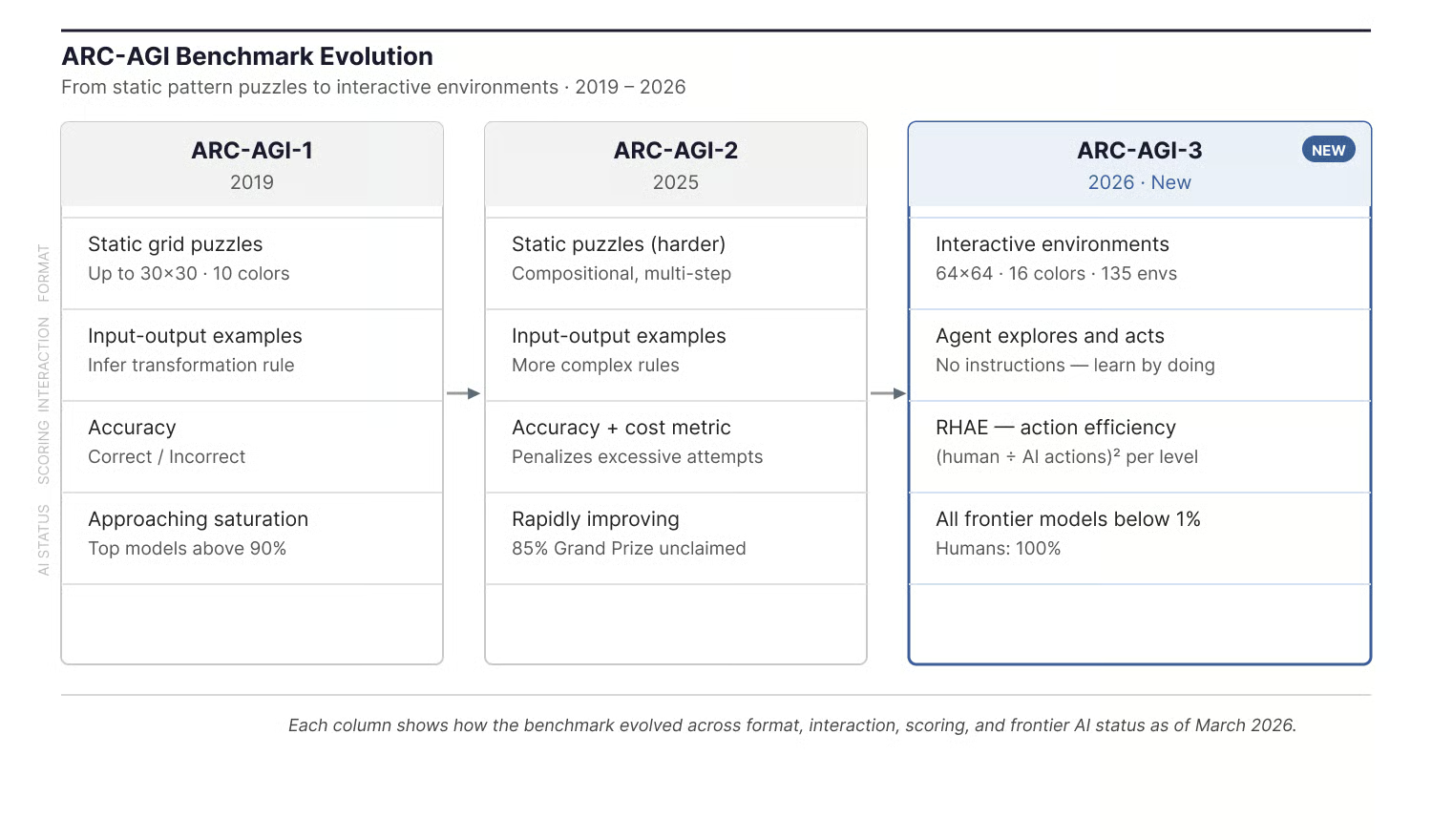

Those numbers look different once you know what earlier ARC benchmarks were testing. ARC-AGI-1, released by Chollet in 2019, introduced the concept of measuring fluid intelligence through abstract visual puzzles. The format was static: the model saw a few input-output grid examples, inferred the transformation rule, and produced a single output. ARC-AGI-2, released in March 2025, used the same static format but with harder, more compositional tasks.

ARC-AGI-1 helped identify the emergence of large reasoning models as a new category, with OpenAI's o3 being the first clear sign. ARC-AGI-2 tracked how quickly that reasoning capability scaled. But the static format had a vulnerability: test-time compute scaling. By generating thousands of candidate solutions in parallel during inference, labs pushed ARC-AGI-2 scores from single digits to well above 50% within a year.

ARC-AGI benchmark evolution over time. Image by Author.

ARC-AGI-3 makes the brute-force sampling strategy much harder to pull off because, as I mentioned earlier, the environment only reveals its rules after the agent acts. You cannot generate 10,000 candidate solutions in a context window when the problem changes with each action.

How ARC-AGI-3 resists shortcutting

Beyond the interactive format, ARC-AGI-3 includes specific design choices meant to counter the data contamination and synthetic generation shortcuts that affected earlier versions. The most notable change is the inverted dataset ratio. ARC-AGI-1 and ARC-AGI-2 had roughly a 10:1 ratio of public to private tasks, giving researchers extensive training material. ARC-AGI-3 flips this: only 25 environments are public, while the vast majority are held back in semi-private and fully private sets used for evaluation.

The ARC Prize team also flagged evidence suggesting that some frontier models may have ARC data in their training sets. During ARC-AGI-2 evaluation, Gemini 3's chain-of-thought reasoning referenced ARC-specific color mappings without being prompted to, which suggests training data saturation. By reducing the public surface area and shifting to interactive environments that cannot be memorized as static patterns, ARC-AGI-3 aims to make this kind of shortcut much harder.

What ARC-AGI-3 Is Designed to Measure

Those design choices make more sense once you understand what the benchmark is actually trying to test. The benchmark targets what the ARC Prize team describes as "skill-acquisition efficiency": how efficiently an AI agent can learn something it has never encountered before.

Four core capabilities measured by ARC-AGI-3. Image by Author.

These four areas are tested simultaneously across every environment, with no instructions and no stated objectives at any point.

A 100% score on ARC-AGI-3 would mean an AI agent can solve every environment as efficiently as the second-best human tester. The ARC Prize team is clear that this would not equal AGI. It would mean one particular gap between AI and human learning has been closed.

How ARC-AGI-3 Is Scored

Scoring is where ARC-AGI-3 differs most from earlier benchmarks. It does not just check whether the agent eventually stumbles into a solution. It measures how efficiently the agent gets there.

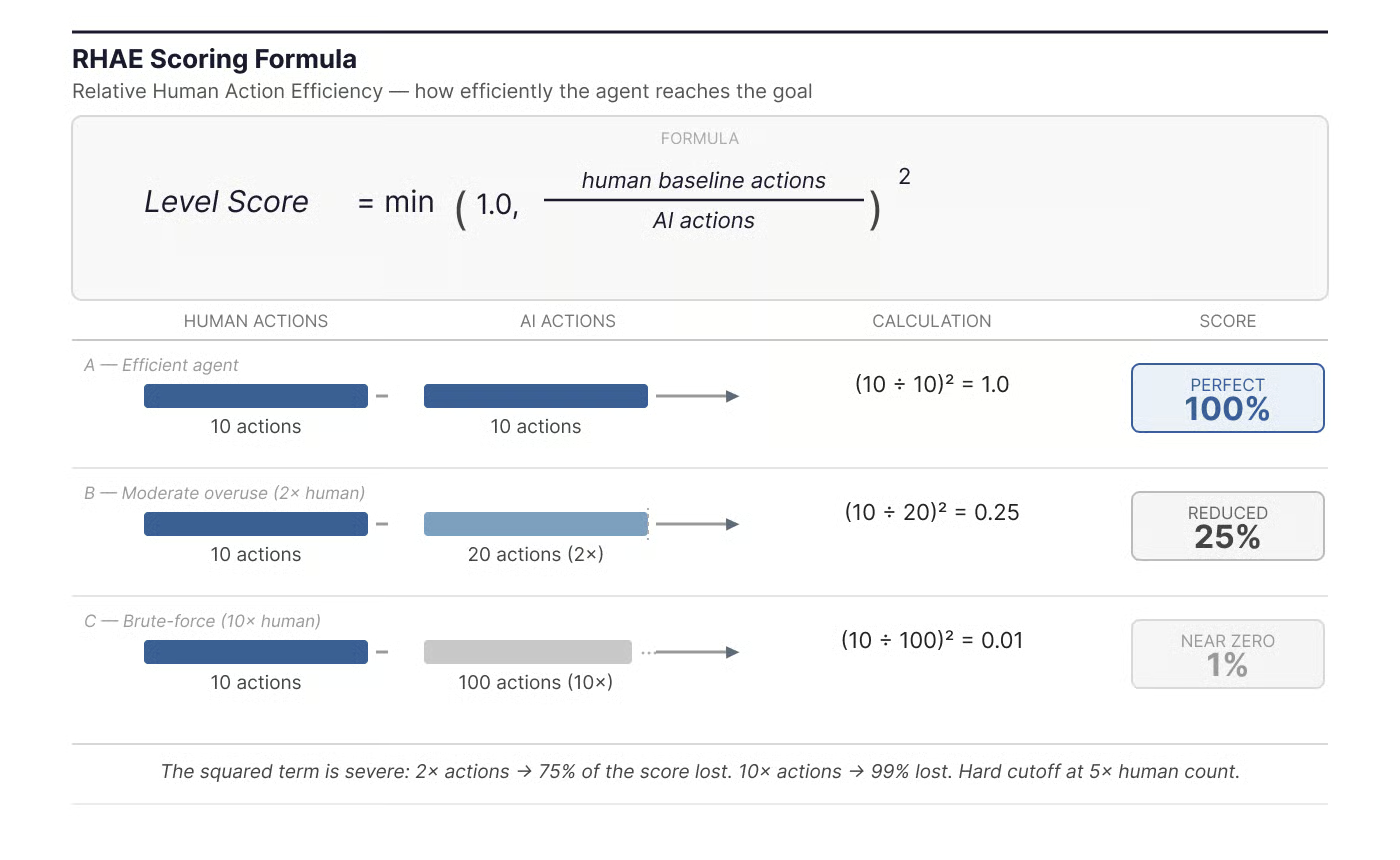

The RHAE scoring formula

The metric is called RHAE, which stands for Relative Human Action Efficiency. The per-level formula is:

Level Score = min(1.0, (human_baseline_actions / AI_actions))²

The key detail is the squared term. This is not a linear relationship. Doubling the action count does not halve the score; it quarters it. Ten times the action drops the score to nearly zero. This power-law design heavily penalizes brute-force approaches and rewards agents that actually learn how the environment works.

RHAE scoring formula with worked examples. Image by Author.

The human baseline is set by the second-best performer out of 10 testers who attempted each environment on first exposure. Using the second-best rather than the absolute best filters out lucky outliers while staying grounded in real play.

There is also a hard cutoff: if an agent takes more than 5x the human action count on any level, it is cut off and scores zero for that level. And scores are capped at 1.0, so discovering an unintended shortcut that beats the human baseline does not give bonus credit.

Within each environment, later levels count more. The scoring uses a weighted average where Level 1 gets a weight of 1, Level 2 gets a weight of 2, and so on. This means that tutorial levels barely affect the score, while the harder levels that require combining multiple learned mechanics carry the most weight.

Two leaderboards

ARC-AGI-3 maintains two separate leaderboards with different rules. The Official leaderboard evaluates frontier models using general-purpose API systems that have not been specially prepared for ARC-AGI-3. The Community leaderboard allows self-reported results and permits harnesses. This distinction matters because, as I mentioned earlier, hand-crafted harnesses can dramatically inflate scores on known environments while failing completely on unseen ones. The Official leaderboard is designed to measure the AI's actual adaptability, not the developer's scaffolding.

ARC Prize 2026 and the ARC-AGI-3 Competition

ARC-AGI-3 is the centerpiece of this year's competition, but it is not the only track.

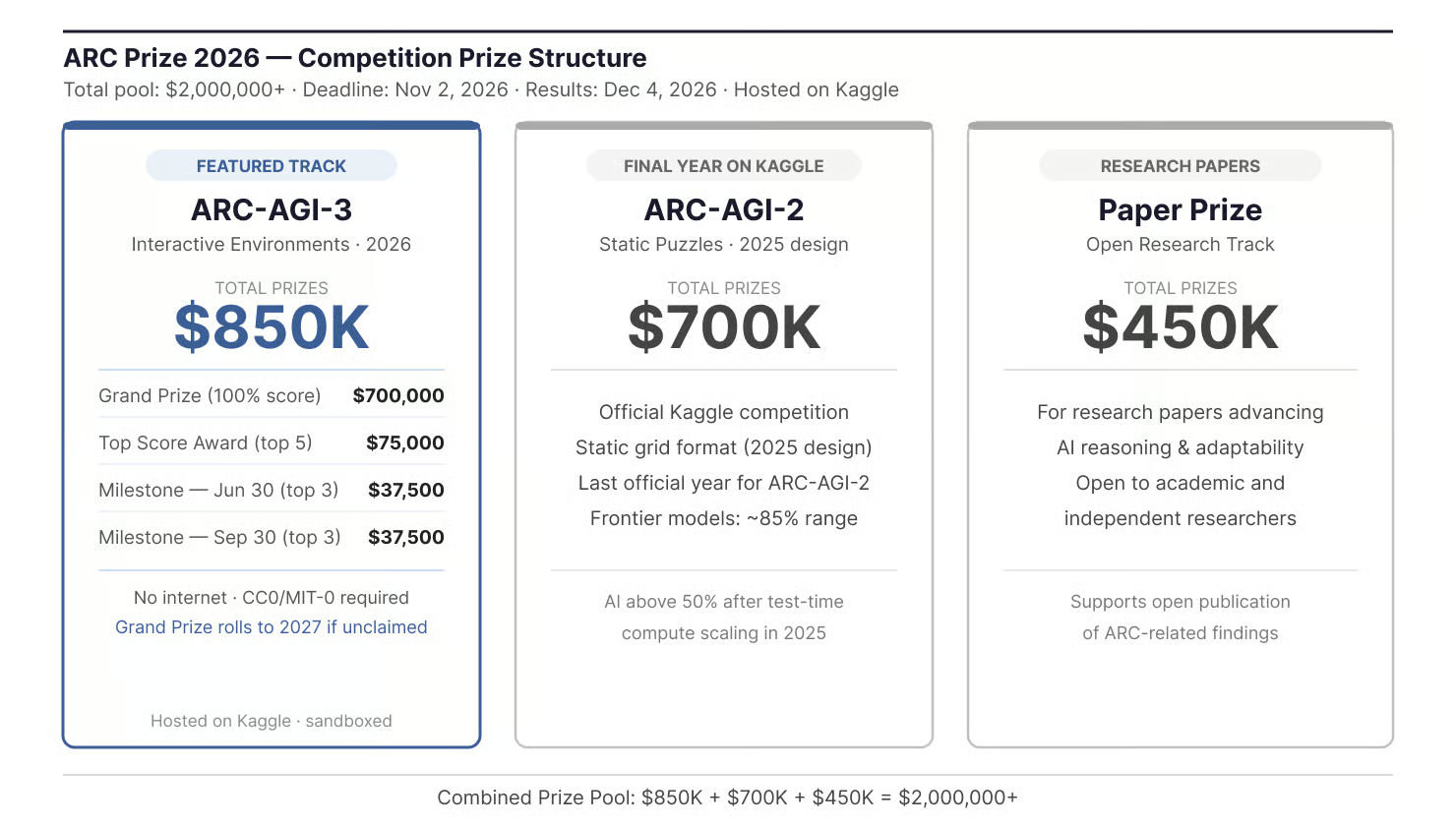

ARC Prize 2026 has a total prize pool of over $2 million spread across three tracks. The ARC-AGI-3 track offers $850,000 in total prizes, headlined by a $700,000 Grand Prize for the first eligible agent that achieves 100% on the fully private evaluation set. If no one claims it this year, the funds roll over to 2027. Beyond the Grand Prize, there is a $75,000 top score award split among the top five finishers, and two milestone checkpoints (June 30 and September 30, 2026) each distributing $37,500 across the top three teams at that date.

ARC Prize 2026 competition prize structure. Image by Author.

The other two tracks are an ARC-AGI-2 track ($700,000, and notably the final year ARC-AGI-2 will be used in an official Kaggle competition) and a Paper Prize track ($450,000 for research papers).

The competition runs on Kaggle, but with rules that set it apart from a typical Kaggle competition. Submissions are evaluated in a sandboxed environment with no internet access, which rules out API calls to hosted models like GPT, Claude, or Gemini. All prize-eligible solutions must be released under a permissive or public-domain license (CC0 or MIT-0) before receiving private evaluation scores. The competition opened on March 25, 2026, with a final submission deadline of November 2, 2026, and results announced on December 4, 2026.

Why ARC-AGI-3 Matters

Benchmarks matter most when they reveal a gap that did not look like a gap before. By that measure, the first two ARC benchmarks earned their place: ARC-AGI-1 surfaced the emergence of large reasoning models, and ARC-AGI-2 tracked how far test-time compute scaling could go. ARC-AGI-3 is pointing at a different problem.

What makes this benchmark relevant right now is context. As of early 2026, many widely used AI benchmarks are approaching saturation. Frontier models score above 90% on MMLU, HumanEval, and GSM8K, among others, which limits how much these evaluations can tell us about differences between systems. ARC-AGI-3 tests a capability that current models appear to lack: learning on the fly inside unfamiliar environments. The gap between sub-1% AI scores and 100% human solvability is large enough to suggest this is not just a harder version of the same test, but a different kind of challenge.

ARC Prize positions ARC-AGI-3 as the most important test of interactive reasoning, though it is worth remembering that framing comes from the organization running the benchmark. Whether it holds up depends on how the benchmark performs as researchers adapt to it.

Limitations and Open Questions

No benchmark is perfect, and ARC-AGI-3 has received some criticism worth noting.

One common concern is the scoring formula itself. The squared efficiency metric heavily penalizes exploration, which is arguably a sign of intelligence rather than a failure. If an agent spends 50 actions carefully mapping boundary conditions before reaching a solution, it scores far worse than a human who guesses the rule in 5 actions. Some community members have argued this makes the performance gap look artificially large.

There is also the question of baseline selection. As I covered in the scoring section, the benchmark uses the second-best human tester rather than the average, which sets a high bar. Even typical humans would score below 100% under this approach.

- Does abstract game performance transfer? Whether success on interactive grid games predicts anything about real-world adaptive intelligence is still an open question. The benchmark tests a specific, narrow dimension of reasoning.

- Agent design versus base models. It is unclear whether progress will come from better agent scaffolding (harnesses, state-space search, RL-based approaches) or from different model architectures. As I covered earlier, StochasticGoose's preview-period lead disappeared on the full benchmark, so no approach has demonstrated clear generalization yet.

- Will the benchmark stay resistant? As I covered earlier, labs moved ARC-AGI-2 from single digits to well above 50% in under a year once they focused on it. Whether ARC-AGI-3's design changes (interactive format, inverted dataset ratio, action-efficiency scoring) are enough to resist similar pressure is still an open question.

- Context window costs. The 64×64 grids with 16 colors can quickly exhaust language model context windows without compression, making LLM-based approaches expensive to run at scale.

The ARC Prize team presents the benchmark as a scientific attempt to measure a specific missing capability, not a definitive test of general intelligence. That is a fair reading given what we know today.

Conclusion

ARC-AGI-3 takes a different approach to evaluating AI systems. By moving from static puzzles to interactive environments, it tests whether models can explore, set their own goals, and figure things out through experience rather than instructions.

The early numbers are striking. Models that dominate coding benchmarks, math competitions, and knowledge tests struggle to cross 1% here. Whether that gap closes quickly, as it did with earlier ARC benchmarks, or whether the interactive format proves harder to crack is something I think is worth watching closely.

For more on the concepts behind adaptive AI systems, check out our AI Fundamentals skill track or our course on Building Scalable Agentic Systems.

I’m a data engineer and community builder who works across data pipelines, cloud, and AI tooling while writing practical, high-impact tutorials for DataCamp and emerging developers.

FAQs

What is ARC-AGI-3?

An interactive reasoning benchmark that tests whether AI agents can explore novel environments, infer goals, and act efficiently without any instructions.

How is ARC-AGI-3 different from ARC-AGI-2?

ARC-AGI-2 uses static input-output puzzles; ARC-AGI-3 uses live, interactive environments where rules and goals only emerge through the agent's own actions.

Is ARC-AGI-3 a Kaggle competition?

It is hosted on Kaggle as part of ARC Prize 2026, but with stricter rules: no internet access during evaluation, and prize-eligible solutions must open-source their code and methods. The frontier model scores (GPT-5.4, Gemini, etc.) were collected separately via API, not through Kaggle submissions.

What does ARC-AGI-3 measure?

Skill-acquisition efficiency: how quickly an AI agent can learn to navigate a novel environment, scored by action count relative to a human baseline.

Does passing ARC-AGI-3 mean AGI?

No. A 100% score means the agent matches human efficiency in these specific environments, not that it has achieved general intelligence.