
What if you could make large language models run faster without upgrading your GPU, changing your machine, or switching to a smaller model?

That is what we will test in this guide using Multi-Token Prediction, or MTP. In my benchmark, the same Qwen3.6 27B model on the same RunPod RTX 3090 setup improved from 38 tokens/sec to 65 tokens/sec after enabling MTP. That is a 1.71x speedup, or around 71% higher throughput, with no visible loss in output quality.

In this guide, we will:

- Set up a RunPod RTX 3090 machine

- Clone and switch to the MTP branch

- Build llama.cpp with CUDA support

- Download the Qwen3.6 27B MTP GGUF model

- Run the model without MTP to get a baseline speed

- Enable MTP and test the model again

- Compare the token generation speed with and without MTP

- Look at TurboQuant as a possible next step for further optimization


What Is Multi-Token Prediction?

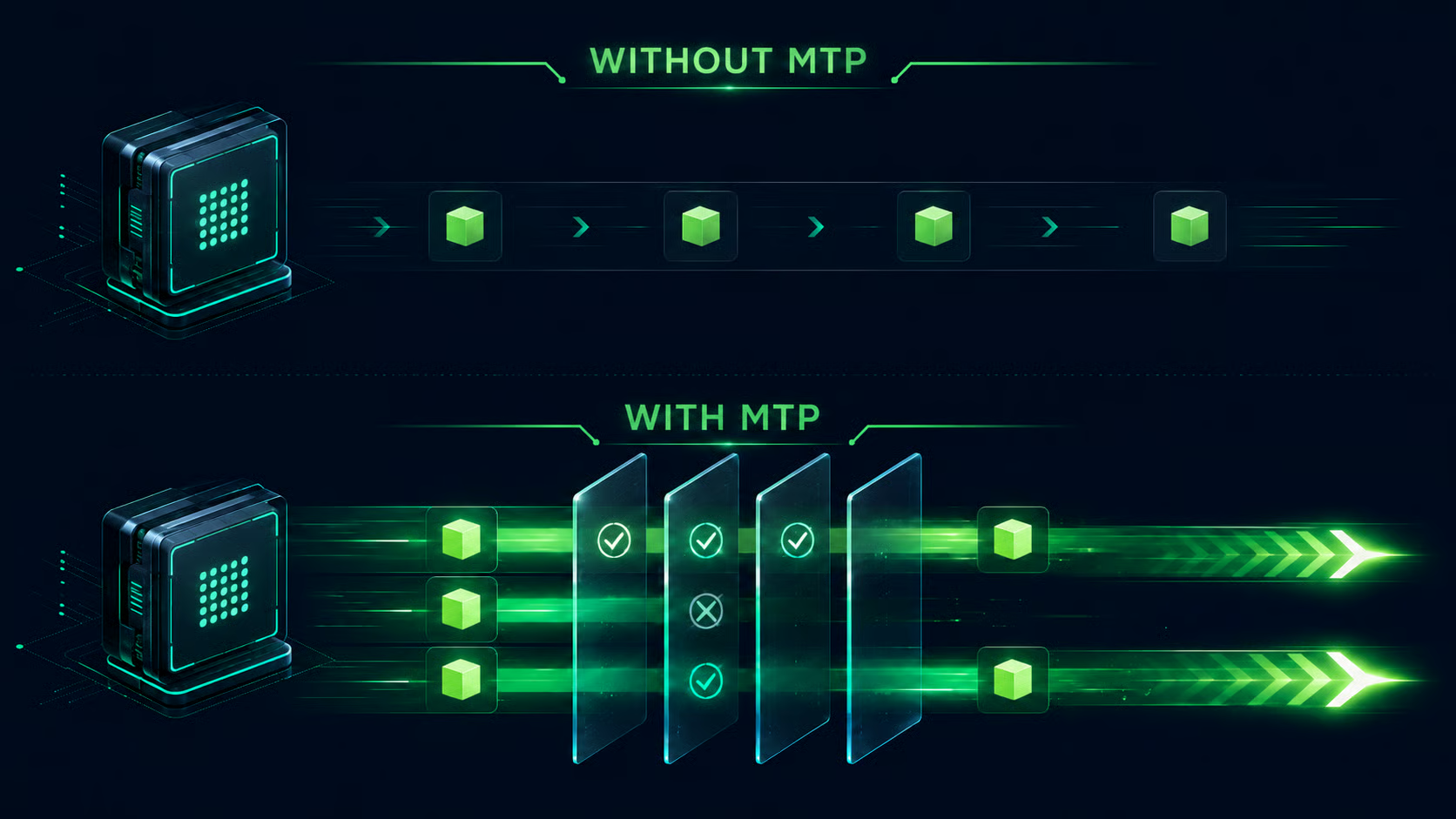

Most LLMs generate text one token at a time. The model predicts the next token, adds it to the context, and repeats the same process again. This is reliable, but it can be slow because each new token usually requires another decoding step.

Multi-Token Prediction changes this by allowing the model to look ahead and propose multiple future tokens instead of only one. These proposed tokens are then checked by the main decoding process. If the predictions are correct, the model can accept several tokens in one go. If a draft token is wrong, it and every draft token after it are discarded, and decoding continues from the last verified token.

In practice, MTP works like a built-in drafting mechanism. The model drafts a few likely next tokens, verifies them, and keeps the valid ones. The more draft tokens that get accepted, the fewer full decoding steps are needed, which can increase tokens per second without changing the final output quality.

In simple terms:

- Without MTP: Generate token 1 → generate token 2 → generate token 3

- With MTP: Draft multiple tokens → verify them → accept valid tokens together

This is why MTP can make local LLM inference feel much faster. Instead of forcing the model to move forward one tiny step at a time, it lets the model safely jump ahead whenever its draft predictions are correct.

In llama.cpp and in vLLM-style implementations, MTP is closely related to speculative decoding, where draft tokens are accepted only when they match the verifier’s output.
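To make the draft-and-verify idea concrete, here is a minimal Python sketch. It is a toy, not llama.cpp's actual implementation: `speculative_step`, `draft_fn`, `target_fn`, and the example sentence are all hypothetical names chosen for illustration. A real engine verifies all draft tokens in one batched forward pass, whereas this sketch calls the verifier once per position to keep the logic readable.

```python
from typing import Callable, List

SENTENCE = "the quick brown fox jumps over the lazy dog".split()


def target_fn(context: List[str]) -> str:
    """Toy verifier: always knows the correct next token."""
    return SENTENCE[len(context)] if len(context) < len(SENTENCE) else "<eos>"


def draft_fn(context: List[str], n_draft: int) -> List[str]:
    """Toy drafter: usually right, but always gets the word 'over' wrong."""
    start = len(context)
    guess = SENTENCE[start:start + n_draft]
    return ["under" if token == "over" else token for token in guess]


def speculative_step(
    context: List[str],
    draft: Callable[[List[str], int], List[str]],
    target: Callable[[List[str]], str],
    n_draft: int,
) -> List[str]:
    """Run one draft-then-verify step and return the tokens it emits."""
    emitted: List[str] = []
    for token in draft(context, n_draft):
        expected = target(context + emitted)
        if token != expected:
            # First mismatch: keep the verifier's token, drop the rest of the draft.
            emitted.append(expected)
            return emitted
        emitted.append(token)
    # Every draft token matched, so the verifier adds one more token for free.
    emitted.append(target(context + emitted))
    return emitted


def generate(n_draft: int):
    """Generate the sentence and count how many verification steps it took."""
    context: List[str] = []
    steps = 0
    while len(context) < len(SENTENCE):
        context += speculative_step(context, draft_fn, target_fn, n_draft)
        steps += 1
    return context[:len(SENTENCE)], steps
```

In this toy run, `generate(3)` finishes the 9-token sentence in 3 verification steps, while `generate(0)` (no drafting) needs 9. Both return exactly the same text, which is the point: a wrong draft costs speed, not correctness.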

1. Set Up a RunPod RTX 3090 Machine

For this guide, I used a RunPod GPU instance with an RTX 3090. You can use another CUDA-enabled GPU, but the benchmark results in this tutorial are based on an RTX 3090 setup.

First, create a new RunPod pod and select an RTX 3090 GPU.

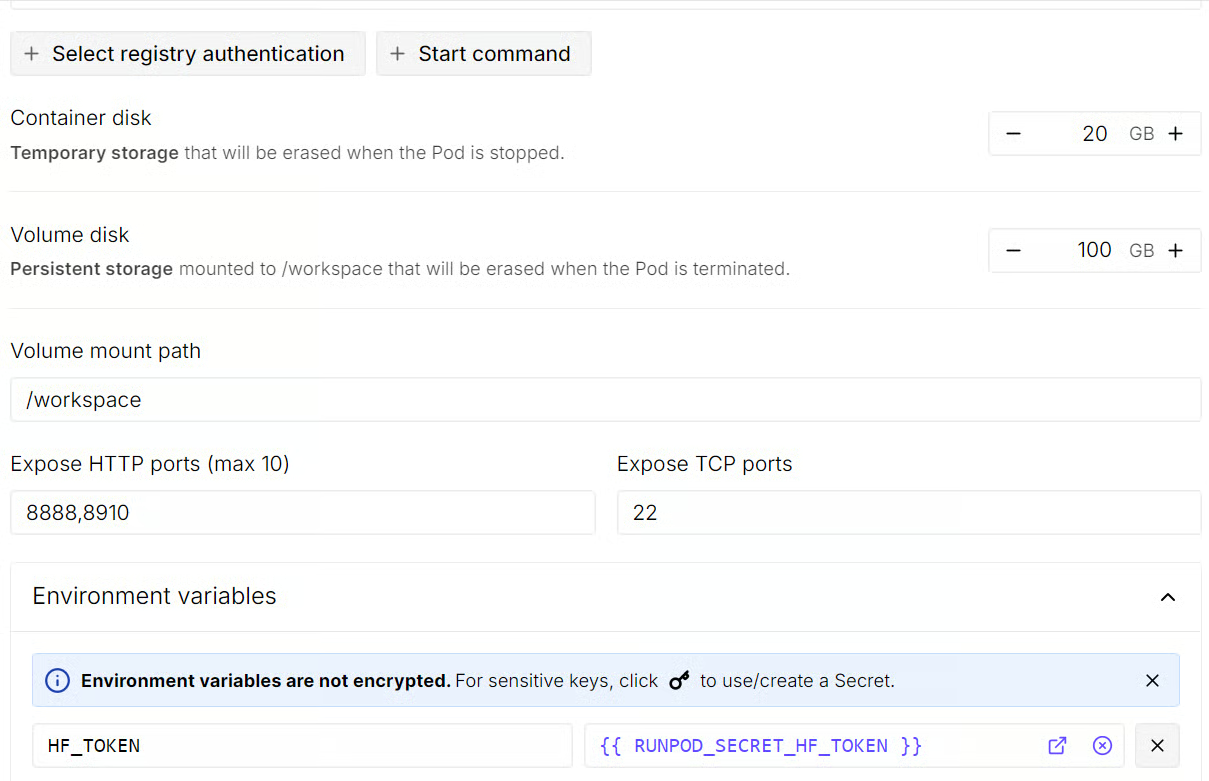

Before deploying the pod, edit the template settings:

-

Increase the volume disk size to 100 GB

-

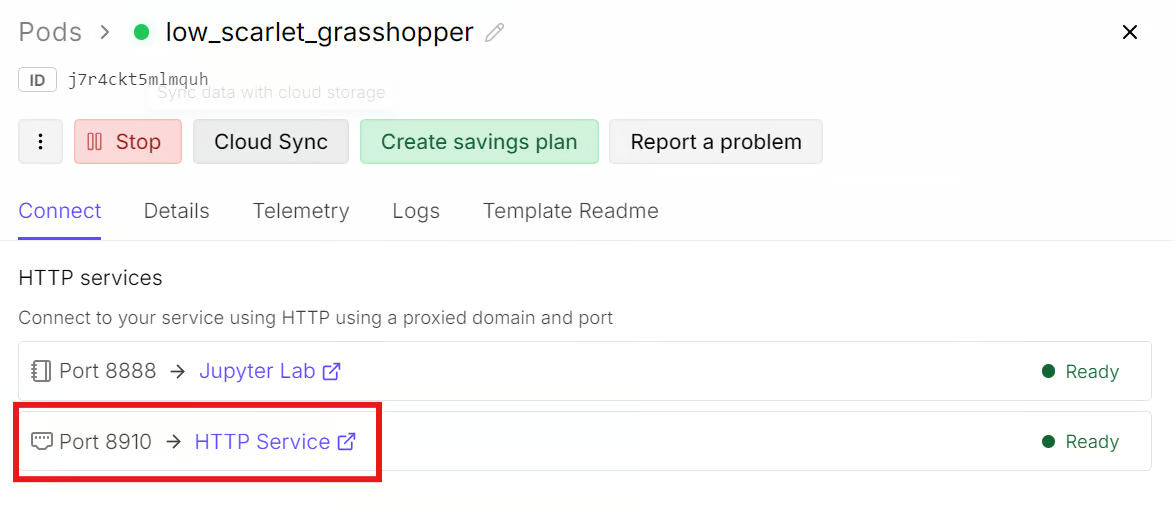

Add an additional HTTP port: 8910

-

Add an environment variable called

HF_TOKENand set its value to your Hugging Face access token.

The extra HTTP port will let you access the llama.cpp server and web UI from your browser. The Hugging Face token helps authenticate the download request and can improve model download speed, especially for large GGUF files.

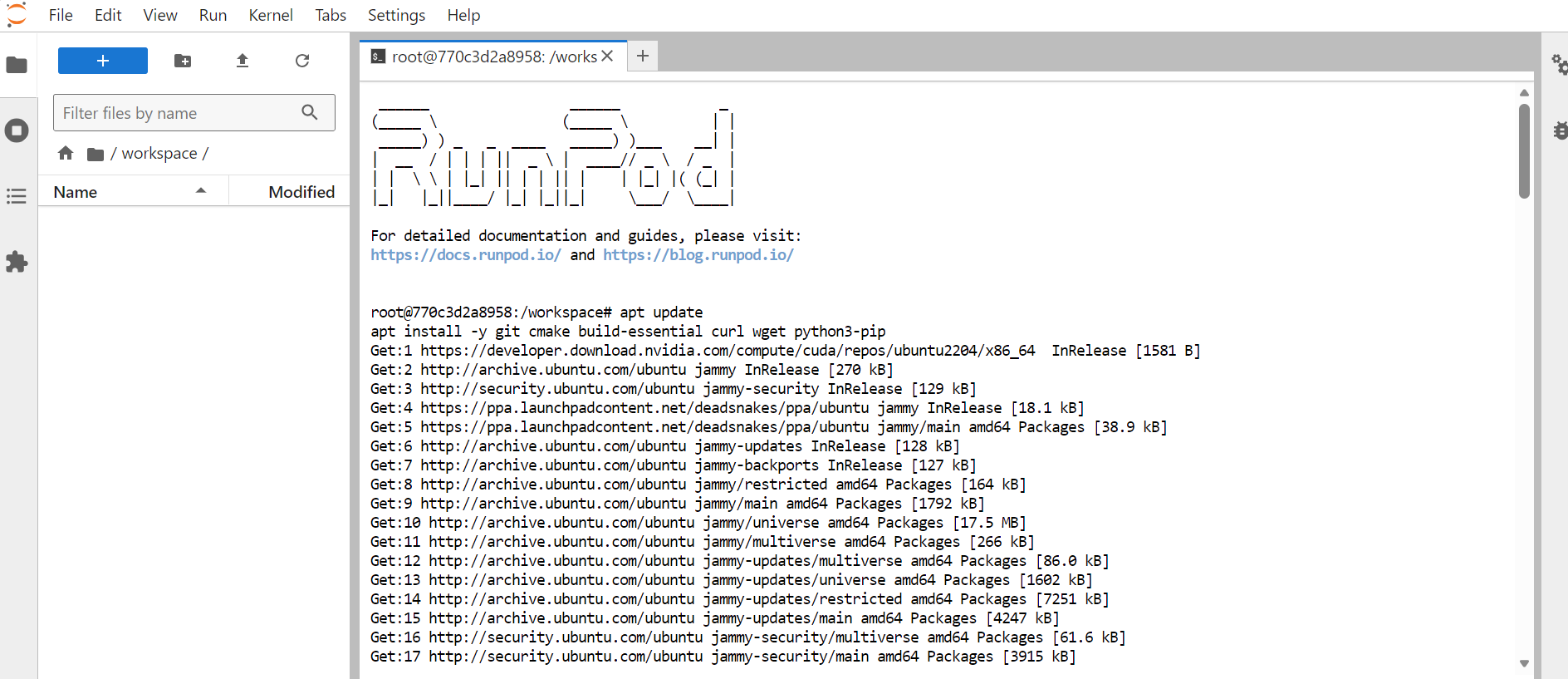

After updating the template, deploy the pod. Once it is running, wait for RunPod to give you access to the JupyterLab instance. Open JupyterLab, then launch a new terminal.

Inside the terminal, install the required system packages:

apt update

apt install -y git cmake build-essential curl wget python3-pip
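Before moving on, it can save time to confirm that the tools and the token are actually in place. The following is a small optional preflight sketch, not part of the original workflow. The function name `missing_prerequisites` and the exact tool list are my own assumptions, derived from the packages installed above.

```python
import os
import shutil
from typing import Callable, List, Mapping, Optional

# Executables expected after the apt install step (assumed list).
REQUIRED_TOOLS = ["git", "cmake", "make", "g++", "curl", "wget"]


def missing_prerequisites(
    env: Mapping[str, str] = os.environ,
    which: Callable[[str], Optional[str]] = shutil.which,
) -> List[str]:
    """Return the names of required tools or variables that are not available."""
    missing = [tool for tool in REQUIRED_TOOLS if which(tool) is None]
    if not env.get("HF_TOKEN"):
        # The token is set in the RunPod template, not by apt.
        missing.append("HF_TOKEN")
    return missing
```

Calling `missing_prerequisites()` in a Python shell on the pod should return an empty list when everything is ready. The `env` and `which` parameters exist only so the check can be exercised without touching the real system.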

2. Clone and Switch to the MTP Branch

Next, move into the workspace directory where we will install and build llama.cpp:

cd /workspace

Clone the llama.cpp repository:

git clone --depth 1 https://github.com/ggml-org/llama.cpp.git

cd llama.cpp

The MTP changes are still being tested through a dedicated llama.cpp pull request, so we fetch and switch to that branch to use the latest MTP implementation before it becomes part of the standard main build.

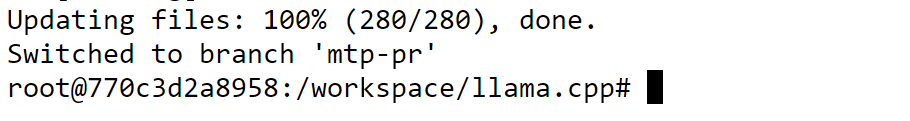

Fetch the MTP branch locally:

git fetch origin pull/22673/head:mtp-pr

git checkout mtp-pr

This switches your local llama.cpp build to the MTP-enabled version, which we will use for the rest of the guide.

3. Build llama.cpp with CUDA Support

Now that you are on the MTP-enabled branch, build llama.cpp with CUDA support. This allows the model to use the RTX 3090 GPU instead of running inference on the CPU.

Run the CMake build configuration:

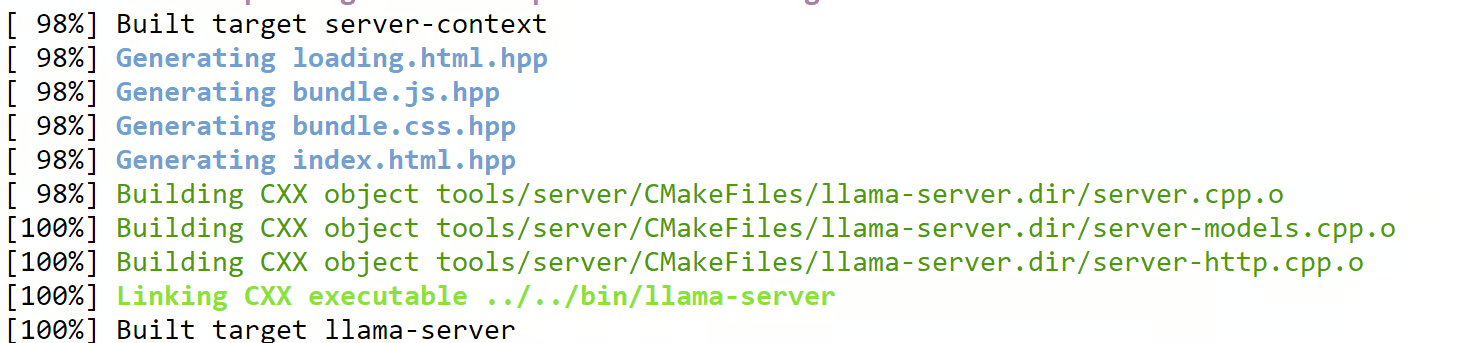

cmake -B build -DGGML_CUDA=ON -DCMAKE_BUILD_TYPE=Release

Then compile the two targets we need for this guide:

cmake --build build --target llama-cli llama-server -j

This builds:

- llama-cli for running quick command-line tests

- llama-server for launching an OpenAI-compatible server with browser access

Once the build is complete, copy the llama-server binary into the main llama.cpp directory:

cp ./build/bin/llama-server ./llama-server

This makes it easier to run the server from the project root in the next steps.

4. Download the Qwen3.6-27B-MTP Model

Next, download the Qwen3.6 27B MTP GGUF model that we will use for testing. This is the model we will run first without MTP, and then again with MTP enabled to compare the speed difference.

First, install the Hugging Face download tools:

pip install -U "huggingface_hub[hf_xet]" hf-xet hf_transfer

Then enable faster Hugging Face downloads:

export HF_HUB_ENABLE_HF_TRANSFER=1

This helps speed up large model downloads, especially when working with GGUF files.

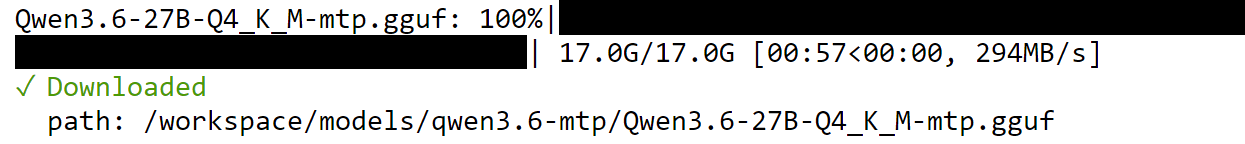

Now create a dedicated directory for the model:

mkdir -p /workspace/models/qwen3.6-mtp

Download the Qwen3.6 27B MTP GGUF model:

hf download froggeric/Qwen3.6-27B-MTP-GGUF \
Qwen3.6-27B-Q4_K_M-mtp.gguf \
--local-dir /workspace/models/qwen3.6-mtp
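If you prefer to script the download, the same file can be fetched from Python with `hf_hub_download` from the `huggingface_hub` package installed above. This is a sketch of an alternative to the CLI command, not something the original benchmark used. The helper names `model_path` and `download_model` are hypothetical.

```python
from pathlib import Path

REPO_ID = "froggeric/Qwen3.6-27B-MTP-GGUF"
FILENAME = "Qwen3.6-27B-Q4_K_M-mtp.gguf"
LOCAL_DIR = Path("/workspace/models/qwen3.6-mtp")


def model_path() -> Path:
    """Location where the GGUF file is expected after the download."""
    return LOCAL_DIR / FILENAME


def download_model() -> Path:
    """Download the GGUF file unless it is already on disk."""
    if model_path().exists():
        return model_path()
    # Imported here so the helpers above work even without huggingface_hub.
    from huggingface_hub import hf_hub_download

    # The HF_TOKEN environment variable is picked up automatically.
    return Path(
        hf_hub_download(repo_id=REPO_ID, filename=FILENAME, local_dir=str(LOCAL_DIR))
    )
```

`model_path()` returns the same path that the server commands in the next steps pass to `-m`, so it doubles as a quick check that the file landed where the server expects it.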

If you’re interested in fine-tuning LLMs, check out my tutorial on fine-tuning Qwen3.6 on a medical Q&A dataset.

5. Run Qwen3.6-27B Without MTP Enabled

Now we are at the main part of the guide: testing the model speed before and after enabling MTP.

First, we will run the model without MTP. This gives us a clean baseline so we can compare the speed difference later. We are using the same model, same GPU, same context size, and same server settings. The only major change in the next step will be enabling MTP.
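One way to guarantee that both runs really share the same settings is to build the two commands from a single list. The sketch below is an optional convenience, not how the benchmark was launched. The flags are copied from the commands in this guide, including the two MTP flags introduced in step 6, while the names `SHARED_ARGS`, `MTP_ARGS`, and `server_command` are my own.

```python
import shlex
from typing import List

MODEL = "/workspace/models/qwen3.6-mtp/Qwen3.6-27B-Q4_K_M-mtp.gguf"

# Settings that stay identical in the baseline and the MTP run.
SHARED_ARGS = [
    "--host", "0.0.0.0", "--port", "8910",
    "-ngl", "99", "-c", "100000",
    "--cache-type-k", "q8_0", "--cache-type-v", "q8_0",
    "-np", "1", "-b", "2048", "-ub", "512", "-t", "8", "-fa", "on",
    "--temp", "0.7", "--top-k", "20", "--top-p", "0.95",
    "--repeat-penalty", "1.1", "--metrics",
]

# The only functional difference between the two runs.
MTP_ARGS = ["--spec-type", "mtp", "--spec-draft-n-max", "3"]


def server_command(use_mtp: bool) -> List[str]:
    """Return the llama-server argument list for the baseline or the MTP run."""
    alias = "qwen3.6-27b-mtp" if use_mtp else "qwen3.6-27b-no-mtp"
    command = ["./llama-server", "-m", MODEL, "--alias", alias]
    command += SHARED_ARGS
    if use_mtp:
        command += MTP_ARGS
    return command


# Print both commands in a copy-paste friendly form.
print(shlex.join(server_command(use_mtp=False)))
print(shlex.join(server_command(use_mtp=True)))
```

Apart from the alias, the two printed commands differ only by the four tokens in `MTP_ARGS`, which makes the comparison easy to audit.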

Move back into the llama.cpp directory:

cd /workspace/llama.cpp

Start the server without MTP:

./llama-server \
-m "/workspace/models/qwen3.6-mtp/Qwen3.6-27B-Q4_K_M-mtp.gguf" \
--alias qwen3.6-27b-no-mtp \
--host 0.0.0.0 \
--port 8910 \
-ngl 99 \
-c 100000 \
--cache-type-k q8_0 \
--cache-type-v q8_0 \
-np 1 \
-b 2048 \
-ub 512 \
-t 8 \
-fa on \
--temp 0.7 \
--top-k 20 \
--top-p 0.95 \
--repeat-penalty 1.1 \
--metrics

This starts an OpenAI-compatible llama.cpp server on port 8910.

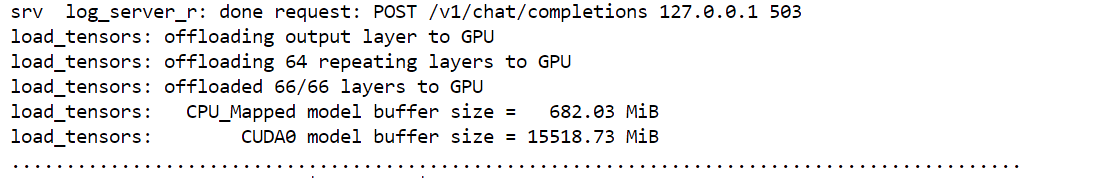

The model may take a short time to load because the server needs to load the model weights into GPU memory. Once everything is ready, the terminal will show that the server is available on port 8910.

Because we exposed this port when setting up the RunPod template, you do not need to configure anything else. Go back to your RunPod dashboard and click the link associated with port 8910. This will open the llama.cpp web UI in your browser, with the local model already loaded.

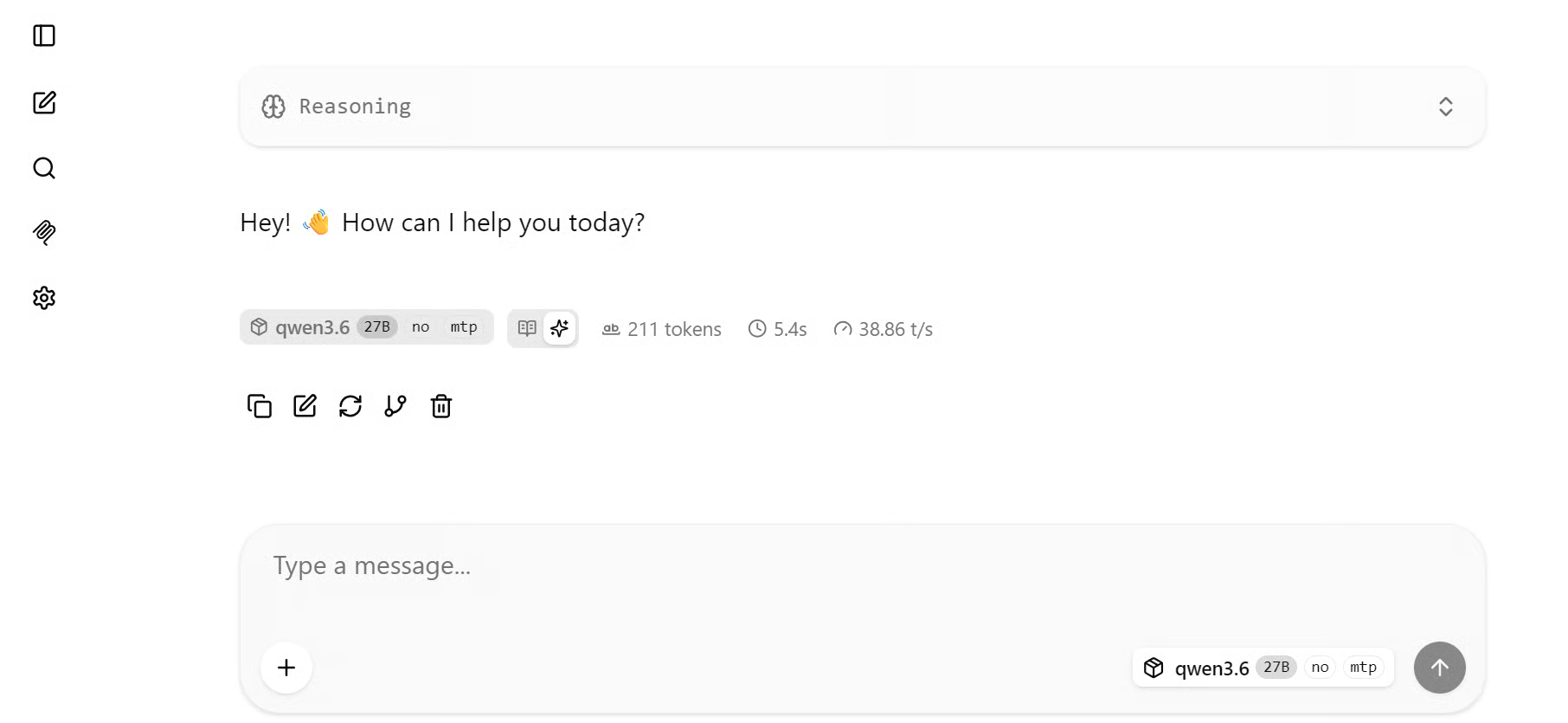

From there, you can start testing prompts directly in the browser, similar to how you would use a chat interface.
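Because the server is OpenAI-compatible, you can also send prompts from a script instead of the browser. The sketch below uses only the Python standard library and assumes it runs inside the pod, so the server is reachable at `http://localhost:8910`. The helper names are hypothetical, and the speed it reports is a wall-clock estimate that includes prompt processing, so expect it to read a little lower than the generation speed shown in the web UI.

```python
import json
import time
import urllib.request


def build_chat_request(prompt: str, model: str = "qwen3.6-27b-no-mtp",
                       max_tokens: int = 256) -> dict:
    """Build the JSON body for a single-turn chat completion."""
    return {
        "model": model,  # the --alias passed to llama-server
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def tokens_per_second(completion_tokens: int, elapsed_seconds: float) -> float:
    """Convert a token count and a duration into throughput."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return completion_tokens / elapsed_seconds


def time_chat(prompt: str, base_url: str = "http://localhost:8910") -> float:
    """Send one prompt and return the end-to-end tokens/sec for the reply."""
    body = json.dumps(build_chat_request(prompt)).encode("utf-8")
    request = urllib.request.Request(
        base_url + "/v1/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    start = time.perf_counter()
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    elapsed = time.perf_counter() - start
    return tokens_per_second(reply["usage"]["completion_tokens"], elapsed)
```

With the server running, `time_chat("Write a haiku about GPUs")` returns a single throughput figure. Running it a few times with the same prompts before and after enabling MTP gives a more repeatable comparison than reading numbers off the UI.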

In my baseline test, the model generated responses at around 38.86 tokens/sec without MTP. Even with a more complex prompt, the speed stayed around the same range.

For a 27B model running on an RTX 3090, this is already a usable result, especially considering that the card is slower and has less memory (24 GB of VRAM) than newer data center GPUs.
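The `--metrics` flag in the server command exposes a Prometheus-style `/metrics` endpoint, which is another way to read throughput without the browser. Below is a minimal parser sketch. The metric name `llamacpp:predicted_tokens_seconds` is my recollection of llama-server's naming and should be checked against the actual output of your build. The parser also assumes lines carry no trailing timestamp.

```python
import urllib.request
from typing import Dict


def parse_prometheus(text: str) -> Dict[str, float]:
    """Parse 'name value' lines from Prometheus text output, skipping comments."""
    metrics: Dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank line or HELP/TYPE comment
        name, _, value = line.rpartition(" ")
        if not name:
            continue  # no separator, so not a metric line
        try:
            metrics[name] = float(value)
        except ValueError:
            continue  # value is not numeric, ignore the line
    return metrics


def fetch_metrics(base_url: str = "http://localhost:8910") -> Dict[str, float]:
    """Download and parse the metrics exposed by llama-server."""
    with urllib.request.urlopen(base_url + "/metrics") as response:
        return parse_prometheus(response.read().decode("utf-8"))


# Illustrative input only; the value mirrors the baseline reported in this guide.
SAMPLE = """# TYPE llamacpp:predicted_tokens_seconds gauge
llamacpp:predicted_tokens_seconds 38.86
"""
print(parse_prometheus(SAMPLE))
```

Calling `fetch_metrics()` after a few prompts and noting the generation-throughput entry gives you a number you can record for both the baseline and the MTP run.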

6. Run Qwen3.6-27B With MTP Enabled

Now we will run the same model again, but this time with MTP enabled.

Go back to the terminal where the server is running and stop it with:

CTRL + C

The important thing here is that we are not changing the model, GPU, quantization, or most runtime settings. We are only adding two MTP-related flags:

--spec-type mtp

--spec-draft-n-max 3

The first flag tells llama.cpp to use MTP-style speculative decoding. The second flag sets the maximum number of draft tokens to 3. This means the model can attempt to draft up to three future tokens before verification.
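To get a feel for what a draft limit of 3 can buy, here is a back-of-the-envelope sketch. It assumes, as a simplification, that each draft token is accepted independently with the same probability `accept_prob`, which real prompts will not follow exactly. Under that assumption a verification step emits the accepted draft tokens plus one token from the verifier, so the expected count is 1 + p + p² + … + pⁿ. The function name is hypothetical, and the acceptance rates in the loop are illustrative, not measured.

```python
def expected_tokens_per_step(accept_prob: float, n_draft: int = 3) -> float:
    """Expected tokens emitted per verification step under i.i.d. acceptance."""
    if not 0.0 <= accept_prob <= 1.0:
        raise ValueError("accept_prob must be between 0 and 1")
    if n_draft < 0:
        raise ValueError("n_draft must be non-negative")
    # Term k is the probability that at least k draft tokens are accepted;
    # the k = 0 term is the one token the verifier always contributes.
    return sum(accept_prob ** k for k in range(n_draft + 1))


for p in (0.0, 0.5, 0.8, 1.0):
    print(f"accept_prob={p:.1f} -> {expected_tokens_per_step(p):.3f} tokens/step")
```

With 3 draft tokens the result ranges from 1 token per step (nothing accepted) to 4 (everything accepted). This is an upper bound on the speedup rather than a prediction, because drafting and verifying the extra tokens also takes time.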

Now start the server again with MTP enabled:

./llama-server \
-m "/workspace/models/qwen3.6-mtp/Qwen3.6-27B-Q4_K_M-mtp.gguf" \
--alias qwen3.6-27b-mtp \
--host 0.0.0.0 \
--port 8910 \
-ngl 99 \
-c 100000 \
--spec-type mtp \
--spec-draft-n-max 3 \
--cache-type-k q8_0 \
--cache-type-v q8_0 \
-np 1 \
-b 2048 \
-ub 512 \
-t 8 \
-fa on \
--temp 0.7 \
--top-k 20 \
--top-p 0.95 \
--repeat-penalty 1.1 \
--metrics

Once the server is ready, refresh the browser page. If the page does not reconnect automatically, close it and open the port 8910 link again from your RunPod dashboard.

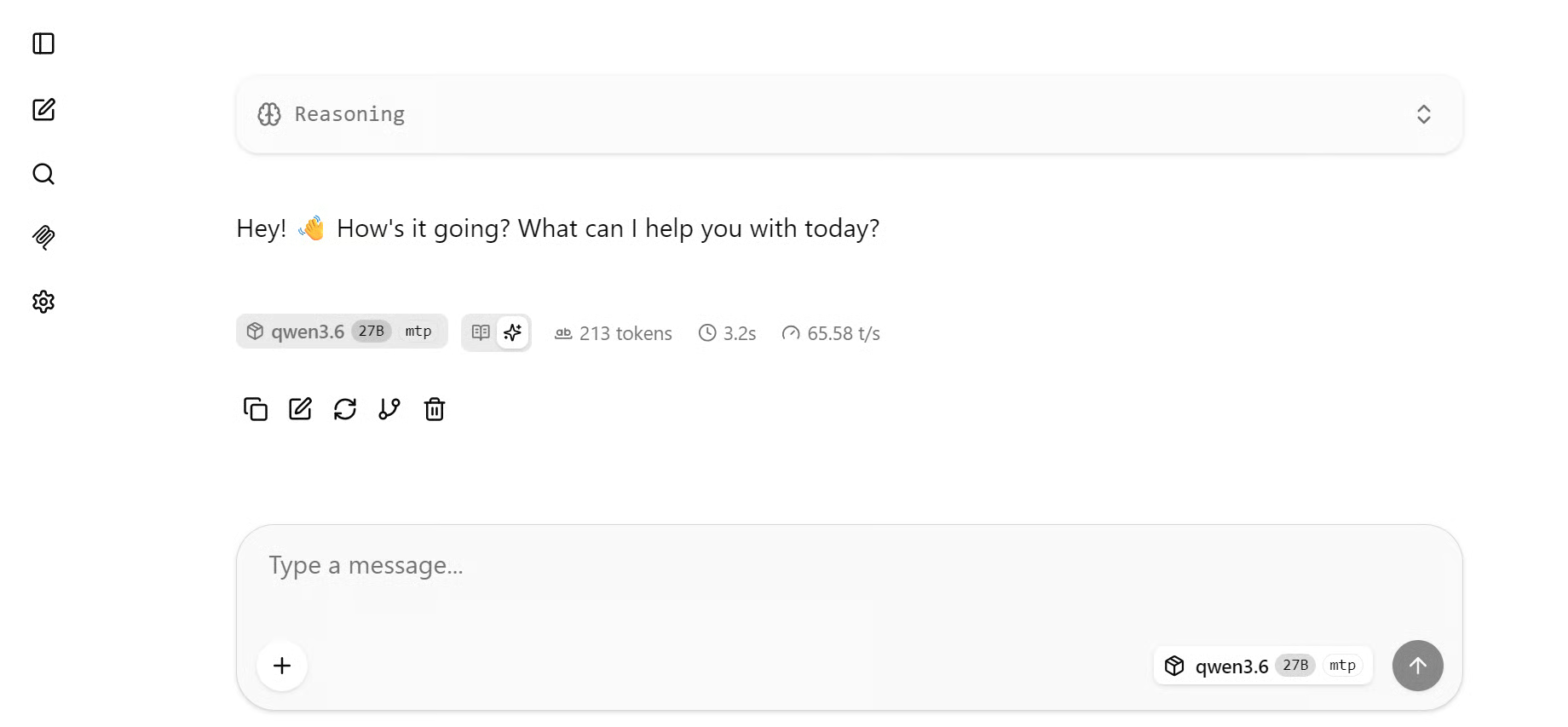

Now test the model again using the same type of prompts.

With MTP enabled, the speed increased noticeably. For a simple greeting prompt, the model reached around 65–67 tokens/sec. Compared to the baseline speed of around 38.86 tokens/sec, this is a major improvement from adding only two command-line flags.

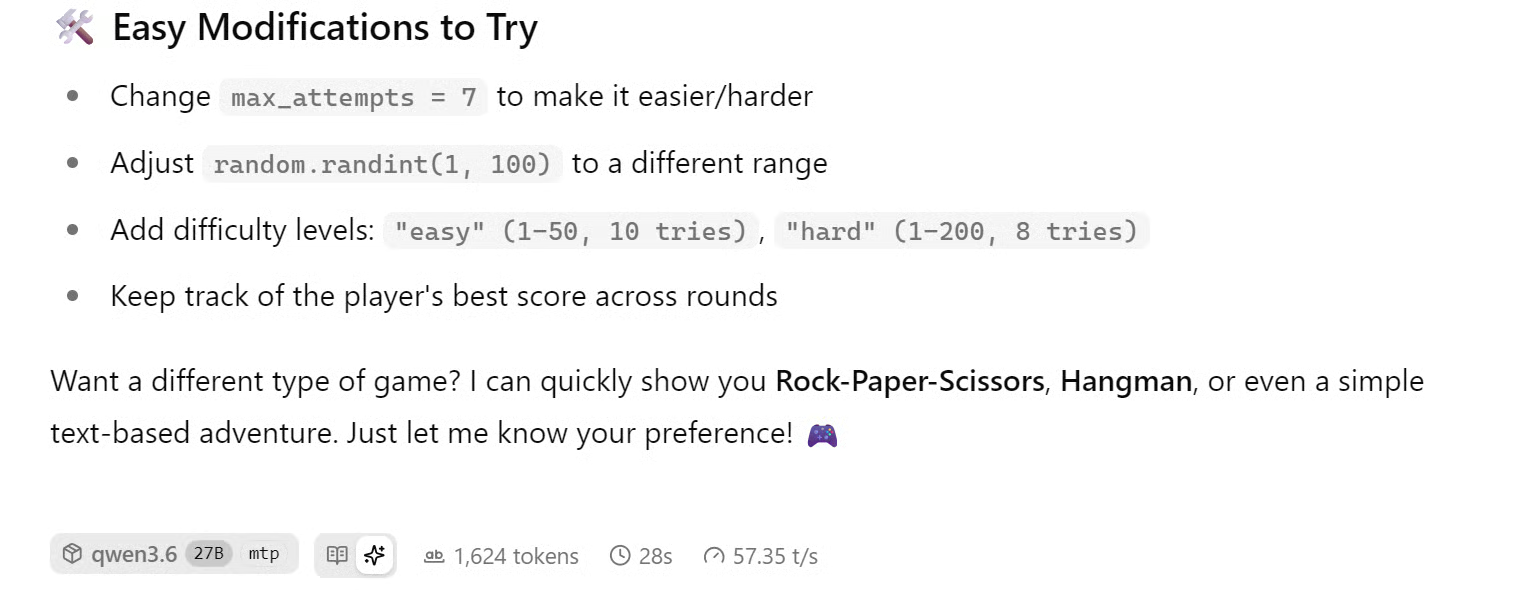

For a more complex prompt, such as asking the model to build a simple game in Python, the speed was slightly lower but still much faster than the non-MTP baseline. In that test, the model generated at around 56–61 tokens/sec, which is still a strong result for a 27B model on an RTX 3090.

Overall, enabling MTP improved Qwen3.6 27B from around 38 tokens/sec to 65 tokens/sec on the RunPod RTX 3090 setup. That gives a 1.71x speedup, or around 71% higher throughput, without changing the hardware or switching to a smaller model.
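The headline figures are simple ratios, and it is worth checking them against the individual measurements. The short sketch below reproduces the arithmetic using only numbers reported in this guide. The function names are my own.

```python
def speedup(baseline_tps: float, new_tps: float) -> float:
    """How many times faster the new run is than the baseline."""
    return new_tps / baseline_tps


def percent_gain(baseline_tps: float, new_tps: float) -> float:
    """Throughput increase over the baseline, in percent."""
    return (new_tps / baseline_tps - 1.0) * 100.0


# Rounded headline numbers: 38 -> 65 tokens/sec.
print(f"{speedup(38, 65):.2f}x")       # 1.71x
print(f"{percent_gain(38, 65):.0f}%")  # 71%

# Same comparison against the measured 38.86 tokens/sec baseline.
for label, tps in [("simple, low", 65), ("simple, high", 67),
                   ("complex, low", 56), ("complex, high", 61)]:
    print(f"{label}: {speedup(38.86, tps):.2f}x")
```

Against the measured baseline of 38.86 tokens/sec, the simple prompt works out to about 1.67x to 1.72x and the more complex prompt to about 1.44x to 1.57x, so the 1.71x headline sits at the upper end of what was observed.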

7. Recommendation: Further Speed Optimization with TurboQuant

The benchmark in this guide uses the original llama.cpp MTP setup, without adding TurboQuant, custom patches, or other runtime-level optimizations. This keeps the test simple, reproducible, and focused on the speed gain from enabling MTP alone.

To push performance further, the next optimization to explore is MTP and TurboQuant together. MTP improves throughput by allowing the model to accept multiple predicted tokens, while TurboQuant helps reduce KV-cache memory pressure during inference.

This can be especially useful for larger models, long-context prompts, and GPUs like the RTX 3090, where memory bandwidth and VRAM can become limiting factors.

This is why some r/LocalLLaMA community results report higher tokens/sec than this guide. Those setups often combine MTP with TurboQuant, patched builds, different KV-cache settings, or faster GPUs. Since this tutorial focuses on a clean MTP-only benchmark, TurboQuant should be treated as the recommended next experiment rather than part of the current setup.

Final Thoughts

Recently, I have been following posts in the LocalLLaMA Reddit community, and it is amazing to see how far local LLM inference has come. People are now running models like Qwen3.6 27B as local coding agents, even on older GPUs with limited VRAM. Some are also running similar setups on Mac systems, and the results are genuinely impressive.

After testing MTP myself, I can see why there is so much excitement. With the same model and the same RTX 3090 setup, enabling Multi-Token Prediction improved generation speed from around 38 tokens/sec to 65 tokens/sec. That is roughly a 1.7x speedup without upgrading the GPU or switching to a smaller model.

This guide focused on a simple and reproducible MTP setup using llama.cpp, but this feels like only the beginning. The next step is to experiment with better GGUF quantization, MTP, TurboQuant, and more tuned runtime settings to see how much further local inference speed can be pushed.

For me, the most exciting part is what this means for local coding agents. You can run powerful models on your own hardware, reduce cost per query, keep your code private, and use an AI coding assistant without depending entirely on internet-based APIs. Local LLMs are becoming faster, more practical, and much more useful than they were even a short time ago.

Multi-Token Prediction FAQs

Do I need a separate draft model for MTP?

No. With Qwen3.6-27B, MTP is built into the model itself, so no second model is required.

How much faster does MTP make the model?

In our RunPod RTX 3090 setup, enabling MTP improved generation speed from ~38 tokens/sec to ~65 tokens/sec, a 1.71x speedup, or ~71% higher throughput.

What is the difference between MTP and speculative decoding?

MTP in llama.cpp is a form of speculative decoding. Draft tokens from the model's own MTP heads are accepted only if they pass verification. The key difference from traditional speculative decoding is that no external draft model is needed.

Can I get even faster speeds beyond what MTP offers?

Yes. Combining MTP with TurboQuant, which reduces KV-cache memory pressure during inference, is the recommended next step for further speed gains, especially on memory-constrained GPUs like the RTX 3090.