Kursus

Long-context models unlock a different way to analyze codebases. Instead of breaking a repository into chunks, embedding them, and retrieving relevant pieces, you can pack an entire small-to-medium repo into a single prompt and let the model reason over it end-to-end.

In this tutorial, I’ll show you how to build a GitHub repository analyzer using NVIDIA Nemotron 3 Super with a Gradio interface. The app takes a public repo URL, clones it, filters out nonessential files, estimates token usage, and packs the remaining content into one structured prompt. This prompt is then sent to Nemotron 3 Super via OpenRouter’s hosted endpoint, which returns structured JSON outputs for tasks like architecture analysis, onboarding guidance, testing gaps, improvement opportunities, and more.

There’s no embedding step, no vector database, and no retrieval loop. Instead, we rely entirely on prompt packing, which works well as long as the repository fits within the model’s practical context window. By the end of this tutorial, you’ll have a Gradio app that can:

- Clone and preprocess a public GitHub repository

- Estimate whether it fits within a token budget

- Run one or multiple repo analysis tasks

- Present structured results through a task-aware UI

The video below shows the project in action (with a truncated loading time for demonstration purposes):

You can access the complete code to this demo here.

What is NVIDIA Nemotron 3 Super?

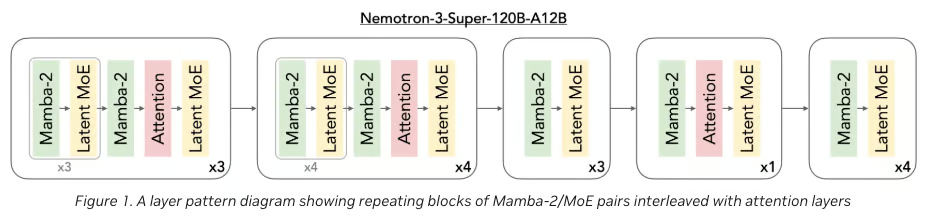

Nemotron 3 Super is NVIDIA’s open reasoning model for agentic and long-context workflows. It uses a hybrid Mamba-Transformer Latent MoE architecture with 120B total parameters and 12B active parameters, includes multi-token prediction (MTP), and is designed for reasoning-heavy tasks such as coding, tool use, and long-context analysis.

NVIDIA positions it specifically for complex multi-agent applications and whole-codebase reasoning.

Figure 1: Nemotron 3 Super Architecture(NVIDIA Blog)

Some core ideas behind Nemotron 3 Super include:

- A hybrid Mamba-Transformer backbone(Figure 1) for long-sequence efficiency and precise retrieval,

- Latent MoE to route tokens through more specialists at the same effective cost,

- MTP to improve generation speed and reasoning quality,

- A native 1M-token context window in NVIDIA’s supported deployment stack.

For this tutorial, we’ll be using OpenRouter’s hosted free endpoint, since both the full and quantized variants of the model typically require at least an H100 GPU for practical inference. The hosted endpoint currently supports a 262K token context window (instead of the full 1M), which is still sufficient for analyzing medium-sized repositories in this demo.

Nemotron 3 family

NVIDIA’s Nemotron 3 family was introduced as an open model family for agentic AI systems, with different sizes aimed at different deployment and reasoning needs, named Nemotron Nano, Super, and Ultra tiers.

At a practical level, you can think of the family like this:

- Nemotron 3 Nano: The lighter model line aimed at efficient targeted tasks and lower-cost inference, focused on agentic workloads and targeted steps inside larger workflows.

- Nemotron 3 Super: The middle “serious reasoning” tier known for denser technical reasoning, complex coding tasks, long-context analysis, and higher-volume agentic systems.

- Nemotron 3 Ultra: The larger family tier for even heavier reasoning/deployment scenarios.

So, Nemotron 3 Super is the right choice here because we want a model that can reason over a whole repository at once, not just solve isolated coding subtasks.

Nemotron 3 Super Tutorial: Build a Repo Analyzer With Gradio

In this section, we’ll build a repo analyzer using Nemotron 3 Super wrapped in a Gradio interface. At a high level, here’s what the final app does:

- Accepts a public GitHub repository URL and an optional branch name.

- Clones the repository locally, filters out nonessential files, and estimates whether the remaining code fits within the available context budget.

- Optionally excludes tests, docs, or notebooks to make the packed repository smaller and easier to analyze.

- Packs the filtered repository into a single long-context prompt and sends it to Nemotron 3 Super through OpenRouter.

- Finally, it returns structured results repo-related tasks.

Let’s build it step by step.

Step 1: Install dependencies

Before building the repo analyzer, we need a minimal environment that can handle three core responsibilities, including UI rendering, repository processing, and model interaction. Since we’re not running Nemotron locally, the setup stays lightweight and focused.

Install everything by running:

!pip -q install gradio openai tiktoken gitpython orjson pandasIn this project, we’ll use:

openaito interact with OpenRouter’s API (Nemotron endpoint)gradioto build the interactive UItiktokento estimate token usage before sending promptsorjsonfor fast JSON parsing from model outputspandasto render structured results as tablesgitpythonto clone and work with repositories

Unlike local LLM setups (which require CUDA, vLLM, or quantization), this approach avoids GPU dependencies entirely and keeps the environment Colab-friendly.

Step 2: Initialize the OpenRouter client

In this step, we set up Nemotron 3 Super via OpenRouter and define a few core constants that control how repositories are processed. Since we are not running the model locally, this client becomes the central interface for sending large, packed prompts and receiving structured analysis outputs.

OPENROUTER_API_KEY = os.environ.get("OPENROUTER_API_KEY", "")

MODEL = "nvidia/nemotron-3-super-120b-a12b:free"

WORKDIR = "/content/repo_ui"

if not OPENROUTER_API_KEY:

raise ValueError("Set OPENROUTER_API_KEY in environment before running.")

client = OpenAI(

base_url="https://openrouter.ai/api/v1",

api_key=OPENROUTER_API_KEY,

)

enc = tiktoken.get_encoding("cl100k_base")At this stage, the application communicates with Nemotron 3 Super through OpenRouter using the openai Python client configured with a custom base_url and an API key stored in OPENROUTER_API_KEY.

The selected model points to OpenRouter’s hosted endpoint, which allows us to run a very large model without needing local GPU infrastructure.

To manage context size, we initialize a tokenizer (tiktoken with cl100k_base) so we can estimate how many tokens the packed repository will consume before sending it to the model. This is because the model has a finite context window.

A couple of practical considerations here:

- OpenRouter’s free endpoint logs both prompts and outputs, so this setup should be used only with public repositories.

- The available context window (~262K tokens) is smaller than the model’s theoretical maximum, so large repositories may require filtering (e.g., excluding tests, docs, or notebooks). Also avoid sending proprietary or sensitive code for safety reasons.

- Since everything is routed through a remote API, this keeps the setup lightweight and avoids dependency on high-memory GPUs like H100s.

Step 3: Repo filters and prompt rules

Now, we need two safeguards in place, i.e, file filtering and prompt grounding.

The first prevents us from wasting context on irrelevant artifacts such as build outputs, notebooks, images, or binary files.

The second ensures that, once the repository is packed, the model stays anchored to the actual code instead of drifting into generic software advice.

Together, these rules make repo analysis both more efficient.

BASE_IGNORE_DIRS = {

".git", ".github", "__pycache__", "node_modules", ".venv", "venv",

"dist", "build", ".mypy_cache", ".pytest_cache", ".idea", ".vscode"

}

IGNORE_SUFFIXES = {

".png", ".jpg", ".jpeg", ".gif", ".webp", ".pdf", ".zip", ".gz",

".tar", ".mp4", ".mov", ".onnx", ".pt", ".bin", ".parquet", ".feather",

".ico", ".svg", ".lock"

}

MAX_FILE_BYTES = 250_000

SYSTEM_PROMPT = """You are a codebase analysis model.

You are given the complete repository contents in one prompt.

Rules:

1. Use only the provided repository content.

2. Do not invent files, modules, libraries, or dependencies.

3. Every substantial claim must cite one or more file paths.

4. Clearly separate direct observations from engineering inferences or recommendations.

5. For modernization or improvement suggestions, explicitly distinguish:

- repo-grounded findings

- general engineering recommendations

6. Return valid JSON only.

7. If uncertain, say so explicitly.

8. For anything involving security, dead code, dependency replacement, or testing gaps,

present findings as likely candidates unless directly proven by repository content.

"""The following three filtering variables control repository preprocessing:

BASE_IGNORE_DIRSexcludes directories that are either irrelevant to code analysis or too noisy to be useful, such as.git,node_modules, build folders, and editor-specific caches.IGNORE_SUFFIXESfilters out file types that are generally not helpful for repo reasoning, including images, archives, videos, model checkpoints, and other artifacts.MAX_FILE_BYTESprovides one more safety check by skipping files that are too large, which helps keep the packed repository within a practical context budget.

The SYSTEM_PROMPT is just as important as the file filters.

Since the model sees the repository as one long prompt, we need to provide clear behavioral constraints to avoid inventing modules, misreading abstractions, or giving overly generic recommendations.

These instructions explicitly force the model to stay grounded in the provided repository, cite file paths for important claims, and separate direct evidence from general judgment.

Step 4: Task schemas and safety guards

Now that we have file filtering and a system prompt in place, the next step is to define a set of repository-wide tasks, each paired with a structured JSON schema.

This gives us two major benefits, such as the model knows exactly what kind of output to produce, and the app can reliably parse and render the results in task-specific tables.

TASK_DEFS: Dict[str, Dict[str, Any]] = {

"Architecture Overview": {

"description": "Explain the architecture, major modules, likely entrypoints, dependencies, and architectural issues.",

"schema": {

"repo_overview": {

"summary": "",

"primary_languages": [],

"likely_entrypoints": []

},

"modules": [

{

"name": "",

"responsibility": "",

"key_files": [],

"evidence_paths": []

}

],

"dependencies": [

{

"from_module": "",

"to_module": "",

"relationship": "",

"evidence_paths": []

}

],

"issues": [

{

"type": "",

"description": "",

"severity": "low|medium|high",

"evidence_paths": []

}

],

"unknowns_and_uncertainties": []

},

},

...

}The TASK_DEFS dictionary acts as the central registry for all analysis modes supported by the app.

Each task contains a natural-language description that tells the model what to do, and a strict schema that defines the expected JSON output.

This is what allows the same packed repository to support very different forms of reasoning, such as architecture mapping, duplication analysis, onboarding guidance, or testing gap detection.

For example, the Architecture Overview asks the model to identify:

- a high-level repository summary,

- major modules,

- dependency relationships,

- and architectural issues.

Other tasks in the full code expand to:

Code Duplicationwhich focuses on repeated logic and related refactor opportunities,Improvement Opportunitieswhich identifies maintainability and modularity gains,- Refactor Roadmap turns findings into a prioritized engineering plan,

Testing Gapshighlights under-tested areas,Onboarding Guideexplains how a new engineer should approach the codebase,Library Modernizationsurfaces outdated or overlapping libraries,Code Quality Hotspotspoints out brittle or bug-prone areas,- and

SecurityorConfig Hygieneflags risky defaults or operational issues.

FULL_REVIEW_TASKS = [

"Architecture Overview",

"Improvement Opportunities",

"Code Quality Hotspots",

"Testing Gaps",

"Refactor Roadmap",

]

TASK_CHOICES = [

"Architecture Overview",

"Code Duplication",

"Improvement Opportunities",

"Refactor Roadmap",

"Testing Gaps",

"Onboarding Guide",

"Library Modernization",

"Code Quality Hotspots",

"Security / Config Hygiene",

"Full Review",

]

def safe_str(value: Any) -> str:

if value is None:

return ""

if isinstance(value, str):

return value.strip()

return str(value).strip()The FULL_REVIEW_TASKS list defines a bundled multi-step review.

Instead of running every possible task, it runs a curated subset that gives a broad engineering view of the repository. TASK_CHOICES is then used directly in the Gradio dropdown so the user can pick either a single task or the bundled review.

The last part, safe_str(), solves a surprisingly important stability issue. During development, one common failure mode was:

'NoneType' object has no attribute 'strip'This can happen when:

- a Gradio input field returns

None, - a task value is missing,

- a model response has empty content,

- or a parser receives an unexpected null value.

By normalizing all such values through safe_str() function, we make the app much more robust.

Step 5: Repo cloning and packing

Before we can send anything to the model, we need to turn a GitHub repository into a single structured prompt.

This step handles the full ingestion pipeline including, cloning the repo, filtering out irrelevant files, extracting only usable text, and packing everything into a format that the model can consume in one shot.

Unlike traditional RAG pipelines, we’re not chunking or embedding. Instead, we’re compressing the entire repository into a single long-context input, so being selective and structured here is critical for both performance and quality.

def clone_repo(repo_url: str, branch: str = "main") -> str:

repo_url = safe_str(repo_url)

branch = safe_str(branch)

if not repo_url:

raise ValueError("Repository URL is empty.")

if os.path.exists(WORKDIR):

shutil.rmtree(WORKDIR)

cmd = ["git", "clone", "--depth", "1"]

if branch:

cmd += ["--branch", branch]

cmd += [repo_url, WORKDIR]

subprocess.run(cmd, check=True, capture_output=True, text=True)

return WORKDIR

def is_probably_text(path: Path) -> bool:

try:

with open(path, "rb") as f:

chunk = f.read(2048)

if b"\x00" in chunk:

return False

chunk.decode("utf-8")

return True

except Exception:

return False

def iter_repo_files(root: str, exclude_tests: bool, exclude_docs: bool, exclude_notebooks: bool):

root = Path(root)

for path in root.rglob("*"):

if not path.is_file():

continue

rel = path.relative_to(root)

parts = set(rel.parts)

rel_str = str(rel).lower()

if any(part in BASE_IGNORE_DIRS for part in rel.parts):

continue

if exclude_tests and ("test" in parts or "tests" in parts or "/tests/" in f"/{rel_str}/"):

continue

if exclude_docs and ("docs" in parts or rel_str.endswith(".md") or rel_str.endswith(".rst")):

continue

if exclude_notebooks and rel_str.endswith(".ipynb"):

continue

if path.suffix.lower() in IGNORE_SUFFIXES:

continue

try:

if path.stat().st_size > MAX_FILE_BYTES:

continue

except Exception:

continue

if not is_probably_text(path):

continue

yield path

def repo_tree_string(root: str, exclude_tests: bool, exclude_docs: bool, exclude_notebooks: bool) -> str:

root = Path(root)

lines: List[str] = []

for path in sorted(root.rglob("*")):

rel = path.relative_to(root)

rel_str = str(rel).lower()

parts = set(rel.parts)

if any(part in BASE_IGNORE_DIRS for part in rel.parts):

continue

if path.is_file() and path.suffix.lower() in IGNORE_SUFFIXES:

continue

if exclude_tests and ("test" in parts or "tests" in parts or "/tests/" in f"/{rel_str}/"):

continue

if exclude_docs and ("docs" in parts or rel_str.endswith(".md") or rel_str.endswith(".rst")):

continue

if exclude_notebooks and rel_str.endswith(".ipynb"):

continue

lines.append(f"{rel}/" if path.is_dir() else str(rel))

return "\n".join(lines)

def pack_repo(root: str, exclude_tests: bool, exclude_docs: bool, exclude_notebooks: bool):

root = Path(root)

tree = repo_tree_string(root, exclude_tests, exclude_docs, exclude_notebooks)

files: List[Tuple[str, int, str]] = []

total_tokens = len(enc.encode(tree))

for path in iter_repo_files(root, exclude_tests, exclude_docs, exclude_notebooks):

rel = path.relative_to(root)

try:

content = path.read_text(encoding="utf-8", errors="ignore")

except Exception:

continue

block = f"\n=== FILE START: {rel} ===\n{content}\n=== FILE END: {rel} ===\n"

tok = len(enc.encode(block))

files.append((str(rel), tok, block))

total_tokens += tok

return tree, files, total_tokens

def build_repo_context(tree: str, files: List[Tuple[str, int, str]]) -> str:

joined_files = "".join(block for _, _, block in files)

return f"""=== REPO TREE START ===

{tree}

=== REPO TREE END ===

=== REPOSITORY FILES START ===

{joined_files}

=== REPOSITORY FILES END ===

"""This step defines the full repository ingestion and packing pipeline, but each function has a particular responsibility:

clone_repo()function: This function clones a GitHub repository into a temporary working directory using a shallow clone (--depth 1) to avoid pulling full history. Inputs like repo URL and branch are normalized usingsafe_str()to prevent runtime errors. The output is a local path (WORKDIR) that the rest of the pipeline operates on.iter_repo_files()function: This function acts as the main filtering layer. It recursively scans the repository and yields only high-signal files. It excludes system directories, unsupported file types, oversized files, and optionally filters out tests, documentation, and notebooks.is_probably_text()function: This is a lightweight safety check used during filtering. It reads a small chunk of each file and verifies the absence of null bytes (which usually indicates binary content) and successful UTF-8 decoding. Only files that pass this check are considered valid text and included in the final dataset.repo_tree_string()function: This function builds a string representation of the repository structure. It builds a directory tree (applying the same filtering rules) and outputs a list of directories and files which are used in the prompt so the model gets a high-level understanding of the codebase layout before reading file contents.pack_repo()function: This is the core packing function. It iterates over all filtered files, reads their contents, and wraps each file inside explicit markers like=== FILE START ===and=== FILE END ===. It also estimates token usage usingtiktokenand accumulates a total token count.build_repo_context()function: This function combines everything into a single structured prompt. It places the repo tree first, followed by all file contents. The model first understands the structure, then reads the actual implementation details.

The net effect is a raw GitHub repository transformed into a single, structured long-context input.

Step 6: Task prompting and JSON parsing

Now that the repository has been filtered and packed into a single long-context input, the next step is to tell the model what kind of analysis to perform and how to format the result.

Instead of sending the same generic instruction for every use case, we generate a task-specific prompt that includes the chosen task name, its description, and the exact JSON schema expected in the response.

def build_task_prompt(task_name: str, repo_context: str) -> str:

task_name = safe_str(task_name)

if not task_name:

raise ValueError("Task name is empty.")

if task_name not in TASK_DEFS:

raise ValueError(f"Unknown task: {task_name}")

task_def = TASK_DEFS[task_name]

return f"""Analyze this full repository.

Task Name:

{task_name}

Task Description:

{task_def["description"]}

Return JSON matching this schema exactly:

{json.dumps(task_def["schema"], indent=2)}

{repo_context}

"""

def try_parse_json(text: str) -> Dict[str, Any]:

text = safe_str(text)

if not text:

raise ValueError("Model returned empty text.")

try:

return orjson.loads(text)

except Exception:

start = text.find("{")

end = text.rfind("}")

if start != -1 and end != -1 and end > start:

return orjson.loads(text[start:end+1])

raise ValueError("Could not parse model output as JSON.")The above code snippet defines two closely related functions that form a bridge between the packed repository and the task-specific analysis output.

build_task_prompt()function: This function creates the final prompt which is sent to the model. It first normalizes the task name withsafe_str()so that empty input does not break the pipeline. It then validates if the task exists insideTASK_DEFS, which prevents the app from sending unsupported or misspelled task names. Once the task is validated, the function retrieves that task’s description and JSON schema, and embeds both into the final prompt alongside the full packed repository context.

try_parse_json()function: This function handles the model’s output after inference. In practice, long-context models sometimes wrap JSON with extra text, formatting artifacts, or partial explanatory content. To make the app more robust, thetry_parse_json()function first attempts direct parsing withorjson.loads(). By combining strict prompting with tolerant parsing, the app remains much more stable.

In the next step, we’ll connect this prompting logic to the model call itself and start generating structured repository analysis results.

Step 7: Model inference

At this stage, we run the analysis by sending the prompt to Nemotron 3 Super via OpenRouter.

Instead of chunking or retrieving parts of the repo, we send the entire filtered codebase in a single request and let the model reason globally across files, modules, and dependencies.

def call_model(prompt: str, max_tokens: int = 5000) -> str:

prompt = safe_str(prompt)

if not prompt:

raise ValueError("Prompt is empty.")

resp = client.chat.completions.create(

model=MODEL,

messages=[

{"role": "system", "content": SYSTEM_PROMPT},

{"role": "user", "content": prompt},

],

temperature=0.2,

max_tokens=max_tokens,

extra_headers={

"HTTP-Referer": "https://colab.research.google.com",

"X-Title": "Whole Repo Analysis Demo"

}

)

if not getattr(resp, "choices", None):

raise ValueError("Model response has no choices.")

message = getattr(resp.choices[0], "message", None)

if message is None:

raise ValueError("Model response has no message.")

content = getattr(message, "content", None)

content = safe_str(content)

if not content:

raise ValueError("Model returned empty content.")

return contentThe call_model() function is responsible for executing the actual model call and extracting the response. Here is the complete pipeline:

- Input validation: The function starts by normalizing the prompt which prevents sending invalid requests to the API.

- Model request construction: The request uses the OpenAI-compatible client with OpenRouter as the backend. The payload includes a system prompt that enforces grounding, JSON output, and evidence-based reasoning, and a user message containing the full task prompt and repository context. The temperature is set to

0.2, which keeps outputs deterministic and themax_tokensparameter limits the response size, ensuring the model focuses on structured output.

- Response validation: It checks if choices exist in the response and verifies that a message object is present. It also extracts content and normalizes it. If any of these are missing, the function raises an error.

- Empty response handling: The final guard ensures that even if the API call succeeds, an empty string does not propagate downstream. This directly prevents issues like "Model returned empty content" and downstream JSON parsing failures.

Finally, we can move to the model inference part next and understand how we run analysis via OpenRouter hosted model.

Step 8: Helper functions

What we need next are a few small helper functions that make the UI and output handlingeasy.

def to_df(items, columns=None):

if items is None:

return pd.DataFrame(columns=columns or [])

if isinstance(items, dict):

return pd.DataFrame([items])

if not items:

return pd.DataFrame(columns=columns or [])

return pd.DataFrame(items)

def get_tasks_to_run(task: str) -> List[str]:

task = safe_str(task)

if not task:

raise ValueError("Task is empty.")

return FULL_REVIEW_TASKS if task == "Full Review" else [task]

def visible_for_task(task: str) -> Dict[str, bool]:

task = safe_str(task)

if task == "Full Review":

return {

"modules": True,

"deps": True,

"issues": True,

"dups": False,

"refactor": False,

"improvements": True,

"roadmap": True,

"testing": True,

"onboarding": False,

"libs": False,

"hotspots": True,

"security": False,

}

return {

"modules": task == "Architecture Overview",

"deps": task == "Architecture Overview",

"issues": task == "Architecture Overview",

"dups": task == "Code Duplication",

"refactor": task == "Code Duplication",

"improvements": task == "Improvement Opportunities",

"roadmap": task == "Refactor Roadmap",

"testing": task == "Testing Gaps",

"onboarding": task == "Onboarding Guide",

"libs": task == "Library Modernization",

"hotspots": task == "Code Quality Hotspots",

"security": task == "Security / Config Hygiene",

}

def render_markdown_summary(repo_url: str, total_tokens: int, files, results: Dict[str, Dict[str, Any]]) -> str:

repo_url = safe_str(repo_url)

lines = [

"# Whole-Repo Analysis Report",

f"**Repository:** {repo_url}",

f"**Files included:** {len(files)}",

f"**Estimated input tokens:** {total_tokens}",

"",

]

if "Architecture Overview" in results:

overview = results["Architecture Overview"].get("repo_overview", {})

lines += [

"## Architecture",

overview.get("summary", "No summary returned."),

"",

f"**Primary languages:** {', '.join(overview.get('primary_languages', [])) or 'N/A'}",

f"**Likely entrypoints:** {', '.join(overview.get('likely_entrypoints', [])) or 'N/A'}",

"",

]

if "Improvement Opportunities" in results:

lines += [

"## Improvement Opportunities",

f"- Identified: {len(results['Improvement Opportunities'].get('improvement_opportunities', []))}",

"",

]

if "Code Quality Hotspots" in results:

lines += [

"## Code Quality Hotspots",

f"- Identified: {len(results['Code Quality Hotspots'].get('quality_hotspots', []))}",

"",

]

if "Testing Gaps" in results:

lines += [

"## Testing Gaps",

f"- Identified: {len(results['Testing Gaps'].get('testing_gaps', []))}",

"",

]

if "Code Duplication" in results:

dup = results["Code Duplication"]

lines += [

"## Duplication & Refactor Summary",

f"- Duplication candidates: {len(dup.get('duplication_candidates', []))}",

f"- Design smells: {len(dup.get('design_smells', []))}",

f"- High risk files: {len(dup.get('high_risk_files', []))}",

f"- Refactor recommendations: {len(dup.get('refactor_recommendations', []))}",

"",

]

if "Library Modernization" in results:

lines += [

"## Library Modernization",

f"- Recommendations: {len(results['Library Modernization'].get('library_recommendations', []))}",

"",

]

if "Security / Config Hygiene" in results:

lines += [

"## Security / Config Hygiene",

f"- Findings: {len(results['Security / Config Hygiene'].get('security_and_config_findings', []))}",

"",

]

if "Refactor Roadmap" in results:

lines += [

"## Refactor Roadmap",

f"- Planned items: {len(results['Refactor Roadmap'].get('refactor_roadmap', []))}",

"",

]

if "Onboarding Guide" in results:

onboarding = results["Onboarding Guide"].get("onboarding_guide", {})

lines += [

"## Onboarding Guide",

onboarding.get("summary", "No summary returned."),

"",

f"**Recommended reading order:** {', '.join(onboarding.get('recommended_reading_order', [])) or 'N/A'}",

f"**Entrypoints:** {', '.join(onboarding.get('entrypoints', [])) or 'N/A'}",

"",

]

return "\n".join(lines)

def hidden_update():

return gr.update(value=pd.DataFrame(), visible=False)

def visible_update(df):

return gr.update(value=df, visible=True)

def empty_outputs(stats, status_message):

return (

status_message,

"",

*all_hidden(),

"{}",

json.dumps(stats, indent=2),

)This step defines a small set of helper functions, but together they handle most of the app’s presentation logic.

to_df()function: This function converts model outputs into apandasDataFrame so they can be rendered cleanly in Gradio tables.get_tasks_to_run()function: This function decides whether the app should run a single task or the bundledFull Review. If the selected task is "Full Review", it expands into the predefined list of review tasks. Otherwise, it simply returns a one-item list with the selected task.visible_for_task()function: This function controls which report tables should actually be shown in the UI. For example, if the user selectsOnboarding Guide, there is no benefit to display duplication or security tables.render_markdown_summary()function: Instead of dumping raw JSON directly, this function generates a readable summary with repository metadata, token count, and task-specific highlights.hidden_update()andvisible_update()functions: These are small Gradio helpers used to control component state. Thehidden_update()function clears a table and hides it, whilevisible_update()fills a table with data and makes it visible. This pattern is important because Gradio expects a return value for every output component, and without explicit resets.empty_outputs()function: This function handles early exits and error states. If the repo is too large, the task is invalid, or something else fails, the app still needs to return valid outputs for every UI component.

Together, these helper functions do not change the core model logic, but they make up a usable application.

Step 9: End-to-end repository analysis

This function orchestrates the full workflow. It validates inputs, prepares the repository, runs one or more analysis tasks, and returns structured outputs to the Gradio UI.

def analyze_repo(repo_url, branch, task, token_budget, exclude_tests, exclude_docs, exclude_notebooks):

repo_url = safe_str(repo_url)

branch = safe_str(branch)

task = safe_str(task)

try:

if not repo_url:

stats = {"repo_url": "", "branch": branch}

return empty_outputs(stats, "Error: Repository URL is empty.")

if not task:

stats = {"repo_url": repo_url, "branch": branch}

return empty_outputs(stats, "Error: Task is empty.")

repo_path = clone_repo(repo_url, branch)

tree, files, total_tokens = pack_repo(repo_path, exclude_tests, exclude_docs, exclude_notebooks)

stats = {

"repo_url": repo_url,

"branch": branch,

"files_included": len(files),

"estimated_input_tokens": total_tokens,

"token_budget": token_budget,

"fits_budget": total_tokens <= token_budget,

"sample_files": [f[0] for f in files[:30]],

"tasks_requested": get_tasks_to_run(task),

}

if total_tokens > token_budget:

msg = (

f"Repo too large for current budget.\n\n"

f"Estimated tokens: {total_tokens}\n"

f"Budget: {token_budget}\n\n"

f"Try excluding tests/docs/notebooks or use a smaller repo."

)

return empty_outputs(stats, msg)

repo_context = build_repo_context(tree, files)

tasks_to_run = get_tasks_to_run(task)

results: Dict[str, Dict[str, Any]] = {}

for task_name in tasks_to_run:

prompt = build_task_prompt(task_name, repo_context)

raw_text = call_model(prompt)

results[task_name] = try_parse_json(raw_text)

report_md = render_markdown_summary(repo_url, total_tokens, files, results)

vis = visible_for_task(task)

arch_json = results.get("Architecture Overview", {})

dup_json = results.get("Code Duplication", {})

imp_json = results.get("Improvement Opportunities", {})

roadmap_json = results.get("Refactor Roadmap", {})

testing_json = results.get("Testing Gaps", {})

onboarding_json = results.get("Onboarding Guide", {})

lib_json = results.get("Library Modernization", {})

hotspot_json = results.get("Code Quality Hotspots", {})

security_json = results.get("Security / Config Hygiene", {})

modules_df = to_df(arch_json.get("modules", []), ["name", "responsibility", "key_files", "evidence_paths"])

deps_df = to_df(arch_json.get("dependencies", []), ["from_module", "to_module", "relationship", "evidence_paths"])

issues_df = to_df(arch_json.get("issues", []), ["type", "description", "severity", "evidence_paths"])

dups_df = to_df(dup_json.get("duplication_candidates", []), ["summary", "files_involved", "why_it_looks_duplicate", "confidence"])

refactor_df = to_df(dup_json.get("refactor_recommendations", []), ["title", "description", "target_files", "expected_benefit"])

improvements_df = to_df(imp_json.get("improvement_opportunities", []), ["title", "description", "why_it_matters", "affected_files", "suggested_change", "expected_impact", "effort", "evidence_paths"])

roadmap_df = to_df(roadmap_json.get("refactor_roadmap", []), ["priority", "title", "description", "target_files", "impact", "effort", "why_now"])

testing_df = to_df(testing_json.get("testing_gaps", []), ["module_or_file", "why_it_needs_tests", "likely_missing_cases", "recommended_test_type", "evidence_paths"])

onboarding_df = to_df(onboarding_json.get("onboarding_guide", {}), ["summary", "recommended_reading_order", "entrypoints", "important_directories", "debugging_start_points", "common_confusion_points"])

libs_df = to_df(lib_json.get("library_recommendations", []), ["current_library_or_pattern", "where_used", "suggested_alternative", "why_better", "migration_difficulty", "confidence", "evidence_paths", "recommendation_type"])

hotspots_df = to_df(hotspot_json.get("quality_hotspots", []), ["file_or_module", "risk_level", "why_it_is_a_hotspot", "evidence_paths", "recommended_cleanup"])

security_df = to_df(security_json.get("security_and_config_findings", []), ["title", "severity", "description", "affected_files", "evidence_paths", "recommended_fix", "confidence"])

status = (

f"Done.\n\n"

f"Files included: {len(files)}\n"

f"Estimated input tokens: {total_tokens}\n"

f"Tasks run: {', '.join(tasks_to_run)}"

)

return (

status,

report_md,

visible_update(modules_df) if vis["modules"] else hidden_update(),

visible_update(deps_df) if vis["deps"] else hidden_update(),

visible_update(issues_df) if vis["issues"] else hidden_update(),

visible_update(dups_df) if vis["dups"] else hidden_update(),

visible_update(refactor_df) if vis["refactor"] else hidden_update(),

visible_update(improvements_df) if vis["improvements"] else hidden_update(),

visible_update(roadmap_df) if vis["roadmap"] else hidden_update(),

visible_update(testing_df) if vis["testing"] else hidden_update(),

visible_update(onboarding_df) if vis["onboarding"] else hidden_update(),

visible_update(libs_df) if vis["libs"] else hidden_update(),

visible_update(hotspots_df) if vis["hotspots"] else hidden_update(),

visible_update(security_df) if vis["security"] else hidden_update(),

json.dumps(results, indent=2),

json.dumps(stats, indent=2),

)

except subprocess.CalledProcessError as e:

stats = {"repo_url": repo_url, "branch": branch}

stderr = safe_str(getattr(e, "stderr", "")) or safe_str(e)

return empty_outputs(stats, f"Git clone failed:\n{stderr}")

except Exception as e:

stats = {"repo_url": repo_url, "branch": branch}

return empty_outputs(stats, f"Error: {safe_str(e)}")This function orchestrates the entire pipeline, from raw repo to structured output.

- Input handling and repo preparation: The repository is cloned and packed into a structured prompt, along with token estimation. If the repo exceeds the token budget, the function exits early.

- Task execution loop (core logic): For each requested task, the function builds a task-specific prompt, then calls the model and parses structured JSON output. All results are then stored in a single

resultsdictionary. - Structuring outputs for UI: The raw JSON outputs are converted into DataFrames using

to_df()and mapped to UI components. Visibility is controlled viavisible_for_task(), ensuring only relevant tables are shown for the selected task. - Reporting and error handling: A markdown report summarizes key findings, while full JSON outputs remain available for debugging. All failures (clone issues, empty responses, parsing errors) are safely handled through

empty_outputs()to keep the UI stable.

Step 10: Build the Gradio UI

Finally, we now wrap everything in a simple Gradio interface.

with gr.Blocks(title="Nemotron Repo Analyzer") as demo:

gr.Markdown("# Nemotron Repo Analyzer")

# gr.Markdown("Analyze a public GitHub repository without RAG using a single packed prompt.")

with gr.Tab("Analyze"):

with gr.Row():

repo_url = gr.Textbox(

label="GitHub Repo URL",

value="https://github.com/AashiDutt/Nemotron-3-Nano.git"

)

branch = gr.Textbox(label="Branch", value="main")

with gr.Row():

task = gr.Dropdown(

choices=TASK_CHOICES,

value="Full Review",

label="Task"

)

token_budget = gr.Slider(

minimum=40000,

maximum=180000,

step=5000,

value=120000,

label="Token Budget"

)

with gr.Row():

exclude_tests = gr.Checkbox(value=False, label="Exclude tests")

exclude_docs = gr.Checkbox(value=False, label="Exclude docs / markdown")

exclude_notebooks = gr.Checkbox(value=True, label="Exclude notebooks")

analyze_btn = gr.Button("Analyze Repo", variant="primary")

status = gr.Textbox(label="Status", lines=7)

with gr.Tab("Report"):

report_md = gr.Markdown()

modules_df = gr.Dataframe(label="Modules", visible=False)

deps_df = gr.Dataframe(label="Dependencies", visible=False)

issues_df = gr.Dataframe(label="Architecture Issues", visible=False)

dups_df = gr.Dataframe(label="Duplication Candidates", visible=False)

refactor_df = gr.Dataframe(label="Refactor Recommendations", visible=False)

improvements_df = gr.Dataframe(label="Improvement Opportunities", visible=False)

roadmap_df = gr.Dataframe(label="Refactor Roadmap", visible=False)

testing_df = gr.Dataframe(label="Testing Gaps", visible=False)

onboarding_df = gr.Dataframe(label="Onboarding Guide", visible=False)

libs_df = gr.Dataframe(label="Library Modernization", visible=False)

hotspots_df = gr.Dataframe(label="Code Quality Hotspots", visible=False)

security_df = gr.Dataframe(label="Security / Config Hygiene", visible=False)

with gr.Tab("Raw JSON"):

all_results_json_box = gr.Code(label="All Task Results JSON", language="json")

stats_box = gr.Code(label="Run Stats", language="json")

analyze_btn.click(

analyze_repo,

inputs=[repo_url, branch, task, token_budget, exclude_tests, exclude_docs, exclude_notebooks],

outputs=[

status,

report_md,

modules_df,

deps_df,

issues_df,

dups_df,

refactor_df,

improvements_df,

roadmap_df,

testing_df,

onboarding_df,

libs_df,

hotspots_df,

security_df,

all_results_json_box,

stats_box,

]

)

demo.launch(debug=True, share=True)The gr.Blocks() container defines the overall layout of the app, while the top-level Markdown sets the title and context. The interface is split into three tabs to keep the workflow structured.

- The Analyze tab acts as the input layer. It collects the repository URL and branch, lets the user select a task, and configures the token budget. It also provides toggles to exclude tests, docs, or notebooks, which helps control prompt size and noise. The “Analyze Repo” button triggers the full pipeline, and the status textbox displays progress, errors, or completion details.

- The Report tab is the primary output layer. It renders a Markdown summary for a quick overview, followed by structured DataFrame tables for inspection.

- The Raw JSON tab serves as a debugging and transparency layer. It exposes the full structured outputs returned by the model along with run metadata. This is useful for validating outputs, debugging failures, or exporting results into downstream systems.

This connects user inputs directly to the analyze_repo() function and maps its outputs back to the UI components. The ordering of outputs is critical here, since each returned value is routed to a specific UI element.

Finally, the app is launched using:

demo.launch(debug=True, share=True)The debug=True flag helps surface runtime errors during development, while share=True creates a public link, making it easy to share the app without deployment.

Conclusion

In this tutorial, I demonstrated how to build a repo codebase analyzer using NVIDIA Nemotron 3 Super, OpenRouter, and Gradio.

Instead of relying on chunking, embeddings, and retrieval, the app clones a public GitHub repository, filters and packs the codebase into a single prompt, and asks the model to reason over the entire repository at once.

This whole-context approach is useful for tasks that need a global view of the codebase, such as architecture explanation, onboarding guidance, testing gap analysis, improvement opportunities, and refactor planning.

From here, you could extend the project in several ways:

- Add a “preview fit” button that estimates token count before analysis,

- Add partial-failure handling for Full Review,

- Support downloadable markdown reports,

- Or compare repo prompt packing against a RAG baseline on the same repository.

FAQs

Does this tutorial use the full 1M-token Nemotron 3 Super context window?

No. NVIDIA documents up to 1M tokens for official Nemotron 3 Super deployments, but OpenRouter’s hosted free endpoint currently exposes 262,144 context, which is good enough for this tutorial.

Why not run Nemotron 3 Super locally?

Nemotron 3 Super is a large reasoning model (about 85Gb via Ollama) and is much easier to use through a hosted endpoint for a tutorial like this.

Which Nemotron task is most reliable for this app?

In practice, the strongest tasks are:

- Architecture Overview

- Improvement Opportunities

- Testing Gaps

- Onboarding Guide

- Refactor Roadmap

Those benefit the most from seeing the full repository at once.

Should I use Full Review by default?

For smaller public repos, yes. For larger repos, single-task analysis is usually more stable.

I am a Google Developers Expert in ML(Gen AI), a Kaggle 3x Expert, and a Women Techmakers Ambassador with 3+ years of experience in tech. I co-founded a health-tech startup in 2020 and am pursuing a master's in computer science at Georgia Tech, specializing in machine learning.