Most LLM agents restart from zero on every run. They forget the user's name, their last conversation, and the file they were working on. Anything user-specific has to be re-established turn by turn.

This tutorial adds persistent memory to an agent so the next run starts where the last one ended. The memory layer is Supermemory, a hosted API that stores per-user facts and returns them in one call. You'll build a personal exercise trainer in Python. The agent logs workouts, suggests the next session, and remembers preferences and recent lifts across separate runs of the script.

The stack is small. Supermemory holds the memory. The OpenAI Agents SDK runs the agent loop. The trainer consists of two Python files and a pyproject.toml file.

You'll need Python 3.10 or newer, an OpenAI account, a Supermemory account, and basic comfort with the command line.

What Is Supermemory?

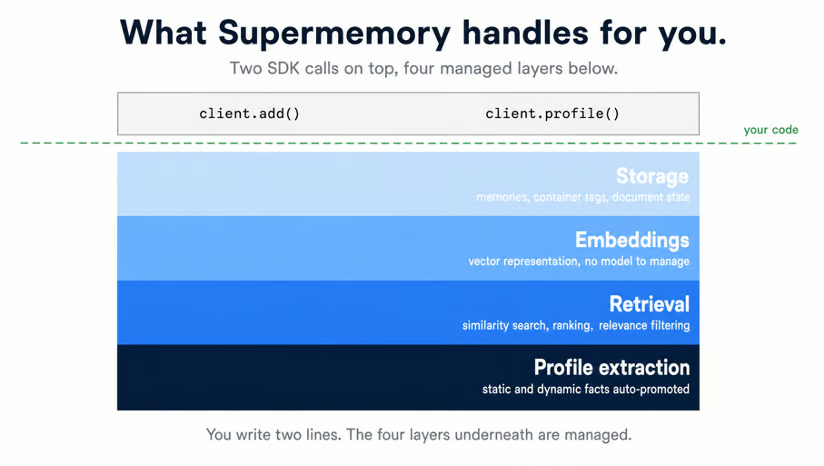

Supermemory can best be described as an AI memory API for agents. When you hand Supermemory strings about your user, it later returns a compact view of who that user is and what they've recently been doing. Embedding, indexing, and retrieval all run inside Supermemory, so your agent code stays small.

The LongMemEval benchmark tests how well a memory system answers questions over a long conversation history. Supermemory recalls 81.6% of the right facts. Zep, the next-best system, scores 71.2%, a 10-point gap that translates to roughly 1 extra correct answer per 10 user questions. The open-source repository has 22k+ GitHub stars, one more signal of real use.

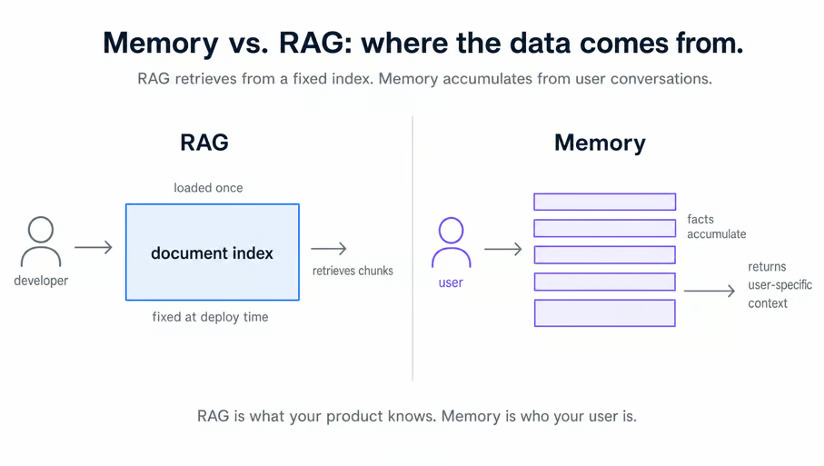

Memory vs RAG

Most readers reaching for an agent memory tool have used RAG before. It helps to place Supermemory next to it. RAG and memory solve different problems, and they often live in the same agent.

A RAG system points to a document corpus that the developer prepares once. Product manuals, support articles, internal docs. The corpus is loaded at deploy time, queried at runtime, and rarely changes. The agent uses it to answer questions that the product itself knows the answer to.

A memory system points at the user. Supermemory writes user-specific facts as the agent talks to that user, and the store grows with every conversation. The agent uses it to answer questions that only the user can answer, like preferences, history, and recent activity.

In a real product, the two run side by side. RAG over a company knowledge base answers "What's our refund policy?" Supermemory over the user's history answers "What was my bench press last week?" Same agent, two data stores, two jobs.

User profiles: static and dynamic facts

Supermemory's main idea is the user profile. Every log gets sorted into two buckets: static facts that rarely change, and dynamic facts about current activity. Recurring patterns get promoted to static. Recent activity stays in dynamic.

When the agent reads the profile, one call returns both buckets plus the matching memory chunks.

The split matters because static and dynamic facts answer different questions about the same user:

| Static facts | Dynamic facts |
| --- | --- |
| Trains at home with dumbbells and a pull-up bar | Current focus: upper body strength |
| Left knee injury, no deep squats | Last bench: 4 sets of 5 reps at 185 lb |
| Wants to add 20 lb to bench by year-end | Working on grease-the-groove pull-ups this week |
| Trains evenings only, never mornings | Ran 5k in 28 minutes yesterday |

Read row one. The static side says how the user trains: at home, with the equipment they own. That doesn't change week to week. The dynamic side says what they're working on right now: upper body, this cycle.

A workout suggester needs both. The static side rules out gym-only exercises, the dynamic side picks today's session.
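How the two buckets feed one decision can be sketched in plain Python. Everything in this sketch is hypothetical: the Exercise type, the small catalogue, and the pick_session helper are illustrative names, not part of Supermemory or of the trainer built later. The static facts act as filters, and the dynamic focus selects from whatever survives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exercise:
    name: str
    focus: str            # e.g. "upper body", "legs"
    needs_gym: bool       # requires machines or gym-only equipment
    deep_knee_bend: bool  # loads the knee in deep flexion


# Hypothetical catalogue; a real app would load this from somewhere else.
CATALOGUE = [
    Exercise("dumbbell bench press", "upper body", False, False),
    Exercise("cable fly", "upper body", True, False),
    Exercise("pull-up", "upper body", False, False),
    Exercise("deep goblet squat", "legs", False, True),
]


def pick_session(trains_at_home: bool, avoid_deep_knee_bend: bool, focus: str) -> list[str]:
    """Static facts filter the catalogue; the dynamic focus picks from what is left."""
    allowed = [
        ex for ex in CATALOGUE
        if not (trains_at_home and ex.needs_gym)
        and not (avoid_deep_knee_bend and ex.deep_knee_bend)
    ]
    return [ex.name for ex in allowed if ex.focus == focus]


print(pick_session(trains_at_home=True, avoid_deep_knee_bend=True, focus="upper body"))
```

In the trainer itself, the LLM does this filtering from the text of the profile rather than from typed fields, so the sketch only shows the logic the two buckets make possible.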

Behind that profile, Supermemory does four jobs you'd otherwise build yourself. It stores raw memories, embeds every chunk, runs similarity search at read time, and extracts profile facts from logged content. None of the four shows up in your code.

Every memory is tagged with a string the developer chooses. Every read passes the same string back to limit what comes out. The trainer hardcodes one tag because one user is enough to show what the profile does. Real apps compute the tag from the authenticated user, for example from the user ID carried in their JWT.
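One way to compute a tag from an authenticated user is sketched below. The container_tag_for helper is hypothetical, and hashing the ID is a design choice made here, not something Supermemory requires. The sketch also assumes tags are safest when limited to letters, digits, underscores, and hyphens; check the Supermemory documentation for the actual constraints.

```python
import hashlib
import re


def container_tag_for(user_id: str, prefix: str = "user") -> str:
    """Derive a stable, opaque tag from an authenticated user's ID (hypothetical helper)."""
    cleaned = user_id.strip()
    if not cleaned:
        raise ValueError("user_id must not be empty")
    # Hash the ID so emails or other personal data never appear in the tag itself.
    digest = hashlib.sha256(cleaned.encode("utf-8")).hexdigest()[:16]
    # Assumption: restrict the prefix to a conservative character set.
    safe_prefix = re.sub(r"[^A-Za-z0-9_-]", "_", prefix)
    return f"{safe_prefix}_{digest}"


tag = container_tag_for("sarah@example.com")
print(tag)
```

The same input always yields the same tag, so every write and read for that user lands in the same scope without the tag revealing who the user is.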

Setting Up Your Supermemory Environment

The trainer needs two API keys (Supermemory and OpenAI) and a Python project with three dependencies. A quick round-trip script proves both keys work before any agent code goes near them.

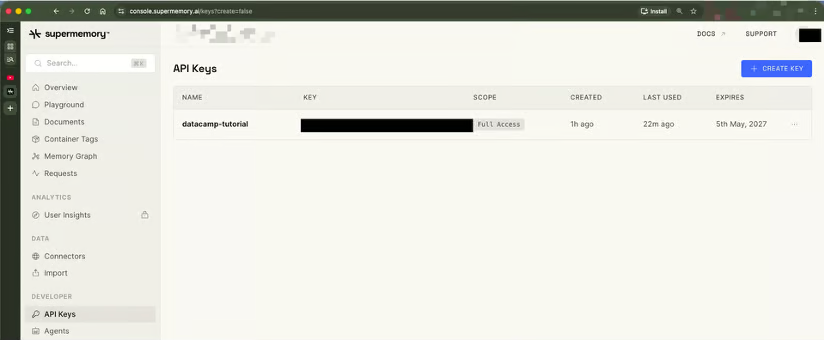

Getting your API keys

The Supermemory API key lives at console.supermemory.ai, NOT app.supermemory.ai. The app subdomain is the consumer memory product (for saving notes, browsing your space). It has no API key page. Skip it and go straight to the console.

On console.supermemory.ai:

- Sign in.
- Click API Keys in the sidebar.
- Click Create API Key.
- Name it (the trainer demo uses datacamp-tutorial).
- Copy the resulting key. It starts with sm_.

You also need an OpenAI key for the agent's LLM calls. Grab one at platform.openai.com/api-keys if you don't have one already.

Create a .env file in your project root with both keys. Don't commit it.

```
SUPERMEMORY_API_KEY=sm_your_key_here
OPENAI_API_KEY=sk-your_key_here
```

Supermemory's free tier covers this tutorial without entering payment info. Exact limits live on the pricing page.

Installing dependencies

The tutorial uses uv for project setup and execution. If you don't have uv, install it once with the one-liner from astral.sh/uv.

Initialize the project:

```bash
uv init supermemory-trainer
cd supermemory-trainer
```

Delete the auto-generated README.md that uv init adds. The auto-generated hello.py will get overwritten in the next step, so leave it for now.

Add three dependencies:

- supermemory==3.37.0 is the memory client, pinned to the version verified for this tutorial.
- openai-agents is the OpenAI Agents SDK. The package name is hyphenated, the import path is agents.
- python-dotenv reads the .env file you just created.

```bash
uv add supermemory==3.37.0 openai-agents python-dotenv
```

The resulting pyproject.toml:

```toml
[project]
name = "supermemory-trainer"
version = "0.1.0"
description = "Personal exercise trainer agent built with Supermemory and the OpenAI Agents SDK."
requires-python = ">=3.10"
dependencies = [
    "openai-agents>=0.10.2",
    "python-dotenv>=1.2.1",
    "supermemory==3.37.0",
]
```

Verifying your setup

Before writing any agent code, watch Supermemory do its job once on a single sentence. The script below sends one fact to Supermemory, waits for the pipeline, then reads the profile back out. If this runs cleanly, the keys work, and the SDK is reachable. The output also gives you a first look at what Supermemory does with raw text.

Open hello.py in the project root and replace the auto-generated body with the imports and a write call:

```python
import time

from dotenv import load_dotenv
from supermemory import Supermemory

load_dotenv()

client = Supermemory()
USER_ID = "demo_warmup"

response = client.add(
    content="The user is learning Supermemory by building a personal trainer agent.",
    container_tag=USER_ID,
)
print(f"client.add() -> id={response.id} status={response.status}")
```

load_dotenv() reads the API key from .env into the environment before Supermemory() is constructed. The client picks up SUPERMEMORY_API_KEY automatically. The container_tag="demo_warmup" value scopes this single fact to a throwaway user.

Now add the wait and the read at the bottom of the same file:

```python
print("Waiting 20 seconds for processing...")
time.sleep(20)

prof = client.profile(container_tag=USER_ID, q="learning")
print(f"profile.static ({len(prof.profile.static)}): {prof.profile.static}")
print(f"profile.dynamic ({len(prof.profile.dynamic)}): {prof.profile.dynamic}")
print(f"search_results.results ({len(prof.search_results.results)}):")
for r in prof.search_results.results[:3]:
    print(f" - {r['memory']} (similarity={r['similarity']:.3f})")
```

The 20-second sleep gives Supermemory's embed-and-extract pipeline time to process the new memory. Without it, the read returns nothing, and the script looks broken when it isn't.

Run the file:

```bash
uv run python hello.py
```

Expected output:

```
client.add() -> id=zNLsJBrY1PZupAeZ3Qn6EL status=queued
Waiting 20 seconds for processing...
profile.static (0): []
profile.dynamic (1): ['Building a personal trainer agent to learn Supermemory.']
search_results.results (1):
 - Building a personal trainer agent to learn Supermemory. (similarity=0.650)
```

Three details matter in this output. client.add() returns immediately with status="queued", since Supermemory processes documents asynchronously. The 20-second wait covers the embed-and-extract pipeline. By the time the read runs, the raw sentence is one searchable memory chunk.
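A fixed sleep is the simplest way to wait, but it is either longer than needed or too short on a slow day. A polling loop is one alternative: it returns as soon as the read comes back non-empty and gives up after a deadline. The sketch below is a hypothetical helper, not part of the Supermemory SDK. The names wait_for, fetch, and is_ready are introduced here, and the demo uses a fake fetch function so it runs without network access.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_for(
    fetch: Callable[[], T],
    is_ready: Callable[[T], bool],
    timeout: float = 30.0,
    interval: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[T]:
    """Call fetch() until is_ready(result) is true or the timeout elapses.

    Returns the first ready result, or None if the deadline passes first.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = fetch()
        if is_ready(result):
            return result
        # Stop if another sleep would push past the deadline.
        if time.monotonic() + interval > deadline:
            return None
        sleep(interval)


# Demo with a fake fetch that only returns data on the third call.
calls = {"n": 0}


def fake_fetch() -> list[str]:
    calls["n"] += 1
    return ["Building a personal trainer agent"] if calls["n"] >= 3 else []


facts = wait_for(fake_fetch, is_ready=bool, timeout=5.0, interval=0.01)
print(calls["n"], facts)
```

In hello.py, fetch could be a lambda around client.profile(container_tag=USER_ID, q="learning"), with is_ready checking that profile.dynamic or search_results.results is non-empty. Each poll is a real API request, so a multi-second interval is the safer default.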

The interesting line is profile.dynamic. The input was the sentence "The user is learning Supermemory by building a personal trainer agent." The output is the dynamic fact 'Building a personal trainer agent to learn Supermemory.' Supermemory rewrote a full third-person sentence into a compact fact about the user, with the subject dropped. That's the profile extractor doing its job.

profile.static is an empty list. Static facts consolidate slowly, after a handful of related logs accumulate, so a single warm-up write doesn't produce one. The trainer's suggestion tool plans for this and treats static as a bonus rather than a guarantee.

Building a Supermemory Agent: Personal Exercise Trainer in Python

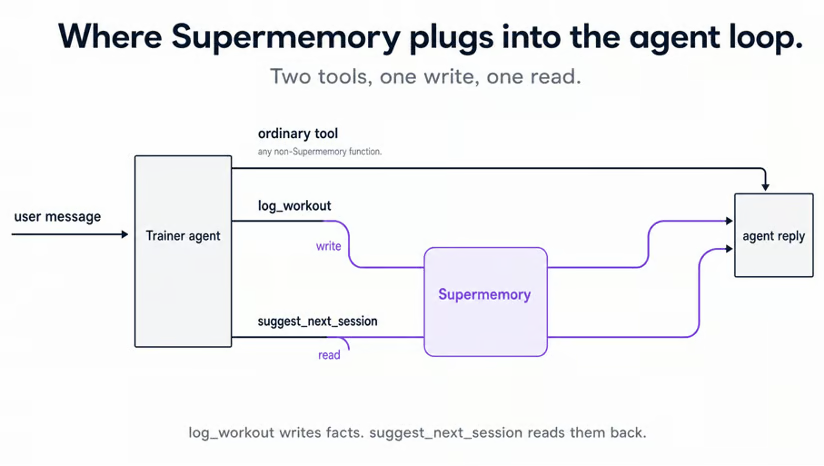

The trainer wraps client.add() and client.profile() in two agent tools, so reads and writes happen automatically as the user chats. Workout history fits memory well. Equipment, injuries, and recent lifts don't live in the LLM's training data, and they accumulate session by session.

Project structure and the agent

The trainer is small enough that the whole project fits in two Python files plus the pyproject.toml you already have:

```
supermemory-trainer/
├── .env              # your real keys (gitignored)
├── .env.example      # placeholders, committed
├── .gitignore
├── .python-version
├── main.py           # agent definition, system prompt, REPL loop
├── pyproject.toml
└── tools.py          # log_workout and suggest_next_session
```

tools.py holds the two memory-backed tools you'll write next. log_workout writes a workout to Supermemory via client.add(). suggest_next_session reads the user's profile via client.profile(). main.py imports both and wires the agent.

Most of main.py is OpenAI Agents SDK boilerplate. One sentence in the system prompt does the Supermemory work: every fact about the user must come back through tool calls. The agent is told it has no memory of its own. That single rule is what makes the trainer memory-backed.

Open main.py and start with the imports and the system prompt:

```python
import asyncio

from agents import Agent, Runner, SQLiteSession

from tools import log_workout, suggest_next_session

SYSTEM_PROMPT = """You are a personal exercise trainer who logs the user's
workouts and recommends what to do next.

You have no memory of the user's history on your own. Every fact about the
user lives in Supermemory and reaches you only through tool calls.

Two rules, no exceptions:

1. Whenever the user reports completing a workout, call log_workout immediately, before responding. Extract the exercise, sets, reps, weight, and any notes from what they said. If a value is missing, ask one short follow-up question instead of guessing. After logging, confirm in one short sentence and stop. Do NOT recommend the next session unless the user asks for one.

2. When the user explicitly asks what to do next (or asks for a recommendation, suggestion, or plan), call suggest_next_session first. Never recommend from your own training data. The tool returns the user's
recent activity, stable preferences, and matching past sessions. Reference those facts directly in your reply.

Keep replies concise (2-4 sentences). Be specific: name the exercise, sets, reps, and weight. Honor any injuries or equipment constraints the tool surfaces.
"""
```

Both rules in the system prompt route the model through Supermemory.

Rule 1 forces a log_workout write whenever the user reports a workout, so every workout reaches the memory store. Rule 2 forces a suggest_next_session read before any recommendation, so every recommendation is grounded in what Supermemory knows.

Skip those rules, and the agent answers from its training data, which defeats the point of a memory layer.

Now define the agent and the chat loop in the same file:

```python
def build_agent() -> Agent:
    return Agent(
        name="Trainer",
        instructions=SYSTEM_PROMPT,
        tools=[log_workout, suggest_next_session],
        model="gpt-5",
    )


async def chat() -> None:
    agent = build_agent()
    session = SQLiteSession(session_id="trainer-cli")
    print("Trainer ready. Type a message, or 'exit' to quit.\n")

    while True:
        try:
            message = input("You: ").strip()
        except (EOFError, KeyboardInterrupt):
            print()
            break
        if not message:
            continue
        if message.lower() in {"exit", "quit"}:
            break

        result = await Runner.run(agent, message, session=session)
        print(f"\nTrainer: {result.final_output}\n")


if __name__ == "__main__":
    asyncio.run(chat())
```

Two lines in that block are worth naming. tools=[log_workout, suggest_next_session] registers the two memory-backed tools. The @function_tool decorator on each one (in tools.py) tells the SDK they're callable. Without the decorator, the agent has no tools at runtime, even though the construction call succeeds.

SQLiteSession(session_id="trainer-cli") keeps short-term turn history inside the running Python process. Supermemory keeps long-term user facts across processes. Killing the Python process drops the SQLite session, but the Supermemory data stays.

Important: Run main.py as a script, not in a Jupyter cell, since Jupyter's event loop conflicts with asyncio.run(). The synchronous Supermemory() client works inside async tool functions because the Agents SDK runs tools in a thread pool. For more on the SDK itself, see the OpenAI Agents SDK tutorial.

Writing the workout-logging tool

log_workout is the write side of the agent's memory. The function takes structured arguments from the agent: exercise name, sets, reps, weight, and optional notes. It turns those into one short English sentence and hands the sentence to Supermemory through client.add(). The embed-and-extract pipeline runs inside Supermemory after that and needs nothing from the trainer.

Open tools.py and start with the imports and a single shared client:

```python
from agents import function_tool
from dotenv import load_dotenv
from supermemory import Supermemory

load_dotenv()

USER_ID = "demo_user"
client = Supermemory()
```

load_dotenv() runs at import time so SUPERMEMORY_API_KEY is in the environment before Supermemory() is constructed. Construct the client before loading the env, and you get an unauthenticated client. The first call then returns a confusing 401. Both tool functions in this file share that one client and the one USER_ID constant.

Add the logging tool below the client:

```python
@function_tool
def log_workout(
    exercise: str,
    sets: int,
    reps: int,
    weight: float,
    notes: str = "",
) -> str:
    """Log a completed workout to the user's memory.

    Args:
        exercise: Name of the exercise.
        sets: Number of sets performed.
        reps: Number of reps per set.
        weight: Weight in pounds. Pass 0 for bodyweight or cardio.
        notes: Optional notes about the session.
    """
    print(f"[log_workout] {exercise=} {sets=} {reps=} {weight=} {notes=}")

    content = f"Performed {exercise}: {sets} sets of {reps} reps at {weight} lbs."
    if notes:
        content += f" Notes: {notes}"

    response = client.add(content=content, container_tag=USER_ID)
    print(f"[log_workout] -> id={response.id} status={response.status}")

    return f"Logged {exercise} ({sets}x{reps} @ {weight} lb)."
```

The @function_tool docstring is what the LLM sees when it decides whether to call the tool. The Args: block maps to per-parameter descriptions. Both are part of the agent's contract with the function.

The tool sends a plain sentence to client.add(), not JSON. Supermemory's profile extractor reads natural language and infers facts from it. JSON technically works, but the extraction quality drops because the model has no narrative to summarize. "Performed bench press: 4 sets of 5 reps at 185.0 lbs" gives the extractor a clean sentence to work with.
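The sentence-building step can be pulled into a pure function, which makes the wording easy to test without calling Supermemory. The sketch below is a hypothetical variant, not the tutorial's code: workout_sentence is a name introduced here, and unlike log_workout above it drops a trailing ".0" from the weight and omits the load entirely when the weight is 0.

```python
def workout_sentence(exercise: str, sets: int, reps: int, weight: float, notes: str = "") -> str:
    """Render a workout as one plain-English sentence for a memory write."""
    # ":g" drops a trailing ".0", so 185.0 renders as "185" and 82.5 stays "82.5".
    load = f" at {weight:g} lb" if weight > 0 else ""
    sentence = f"Performed {exercise}: {sets} sets of {reps} reps{load}."
    cleaned_notes = notes.strip()
    if cleaned_notes:
        sentence += f" Notes: {cleaned_notes}"
    return sentence


print(workout_sentence("bench press", 4, 5, 185.0))
print(workout_sentence("pull-up", 3, 8, 0, notes="felt strong"))
```

Whether the cleaner wording changes extraction quality is untested here. The point is only that the text handed to client.add() is ordinary string formatting that you control.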

The two print() calls write each tool invocation to the terminal: first the parsed args, then the response.

```
[log_workout] exercise='bench press' sets=4 reps=5 weight=185.0 notes=''
[log_workout] -> id=xY7AK3qLzBPx5Vd2HnRf1M status=queued
```

The status="queued" value matches what the warm-up script returned earlier. The raw log is stored, but client.profile() won't return it as a search result until the pipeline finishes. You'll add a verification step later that waits for that to settle.

Writing the workout-suggestion tool

suggest_next_session is the read side, and it's where the static-and-dynamic split pays off. One client.profile(container_tag=USER_ID, q=focus) call returns three views of the user in a single round trip.

Stable preferences come back as profile.static, current activity as profile.dynamic, and the closest matching past memories as search_results.results. The tool's job is to flatten those three views into one block of context that the agent can quote.

After a few workouts, the tool produces output like this:

```
Recent activity:
- Trains at home instead of a gym
- Performed deadlift: 3 sets of 5 reps at 225.0 lbs
- Performed 5k run in 26 minutes
- Reports no knee pain during bench press
- Performed bench press: 4 sets of 5 reps at 185.0 lbs
Closest matching past entries:
- Trains at home instead of a gym
- Performed deadlift: 3 sets of 5 reps at 225.0 lbs
- Performed bench press: 4 sets of 5 reps at 185.0 lbs
- Performed 5k run in 26 minutes
- Reports no knee pain during bench press
```

The agent reads that block and writes a recommendation grounded in the user's actual history. Without Supermemory's profile, you would build the same context yourself. That means a separate semantic search, your own profile store, and merging the results. The single client.profile() call replaces all three.

Add this to tools.py below log_workout:

```python
@function_tool
def suggest_next_session(focus: str) -> str:
    """Fetch the user's training history and preferences for a given focus.

    Returns a context string the agent can use to recommend the next session.
    The agent is responsible for the actual recommendation. This tool only
    surfaces what Supermemory knows about the user.

    Args:
        focus: What the user wants to train next (e.g. "upper body", "legs",
            "cardio", "today"). Drives semantic search against past logs.
    """
    print(f"[suggest_next_session] focus={focus!r}")

    profile = client.profile(container_tag=USER_ID, q=focus)
    static_facts = profile.profile.static
    dynamic_facts = profile.profile.dynamic
    matches = profile.search_results.results

    print(
        f"[suggest_next_session] static={len(static_facts)} "
        f"dynamic={len(dynamic_facts)} matches={len(matches)}"
    )

    sections = []
    if static_facts:
        sections.append("Stable preferences and constraints:")
        sections.extend(f"- {fact}" for fact in static_facts)
    if dynamic_facts:
        sections.append("Recent activity:")
        sections.extend(f"- {fact}" for fact in dynamic_facts)
    if matches:
        sections.append("Closest matching past entries:")
        for r in matches[:5]:
            sections.append(f"- {r['memory']}")

    if not sections:
        return (
            "No prior training history found for this user. "
            "Ask the user about their goals, equipment, and recent training."
        )

    return "\n".join(sections)
```

client.profile(container_tag=USER_ID, q=focus) returns a ProfileResponse object. After 5 short logs, the three fields the tool reads look like this:

```python
profile.profile.static          # [] (list[str])
profile.profile.dynamic         # ["Performed bench press: 4 sets of 5 reps at 185.0 lbs", ...]
profile.search_results.results  # [{"memory": "...", "similarity": 0.631, ...}, ...] (list[dict])
```

Each search result is a Python dict, not a Pydantic object. Use r["memory"] for the text and r["similarity"] for the score. The full dict has the following keys:

- id
- memory
- rootMemoryId
- metadata
- updatedAt
- version
- similarity
- filepath
- documents

The r.memory or r.chunk snippet from Supermemory's OpenAI Agents SDK integration page raises AttributeError against supermemory==3.37.0. Use bracket access.

static is empty here, which is why the tool branches on if static_facts:. The dynamic and search_results branches do the real work for the first dozen logs.

Supermemory also applies a default similarity threshold. A fact you mentioned once may not come back for every query. The 5 logs above all returned for q="today", but a more specific query string might return fewer. The if matches: guard handles that without failing.
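The sample tool output earlier lists the same five facts twice, once as recent activity and once as matches. A client-side pass can drop those repeats and skip weak matches before the context reaches the agent. The sketch below is a hypothetical variant of the flattening step: build_context, min_similarity, and the 0.6 cutoff are assumptions made here, applied on top of whatever threshold Supermemory uses. It relies only on the "memory" and "similarity" keys described above.

```python
def build_context(
    static: list[str],
    dynamic: list[str],
    matches: list[dict],
    min_similarity: float = 0.6,  # arbitrary cutoff chosen for this sketch
    max_matches: int = 5,
) -> str:
    """Flatten profile data into one block, skipping weak or repeated matches."""
    seen = {fact.strip().lower() for fact in static + dynamic}
    lines: list[str] = []
    if static:
        lines.append("Stable preferences and constraints:")
        lines.extend(f"- {fact}" for fact in static)
    if dynamic:
        lines.append("Recent activity:")
        lines.extend(f"- {fact}" for fact in dynamic)

    extra: list[str] = []
    for match in matches:
        text = match.get("memory", "")
        key = text.strip().lower()
        # Skip empty entries, facts already listed, and low-similarity hits.
        if not text or key in seen or match.get("similarity", 0.0) < min_similarity:
            continue
        seen.add(key)
        extra.append(f"- {text}")
    if extra:
        lines.append("Additional matching entries:")
        lines.extend(extra[:max_matches])
    return "\n".join(lines)


# Sample data shaped like the results described above (values are illustrative).
context = build_context(
    static=[],
    dynamic=["Trains at home instead of a gym"],
    matches=[
        {"memory": "Trains at home instead of a gym", "similarity": 0.682},
        {"memory": "Performed 5k run in 26 minutes", "similarity": 0.585},
        {"memory": "Performed deadlift: 3 sets of 5 reps at 225.0 lbs", "similarity": 0.643},
    ],
)
print(context)
```

The trade-off is recall. With a 0.6 cutoff the 5k run at 0.585 is dropped, so a cutoff like this is worth tuning against real queries before relying on it.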

Running session 1: logging workouts

Start session 1 and log a few workouts to fill Supermemory with something to read back later. Run the script:

```bash
uv run python main.py
```

Log bench press, then a 5k run, then deadlift, plus one preference statement: "I only train at home, no gym." The agent fires log_workout once per workout, and the tool's print() lines make every call visible in the terminal.

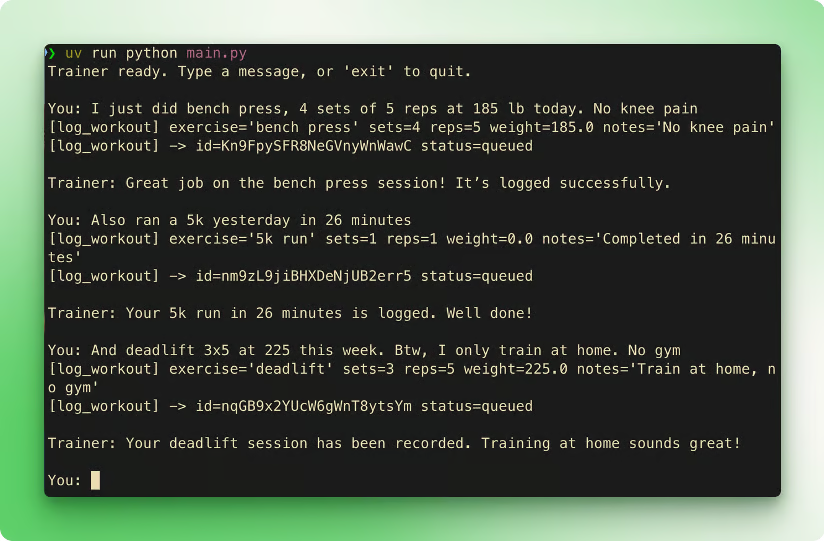

Example output. Your agent's exact phrasing will differ because the model is non-deterministic.

The three status=queued lines are the moment Supermemory takes over. Each one corresponds to a document moving through the embed-and-extract pipeline on Supermemory's side. For short text logs like these, the document becomes searchable through client.profile() within ~12 seconds.

Nothing in the trainer's code waits for that. The agent moves on, and Supermemory finishes the work in the background.

Every log fires exactly one log_workout call, and the agent stops. No proactive recommendations, no extra tool calls, no follow-up suggestions. The first system prompt rule does that work. Without the rule, the agent would suggest a next session after each log, doubling the tool calls.

Type exit to close session 1. The Python process ends, and the SQLiteSession is gone with it. The workout logs and the preference statement now live in Supermemory under container_tag="demo_user", separate from the script that wrote them.

Verifying recall and running session 2

Before session 2, confirm that the facts from session 1 are queryable. Open a fresh Python REPL or save this as a short script:

```python
from dotenv import load_dotenv
from supermemory import Supermemory

load_dotenv()
client = Supermemory()

prof = client.profile(container_tag="demo_user", q="training")
print(f"static ({len(prof.profile.static)}): {prof.profile.static}")
print(f"dynamic ({len(prof.profile.dynamic)}):")
for fact in prof.profile.dynamic:
    print(f" - {fact}")
print(f"matches ({len(prof.search_results.results)}):")
for r in prof.search_results.results[:5]:
    print(f" - {r['memory']} (similarity={r['similarity']:.3f})")
```

Real output captured between the two sessions:

```
static (0): []
dynamic (5):
 - Trains at home instead of a gym
 - Performed deadlift: 3 sets of 5 reps at 225.0 lbs
 - Performed 5k run in 26 minutes
 - Reports no knee pain during bench press
 - Performed bench press: 4 sets of 5 reps at 185.0 lbs
matches (5):
 - Trains at home instead of a gym (similarity=0.682)
 - Performed deadlift: 3 sets of 5 reps at 225.0 lbs (similarity=0.643)
 - Performed bench press: 4 sets of 5 reps at 185.0 lbs (similarity=0.631)
 - Performed 5k run in 26 minutes (similarity=0.585)
 - Reports no knee pain during bench press (similarity=0.585)
```

Look at what Supermemory's extractor produced. The user said, "I only train at home, no gym," once. The extractor turned that into the dynamic fact "Trains at home instead of a gym".

The bench press log included a notes field about no knee pain. The extractor split that single log into two dynamic facts: one for the workout, one for the absence of pain.

Four logs became five normalized dynamic facts plus five matching memory chunks with similarity scores between 0.585 and 0.682. None of that splitting, normalization, or matching ran in the trainer's code. If dynamic is empty for you, wait another 10 seconds and re-run the snippet. The processing queue occasionally spikes.

Now start session 2 in a brand-new process:

```bash
uv run python main.py
```

This is a fresh Python interpreter. No shared memory with session 1. No warm cache. Anything the agent recalls comes from Supermemory.

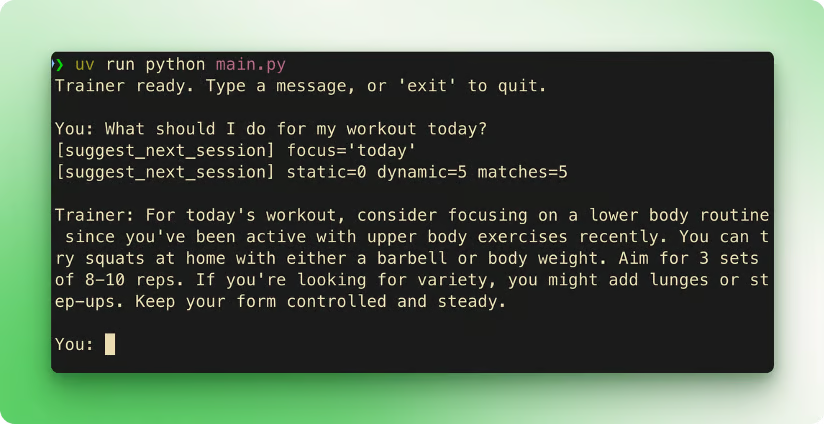

Send one message: "What should I do for my workout today?"

Example output. Same memory store, fresh Python process.

The agent calls suggest_next_session("today"). The tool prints static=0 dynamic=5 matches=5. The captured run replied with a lower-body session at home (squats, lunges, step-ups).

The recommendation lined up with the previous logs because Supermemory's profile told the agent what they were. Bench, deadlift, and a 5k were upper-body or cardio, and the user only trained at home. Both facts came back from the same client.profile() call. Your run will phrase things differently because the model is non-deterministic, but the recall path is the same.

Next Steps for Your Supermemory Agent

The demo is one user, two tools, and a CLI. A real version of the trainer extends in three Supermemory-shaped directions before it touches the agent loop.

Scope memory per real user. The USER_ID = "demo_user" constant works for one person. Production apps compute the tag from the authenticated user's ID, like container_tag="user_sarah" or container_tag=customer_id. Memory between users stays separate because every read passes the tag back. One change in tools.py, no other code moves.

Add more memory-backed tools. Deload weeks, PR tracking, and weekly mobility prompts. Each one is another @function_tool function that calls client.add() for writes and client.profile() for reads against the same container_tag. The shape of the tool stays the same. Only what the agent records and asks for changes.

Handle Supermemory failures. Wrap client.add() and client.profile() in try/except supermemory.APIError so transient failures from Supermemory don't crash the agent. Set per-request timeouts if your agent runs in a constrained environment.
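A retry wrapper is one way to keep transient failures from reaching the user. The sketch below is generic and hypothetical: call_with_retries is a name introduced here, the exception types to retry are passed in by the caller, and the demo uses a fake function that raises ConnectionError so it runs without the Supermemory SDK.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run fn(), retrying on the given exception types with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == attempts:
                raise  # out of attempts: let the caller decide what to tell the user
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("attempts must be at least 1")


# Demo with a fake call that fails twice, then succeeds.
state = {"calls": 0}


def flaky_write() -> str:
    state["calls"] += 1
    if state["calls"] < 3:
        raise ConnectionError("temporary failure")
    return "queued"


result = call_with_retries(flaky_write, retry_on=(ConnectionError,), base_delay=0.01)
print(result, state["calls"])
```

In tools.py, fn would be a lambda around client.add(...) or client.profile(...), and retry_on would hold the supermemory.APIError class named above. Inside a tool it usually makes sense to catch the final error as well and return a short message string, so the agent can tell the user the log did not go through instead of the process crashing.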

The agent-loop side of the work is independent of Supermemory and can change later. Front the CLI with Telegram, Discord, or Slack, so the user texts a workout and the bot calls Runner.run(). Or swap the framework. Supermemory has a LangChain integration if your stack is already on LangChain agents, and the memory code doesn't change.

The static-and-dynamic split also fits other domains.

- Customer support agent: static = known issues and account preferences, dynamic = open tickets and recent contacts.

- Coding agent: static = preferred languages and frameworks, dynamic = current task and recently-touched files.

The split holds whenever the user is the source of truth.

Conclusion

You just built a Python trainer with two tools and persistent memory across processes. client.add() writes workouts. client.profile() reads the user back as static facts, dynamic facts, and semantic matches in one call, all scoped by container_tag. Supermemory does the chunking, embedding, search, and profile extraction that the demo never had to write.

Pair it with RAG, and the same agent answers questions about the user and the product. LLM Agents Explained covers broader agent patterns, and the Associate AI Engineer for Developers track goes further into memory-backed agents.

Supermemory FAQs

What is Supermemory?

Supermemory is a hosted memory API that stores, indexes, and retrieves long-term context for AI agents so they can remember users across sessions.

How is Supermemory different from plain vector databases?

Supermemory adds embeddings, indexing, semantic search, and profile-style fact extraction on top of storage, so you get usable memories back with one API call.

Can Supermemory handle both RAG and user memory?

Yes, it supports document-style RAG over files and URLs as well as user-centric memories, letting the same API power product knowledge and personal history.

Do I need to manage my own embedding models with Supermemory?

No, Supermemory runs the embedding and retrieval pipeline for you; you send raw text or content and query it later without handling models or indexes yourself.

Is there a free tier for trying Supermemory?

Yes, there is a free plan intended for testing and small projects, with monthly token and query limits so you can integrate and experiment before upgrading.

I am a data science content creator with over 2 years of experience and one of the largest followings on Medium. I like to write detailed articles on AI and ML with a bit of a sarcastic style because you've got to do something to make them a bit less dull. I have produced over 130 articles and a DataCamp course to boot, with another one in the making. My content has been seen by over 5 million pairs of eyes, 20k of whom became followers on both Medium and LinkedIn.