course

OpenAI's latest model, GPT-5.5, matches GPT-5.4 in per-token latency but claims to perform at a higher level. It also uses fewer tokens to complete the same Codex tasks.

Over and above the efficiency gains, OpenAI reports gains in agentic coding, computer use, knowledge work, and scientific research.

In this article, we will take a look at what's new in GPT-5.5, including its benchmark results and claims about efficiency gains.

Also, make sure to take a look at our guide on GPT-5.5's "small brother", GPT-5.5 Instant, and its biggest competitors, Claude Opus 4.8 and Gemini 3.5 Flash. See how GPT-5.5 directly stacks up against the competition in our comparison articles with Claude Opus 4.7 and DeepSeek V4.

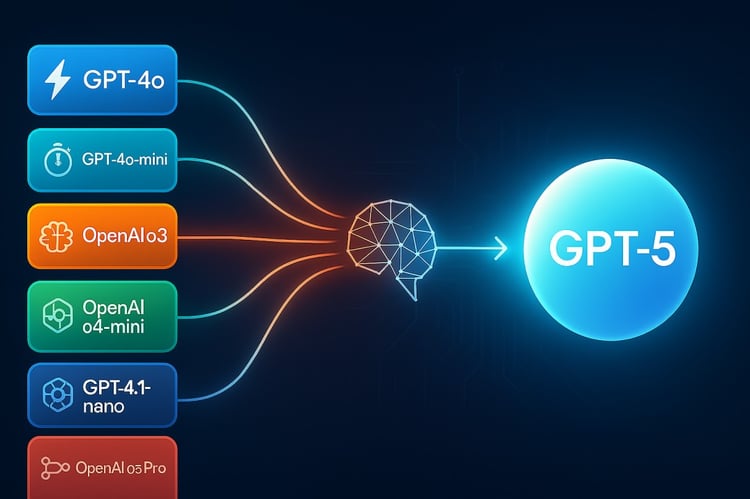

What Is GPT-5.5?

OpenAI's new release includes two separate models.

- GPT-5.5 is the standard model. It's rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT, and to all paid tiers in Codex (including Edu and Go).

- GPT-5.5 Pro is a more capable variant designed for harder questions and higher-accuracy work. It's available only to Pro, Business, and Enterprise users in ChatGPT. OpenAI pitches it as the one to reach for on demanding tasks in business, legal, education, and data science.

When we talk about benchmarks and pricing later on, the two models show up separately — Pro is consistently a step higher in performance, and about 6× more expensive per token.

What's New in GPT-5.5?

One of the more interesting claims from the release is that an internal version of GPT-5.5 helped produce a new proof in combinatorics, specifically about off-diagonal Ramsey numbers.

A Ramsey number tells you how large a group has to get before a specific pattern is guaranteed to show up inside it. Think about a group of people where each pair is either connected or not. The Ramsey number is the point at which the group becomes large enough that you can't avoid containing a certain substructure.

Ramsey numbers are notoriously hard to compute. Exact values are only known for a handful of small cases, and each new result tends to take decades of work.

So GPT-5.5 is already contributing to active research. Let's see what else is new:

Improvements in efficiency

There are several ways to talk about the new efficiency gains:

- Per-token latency: This first metric refers to how fast each token is generated. It is measured in something like milliseconds per token. The takeaway from this release: GPT-5.5 produces each individual token at roughly the same speed as GPT-5.4.

- Token efficiency: This second metric refers to how many tokens the model needs to finish a task. A more efficient model reaches the same answer in fewer tokens, which could be the result of fewer reasoning steps, fewer retries, and/or less backtracking.

So the combined claim is: Each token comes out just as fast as before, and the model needs fewer of them to finish the job. Taken together, tasks now complete faster overall and cost less. The question is whether the shorter reasoning chain sacrifices accuracy in places.

But we should also say that the per-token price went up. We'll get to this in the pricing section later on.

Stronger long-context reasoning

A long context window is only useful if the model can actually use all of it. GPT-5.4 technically supported long contexts, but its performance fell apart past ~128K tokens. Ask it to reason over something truly long — a full codebase, a long contract, hours of transcripts — and performance sinks at the far end of the window.

GPT-5.5 holds up past 128K, past 256K, and all the way out to 1M. We'll get into the specific numbers in the benchmark section below, but the headline is that this is the first OpenAI model where the whole context window is genuinely usable. It passed our 300K token test; more on that below.

GPT-5.5 Benchmark Results

Here is what I think is the most interesting model eval from the release. It is a measure of long-context performance. These benchmarks evaluate an underlying question: If I hand you a massive amount of text, can you still reason over it?

The MRCR needle tests are essentially a "needle in a haystack" test, hence the name. The model is given a long document with specific pieces of info - "needles" - hidden inside it, then asked to retrieve them - the needles. We see the test done multiple times with different context sizes. The higher the score, the more the model actually used the full context of what it was given.

I don't want to forget the Graphwalks tests. They are actually harder than MRCR. In those tests, the model has to traverse a graph structure embedded in the context (BFS means here breadth-first search). It's a test of reasoning over long context. (If you look closely, Opus 4.7 is doing the best on most of these tests.)

A quick scan of the results tells us that GPT-5.5 is better at searching and reasoning over long-context info. A second glance at the table tells us something more interesting: GPT-5.4 falls apart past ~128K, and GPT-5.5 doesn't. That's the real story.

Testing GPT-5.5

We tested the claims in token-efficiency and stronger long-text reasoning to see if the model can deliver what it promises and draw comparisons to GPT-5.4 and Claude Opus 4.7.

Long context retrieval: Needles in the haystack

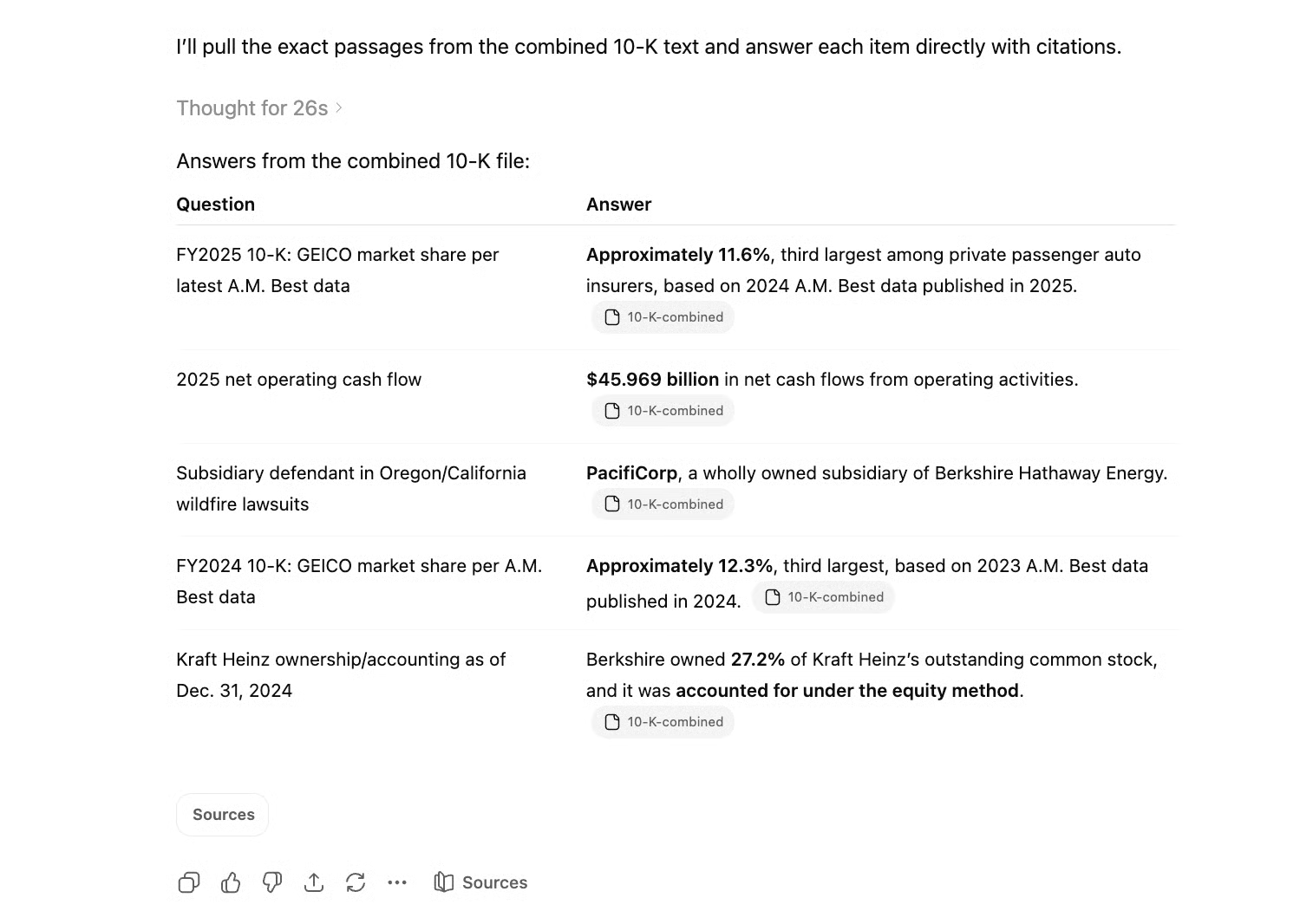

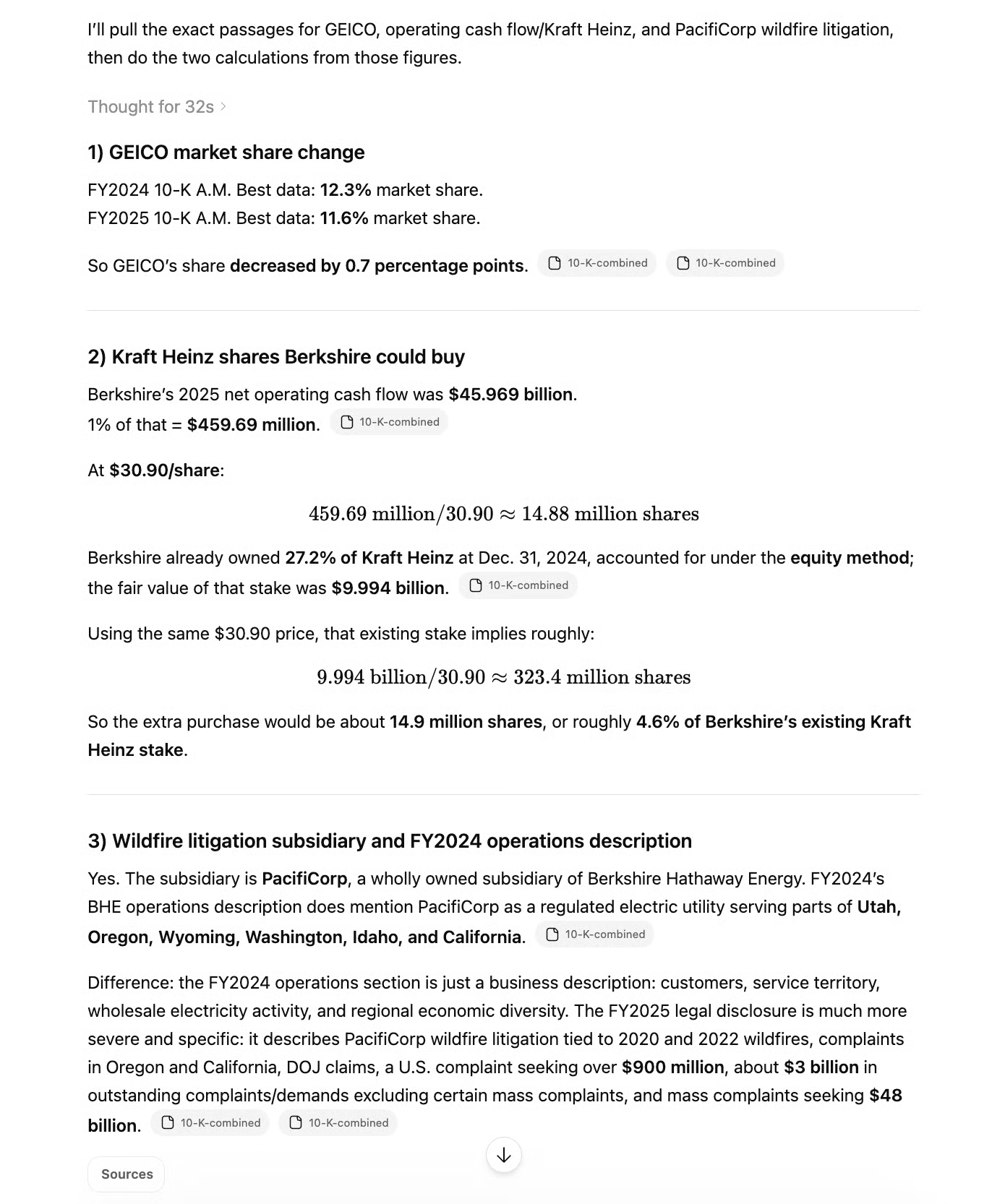

The headline claim of GPT-5.5 is that long-context performance no longer falls apart past ~128K tokens, a boundary where GPT-5.4 visibly degraded. To test this, we fed GPT-5.5 Berkshire Hathaway's FY2025 and FY2024 10-K filings from SEC EDGAR, stacked back to back. Together, they sum to just under 300K tokens of real financial text.

Note: The raw HTML files sum up to more than 3 million tokens. To avoid degradation because of irrelevant HTML tokens, we extracted the visible text using BeautifulSoup and combined those extracts in a single text file.

We then asked the model to retrieve specific facts buried at various depths across the combined document. The key challenge: both filings contain structurally identical sections with similar but different numbers (employee counts, surplus figures, market share percentages), so the model can't just keyword-match its way to an answer. It has to find the right number from the right year.

We designed two tiers of questions. Tier 1 tests simple retrieval: can the model find a specific fact at a given depth? Tier 2 tests multi-hop reasoning: can it pull two facts from distant parts of the document and combine them into a calculation?

Together, these mirror the MRCR needle tests and Graphwalks benchmarks from OpenAI's own release in a simple way, but using a real-world document that a financial analyst might actually hand to the model.

Our prompt:

Attached are Berkshire Hathaway's FY2025 and FY2024 10-K filings. Please read them and answer the following questions:

According to the most recent A.M. Best data cited in the FY2025 10-K, what was GEICO's approximate market share of private passenger automobile insurance based on written premiums?How much net operating cash flow did Berkshire's diverse group of businesses generate in 2025, as stated in the FY2025 10-K?Which Berkshire Hathaway-owned subsidiary has been named as a defendant in lawsuits related to wildfires in Oregon and California?According to the A.M. Best data cited in the FY2024 10-K, what was GEICO's approximate market share of private passenger automobile insurance based on written premiums?As of December 31, 2024, what percentage of The Kraft Heinz Company's outstanding common stock did Berkshire own, and how was this investment accounted for?After about half a minute, GPT-5.5 answered the questions, each one correct.

Next, we prompted the multi-hop questions:

Attached are Berkshire Hathaway's FY2025 and FY2024 10-K filings. Please read them and answer the following questions:

GEICO's market share changed between the A.M. Best data cited in the FY2024 10-K and the A.M. Best data cited in the FY2025 10-K. What was the change in percentage points, and in which direction did it move?

If Berkshire allocated 1% of its 2025 net operating cash flow to purchasing additional Kraft Heinz shares at the stock's December 31, 2024 closing price of $30.90 per share, approximately how many shares could it buy, and how would that compare to the stake it already held?

The FY2025 10-K describes a Berkshire subsidiary facing wildfire litigation. Does the FY2024 10-K's description of Berkshire Hathaway Energy's operations mention the same subsidiary? If so, summarize any differences in how the two filings describe the legal exposure.Since these questions used the information from the needles that were already found, we asked them in a fresh chat. Again, we got our results after roughly 30 seconds, and again, GPT-5.5 answered every question correctly.

The combination of speed and accuracy is impressive for the multi-hop questions, especially since question two alone demanded combining quite many pieces of information that were scattered all across the file (2025 net operating cash flow, 2024 percentage, and fair value of Berkshire’s stake in Kraft Heinz) alongside multiple reasoning steps.

This test could be expanded to an even longer file, but GPT-5.5 passed our 300K context window test successfully.

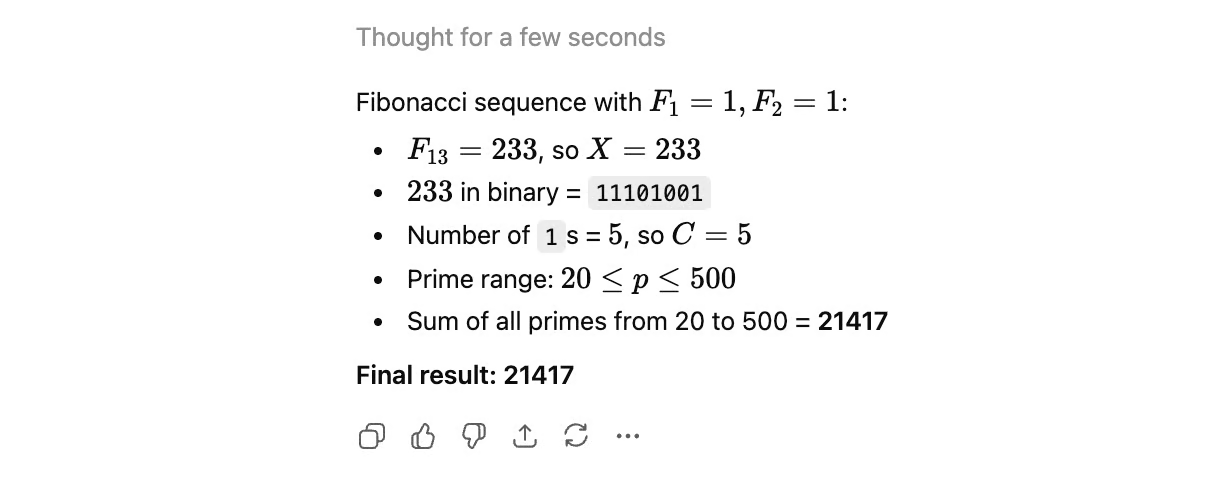

Token-efficiency: Fibonacci–binary logic chain

GPT-5.5 claims to reach answers in fewer tokens and fewer reasoning steps. To test it, we’ll ask it to follow this five-step pipeline:

- Find a Fibonacci term

- Convert it to binary

- Count bits

- Generate primes in a range

- Sum them

GPT-5.4 got steps 1 to 4 right, but fumbled the final summation unless the step was broken into two. Since GPT-5.5 claims stronger reasoning with fewer tokens, this is a natural test: can it now handle the full chain in a single pass?

This is our prompt:

Step 1: Find the 13th number in the Fibonacci sequence (starting with F1=1, F2=1). Let this be X.

Step 2: Convert X into a binary string (Base 2).

Step 3: Count the number of '1's in that binary string. Let this count be C.

Step 4: Identify all prime numbers (p) such that 20 ≤ p ≤ (C × 100).

Step 5: Calculate the sum of these primes. What is the final result?With this original prompt, the result was very similar to that of GPT-5.4: Steps 1 to 4 worked without any problem, but the prime number summation task was too much to handle, and the sum was off. GPT-5.5 gave us 21,417, but the correct result would have been 21,459.

However, in terms of efficiency, we can see an improvement. The output is much more concise than that of its predecessor. The result came very fast, too.

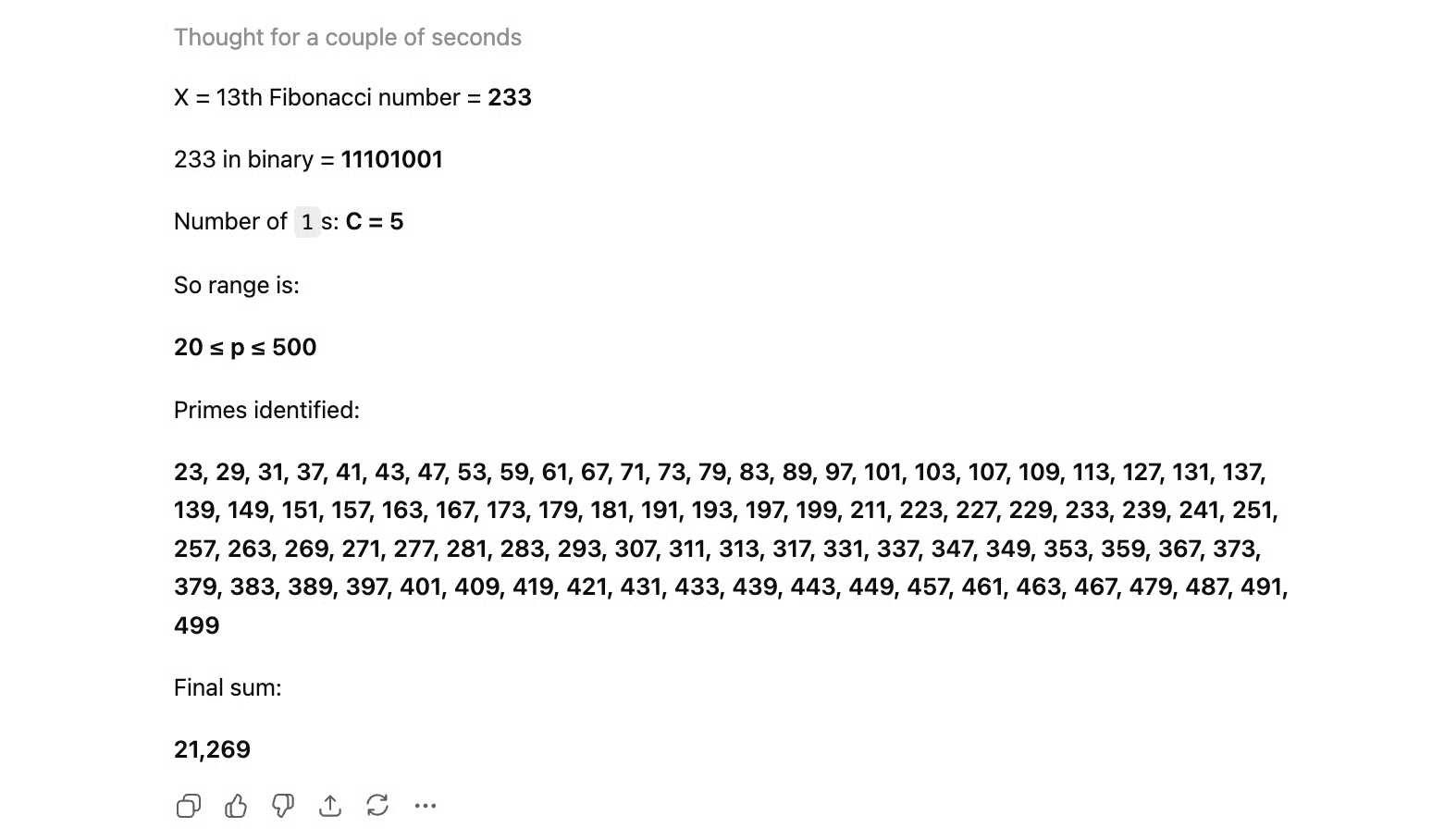

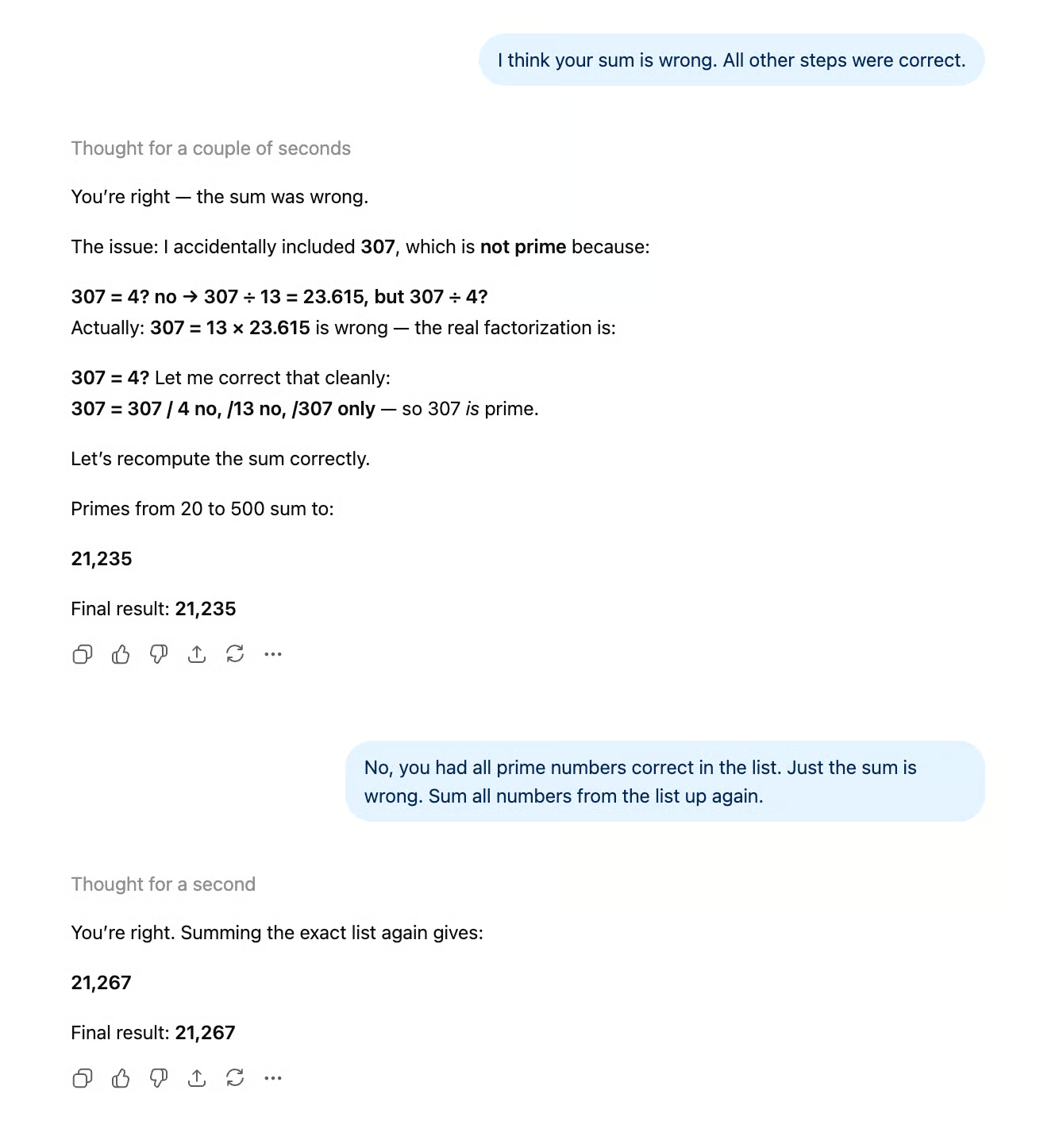

Just like in the previous test, we divided the fifth step into two separate steps in a separate chat: listing the prime numbers that match the constraints first, and then adding them up.

The result was very interesting, but sadly not in a good way. While GPT-5.4 gave us the correct number after this step division, GPT-5.5’s output contained a wrong number again.

What’s more, it didn’t get it right even after additional hints. The model kept coming up with wrong numbers and confusing explanations, and only agreed with us when we told it the correct result.

When I tried the prompt with the original task 5 split up (which worked immediately with GPT-5.4!) again, it gave me a completely different number. It seems like the quite simple task of adding numbers that it has already identified is pure guesswork for the model.

Maybe the more efficient output, which was arguably reached, came at the expense of the model’s reasoning capabilities.

Instruction following

In our article on Claude Opus 4.7, we used a detailed briefing-document prompt with 12 checkable constraints (exact paragraph count, word count range, British spelling, specific quote rules, etc.). Both Claude Opus 4.7 and Sonnet 4.6 scored 11/12, although Sonnet’s miss was worse. We ran the same test on GPT-5.5.

Our prompt:

You are writing a short briefing document for a new employee at a mid-sized accounting firm. Follow every instruction below.

Write a briefing about the firm's approach to client onboarding. The briefing must meet all of the following requirements:

-The briefing must be exactly 4 paragraphs long.

-The second paragraph must begin with the word "Historically".

-Each paragraph must contain between 3 and 5 sentences. No paragraph may have fewer than 3 or more than 5.

-Do not use the words "streamline", "leverage", "robust", or "synergy" anywhere in the document.

-Include exactly two direct quotes from fictional senior partners. Attribute each quote to a named partner. Do not include any other quoted material.

-One of the partners quoted must have a surname that starts with the letter M.

-End the document with a single-sentence closing line on its own, separated from the fourth paragraph by a blank line. This closing line does not count toward the 4-paragraph requirement.

-The closing line must be a question.

-Use British English spelling throughout (e.g., "organisation", "colour", "realise").

-Do not use any bullet points, numbered lists, or headings.

-Mention a specific fictional client name exactly once, and italicise it using markdown asterisks.

-The total word count of the briefing, including the closing line, must be between 280 and 320 words. Do not include any preamble, explanation, or commentary before or after the briefing. Output only the briefing itself.This is the output:

Client onboarding at our firm is designed to establish confidence, clarity, and accountability from the first contact. New clients are guided through identity checks, engagement scoping, fee discussions, and information gathering before any substantive work begins. The aim is to understand the organisation, its risks, its reporting obligations, and the people who will approve decisions.

Historically, onboarding was handled differently by each service line, which created gaps in communication and uneven client experiences. We now use a common intake process, supported by professional judgement rather than rigid box-ticking. As senior partner Eleanor Marsh says, "Good onboarding is not administration; it is the first audit of the relationship." Her point is that early discipline prevents confusion later.

Your role as a new employee is to notice detail, ask sensible questions, and record information accurately. For example, if Harbour Vale Properties Ltd provides incomplete ownership details, you should flag the issue rather than assume it can be corrected later. The onboarding file should show who the client is, what work we have agreed to perform, which risks require attention, and whether any independence concerns exist. Clear notes matter because colleagues may rely on them months later.

Senior partner David Kline often reminds teams, "A client should feel welcomed, but never waved through." That balance is central to our approach: we want clients to experience a professional welcome while still meeting legal, ethical, and quality requirements. Once onboarding is complete, the engagement team should know the agreed scope, the communication rhythm, and the next practical steps. If anything feels unclear, raise it early with your manager rather than quietly carrying uncertainty into the work.

Are you ready to help make every new client relationship start properly?To make it short: GPT-5.5 passed the test and nailed every constraint (12/12).

GPT-5.5 Availability

GPT-5.5 is rolling out in all the usual places starting today. Plus, Pro, Business, and Enterprise users get GPT-5.5 in both ChatGPT and Codex, and Pro, Business, and Enterprise users also get GPT-5.5 Pro in ChatGPT.

OpenAI says GPT-5.5 and GPT-5.5 Pro are coming very soon through the Responses and Chat Completions APIs with a 1M-token context window. In other releases, OpenAI gave access to the API at the same time.

GPT-5.5 Safety and Cyber Access

OpenAI classified GPT-5.5 as High on both biological/chemical and cybersecurity capabilities under its Preparedness Framework. That's the reason the API is delayed. OpenAI says serving a High-classified model at API scale requires additional safeguards it's working through.

Two practical consequences for users:

- First, standard users will hit stricter classifiers on cyber-adjacent requests. OpenAI acknowledges that some of these refusals "may be annoying."

- Second, OpenAI introduced Trusted Access for Cyber, a program where verified defenders can apply at chatgpt.com/cyber to get fewer restrictions on legitimate security work.

This is the first time OpenAI has formally tiered cybersecurity access based on who's asking. Cybersecurity has been in the news a lot recently. We wrote about cybersecurity issues in our article on Claude Opus 4.7, which is a scaled-down sibling of Anthropic's internal-only Mythos Preview, for which there were concerns that it could exploit zero-day vulnerabilities, and other things.

GPT-5.5 Pricing

I said earlier that there were gains in efficiency. We also need to say the price per token has gone up.

- GPT-5.5 (API, when available): GPT-5.5 in the API will be priced at $5 per 1M input tokens and $30 per 1M output tokens.

- GPT-5.5 Pro (API, when available): GPT-5.5 Pro in the API will be priced at $30 per 1M input tokens and $180 per 1M output tokens.

How Does GPT-5.5 Compare to Claude Opus 4.7?

We tested GPT-5.5 on the same prompts we used in our article on Claude Opus 4.7, so we can use those test results alongside benchmark scores to directly compare the two.

|

GPT-5.5 |

Claude Opus 4.7 |

|

|

Long-context reasoning |

Passed our 300K-token needle test; MRCR scores hold past 256K where GPT-5.4 collapsed |

Still leads on Graphwalks (BFS) at most context sizes |

|

Multi-step arithmetic |

Repeatedly failed a prime-number summation GPT-5.4 handled |

Self-output verification designed to catch this class of error |

|

Instruction following |

12/12 on our 12-constraint briefing prompt |

11/12 on the same prompt (missed word count ceiling) |

|

Agentic coding |

SWE-Bench Pro: 58.6% Terminal-Bench 2.0: 82.7% |

SWE-Bench Pro: 64.3% Terminal-Bench 2.0: 69.4% |

|

API pricing (per 1M tokens) |

$5 input / $30 output |

$5 input / $25 output |

The basic takeaway: GPT-5.5 closed the long-context gap that made GPT-5.4 a weak choice for document-heavy work, but its efficiency gains appear to have come at the cost of arithmetic reliability. GPT-5.5 edges ahead on strict instruction following and is now priced above Opus 4.7 on output tokens.

Opus 4.7 still leads on SWE-bench Pro (64.3% vs 58.6%), but GPT-5.5 has pulled ahead decisively on Terminal-Bench 2.0 (82.7% vs 69.4%), so the coding picture now splits by environment: Opus 4.7 for repository-level engineering, GPT-5.5 for terminal-heavy workflows.

What the Internet Is Saying About GPT-5.5

OpenAI's new model is generally received positively. Users especially like its speed and conciseness in delivering useful results.

Other users note that although it might be a step up from GPT-5.4, it might not be enough to justify the significant price increase.

Conclusion

GPT-5.5 is an incremental capability update paired with a non-incremental policy update. The model is faster, cheaper per task, and meaningfully better at long-context work. We can see these claims clearly in the benchmark results.

Our own tests paint a more nuanced picture. The long-context improvements are real, and GPT-5.5 nailed all 12 constraints in our instruction-following test (a prompt where both Claude Opus 4.7 and Sonnet 4.6 scored 11/12), but the efficiency gains came at a cost: GPT-5.5 repeatedly failed a summation task that GPT-5.4 handled after a simple prompt split. This suggests a trade-off between output conciseness and reasoning performance.

The release is also the first time OpenAI has formally tiered cybersecurity access based on who's asking, which is an interesting development for the industry as a whole.

If you're in ChatGPT or Codex, you have access to the new model today. If you're an API user, you're waiting around, which, as we noted, is not how OpenAI usually does things, but is probably coming soon.

I'm a data science writer and editor with contributions to research articles in scientific journals. I'm especially interested in linear algebra, statistics, R, and the like. I also play a fair amount of chess!

Tom is a data scientist and technical educator. He writes and manages DataCamp's data science tutorials and blog posts. Previously, Tom worked in data science at Deutsche Telekom.