There's an Architectural Shift Happening in Plain Sight

For the last couple of years, most people have been treating AI like an API.

You send a prompt in, you get a response out, and if you're feeling sophisticated, you wrap it with retries, add a bit of logging, and call it a system. That worked for demos. It's what let this whole wave ship so fast. But the pattern stops working the moment you try to build something that has to operate over time.

Production AI systems don't behave like APIs. They behave like processes.

The moment you introduce continuity, everything changes. You're no longer dealing with a single request and response. You're dealing with a sequence of interactions that depend on each other. You start needing memory. You start needing state. You need to decide what matters now, what mattered five minutes ago, and what should still matter tomorrow.

And once you cross that line, you realize this isn't prompt engineering at scale.

This is systems design.

What you're really building is not a better prompt. You're building a system that determines how context is created, filtered, accumulated, and evolved. That's the real problem space. That's the discipline that's emerging. That is context engineering.

Context is Not the Prompt. Context is What Assembles the Prompt

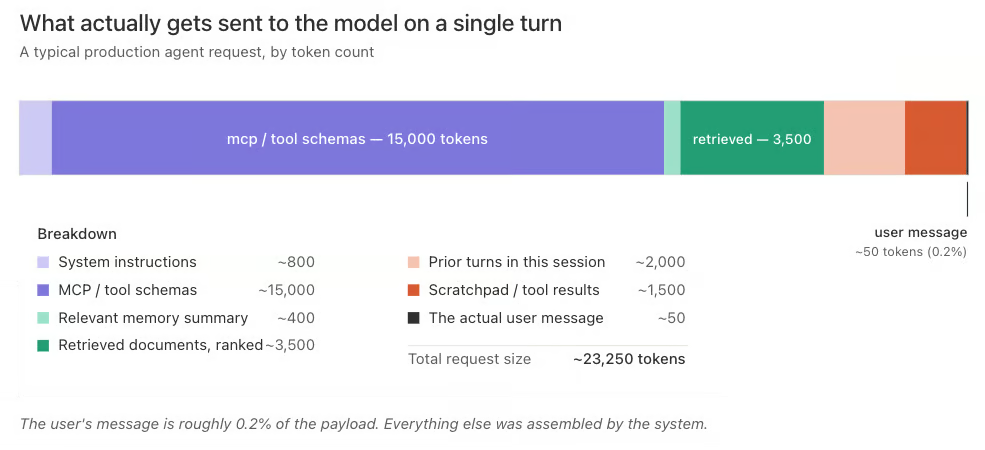

Open any production agent in your debugger and look at what actually gets sent to the model on a given turn. It's almost never just the user's message. A typical request looks something like this:

The user message is the smallest part of the payload. Every other block is something your system produced, selected, compressed, ranked, and injected. Those blocks are the output of a pipeline you designed, even if you didn't realize you were designing one.

That pipeline is the real product. The model is the runtime. Context engineering is the design of the pipeline.

Once you accept that distinction, the architecture looks very different.

Memory is Not One Thing

"Add memory" is the new "add caching." It sounds like a feature, but it's actually several different systems that happen to share a name. If you don't separate them, you'll end up with a single blob store that answers every question badly. Forgetful in some places, obsessive in others.

The first cut is the one cognitive science already gave us, and the one most practitioners are converging on: short-term memory (STM) and long-term memory (LTM). Short-term memory is whatever is currently inside the context window, the working set the model can see on this turn.

Long-term memory is everything that survives beyond a single call: facts, artifacts, plans, prior decisions, tool outputs, and rules. Short-term memory is a budget problem. Long-term memory is a systems problem.

The five types below are all long-term memory, organized by what they're for and how they should be stored. They map onto the canonical semantic, episodic, and procedural taxonomy you'll see in cognitive-science-inspired frames like CoALA. I'm subdividing two of those categories because in production they need to be governed differently.

Policy memory

Normative rules and constraints. Typically global or tenant-scoped. Versioned and tightly controlled. This is what the system is allowed to do: compliance rules, brand guardrails, security boundaries. It changes rarely and deliberately. This is procedural memory in the cognitive-science sense, the rules and workflows that shape behavior across sessions. The same category that holds files like CLAUDE.md or AGENTS.md in filesystem-first agents.

Preference memory

Stable personalization parameters. Usually user-scoped. This user wants JSON responses, that user prefers bullet points, this tenant formats dates DD/MM/YYYY. Preference memory is what makes the system feel tailored without having to be retold every session. Together with fact memory below, this is the semantic layer: durable, queryable assertions about the world and the things in it.

Fact memory

Durable assertions the agent may reuse. Must include provenance. "The customer's production DB is in us-east-1" is a fact. So is "their fiscal year starts in April." Facts are where compounding advantage lives, and where the hardest design problems are, because every fact you write is a bet about the future. I split facts out from preferences because they have different write paths, different decay profiles, and different governance requirements, even though both are semantic memory at the cognitive level.

Episodic memory

Structured summaries of completed work. "Case resolved." "Migration completed." "Exception granted." Reusable artifacts extracted and summarized from traces, not the traces themselves. Episodic memory is what lets the system recognize we've done this before and retrieve the shape of the prior solution.

Trace memory

Raw, append-only execution events. This is your flight recorder. It's high-volume, mostly useful for retrieval and replay, and it is not the right place to look when you want to know what a user is like, only what they did. The distinction matters because most teams conflate the two and end up doing semantic search across execution logs to infer preferences.

In a coarser taxonomy, both episodic and trace memory sit under the episodic umbrella, including conversation transcripts, tool outputs, and run histories. I separate them because in production the raw stream and the distilled summary have wildly different access patterns, retention policies, and cost profiles. Treating them as one thing is how teams end up paying vector-database prices to grep their logs.

Working memory isn't on this list because it isn't long-term memory. It's the context window itself, the working set the model can actually see on the current turn. Working memory is real and important, and a lot of the hardest applied-ML work right now lives there: prompt compression, KV-cache reuse, sliding-window attention, the budget math for what gets to occupy those tokens.

But it's transient by definition, and conflating it with the persistent stores above is what produces architectures where the scratchpad and the system of record share a database. They shouldn't. Working memory is assembled per turn from long-term memory and discarded unless explicitly promoted. The promotion step is where the two layers meet, and it's where most of the interesting design work happens.

When someone says "our agent has memory," ask which of these five they mean. If the answer is "we dump everything into a vector store," you've just found the reason their agent feels forgetful in some places and obsessive in others.

The Loop, and What Actually Breaks in it

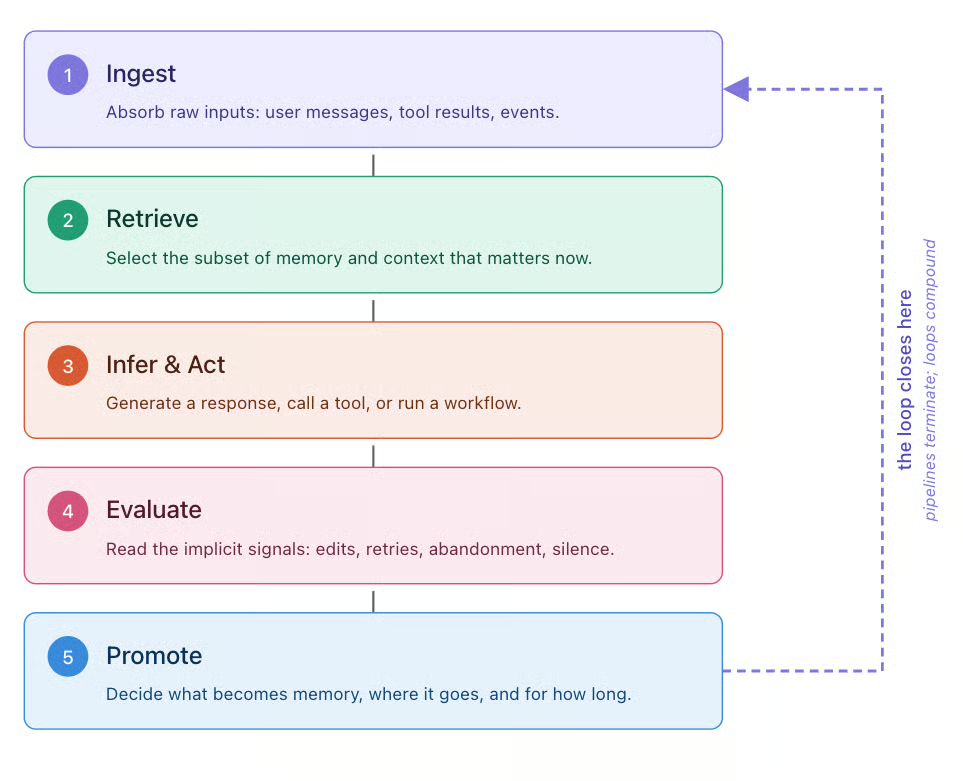

Every production AI system eventually converges on the same shape. Not a pipeline. A loop.

The shape isn't controversial. Most descriptions of the agent loop reduce to the same five steps: assemble context, call the model, take actions, observe results, and update memory, then repeat. I label the phases slightly differently because I want to separate the two halves of "assemble context" (what you absorb vs. what you select) and because I think promotion deserves its own name rather than getting folded into "update memory." But the underlying anatomy is the same, and you'll see the same five inflection points whether you came at this from the agent harness, the database, or the prompt.

ReAct popularized the interleaving of reasoning and action in 2022, production agent systems have since expanded that pattern into a broader loop that includes retrieval, evaluation, and memory promotion. The diagram provides a helpful visual, but knowing the failure mode of each phase is what tells you where to spend engineering time.

Injest

Ingest fails when you accept too much. Ingest is the first half of assembling context: absorbing raw inputs from the environment. Treating every event as potential context creates noise that retrieval has to undo later. The discipline here is at the boundary. What are you refusing to absorb?

Retrieve

Retrieve fails quietly. Retrieve is the second half of context assembly: selecting which subset of long-term memory matters now. It doesn't throw an exception when it returns the wrong chunk, it just makes the model sound slightly off. Retrieval is the phase most teams underinvest in and the phase that correlates most tightly with perceived system quality.

Pure semantic search is a first draft. For most production systems, the baseline is metadata filtering plus hybrid lexical/semantic retrieval with reranking; beyond that, query decomposition, graph traversal, and multi-hop retrieval help when tasks are compositional, but they must be bounded by latency and evaluation budgets.

Infer & Act

Infer & Act fails when context drives the wrong behavior. This is call the model and take actions collapsed into one phase, because the planning and the tool invocation are both consequences of what the assembled context made the model decide to do. This is where the long-context failure modes bite: lost-in-the-middle, attention dilution, context rot.

The intuition most people have is that more context should monotonically improve responses. You're giving the model more information to work with, so you'd think it should do better. In practice, more tokens often make the model worse. Budgeting context is now a real discipline, and the default instinct to "give the model everything we have" is the default wrong answer.

Evaluate

Evaluate is the phase most systems don't have at all. It introduces feedback into the system by observing the outcome of its actions. Did the response help? Did the workflow succeed? Did the user correct it? Even when feedback isn't explicitly captured, signals still exist. A few that work well in production: time-to-next-message (a fast follow-up usually means the answer didn't land), edit-distance between your output and the version the user actually sent or saved (small edits are noise, large edits are correction), and abandonment within N seconds of a tool result (the user looked at what you produced and walked away).

None of these require the user to rate anything. They just require you to instrument what already happens. These implicit signals are everywhere, but nothing in the stack is listening for them. Without this step, the loop doesn't close and the system doesn't learn. It just runs.

Promote

Promote is the phase most teams get wrong when they do build it. Promote is the half of update memory that decides not just whether to write, but what to keep, where it belongs, and how long it should last. Writing everything back creates a memory store that poisons itself.

Writing too little creates a system that feels amnesiac. The right answer is a policy, not a flag. A good policy answers six questions: what gets written, at what granularity, into which memory type, with what provenance, with what decay, and under whose authority. This is the hardest step to get right, and it's also the most crucial.

Pipelines terminate. Loops compound. The difference is Promote.

The Anti-Patterns You are Probably Shipping

If you've been building agents for more than a few months, you have almost certainly shipped several of these. My team's early experimentation got bitten by every one of them, which is how I know what they look like from the inside.

- Dumping the full transcript every turn. It's the lazy way to preserve state and it's ruinously expensive, hits context rot fast, and silently degrades model quality. A compacting summarizer running in the background is not optional at scale. Exploit prompt caching only for stable prefixes—system prompts, tool definitions, and static policy blocks—and design your request layout so volatile retrieval payloads sit after cached sections.

- Using retrieval as a kitchen sink. Pulling the top-20 chunks and hoping the model sorts it out. The model does not sort it out. It averages it out.

- Treating scratchpad as memory. Tool call results, intermediate reasoning, and generated assets, all of them leaking into long-term storage because nothing ever explicitly discards them. Your "memory" is mostly exhaust.

- One memory store for every kind of memory. A single vector index holding traces, facts, preferences, policies, and episodic summaries. Retrieval cannot disambiguate between them and neither can the model.

- Memory that never forgets. No TTL, no decay, no eviction. After only a few months, this becomes a liability, not an asset. Some of the best behaviors you can add to a system are aggressive forgetting policies.

- Promoting on user thumbs-up. The strongest feedback signal is also the rarest and most biased. Systems that promote only on explicit positive signals learn what makes users click the button, not what makes the system good.

- No replay. You cannot reproduce yesterday's session because the memory store has moved on. Debugging is guesswork. This is the bug that will eat your on-call rotation in 2026.

- Why This is Actually Systems Design, Not Vibes

The piece of this that deserves the name "systems design" is not the mental model. It's the tradeoffs, and every one of them has a wrong answer that's cheaper to ship and more expensive to live with.

Token economics are a primary design constraint, not an afterthought

Prompt caching has reshaped what it's rational to put where in a request. Cache-friendly prefixes, stable tool schemas, and pinned system instructions are not cosmetic choices anymore. Cache hits cost a fraction of what cache misses do: 10% of standard input price on Anthropic's API, 25% on Google's Gemini, and as low as 10% on OpenAI's newer models. That puts the savings ceiling somewhere between 4x and 10x on input tokens, depending on the provider, before you count the structural compounding in agentic workloads, where the same prefix gets re-sent every step. Get the cache strategy right, and a well-architected agent runs several times cheaper than a poorly architected one on identical work. This is the closest thing AI engineering has to a cache-line problem, and most teams are treating it like a CSS decision.

Retrieval has an architecture, not a library call

The production floor is hybrid (lexical plus semantic), reranked, with query rewriting. The ceiling is agentic retrieval, where the model issues and refines its own searches inside the loop. The distance between those two is enormous, invisible in benchmarks, and visible in every user interaction. If your retrieval is a single top-k call, your retrieval is the reason your agent feels dumb.

Context scoping is a security boundary

Multi-tenant isolation, sub-agent sandboxing, cross-session leakage. These are not hardening exercises you do later. If a sub-agent can see the parent's working set by default, you have a problem waiting to happen, and the only question is whether you find it first or an adversary does.

Evaluation is harder than for any prior class of system

Stateful, stochastic, path-dependent systems don't unit-test. You need trace-based evaluation, golden retrieval sets, offline replay against versioned memory snapshots, and LLM-as-judge harnesses that are themselves versioned. Most teams have none of this and are flying blind. They find out their last deploy regressed quality when a user tells them.

Governance is not optional, and it's technically harder than you think

Right-to-forget under GDPR becomes non-trivial the moment "the thing that knows about the user" is a fact derived from a hundred interactions. What does it mean to delete a user's data from a system that already absorbed it into fact memory? The EU AI Act adds another layer on top, with the bulk of its obligations (including data governance, human oversight, and transparency requirements for high-risk systems) coming into force in August of 2026.

Teams shipping AI products into Europe in the second half of 2026 will need answers to questions the field has not collectively solved yet: how to provably remove a user's contribution to a derived fact, how to audit the lineage of a memory promotion, how to demonstrate that a memory store is scoped the way you say it is. Nobody's answer is good yet. It still has to work, and regulators will not wait for the industry to figure it out.

This is why context engineering is about systems design and not prompt engineering. Most of the interesting work is in the interfaces between context producers, context stores, and context consumers. Almost none of it is in the prompt itself. And almost every tradeoff above has a cheap-looking shortcut that compounds into technical debt you will pay back at the worst possible time.

Where the Memory Layer is Converging

Once you accept that memory is five different things with several different access patterns, the obvious next question is: where does it all live?

Hybrid retrieval, meaning lexical plus semantic in one system, is largely a solved problem at this point. Elasticsearch, OpenSearch, LanceDB, Pinecone, Weaviate, and the vector extensions to Postgres all support some version of it, and the production-quality gap between them is narrower than vendor marketing suggests. If the whole memory layer were just "embeddings and full-text search," the architecture question would be boring.

It isn't, because memory isn't just retrieval. Look at the five types again and notice what each actually needs from its store.

|

Memory Type |

What it needs from a store |

|

Policy |

Relational. Versioned rules, tenant scopes, foreign keys to users and roles, transactional updates. |

|

Preference |

Structured and user-scoped. Rows or JSON keyed to a user, predictable schema. |

|

Fact |

Hybrid retrieval earns its keep here. Durable assertions with provenance, queryable by both semantic similarity and exact match on entity or source. |

|

Episodic |

Structured summaries with retrieval over them. |

|

Trace |

Append-only event storage, high-volume, with vector indexes layered on for replay and retrieval. |

The accidental architecture most teams end up with separates these along the axis that hurts most. The relational data lives in Postgres or a managed equivalent. The hybrid retrieval lives in Elasticsearch, OpenSearch, or a dedicated vector store like Pinecone, Weaviate, or LanceDB.

Traces end up in S3 or a time-series database. Each of these is a strong choice for the job it was built for, and many production stacks use them well. The problem isn't any individual component. It's that every meaningful retrieval path becomes a join across systems: "find facts relevant to this query, filtered by the policies that apply to this tenant, scoped to users this agent is allowed to see." That join crosses a trust boundary, a transactional boundary, and a latency boundary. Every time context crosses one of those boundaries, you re-introduce the exact consistency problem you were trying to avoid.

This is where the architecture picture changes. Hybrid retrieval in the same store as the relational data is the distinguishing capability, and that's a different problem than hybrid retrieval alone. Hybrid retrieval as a feature is increasingly available everywhere.

What's less common is co-locating relational, document, and vector data under a single transactional and security boundary, so that policy, preference, fact, and episodic memory can be queried together with ACID guarantees rather than reconciled across systems.

Oracle AI Database is one example of this converged, multi-model approach: relational tables, JSON documents, and vector embeddings live in a single engine, queryable together in a single SQL statement, inside a single transaction. Once you take the five-type taxonomy seriously and start drawing the joins between policy, preference, and fact, the question shifts from which retrieval engine is best to where should the relational data that governs that retrieval actually live.

What this looks like in practice is a single SQL statement that joins relational policy data, JSON-encoded preferences, and vector similarity over fact memory. The query that would otherwise span three systems collapses into one:

-- Find facts relevant to this question, scoped to the policies

-- and preferences that apply to this user, in a single query.

SELECT f.fact_text, f.provenance, f.created_at

FROM fact_memory f

JOIN user_preferences p

ON p.user_id = :current_user

JOIN tenant_policies t

ON t.tenant_id = p.tenant_id

AND t.is_active = 'Y'

WHERE f.tenant_id = p.tenant_id

AND JSON_VALUE(p.prefs, '$.allow_personalization') = 'true'

AND f.access_level <= t.max_access_level

ORDER BY VECTOR_DISTANCE(

f.embedding,

VECTOR_EMBEDDING(MINILM USING :query AS DATA),

COSINE

)

FETCH FIRST 5 ROWS ONLY;That single statement is doing four things at once: enforcing tenant policy, respecting user preference, applying access controls per fact, and ranking by semantic similarity. In a federated architecture, those are four round trips across three different stores, with a consistency model you have to defend yourself.

Here it's one query plan, one transaction boundary, one place to audit. The same ACID guarantees that have made databases the system of record for shared state since the 1970s, now applied to memory that happens to include vectors alongside rows. Working examples of this pattern, including agentic RAG with hybrid search and a notebook on memory architecture for context engineering, live in Oracle's AI Developer Hub on GitHub.

You'll still want specialized stores for a few things. Trace memory at high volume has different durability and cost characteristics than your operational database. Blob storage for large assets like documents, images, and model artifacts belongs somewhere built for that. A dedicated document database may still make sense for hardened document workflows where you need the specific features of that category. The point isn't that a unified platform eliminates every other store.

The point is that it eliminates the most expensive join: the one between the relational data that governs what the agent is allowed to do and the vector data that shapes what the agent actually does. When those two are co-located, the architectural problem shifts from "how do I keep these stores in sync" to "how do I design good memory policies," which is the problem that actually matters.

The model layer is commoditizing. Hybrid retrieval is commoditizing. What stays distinguishing is whether the relational data that governs memory lives in the same place as the memory itself, under the same transactional guarantees, and the policy that decides what to remember.

Seven Predictions for What Comes Next

The sections above describe where we are. These are bets about where we're going. They're opinionated on purpose. Disagree with the ones you disagree with.

1. The model does not become the memory.

The tempting story is that model providers absorb memory into the model itself. Long-context windows stretch, caching gets smarter, and the external memory layer quietly disappears. That isn't going to happen. Context windows will keep growing, but they'll still require priming on every turn. The model stays stateless every session, and the external memory layer stays the architecture of record. What changes is that external memory gets much smarter (vector stores, structured databases, hybrid platforms, and things we haven't seen yet) and context engineering becomes the craft of deciding what the model sees, when, and why. If a frontier provider ships a primitive that credibly replaces an external memory system for production use, this prediction is wrong. I'd bet against it.

2. Memory infrastructure outspends inference for mature AI products.

Inference costs are falling roughly an order of magnitude every 18 months. Storage, retrieval, embedding generation, reranking, and the assembly of per-request context are not. They scale with user count, session depth, and interaction history, all of which are growing. The dominant cloud bill line for a mature AI product becomes vector and hybrid retrieval infrastructure, not GPU tokens. This reshapes the build-vs-buy question. The model layer commoditizes; the memory layer doesn't. The teams that win long-term are the ones that treat memory infrastructure as a first-class investment now, not an afterthought they'll rearchitect later.

3. Classical RAG becomes the legacy architecture.

"Embed documents, top-k on the user query, stuff into the prompt" is the COBOL of AI architecture. It works, it's everywhere, and it's going to be deeply embarrassing in retrospect. The replacement is already visible: agentic retrieval, where the model plans its own searches, issues multi-step queries, reads intermediate results, and decides what to fetch next. It looks less like a database lookup and more like a research process. Teams still building static RAG pipelines today are on a migration path whether they know it or not.

4. Memory becomes the adversarial surface.

Prompt injection attacks a single request. Memory attacks compound. An adversary who can write to a long-lived memory store, either directly or through an input channel you didn't think was privileged, can shape that system's behavior for every future user it touches. Exfiltration works the same way in reverse: context from one session leaking into another because the scoping model was sloppy. The first serious real-world memory poisoning incident is coming. The industry scramble on provenance, attestation, and per-fact access control will follow it.

5. Context privacy boundaries become a compliance requirement.

The next logical step from "context scoping is a security boundary" is cryptographic isolation of memory segments, so agents can only reveal context on a need-to-know basis. This matters the moment you have sub-agents with different trust levels, or regulatory regimes that require provable scoping of sensitive facts. Expect the first regulated-industry audit finding that cites "insufficient isolation between agent memory scopes" to be the catalyst, and expect compliance frameworks to follow shortly after. Teams that already have cryptographic scoping on their roadmap will quietly win RFPs. Teams that don't will retrofit it under deadline pressure.

6. Multi-agent consistency becomes a named, expected capability.

Today, a billing agent and a support agent touching the same customer account can produce contradictory answers because they pulled different slices of memory and nothing reconciles them. That's tolerable right now because most production deployments are single-agent. As multi-agent systems move into production at scale, users will start catching the contradictions, and "contextual consistency" will become a named capability that platforms compete on. Expect this to show up in vendor comparison matrices first, then in enterprise procurement checklists. The teams that solve this early will have a differentiated story; the teams that don't will be explaining to customers why their two agents disagree.

7. "Useful context per capita" becomes a benchmarked metric.

Today, we benchmark models. We'll start benchmarking systems, and the headline metric will be something like useful context carried per user, per interaction. It's the number that actually predicts retention, satisfaction, and the gap between a product that feels like it knows you and one that feels like it resets every Tuesday. Expect it to show up in investor decks before it shows up in academic papers. Expect a correlated metric, cost per useful context token, to become the unit economics question every AI product team has to answer.

What to do on Monday

If you're new to this and want a place to start: stop treating memory as one thing. Pick a production agent you have access to, label what kind of memory each of its stores actually holds, and notice how often one store is being used for three jobs.

If you're already building agents: instrument the loop. Specifically, instrument the signal between retrieval and promotion. Most teams can tell you what their system retrieved on a given turn. Far fewer can tell you which of those retrieved memories the model actually used, and almost none can tell you why a given memory was promoted in the first place.

Knowing which memories got used is what lets you reinforce them at the next promotion step, which is how a memory system stops being a write-once log and starts actually learning. That feedback loop is where the compounding advantage lives, and it's where the next two years of this discipline are going to be won.

We're not building smarter models. We're building systems that remember, adapt, and, when designed well, refuse to forget the things that matter.

The model layer is shared. Everyone gets the same Claude, the same GPT, the same Gemini on roughly the same timeline. The memory layer and how you assemble context isn't shared. It's the one thing you build that nobody else can copy, because it's shaped by your users, your decisions, and your judgment about what's worth remembering and what's worth retrieving.

A smarter model is everyone's advantage. A better memory system is yours.

I’m a technology and product leader with 25+ years of experience building data-intensive, cloud-scale systems across AI, data, and modern cloud infrastructure. An AWS Serverless Hero and keynote speaker, I focus on turning complex technical concepts into practical, scalable solutions, and currently consult while building with modern cloud technologies.