
As the data revolution continues to transform the world, businesses are beginning to realize that thriving in this era requires a well-executed data architecture. A data architecture describes the structure of an organization's data assets and maps how data flows through the organization. Essentially, it serves as a blueprint for managing data, ensuring that all of the business's data is handled consistently and meets the business's requirements. But what happens when businesses encounter bad data?

Without a well-defined data architecture, businesses are unlikely to unlock the true value of their data and may waste considerable resources in the process. They may also lose ground to competitors with more mature data strategies. To avoid such a fate, business leaders must first recognize that bad data exists and that it carries consequences.

Here, we explore what bad data is, why data quality matters, and what the signs of bad data are.

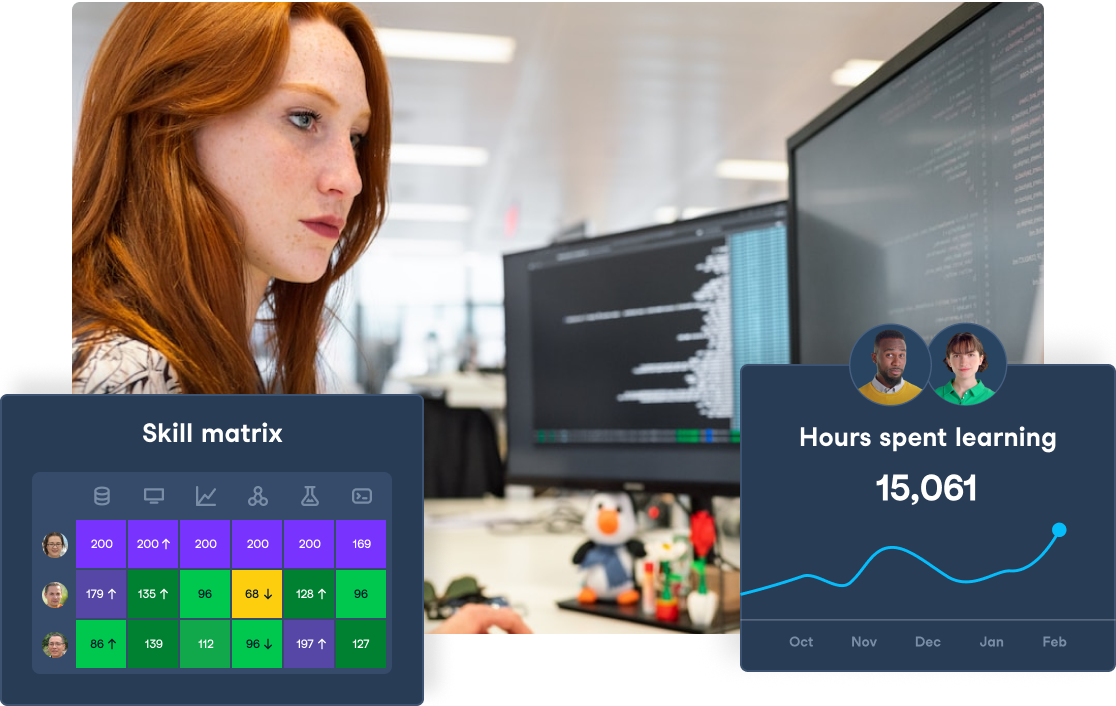


What is Bad Data?

If we define good-quality data as data that is fit for purpose, then bad data is data that is not fit for purpose: it is not good enough to support the outcomes it is being used for.

Raw data can often be considered bad data. For example, data extracted from social media networks like Twitter is unstructured and, in its raw state, is not ready to be analyzed or used to generate insights.

However, raw data can become good data through data cleaning and processing, which typically takes time.

Put simply, any data that lacks structure or suffers from quality issues such as inaccuracy, incompleteness, inconsistency, or duplication can be considered bad data.

Why Data Quality Matters

Data quality refers to the state of qualitative or quantitative pieces of data: it measures the condition of data against specific factors such as accuracy, completeness, consistency, reliability, and whether the data is up to date. This applies not only to customer data but also to product data, company data, vendor data, and much more.

Ensuring the data you possess is of good quality is vitally important. To derive value from data, we need it to be accurate, useful enough to support the outcomes we wish to use it for, and good enough to make the best use of the available resources.
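Some of these factors are easy to turn into numbers. As a minimal sketch, assuming records are plain Python dictionaries (the field names and sample values are hypothetical), completeness can be measured as the share of records with a usable value, and uniqueness as the share of those values that are distinct:

```python
def completeness(records, field):
    """Share of records where `field` is present and not blank."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def uniqueness(records, field):
    """Share of non-missing values of `field` that are distinct."""
    values = [r[field] for r in records if r.get(field) not in (None, "")]
    if not values:
        return 0.0
    return len(set(values)) / len(values)


# Hypothetical customer records
customers = [
    {"id": 1, "email": "ana@example.com"},
    {"id": 2, "email": ""},
    {"id": 3, "email": "ana@example.com"},
    {"id": 4, "email": "bo@example.com"},
]

print(completeness(customers, "email"))          # 0.75
print(round(uniqueness(customers, "email"), 2))  # 0.67
```

Tracking scores like these over time is one simple way to see whether data quality is improving or degrading; other factors, such as accuracy, usually need reference data or business rules to measure.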

Why does poor data quality occur?

There are three major causes of data quality issues that directly affect operational efficiency:

- Human error. One of the most common causes of poor data quality is human error. This typically occurs when the data entry process lacks standardization or when employees manually input values into spreadsheets. Both scenarios increase the chance of errors.

- Disparate systems. Organizations often store data in several disparate systems, each with its own rules. Building a dataset may involve integrating sources from these systems, resulting in duplicated data, missing fields, or inconsistent labels. Different systems may also use different names for fields that mean the same thing.

- Invalid data. Change happens, and businesses evolve. When they do, the data must change too (e.g., changing the level of detail in the data structure, deprecating fields, or updating data fields). However, analysts may only realize these changes are needed when they are about to use the data.

These factors are the leading causes of bad data and become a bottleneck when it is time to use the data.
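For the disparate-systems problem, one common mitigation is an explicit mapping from each system's field names to a single shared schema. The sketch below assumes two hypothetical source systems (a CRM and a billing system); every field name in it is invented for illustration:

```python
# Hypothetical mappings from each source system's field names to one shared schema
FIELD_MAPS = {
    "crm": {"cust_id": "customer_id", "e_mail": "email", "country": "country"},
    "billing": {"CustomerID": "customer_id", "EmailAddress": "email", "Ctry": "country"},
}


def to_common_schema(record, source):
    """Rename a record's fields using the mapping for its source system.

    Fields without a mapping are collected under "_unmapped" so they are
    reported instead of being silently dropped.
    """
    mapping = FIELD_MAPS[source]
    out = {"_unmapped": {}}
    for key, value in record.items():
        if key in mapping:
            out[mapping[key]] = value
        else:
            out["_unmapped"][key] = value
    return out


crm_row = {"cust_id": 42, "e_mail": "ana@example.com", "country": "PT"}
billing_row = {"CustomerID": 42, "EmailAddress": "ana@example.com", "Ctry": "PT", "Plan": "pro"}

print(to_common_schema(crm_row, "crm"))
print(to_common_schema(billing_row, "billing"))
```

Keeping unmapped fields visible, rather than discarding them, also makes it easier to notice when a source system has changed its structure.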

You can learn how to improve data quality in Python in this DataCamp Data Cleaning Tutorial.

The Cost of Poor Data

The cost of poor data varies depending on several factors. In some scenarios, costs accumulate in downstream processes or appear as delays in critical operations. In the worst case, an entire process can fail.

Let’s examine some of the costs of poor data.

Flawed insights

The insights we derive from data are only as good as the data itself. For example, business leaders may make a key decision based on insights from their data without realizing that the multiple sources used to draw those insights contain duplicates. The redundancy may skew the findings so that they do not reflect reality, resulting in flawed insights.
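To make this concrete, here is a small hypothetical example: when the same order is loaded from two sources, a revenue total silently overstates reality. The sketch assumes `order_id` uniquely identifies an order; real-world deduplication often needs fuzzier matching.

```python
# Hypothetical order rows merged from two sources; order 1002 appears in both
orders = [
    {"order_id": 1001, "amount": 250.0},
    {"order_id": 1002, "amount": 400.0},
    {"order_id": 1002, "amount": 400.0},  # duplicate introduced by the merge
    {"order_id": 1003, "amount": 150.0},
]

naive_total = sum(o["amount"] for o in orders)

# Keep only the first row seen for each order_id
seen = set()
deduped = []
for o in orders:
    if o["order_id"] not in seen:
        seen.add(o["order_id"])
        deduped.append(o)

true_total = sum(o["amount"] for o in deduped)
print(naive_total, true_total)  # 1200.0 800.0
```

A single duplicated row inflates revenue by 50% here, and nothing in the naive total signals that anything is wrong.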

Financial expenses

In an article about making a business case for data quality improvement, Gartner research stated that “organizations believe poor data quality to be responsible for an average of $15 million per year in losses” and “this is likely to worsen as information environments become increasingly complex — a challenge faced by organizations of all sizes.”

Organizational efficiency

As more businesses operate with data at their core, poor data directly impacts the organization as a whole. For example, the sales team may pitch products to the wrong target audience, which could have been avoided if they had access to good-quality data.

Issues during migration

Picture a scenario in which an organization decides to migrate from one platform to another. The new platform may have different data governance and standardization rules from the old one, which can cause migration issues. The new platform may also store data in a different format, which makes mapping the data accurately difficult.
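Date formats are a typical example of this mapping problem. The sketch below assumes, purely for illustration, that the old platform stored dates as `DD/MM/YYYY` strings and the new one expects ISO 8601; values that cannot be converted are flagged for review rather than loaded:

```python
from datetime import datetime

# Assumption: the old platform stored dates as DD/MM/YYYY strings,
# and the new platform expects ISO 8601 (YYYY-MM-DD).
OLD_FORMAT = "%d/%m/%Y"


def migrate_date(value):
    """Convert an old-format date string to ISO 8601, or return None if it cannot be parsed."""
    try:
        return datetime.strptime(value, OLD_FORMAT).date().isoformat()
    except (TypeError, ValueError):
        return None


old_values = ["03/04/2023", "31/12/2022", "2023-04-03", "31/02/2023", None]
migrated = {}
failures = []
for v in old_values:
    iso = migrate_date(v)
    if iso is None:
        failures.append(v)  # flag for review instead of loading bad data
    else:
        migrated[v] = iso

print(migrated)  # {'03/04/2023': '2023-04-03', '31/12/2022': '2022-12-31'}
print(failures)  # ['2023-04-03', '31/02/2023', None]
```

Note that a value like `03/04/2023` parses under both day-first and month-first conventions, which is why the source format has to be known rather than guessed.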

An overall bottleneck

Poor data quality can quickly cause severe bottlenecks during an organization's digital transformation. Issues must be fixed as they arise, which often means pausing the transformation project. Repeating this several times takes a heavy toll on both the rate of adoption and resources.

10 Signs of Bad Data

The quality of input will determine the quality of output. It’s practically impossible to generate accurate and reliable reports with incomplete, inconsistent, or corrupted data: garbage in, garbage out.

So how do you determine whether your organization has a data quality issue? Here are ten signs to look out for (in no particular order):

#1 Missing important information

Missing data has several possible causes, for example, equipment malfunctions, lost files, or incomplete data entry. Although some missing data is common in any dataset, missing important information presents several challenges. Here are the three main ones:

- The absence of data reduces statistical power, which lowers the chance of detecting a true effect.

- Missing data may introduce bias into parameter estimates.

- Missing crucial information may reduce the representativeness of the sample.

You can learn more about dealing with missing data in Python with our online course.
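A quick first check for this sign is to count missing values in the fields your analysis depends on. The sketch below assumes records are Python dictionaries and that `REQUIRED_FIELDS` (a hypothetical list) names the important fields. It also counts how many rows would survive if incomplete ones were simply dropped, which is where the loss of statistical power comes from.

```python
# Hypothetical rule: these fields are required for the analysis to be valid
REQUIRED_FIELDS = ("customer_id", "signup_date", "region")


def missing_report(records, required=REQUIRED_FIELDS):
    """Count, per required field, how many records lack a usable value."""
    counts = {field: 0 for field in required}
    for r in records:
        for field in required:
            if r.get(field) in (None, ""):
                counts[field] += 1
    return counts


rows = [
    {"customer_id": 1, "signup_date": "2023-01-05", "region": "EU"},
    {"customer_id": 2, "signup_date": None, "region": "US"},
    {"customer_id": 3, "signup_date": "2023-02-11"},
]

print(missing_report(rows))  # {'customer_id': 0, 'signup_date': 1, 'region': 1}

# Rows that would remain if incomplete records were dropped
complete = [r for r in rows if all(r.get(f) not in (None, "") for f in REQUIRED_FIELDS)]
print(len(complete), "of", len(rows), "rows are complete")  # 1 of 3 rows are complete
```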

#2 Excessive effort and time are required on menial tasks

If you feel most of your time is spent on manual tasks, you've probably got bad data. An ineffective (or nonexistent) data management strategy may leave you manually organizing data from various sources, chasing people to fill in gaps, and entering that data into spreadsheets.

#3 Not enough actionable insights

Actionable insights are conclusions drawn from data that can be translated directly into action or a response. To be actionable, insights must be relevant, specific, and valuable to the decision-maker.

What makes such insights valuable is the new information they bring to the table. However, this does not mean they must come from an entirely new dataset. If your data only tells you things you already know for sure, it adds no value.

#4 Analyzing the data is difficult

Normalizing your data is required to:

- Ensure that each table only contains data directly related to its primary key

- Ensure each data field contains exactly one data element

- Ensure redundant data is removed

Without normalized data, analysis can be extremely difficult, since each data source may come with its own formats, fields, and labels that vary across the board.
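As a rough illustration of the three normalization rules, the sketch below splits a hypothetical flat export into separate customer, order, and order-item tables. It uses plain Python structures rather than a database, so it only mimics what normalized relational tables would look like:

```python
# Hypothetical flat export: customer details repeat on every order row,
# and "items" packs several values into a single field.
flat = [
    {"order_id": 1, "customer_id": "C1", "customer_name": "Ana", "items": "pen;ink"},
    {"order_id": 2, "customer_id": "C1", "customer_name": "Ana", "items": "paper"},
    {"order_id": 3, "customer_id": "C2", "customer_name": "Bo", "items": "pen"},
]

customers = {}    # customer_id -> attributes that depend only on customer_id
orders = []       # one row per order, referencing its customer by key
order_items = []  # one row per (order, item): exactly one value per field

for row in flat:
    cid = row["customer_id"]
    known = customers.get(cid)
    # The same key with different details is an inconsistency worth surfacing
    if known is not None and known["customer_name"] != row["customer_name"]:
        raise ValueError(f"Conflicting names for customer {cid}")
    customers[cid] = {"customer_name": row["customer_name"]}
    orders.append({"order_id": row["order_id"], "customer_id": cid})
    for item in row["items"].split(";"):
        order_items.append({"order_id": row["order_id"], "item": item})

print(customers)  # {'C1': {'customer_name': 'Ana'}, 'C2': {'customer_name': 'Bo'}}
print(len(orders), len(order_items))  # 3 4
```

The customer name is now stored once per customer instead of once per order, and each item occupies its own row, which makes questions like "how many pens were ordered?" a simple count.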

#5 Opportunities are missed

The thought that you are not getting the most out of your data may constantly linger at the back of your mind, especially if you don't trust your current data management strategy. A clear sign that you depend on bad data is increased exposure to unnecessary risk, which leaves you vulnerable when sudden changes occur.

#6 Insights fail to arrive on time

You need fast access to data in a centralized repository. This allows you to generate reports quickly and easily, and it brings other benefits, such as reduced redundancy, which minimizes errors and simplifies access to information.

Centralized data means that the entire organization works from the same blueprint and follows the same rules. The idea is to avoid the discrepancies that arise from using disparate data and different tools.

#7 There are too many errors in the data

Humans are prone to error, so it is unrealistic to expect perfect data whenever a person is responsible for manually entering it into the system. Data should therefore be audited. Auditing will also make it clear whether the errors in your data come from the utility provider or from human mistakes.
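A minimal sketch of such an audit, assuming hypothetical validation rules for three fields. Recording which rule fails, and on which record, gives you the evidence needed to trace errors back to their source.

```python
import re

# Hypothetical validation rules: each returns True when a value looks valid.
# The simple email pattern is an assumption, not a full RFC 5322 check.
RULES = {
    "email": lambda v: isinstance(v, str) and re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None,
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "country": lambda v: v in {"PT", "US", "DE"},
}


def audit(records):
    """Return (row_index, field, value) for every value that breaks a rule."""
    problems = []
    for i, record in enumerate(records):
        for field, is_valid in RULES.items():
            value = record.get(field)
            if not is_valid(value):
                problems.append((i, field, value))
    return problems


entries = [
    {"email": "ana@example.com", "age": 34, "country": "PT"},
    {"email": "bo(at)example.com", "age": 340, "country": "US"},
    {"email": "cy@example.com", "age": 28, "country": "Germany"},
]

for problem in audit(entries):
    print(problem)
```

Running the same rules on every new batch, rather than once, turns this from a cleanup exercise into ongoing monitoring.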

#8 Decision-makers lacking confidence

Data should breed confidence. Possessing trustworthy data is a fundamental prerequisite for making data-driven decisions. When decision-makers lack confidence in the data, their instinct is to regress to old ways, which means you can expect decisions based on gut feelings and educated guesses.

Learn more about the importance of Data-Driven Decision Making for Business with our online course.

#9 Lack of visibility of key business metrics

When Key Performance Indicators (KPIs) are not available in real time, it becomes unclear which actions will have the greatest impact.

#10 Disjointed customer experience

A clear (and possibly costly) sign you have bad data is when customers receive content that does not align with where they are in the purchase journey. In today’s age, consumers no longer “want” a personalized experience; they expect it. If customers are not receiving a personalized experience across touchpoints, the customer experience may be considered disjointed.

Recognizing more than a few of these symptoms in your organization is cause for concern: there is likely a data quality problem. Find out more about building recommendation engines in Python with our online course.

How to Manage Bad Data

Hopefully, you are now aware that not all data is good. The best way to manage bad data is to prevent poor quality at the source. However, that approach is hard to apply if you are already contending with bad data.

The following steps can help you manage bad data that you have already collected:

Step 1: Accept the reality

You have bad data. Accept it. If you fail to recognize bad data as being a problem, then it’s highly unlikely you will be willing to take steps to improve it.

Step 2: Update your bad data

Use what you now know about identifying bad data to clean your data; this will likely include updating existing records.
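One way to sketch this step, assuming (for illustration only) that an email address identifies a customer and that each record carries a last-updated date: standardize values first, so near-duplicates become exact duplicates, then keep the most recent version of each record. The country mapping is hypothetical.

```python
from datetime import date

# Hypothetical mapping of inconsistent country labels to one standard code
COUNTRY_CODES = {"portugal": "PT", "pt": "PT", "usa": "US", "united states": "US", "us": "US"}


def standardize(record):
    """Return a copy with trimmed, consistently formatted values."""
    clean = dict(record)
    clean["email"] = record["email"].strip().lower()
    country = record["country"].strip().lower()
    clean["country"] = COUNTRY_CODES.get(country, country.upper())
    return clean


def latest_by_email(records):
    """Keep the most recently updated record for each (standardized) email."""
    latest = {}
    for r in map(standardize, records):
        current = latest.get(r["email"])
        if current is None or r["updated"] > current["updated"]:
            latest[r["email"]] = r
    return list(latest.values())


raw = [
    {"email": " Ana@Example.com", "country": "Portugal", "updated": date(2022, 5, 1)},
    {"email": "ana@example.com ", "country": "PT", "updated": date(2023, 8, 9)},
    {"email": "bo@example.com", "country": "usa", "updated": date(2023, 1, 2)},
]

for row in latest_by_email(raw):
    print(row)
```

The order matters: without standardizing first, `" Ana@Example.com"` and `"ana@example.com "` would be treated as two different customers.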

Step 3: Introduce a data quality program

Data quality programs are used to reduce the risk of errors while establishing common and reliable processes to support the use and production of data.

Step 4: Improve data collection techniques

Implement better data collection techniques. This may involve not requesting information unless it is necessary, and explaining why you require specific data, how you intend to use it, and what your customers gain by sharing it with you.

Step 5: Educate those around you

To manage data better, employees must know how to collect, handle, manage, and dispose of data.

The key to managing bad data is dealing with it at the source. If you have already collected bad data, you must accept that your data is bad and then clean and update it as necessary. Once your data is clean, reduce the risk of facing such issues again by introducing a data quality program that improves your organization's data management techniques. Do you have sufficient data skills? You can learn how to answer real-world questions using data with DataCamp’s Data Skills for Business skill track.


Bad Data FAQs

What is data quality?

Data quality is a measure of the condition of data based on accuracy, completeness, consistency, reliability, and whether the data is up to date.

What are the common causes of data quality problems?

Data quality issues may arise due to several factors, but the three main causes that have a direct impact on operational efficiency are: 1) human error, 2) disparate systems, and 3) invalid data.

What are the consequences of bad data?

Poor data quality can seriously damage your business because it creates major bottlenecks. The costs of poor data include flawed insights, high financial costs, migration issues, and reduced organizational efficiency.

How do you know if your data is bad?

Warning signs that you have bad data include:

- The same question returns different answers

- Insights fail to arrive on time / Opportunities are being missed

- Excessive effort and time are required on menial tasks

- Customers complain that employees are unaware of their previous transactions

- Disagreements between teams regarding organizational performance

- A consistent failure of data migration activities as a result of poor data

- Unreliable performance figures despite multiple data warehouses and data lakes

- Employees do not trust the system, so they maintain their own data stores

- Analyzing the data is difficult

- Important information is missing