Kurs

GPT-5.4 wurde am 5. März 2026 als OpenAIs Flaggschiff für professionelle Arbeit veröffentlicht und bündelt Coding und Reasoning in einem universellen Modell. Sechs Wochen später, am 16. April, brachte Anthropic Claude Opus 4.7 heraus – mit einer anderen Wette: ein Modell, das langfristige Engineering-Aufgaben eigenständig meistert und auch in langen Sitzungen kohärent bleibt, in denen viele Agenten auseinanderfallen.

Ein guter Zeitpunkt für einen direkten Vergleich. Ein Hinweis vorweg: Dieser Beitrag erschien am selben Tag wie Opus 4.7, daher stammen die direkten Vergleichszahlen größtenteils von den Anbietern. Nimm sie als Ausgangspunkt, nicht als Urteil.

Update: OpenAI hat den Nachfolger von GPT-5.4 veröffentlicht. Alle Details findest du in unserem GPT-5.5-Guide.

Opus 4.7 vs. GPT-5.4 im Direktvergleich

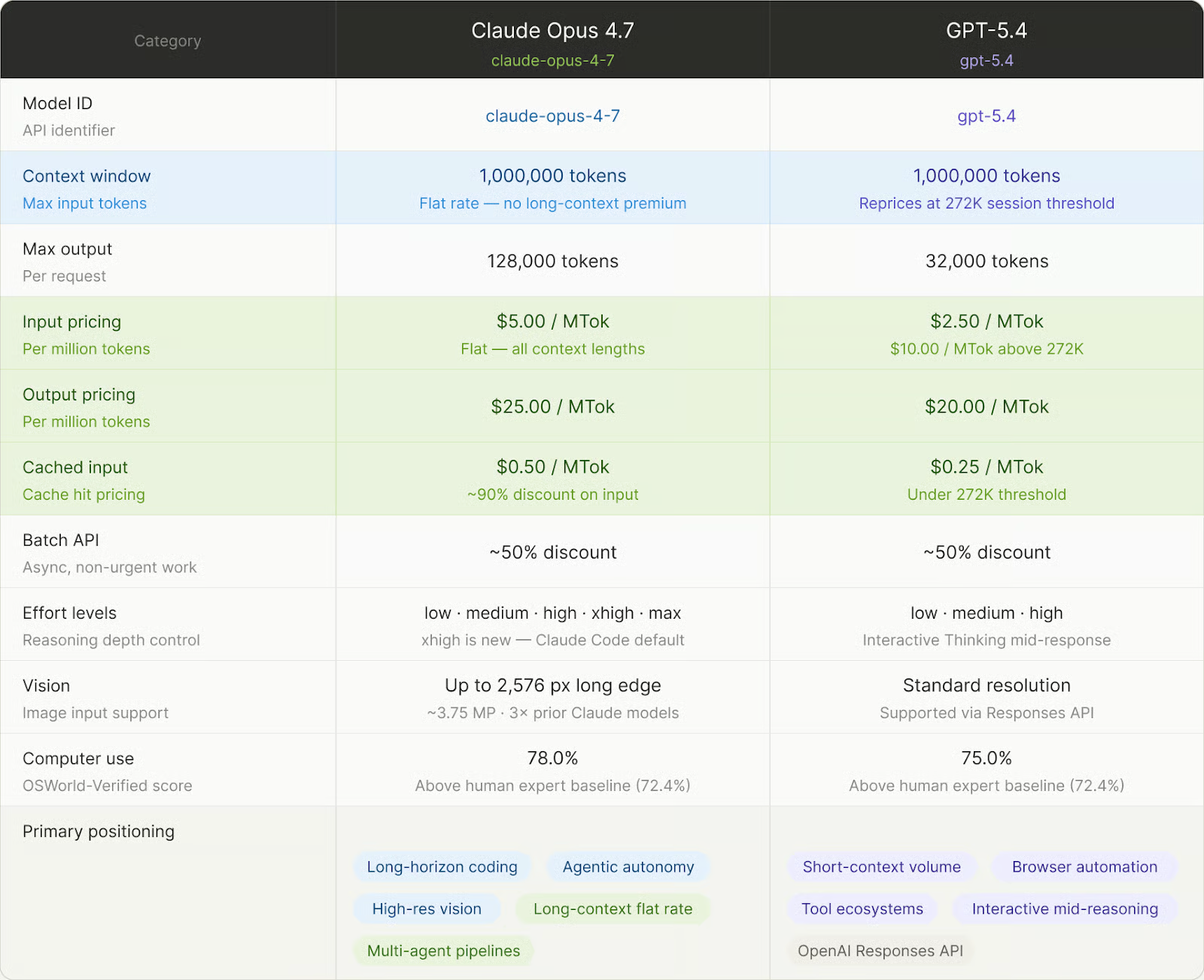

Hier eine schnelle Übersicht, bevor wir in die Details einsteigen. Die interessantesten Nuancen liegen beim Preis – das behandeln wir in einem eigenen Abschnitt.

Zentrale Spezifikationen beider Modelle im Vergleich. Bild: Autor.

Gemini 3.1 Pro ist eine echte Alternative, wenn du primär große Dokumentmengen verarbeitest oder lange juristische Analysen brauchst; es läuft mit niedrigeren Tokenkosten und einem 2M-Kontextfenster. Dieser Artikel bleibt beim Vergleich Anthropic versus OpenAI.

Wie die Anbieter ihre Modelle positionieren, verrät viel über die erwarteten Einsatzszenarien.

Modell-Positionierung und Einsatzfelder

OpenAI positioniert GPT-5.4 als einheitliches Allzweckmodell. Es übernimmt die Coding-Fähigkeiten, die zuvor in GPT-5.3-Codex lagen, sodass Entwickler Anfragen nicht mehr je nach Aufgabentyp an unterschiedliche Endpunkte routen müssen. Ein Modell, ein Endpoint – egal welche Aufgabe.

Anthropics Pitch für Opus 4.7 ist fokussierter: ein Modell, optimiert für „Coding, Agenten, Computerbedienung und Enterprise-Workflows“, mit Langstrecken-Autonomie als Hauptunterscheidungsmerkmal. Du gibst anspruchsvolle Engineering-Arbeit ab und kannst darauf vertrauen, dass es eigene Fehler erkennt, bevor es zurückmeldet. Wichtig: Opus 4.7 ist Anthropics leistungsfähigstes allgemein verfügbares Modell, aber nicht das Topmodell; Claude Mythos Preview liegt darüber und ist auf defensive Cybersicherheits-Workflows beschränkt.

Dieser Unterschied zeigt sich an den Extremen: sehr lange Coding-Sessions oder Pipelines, die Dutzende Tools verketten.

Coding und agentische Workflows

Beim Coding auf Repository-Ebene liegt Opus 4.7 in den von den Anbietern gemeldeten Benchmarks vorn (volle Zahlen unten). Neu ist die Selbstüberprüfung der Ausgaben: Das Modell prüft seine Ergebnisse, bevor es zurückmeldet. Genspark hob zudem die Loop-Resistenz hervor: Opus 4.7 bleibt seltener in Endlosschleifen an einem Problem hängen. Das ist genau der Punkt, der erst wichtig wird, wenn ein Agent 40 Minuten lang auf der Stelle tritt.

GPT-5.4 führt Terminal-Bench 2.0 mit rund sechs Punkten Vorsprung (75,1% gegenüber 69,4%), wobei Anthropic anmerkt, dass GPT-5.4s Wert aus einem selbst berichteten Harness stammt. GPT-5.4 führte außerdem Interactive Thinking ein: Mitten in der Antwort kannst du bei komplexem Reasoning eingreifen und den Plan umleiten, wenn der Weg falsch aussieht. Opus 4.7 hat dafür kein Pendant. Die SWE-bench-Lücke ist real: Sechs Punkte auf einem vom Anbieter ausgewählten Benchmark sind ein nützlicher Hinweis, aber kein Urteil.

Kontextfenster und Long-Context-Arbeit

Beide Modelle unterstützen etwa 1 Mio. Tokens; der Unterschied liegt in der Rechnung. Opus 4.7 berechnet einen einheitlichen Satz über das gesamte Fenster, ein Request mit 900.000 Tokens kostet pro Token also genauso viel wie einer mit 9.000. GPT-5.4 kostet unter 272.000 Input-Tokens 2,50 $ pro Million, aber wenn du diese Schwelle überschreitest, wird die gesamte Sitzung neu bepreist. Die genauen Zahlen kommen im Preisabschnitt.

Es gibt außerdem eine Tokenizer-Besonderheit: Opus 4.7 kann denselben Text in bis zu 35% mehr Tokens abbilden als 4.6. Der Preis pro Token bleibt gleich, aber die effektiven Kosten pro Aufgabe können steigen.

In echten Long-Context-Tests erreichte Opus 4.7 laut Partner-Testing die höchste Konsistenzwertung im Schnitt, gleichauf, über sechs Research-Module, bei 0,715. RAG-Pipelines nahe der 1M-Grenze solltest du auf deiner eigenen Workload testen, bevor du dich auf Anbieter-Benchmarks verlässt.

Toolnutzung, Multimodalität und Umgebungsinteraktion

Auf dem Papier ähneln sich die Tooloberflächen, in der Praxis unterscheiden sie sich stärker. Auf OSWorld-Verified (Desktop-Computerbedienung) führt Opus 4.7 nun mit 78,0% gegenüber 75,0% bei GPT-5.4, beide über dem Human-Expert-Baseline von 72,4%. Im Browser-basierten Webresearch dreht sich das Bild: GPT-5.4 erreicht 89,3% auf BrowseComp (Pro-Variante) gegenüber 79,3% bei Opus 4.7. Eine einzige „Computerbedienung“-Kennzahl verschleiert die Trennung zwischen Desktop und Browser.

Das große multimodale Upgrade von Opus 4.7 ist die Bildauflösung: Bilder bis 2.576 Pixel an der langen Kante, rund 3,75 Megapixel – mehr als das Dreifache früherer Claude-Modelle – werden automatisch mit höherer Treue verarbeitet, ohne zusätzlichen API-Parameter. XBOW, ein Security-Testing-Partner, meldete einen Sprung der visuellen Genauigkeit von 54,5% (Opus 4.6) auf 98,5% (4.7) – der stärkste Zugewinn auf einem Einzel-Benchmark in dieser Release.

Auch die Tool-Architektur unterscheidet sich: GPT-5.4 lädt Tooldefinitionen bei Bedarf, statt alle im Prompt einzubetten – das reduziert Token-Overhead in großen Tool-Ökosystemen. Opus 4.7 denkt sich erst durch das Problem, bevor es Tools aufruft, und verwendet insgesamt weniger Toolcalls; mit höherem Effort-Level steigt die Toolnutzung.

Steuerbarkeit, Verlässlichkeit und Ausgabestil

Opus 4.7 nimmt Anweisungen wörtlich. Es generalisiert nicht von einem Punkt zum anderen und leitet nichts Ungesagtes ab – Prompts für 4.6 können daher unerwartet reagieren; Anthropic empfiehlt Feintuning. Der Vorteil zeigt sich in langen agentischen Loops: Das Engineering-Team von Ramp brauchte deutlich weniger Schritt-für-Schritt-Anleitung in Multi-Tool-Workflows, und Hexagons Tests sahen Opus 4.7 auf niedrigem Effort in etwa auf dem Niveau von Opus 4.6 auf mittlerem Effort.

Anthropic führte außerdem xhigh als neues Effort-Level zwischen high und max ein und hob den Standard von Claude Code auf xhigh für alle Pläne an. Zusammen mit dem neuen Tokenizer können die Output-Tokens in späteren agentischen Zyklen höher ausfallen als bei 4.6; Task Budgets (jetzt in der Public Beta) erlauben, die Ausgaben einer Session zu deckeln. Bei GPT-5.4 dreht sich die Steuerbarkeit um Interactive Thinking (siehe Coding-Abschnitt), und OpenAIs Prompt-Guide betont, dass das Modell mit expliziten Output-Verträgen besonders gut performt.

Noch eine Anmerkung aus Anthropics eigener Safety-Evaluation: Opus 4.7 verbesserte sich bei Ehrlichkeit und Widerstand gegen Prompt-Injection gegenüber 4.6, zeigte aber leichte Rückschritte beim Widerstand gegen zu detaillierte Harm-Reduction-Ratschläge zu kontrollierten Substanzen. Anthropics Gesamturteil: „weitgehend gut ausgerichtet und vertrauenswürdig, aber nicht vollends ideal im Verhalten.“

Opus 4.7 vs. GPT-5.4 in Benchmark-Tests

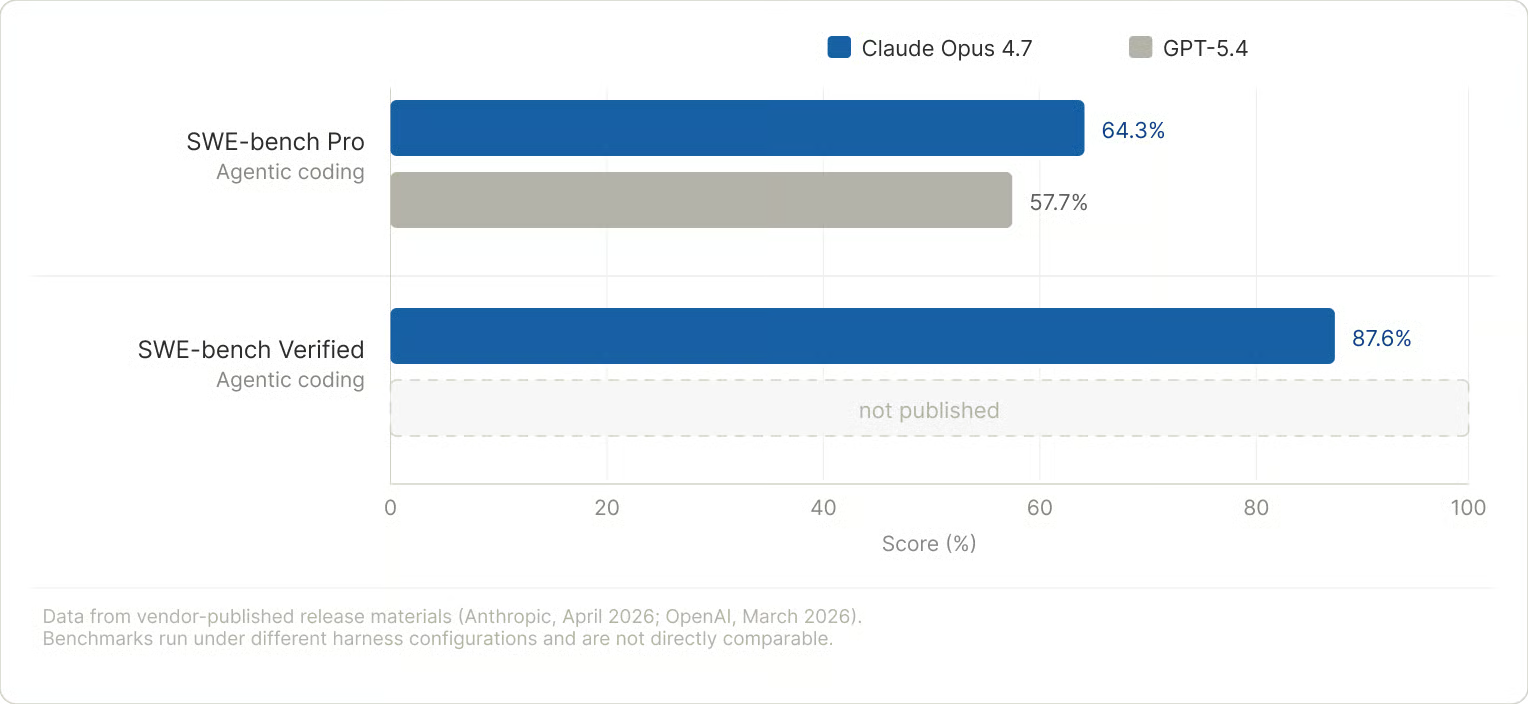

Benchmarks sind wertvoll – aber mit Vorsicht zu genießen. Beide Anbieter wählen Benchmarks, die sie begünstigen, und Vals.ai sowie Artificial Analysis hatten Opus 4.7 zum Schreibzeitpunkt noch nicht erfasst. Teste deine eigenen Aufgaben, bevor du aus diesen Zahlen Schlüsse ziehst.

Coding-Benchmarks

Die Tabelle unten zeigt die relevantesten Coding-Indizien aus den jeweiligen Release-Unterlagen.

|

Benchmark |

Claude Opus 4.7 |

GPT-5.4 |

Hinweise |

|

SWE-bench Pro |

64,3% |

57,7% |

Vom Anbieter gemeldet; unterschiedliche Harness-Konfigurationen |

|

SWE-bench Verified |

87,6% |

Nicht veröffentlicht |

OpenAI hat für diese Variante keinen offiziellen Score veröffentlicht |

|

CursorBench |

~70% |

Nicht veröffentlicht |

Cursor ist Anthropic-Partner; nicht unabhängig |

|

Terminal-Bench 2.0 |

69,4% |

75,1% |

Anthropic weist darauf hin, dass GPT-5.4s Wert aus einem selbst berichteten Harness stammt; zudem Rückgang von GPT-5.3-Codex (77,3%) |

|

GPQA Diamond |

94,2% |

94,4% (Pro) |

Praktisch gleichauf; auf diesem Niveau nahezu gesättigt |

Coding-Benchmarks sprechen klar für Opus 4.7. Bild: Autor.

SWE-bench hat mehrere Varianten, und beide Anbieter heben die jeweils vorteilhafte hervor. Anthropic setzte Memorization-Filter ein und berichtet, dass der Vorsprung von Opus 4.7 auch nach Ausschluss markierter Aufgaben bestehen bleibt. Kontext: Z.ai’s Open-Weight-GLM-5.1 lag Anfang April 2026 kurzzeitig mit 58,4% bei SWE-bench Pro vorne, bevor Opus 4.7 mit 64,3% kam – „State of the Art“-Ansprüche haben hier eine kurze Halbwertszeit.

Agenten- und Computerbedienungs-Benchmarks

Mit dem Release von Opus 4.7 veröffentlichte Anthropic Vergleichswerte für beide Modelle über die meisten agentischen Benchmarks. Das Bild ist gemischt, nicht einseitig.

|

Benchmark |

Claude Opus 4.7 |

GPT-5.4 |

Hinweise |

|

OSWorld-Verified |

78,0% |

75,0% |

Desktop-Computerbedienung; beide über dem Human-Expert-Baseline von 72,4% |

|

BrowseComp |

79,3% |

89,3% (Pro) |

Webrecherche mit Multi-Hop-Reasoning; GPT-5.4 führt |

|

MCP-Atlas |

77,3% |

68,1% |

Skalierte Toolnutzung über viele verbundene Services |

|

WebArena-Verified |

Nicht veröffentlicht |

67,3% |

Autonome Web-Navigation |

|

Toolathlon |

Nicht veröffentlicht |

54,6% |

Mehrschritt-Tool-Orchestrierung; gestiegen von 46,3% auf GPT-5.2 |

|

Finance Agent v1.1 |

64,4% |

61,5% (Pro) |

Langkontext-Finanzresearch-Agent |

|

GDPval-AA |

1753 Elo |

1674 Elo |

Wissensarbeit auf Profiniveau; Opus 4.7 führt mit 79 Elo-Punkten |

|

BigLaw Bench |

90,9% bei hohem Effort |

Nicht veröffentlicht |

Juristische Dokumentaufgaben; Harvey-Partnerbewertung |

Das Bild trennt sich nach Umgebung: Opus 4.7 gewinnt auf Desktop, bei Toolnutzung und Wissensarbeit; GPT-5.4 beim Browsen und Webresearch. Mehrere GPT-5.4-Werte stammen aus der Pro-Variante, die Standardstufe kann niedriger liegen. Unabhängige Runs auf einer gemeinsamen Scaffold sind der nächste Schritt.

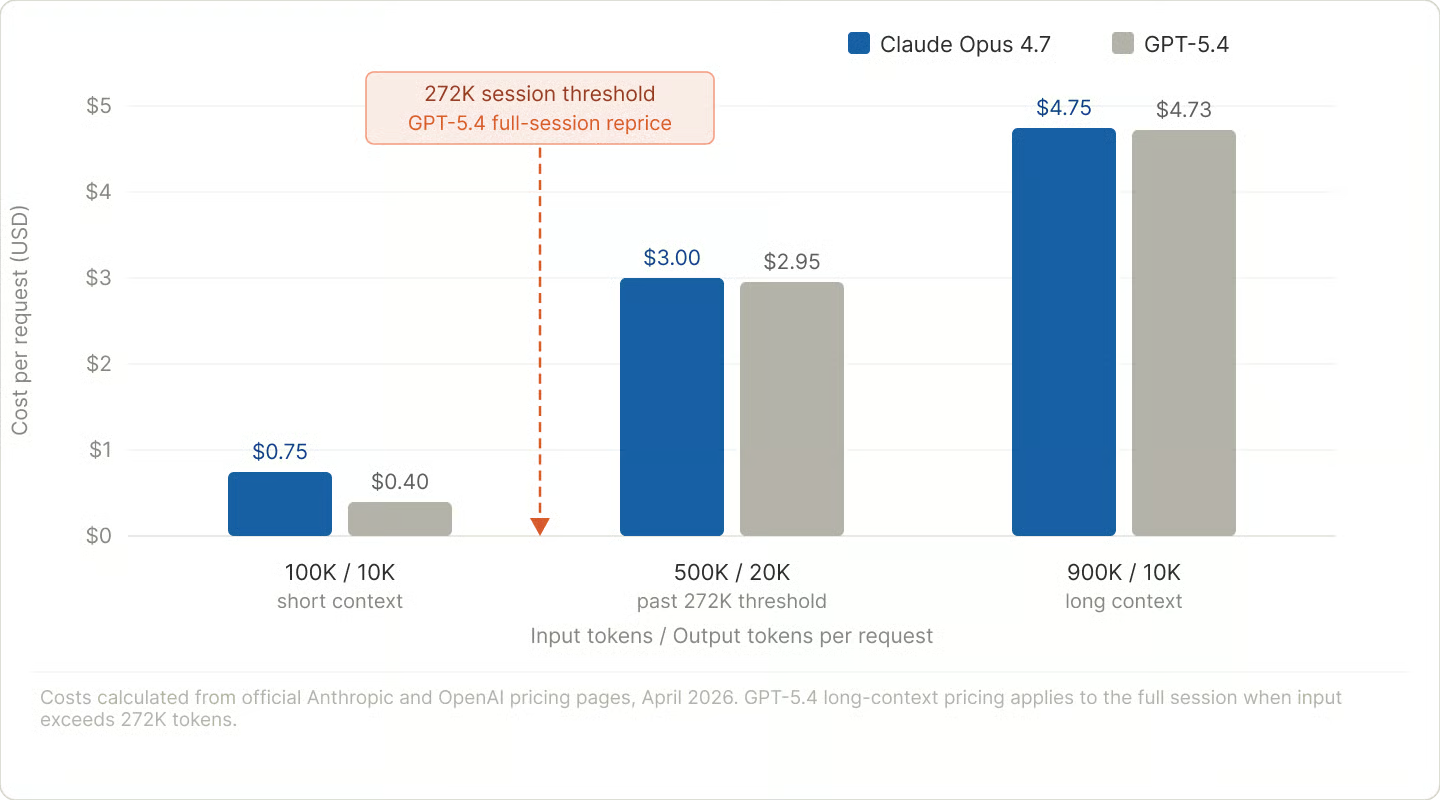

Preise: Opus 4.7 vs. GPT-5.4

Die offiziellen Sätze wirken simpel. Die realen Kosten sind es nicht.

API-Preismodell

Am besten verstehst du den Unterschied an konkreten Szenarien.

Bei 100.000 Input-Tokens und 10.000 Output-Tokens (klar unter GPT-5.4s 272k-Schwelle) kostet GPT-5.4 rund 0,40 $, Opus 4.7 etwa 0,75 $. Fast halb so teuer für kurze bis mittlere Kontexte.

Bei 500.000 Input und 20.000 Output, also über GPT-5.4s Schwelle, liegen beide Modelle preislich fast gleichauf: 2,95 $ vs. 3,00 $. Bei 900.000 Input und 10.000 Output sind sie nahezu identisch.

Die 272k-Neubepreisungsgrenze überrascht viele: Sie gilt für die gesamte Sitzung, nicht nur für die Tokens über der Schwelle. Eine Pipeline, die regelmäßig 280k-Prompts sendet, zahlt in jeder einzelnen Anfrage den vollen Long-Context-Tarif – nicht nur für die zusätzlichen 8k. Es ist eine Sitzungs-Neubepreisung, kein marginaler Aufschlag.

Bei GPT-5.4 steigen die Kosten jenseits von 272k Tokens. Bild: Autor.

Wie im Kontextfenster-Abschnitt erwähnt, kann der neue Tokenizer dieselben Inputs in bis zu 35% mehr Tokens abbilden als bei Opus 4.6. Der Preis pro Token bleibt gleich, aber die realen Kosten pro Aufgabe können steigen. Miss auf echtem Traffic; reine Extrapolation von 4.6 führt zu zu niedrigen Zahlen.

Beide Plattformen bieten rund 90% Rabatt auf gecachte Input-Tokens: 0,50 $ pro Million bei Opus 4.7, 0,25 $ pro Million bei GPT-5.4 unter 272k. Die Batch-APIs bringen für nicht-zeitkritische Jobs zusätzlich etwa 50% Nachlass. Für asynchrone Workloads sind diese Rabatte der größte Hebel auf beiden Plattformen.

Außerdem gibt es Tool-spezifische Kosten, die leicht untergehen. Anthropic berechnet 10 $ pro 1.000 Websuchen, plus die üblichen Tokenkosten für abgerufene Inhalte. OpenAI berechnet Speicher und Abfragen für File Search separat. In toolintensiven Pipelines summiert sich das.

Kosten je nach Workload

Für kurze Kontexte mit hohem Volumen (API-Calls unter 100k Tokens, Batch-Klassifikation, schnelle Iteration) ist GPT-5.4 günstiger. Beim Input kann der Unterschied bis zu Faktor 2 erreichen.

Über 272k Tokens dreht sich der Vorteil um. Der Festpreis von Opus 4.7 ist leichter zu budgetieren und liegt bei den Gesamtkosten fast gleichauf mit GPT-5.4.

Beide Plattformen erheben einen kleinen Aufpreis für Datenresidenz (rund 10% auf beiden Seiten). Auf diesem Niveau ist es eine Compliance-Entscheidung, keine Preisfrage. Für agentische Claude-Code-Sessions sind Task Budgets (siehe Steuerbarkeit) der Haupthebel für den Tokenverbrauch.

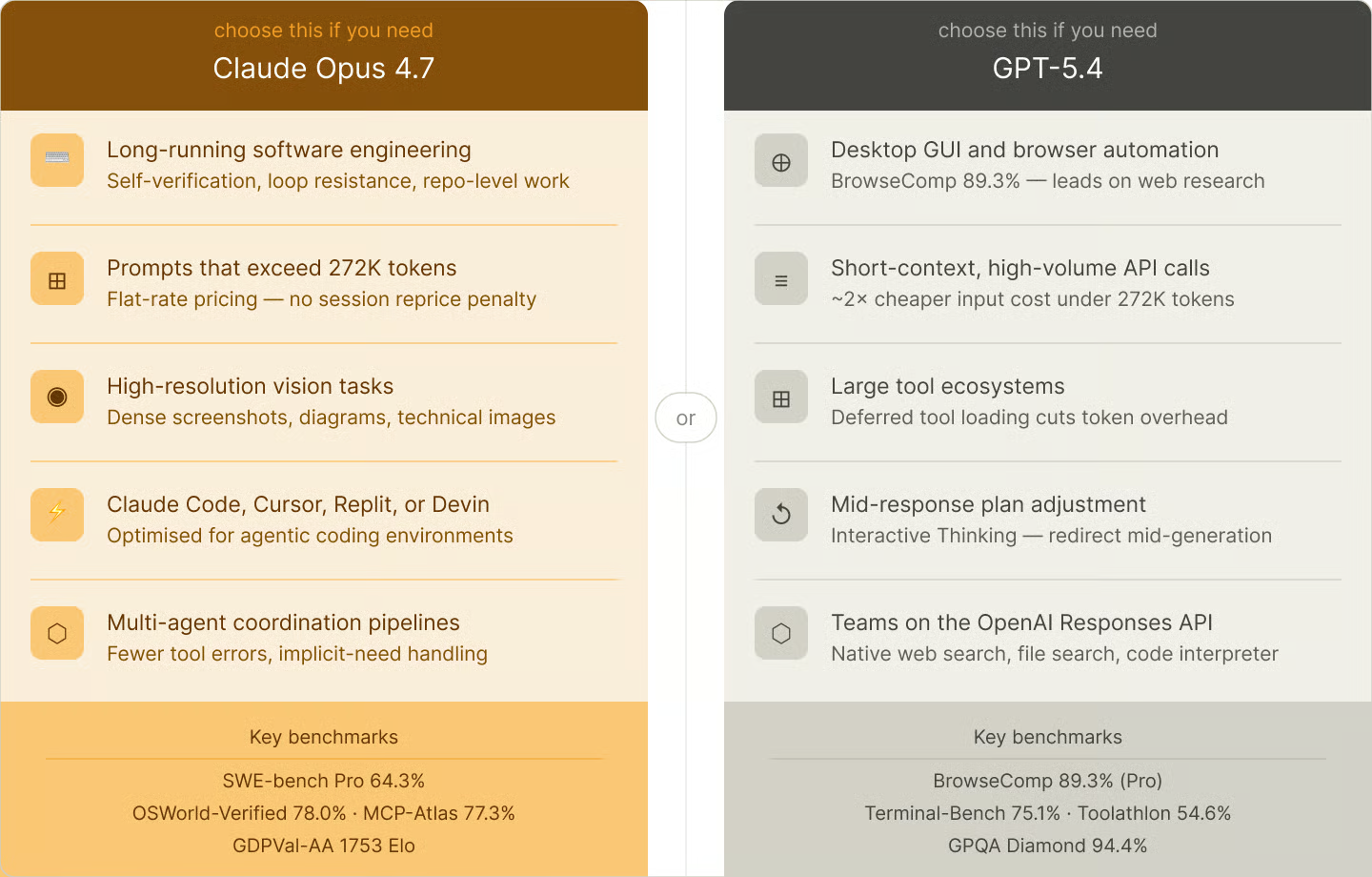

Ist Claude Opus 4.7 besser als GPT-5.4?

Eine allgemeingültige Antwort gibt es nicht – und jeder Artikel, der dir eine verkauft, verkauft dir etwas anderes mit.

Wähle Claude Opus 4.7, wenn du vor allem lange Software-Engineering-Aufgaben abgibst, bei denen Selbstüberprüfung zählt, dein Agent Desktop-Apps bedient, deine Prompts regelmäßig 272k Tokens überschreiten, dein Workflow dichte Screenshots oder technische Diagramme liest oder du bereits Claude Code, Cursor, Replit oder Devin nutzt.

Wähle GPT-5.4, wenn dein Agent intensive Browser-basierte Webrecherche betreibt, deine Workloads unter 272k Tokens bleiben und Kosten relevant sind, du auf ein großes Tool-Ökosystem mit On-Demand-Tool-Loading setzt oder dein Team bereits die OpenAI Responses API nutzt.

Teste beide, wenn sich deine Arbeit zwischen autonomer Webrecherche und Long-Form-Coding aufteilt. Die Stärken von GPT-5.4 im Browser und Terminal passen zu agentischen Web-Workflows; die Loop-Resistenz und der Festpreis von Opus 4.7 sind besser für tiefe Engineering-Sessions und dokumentenlastige Pipelines.

Das richtige Modell für deinen Workflow wählen. Bild: Autor.

Ein Punkt gilt für beide: Batch-API-Rabatte können bei asynchronen Workloads wichtiger sein als die Modellwahl. Und da unabhängige Benchmarks für Opus 4.7 noch nachziehen, ist ein Pilot auf einem echten Ausschnitt deiner Arbeit mehr wert als jeder Vergleichsartikel – einschließlich diesem.

Fazit

Der Unterschied zwischen Claude Opus 4.7 und GPT-5.4 dreht sich weniger darum, welches Modell „klüger“ ist, sondern welche Art Arbeit du damit erledigst.

Anthropic setzt auf Autonomie: ein Modell, das über lange Engineering-Strecken kohärent bleibt und seine Ausgaben prüft. OpenAI setzt auf Breite: mehr Tooloberfläche und günstigere Preise für die Mehrheit der Prompts unter 272k Tokens.

Die Preisgestaltung überrascht Teams am ehesten – und wie oben beschrieben ist die 272k-Sitzungsgrenze die konkrete Falle. Was die monatlichen Kosten stärker bewegt als der Basistarif, sind meist Caching und Batch-API-Rabatte auf beiden Plattformen.

Die Benchmark-Unterschiede liegen im einstelligen Bereich, und beide Anbieter liefern alle paar Wochen neue Modelle. Wähle, was zu deinem Stack passt – und prüfe in einem Monat erneut.

Wenn du tiefer in die praktische Nutzung einsteigen willst, deckt unser Kurs Software Development with Cursor KI-unterstützte Coding-Workflows in der Praxis ab.

Ich bin Dateningenieur und Community-Builder und arbeite mit Datenpipelines, Cloud- und KI-Tools. Außerdem schreibe ich praktische, super nützliche Tutorials für DataCamp und angehende Entwickler.

FAQs

Ist Claude Opus 4.7 außerhalb der Anthropic-API verfügbar?

Ja. Opus 4.7 ist auf Amazon Bedrock, Google Cloud Vertex AI und Microsoft Foundry unter der Modell-ID claude-opus-4-7 verfügbar. Regionale Verfügbarkeit und Preise für gecachte Tokens können je Cloud variieren – prüfe daher die Anbieterseite, wenn Datenresidenz für deinen Einsatz relevant ist.

Muss ich meinen API-Code beim Wechsel von Opus 4.6 auf Opus 4.7 anpassen?

Ja, drei Breaking Changes. Das Setzen von temperature, top_p oder top_k auf Nicht-Standardwerte führt nun zu einem 400-Fehler. Der ältere Parameter budget_tokens schlägt fehl; ersetze ihn durch Thinking im adaptiven Modus. Und der neue Tokenizer erzeugt mehr Tokens pro Anfrage – jede hart kodierte max_tokens -Grenze, die bei 4.6 schon knapp war, kann bei 4.7 die Ausgabe abschneiden. Passe auch deine Prompts an: 4.7 nimmt Anweisungen wörtlicher als 4.6.

Welches Modell ist besser fürs Coding?

Opus 4.7 führt bei SWE-bench Pro (64,3% vs. 57,7%) und SWE-bench Verified (87,6%; OpenAI hat hier keinen Score veröffentlicht). GPT-5.4 führt bei Terminal-Bench 2.0 mit 75,1% gegenüber 69,4%, wobei Anthropic anmerkt, dass dieser Wert aus einem selbst berichteten Harness stammt. Empfehlung: Opus 4.7 für Engineering auf Repository-Ebene, GPT-5.4 für terminallastige Workflows. Unabhängige Auswertungen auf gemeinsamer Scaffold stehen noch aus.

Wie wirkt sich der neue Opus-4.7-Tokenizer auf die Kosten aus?

Die Spanne liegt bei 1,0 bis 1,35x, nicht pauschal 35% – der Effekt hängt also vom Inhalt ab. Weniger offensichtlich: 4.7 „denkt“ auf späteren agentischen Turns bei höherem Effort zudem mehr, wodurch sich die Tokenzahlen über eine Session hinweg addieren. Task Budgets sind die praktische harte Grenze.

Ist GPT-5.4 besser in der Toolnutzung als Claude Opus 4.7?

Auf unterschiedliche Weise. GPT-5.4 bietet eine breitere eingebaute Toolfläche (Websuche, Dateisuche, Code Interpreter, Computerbedienung) mit On-Demand-Tool-Loading. Opus 4.7 setzt auf weniger Toolcalls und denkt upfront. Notion berichtet, dass Opus 4.7 als erstes Modell ihre Tests zu impliziten Bedürfnissen bestand und ein Drittel der Toolfehler von 4.6 produzierte. Auf MCP-Atlas (skalierte Toolnutzung) führt Opus 4.7 mit 77,3% zu 68,1% – eine breitere Toolfläche bedeutet nicht automatisch bessere Orchestrierung.