87% of data science projects never make it into production. Some fail because of a lack of management support, others due to a lack of quality data and proper infrastructure. Organizations looking to reap value from AI and machine learning should be aware of common pitfalls when scoping and managing AI projects. Yet, that is easier said than done. Even those familiar with implementing traditional software projects might still face difficulties implementing AI projects due to their unique nature.

What makes AI projects different from traditional software projects?

In a traditional software project, a programmer explicitly codes the logic of the program, which takes in an input to produce an output. An example is banking software that lowers a borrower's credit score (an output) when they apply for a large loan (an input).

On the other hand, AI projects that leverage machine learning do not require explicitly coded logic. Instead, machine learning algorithms learn the pattern between inputs and outputs from past examples, then use that pattern to predict an output for a new input. An example is predicting the borrower's default risk based on their credit score and the sum of loans taken out.
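To make the contrast between the two approaches above concrete, here is a minimal sketch in Python. The loan threshold, the penalty, the historical data, and every function name are made up for illustration. The "learning" step is deliberately simplified to finding a single credit score cutoff; a real default-risk model would use more inputs (such as the sum of loans) and a proper machine learning library.

```python
from typing import Sequence


# Traditional software: the programmer writes the rule by hand.
def credit_score_after_application(score: int, loan_amount: float) -> int:
    """Lower the score when the loan is large (hypothetical rule and numbers)."""
    large_loan = 50_000  # assumed threshold, chosen by a programmer
    penalty = 20         # assumed deduction, chosen by a programmer
    return score - penalty if loan_amount >= large_loan else score


# Machine learning: the rule (here, one score cutoff) is learned from examples.
def learn_score_cutoff(scores: Sequence[int], defaulted: Sequence[bool]) -> int:
    """Pick the cutoff that misclassifies the fewest past borrowers.

    Borrowers with a score below the cutoff are predicted to default.
    """
    best_cutoff, fewest_errors = min(scores), len(scores) + 1
    for cutoff in sorted(set(scores)):
        errors = sum((s < cutoff) != d for s, d in zip(scores, defaulted))
        if errors < fewest_errors:
            best_cutoff, fewest_errors = cutoff, errors
    return best_cutoff


# Made-up history: credit score and whether the borrower later defaulted.
past_scores = [520, 560, 600, 640, 680, 720, 760]
past_defaults = [True, True, True, False, False, False, False]

cutoff = learn_score_cutoff(past_scores, past_defaults)


def predict_default(score: int) -> bool:
    """Predict default for a new borrower using the learned cutoff."""
    return score < cutoff
```

Note that nobody typed the cutoff into the second program. If the historical data changes, the learned rule changes with it, which is exactly why data quality matters so much more in AI projects.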

This difference can introduce considerable inertia when an organization first implements and manages AI projects. This post therefore aims to provide some guidelines for your next deployable and profitable AI project.

Three characteristics for initial AI projects

These three characteristics set the stage for the success of a company’s first AI projects. Here’s why.

AI projects should be viable

Before starting new AI projects, organizations should assess their own data maturity so that they do not commit resources to non-viable projects. The pillars of data maturity span infrastructure, people, tools, organization, and processes. Asking questions around these pillars can help organizations prioritize the projects that are most viable:

- Does the company have the ability to reliably collect data?

- Does the company have the infrastructure to store and clean the data?

- Does the team have the prerequisite skills to deliver reliable, accurate model results?

- Does the company have the processes, tools, and skills to monitor models in production?

AI projects should be simple

A technically simple project is more manageable for an organization with relatively low data maturity. A simple project that is fast to deliver generates quick wins that build up the team's experience. This builds momentum for future AI projects, spurring a virtuous cycle: excitement grows within the company, which in turn generates buy-in for more technically advanced AI projects. As the company progresses in its AI maturity, it can then move on from low-hanging fruit to more complex projects.

AI projects should be valuable

Harvard Business Review notes that one of the traits of a strong AI project is the value it creates. This value can come in three forms: AI can help make better decisions, reduce costs by automating repetitive manual tasks, or power new products. Such value derived from successful AI projects can help convince stakeholders to invest in the company’s AI capabilities.

Looking for a simple, valuable, and viable project is not trivial. To find projects that fit the bill, Veljko Krunic, the author of Succeeding with AI, proposes the following steps.

- Identify business problems.

- Brainstorm AI solutions to business problems.

- Estimate the technical viability and complexity of the project.

- Determine business metrics to measure the value of the AI project.

- Estimate the business value based on business metrics.

Executing these steps is simple in theory but nuanced in practice. In particular, the choice of business metrics might not be immediately obvious. The next section outlines how business metrics can be selected.

Measure AI projects with business metrics

The business value of an AI project can be measured with appropriate business metrics. Yet, the practice of measuring an AI system’s performance with business metrics can be counterintuitive to many AI practitioners.

Machine learning models are typically measured with evaluation metrics that capture their technical performance on a data set, not business metrics that quantify their value to a business. This is a common practice in academia, where the progress of machine learning algorithms is pegged to quantifiable, objective technical benchmarks. However, AI systems in the real world are tied to tangible results that go beyond accuracy, like revenue, cost, and customer satisfaction.

Thus, there is a need to connect the technical performance of AI systems with business metrics. Often, such links are not immediately clear. Business leaders and AI experts should collaborate and reach a consensus on a business metric that can be used to measure and optimize the performance of an AI system, according to Andrew Ng, the co-founder of Google Brain.

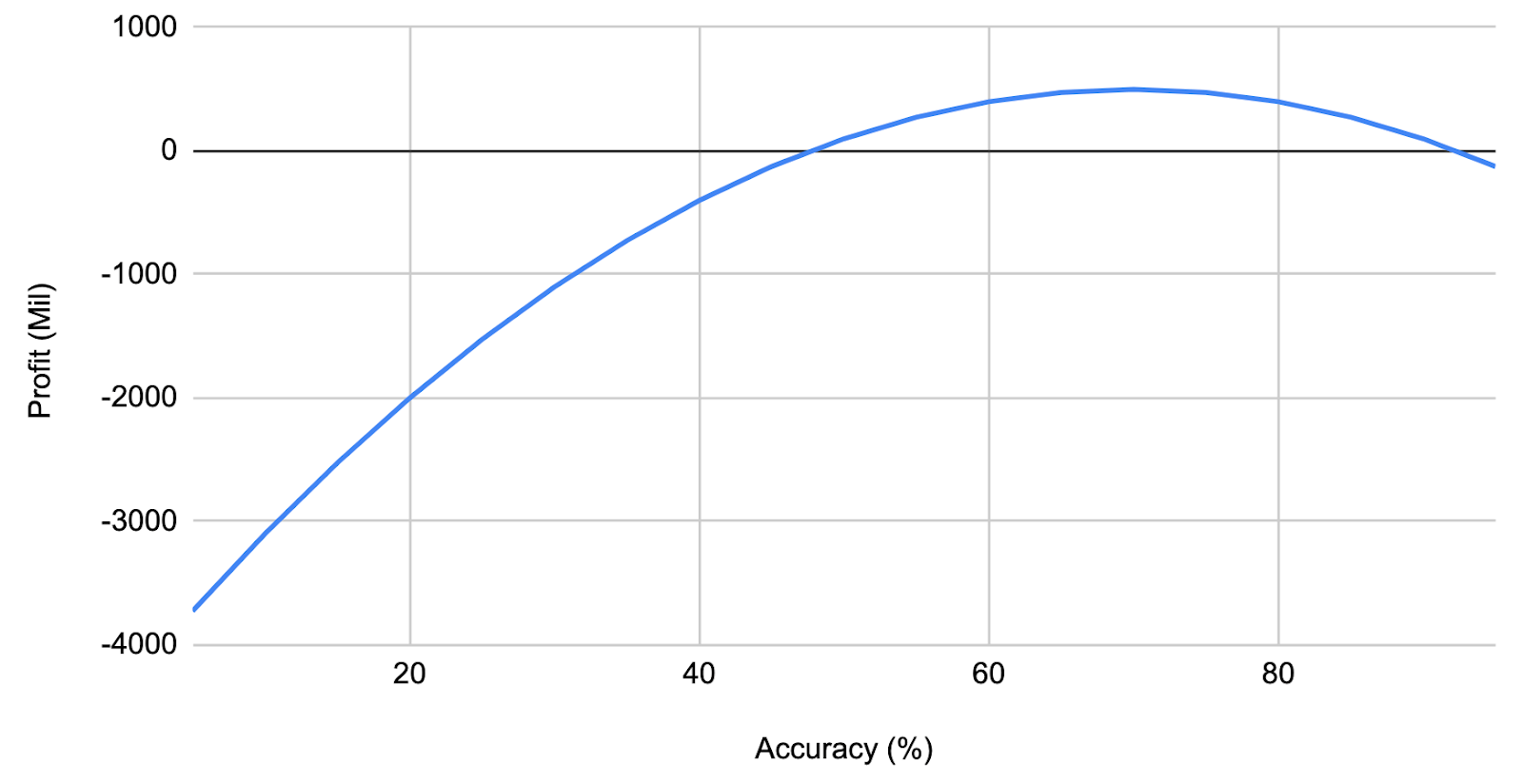

In this process, the use of a profit curve can help establish the relationship between business and technical metrics quantitatively, as proposed in the book Data Science for Business. This translates technical progress to business terms, allowing leaders to answer questions such as ‘how much more revenue is generated with a one percent increase in accuracy?’

Figure 1: An illustration of a profit curve that links the accuracy of an AI system with the resulting profit
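As a rough illustration of how a profit curve can be computed, the sketch below uses one common construction: rank customers by model score, then track cumulative profit as more of the ranked list is targeted. This differs from the accuracy axis shown in Figure 1, and the scores, labels, benefit, and cost are all invented numbers, so treat it as a sketch under those assumptions rather than the book's exact procedure.

```python
from typing import List, Sequence, Tuple


def profit_curve(
    scores: Sequence[float],
    labels: Sequence[bool],
    benefit_tp: float,
    cost_fp: float,
) -> List[Tuple[float, float]]:
    """Return (fraction_targeted, cumulative_profit) points.

    Instances are ranked by model score, highest first. Targeting the top k
    earns benefit_tp for each true positive and loses cost_fp for each
    false positive.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    points = [(0.0, 0.0)]
    profit = 0.0
    for k, (_, is_positive) in enumerate(ranked, start=1):
        profit += benefit_tp if is_positive else -cost_fp
        points.append((k / len(ranked), profit))
    return points


# Hypothetical model scores and outcomes (True = customer responded).
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [True, True, False, True, False, False, False, False]

curve = profit_curve(scores, labels, benefit_tp=50.0, cost_fp=20.0)
best_fraction, best_profit = max(curve, key=lambda point: point[1])
print(f"Target the top {best_fraction:.0%} for a profit of {best_profit:.0f}")
```

With a curve like this, two models can be compared in business terms (peak profit) rather than in technical terms alone, which is the conversation business leaders usually want to have.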

How to communicate the value of an AI project

Communication between machine learning practitioners typically involves technical jargon around machine learning models, and rightfully so. However, such jargon means little to a non-technical audience, which cares much more about the business impact of a model than about a 1% incremental improvement in accuracy.

An AI practitioner can avoid such a trap by keeping the target audience in mind when discussing updates on AI projects. In particular, they should remember that business stakeholders give higher priority to improvements in business metrics than to improvements in technical metrics. They can also benefit from data storytelling techniques to convey how AI projects improve business metrics, whether through a better customer experience, higher retention rates, or lower costs.

Implementing AI systems goes hand in hand with machine learning pipelines

We have seen how an AI system is different from a traditional software system. In particular, an AI system does not require a programmer to explicitly code its logic since it is able to learn patterns from data. For this to happen, one needs to first build the pipeline that takes in the necessary data, transforms it, and feeds it to the AI system.

As such, AI systems consist of not only the machine learning algorithm but also a machine learning (ML) pipeline. This pipeline describes the flow of data from start to finish, including how it is obtained, transformed, used, presented, and monitored.

Machine learning pipelines are a part of a larger software system. The procedures of developing a machine learning pipeline and a typical software system are largely similar. Among other things, the need for competent data engineers, the use of software development processes similar to DevOps, and the importance of security are common themes in developing both AI and software projects.

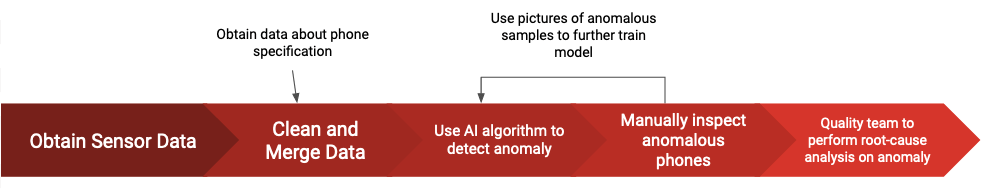

Here is an example of an ML pipeline of a computer vision system that detects anomalies in a phone manufacturing process. This simplified ML pipeline detects phone defects using photos and facilitates the work of a quality assurance team.

Figure 2: A simplified ML pipeline of a phone manufacturer

From this example, we can tell that the design of a machine learning pipeline is highly dependent on the data, the algorithm, and the business use case. There is rarely a one-size-fits-all machine learning pipeline.
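To give a feel for what such a pipeline looks like in code, here is a heavily simplified sketch. It is not a reproduction of Figure 2: the `Photo` type, the two features, the `max_spread` threshold, and the rule standing in for a trained model are all hypothetical. The point is only that data flows through distinct stages (obtain, transform, predict, monitor), each of which can be tested and replaced separately.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Photo:
    phone_id: str
    pixels: List[float]  # stand-in for real image data, values in [0, 1]


def extract_features(photo: Photo) -> Dict[str, float]:
    """Transform step: reduce a photo to two simple features."""
    mean = sum(photo.pixels) / len(photo.pixels)
    spread = max(photo.pixels) - min(photo.pixels)
    return {"mean": mean, "spread": spread}


def is_defective(features: Dict[str, float], max_spread: float = 0.5) -> bool:
    """Prediction step: a fixed rule standing in for a trained vision model."""
    return features["spread"] > max_spread


def run_pipeline(photos: List[Photo]) -> Dict[str, object]:
    """Run every stage and report which phones the QA team should inspect."""
    flagged = [p.phone_id for p in photos if is_defective(extract_features(p))]
    # Monitoring step: the share of flagged phones is worth tracking over time.
    flag_rate = len(flagged) / len(photos) if photos else 0.0
    return {"flagged": flagged, "flag_rate": flag_rate}


# Obtain step: in production these would come from cameras on the line.
batch = [
    Photo("A", [0.5, 0.5, 0.6]),
    Photo("B", [0.1, 0.9, 0.5]),
    Photo("C", [0.4, 0.45, 0.5]),
]
report = run_pipeline(batch)
print(report["flagged"])
```

Swapping the rule in `is_defective` for a real model changes one function, but a change to the photo format would ripple through every stage, which hints at why pipelines are so sensitive to the data they are built around.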

A complete pipeline also includes data collection, feature extraction, data verification, and results monitoring, among others, making it vast and highly complex, according to Google’s highly influential paper. This is why a dedicated machine learning engineering team is usually responsible for building and maintaining the pipeline.

Build robust ML systems from the get-go to reduce costly changes

The deployment of machine learning systems might not go as expected, potentially due to a change in the data, a weakness in the model, or a defect in the pipeline. When this happens, practitioners wanting to improve the system may propose drastic changes to the existing pipeline, only to discover to their dismay that machine learning pipelines quickly become resistant to change once they are implemented.

Veljko Krunic calls this process the “ossification of ML pipelines.” The resistance to change comes from the challenges of modifying the code of such a technically complicated project. Business teams who foresee the ripple effect of pipeline changes on business operations may also object to the changes. In the example in Figure 2 above, if the algorithm’s capacity to automatically flag anomalous phones drops drastically, the replacement headcount required for manual phone inspection might take weeks to be filled.

Making drastic changes to ossified AI projects is costly. A revamp might be necessary when an unscalable proof-of-concept machine learning pipeline is implemented for production. That said, the ossification of ML pipelines is oftentimes unavoidable.

As such, companies managing AI projects should aim to implement the right pipeline from the get-go. Machine Learning Model Operationalization Management (MLOps) is a burgeoning field that helps organizations achieve such a goal. MLOps tools and practices provide an end-to-end machine learning development process to design, build, and manage reproducible, testable, and iterative ML-powered software. This enables data teams to implement continuous integration and continuous delivery for their machine learning pipelines, monitor data drift, and maintain the explainability of their pipelines.
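As one example of what monitoring data drift can mean in practice, the sketch below compares the values a model was trained on with the values it sees in production, using the Population Stability Index (PSI). PSI is our choice for illustration, not something prescribed by MLOps itself; the bin count, the sample data, and the 0.25 alert level (a commonly cited rule of thumb) are assumptions.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 5
) -> float:
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Made-up feature values: what the model was trained on vs. what it sees now.
training_values = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
live_values = [6, 7, 8, 9, 10, 11, 12, 8, 9, 10]

drift = population_stability_index(training_values, live_values)
ALERT_LEVEL = 0.25  # assumed threshold; tune for your own data
if drift > ALERT_LEVEL:
    print(f"PSI = {drift:.2f}: the input data has shifted, review the model")
```

A check like this can run on a schedule alongside the pipeline, so that a shift in the data is caught before it shows up as a drop in business metrics.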

Build your team’s capability to manage AI projects effectively

Managing an AI project is no easy feat. An organization needs more than just the right technical talent to build AI projects. It also needs a pervasive data culture and strong data literacy to catalyze AI adoption. Just as implementing AI projects takes time, programs that teach data literacy are long-term efforts.

That is where DataCamp for Business can help by providing an interactive learning platform for companies that need to upskill and reskill their people on data skills. With topics ranging from data engineering to machine learning, over 1,600 companies trust DataCamp for Business to upskill their talent.

Not sure about your team's data capabilities? Take our data maturity assessment.