
Introduction

Large Language Models (LLMs) are at the forefront of Generative AI, transforming how machines understand and generate human-like text. Models like GPT, Claude, LLaMA, and Mistral drive innovations in chatbots, content creation, and beyond, offering unprecedented capabilities in processing and generating natural language.

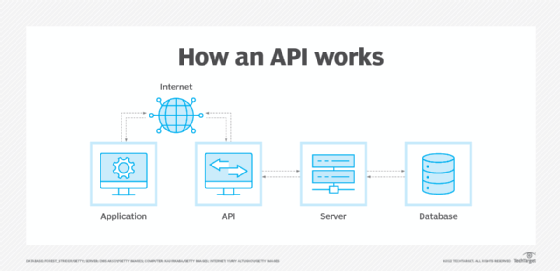

However, the true utility of LLMs extends beyond their raw computational power; accessibility is key. APIs, or Application Programming Interfaces, serve as bridges, allowing diverse applications to tap into the power of LLMs. They enable developers to integrate sophisticated language understanding and generation features into their applications, ranging from simple text analysis to complex dialogue systems.

In this tutorial, we will build a simple Python application using OpenAI's GPT model and serve it through an API endpoint built with the FastAPI framework in Python.

Large Language Models

Large Language Models (LLMs) refer to advanced artificial intelligence systems designed to understand and generate human-like text. These models are trained on vast amounts of data, enabling them to grasp complex patterns, comprehend language nuances, and generate coherent responses.

LLMs can perform various language-related tasks, including translation, text completion, summarization, and even conversational interaction. GPT is one example of an LLM.


LLMs are a type of Generative AI. If you would like to learn about Generative AI and how it can boost your creativity, check out our blog post on Using Generative AI to Boost Your Creativity and our podcast, Inside the Generative AI Revolution.

You can also register for our upcoming course on Large Language Models Concepts.

What is an API?

An Application Programming Interface (API) is the backbone of software communication, enabling different systems, applications, and devices to interact and share data seamlessly. It is a set of rules and protocols that allows software entities to communicate, offering a predefined way for one application to use the capabilities or data of another without needing to understand its internal workings.

APIs are omnipresent in today's digital ecosystem, powering web services, mobile apps, and cloud computing, facilitating inter-application communication and the integration of third-party services. They simplify the development process by providing building blocks developers can use to speed up the creation of new software, enhancing functionality and user experience without starting from scratch.

Through APIs, developers can extend their applications in ways that would be otherwise complex or impossible, making them a critical component in modern software development.

If you are interested in learning about engineering as it relates to deploying machine learning models in real life, check out this comprehensive guide on Machine Learning, Pipelines, Deployment and MLOps. You can also check out the free course Introduction to API on DataCamp.

FastAPI

FastAPI is a Python framework designed for building high-performance APIs. It has risen to prominence thanks to its speed, ease of use, and support for asynchronous programming. FastAPI makes it easy for developers to expose LLM functionality as API endpoints, which simplifies deploying, managing, and scaling AI-powered services.

FastAPI stands out for its performance and efficiency. Built on Starlette and Pydantic, and leveraging modern Python features like type hints and asynchronous programming, it delivers performance that its documentation describes as on par with NodeJS and Go.

This performance edge is crucial for applications requiring real-time data processing and handling concurrent requests, such as those interfacing with LLMs. Its ability to automatically validate incoming data and serialize responses reduces boilerplate, making API development cleaner and more straightforward.

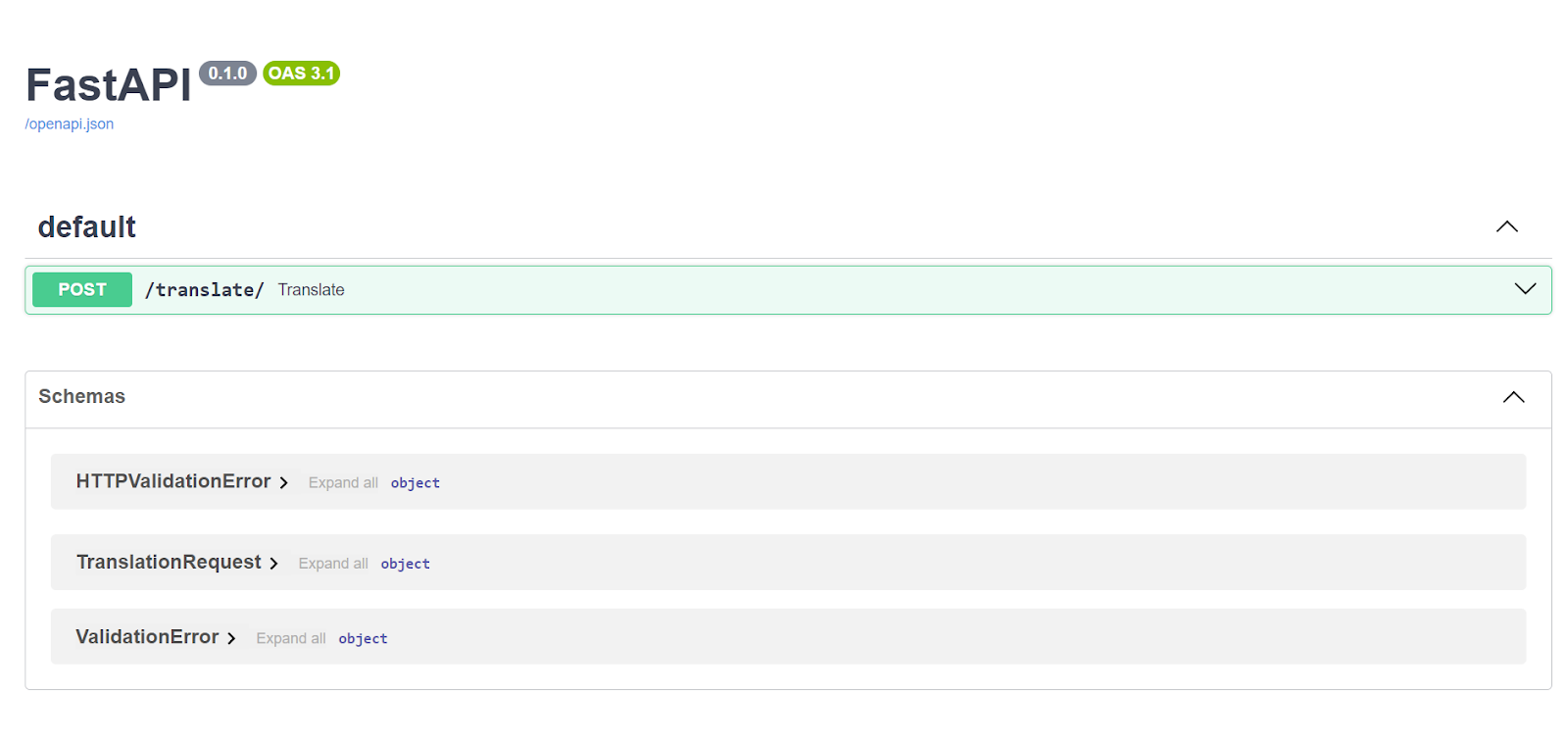

The automatic documentation feature, based on the OpenAPI standard, gives developers and users clear, interactive API documentation (served at /docs by default), further simplifying integration and testing.

Example API Creation:

```python
from typing import Union

from fastapi import FastAPI

app = FastAPI()


@app.get("/")
def read_root():
    return {"Hello": "World"}


@app.get("/items/{item_id}")
def read_item(item_id: int, q: Union[str, None] = None):
    return {"item_id": item_id, "q": q}
```

This code creates a simple web server using FastAPI. The server responds to two types of requests: visiting the homepage ("/") returns a "Hello World" greeting, and visiting a specific item page ("/items/{item_id}") returns the item's ID along with an optional query parameter "q". The item ID is required and must be an integer, while "q" is optional and can be a string or absent.

Run the FastAPI server with `uvicorn main:app --reload` (assuming the code above is saved as `main.py`).

Build an End-to-End Application in Python

The main purpose of this blog is to show how you can quickly build REST API endpoints for your LLM applications. We will create a simple Python application that uses the OpenAI GPT-4 API to translate English text into French. The application consists of a single function, translate_text:

```python
import os

from openai import OpenAI

# Set your API key (replace "..." with your key, or export OPENAI_API_KEY in your shell instead)
os.environ["OPENAI_API_KEY"] = "..."

# Initialize the OpenAI client; it reads the key from the OPENAI_API_KEY environment variable
client = OpenAI()


def translate_text(input_str):
    # Send a system prompt (the translator role) plus the user's text to the chat model
    completion = client.chat.completions.create(
        model="gpt-4-0125-preview",
        messages=[
            {
                "role": "system",
                "content": "You are an expert translator who translates text from english to french and only return translated text",
            },
            {"role": "user", "content": input_str},
        ],
    )
    # Return only the text of the model's first reply
    return completion.choices[0].message.content
```

This function takes one input from the user and passes it to the OpenAI GPT-4 model. A system prompt defines the context (translate English into French and return only the translation), and the user prompt is the text received in input_str.
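`translate_text` depends only on the shape of the request it sends and on the `completion.choices[0].message.content` path it reads back. If you want to check that plumbing without an API key or network access, one option is to swap in a stand-in client. The `FakeCompletions` class below is hypothetical (it is not part of the OpenAI SDK): it records the request and returns a canned reply shaped like the SDK's response.

```python
from types import SimpleNamespace


class FakeCompletions:
    """Hypothetical stand-in for client.chat.completions (not part of the OpenAI SDK)."""

    def __init__(self, reply):
        self.reply = reply
        self.last_request = None

    def create(self, model, messages):
        # Record what would have been sent to the API
        self.last_request = {"model": model, "messages": messages}
        # Mimic the attribute path completion.choices[0].message.content
        message = SimpleNamespace(content=self.reply)
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])


fake = FakeCompletions(reply="ceci est un test")
client = SimpleNamespace(chat=SimpleNamespace(completions=fake))


def translate_text(input_str):
    # Same logic as above; 'client' is now the stand-in
    completion = client.chat.completions.create(
        model="gpt-4-0125-preview",
        messages=[
            {
                "role": "system",
                "content": "You are an expert translator who translates text from english to french and only return translated text",
            },
            {"role": "user", "content": input_str},
        ],
    )
    return completion.choices[0].message.content


result = translate_text("this is a test string to translate")
```

Because the stand-in always returns a fixed string, this checks only the request and response plumbing, not translation quality.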

You can test this function by running the following. You should get the French text back.

```python
# Test the function
print(translate_text("this is a test string to translate"))
```

If you're considering sharing this application with others, simply sending the Python file for them to run on their command line could work for some users, especially those familiar with Python and command-line tools.

However, this approach has limitations. Not all users are comfortable using the command line, and even for those who are, running a Python script requires a Python environment with all the necessary dependencies installed. This can be cumbersome and creates a barrier that keeps users from easily accessing your translation application's functionality.

This is where wrapping your application with FastAPI to expose it as a REST API endpoint becomes a powerful solution. By doing so, you create an accessible interface to your application that can be reached over the network without requiring users to run Python scripts themselves. They can interact with your application through simple web requests, which can be made from a wide variety of platforms and programming languages, not just Python.

Creating a REST API with FastAPI automates the process of receiving translation requests and sending back translations. Users can send text to be translated through a web interface or a programmatic API call, making it much easier and more flexible for different use cases.

To convert this simple function into an API endpoint using FastAPI, we will modify our code as follows:

from fastapi import FastAPI, HTTPException

from pydantic import BaseModel

import os

os.environ["OPENAI_API_KEY"] = "..."

# Initialize OpenAI client with your API key

client = OpenAI()

# Initialize FastAPI client

app = FastAPI()

# Create class with pydantic BaseModel

class TranslationRequest(BaseModel):

input_str: str

def translate_text(input_str):

completion = client.chat.completions.create(

model="gpt-4-0125-preview",

messages=[

{

"role": "system",

"content": "You are an expert translator who translates text from english to french and only return translated text",

},

{"role": "user", "content": input_str},

],

)

return completion.choices[0].message.content

@app.post("/translate/") # This line decorates 'translate' as a POST endpoint

async def translate(request: TranslationRequest):

try:

# Call your translation function

translated_text = translate_text(request.input_str)

return {"translated_text": translated_text}

except Exception as e:

# Handle exceptions or errors during translation

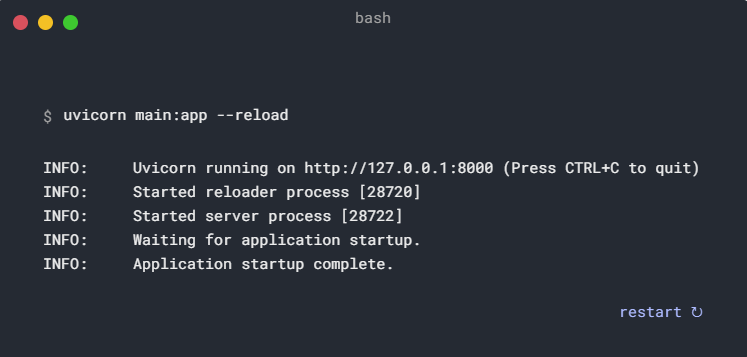

raise HTTPException(status_code=500, detail=str(e))To run and test this API locally simply run the following command:

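```shell
uvicorn main:app --reload
```

Here, `main` refers to main.py, the file containing your code, and `app` refers to the FastAPI application instance created in that file by `app = FastAPI()`. The `--reload` flag restarts the server automatically whenever you change the code, which is convenient during development.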

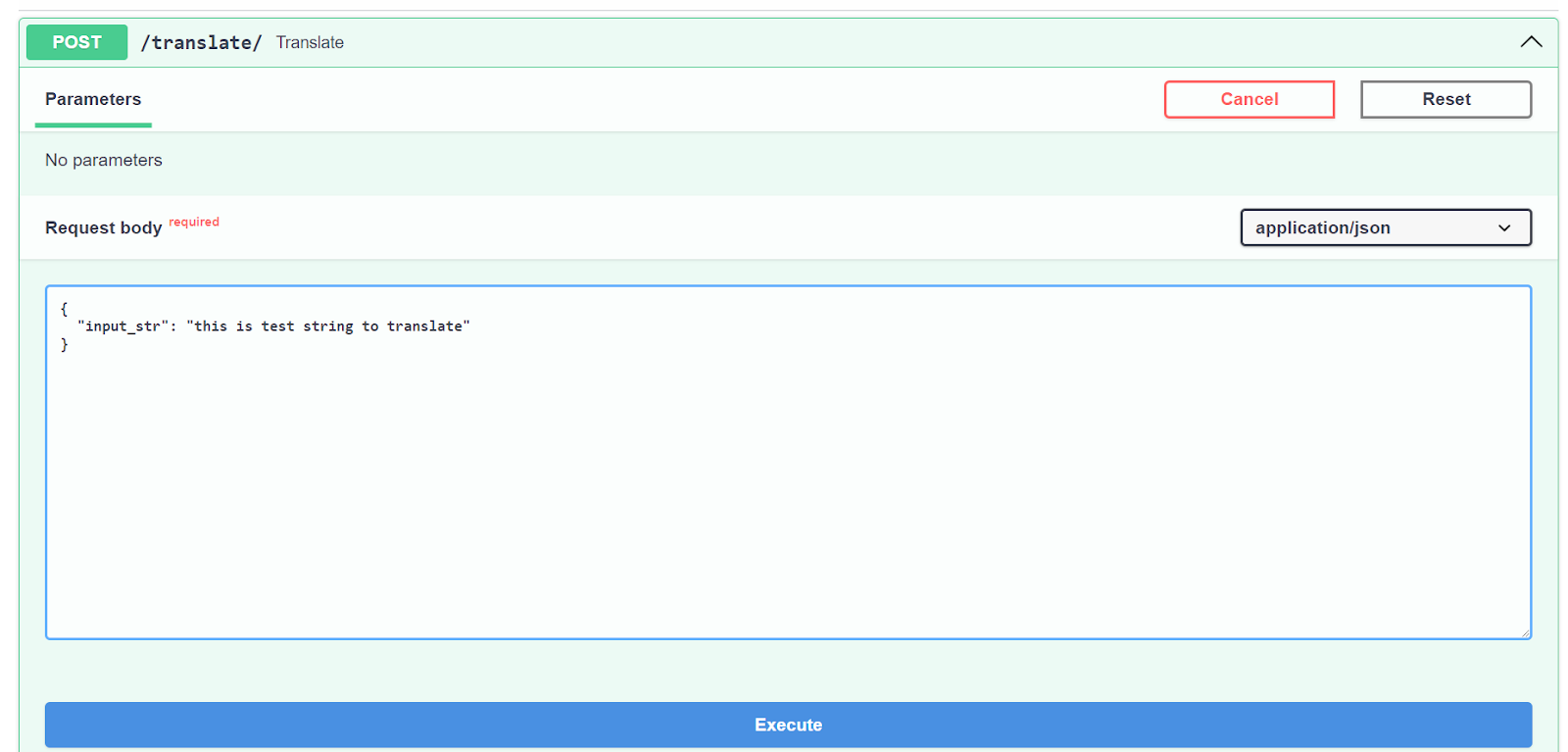

Navigate to localhost:8000/docs to see a UI interface which should look like this:

Expand the POST /translate/ endpoint, click "Try it out", enter your text string in the request body, and click "Execute".

You should see the curl command that was sent to the API, the request URL (your localhost at this point), and the server response. Code 200 means success; next to it, you can see the French translation.
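The curl command shown in the UI is just an HTTP POST with a JSON body, so any language can make the same call. Here is a minimal Python sketch using only the standard library, assuming the server from this tutorial is running at http://localhost:8000. `build_translate_request` and `translate_via_api` are hypothetical helper names; nothing is sent until `urlopen` is called.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # assumption: the local uvicorn server from this tutorial


def build_translate_request(text, base_url=BASE_URL):
    """Hypothetical helper: build a POST /translate/ request with a JSON body."""
    body = json.dumps({"input_str": text}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/translate/",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def translate_via_api(text, base_url=BASE_URL):
    # Send the request and read "translated_text" from the JSON response
    with urllib.request.urlopen(build_translate_request(text, base_url)) as resp:
        return json.loads(resp.read().decode("utf-8"))["translated_text"]
```

With the server running, `translate_via_api("this is a test string to translate")` should return the French translation, just like the Execute button in the docs UI.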

Conclusion

This blog has walked through setting up an API endpoint with FastAPI to serve applications powered by Large Language Models like GPT. With its speed and ease of use, FastAPI offers a straightforward way to build high-performance APIs, making it a strong choice for developers looking to integrate LLMs into their projects.

If you want to discover the full potential of LLMs with a unique conceptual course covering LLM applications, training methodologies, ethical considerations, and the latest research, check out this free course on Mastering Large Language Models (LLMs) on DataCamp.

Are you looking to broaden your skill set and toolkit? Learn about the 21 essential Python tools for software development, web scraping and development, data analysis and visualization, and machine learning.