Amid U.S.-China tech tensions and a surge in affordable Chinese models like Zhipu’s GLM-5 and Moonshot’s Kimi K2.5, a new player is drawing attention for SOTA benchmark results at a fraction of the expected cost.

The MiniMax M2.5 model is significant partly because of its price. It is part of the general industry shift towards “intelligence too cheap to meter,” a reframing of an old phrase about nuclear energy.

MiniMax M2.5 is available in two variants: the full M2.5 at 50 tokens/second and the Lightning variant at what I think is a rather striking 100 tokens/second. Apart from speed, both variants have identical capabilities.

In this article, I will explain what M2.5 is, walk through its key features, help you come up with ideas for your own testing, and give you some honest comparisons with the latest GPT and Gemini models.

What Is MiniMax M2.5?

MiniMax M2.5 is a very recent open-weight large language model from the Shanghai-based company MiniMax AI. It was announced on February 12, 2026, just weeks after the company’s Hong Kong IPO.

MiniMax M2.5 is designed for real-world productivity, with a focus on coding, agentic tool use, web search, and office automation. It was trained via reinforcement learning in over 200k complex environments, which is meant to make it robust enough to plan like a software architect and execute autonomously.

Key Features of MiniMax M2.5

The new model has several features that set it apart from other LLMs. In this section, I will cover a few of them.

Multilingual coding support

MiniMax M2.5 was trained to handle more than 10 programming languages, including Python, Rust, Java, and Go, across different full-stack development environments. Its skills span from system design to code review, and cover web, Android, iOS, and Windows applications.

Agentic tool use and search

The M2.5 model scores 76.9% on BFCL for multi-turn function calling and 76.3% on BrowseComp for web navigation, while using 20% fewer rounds than its predecessors through more efficient task decomposition.

Office productivity integration

In addition to agentic tool use and search, the M2.5 model generates formatted outputs for Word, PowerPoint, and Excel, such as financial models, with a 59% win rate over mainstream models on the GDPval-MM benchmark.

Efficiency and RL training

Powered by the Forge RL framework and the CISPO algorithm, it completes tasks 37% faster than M2.1 while consuming fewer tokens: 3.52M per SWE-Bench task.

Hands-on With MiniMax M2.5

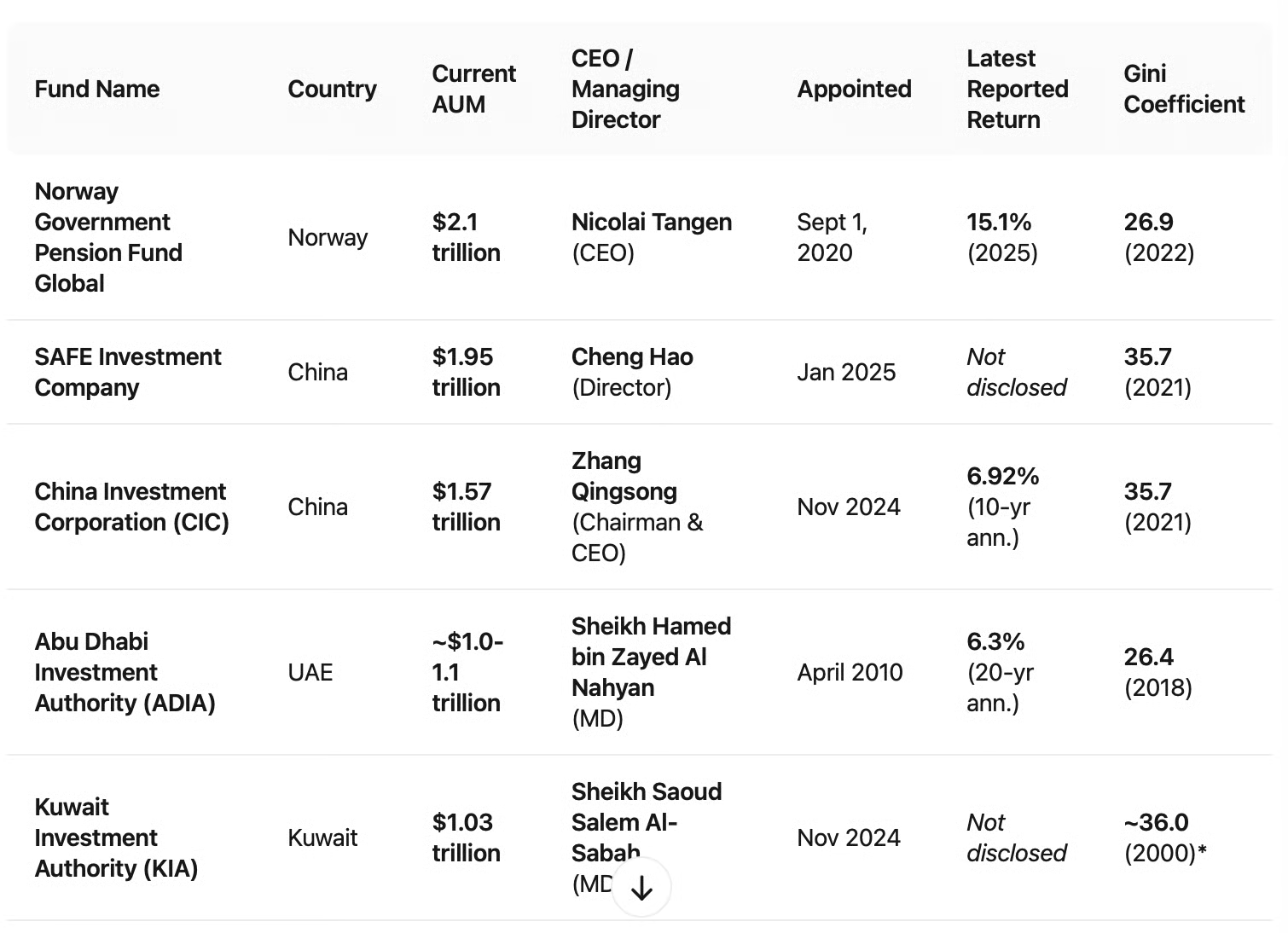

I decided to give MiniMax M2.5 a test of my own. Inspired by MiniMax M2.5’s big claims around agentic tool use and search, as well as its focus on office productivity, I gave it a research task that I expect could easily show up in someone’s work.

Here, I tried the following prompt to combine information about large sovereign wealth funds. To compile this table, MiniMax M2.5 would have to synthesize research from different places. Notice that I also asked it to find the country’s Gini coefficient, which I highly doubted would be on any of the sovereign wealth funds’ websites.

I spot-checked the results by visiting the respective websites.

For each of the five largest sovereign wealth funds by AUM, find the following: the fund's name and country, current AUM, current CEO or equivalent, when that person took the role, the fund's reported return in their most recent annual report, and the Gini coefficient of the country. Compile into a table with sources for each figure.

This was just a small test, but a very practical one. MiniMax M2.5 did a great job synthesizing research from multiple sources into an accurate table.

Next, I looked closely at independent demos and benchmarks to study other showcases.

Full-stack web development

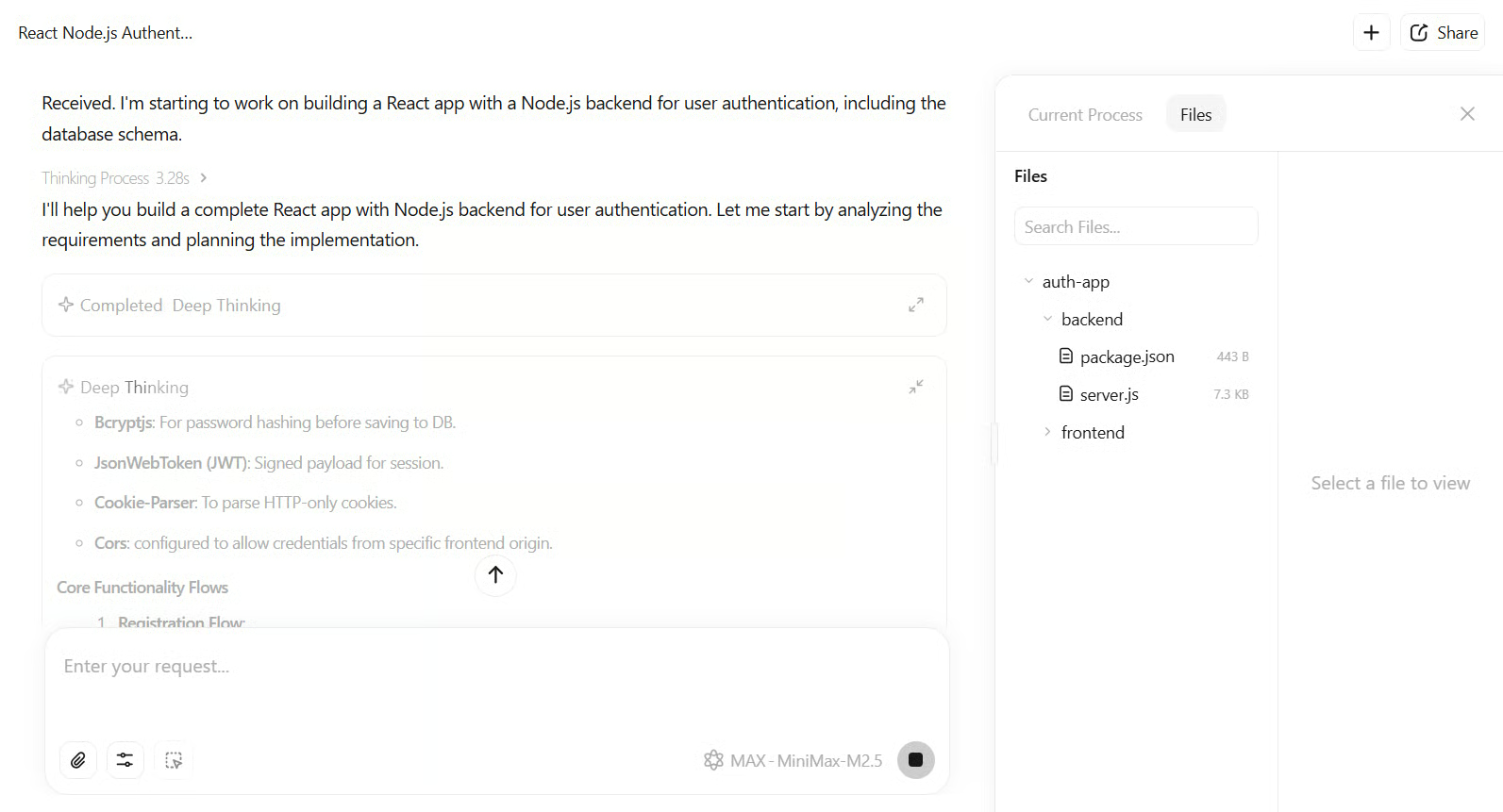

You could also test M2.5 on software engineering tasks to see how it plans and executes within a closed scope. I suggest you enter this prompt:

Build a React app with Node.js backend for user authentication, including database schema.

M2.5 outputs a complete spec-first plan with UI wireframes and API endpoints, and then adds 1200+ lines of TypeScript/JavaScript code. The tests passed on the first run in 22 minutes, which makes it faster than Claude Opus 4.6’s average. The result is a functional application with JWT auth and MongoDB integration.

The figure above shows this example and prompt in the MiniMax Agent environment using the M2.5 model.

Excel financial modeling

To test its document generation capabilities, you could try a prompt like this one:

Create an Excel model for startup valuation using DCF, with sensitivity analysis

M2.5 is built to output formatted files with working formulas and charts. This aligns with its strong GDPval-MM benchmark scores. (I’ll mention the benchmarks more in a later section.)
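If you want to sanity-check the numbers in a workbook the model produces, a small independent calculation helps. The sketch below is my own minimal DCF with a two-way sensitivity grid, not something taken from M2.5's output. The cash flows, discount rates, and growth rates are made-up illustrative inputs, and the terminal value uses the standard Gordon growth formula.

```python
def dcf_value(cash_flows, discount_rate, terminal_growth):
    """Present value of yearly cash flows plus a Gordon-growth terminal value."""
    if discount_rate <= terminal_growth:
        raise ValueError("discount_rate must exceed terminal_growth")
    # Discount each explicit cash flow back to today (year 1, 2, ...).
    pv_flows = sum(
        cf / (1 + discount_rate) ** year
        for year, cf in enumerate(cash_flows, start=1)
    )
    # Terminal value at the end of the last forecast year, then discounted.
    terminal = cash_flows[-1] * (1 + terminal_growth) / (discount_rate - terminal_growth)
    pv_terminal = terminal / (1 + discount_rate) ** len(cash_flows)
    return pv_flows + pv_terminal


def sensitivity_grid(cash_flows, discount_rates, growth_rates):
    """Valuation for every (discount rate, terminal growth) pair."""
    return {
        (r, g): dcf_value(cash_flows, r, g)
        for r in discount_rates
        for g in growth_rates
    }


# Hypothetical startup forecast, in thousands of dollars per year.
forecast = [-200.0, 50.0, 300.0, 600.0, 900.0]
grid = sensitivity_grid(forecast, [0.10, 0.12, 0.15], [0.02, 0.03])
for (rate, growth), value in sorted(grid.items()):
    print(f"discount={rate:.0%} growth={growth:.0%} value={value:,.0f}")
```

Comparing a grid like this against the sensitivity table in the generated spreadsheet is a quick way to catch broken formulas.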

MiniMax put genuine effort into office productivity skills in order to do useful things like build financial models in Excel. The team worked directly with finance, law, and social science experts to build training data that reflects real industry standards instead of more generic templates.

A good follow-up test is to give it a deliberately ambiguous or underspecified brief. Think about a prompt a junior analyst might receive from a partner. See whether it asks clarifying questions or makes reasonable assumptions and flags them.

SVG generation and reasoning

What's worth watching for here is how M2.5 starts the task.

One of the more interesting things to come out of the release notes is that spec-writing behavior emerged organically during training. As I mentioned, before generating any code, M2.5 tends to decompose the project and plan out structure, UI design, and API endpoints from a software architect's perspective.

Whether that planning actually produces better output is something I am continuing to evaluate for myself.

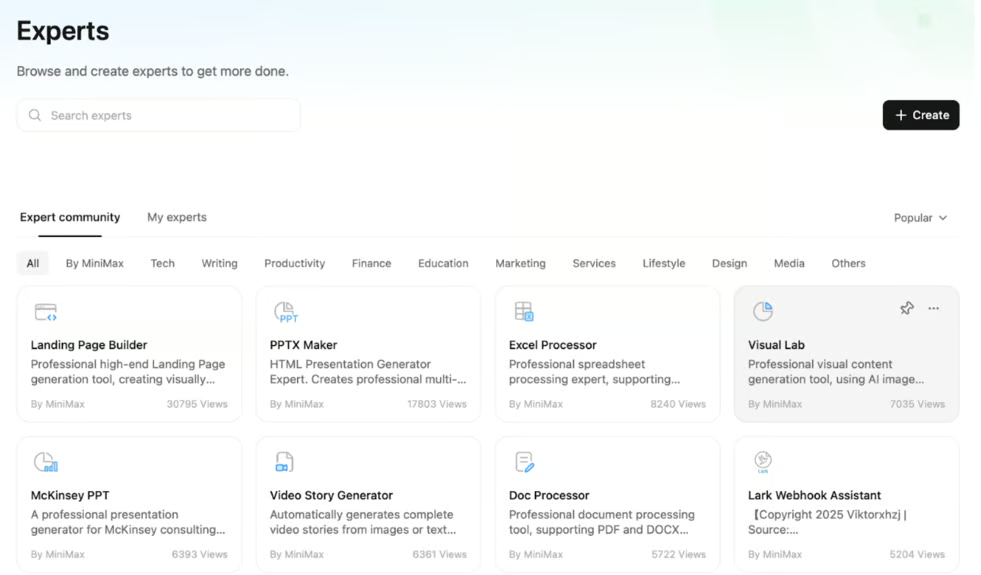

MiniMax M2.5 and the MiniMax Agent Platform

MiniMax ships M2.5 inside an agent platform that gives non-developers immediate access to its capabilities through what it calls pre-built experts. These are specialized agents configured for specific tasks. The platform works like an app store: you browse experts by category, pick one, and get a workflow-ready agent.

The most-used experts as of launch are Landing Page Builder, PPTX Maker, Excel Processor, and some others that seem genuinely useful. Taken together, you can see how MiniMax is getting its reputation for office productivity.

What's maybe even more interesting is the community layer. Users can build and publish their own experts. In fact, apparently over 10,000 have already been created.

MiniMax M2.5 Benchmarks

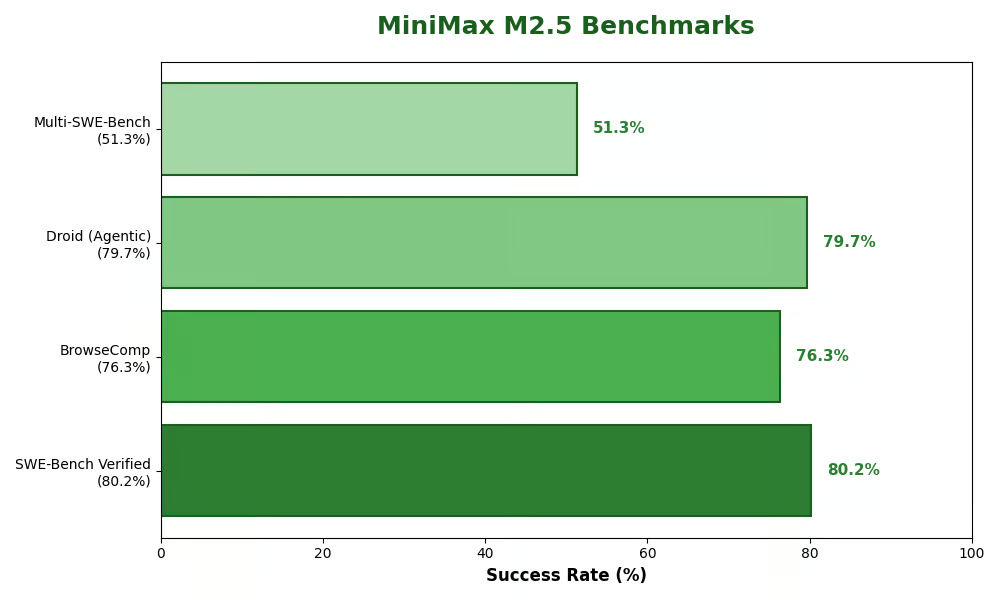

M2.5 puts up strong numbers across the benchmarks that matter most for coding and agentic work. It scored 80.2% on SWE-Bench Verified — a real-world GitHub issue resolution test — while completing tasks 37% faster than its predecessor M2.1.

On multilingual and cross-repository tasks, it ranked first on Multi-SWE-Bench with 51.3%. At launch, its 76.3% on BrowseComp placed it among the top-performing models for web search and research tasks, but the leaderboard has since shifted. Gemini 3.1 Pro now leads at 85.9%, followed by Claude Opus 4.6 at 84.0% and GPT-5.2 Pro at 77.9%. Both Gemini 3.1 Pro and Opus 4.6 launched within a week of M2.5, which is a pretty good illustration of how quickly the frontier moves right now.

Rounding things out, it scored 79.7% on the Droid benchmark for agentic coding and currently sits 4th on the OpenHands Index for long-running tasks like app-building.

The image above makes the M2.5 results easier to see at a glance.

How Can I Access MiniMax M2.5?

There are three main ways to use this model:

- Open weights that you can download from Hugging Face and deploy locally with vLLM, SGLang, Ollama, or others. GGUF quantizations are available for consumer hardware.

- API access from the official website at $0.3/M input tokens and $2.4/M output tokens for Lightning. At those rates, running the model nonstop at 100 tokens/sec costs about $1/hour. Caching is supported, and enterprise plans are available for high-volume use.

- Integrations with tools and platforms such as VS Code, Cline CLI, and Fireworks AI, with day-one support.
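For the local route, servers such as vLLM expose an OpenAI-compatible HTTP API, so a request can be built with the standard library alone. This is a minimal sketch: the URL assumes a server on vLLM's default local port, and the model identifier is a placeholder I have not verified, so substitute whatever name your server reports.

```python
import json
import urllib.request

# Assumptions: an OpenAI-compatible server is already running locally,
# and MODEL_ID matches the name it serves. Both values are placeholders.
BASE_URL = "http://localhost:8000/v1"
MODEL_ID = "MiniMaxAI/MiniMax-M2.5"  # hypothetical identifier


def build_chat_request(prompt, max_tokens=512):
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=120):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Summarize the trade-offs of JWT auth in two sentences.")
print(request.full_url)
# With a server running, call: print(send(request))
```

Building the request separately from sending it makes it easy to inspect the payload before pointing it at a real server.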

The weights are free to download and run without usage limits, while the API is billed by usage.
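The roughly $1/hour figure can be checked with simple arithmetic. This sketch uses the Lightning prices and speed quoted above. The split between output and input tokens is my assumption, since the hourly figure is not broken down.

```python
INPUT_PRICE_PER_M = 0.30    # dollars per million input tokens (Lightning)
OUTPUT_PRICE_PER_M = 2.40   # dollars per million output tokens (Lightning)
TOKENS_PER_SECOND = 100     # Lightning generation speed


def hourly_output_cost(tokens_per_second, price_per_million):
    """Cost of generating output tokens nonstop for one hour."""
    tokens_per_hour = tokens_per_second * 3600
    return tokens_per_hour / 1_000_000 * price_per_million


output_cost = hourly_output_cost(TOKENS_PER_SECOND, OUTPUT_PRICE_PER_M)

# Assumption: whatever is left of a $1.00 hourly budget goes to input tokens.
input_budget_tokens = (1.00 - output_cost) / INPUT_PRICE_PER_M * 1_000_000

print(f"Output tokens per hour: {TOKENS_PER_SECOND * 3600:,}")
print(f"Output cost per hour:   ${output_cost:.3f}")
print(f"Input tokens that fit in the remainder: {input_budget_tokens:,.0f}")
```

Output alone comes to about $0.86 per hour, so the $1 figure leaves room for roughly 450k input tokens per hour at the listed input price.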

MiniMax M2.5 vs. Competitors

The M2.5 model targets coding and agentic niches while undercutting competitors on cost at comparable performance. Here’s a comparison:

| Feature/Benchmark | MiniMax M2.5 | Claude Opus 4.6 | GPT-5.2 | Gemini 3 Pro |
|---|---|---|---|---|
| SWE-Bench Verified | 80.2% | 80.8% | 80% | 78% |
| Multi-SWE | 51.3% | 50.3% | 49.1% | 42.7% |
| BrowseComp (Search) | 76.3% (1st open-weight) | 84.0% | 65.8% | 73.2% |
| Output Cost (/M tokens) | $2.4 | $25 | $14 | $15 |
| Speed (tokens/sec) | 100 | 60 | 80 | 70 |
| Open Weights? | Yes (MIT) | No | No | No |
| Multilingual Coding | 10+ langs | English-heavy | Strong | Moderate |

This data comes from official evaluations, Artificial Analysis, and the release notes of other recent models. M2.5 wins on efficiency and openness, but closed models have the edge in broad knowledge.
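To put the price and speed rows in perspective, here is a small calculation that uses only the numbers from the table. The 1M-token workload is a hypothetical example, and the speeds are the table's listed values rather than my own measurements.

```python
# (output price in dollars per million tokens, speed in tokens/sec), from the table
TABLE = {
    "MiniMax M2.5": (2.4, 100),
    "Claude Opus 4.6": (25.0, 60),
    "GPT-5.2": (14.0, 80),
    "Gemini 3 Pro": (15.0, 70),
}


def price_multiple(model, baseline="MiniMax M2.5"):
    """How many times more expensive a model's output tokens are than the baseline's."""
    return TABLE[model][0] / TABLE[baseline][0]


def workload(model, output_tokens=1_000_000):
    """Return (cost in dollars, hours of generation) for a fixed output workload."""
    price, speed = TABLE[model]
    cost = output_tokens / 1_000_000 * price
    hours = output_tokens / speed / 3600
    return cost, hours


for name in TABLE:
    cost, hours = workload(name)
    print(f"{name}: {price_multiple(name):.1f}x price, ${cost:.2f}, {hours:.2f} h per 1M output tokens")
```

By list price, Claude Opus 4.6 output tokens cost a little over ten times as much as M2.5 output tokens ($25 vs. $2.4 per million).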

How Good Is MiniMax M2.5?

In the wider industry context, M2.5 is exceptionally good for targeted productivity. Its 80.2% SWE-Bench score and $1/hour pricing could actually disrupt enterprise AI, where cost barriers have slowed adoption. The open-weight approach is a game-changer in frontier coding and puts real pressure on closed models' moats.

Nowhere is that clearer than on BrowseComp, where M2.5 leads all open-weight models at 76.3% — sitting ahead of GLM-5 and Kimi K2.5 despite both being significantly larger models. The four models ranked above it are all proprietary, which says something about what MiniMax has managed to do with an MIT-licensed release.

Its agentic focus also positions it well for the agent economy, where autonomy and efficiency matter more than raw scale.

All that said, M2.5 has shortcomings. It lags in creative reasoning compared to more generalist models, reflected in its 42/100 score on the Artificial Analysis Intelligence Index. It's a specialist, not an all-rounder.

I'd give it an A+ for developers and a B+ for versatile use. Watch for fine-tunes that will likely expand its scope considerably.

MiniMax M2.5 Use Cases

This model lends itself to many use cases. For one, MiniMax M2.5 can be used for software engineering tasks like automating GitHub PRs, bug fixes, and full app development. (Apparently, 80% of MiniMax’s own source code is AI-generated!)

MiniMax M2.5 can also be used for enterprise office work, like financial modeling, report generation, and PPT editing for finance or law teams. Additionally, you can build custom AI agent experts for research or sales via the MiniMax Agent platform.

Conclusion

MiniMax M2.5 has the potential to be a productivity powerhouse that combines SOTA agentic performance with open accessibility, at prices that could reshape AI economics.

To get more hands-on learning, check out our AI for Developers course. Experiment today because the future of affordable intelligence is here.

I work on accelerated AI systems enabling edge intelligence with federated ML pipelines on decentralized data and distributed workloads. My work focuses on Large Models, Speech Processing, Computer Vision, Reinforcement Learning, and advanced ML Topologies.