What is Data Ethics?

In short, data ethics refers to the principles behind how organizations gather, protect, and use data. It’s a field of ethics that focuses on the moral obligations that entities have (or should have) when collecting and disseminating information about us. In a world where data is more valuable and ubiquitous than ever, data ethics issues are more pressing now than at any time in history.

Why Data Ethics Matters

Let’s look at some examples of the importance of data ethics:

- In September 2018, hackers injected malicious code into British Airways’ website, diverting traffic to a fraudulent replica site. Customers then unknowingly gave their information to fraudsters, including login details, payment card information, address, and travel booking information.

- In 2019, after Apple introduced its credit card to consumers, allegations emerged that its credit-limit algorithm was biased by gender. Several prominent tech figures (including Steve Wozniak, the famous technologist and cofounder of Apple) described receiving far higher credit limits than their wives, with whom they shared assets. Apart from gender, no clear factor explained such a difference.

- In April 2021, the personal data of over 533 million Facebook users was posted for free on a hacking forum, making it one of the largest data leaks of all time. The incident raised concerns about how organizations store and secure personal information and whether they should be allowed access to such data in the first place.

These examples are only a small snapshot of the security breaches and ethical lapses in the digital world. Even DataCamp suffered a security breach in 2019.

Regardless of your technical expertise, the significance of data ethics is becoming increasingly apparent. We live in a world where data is a powerful asset, capable of driving innovation and improving lives. However, without ethical safeguards, data can also be misused, leading to harm.

Understanding data ethics enables us to better navigate how our information is used, ensuring that we can protect ourselves while still engaging in a data-driven society. Moreover, it encourages us to think critically about the kind of future we want to create, not only for ourselves but for generations to come, as technology continues to evolve.

Understanding the Current & Potential Future Data Ethics Landscape

Artificial intelligence (AI) and machine learning are rapidly advancing, yet they are still in their early stages. This makes it crucial to address ethical considerations now, to ensure that these technologies remain beneficial to society. Questions about the future of responsible AI are pressing, and it's important to stay informed about them, for example through resources like DataCamp’s DataFramed podcast.

As we increasingly live our lives online, the distinction between private and public spaces blurs. Although there are legal protections for personal data, such as the EU's General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), gray areas remain, making it challenging to manage privacy effectively.

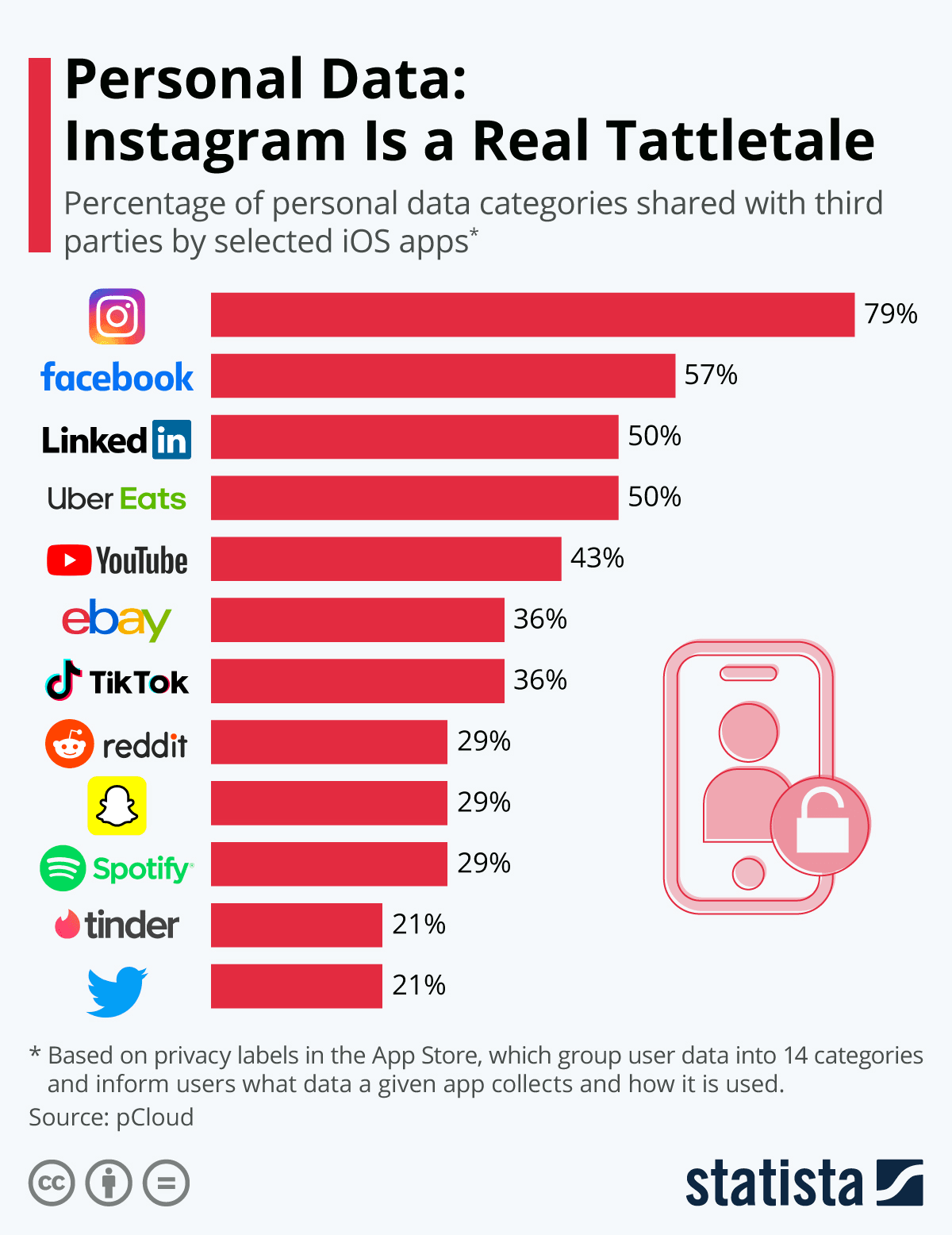

[Reference: Statista 2021]

If you use some of these platforms frequently, you might be surprised by exactly what data is being sold. From your email to your chat and location history, applications can give advertising agencies and other buyers a detailed view of your life, often without your realizing it.

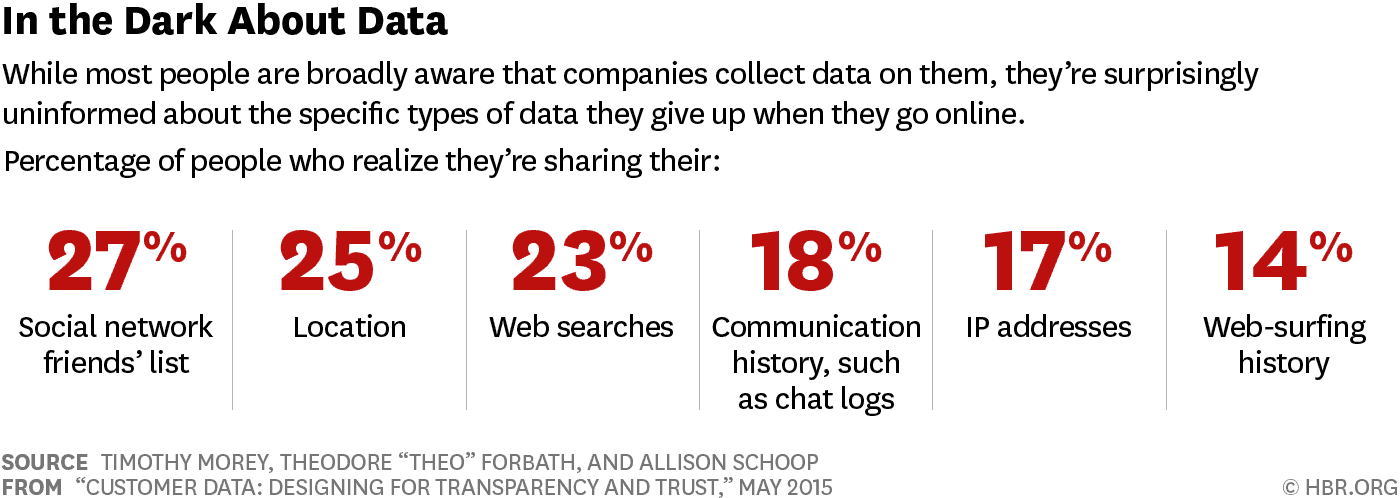

[Reference: Harvard Business Review]

Many organizations are still building the infrastructure that makes AI and data science possible across industries, which means research into how exposed our personal data is has only just begun. Consent and the sale of personal information will become important topics in more and more areas of life.

For a sense of the potential impact once companies build the infrastructure for big data projects, consider AI image generators such as DALL-E and the open-source Stable Diffusion, which demonstrate how quickly AI can improve once it is made widely available. Given these rapid developments, the one thing we can count on is that data privacy, security, and fairness will become increasingly difficult to guarantee.

Principles of Big Data Ethics

While there is no one-size-fits-all approach to ethical data practices, there are fundamental principles that can guide responsible data usage:

- Transparency: Clear communication about data collection, storage, and sharing practices is vital. Users should understand how their information is being used, without needing legal expertise to navigate terms and conditions.

- Accountability: Organizations must take responsibility for the data they collect, including protecting it from breaches and misuse. This principle is crucial for maintaining trust between companies and their users.

- Individual Agency: Individuals should have control over their personal data, including the ability to access, correct, or delete their information. Such agency is a fundamental human right in the digital age.

- Data Privacy: Privacy is the expectation that personal data will be protected from unauthorized exposure. Organizations must uphold this expectation by implementing robust security measures and honoring the agreements made with users.
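To make the individual agency principle more concrete, here is a minimal sketch of the three rights it names (access, correction, and deletion) over a hypothetical in-memory user store. The `UserDataStore` class and its method names are illustrative assumptions, not an interface required by any law or provided by any library. A real system would also need identity verification, request logging, and a way to propagate deletions to backups and third parties.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class UserDataStore:
    """Hypothetical in-memory store illustrating access, correction, and deletion requests."""
    records: dict[str, dict[str, Any]] = field(default_factory=dict)

    def access(self, user_id: str) -> dict[str, Any]:
        # Right of access: return a shallow copy so callers cannot change the stored record.
        return dict(self.records.get(user_id, {}))

    def correct(self, user_id: str, field_name: str, value: Any) -> None:
        # Right to correction: only fields that are already stored may be updated.
        if user_id not in self.records or field_name not in self.records[user_id]:
            raise KeyError(f"No field {field_name!r} stored for user {user_id!r}")
        self.records[user_id][field_name] = value

    def delete(self, user_id: str) -> bool:
        # Right to deletion: drop the whole record and report whether anything existed.
        return self.records.pop(user_id, None) is not None


store = UserDataStore({"u1": {"email": "old@example.com", "city": "Berlin"}})
store.correct("u1", "email", "new@example.com")
print(store.access("u1"))  # {'email': 'new@example.com', 'city': 'Berlin'}
print(store.delete("u1"))  # True
print(store.access("u1"))  # {}
```

Returning a copy from `access` keeps a user's view of their data separate from the stored record, so the only way to change it is through an explicit correction request.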

Applying Data Ethics

Implementing data ethics is not just about philosophical considerations; it's about making practical decisions that reflect these values in everyday operations. Here’s how different roles can integrate data ethics into their work:

Data Ethics Guidance for Individual Practitioners

Software, Machine Learning, or AI Engineers

Engineers must collaborate with ethicists and other stakeholders to address ethical challenges. Documenting processes and conducting thorough data reviews can help identify and mitigate potential ethical issues early in development.
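One concrete form a data review can take is checking how well each group is represented in a training set before any model is built. The sketch below uses a hypothetical `representation_report` helper and an arbitrary 10% threshold; neither is a standard. Real reviews also examine label quality, proxies for protected attributes, and how the data was collected.

```python
from collections import Counter


def representation_report(groups: list[str], min_share: float = 0.10) -> dict[str, tuple[float, bool]]:
    """Return each group's share of the dataset and whether it falls below min_share."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {
        group: (count / total, count / total < min_share)
        for group, count in sorted(counts.items())
    }


# Hypothetical "gender" column from a training set of past hires.
training_genders = ["man"] * 85 + ["woman"] * 12 + ["nonbinary"] * 3

for group, (share, flagged) in representation_report(training_genders).items():
    note = "  <- under-represented" if flagged else ""
    print(f"{group:>10}: {share:.0%}{note}")
```

A check like this does not prove a model will be fair, but documenting its output gives reviewers an early, written record of where the data is thin.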

Technical Project Managers

Project managers should account for ethical considerations in project planning, ensuring that all team members are aligned on these priorities. This might involve coordinating with new stakeholders and integrating ethical safeguards into the project’s scope.

Product Managers

Product managers play a key role in ensuring that products are developed with ethics in mind. This includes testing for inclusivity and addressing any ethical concerns that arise during the development process.

Data Ethics Guidance for Organizational Leaders

Organizations of all sizes must prioritize data ethics to protect their users and themselves:

Startups

For startups, building ethical practices from the beginning is essential. Although resources may be limited, establishing a strong ethical foundation can prevent issues as the company grows.

Small/Medium-Sized Businesses

As small and medium-sized businesses scale, they should stay transparent with customers about how their data is used and ensure that consent is obtained and respected at every stage of data collection and use.
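One way to make "consent is obtained and respected" concrete is to record consent per purpose and check it at the moment data is used, so that a withdrawal takes effect immediately. The `ConsentLedger` class, the purpose names, and `send_marketing_email` below are hypothetical; this is a sketch under those assumptions, not a compliance tool.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Hypothetical record of which processing purposes each user has agreed to."""
    grants: dict[str, dict[str, datetime]] = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        # Store when consent was given so it can be demonstrated later.
        self.grants.setdefault(user_id, {})[purpose] = datetime.now(timezone.utc)

    def withdraw(self, user_id: str, purpose: str) -> None:
        # Withdrawing one purpose leaves the user's other purposes untouched.
        self.grants.get(user_id, {}).pop(purpose, None)

    def allows(self, user_id: str, purpose: str) -> bool:
        return purpose in self.grants.get(user_id, {})


def send_marketing_email(ledger: ConsentLedger, user_id: str) -> str:
    # Check consent when the data is used, not only at sign-up.
    if not ledger.allows(user_id, "marketing"):
        return "skipped: no marketing consent"
    return "sent"


ledger = ConsentLedger()
ledger.grant("u1", "marketing")
print(send_marketing_email(ledger, "u1"))  # sent
ledger.withdraw("u1", "marketing")
print(send_marketing_email(ledger, "u1"))  # skipped: no marketing consent
```

Keying consent by purpose rather than storing a single yes/no flag means agreeing to, say, order notifications never silently implies agreement to marketing.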

Global Corporations

Large corporations must lead by example, implementing comprehensive data ethics programs that include cross-departmental collaboration, employee training, and regular audits of ethical practices.

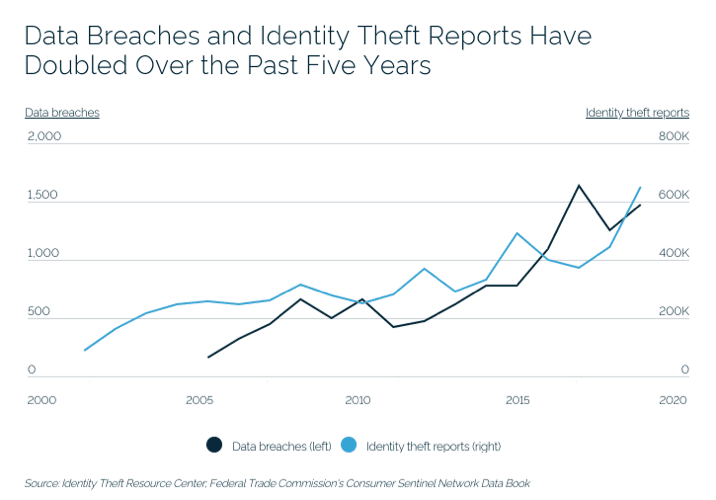

[Reference: Spanning]

Today’s Challenges: Data Ethics Examples

Ethical Algorithms

Because algorithms are applied at scale, they can amplify the impact of a single flawed decision many times over. Even though their capabilities are still in their infancy, there are already many examples of how a lack of ethical safeguards for algorithms affects us.

Hiring Algorithms at Amazon

Human resources projects are almost always high-risk because they determine access to employment, and their impacts can directly threaten people’s livelihoods. With online applications and tools like LinkedIn, finding the right candidate while ensuring equal opportunity also presents new challenges.

Between 2014 and 2017, Amazon attempted to address both of these problems with an internal hiring algorithm that would decide which resumes made it to the next hiring stage. It wasn’t long before bias against women in technical roles became obvious. Because the historical hiring data it learned from was itself biased toward men, the algorithm taught itself to penalize resumes that signaled the applicant was a woman, such as those containing the word “women’s.”
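One common way to surface this kind of bias in screening outcomes is to compare selection rates across groups. The sketch below computes each group's impact ratio (its selection rate divided by the highest group's selection rate) and flags ratios below 0.8, the "four-fifths" rule of thumb from US employment-selection guidance. The `impact_ratios` helper and the applicant counts are made up for illustration; they are not data from the Amazon case.

```python
def impact_ratios(selected: dict[str, int], applied: dict[str, int]) -> dict[str, float]:
    """Selection rate of each group divided by the highest group's selection rate."""
    rates = {group: selected[group] / applied[group] for group in applied}
    best = max(rates.values())
    if best == 0:
        raise ValueError("No group had any selections; impact ratios are undefined.")
    return {group: rate / best for group, rate in rates.items()}


# Made-up screening outcomes for illustration only.
applied = {"men": 400, "women": 100}
selected = {"men": 120, "women": 15}

for group, ratio in impact_ratios(selected, applied).items():
    flag = "below four-fifths threshold" if ratio < 0.8 else "ok"
    print(f"{group}: impact ratio {ratio:.2f} ({flag})")
```

Here men are selected at a 30% rate and women at 15%, so women's impact ratio is 0.50. A low ratio is a signal to investigate, not proof of discrimination on its own, which is why the check belongs in regular audits rather than a one-time review.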

While Amazon may have scrapped this algorithm, most HR representatives anticipate the regular use of AI tools in their hiring processes in the future. Read more about how to ethically use machine learning to drive decisions in a separate article.

Facial Recognition Struggles to Detect Darker Skin Tones

Facial recognition is now widely used not only to unlock phones and access accounts but also as a critical tool for law enforcement surveillance, airport passenger screening, and employment and housing decisions.

However, in 2018, research revealed that commercial facial-analysis algorithms performed notably worse on darker-skinned women: their gender-classification error rates reached up to 34.7%, compared with at most 0.8% for lighter-skinned men.
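Disparities like the one found in the 2018 research only become visible when accuracy is reported separately for each group instead of as a single overall number. The sketch below computes per-group error rates for a classifier's predictions. The `error_rates_by_group` helper is hypothetical, and the records are invented to mirror a gender-classification task; they are not the study's data.

```python
from collections import defaultdict


def error_rates_by_group(records: list[tuple[str, str, str]]) -> dict[str, float]:
    """records holds (group, true_label, predicted_label); returns the error rate per group."""
    totals: dict[str, int] = defaultdict(int)
    errors: dict[str, int] = defaultdict(int)
    for group, truth, prediction in records:
        totals[group] += 1
        errors[group] += truth != prediction  # True counts as 1, False as 0
    return {group: errors[group] / totals[group] for group in totals}


# Invented evaluation results for illustration only.
records = (
    [("lighter-skinned men", "male", "male")] * 99
    + [("lighter-skinned men", "male", "female")] * 1
    + [("darker-skinned women", "female", "female")] * 70
    + [("darker-skinned women", "female", "male")] * 30
)

overall = sum(truth != prediction for _, truth, prediction in records) / len(records)
print(f"overall error rate: {overall:.1%}")
for group, rate in error_rates_by_group(records).items():
    print(f"{group}: {rate:.1%}")
```

The single overall figure averages a 1% error rate for one group with a 30% rate for the other, which is exactly the kind of gap that per-group testing for inclusivity is meant to catch.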

If you’re curious about how face detection works, check out our tutorial on face detection with Python using OpenCV.

Data Privacy

Learn more about data privacy and anonymization in Python with our interactive course.
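As a small illustration of what anonymization work can look like, here is a minimal sketch of pseudonymization using Python's standard library: a direct identifier (the email address) is replaced with a keyed hash (HMAC-SHA256), and quasi-identifiers such as birth date and ZIP code are coarsened. The field names, the key, and the `pseudonymize` helper are hypothetical. Pseudonymization is weaker than true anonymization: under the GDPR, pseudonymized data still counts as personal data, because whoever holds the key can re-link records to people.

```python
import hashlib
import hmac


def pseudonymize(record: dict[str, str], secret_key: bytes) -> dict[str, str]:
    """Replace the email with a keyed hash and coarsen quasi-identifiers."""
    normalized_email = record["email"].strip().lower().encode("utf-8")
    token = hmac.new(secret_key, normalized_email, hashlib.sha256).hexdigest()
    return {
        "user_token": token,                          # same user -> same token; needs the key to recompute
        "birth_year": record["birth_date"][:4],       # "1990-04-17" -> "1990"
        "zip_prefix": record["zip_code"][:3] + "**",  # "10115" -> "101**"
        "purchase": record["purchase"],               # non-identifying field kept as-is
    }


# Hypothetical key for illustration; real keys belong in a secrets manager, not in code.
KEY = b"replace-with-a-secret-from-a-key-vault"
row = {"email": "Ada@Example.com", "birth_date": "1990-04-17", "zip_code": "10115", "purchase": "books"}
print(pseudonymize(row, KEY))
```

A keyed hash is used instead of a plain hash because plain hashes of email addresses can be reversed by hashing guessed addresses and comparing the results.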

Period Tracking Apps

Since Roe v. Wade, the ruling that protected abortion rights at the federal level, was overturned in the US in 2022, period tracking apps have become a possible source of evidence for prosecutions under current or future abortion bans. While most apps have terms that allow them to share personal data with third parties, many period tracking apps use murky language about whether data could be shared with authorities, for example in response to a warrant.

While you might need a legal expert to interpret the terms and conditions of all the applications you use daily, understanding the trade-offs and risks you expose yourself to will only become more important.

Uber Tracked Politicians and Celebrities with “God-Mode”

In 2014, reports claimed that Uber’s “god-mode” gave all employees access to complete user data, which was often used to track celebrities, politicians, and even personal connections (such as ex-partners). A former employee also filed a lawsuit describing reckless handling of user data and a “total disregard” for privacy.
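Blanket "god-mode" access is the opposite of least privilege. A common safeguard is to let each staff role read only the fields it needs, require a stated reason, and log every attempt so that audits can spot misuse. The sketch below assumes hypothetical roles, field names, and a `request_access` helper; it only decides and records access and does not fetch any data.

```python
from datetime import datetime, timezone

# Hypothetical mapping of staff roles to the user fields each role may read.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "support_agent": {"name", "email"},
    "safety_investigator": {"name", "email", "trip_history"},
}

AUDIT_LOG: list[dict[str, str]] = []


def request_access(staff_id: str, role: str, user_id: str, field_name: str, reason: str) -> bool:
    """Decide whether a staff member may read a field, logging every attempt."""
    allowed = field_name in ROLE_PERMISSIONS.get(role, set()) and bool(reason.strip())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "staff_id": staff_id,
        "role": role,
        "user_id": user_id,
        "field": field_name,
        "reason": reason,
        "allowed": str(allowed),
    })
    return allowed


print(request_access("e42", "support_agent", "u7", "trip_history", "curious"))  # False
print(request_access("e42", "safety_investigator", "u7", "trip_history", "open safety case"))  # True
print(len(AUDIT_LOG))  # 2
```

Logging denied attempts as well as granted ones matters: repeated denied lookups of the same high-profile user are exactly the pattern an auditor would want to see.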

In 2016, Uber also attempted to cover up a major data breach by paying off the hackers and not reporting the incident, even though the US Federal Trade Commission was investigating the company’s security practices at the time.

Data Security

From inherent security flaws to hacking vulnerabilities, data security can put more than your login info at risk. Apps and websites of all kinds can pinpoint your location, read your messages, see who you’ve blocked, and how you spend your money.

Whether you’re a social butterfly online or just participate in minimal digital services (banking, texting, email, etc.), you’re most likely exposed in more ways than you know.

Current Data Ethics Regulations

Many people are aware of the importance of data ethics—but what does it mean to us as consumers? What does it mean for companies? What does it mean for government agencies?

The answer to these questions depends on where you live. In some countries, there are already laws in place requiring companies to be transparent about how they handle personal information; in others, there aren't any regulations at all.

And then there are the ethical questions we face every day: How much should we share with each other online? Should we be sharing less? And what happens when something goes wrong? These pressing issues require thoughtful consideration and strong leadership from both government and industry so that unethical behavior, or a failure to address it, doesn't threaten our future.

Around the world, governments are trying new methods of regulating ethical data collection, AI, and other uses of data, such as targeted marketing.

Here are a few regulations worth knowing and following to understand the current state of data regulation where you live:

Data Protection

- GDPR (European Union) - Learn more in our webinar on Data Ethics and GDPR for Data Practitioners.

- CCPA, CPRA (USA - California)

AI Use

- EU AI Act

- New York City Local Law 144 on bias in AI hiring tools (USA - New York City)

- Algorithmic Justice and Online Platform Transparency Act (USA, proposed)

Platform Regulation

- Digital Services Act (European Union)

The EU and US lead the way in data and AI legislation, but most of these policies are new and still evolving.

Upskilling Your Team in Data Ethics

As the importance of data ethics continues to grow, it’s crucial for businesses to ensure that their teams are well-equipped to navigate the ethical challenges of working with data. Data ethics isn’t just a concern for data scientists or legal teams—it’s a fundamental aspect of responsible business practices across all departments.

If you’re a team leader or business owner, investing in data ethics training can significantly enhance your team’s ability to handle data responsibly, protect customer privacy, and uphold your organization’s reputation. DataCamp for Business offers comprehensive training programs that can help your employees develop a strong understanding of data ethics, tailored to your industry and needs. We provide:

- Customized learning paths: Training programs designed to match your team’s specific roles and the ethical challenges they may face.

- Practical applications: Interactive courses and real-world case studies that help employees apply ethical principles in their day-to-day work.

- Ongoing assessment: Tools to track your team’s understanding and application of data ethics, ensuring they stay ahead of emerging ethical issues.

By upskilling your team in data ethics through platforms like DataCamp, you not only foster a culture of ethical awareness but also position your business as a leader in responsible data use. This investment in ethics education can help you avoid costly mistakes, build trust with customers, and ensure long-term success. Connect with our team and request a demo today.

Continued Data Ethics Education

If you want to prepare your career for these changes in technology and business, many resources can help you move into, or get started in, a career in data ethics. Start with our Introduction to Data Ethics course, which covers principles, AI ethics, and practical skills to ensure responsible data use. We also have various webinars covering data ethics:

- EU AI Act Readiness: Meeting Your Organization's AI Literacy Requirements

- Understanding Regulations for AI in the USA, the EU, and Around the World

- What Leaders Need to Know About Implementing AI Responsibly

- Data Literacy for Responsible AI

There are also several certifications to explore:

Data Privacy

- IAPP Certified Information Privacy Manager (CIPM) certification

- Securiti PrivacyOps certification

- ISACA Certified Data Privacy Solutions Engineer (CDPSE) certification

- IAPP Certified Information Privacy Professional (CIPP) certification

Data Security

- CompTIA Security+

- Certified Ethical Hacker (CEH)

- GIAC Security Essentials Certification (GSEC)

- Offensive Security Certified Professional (OSCP)

Data Ethics FAQs

What are the key principles of data ethics?

The key principles of data ethics include transparency, accountability, individual agency, and data privacy. Transparency involves clear communication about what data is collected, how it is used, and who it is shared with. Accountability means that organizations must take responsibility for the data they collect and the potential harm it can cause. Individual agency allows individuals to control their data, including access, removal, and storage. Data privacy ensures protection from unauthorized access and misuse of personal information.

How can organizations ensure they are ethically using data?

Organizations can ensure ethical data use by implementing robust data governance policies, regularly conducting ethical reviews, and involving ethicists in their data projects. They should also provide clear and accessible information to users about data collection and use, obtain informed consent, and allow users to control their data. Regular training for employees on data ethics and compliance with relevant regulations is also crucial.

What are the potential risks of not following data ethics principles?

Not following data ethics principles can lead to significant risks, including data breaches, loss of trust, legal consequences, and reputational damage. Ethical lapses can result in misuse of personal information, discrimination, and other harms to individuals and society. Organizations may also face regulatory fines and sanctions if they fail to comply with data protection laws.

Why is transparency important in data ethics?

Transparency is important in data ethics because it builds trust between organizations and individuals. By clearly communicating how data is collected, used, and shared, organizations can ensure that individuals are informed and can make educated decisions about their personal information. Transparency also helps in holding organizations accountable and fostering a culture of openness and ethical behavior.

How does data ethics impact AI and machine learning?

Data ethics significantly impacts AI and machine learning as these technologies rely heavily on data. Ethical considerations include ensuring fairness, avoiding biases, and protecting privacy. Organizations must carefully consider how data is used to train AI models and ensure that these models do not perpetuate discrimination or harm. Ethical AI development involves continuous monitoring, testing for biases, and involving diverse stakeholders in the design process.

A data science and public policy graduate student at the Hertie School and a writer covering tech, AI & social issues.