
A few weeks ago, I installed claude-mem across all my projects. Since then, it's captured 6,814 observations across 259 sessions, covering ten different codebases, all sitting in a 39 MB SQLite file on my laptop.

Before that, every Claude Code session started from scratch. I'd open a new session and spend the first ten minutes re-explaining the project structure. The authentication bug we'd fixed together the day before? Claude had no idea. It would re-read files it had already analyzed, then land on the same wrong assumptions we'd already corrected.

claude-mem is a Claude Code plugin that fixes this by capturing what happens during a session and making it available to future ones.

In this article, I’ll cover how it actually works under the hood, how to install it without falling into the common traps, how to tune it for your budget, and what you should know before running it in production.

What is claude-mem?

claude-mem is a Claude Code plugin that:

- Hooks into session lifecycle events (session start, each tool call, session end)

- Compresses raw tool-call outputs into structured observations using AI

- Stores everything in a local SQLite database at ~/.claude-mem/claude-mem.db

- Injects the relevant pieces back when you start a new session

It runs as a plugin, not an MCP server.

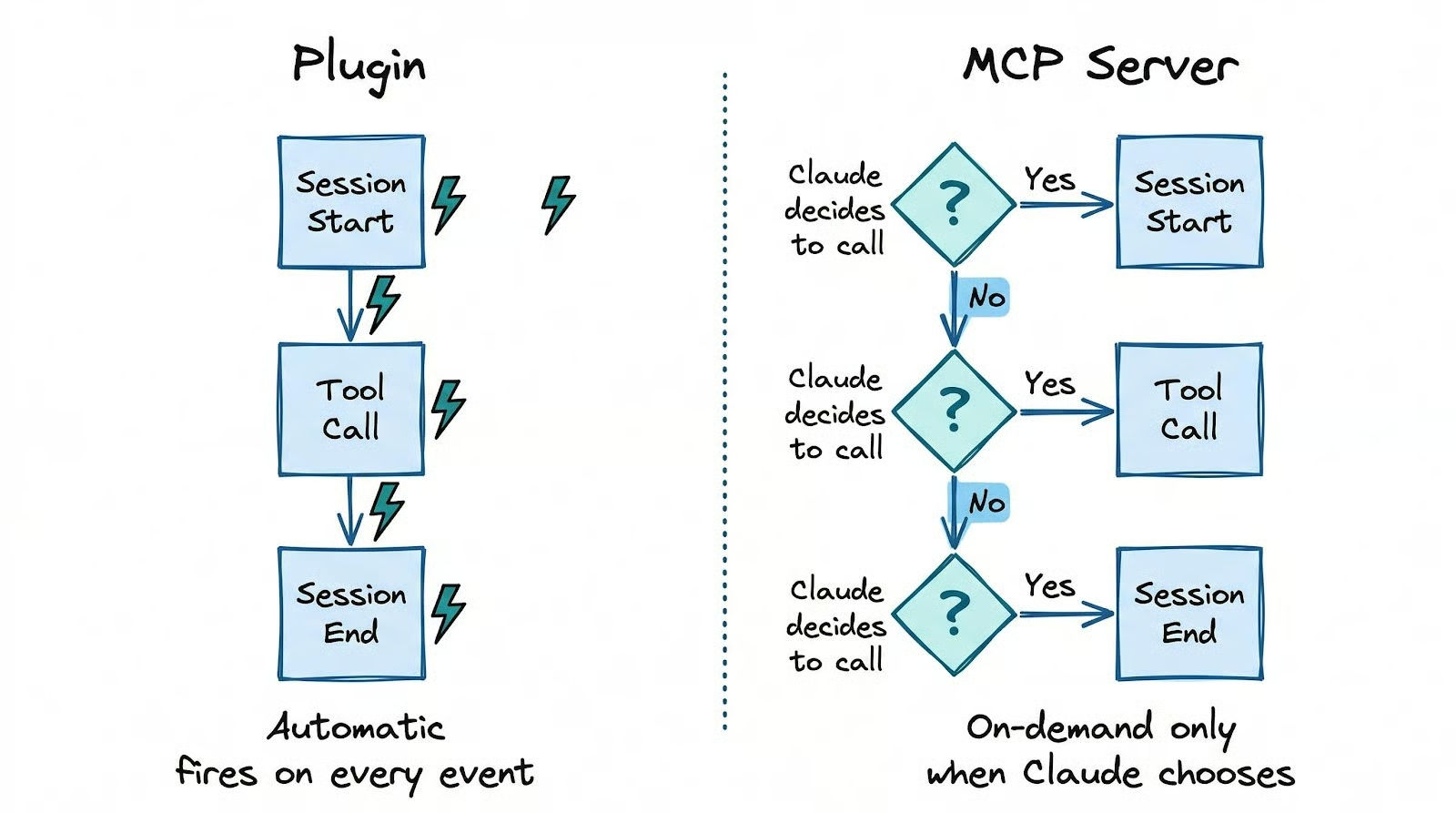

That distinction matters: plugins fire automatically on lifecycle events like session start and each tool call, while MCP servers sit idle until Claude decides to call them.

With an MCP-based approach, retrieval only happens when Claude thinks to ask for it. claude-mem captures and injects without Claude having to choose to.

Everything stays on your machine, and the compression runs on your existing Claude Code authentication, so there's no separate API key or account needed.

Getting it running takes two commands inside a Claude Code session:

/plugin marketplace add thedotmack/claude-mem

/plugin install claude-mem

Restart Claude Code after that.

The common mistake is running npm install -g claude-mem instead, which installs only the SDK library. Hooks don't get registered, the worker never starts, and nothing works.

The plugin marketplace path is the only one that gives you the full setup. The only hard prerequisite is Node.js 18+. Everything else (Bun, uv, SQLite) auto-installs on first run.

To verify the installation actually worked, check three things. First, curl http://localhost:37777/api/health should return {"status":"ok"}. If it fails, the background worker didn't start. The most common cause is a Node.js version below 18.
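If you prefer to script the first check, here is a minimal Python sketch that uses only the standard library. It relies on two details from the article: the URL http://localhost:37777/api/health and the {"status":"ok"} response. The function names is_healthy and check_worker are hypothetical.

```python
import json
import urllib.request

HEALTH_URL = "http://localhost:37777/api/health"  # endpoint quoted in the article


def is_healthy(body: str) -> bool:
    """True if a response body decodes to a JSON object with status "ok"."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return False
    return isinstance(payload, dict) and payload.get("status") == "ok"


def check_worker(url: str = HEALTH_URL, timeout: float = 2.0) -> bool:
    """Query the worker; a refused connection or a timeout counts as unhealthy."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return is_healthy(resp.read().decode("utf-8"))
    except OSError:  # URLError, HTTPError and socket timeouts all subclass OSError
        return False


if __name__ == "__main__":
    print("worker is up" if check_worker() else "worker unreachable: check Node.js >= 18")
```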

Second, check that ~/.claude/hooks.json contains claude-mem entries. If the file doesn't list claude-mem under PostToolUse and SessionStart, the hooks weren't registered, and no capture will run regardless of whether the worker is alive.
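The second check can be scripted too. This Python sketch reads the path named above and looks for claude-mem under each required event. The exact layout of hooks.json isn't documented in this article, so the code assumes the event names are keys either at the top level or under a "hooks" object, and it simply searches each event's entries for the string claude-mem instead of relying on a particular entry schema. The helper name is hypothetical.

```python
import json
from pathlib import Path

HOOKS_PATH = Path.home() / ".claude" / "hooks.json"  # path from the article
REQUIRED_EVENTS = ("PostToolUse", "SessionStart")


def missing_claude_mem_hooks(config: dict) -> list[str]:
    """Return the required events that have no entry mentioning claude-mem.

    Assumption: events live at the top level or under a "hooks" key. We search
    the serialized entries for "claude-mem" rather than assume their schema.
    """
    events = config.get("hooks", config)
    missing = []
    for event in REQUIRED_EVENTS:
        if "claude-mem" not in json.dumps(events.get(event, [])):
            missing.append(event)
    return missing


if __name__ == "__main__":
    if not HOOKS_PATH.exists():
        print(f"{HOOKS_PATH} not found: hooks were not registered")
    else:
        missing = missing_claude_mem_hooks(json.loads(HOOKS_PATH.read_text()))
        print("hooks OK" if not missing else f"missing claude-mem hooks: {missing}")
```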

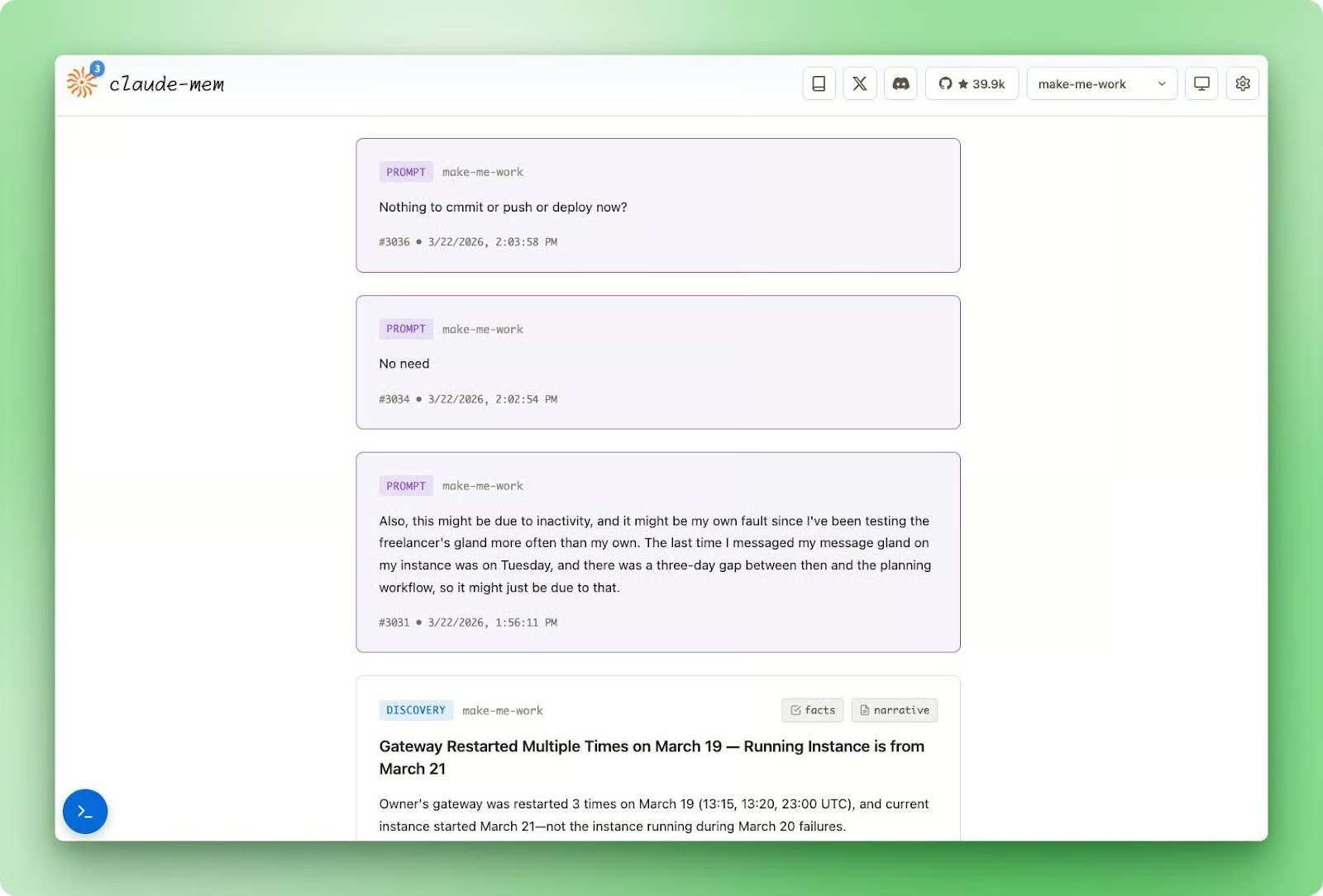

Third, open http://localhost:37777 in a browser to see the web viewer, which shows observations streaming in as you work.

The first session produces no injected context at SessionStart since the database is empty, but observations start accumulating from the first tool call.

By the second session, claude-mem will have a session summary and a batch of observations to inject.

Running through the verification checklist first saves you from discovering three sessions later that nothing was ever captured. The web viewer is the most reliable signal: if you see observations appearing after a tool call, everything is wired up correctly.

How claude-mem Works

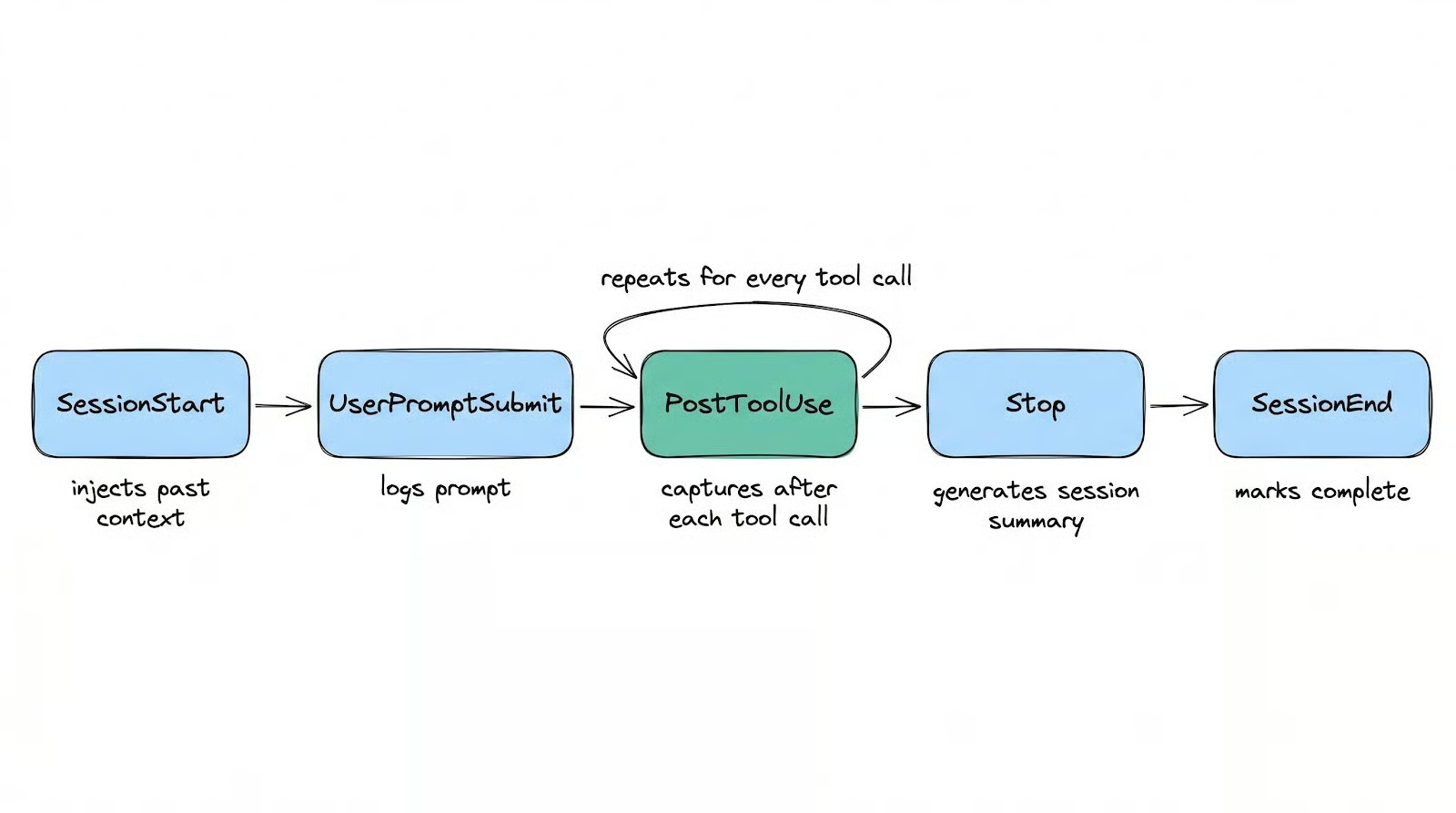

Once installed, claude-mem runs silently in the background across five lifecycle hooks. Understanding what each one does explains why the tool behaves the way it does.

Capture and compression

The five hooks map onto a session's natural timeline:

- SessionStart queries the database and injects a compressed index of recent work into your context window

- UserPromptSubmit logs the session and stores your prompt

- PostToolUse fires after every tool call and sends the raw output to a background worker for compression

- Stop generates a session-level summary when you pause or idle

- SessionEnd marks the session complete
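To make the division of labor concrete, here is a toy Python model of the five hooks. It is not claude-mem's code: the HookModel class, its in-memory lists, and the summary format are hypothetical, and real compression happens asynchronously in the worker. It does reproduce the first-session behavior described in the setup section: nothing to inject the first time, a summary the second time.

```python
class HookModel:
    """Toy model of the five lifecycle hooks (hypothetical, in memory only)."""

    def __init__(self) -> None:
        self.summaries: list[str] = []     # survives across sessions, like the database
        self.prompts: list[str] = []       # per-session state
        self.tool_outputs: list[str] = []
        self.active = False

    def session_start(self) -> str:
        """SessionStart: return an index of recent work to inject."""
        self.active = True
        return "\n".join(self.summaries[-5:]) or "(no prior context)"

    def user_prompt_submit(self, prompt: str) -> None:
        """UserPromptSubmit: store the prompt."""
        self.prompts.append(prompt)

    def post_tool_use(self, raw_output: str) -> None:
        """PostToolUse: hand the raw output off (compression omitted here)."""
        self.tool_outputs.append(raw_output)

    def stop(self) -> None:
        """Stop: record a session-level summary of the work so far."""
        self.summaries.append(
            f"prompts={len(self.prompts)}, tool_calls={len(self.tool_outputs)}"
        )

    def session_end(self) -> None:
        """SessionEnd: mark the session complete and reset per-session state."""
        self.active = False
        self.prompts.clear()
        self.tool_outputs.clear()


mem = HookModel()
first = mem.session_start()    # -> "(no prior context)"
mem.user_prompt_submit("fix the auth bug")
mem.post_tool_use("Read src/auth.ts")
mem.stop()
mem.session_end()
second = mem.session_start()   # -> "prompts=1, tool_calls=1"
```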

SessionStart builds that injected index from session summaries, observation titles grouped by type, and timestamps: a searchable map of recent work that Claude can reference throughout the session without you doing anything.

PostToolUse fires after every tool call. It sends the raw output to a background worker via a non-blocking HTTP POST (8ms on average), and the worker compresses it into a structured observation using the Claude Agent SDK.
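The key property is that the hook never waits for the model. The Python sketch below shows that fire-and-forget shape with one deliberate substitution: an in-process queue.Queue and a worker thread stand in for the HTTP POST to the background worker, and compress() is a hypothetical placeholder for the Agent SDK call.

```python
import queue
import threading
import time
from typing import Optional

jobs: "queue.Queue[Optional[str]]" = queue.Queue()
observations: list[str] = []


def compress(raw_output: str) -> str:
    """Hypothetical stand-in for the AI compression call."""
    time.sleep(0.05)  # simulate model latency
    lines = raw_output.splitlines()
    return lines[0][:60] if lines else ""  # keep a short title


def worker() -> None:
    """Drain the queue until a None sentinel arrives."""
    while True:
        raw = jobs.get()
        if raw is None:
            jobs.task_done()
            break
        observations.append(compress(raw))
        jobs.task_done()


def post_tool_use_hook(raw_output: str) -> float:
    """Enqueue the raw output and return at once; the result is seconds spent."""
    start = time.perf_counter()
    jobs.put(raw_output)  # non-blocking handoff, like the hook's HTTP POST
    return time.perf_counter() - start


threading.Thread(target=worker, daemon=True).start()
```

Calling post_tool_use_hook() returns almost immediately while compress() runs in the background; jobs.join() waits for the backlog to drain, and jobs.put(None) stops the worker.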

Here's what that structure looks like:

| Field | What it contains |
|---|---|
| type | One of a fixed set of observation types (discovery is one of them) |
| title | A concise, searchable string |
| facts | An array of discrete facts (~50 tokens, cheap to load) |
| narrative | A prose explanation (~155-500 tokens, only loaded on demand) |
| concepts | Semantic tags like how-it-works, problem-solution, gotcha, trade-off |
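In code, an observation with these fields might look like the Python dataclass below. The class, the id field, and the two rendering methods are hypothetical; the point is the split between a compact form that is cheap to load and a narrative that is only added on request.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """Hypothetical record mirroring the table's fields, plus an id."""
    id: int
    type: str                                          # e.g. "discovery"
    title: str                                         # short, searchable
    facts: list[str] = field(default_factory=list)     # cheap to load
    narrative: str = ""                                # loaded on demand
    concepts: list[str] = field(default_factory=list)  # e.g. "gotcha"

    def index_line(self) -> str:
        """Compact form, roughly what a search index would list."""
        return f"#{self.id} [{self.type}] {self.title}"

    def render(self, full: bool = False) -> str:
        """Index line plus facts; the narrative only when full=True."""
        lines = [self.index_line(), *(f"- {fact}" for fact in self.facts)]
        if full and self.narrative:
            lines.append(self.narrative)
        return "\n".join(lines)
```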

The per-call capture is what separates claude-mem from tools that summarize at session end with a single AI call.

If your session crashes mid-refactor, those tools lose everything since the last completed session. claude-mem has every observation up to the last tool call.

The Stop hook produces something different: a session-level summary with fields like request, investigated, learned, completed, and next_steps. These give Claude a high-level map of what happened without loading every individual observation.
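A session summary is a different record from an observation. Here it is sketched as a hypothetical Python dataclass using the five field names above; the one_liner() method is illustrative and not part of claude-mem.

```python
from dataclasses import asdict, dataclass


@dataclass
class SessionSummary:
    """Hypothetical Stop-hook summary using the five field names above."""
    request: str
    investigated: str
    learned: str
    completed: str
    next_steps: str

    def one_liner(self) -> str:
        """A compact line that a session-start index could show."""
        return f"{self.request} -> next: {self.next_steps}"


summary = SessionSummary(
    request="Fix login redirect",
    investigated="auth middleware",
    learned="cookie path was wrong",
    completed="patched the middleware",
    next_steps="add a regression test",
)
print(summary.one_liner())  # Fix login redirect -> next: add a regression test
```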

Retrieval

Storing thousands of observations is one thing. Loading the right ones into a context window without burning tokens is a different problem.

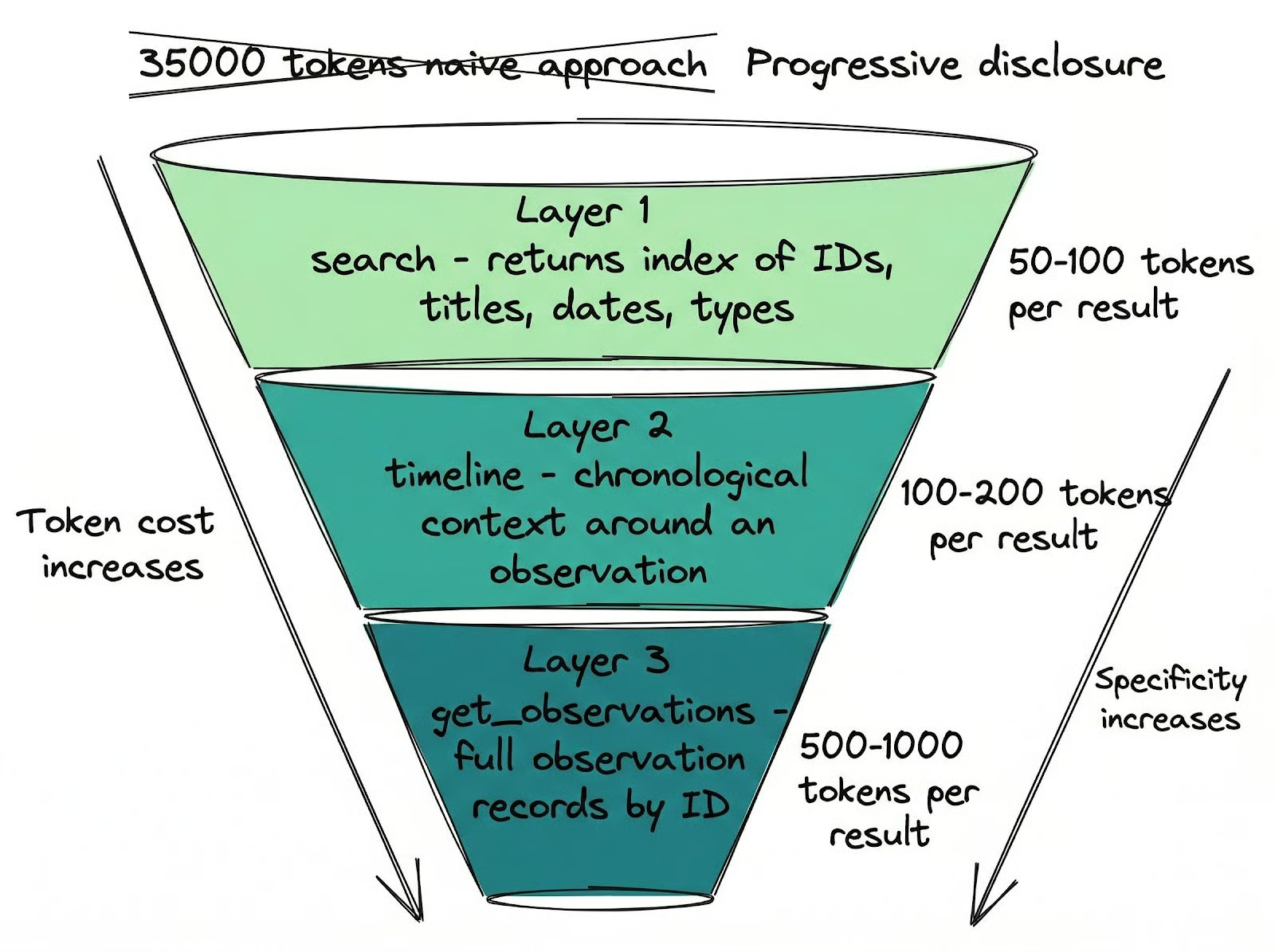

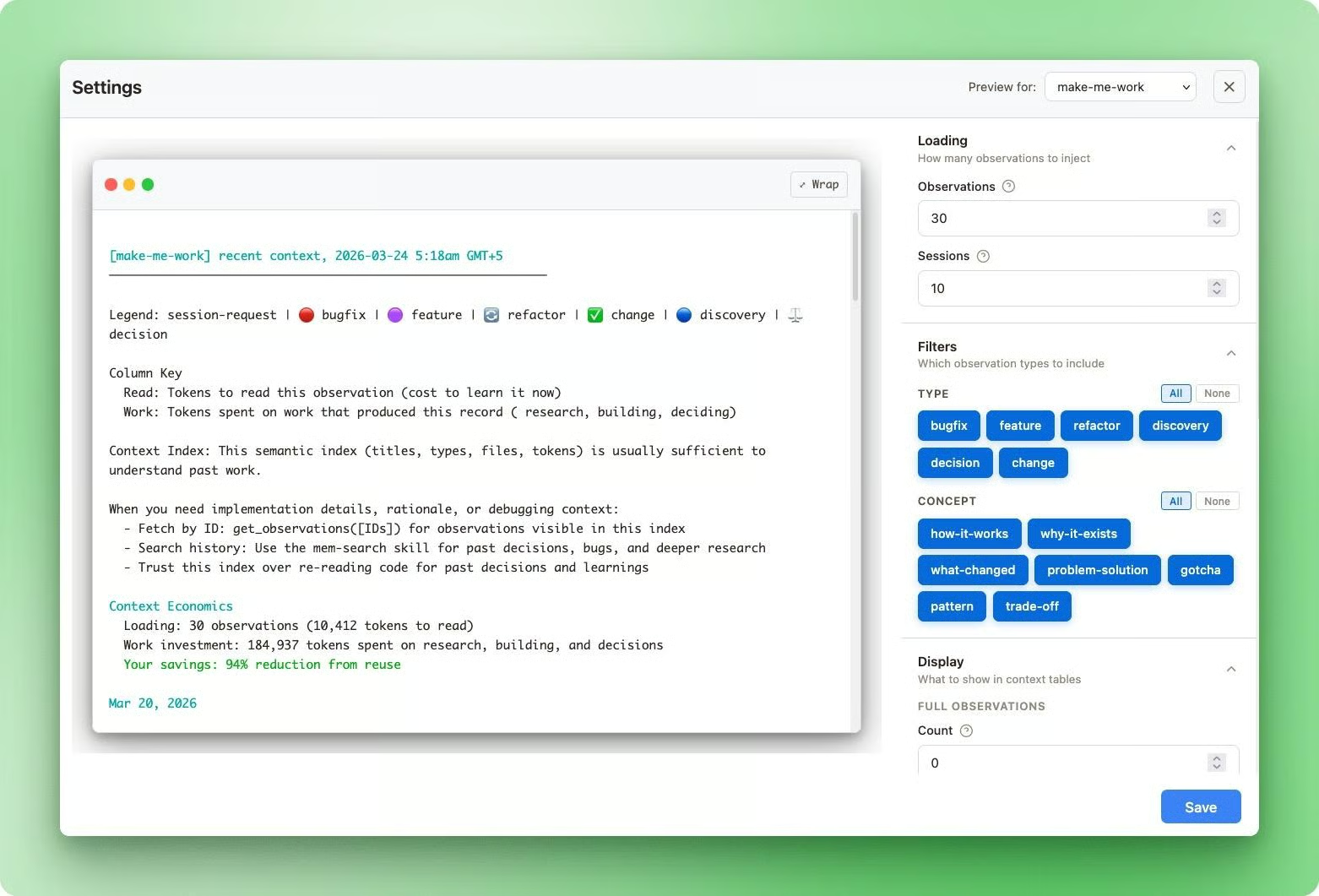

The naive approach is to dump historical context into the prompt. claude-mem's docs put numbers on this: a typical naive load sends 35,000 tokens to the context window, of which about 2,000 turn out to be relevant. That's a 6% signal rate.
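The 6% figure is just the ratio of relevant tokens to loaded tokens:

```latex
\text{signal rate} = \frac{\text{relevant tokens}}{\text{loaded tokens}} = \frac{2{,}000}{35{,}000} \approx 0.057 \approx 6\%
```

Put the other way, roughly 33,000 of the 35,000 loaded tokens are noise.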

A three-tier retrieval system pushes that above 80% by letting Claude load context progressively through claude-mem:

- Layer 1, search returns a compact index of observation IDs, titles, dates, and types. Cost: 50-100 tokens per result. You see what exists without loading it.

- Layer 2, timeline provides chronological context around a specific observation, showing what happened before and after it. Cost: 100-200 tokens per result.

- Layer 3, get_observations fetches full observation records by ID in batches. Cost: 500-1,000 tokens per result. Only pull what you actually need.
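Here is a minimal Python model of that progressive pattern. The Record and TieredMemory classes are hypothetical in-memory stand-ins, not claude-mem's MCP tools, and each result is charged the midpoint of the per-result cost ranges listed above (75, 150, and 750 tokens). It also assumes observation IDs are chronological, so "neighbors" in the timeline are adjacent IDs.

```python
from dataclasses import dataclass

# Midpoints of the per-result cost ranges above; real costs vary per record.
COST = {"search": 75, "timeline": 150, "get_observations": 750}


@dataclass
class Record:
    id: int
    title: str
    body: str


class TieredMemory:
    """Hypothetical in-memory model of the three retrieval layers."""

    def __init__(self, records: list[Record]) -> None:
        self.records = sorted(records, key=lambda r: r.id)  # ids assumed chronological
        self.tokens_spent = 0

    def search(self, term: str) -> list[tuple[int, str]]:
        """Layer 1: ids and titles only."""
        hits = [(r.id, r.title) for r in self.records if term.lower() in r.title.lower()]
        self.tokens_spent += COST["search"] * len(hits)
        return hits

    def timeline(self, obs_id: int, window: int = 1) -> list[tuple[int, str]]:
        """Layer 2: the observations just before and after one id."""
        around = [(r.id, r.title) for r in self.records if abs(r.id - obs_id) <= window]
        self.tokens_spent += COST["timeline"] * len(around)
        return around

    def get_observations(self, ids: list[int]) -> list[Record]:
        """Layer 3: full records, only for the ids you ask for."""
        wanted = set(ids)
        full = [r for r in self.records if r.id in wanted]
        self.tokens_spent += COST["get_observations"] * len(full)
        return full


records = [
    Record(i, f"auth note {i}" if i % 2 else f"ui note {i}", "full text")
    for i in range(1, 21)
]
mem = TieredMemory(records)
hits = mem.search("auth")                  # 10 hits -> 750 tokens
context = mem.timeline(hits[0][0])         # ids 1 and 2 -> 300 tokens
full = mem.get_observations([hits[0][0]])  # 1 record -> 750 tokens
print(mem.tokens_spent)                    # 1800
```

Fetching all 10 hits at full detail instead would cost 7,500 tokens in this model, which is the waste the tiering is meant to avoid.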

That retrieval discipline doesn't enforce itself.

claude-mem registers an MCP tool literally named __IMPORTANT whose only purpose is to remind Claude to follow this three-step pattern.

Without it, Claude skips the cheap layers and fetches everything at full detail, defeating the entire architecture. The fact that a dedicated tool exists only to enforce retrieval discipline gives you a realistic picture of how much the system had to be designed around Claude's actual behavior.

These retrieval tools aren't just used at session start.

During a session, when you ask Claude something about past work, it searches memory directly.

You can ask it to analyze your working patterns across sessions, find details you've forgotten ("where did I save that API key?", "how did we implement the auth flow?"), or pick up where you left off on a project you haven't touched in weeks.

When you're juggling multiple codebases and sessions, details leak out of your own memory faster than you'd expect. claude-mem fills that gap by giving Claude access to everything that happened, even the things you've personally forgotten.

After three weeks, 61% of my observations are typed as discovery. Claude mostly captures what it learns about a codebase rather than just the changes it makes.

Across 259 sessions, I have 1,729 session summaries, averaging about 6-7 per session. That multi-session continuity is only possible because the capture runs continuously, not just at the end.

That's the difference between summarizing a session and actually remembering it.

Configuring claude-mem

All of claude-mem's settings are accessible through the web UI at http://localhost:37777 under the Settings tab. You can also set them as environment variables or edit ~/.claude-mem/settings.json directly.
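If you script your setup, precedence between the two sources matters, and the article doesn't state it. The Python sketch below assumes environment variables override settings.json and that the file is a flat JSON object keyed by setting name; treat both as assumptions to verify in the web UI. The defaults are the ones quoted in this section, and the helper names are hypothetical.

```python
import json
import os
from pathlib import Path
from typing import Mapping, Optional

SETTINGS_PATH = Path.home() / ".claude-mem" / "settings.json"

# Defaults quoted in the article (kept as strings, like environment variables).
DEFAULTS = {
    "CLAUDE_MEM_MODEL": "haiku",
    "CLAUDE_MEM_CONTEXT_OBSERVATIONS": "50",
    "CLAUDE_MEM_CONTEXT_FULL_COUNT": "5",
}


def merge_settings(file_settings: Mapping[str, str], env: Mapping[str, str]) -> dict:
    """Layer defaults < settings.json < CLAUDE_MEM_* env vars (assumed order)."""
    merged = dict(DEFAULTS)
    merged.update(file_settings)
    merged.update({k: v for k, v in env.items() if k.startswith("CLAUDE_MEM_")})
    return merged


def load_settings(path: Path = SETTINGS_PATH,
                  env: Optional[Mapping[str, str]] = None) -> dict:
    """Read settings.json if it exists and merge it with the environment."""
    file_settings = json.loads(path.read_text()) if path.exists() else {}
    return merge_settings(file_settings, os.environ if env is None else env)
```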

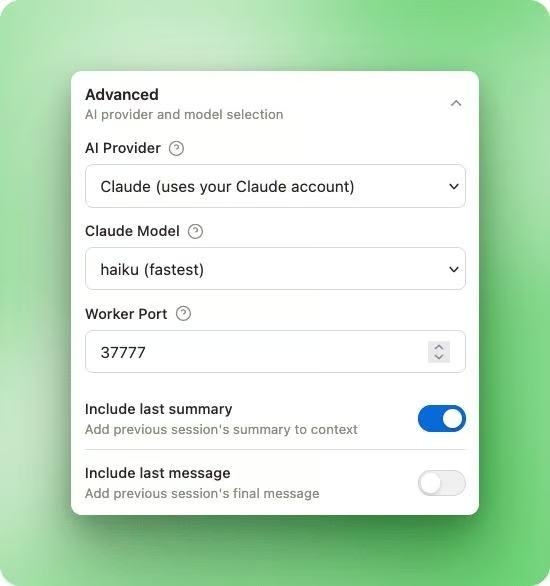

The first setting worth knowing about is CLAUDE_MEM_MODEL, which controls which model handles compression. The default is haiku, which is already the cheapest option in Claude's model lineup.

You can also switch the compression provider entirely with CLAUDE_MEM_PROVIDER, which accepts claude, gemini, or openrouter.

Running compression on Gemini Flash Lite or a free OpenRouter model like xiaomi/mimo-v2-flash:free drops the cost to zero beyond your existing Claude Code subscription.

I run mine on haiku with 30 observations per session. At roughly 400 input tokens and 150 output tokens per compression call, that comes out to about 16,500 tokens per session. At haiku's per-token rates, that works out to a few cents per session at most.
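The token arithmetic is easy to rerun with your own numbers. In this small Python helper, the 400/150 per-call figures come from the paragraph above, while the per-million-token rates are parameters you supply from Anthropic's current pricing page; no prices are hard-coded, and the function names are hypothetical.

```python
def tokens_per_session(observations: int, input_tokens: int = 400,
                       output_tokens: int = 150) -> int:
    """Compression tokens for one session: one call per observation."""
    return observations * (input_tokens + output_tokens)


def cost_per_session(
    observations: int,
    input_rate: float,   # dollars per million input tokens
    output_rate: float,  # dollars per million output tokens
    input_tokens: int = 400,
    output_tokens: int = 150,
) -> float:
    """Dollar cost of compression for one session at the given rates."""
    per_call = input_tokens * input_rate + output_tokens * output_rate
    return observations * per_call / 1_000_000


print(tokens_per_session(30))  # 16500
```

Multiply cost_per_session() by your number of sessions to get a monthly estimate for your own usage.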

After three weeks across ten projects, compression quality hasn't been an issue.

Two settings control how much context gets loaded at session start:

- CLAUDE_MEM_CONTEXT_OBSERVATIONS: total observation count injected at SessionStart (default 50, range 1-200)

- CLAUDE_MEM_CONTEXT_FULL_COUNT: how many of those show expanded detail with the full narrative field (default 5, range 0-20)

The rest display only a title, type, and date. All context injection is scoped to the project directory you're working in, so observations from other projects won't clutter your context.

You can preview exactly what gets injected and adjust these counts per-project through the web UI.
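The Python sketch below shows how those two counts and the project scoping could combine into one SessionStart block. It is illustrative only: the Obs class is hypothetical, a project name stands in for the working directory, and it assumes the newest observations are the ones shown with their narrative, which the article doesn't specify. Out-of-range counts are clamped to the documented 1-200 and 0-20 ranges.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Obs:
    """Hypothetical observation record for this sketch."""
    project: str
    created: date
    type: str
    title: str
    narrative: str


def clamp(value: int, low: int, high: int) -> int:
    return max(low, min(high, value))


def build_context(observations: list[Obs], project: str,
                  total: int = 50, full_count: int = 5) -> str:
    """Newest-first SessionStart block for one project (assumed ordering)."""
    total = clamp(total, 1, 200)           # CLAUDE_MEM_CONTEXT_OBSERVATIONS range
    full_count = clamp(full_count, 0, 20)  # CLAUDE_MEM_CONTEXT_FULL_COUNT range
    scoped = sorted(
        (o for o in observations if o.project == project),
        key=lambda o: o.created,
        reverse=True,
    )[:total]
    lines = []
    for i, o in enumerate(scoped):
        header = f"{o.created.isoformat()} [{o.type}] {o.title}"
        # The first full_count entries also carry their narrative.
        lines.append(f"{header}\n  {o.narrative}" if i < full_count else header)
    return "\n".join(lines)
```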

One thing worth expecting: in the first week on a new project, your context window may fill up faster than usual.

I almost uninstalled claude-mem during that initial stretch because sessions were hitting the context limit sooner than before.

What was actually happening: claude-mem was learning the project from scratch, recording a high volume of new observations that all got injected at session start.

After about a week, the volume of new discoveries dropped as Claude had already mapped the codebase, and sessions started lasting longer than they had before I installed the plugin.

If you hit that early overhead, lower CLAUDE_MEM_CONTEXT_OBSERVATIONS temporarily and raise it back once the initial learning period settles.

CLAUDE_MEM_SKIP_TOOLS lets you exclude specific tools from capture.

The defaults already skip high-noise tools like TodoWrite, AskUserQuestion, and BashTool. You probably won't need to touch this unless you have a custom tool generating output you don't want stored. It's a comma-separated list, so adding tools is straightforward.
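Matching a tool against a comma-separated list takes only a few lines. In this Python sketch, parse_skip_list() and should_capture() are hypothetical helpers and MyNoisyTool is an invented custom tool. The sketch tolerates spaces after commas, but whether claude-mem itself trims them isn't stated, so writing the real setting without spaces is the safer choice.

```python
def parse_skip_list(value: str) -> set[str]:
    """Split a comma-separated setting, trimming spaces and dropping empties."""
    return {name.strip() for name in value.split(",") if name.strip()}


def should_capture(tool_name: str, skip_setting: str) -> bool:
    """A tool call is captured unless its name appears in the skip list."""
    return tool_name not in parse_skip_list(skip_setting)


# Two of the defaults plus a hypothetical custom tool.
skip = "TodoWrite,AskUserQuestion,MyNoisyTool"
print(should_capture("Edit", skip))         # True
print(should_capture("MyNoisyTool", skip))  # False
```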

If you work with API keys or credentials, wrap them in <private> tags inside your prompts to exclude that content from storage.

claude-mem strips anything inside those tags before creating an observation.

It doesn't scan file contents proactively, so environment variables loaded from disk aren't at risk, but anything you paste directly into a prompt is. The <private> tag approach means the protection is opt-in: you have to remember to use it.
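A tag-stripping pass like the one described can be approximated with a regular expression. This Python sketch is an assumption about the behavior, not claude-mem's implementation: it removes each complete <private>...</private> block, including blocks that span lines, and it illustrates the catch with opt-in protection, since a tag you forget to close strips nothing.

```python
import re

# Non-greedy so two separate blocks don't swallow the text between them;
# DOTALL lets a block span several lines.
PRIVATE_BLOCK = re.compile(r"<private>.*?</private>", re.DOTALL)


def strip_private(prompt: str) -> str:
    """Remove every complete <private>...</private> block before storage."""
    return PRIVATE_BLOCK.sub("", prompt)


print(strip_private("deploy with <private>sk-live-123</private> today"))
# deploy with  today
print(strip_private("key: <private>sk-live-123"))
# key: <private>sk-live-123  (unclosed tag, nothing removed)
```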

claude-mem vs Built-in Memory and Alternatives

Claude Code already ships with memory features, but none of them capture context automatically.

CLAUDE.md files are static markdown loaded at session start, useful for project rules and preferences, but capped at around 200 lines before adherence drops. No search, no retrieval. You write your instructions once and hope Claude follows them.

Auto Memory, added in Claude Code v2.1.59, makes Claude itself responsible for deciding what to save between sessions. It stores unstructured notes at ~/.claude/projects/<project>/memory/ and loads the first 200 lines of a MEMORY.md file at startup.

In practice, what gets saved doesn't always line up with what you'd want, and there's no way to search or filter through it after the fact. You end up with a text file of decisions that Claude may or may not pay attention to.

The /compact command rounds out the built-in options by summarizing your conversation to free context space. CLAUDE.md files survive because they're re-read from disk, but everything else disappears: conversational instructions, mid-session context, anything you said but didn't write down somewhere.

claude-mem covers the gap none of these fill: automatic continuous capture with structured compression and token-aware retrieval. It's also not the only plugin doing this work.

| Tool | Architecture | Storage | Search | Capture timing | Pricing | Cross-machine | Team memory |
|---|---|---|---|---|---|---|---|
| Claude built-in | Native | Local markdown | None | Manual | Free | Via git sync | Via shared CLAUDE.md |
| claude-mem | Plugin (hooks) | Local SQLite + FTS5 | FTS5 keyword | Per tool call | Free | No | No |
| memsearch | Plugin (hooks + skill) | Local markdown + Milvus | Hybrid dense + BM25 | Session end | Free | No | No |
| supermemory | Plugin (hooks + cloud) | Cloud | Semantic + temporal | Session end | Paid | Yes | Yes |
| mem0 (self-hosted) | MCP server | Local Qdrant + Ollama | Semantic vector | Session end | Free | No | No |

memsearch is the closest free alternative if you want markdown files instead of a database and don't want a background process running. It runs retrieval in an isolated subagent, so search results never mix into your main context window. Use it if you prefer a simpler setup and don't need per-call capture.

supermemory is the right call if you need cross-machine sync and shared team memory, though it requires a paid subscription.

The self-hosted mem0 stack takes a different approach altogether: Qdrant and Neo4j for graph-based entity tracking, zero extra cost, but a heavier setup worth it only if you're already running that infrastructure.

claude-mem sits in the middle. Fully local, free, per-call capture with structured compression. The tradeoff is a background worker process on port 37777 and some rough edges that haven't been smoothed out yet.

claude-mem Limitations and Known Issues

The security picture is the biggest concern.

A community audit in February 2026 rated the risk as HIGH, and the issues remain open.

The HTTP API on port 37777 has zero authentication: any process on your machine can read every stored observation, view your settings (including any API keys in cleartext), and inject arbitrary memories into the database.

Default host binding was 0.0.0.0 rather than 127.0.0.1, which on cloud VMs or machines without a firewall means the API is exposed to the network.
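The difference between the two addresses is easy to check with Python's standard ipaddress module. exposure() below is a hypothetical helper that takes an IP literal (not a hostname like localhost) and reports who can reach a server bound to it. Binding to loopback only narrows the audience to local processes; with no authentication, any of those can still query the API.

```python
import ipaddress


def exposure(bind_host: str) -> str:
    """Describe who can reach a server bound to this IP address."""
    addr = ipaddress.ip_address(bind_host)  # raises ValueError for hostnames
    if addr.is_loopback:
        return "this machine only"
    if addr.is_unspecified:
        return "every network interface"
    return f"anyone who can route to {addr}"


print(exposure("127.0.0.1"))  # this machine only
print(exposure("0.0.0.0"))    # every network interface
```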

The smart_unfold and smart_outline tools also have a path traversal vulnerability with no directory boundary checks.

Run this on a personal dev machine only.

Reliability has a few sharp edges too.

The ChromaDB integration has a known subprocess leak: one user traced it to 184 orphaned processes in 19 hours, consuming about 16 GB of RAM.

The root cause was a corrupted ONNX model triggering infinite retry loops. Stick with FTS5 (SQLite's built-in full-text search engine) instead, which works without ChromaDB and has been reliable in my experience.
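To see what "FTS5 keyword" search means in practice, here is a self-contained Python example using the standard sqlite3 module. The obs table and its rows are invented for illustration and don't reflect claude-mem's actual schema; the example needs a SQLite build with FTS5 enabled, which most Python distributions include.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical FTS5 table; claude-mem's real schema is not shown in the article.
conn.execute("CREATE VIRTUAL TABLE obs USING fts5(title, narrative)")
conn.executemany(
    "INSERT INTO obs (title, narrative) VALUES (?, ?)",
    [
        ("Auth tokens expire early", "The refresh handler used seconds, not ms."),
        ("Sidebar layout fix", "Flexbox gap was missing in Safari."),
    ],
)


def keyword_search(term: str) -> list[str]:
    """Titles whose title or narrative contain the term, best BM25 rank first."""
    rows = conn.execute(
        "SELECT title FROM obs WHERE obs MATCH ? ORDER BY rank", (term,)
    )
    return [title for (title,) in rows]


print(keyword_search("refresh"))  # ['Auth tokens expire early']
print(keyword_search("login"))    # []
```

The empty result for login is the trade-off shown in the comparison table: FTS5 matches the words you type, while semantic-vector tools can match related concepts such as auth.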

On macOS with Apple Silicon, the worker cold start can exceed the hardcoded 5-second timeout when ChromaDB is enabled, causing the SessionStart hook to fail. This doesn't affect FTS5-only setups. There's also an active bug where the search and timeline MCP tools have empty parameter schemas, so Claude can't pass queries to them. get_observations works fine.

These aren't dealbreakers for local development on a personal machine. But they're worth knowing before you install something that has access to your entire session history.

Final Thoughts

Three weeks in, the main thing I notice is what I no longer do. I don't re-explain the project structure at the start of each session. I don't re-trace a debugging path we already walked. Claude arrives with context, and we start where we left off.

The architecture makes this possible in a way that simpler approaches don't. Capturing once at session end means losing everything if a session crashes. Dumping history without retrieval tiers means spending tokens on noise. The design choices here are deliberate, and knowing them helps you tune the tool rather than just trust it.

The security gaps covered above are real, and they're still open. This is worth running on a personal dev machine. It's not worth running on a cloud VM or a shared machine until those issues are patched. But for local solo development, the tradeoffs are manageable.

If you want to go deeper, DataCamp's Introduction to Claude is a solid starting point for understanding how Claude Code works before layering plugins on top of it.

claude-mem FAQs

What is claude-mem and what problem does it solve?

claude-mem is a Claude Code plugin that captures what happens during each coding session, compresses the raw tool outputs into structured observations, and injects relevant context back when you start a new session. It solves the blank-slate problem where every Claude Code session starts with zero memory of previous work, forcing you to re-explain your project structure and past decisions each time.

How do I install claude-mem?

Run two commands inside a Claude Code session: /plugin marketplace add thedotmack/claude-mem followed by /plugin install claude-mem, then restart Claude Code. The common mistake is running npm install -g claude-mem, which only installs the SDK library without registering the hooks or starting the background worker. The only prerequisite is Node.js 18 or higher; everything else auto-installs.

How is claude-mem different from CLAUDE.md and Auto Memory?

CLAUDE.md files are static markdown with no search or retrieval, capped at about 200 lines before adherence drops. Auto Memory lets Claude decide what to save, but it's unstructured and not searchable. claude-mem captures automatically after every tool call, compresses observations into a typed schema with fields like type, title, facts, and narrative, and retrieves them through a three-tier system that loads only what's relevant instead of dumping everything into context.

Does claude-mem cost extra money to run?

claude-mem uses your existing Claude Code authentication for compression, so there's no separate API key or account required. The default compression model is haiku, the cheapest in Claude's lineup. You can also switch the provider to Gemini or OpenRouter to run compression on free models, bringing the additional cost to zero beyond your existing Claude Code subscription.

Is claude-mem safe to use?

claude-mem stores all data locally on your machine, but a February 2026 community security audit rated it HIGH risk. The HTTP API on port 37777 has no authentication, meaning any local process can read stored observations and settings. The recommendation is to run it only on a personal development machine, not on cloud VMs or shared servers. Stick with FTS5 for search instead of ChromaDB to avoid a known subprocess leak issue.

I am a data science content creator with over 2 years of experience and one of the largest followings on Medium. I like to write detailed articles on AI and ML with a bit of a sarcastic style because you've got to do something to make them a bit less dull. I have produced over 130 articles and a DataCamp course to boot, with another one in the making. My content has been seen by over 5 million pairs of eyes, 20k of whom became followers on both Medium and LinkedIn.