Programa

Dois dos lançamentos de modelo mais comentados do início de 2026 vêm de lugares bem diferentes. O Muse Spark, da Meta, é o primeiro modelo do Meta Superintelligence Labs e marca uma ruptura deliberada com a linhagem Llama. O Claude Opus 4.6, da Anthropic, chegou mais cedo no ano como um upgrade do carro-chefe da empresa, com janela de contexto de 1 milhão de tokens e nota máxima no Terminal-Bench 2.0.

Escolher entre eles não é tão óbvio. O Muse Spark é multimodal nativo, com três modos distintos de raciocínio e foco em eficiência computacional. O Claude Opus 4.6 foi concebido para coding agentivo, fluxos de trabalho de longa duração e raciocínio profundo, com Agent Teams e pensamento adaptativo integrados. Ambos são proprietários e apenas na nuvem, o que já reduz o leque em relação a alternativas com pesos abertos.

Neste artigo, eu comparo o Muse Spark e o Claude Opus 4.6 em seis dimensões principais: arquitetura e filosofia de design, raciocínio e benchmarks, capacidades multimodais, recursos agentivos, acesso e disponibilidade, além de privacidade e licenciamento.

Se você quer aprender mais sobre os modelos de linguagem da Anthropic (LLMs), recomendo nosso curso Introduction to Claude Models. E não deixe de conferir também nossa outra comparação: GPT-5.4 vs Claude Opus 4.6.

Atualização: Logo após a publicação deste artigo, saiu uma nova versão do Opus. Leia nosso guia do Claude Opus 4.7.

O que é o Muse Spark?

O Muse Spark é o primeiro modelo lançado sob a família Muse, originalmente com o codinome "Avocado" durante o desenvolvimento. Foi construído pelo Meta Superintelligence Labs, uma divisão criada pela Meta em junho de 2025 após um investimento reportado de US$ 14,3 bilhões que incluiu a contratação de Alexandr Wang, da Scale AI. O modelo foi lançado em 8 de abril de 2026.

A principal decisão de design por trás do Muse Spark foi reconstruir todo o pipeline de treinamento do zero. Em vez de estender a arquitetura Llama, a equipe da Meta recomeçou com multimodalidade nativa em texto, imagens, áudio e uso de ferramentas. O resultado, segundo a Meta, é um modelo que iguala o desempenho do Llama 4 Maverick usando uma ordem de grandeza a menos de computação.

O Muse Spark oferece três modos de raciocínio:

- Instant para respostas rápidas

- Thinking para chain-of-thought em problemas complexos

- Contemplating para raciocínio multiagente em paralelo (ainda em rollout gradual)

O modelo é apenas na nuvem, acessível via meta.ai ou pelo app Meta AI, com uma API em visualização privada para parceiros corporativos selecionados.

O que é o Claude Opus 4.6?

O Claude Opus 4.6 é o mais novo modelo carro-chefe da Anthropic, lançado no início de 2026 como um upgrade do Opus 4.5. A Anthropic o descreve como seu nível de modelo mais inteligente, com foco em coding agentivo, raciocínio profundo e autocorreção. Ele lidera o benchmark de programação Terminal-Bench 2.0 e está no mesmo patamar dos líderes em outros benchmarks, como o BrowseComp para pesquisa de informações.

O grande destaque é a janela de contexto de 1 milhão de tokens, atualmente em beta. Isso coloca o Opus 4.6 lado a lado com o Gemini 3 em extensão de contexto e o torna viável para grandes bases de código e tarefas agentivas de longa duração. Junto com o modelo, a Anthropic lançou o Agent Teams no Claude Code, permitindo que várias instâncias independentes do Claude trabalhem em paralelo em uma mesma tarefa.

O Claude Opus 4.6 está disponível via Claude API (ID do modelo: claude-opus-4-6), Claude Code e Claude no PowerPoint. É proprietário e apenas na nuvem, sem versão com pesos abertos.

Muse Spark vs Claude Opus 4.6: comparação direta

Sem enrolação, vamos comparar os dois modelos em algumas categorias relevantes.

Guia rápido de decisão

Se você quer uma resposta rápida antes de ir aos detalhes, esta tabela mapeia cenários comuns para o modelo mais indicado.

| Caso de uso | Recomendado | Por quê |

|---|---|---|

| Coding agentivo com agentes em paralelo | Claude Opus 4.6 | Agent Teams no Claude Code, 80,8 no SWE-Bench Verified |

| Análise de documentos com longo contexto | Claude Opus 4.6 | Janela de contexto de 1M de tokens (beta) |

| Raciocínio multimodal (texto + imagens + áudio) | Muse Spark | Multimodalidade nativa desde a base, chain-of-thought visual |

| Inferência com eficiência computacional | Muse Spark | Iguala o Llama 4 Maverick com 10x menos computação |

| Matemática e raciocínio complexos | Claude Opus 4.6 | Melhores notas em benchmarks de raciocínio |

| Acesso a API corporativa | Claude Opus 4.6 | API pública disponível; API do Muse Spark é apenas preview privado |

| Raciocínio extremo em múltiplas etapas | Muse Spark (Contemplating) | Modo de raciocínio multiagente em paralelo; compete com Gemini Deep Think e GPT Pro |

| Integração com PowerPoint e Excel | Claude Opus 4.6 | Claude no PowerPoint e Claude no Excel são integrações prontas |

| Casos de uso em saúde | Muse Spark | Ponto forte do Muse Spark: 42,8 vs. 14,8 no HealthBench Hard |

Arquitetura e filosofia de design

Como um modelo é construído determina no que ele é bom. Muse Spark e Claude Opus 4.6 refletem apostas genuinamente diferentes sobre o rumo da IA de fronteira.

A Meta reconstruiu seu pipeline de treinamento do zero para o Muse Spark. O modelo é multimodal nativo, ou seja, texto, imagens, áudio e uso de ferramentas foram treinados juntos, e não acoplados depois. Isso contrasta diretamente com a série Llama, que a própria Meta descreveu como baseada em reconhecimento de padrões.

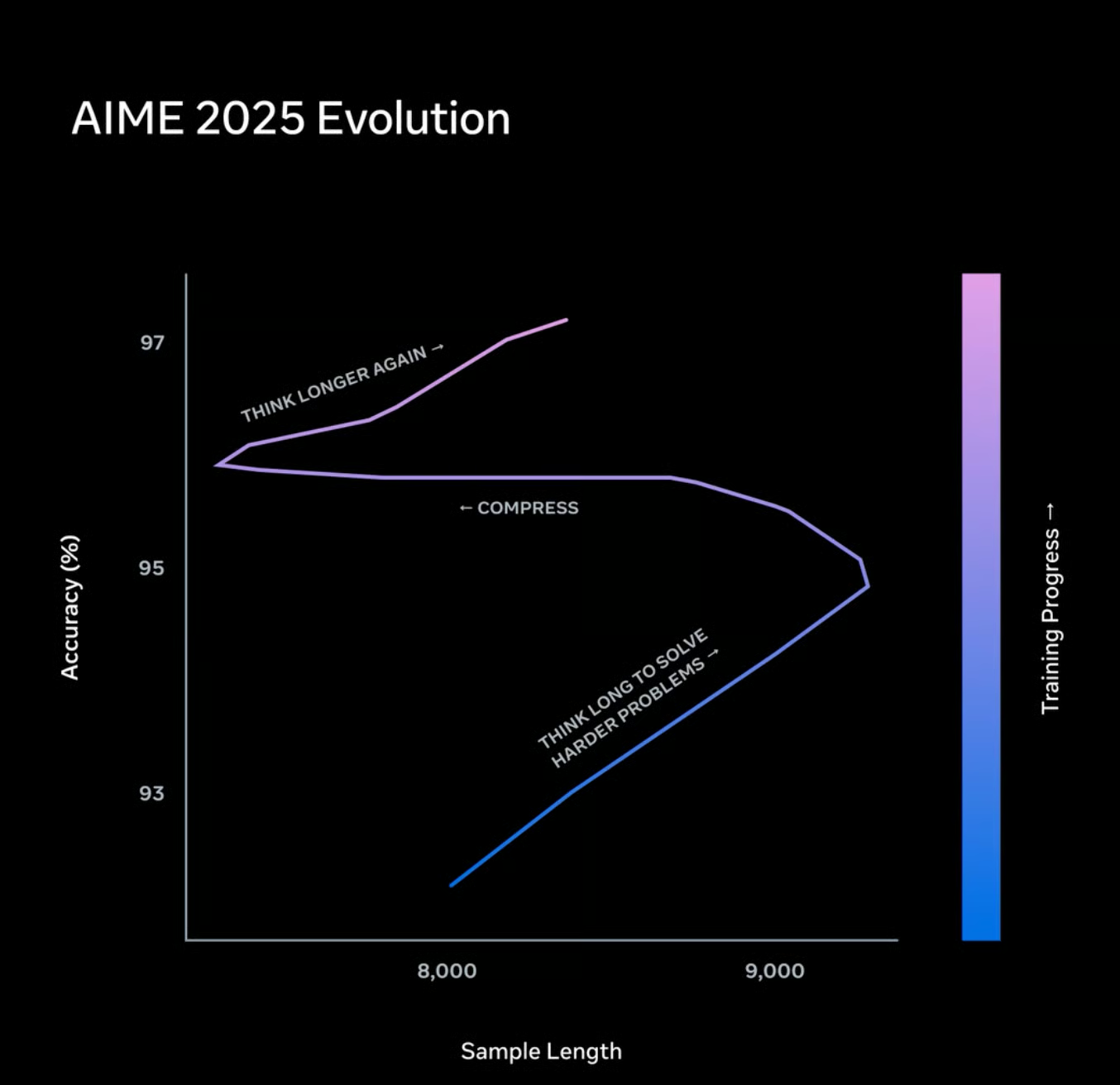

Uma das escolhas técnicas mais interessantes é a Thought Compression, uma técnica de aprendizado por reforço que penaliza o excesso de tokens durante o raciocínio. O objetivo é eficiência: o modelo é incentivado a pensar bem sem gerar etapas intermediárias desnecessárias. Isso ajuda a explicar por que o Muse Spark iguala o desempenho do Llama 4 Maverick com uma fração do custo computacional.

O foco de design da Anthropic para o Opus 4.6 é ação sustentada, não apenas performance em uma única interação. O modelo foi feito para planejar com cuidado, manter coerência por longos períodos e identificar erros no próprio raciocínio. O pensamento adaptativo permite ao modelo decidir se um prompt merece um chain-of-thought mais longo, e o parâmetro de esforço dá aos desenvolvedores controle manual sobre esse trade-off.

Os níveis de esforço valem a pena entender se você usa a API:

- Max effort: Sempre usa pensamento estendido, sem limites de profundidade

- High effort: Padrão; sempre pensa e oferece raciocínio aprofundado

- Medium effort: Pensamento moderado, pode pular em consultas simples

- Low effort: Pula o pensamento em tarefas simples, prioriza velocidade

A pilha reconstruída do Muse Spark é uma ruptura arquitetural mais radical, e a história de eficiência computacional é realmente impressionante. Já o pensamento adaptativo e os controles de esforço do Claude Opus 4.6 são imediatamente úteis para desenvolvedores que precisam de controle fino entre custo e minúcia.

Raciocínio

Números de benchmark são proxies imperfeitos, mas ainda são o sinal mais claro para comparar modelos que a maioria das pessoas não testou lado a lado.

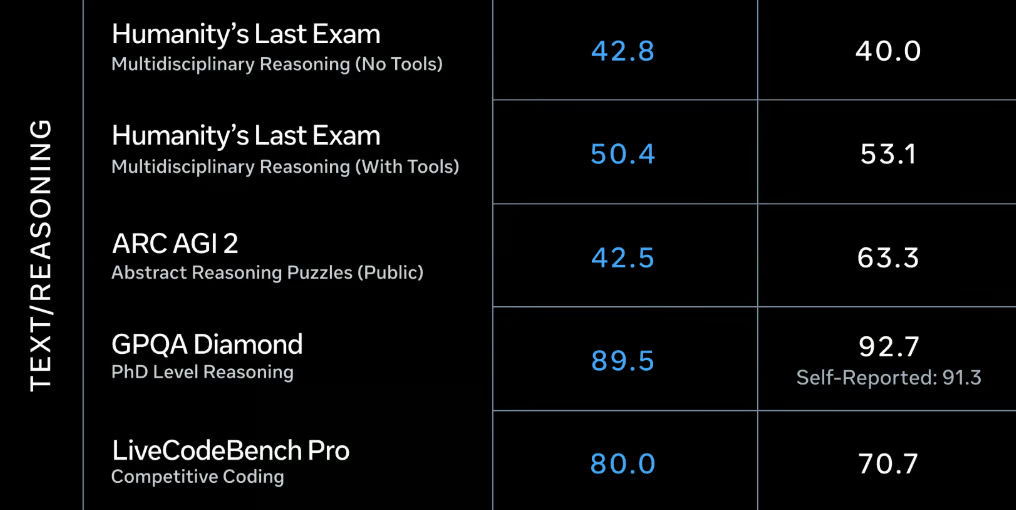

Benchmarks de texto/raciocínio. Notas do Muse Spark (Thinking) à esquerda, Claude Opus 4.6 (Max) à direita. Fonte: Meta

Comparando os modelos no domínio de texto/raciocínio, vemos os seguintes padrões:

- Em raciocínio relacionado a código, o Claude Opus 4.6 assume a liderança, como esperado (80,0 vs. 70,7 no LiveCodeBench Pro)

- O mesmo vale para quebra-cabeças de raciocínio abstrato, medidos no ARC AGI 2, onde a diferença é ainda maior (63,3 vs. 42,5 para o Muse Spark)

- Em GPQA Diamond e Humanity's Last Exam, ambos estão praticamente empatados. Uma observação interessante para este último é que o Muse Spark lidera ligeiramente no raciocínio sem uso de ferramentas, enquanto o Opus 4.6 obtém melhor nota com ferramentas. Segundo a Meta, o modo Contemplating leva o Muse Spark a 50,2 sem e 58,4 com ferramentas, colocando-o no topo do ranking

No geral, o Claude Opus 4.6 parece a melhor escolha quando é exigido raciocínio bem abstrato, enquanto o Muse Spark fica no mesmo nível em raciocínio de bom senso e específico de domínio.

Capacidades multimodais

Ambos lidam com mais do que texto, mas a profundidade desse suporte difere bastante.

A multimodalidade é central na identidade do Muse Spark, não um complemento. O modelo foi treinado nativamente com texto, imagens, áudio e dados estruturados juntos. Chain-of-thought visual é um recurso específico: o modelo consegue resolver problemas baseados em imagem passo a passo, não apenas descrever o que vê. O uso de ferramentas também é nativo, o que importa para fluxos agentivos que envolvem chamadas a APIs externas ou processamento de dados estruturados junto de entradas não estruturadas.

O Claude Opus 4.6 aceita entradas multimodais, mas as notas de pesquisa não o descrevem como multimodal nativo no mesmo sentido arquitetural do Muse Spark. A principal integração multimodal do modelo está no lado da saída: o Claude no PowerPoint gera objetos editáveis de slides, e não imagens, e o Claude no Excel rastreia dependências de fórmulas entre abas.

Benchmarks multimodais. Notas do Muse Spark (Thinking) à esquerda, Claude Opus 4.6 (Max) à direita. Fonte: Meta

No domínio multimodal, o Muse Spark mostra sua força: ele lidera contra o Claude Opus 4.6 em todos os benchmarks citados. Os resultados abaixo são especialmente impressionantes:

- O Muse Spark lidera o benchmark CharXiv Reasoning para entendimento de figuras com nota 86,4 (Claude Opus 4.6: 65,3)

- Em entendimento multimodal (80,4 no MMMU Pro), o Muse Spark está no nível do atual líder, o GPT-5.4

- Tanto em raciocínio incorporado (64,7 vs. 51,6 no ERQA) quanto em factualidade visual (71,3 vs. 62,2 no SimpleVQA), o Muse Spark obtém notas significativamente melhores que o Opus 4.6

Para tarefas que misturam texto, imagens e áudio no nível do modelo, o Muse Spark tem uma base mais forte. Para fluxos corporativos com documentos e planilhas, as integrações do Claude Opus 4.6 são mais práticas de imediato.

Recursos agentivos

Os dois modelos miram casos de uso agentivos, mas abordam o problema de formas diferentes.

O modo Contemplating do Muse Spark é sua aposta agentiva. Em vez de um único modelo raciocinar de forma sequencial, o Contemplating cria vários agentes em paralelo, cada um trabalhando em parte do problema, com verificação cruzada dos resultados entre agentes. Isso é semelhante, em espírito, ao Agent Teams do Claude, mas embutido no próprio modo de raciocínio, e não exposto como um recurso separado de API.

O Agent Teams no Claude Code é o destaque agentivo do Opus 4.6. Você pode criar várias instâncias independentes do Claude, com uma atuando como coordenadora e as demais executando, cada qual com sua própria janela de contexto. Isso significa que fluxos paralelos não competem pelo mesmo orçamento de tokens, mas os custos podem escalar rapidamente. A Anthropic recomenda o Agent Teams para cenários de alta complexidade, em que a execução paralela justifique a despesa.

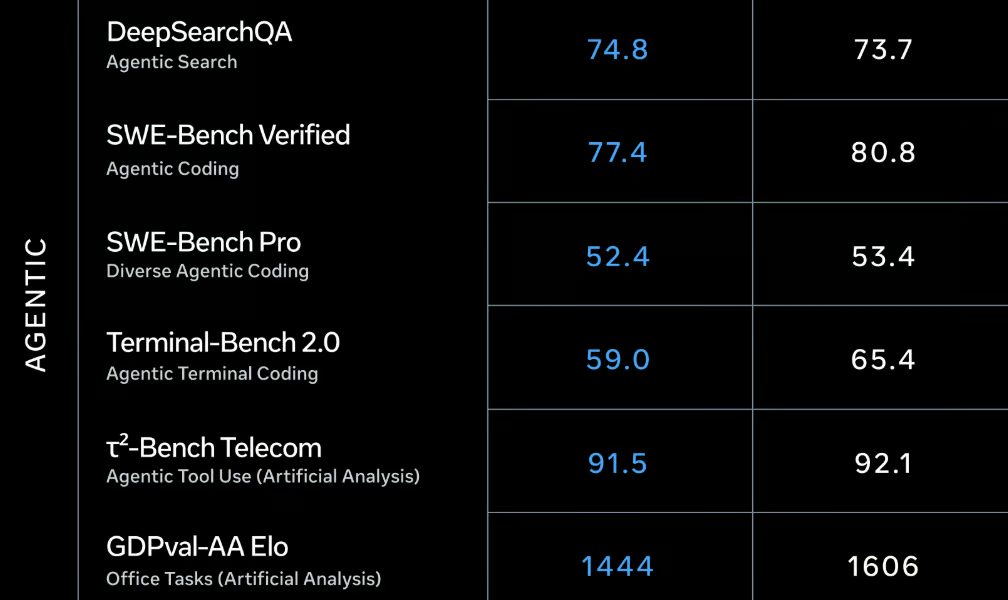

Benchmarks agentivos. Notas do Muse Spark (Thinking) à esquerda, Claude Opus 4.6 (Max) à direita. Fonte: Meta

No geral, a maioria das notas em benchmarks agentivos é bem próxima entre os dois modelos, mas o Opus 4.6 tem uma leve vantagem sobre o Muse Spark. Os destaques:

- Nos três benchmarks de coding agentivo (SWE-Bench Verified e Pro, Terminal-Bench 2.0), o Opus 4.6 lidera. Dito isso, as notas do Muse Spark ainda são muito boas, especialmente considerando que o Opus 4.6 lidera o Terminal-Bench 2.0 (65,4 vs. 59,0 aqui)

- No GDPval-AA, que mede tarefas de escritório do dia a dia, a diferença é a maior. O Claude Opus 4.6 (1606) está em segundo lugar, atrás do seu "irmão menor", o Claude Sonnet 4.6 (1633), e o Muse Spark fica bem atrás (1444)

- O Muse Spark supera o Claude Opus 4.6 em busca agentiva (74,8 vs. 73,7 no DeepSearchQA), o que surpreende

As capacidades agentivas do Claude Opus 4.6 são mais maduras e melhores para a maioria das tarefas. O modo Contemplating do Muse Spark é promissor, mas ainda está sendo liberado gradualmente, o que limita o que dá para construir com ele hoje.

Casos de uso em saúde

Embora não seja uma categoria clássica para comparar LLMs, desempenho em cenários relacionados à saúde merece menção, já que um dos objetivos do Muse Spark é ajudar as pessoas a aprender sobre e melhorar sua saúde. A Meta colaborou com mais de 1.000 médicos para selecionar dados de treinamento médico sobre dúvidas comuns, como conteúdo nutricional de alimentos ou músculos ativados durante exercícios.

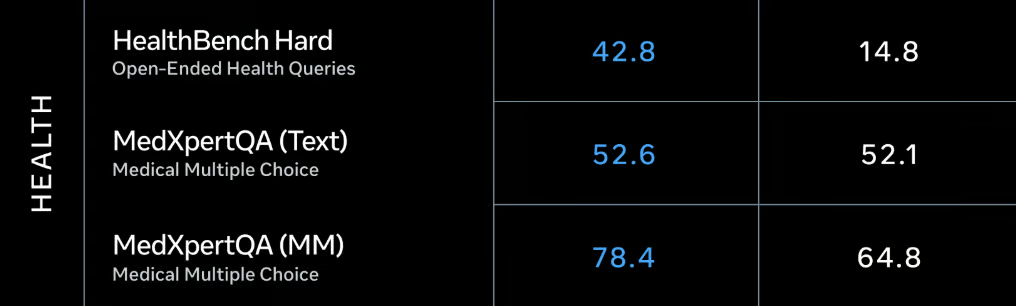

Benchmarks de saúde. Notas do Muse Spark (Thinking) à esquerda, Claude Opus 4.6 (Max) à direita. Fonte: Meta

O foco em saúde aparece nas notas. Como padrão geral, quanto menos padronizadas são as perguntas, maior a diferença entre os modelos.

- O Claude Opus 4.6 consegue competir em avaliações médicas de múltipla escolha (52,1 vs. 52,6 na versão em texto do MedXpertQA)

- Em avaliações multimodais de múltipla escolha, a diferença aumenta, já que o Muse Spark lidera o Opus 4.6 por mais de dez pontos percentuais na versão multimídia do MedXpertQA

- Por fim, em perguntas abertas de saúde, o Muse Spark quase triplica a nota do Opus 4.6 (42,8 vs. 14,8 no HealthBench Hard)

Especialmente em combinação com as habilidades multimodais do Muse Spark, isso abre uma série de aplicações bem legais para o dia a dia. Pense em tirar uma foto da sua geladeira e receber um plano alimentar personalizado para a semana de acordo com suas metas nutricionais. Resta ver como essas ferramentas funcionam na prática, mas a perspectiva é animadora.

Acesso

Ambos os modelos são proprietários e apenas na nuvem, mas a história de acesso é bem diferente.

O Muse Spark está disponível via meta.ai e pelo app Meta AI, ambos exigindo conta Meta. Há uma API em visualização privada para alguns parceiros corporativos, mas não existe API pública nem data confirmada para acesso mais amplo. A Meta afirmou que espera abrir o código de versões futuras do Muse, mas o próprio Muse Spark é fechado, sem opção de download ou fine-tuning.

Sobre privacidade: a política da Meta permite que dados de conversas sejam usados para melhoria de modelos. Se você trabalha com dados sensíveis, vale considerar isso antes de direcioná-los ao Muse Spark.

O Claude Opus 4.6 está disponível via API pública do Claude usando o ID claude-opus-4-6. Também é acessível pelo web app do Claude, pelo Claude Code, pelo Claude Cowork e pelos apps móveis do Claude para iOS/Android. Na interface web, o acesso é restrito a assinantes pagos. O Agent Teams é experimental no Claude Code.

Para quem precisa de acesso à API hoje, o Claude Opus 4.6 é a única opção. A API em preview privado do Muse Spark significa que a maioria dos desenvolvedores ainda não consegue construir com ele, independentemente da qualidade do modelo.

Muse Spark vs Claude Opus 4.6: qual você deve escolher?

Como os pontos fortes e fracos de ambos são bem distintos, dá para indicar com clareza os casos de uso de cada um.

Quando escolher o Muse Spark

O Muse Spark é a melhor opção em um conjunto específico de cenários, a maioria girando em torno de entradas multimodais e eficiência computacional.

- Seu fluxo de trabalho mistura texto, imagens e áudio no nível do modelo, não só como anexos

- Seu caso de uso envolve perguntas médicas

- Você precisa de chain-of-thought visual em problemas baseados em imagem

- O custo computacional é um limitador e você quer desempenho de ponta com custo de inferência menor

- Você trabalha em problemas que se beneficiam de verificação multiagente em paralelo (quando o modo Contemplating estiver totalmente disponível)

- Você já está no ecossistema Meta e tem acesso à API corporativa em preview

Um alerta sincero: o acesso público ao Muse Spark é limitado hoje. Se você não entrar no preview corporativo, vai usar via meta.ai, o que é ótimo para explorar, mas não para construir fluxos de produção.

Quando escolher o Claude Opus 4.6

O Claude Opus 4.6 é a escolha mais forte para a maioria dos desenvolvedores e cientistas de dados hoje, principalmente porque ele é realmente acessível.

-

Você precisa de uma API pública com um ID de modelo documentado (

claude-opus-4-6) -

Coding agentivo é seu caso de uso principal, especialmente com Claude Code e Agent Teams

-

Você trabalha com grandes bases de código que se beneficiam de janela de contexto de 1 milhão de tokens

-

Você quer performance de ponta em benchmarks de programação

-

Você deseja controle fino sobre a profundidade do raciocínio via parâmetro de esforço

-

Seu time usa PowerPoint ou Excel e quer IA integrada diretamente nessas ferramentas

O recurso Agent Teams ainda é experimental e os custos de tokens escalam rápido quando você roda agentes em paralelo. Mas, para tarefas complexas de desenvolvimento de software, o modelo de execução paralela é realmente útil, e a compactação de conversas mantém agentes de longa duração no trilho.

Considerações finais

A verdade é que esses dois modelos não estão competindo exatamente pelo mesmo público agora. O Claude Opus 4.6 é um modelo maduro, acessível, líder em benchmarks, com API pública, recursos documentados e integrações reais. O Muse Spark é um primeiro lançamento tecnicamente interessante de um novo laboratório, com acesso público limitado e menos números publicados. Esse gap pode fechar rápido, mas é a realidade em abril de 2026.

Se você é desenvolvedor ou cientista de dados e precisa construir algo hoje, o Claude Opus 4.6 é a escolha prática. As notas em benchmarks de programação, a janela de contexto de 1M de tokens e o Agent Teams no Claude Code são coisas que você pode usar de fato. A multimodalidade nativa e a Thought Compression do Muse Spark são realmente interessantes, mas é mais difícil avaliá-las sem um acesso mais amplo à API.

Onde eu acompanharia o Muse Spark de perto é em tarefas de raciocínio multimodal quando o modo Contemplating estiver totalmente disponível. A abordagem multiagente paralela para problemas difíceis é uma aposta diferente de simplesmente escalar tokens de inferência e, se as alegações de eficiência da Meta se confirmarem em testes independentes, a história de custo computacional fica muito atraente para produção.

Se você quer desenvolver aplicações de IA, recomendo fortemente se matricular na nossa trilha de habilidades AI Engineering with LangChain. O conteúdo é nativo em IA, o que significa que você tem um tutor pessoal que ensina exatamente as habilidades de que precisa para, a partir do seu nível, virar um verdadeiro especialista em projetar fluxos de trabalho de IA.

Editor de Ciência de Dados @ DataCamp | Fazer previsões e construir com APIs é a minha paixão.