programa

Las últimas noticias de OpenAI son especialmente interesantes para quienes usan GPT-5 mini: el nuevo modelo GPT-5.4 mini es el doble de rápido y mejora el rendimiento en todas las áreas. Además, OpenAI ha lanzado la versión más reciente de su clase de modelos más eficiente, GPT-5.4 nano.

En este artículo, veremos qué son GPT-5.4 mini y nano, cómo rinden en comparación con GPT-5.4 y quién puede beneficiarse de los nuevos modelos de «vía rápida» de OpenAI.

No te pierdas también nuestra comparativa de GPT-5.4 vs Claude Opus y nuestra guía de OpenAI Frontier.

¿Qué es GPT-5.4 mini?

GPT-5.4 mini es el nuevo LLM pequeño de OpenAI, que sustituye a GPT-5 mini. Aporta varias mejoras importantes respecto al rendimiento de su predecesor y, además, es el doble de rápido, uno de sus principales atractivos.

En la API admite un amplio abanico de funciones:

- Entrada de texto e imagen

- Uso de herramientas y function calling

- Búsqueda web

- Uso del ordenador

- Skills

¿Qué es GPT-5.4 nano?

GPT-5.4 nano es la versión más pequeña de la nueva línea de modelos de OpenAI y sustituye a GPT-5 nano. Como sugiere el nombre, es aún más eficiente que el modelo mini, a costa de un menor rendimiento. Aun así, GPT-5.4 nano supera al antiguo modelo mini, GPT-5 mini, en muchos benchmarks.

No admite tantas funciones como el modelo mini, pero ofrece las capacidades estándar actuales de la API, como entrada de imágenes, uso de herramientas, function calling y salidas estructuradas.

¿Para quién son realmente GPT-5.4 mini y nano?

Esta versión ofrece flexibilidad para elegir el modelo adecuado, teniendo en cuenta el clásico equilibrio entre rendimiento por un lado, y latencia y precio por el otro.

OpenAI recomienda mini y nano para desarrolladores que trabajan en aplicaciones donde no quieres latencia. En esencia, casos que deben sentirse ágiles, en los que los usuarios toleran muy poco cualquier retraso.

Para tareas con mucha carga de razonamiento y poco margen de error, multimodalidad y tareas agentic, GPT-5.4 sigue siendo la primera opción.

Benchmarks de GPT-5.4 mini y nano

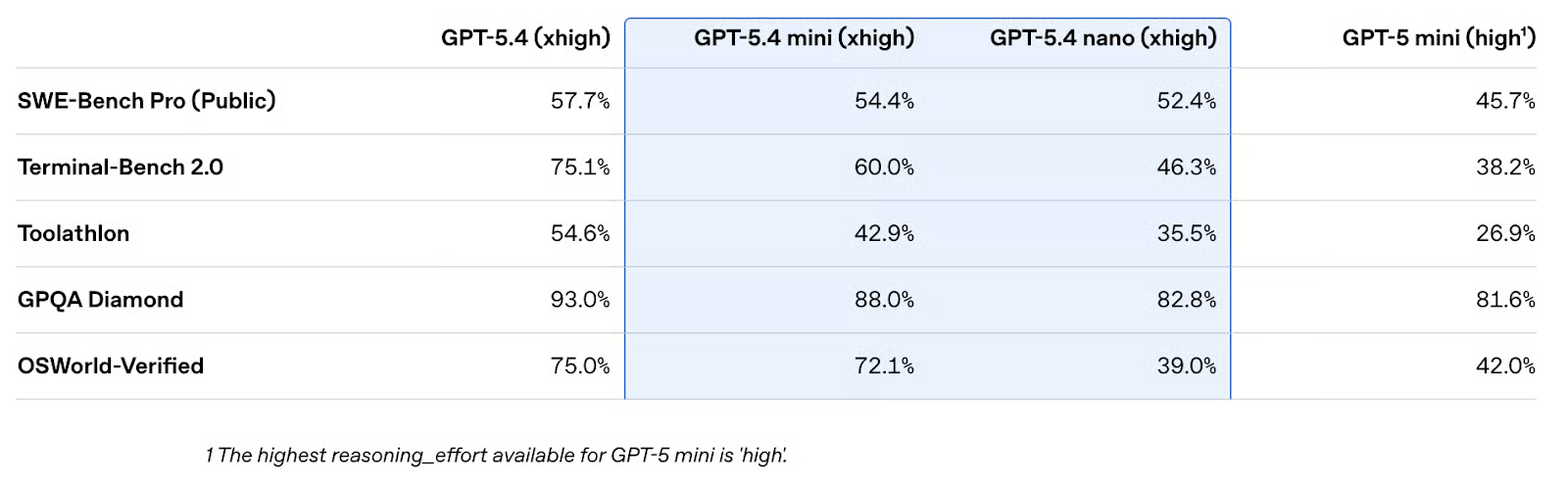

Echemos un vistazo a los benchmarks de LLM. Algunos resultados destacables:

- Programación: Tanto 5.4 mini (54,4%) como nano (52,4%) alcanzan una puntuación en SWE‑Bench Pro superior al 50% y no se quedan muy atrás de GPT-5.4. La mejora frente a GPT-5 mini (45,7%) es notable.

- Agentes de terminal: En Terminal‑Bench 2.0 se aprecia bien la distancia entre los tres sabores de los modelos 5.4. GPT-5.4 mini (60,0%) puede competir con antiguos modelos insignia como GPT 5.2 (62,2%), y 5.4 nano (46,3%) con GPT-5 (49,6%), pero ambos quedan lejos del tope de rendimiento de GPT-5.4.

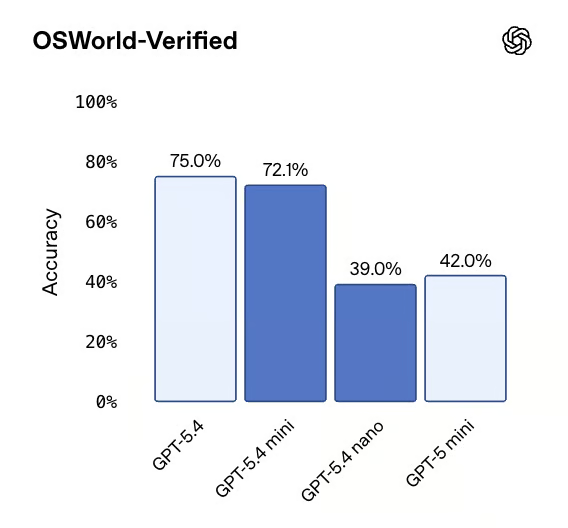

- Uso del ordenador: Mientras GPT-5.4 mini logra un impresionante 72,1% en OSWorld‑Verified, muy cerca de GPT-5.4, GPT-5.4 nano se queda claramente atrás (39,0%). Es evidente que no está pensado para tareas de uso del ordenador.

Otra cosa que nos llamó la atención enseguida fue que el orden de las puntuaciones se mantenía (casi) en todas las categorías: GPT-5.4 > GPT-5.4 mini > GPT-5.4 nano > GPT-5 mini. En todas las puntuaciones publicadas, la única excepción fue que el modelo mini antiguo superó a GPT-5.4 nano en visión y uso del ordenador, que no son los focos de nano.

No obstante, no está claro cuánto influye el nuevo nivel de esfuerzo de razonamiento «xhigh», que no estaba disponible para GPT-5 mini.

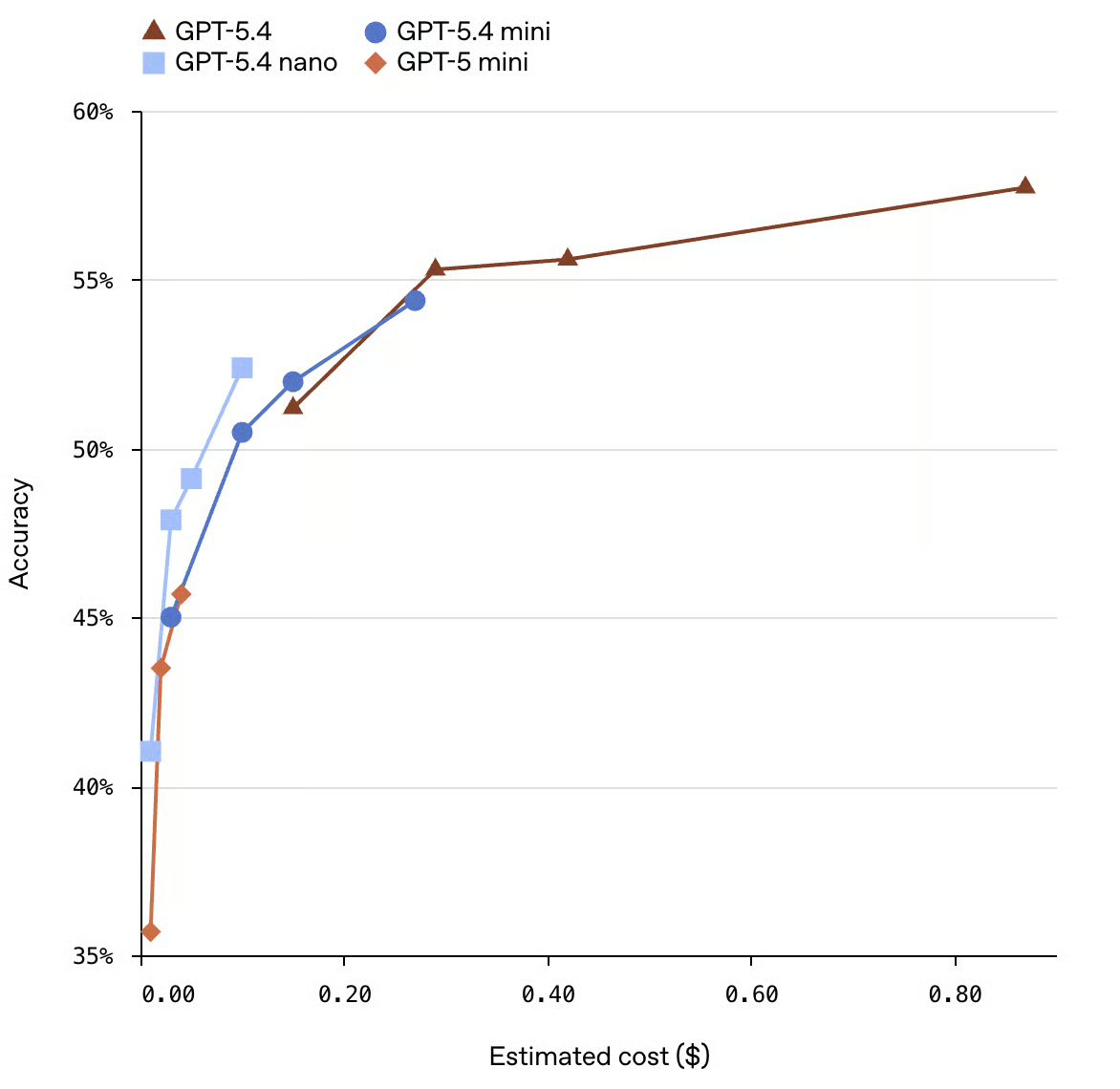

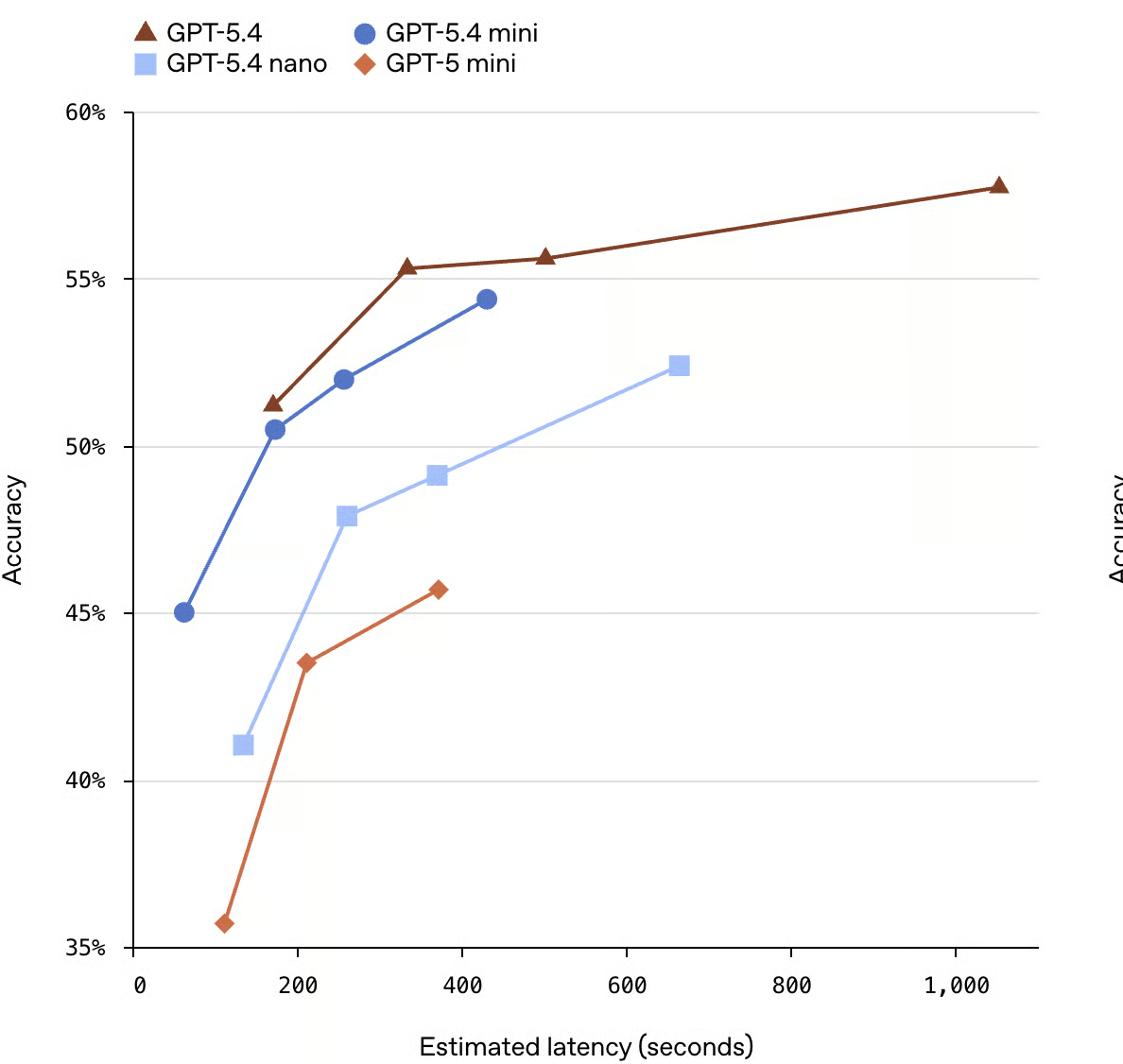

Pero, por supuesto, el rendimiento no lo es todo. OpenAI quiere subrayar el fenómeno de los rendimientos decrecientes, como muestran los gráficos que han compartido. De los cuatro modelos comparados, GPT-5.4 es el más lento y el más caro.

Las curvas ilustran los rendimientos decrecientes: puedes invertir más cómputo/dinero en un modelo y obtener mejoras modestas en precisión, pero los saltos se vuelven cada vez menores. Los últimos puntos porcentuales de GPT-5.4 cuestan mucho más que los primeros. Este tipo de gráfico ayuda a los ingenieros a decidir si merece la pena exprimir ese 3–4% adicional de precisión en su caso de uso concreto.

Aun así, conviene analizar el gráfico con espíritu crítico: el eje Y empieza en el 35%, no en 0%. Esto exagera visualmente las diferencias entre modelos. La ventaja de GPT-5.4 frente a GPT-5 mini parece mayor en un gráfico que arranca en 35% que en uno que empieza en cero.

Además, OpenAI señala que estas cifras de latencia no provienen de ejecuciones reales en producción; son estimaciones modeladas. Hay algo un poco incongruente en esto. OpenAI sugiere decisiones de infraestructura basándose en un gráfico con estimaciones de modelo únicamente.

También resulta extraño ver estimaciones modeladas sin barras de error. Apostaría a que, de haberlas incluido, se solaparían bastante.

Cómo acceder a GPT-5.4 mini y nano

Deberías poder encontrar GPT‑5.4 mini en la interfaz web de ChatGPT, en Codex y en la API. En ChatGPT es el modelo «Thinking» predeterminado para los usuarios de los planes Free y Go, y el modelo de respaldo para el resto de usuarios que hayan alcanzado su límite de uso de GPT-5.4 Thinking.

GPT‑5.4 nano, en cambio, solo está disponible a través de la API.

Precios de GPT-5.4 mini y nano

GPT-5.4 mini cuesta 0,75 $ por 1 M de tokens de entrada y 4,50 $ por 1 M de tokens de salida. GPT‑5.4 nano, que de nuevo solo está disponible en la API, cuesta 0,20 $ por 1 M de tokens de entrada y 1,25 $ por 1 M de tokens de salida. Por estos precios, obtienes una ventana de contexto de 400k.

Obviamente es mucho más barato que el modelo insignia de OpenAI (2,50 $/15 $ por 1 M de tokens de entrada/salida).

GPT-5.4 mini y nano vs. Claude Haiku 4.5

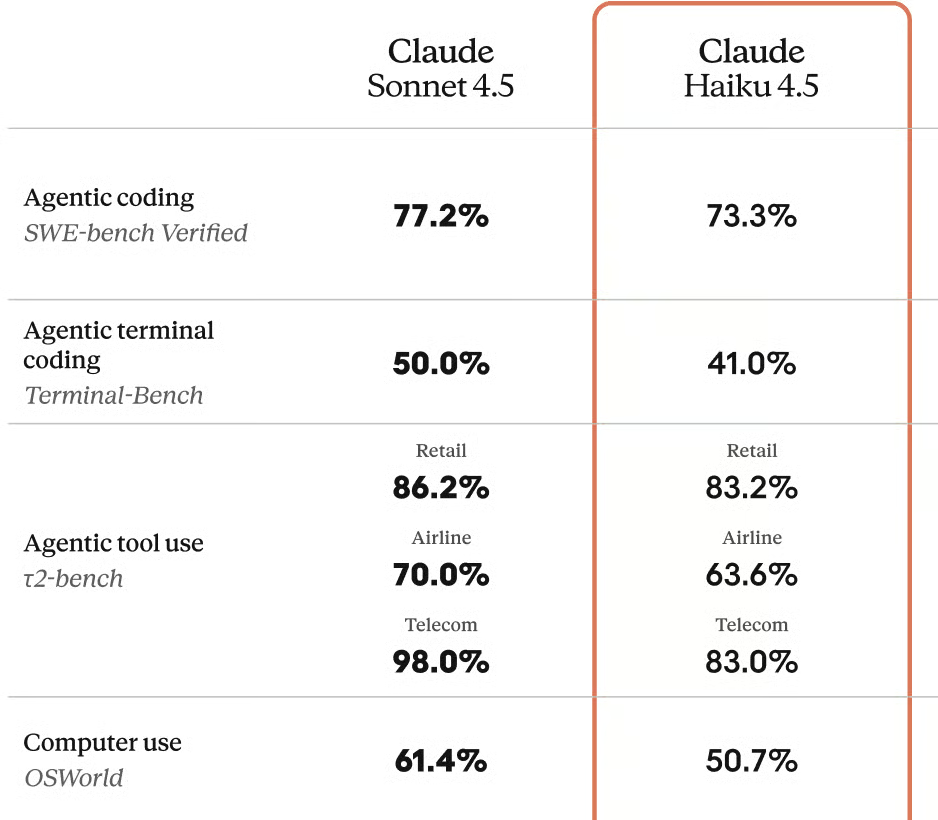

Lo realmente interesante es que GPT-5.4 nano tiene un precio inferior al de muchos modelos pequeños de menor rendimiento de la competencia, en concreto Claude Haiku 4.5, con un precio de 1 $ por millón de tokens de entrada y 5 $ por millón de tokens de salida. Así que OpenAI ha recortado el precio de Claude Haiku en ambos casos.

Pero ¿cómo se comparan los modelos en las pruebas? Compararlos no es trivial porque se han evaluado en variantes distintas. Los resultados de SWE-bench no son comparables, ya que usan versiones totalmente diferentes. Claude Haiku 4.5 se probó en SWE-bench Verified y obtuvo una puntuación del 73,3%, mientras que GPT-5.4 nano se probó en SWE-bench Pro (Public) y obtuvo un 52,4%. Pro es la prueba más reciente y más difícil.

Puntuación de Claude Haiku 4.5 del 50,7% en OSWorld

En las dos comparativas más limpias «manzanas con manzanas», GPT-5.4 nano va por delante en ambas.

- En GPQA Diamond, GPT-5.4 nano puntúa un 9,8% más, y

- en τ2-bench Telecom, GPT-5.4 nano puntúa un 9,5% más.

Sin embargo, Haiku 4.5 podría tener ventaja en el uso del ordenador según OSWorld, aunque, de nuevo, las variantes del benchmark complican la comparación.

- Claude Haiku 4.5 se probó en OSWorld estándar y obtuvo un 50,7%

- GPT-5.4 nano se probó en OSWorld-Verified y obtuvo un 39,0%.

OSWorld-Verified es la prueba más dura, pero la brecha de casi 12% parece bastante significativa. Somos más cautos a la hora de descartarla porque, a diferencia de SWE-bench Verified y SWE-bench Pro, donde se sabe que los modelos que lo hacen bien en Verified suelen rendir peor en Pro, hay menos evidencias de que ocurra lo mismo con OSWorld y OSWorld-Verified.

Puntuación de GPT-5.4 nano del 39% en OSWorld-Verified

Qué se comenta sobre GPT-5.4 mini y nano

Muchas reacciones en línea apuntan a un patrón conocido en tecnología: el buque insignia de este año acaba en el plan gratuito del próximo. Era de esperar, pero el ritmo del cambio impresiona.

Se dice que la frontier AI tiene la depreciación más rápida de cualquier producto jamás creado. Muchos se preguntan si el modelo por el que hoy pagas un premium seguirá mereciendo la pena dentro de seis meses. A veces, los desarrolladores no quieren cambiar de modelo sin más si ya han pasado por un proceso de fine-tuning o si han calibrado coste y rendimiento.

Conclusión

Los benchmarks muestran una clara escalera de rendimiento desde GPT-5.4 hasta 5.4 mini y 5.4 nano. Pero para muchas tareas, la elección práctica depende más de la latencia y el presupuesto que de exprimir unos puntos porcentuales extra.

Para muchas apps en producción, GPT-5.4 mini puede ser un nuevo gran predeterminado, ya que su calidad se siente frontier y, al mismo tiempo, es lo bastante barato y rápido para usos de alto volumen.

GPT-5.4 nano es más un especialista para cargas en tiempo real muy grandes y muy sensibles a la latencia. También es ideal como subagente para hacer el trabajo «en masa» más sencillo, delegado por modelos Thinking de mayor rendimiento.

En un mundo donde el buque insignia del año pasado se convierte en el «mini» de este año, diseñar sistemas capaces de intercambiar modelos con facilidad es mejor estrategia que optimizar para un único lanzamiento. Te recomiendo nuestro curso Building Scalable Agentic Systems, que aborda esta cuestión y te enseña a usar frameworks agentic como el Model Context Protocol (MCP).

GPT-5.4 mini y nano: preguntas frecuentes

¿GPT‑5.4 mini es solo un GPT‑5 mini más rápido?

No. Es más rápido y, además, notablemente más potente en benchmarks como SWE‑Bench Pro, manteniendo una ventana de contexto de 400k.

¿Cuál es el principal trade-off entre GPT‑5.4 y 5.4 mini?

GPT‑5.4 sigue siendo el mejor para máxima calidad; 5.4 mini sacrifica algo de precisión a cambio de una latencia y un coste mucho mejores.

¿Cuándo debería usar GPT‑5.4 nano en lugar de mini?

Usa nano para cargas ultra sensibles a la latencia o de volumen muy alto, donde el coste y la velocidad pesan más que la máxima precisión.

¿GPT-5.4 mini y nano admiten herramientas e imágenes?

Sí, ambos admiten entrada de imágenes, uso de herramientas, function calling y salidas estructuradas en la API.

¿Son suficientemente buenos GPT-5.4 mini y nano para programar y para agentes?

Sí. En particular, 5.4 mini supera el 50% en SWE‑Bench Pro y logra puntuaciones competitivas en Terminal‑Bench 2.0, por lo que es sólido para código y agentes de terminal.

5.4 nano es más débil, pero suficiente para muchas tareas de apoyo, como enrutar peticiones, actuar como subagente económico y gestionar flujos de terminal sencillos cuando priman la velocidad y el coste.

Editor de ciencia de datos en DataCamp | Me encanta hacer previsiones y crear con API.