
With the launch of GPT-5, OpenAI expanded tool/function calling in the API to include free-form tool calls (raw text, no JSON), grammar constraints via Lark/CFG, tool allowlists, and improved tool-use reasoning. Together, these make GPT-5 well suited to agentic use: you can connect APIs, databases, and custom tools through the Responses API to generate grounded answers or automate workflows.

In this tutorial, I will cover function tools, custom tools, grammar constraints, tool allowlists, and preambles, with code examples and clear explanations of how they work and when to use them.

Function Calling in GPT-5: What & Why

Function calling in GPT-5 lets you extend the model with your application’s data and actions. The model decides when to call a tool, while your code runs the logic and returns the results so the model can produce a final, grounded response.

Types of Function Calling:

- Function Tools (JSON Schema): These offer structured inputs and outputs that the model can call precisely.

- Custom Tools (Free-Form): These provide flexible text inputs and outputs for unstructured integrations.

What is function calling?

GPT-5 supports both structured function tools (using JSON Schema) and custom tools that accept free-form text payloads (such as SQL, scripts, or configurations) for seamless integration with external runtimes.

Function tools are defined by JSON Schema, ensuring the model understands exactly what arguments to pass and allowing for strict input validation. You can read more in our OpenAI Function Calling tutorial.
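Strict mode ("strict": true on a function tool, as used in the preambles example later in this tutorial) asks the API to make arguments match the schema exactly. As we understand OpenAI's strict-mode requirements, every object in the schema must set additionalProperties to false and list all of its properties under required. The to_strict_schema helper below is a hypothetical sketch, not part of the OpenAI SDK, that applies those two rules recursively to a plain-dict schema.

```python
import copy
from typing import Any


def to_strict_schema(schema: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of a JSON Schema with strict-mode rules applied.

    Hypothetical helper: for every object schema, mark all properties as
    required and forbid additional properties. The input is not modified.
    """
    result = copy.deepcopy(schema)

    def visit(node: Any) -> None:
        if isinstance(node, dict):
            if node.get("type") == "object":
                props = node.setdefault("properties", {})
                node["required"] = list(props.keys())
                node["additionalProperties"] = False
            # Recurse into nested schemas (properties, items, and so on).
            for value in node.values():
                visit(value)
        elif isinstance(node, list):
            for item in node:
                visit(item)

    visit(result)
    return result


coffee_params = {
    "type": "object",
    "properties": {"coffee_type": {"type": "string"}},
}
print(to_strict_schema(coffee_params))
```

Working on a deep copy keeps the original tool definition untouched, so you can keep a relaxed and a strict variant side by side.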

Why use function calling?

- Flexibility: Free-form tools enable the model to produce the exact text your system requires, without the constraints of rigid JSON, making them ideal for code, queries, or configurations.

- Reliability and Control: Function tools enforce structured arguments, which improves predictability and reduces parsing errors.

- Agentic Workflows: GPT-5 is designed for complex, multi-step tool usage and coding tasks, making tool calling a primary feature.

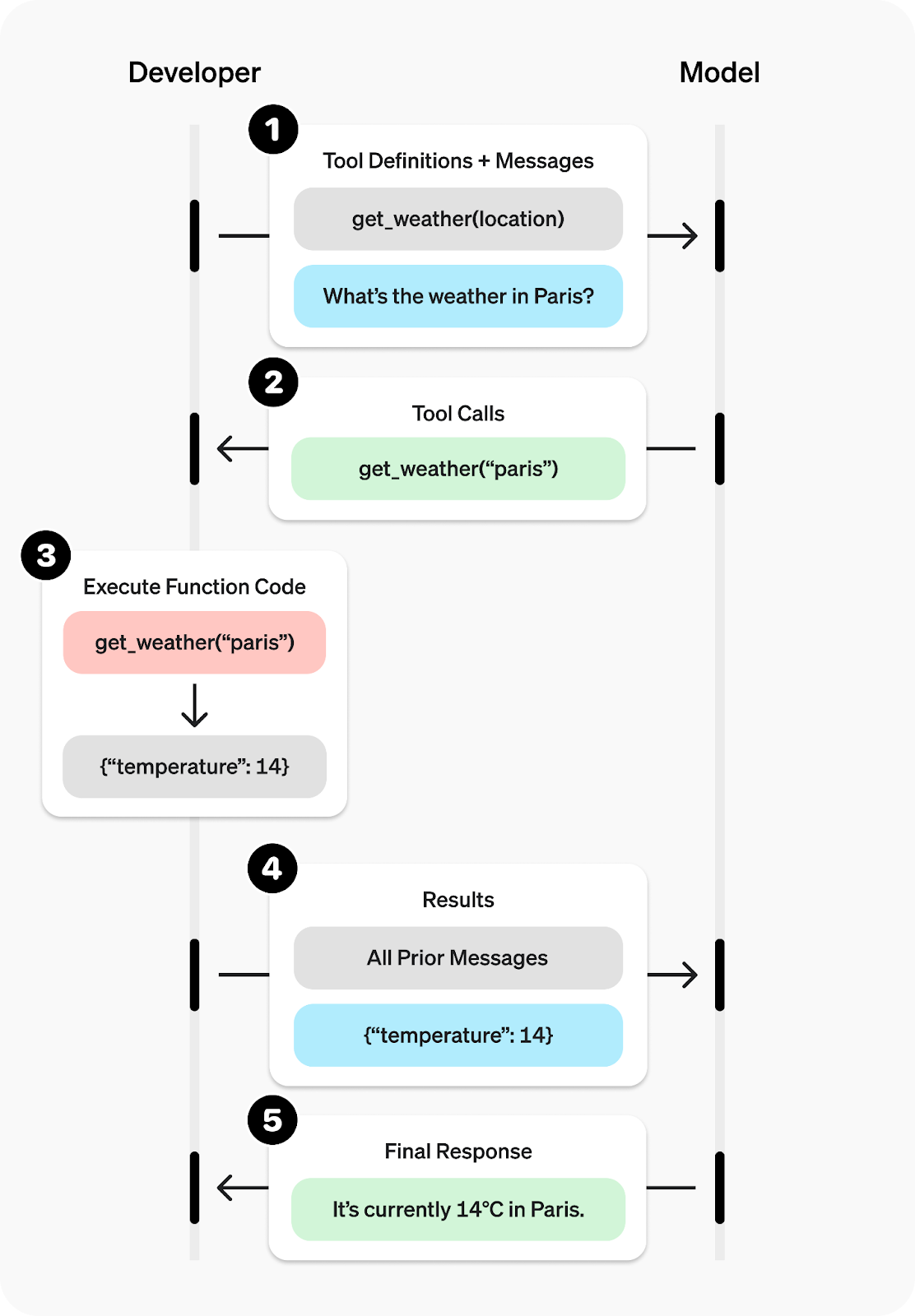

How does function calling work?

- Send a prompt that includes all the available tools.

- The model issues a tool call (either with arguments or free-form text).

- Your application executes the tool (for example, by running a Python function).

- Return the output from the tool to the model.

- The model then responds or may call additional tools.

Source: Function calling - OpenAI API
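To make these five steps concrete without calling the API, here is an offline sketch of the loop. fake_model is a hypothetical stand-in for client.responses.create: it requests a tool on the first turn and answers from the tool output on the second. The item shapes (function_call with name, arguments, and call_id; function_call_output with call_id and output) mirror the Responses API items used later in this tutorial, while get_weather and everything else here is illustrative.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical tool: returns canned weather data."""
    return {"city": city, "temp_c": 21}


TOOLS = {"get_weather": get_weather}


def fake_model(history: list[dict]) -> list[dict]:
    """Hypothetical stand-in for the model (steps 2 and 5)."""
    outputs = [h for h in history if h.get("type") == "function_call_output"]
    if not outputs:
        # Step 2: the model asks for a tool call with JSON arguments.
        return [{"type": "function_call", "name": "get_weather",
                 "arguments": json.dumps({"city": "Paris"}), "call_id": "call_1"}]
    data = json.loads(outputs[-1]["output"])
    # Step 5: the model answers from the tool result.
    return [{"type": "message", "text": f"It is {data['temp_c']}°C in {data['city']}."}]


def run_loop(prompt: str, max_turns: int = 5) -> str:
    history: list[dict] = [{"role": "user", "content": prompt}]  # Step 1
    for _ in range(max_turns):
        output = fake_model(history)
        history += output
        calls = [item for item in output if item["type"] == "function_call"]
        if not calls:
            return output[-1]["text"]
        for call in calls:
            # Steps 3-4: execute the tool and return its output to the model.
            result = TOOLS[call["name"]](**json.loads(call["arguments"]))
            history.append({"type": "function_call_output",
                            "call_id": call["call_id"],
                            "output": json.dumps(result)})
    raise RuntimeError("Too many tool-calling turns")


print(run_loop("What's the weather in Paris?"))
```

The max_turns cap is a design choice worth keeping in real agents: it stops a model that keeps requesting tools from looping forever.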

When to use Function vs. Custom Tools

- Use function tools when you want strict validation and predictable, typed arguments through JSON Schema.

- Use custom tools (free-form) when your runtime requires raw text (like scripts, SQL, or configurations) or when you need fast iterations without schemas.

Function Tools (JSON Schema): Send Structured Data

Using function tools with JSON Schema gives you predictable, structured results from the model. The model evaluates the tools you have declared, decides when to use one, and proposes arguments that conform to the schema; your code then executes the corresponding function.

After that, you can ask the model to generate a response based solely on the data returned by the tool, ensuring that the output is grounded, machine-readable, and easy to integrate.

This example illustrates several key concepts:

- Tool discovery and argument generation: The model selects the appropriate tool and automatically fills in its JSON-typed arguments.

- Tool execution loop: Your application executes the requested tool(s) and sends the results back to the model.

- Schema-shaped final answer: The final response is based strictly on the outputs from the tools, which minimizes inaccuracies and ensures reliability.

1. Setting up

- Create an OpenAI account and generate an API key on the platform dashboard. On the dashboard, open the API section, then “View API keys,” and click “Create new secret key”.

- Add a payment method or credits to your account so API calls can run; usage deducts from your prepaid balance as you make requests.

- Save your API key as an environment variable named OPENAI_API_KEY before starting your app.

- Install the official OpenAI Python SDK with pip install openai.

2. Define tools

You provide a list of tools with a name, description, and JSON Schema for parameters. The schema helps the model supply well-formed arguments.

make_coffee expects one string parameter, coffee_type, while random_coffee_fact takes no parameters (an empty object). These definitions are passed via the tools argument in the API call so the model knows what is available.

```python
import os
import json

from openai import OpenAI

# Authenticate with the key saved in the OPENAI_API_KEY environment variable.
client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

tools = [
    {
        "type": "function",
        "name": "make_coffee",
        "description": "Gives a simple recipe for making a coffee drink.",
        "parameters": {
            "type": "object",
            "properties": {
                "coffee_type": {
                    "type": "string",
                    "description": "The coffee drink, e.g. espresso, cappuccino, latte",
                }
            },
            "required": ["coffee_type"],
        },
    },
    {
        "type": "function",
        "name": "random_coffee_fact",
        "description": "Returns a fun fact about coffee.",
        # No arguments: an empty object schema.
        "parameters": {"type": "object", "properties": {}},
    },
]
```
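The model usually follows the schema, but your code receives the arguments as a JSON string, so it is sensible to check them before running anything (strict mode, shown in the preambles example, tightens this on the API side). The validate_args helper below is a hypothetical, minimal sketch that checks only required keys, unknown keys, and basic JSON types; a full validator such as the jsonschema package would be the more complete choice.

```python
import json

# Map JSON Schema type names to Python types (a small subset of JSON Schema).
JSON_TYPES = {"string": str, "integer": int, "number": (int, float),
              "boolean": bool, "object": dict, "array": list}


def validate_args(schema: dict, raw_arguments: str) -> dict:
    """Parse a tool call's JSON arguments and check them against a simple schema.

    Hypothetical helper: supports 'required' and top-level property types, and
    treats unknown keys as errors (like additionalProperties: false).
    Raises ValueError on problems; returns the parsed dict otherwise.
    """
    args = json.loads(raw_arguments or "{}")
    if not isinstance(args, dict):
        raise ValueError("Arguments must be a JSON object")
    for name in schema.get("required", []):
        if name not in args:
            raise ValueError(f"Missing required argument: {name}")
    for name, value in args.items():
        spec = schema.get("properties", {}).get(name)
        if spec is None:
            raise ValueError(f"Unexpected argument: {name}")
        expected = JSON_TYPES.get(spec.get("type"))
        # bool is a subclass of int in Python, so reject it for numeric types.
        if isinstance(value, bool) and spec.get("type") in ("integer", "number"):
            raise ValueError(f"{name} should be {spec['type']}")
        if expected and not isinstance(value, expected):
            raise ValueError(f"{name} should be {spec['type']}")
    return args


make_coffee_params = {
    "type": "object",
    "properties": {"coffee_type": {"type": "string"}},
    "required": ["coffee_type"],
}
print(validate_args(make_coffee_params, '{"coffee_type": "latte"}'))
```

If validation fails, one option is to send the error text back as the tool output so the model can retry with corrected arguments.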

3. Implement tools

You implement the Python functions that actually do the work:

make_coffee(coffee_type) looks up a concise recipe for the requested drink, and random_coffee_fact() returns a small fact payload.

Both return JSON-serializable dicts, ideal for feeding back to the model.

```python
def make_coffee(coffee_type):
    # Canned recipes keyed by drink name.
    recipes = {
        "espresso": "Grind fine, 18g coffee → 36g espresso in ~28s.",
        "cappuccino": "Brew 1 espresso shot, steam 150ml milk, pour and top with foam.",
        "latte": "Brew 1 espresso shot, steam 250ml milk, pour for silky texture.",
    }
    return {"coffee_type": coffee_type, "recipe": recipes.get(coffee_type.lower(), "Unknown coffee type!")}


def random_coffee_fact():
    return {"fact": "Coffee is the second most traded commodity in the world, after oil."}
```

4. Start a conversation

Initiate the conversation with the user's question: {"role": "user", "content": "How do I make a latte?"}

The model’s output may include a function_call item, such as make_coffee with {"coffee_type":"latte"}. Append the model’s output items back into input_list with input_list += response.output; this preserves the conversation state for the next turn.

```python
# Track used tools
used_tools = []

input_list = [{"role": "user", "content": "How do I make a latte?"}]

response = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=input_list,
)

# Keep the model's output items (including any function calls) in the history.
input_list += response.output
```

5. Execute tool calls and attach outputs

You should iterate over response.output and identify items where item.type is equal to "function_call". For these items, parse item.arguments and dispatch the requests to your Python functions.

For the make_coffee function, call it using make_coffee(args["coffee_type"]). For the random_coffee_fact function, simply call random_coffee_fact().

After executing each function, append a function_call_output item that includes:

- call_id, which links this output to the model’s request.

- output, a JSON string of your function’s result.
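As more tools are added, the if/elif chain can be replaced by a registry that maps tool names to Python callables. The sketch below assumes the make_coffee and random_coffee_fact functions from step 3 (redefined here, with the recipe list trimmed, so the snippet runs on its own) and uses SimpleNamespace objects as hypothetical stand-ins for the SDK's function_call items, which expose name, arguments, and call_id. TOOL_REGISTRY and run_function_call are illustrative names.

```python
import json
from types import SimpleNamespace


def make_coffee(coffee_type):
    recipes = {"latte": "Brew 1 espresso shot, steam 250ml milk, pour for silky texture."}
    return {"coffee_type": coffee_type,
            "recipe": recipes.get(coffee_type.lower(), "Unknown coffee type!")}


def random_coffee_fact():
    return {"fact": "Coffee is the second most traded commodity in the world, after oil."}


# Registry: tool name -> Python callable that accepts the parsed arguments.
TOOL_REGISTRY = {
    "make_coffee": make_coffee,
    "random_coffee_fact": random_coffee_fact,
}


def run_function_call(item) -> dict:
    """Execute one function_call item and build its function_call_output."""
    func = TOOL_REGISTRY.get(item.name)
    if func is None:
        result = {"error": f"Unknown tool {item.name}"}
    else:
        try:
            result = func(**json.loads(item.arguments or "{}"))
        except (TypeError, ValueError) as exc:
            # Bad JSON or missing arguments are reported back instead of crashing.
            result = {"error": str(exc)}
    return {"type": "function_call_output",
            "call_id": item.call_id,
            "output": json.dumps(result)}


call = SimpleNamespace(type="function_call", name="make_coffee",
                       arguments='{"coffee_type": "Latte"}', call_id="call_123")
print(run_function_call(call))
```

With the real SDK, the loop body would reduce to input_list.append(run_function_call(item)) for each function_call item.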

```python
for item in response.output:
    if getattr(item, "type", "") == "function_call":
        used_tools.append(item.name)
        args = json.loads(item.arguments or "{}")

        if item.name == "make_coffee":
            result = make_coffee(args["coffee_type"])
        elif item.name == "random_coffee_fact":
            result = random_coffee_fact()
        else:
            result = {"error": f"Unknown tool {item.name}"}

        # Link the result to the model's request via call_id.
        input_list.append({
            "type": "function_call_output",
            "call_id": item.call_id,
            "output": json.dumps(result),
        })
```

6. Generate the final answer

You make a second client.responses.create call, passing the updated input_list that now includes the tool outputs.

The used_tools variable captures the names of tools that appeared in the model's function calls. We will use it to display which tools were used.

```python
final = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=input_list,
    instructions="Answer using only the tool results.",
)

print("Final output:\n", final.output_text)

print("\n--- Tool Usage ---")
for t in tools:
    status = "USED ✅" if t["name"] in used_tools else "NOT USED ❌"
    print(f"{t['name']}: {status}")
```

Because the user asked about a latte, the model selected make_coffee with coffee_type = "latte", your code executed it, and the final response was built only from that tool result.

```
Final output:
{"coffee_type":"latte","recipe":"Brew 1 espresso shot, steam 250ml milk, pour for silky texture."}

--- Tool Usage ---
make_coffee: USED ✅
random_coffee_fact: NOT USED ❌
```

Custom Tools (Free-Form): Send Raw Text

Use GPT-5's free-form custom tools to allow the model to send raw text directly to your tool, such as code, SQL, shell commands, or simple CSV, without requiring JSON Schema.

This capability goes beyond the older JSON-only function calls, providing you with greater flexibility for integration with executors, query engines, or domain-specific language (DSL) interpreters.

In free-form mode, the model generates a custom tool call with an unstructured text payload that you can route to your runtime. You then return the result for the model to use in finalizing the user-facing answer.
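Because a free-form payload is just text, it is worth normalizing it before handing it to a runtime, even when the instructions ask for a clean comma-separated list. The parse_ingredients helper below is a hypothetical, minimal sketch for the ingredient example that follows: it trims whitespace, treats newlines as separators, drops empty entries and a leading "and", lowercases, and removes duplicates while keeping order.

```python
def parse_ingredients(raw: str) -> list[str]:
    """Normalize a free-form, comma-separated ingredient payload.

    Hypothetical helper: tolerant of extra spaces, trailing commas, newlines,
    and an 'and' before the last item.
    """
    items: list[str] = []
    for part in raw.replace("\n", ",").split(","):
        item = part.strip().lower()
        if item.startswith("and "):
            item = item[4:].strip()
        if item and item not in items:
            items.append(item)
    return items


print(parse_ingredients(" Chicken, rice,  and broccoli,\n"))
```

In the custom-tool example, you could pass ", ".join(parse_ingredients(tool_call.input)) to plan_meal instead of the raw string.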

The following example lets the model call a custom tool with raw, unstructured text as its input. That text is passed to a Python function that produces a result, so the user can list the ingredients they have and the model returns a recipe tailored to them.

In this example code, we have:

- Initialized the OpenAI client and defined a single custom tool called meal_planner that accepts raw text input.

- Created the Python function plan_meal, which takes a comma-separated string of ingredients and returns a meal idea.

- Started the conversation with a user message listing the available ingredients.

- Made an initial model call instructing GPT-5 to invoke the tool using only a clean list of ingredients, and appended the model’s output to the conversation history.

- Located the emitted custom tool call and extracted the raw comma-separated input string.

- Executed the tool by passing the list to plan_meal, then sent the result back to the model as a tool-output item linked via call_id.

- Performed a final model call to convert the tool’s raw idea into a concise, step-by-step recipe, and printed both the tool call details and the final output.

```python
import random

from openai import OpenAI

client = OpenAI()

# --- Custom tool (free-form text input, no JSON Schema) ---
tools = [
    {
        "type": "custom",
        "name": "meal_planner",
        "description": "Takes ONLY a comma-separated list of ingredients and suggests a meal idea.",
    }
]


# --- Fake meal planner ---
def plan_meal(ingredients: str) -> str:
    ideas = [
        f"Stir-fry: {ingredients} with garlic & soy sauce.",
        f"One-pot rice: cook {ingredients} together in broth until fluffy.",
        f"Soup: simmer {ingredients} in stock with herbs.",
        f"Sheet-pan bake: roast {ingredients} at 200°C for ~20 min.",
    ]
    return random.choice(ideas)


# --- Start conversation ---
messages = [
    {"role": "user", "content": "I only have chicken, rice, and broccoli. Any dinner ideas?"}
]

# 1) Ask the model, with a clear instruction about the tool input format
resp = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=messages,
    instructions="If the user mentions ingredients, call the meal_planner tool with ONLY a comma-separated list like 'chicken, rice, broccoli'.",
)
messages += resp.output

# 2) Find the custom tool call
tool_call = next((x for x in resp.output if getattr(x, "type", "") == "custom_tool_call"), None)
assert tool_call, "No tool call found!"

# The raw text payload of a custom tool call is in .input
ingredients_csv = tool_call.input.strip()

# 3) Run the tool
meal_result = plan_meal(ingredients_csv)

# 4) Send the tool output back (custom tool calls pair with custom_tool_call_output)
messages.append({
    "type": "custom_tool_call_output",
    "call_id": tool_call.call_id,
    "output": meal_result,
})

# 5) Final model response
final = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=messages,
    instructions="Turn the meal idea into a short recipe with 3-4 steps.",
)

print("\n--- Tool Call ---")
print("Name:", tool_call.name)
print("Input:", ingredients_csv)
print("Output:", meal_result)
print("\n--- Final Output ---\n", final.output_text)
```

As a result, we see the tool call details (name, input, and output), and the final output provides a complete recipe for the meal.

```
--- Tool Call ---
Name: meal_planner
Input: chicken, rice, broccoli
Output: One-pot rice: cook chicken, rice, broccoli together in broth until fluffy.

--- Final Output ---
One-Pot Chicken, Rice & Broccoli

- Season bite-size chicken pieces with salt and pepper; sear in a little oil in a pot until lightly browned.
- Add 1 cup rinsed rice and 2 cups broth (or water + salt). Bring to a boil, then cover and simmer on low for 12 minutes.
- Scatter 2 cups small broccoli florets on top, cover, and cook 5-7 more minutes until rice is fluffy and broccoli is tender.
- Rest 5 minutes off heat, fluff, and adjust seasoning. Optional: stir in a knob of butter or a splash of soy.
```

Grammar Constraints (Lark): Constrain Tool Outputs

GPT-5 supports context-free grammars (CFGs) for custom tools, so you can strictly control the format of the text the model sends to a tool.

By attaching a grammar, such as one for SQL or a domain-specific language (DSL), to a custom tool, you ensure that the tool input GPT-5 generates always follows the required structure. This matters most in automated pipelines and high-stakes workflows.

GPT-5 accepts grammars written in formats like Lark and applies them during generation. This constrained decoding improves reliability by preventing format drift and keeping every tool input structurally consistent.
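To see what "grammar-valid" means for the arithmetic example below, here is a small recursive-descent checker that accepts the strings described by that example's Lark grammar: numbers, the four operators, parentheses, and spaces. It is only a local illustration of the grammar; with a grammar-constrained custom tool, the API applies the constraint during decoding, so this check is not part of the OpenAI workflow. NUMBER is simplified here to unsigned integers and decimals (Lark's common.NUMBER also allows exponents), and ExprChecker and is_grammar_valid are hypothetical names.

```python
import re

# Simplified NUMBER terminal: integers and decimals, no exponents.
NUMBER = re.compile(r"\d+(?:\.\d*)?|\.\d+")


class ExprChecker:
    """Recursive-descent recognizer for:

    expr   : term (("+" | "-") term)*
    term   : factor (("*" | "/") factor)*
    factor : NUMBER | "(" expr ")"
    """

    def __init__(self, text: str):
        self.text = text
        self.pos = 0

    def skip_spaces(self) -> None:
        # Mirrors %ignore " " in the Lark grammar.
        while self.pos < len(self.text) and self.text[self.pos] == " ":
            self.pos += 1

    def peek(self) -> str:
        self.skip_spaces()
        return self.text[self.pos] if self.pos < len(self.text) else ""

    def expr(self) -> None:
        self.term()
        while self.peek() in ("+", "-"):
            self.pos += 1
            self.term()

    def term(self) -> None:
        self.factor()
        while self.peek() in ("*", "/"):
            self.pos += 1
            self.factor()

    def factor(self) -> None:
        if self.peek() == "(":
            self.pos += 1
            self.expr()
            if self.peek() != ")":
                raise SyntaxError(f"Expected ')' at position {self.pos}")
            self.pos += 1
            return
        match = NUMBER.match(self.text, self.pos)
        if not match:
            raise SyntaxError(f"Expected a number at position {self.pos}")
        self.pos = match.end()


def is_grammar_valid(text: str) -> bool:
    checker = ExprChecker(text)
    try:
        checker.expr()
    except SyntaxError:
        return False
    checker.skip_spaces()
    return checker.pos == len(checker.text)


print(is_grammar_valid("(12 + 8) * 3 - 5 / 5"))
print(is_grammar_valid("import os"))
```

Each grammar rule maps to one method, which is why a CFG is a natural fit for "the output must be a valid expression" requirements.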

We will now write the code where the model selects a tool and outputs ONLY a grammar-valid arithmetic expression; this expression is then evaluated in Python, the result is returned to the conversation, and the final response is presented clearly in natural language.

In this example code, we have:

- Initialized the OpenAI client and defined a custom tool, math_solver, that must output ONLY a grammar-valid arithmetic expression (Lark CFG).

- Started the conversation with a natural-language math question from the user.

- Made a first model call instructing GPT-5 to call math_solver with an expression that conforms to the grammar.

- Located the emitted tool call and extracted the raw expression string from the tool payload.

- Evaluated the expression locally in a restricted environment to produce a concrete result.

- Returned the tool execution result to the conversation, linked via the original call_id.

- Performed a final model call to present the evaluated result clearly in natural language.
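The example evaluates the expression with eval and empty builtins, which is acceptable here mainly because the grammar restricts the input. If you prefer not to rely on eval at all, a common alternative is to parse the string with Python's ast module and allow only numeric literals and the four binary operators. The safe_eval function below is a hypothetical sketch of that approach, not part of the original example.

```python
import ast
import operator

# Allowed binary operators; any other syntax is rejected.
BIN_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
           ast.Mult: operator.mul, ast.Div: operator.truediv}


def safe_eval(expr: str) -> float:
    """Evaluate + - * / arithmetic on numeric literals without calling eval()."""

    def visit(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return visit(node.body)
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in BIN_OPS:
            return BIN_OPS[type(node.op)](visit(node.left), visit(node.right))
        raise ValueError(f"Unsupported expression: {ast.dump(node)}")

    return visit(ast.parse(expr, mode="eval"))


print(safe_eval("(12 + 8) * 3 - 5 / 5"))
```

To use it, replace the eval call inside eval_expression with safe_eval(expr); the existing try/except already turns errors such as division by zero into an error message.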

```python
from openai import OpenAI

client = OpenAI()


# --- Local evaluator ---
def eval_expression(expr: str) -> str:
    try:
        # eval with no builtins; the grammar already limits the input to
        # numbers, + - * /, parentheses, and spaces
        result = eval(expr, {"__builtins__": {}}, {})
        return f"{expr} = {result}"
    except Exception as e:
        return f"Error evaluating expression: {e}"


# --- Custom tool with grammar constraint ---
tools = [
    {
        "type": "custom",
        "name": "math_solver",
        "description": "Solve a math problem by outputting ONLY a valid arithmetic expression.",
        "format": {
            "type": "grammar",
            "syntax": "lark",
            "definition": r"""
start: expr

?expr: term
     | expr "+" term -> add
     | expr "-" term -> sub

?term: factor
     | term "*" factor -> mul
     | term "/" factor -> div

?factor: NUMBER
       | "(" expr ")"

%import common.NUMBER
%ignore " "
""",
        },
    }
]

# --- User asks ---
msgs = [
    {"role": "user", "content": "What is (12 + 8) * 3 minus 5 divided by 5?"}
]

# 1) First model call: GPT-5 must emit a grammar-valid expression
resp = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=msgs,
    instructions="Always call the math_solver tool with a grammar-valid arithmetic expression like '(12 + 8) * 3 - 5 / 5'.",
)
msgs += resp.output

tool_call = next(
    x for x in resp.output
    if getattr(x, "type", "") in ("custom_tool_call", "tool_call", "function_call")
)
expr = getattr(tool_call, "input", "") or getattr(tool_call, "arguments", "")

print("\n=== Grammar-Constrained Expression ===")
print(expr)

# 2) Run the local evaluator
tool_result = eval_expression(expr)

print("\n=== Tool Execution Result ===")
print(tool_result)

# 3) Return the tool output (custom tool calls pair with custom_tool_call_output)
output_type = "custom_tool_call_output" if tool_call.type == "custom_tool_call" else "function_call_output"
msgs.append({
    "type": output_type,
    "call_id": tool_call.call_id,
    "output": tool_result,
})

# 4) Final pass: GPT-5 presents the answer in natural language
final = client.responses.create(
    model="gpt-5",
    input=msgs,
    tools=tools,
    instructions="Present the evaluated result clearly in natural language.",
)

print("\n=== Final Output ===")
print(final.output_text)
```

As you can see, the model selected the tool and generated a grammar-constrained expression, which the Python function then evaluated. The final model call turned that result into the answer.

```
=== Grammar-Constrained Expression ===
(12 + 8) * 3 - 5 / 5

=== Tool Execution Result ===
(12 + 8) * 3 - 5 / 5 = 59.0

=== Final Output ===
59
```

Tool Allowlists: Safely Limit What the Model Can Use

Setting tool_choice to the "allowed_tools" type restricts the model to a safe subset of your full toolkit. This makes behavior more predictable and prevents unintended tool calls, while still giving the model flexibility within the allowed set.

With "mode": "auto", the model may call any of the allowed tools (or none); with "mode": "required", it must call one of them, similar to the "force tool" options found in other frameworks.
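Letting the model choose versus requiring a call corresponds to the mode field of tool_choice ("auto" or "required"). The sketch below builds both variants as plain dicts, with field names following the request used later in this section, and adds a hypothetical local guard, enforce_allowlist, so your executor also refuses any call outside the allowlist as a second line of defense. Both helper names are illustrative, and no API call is made.

```python
def allowed_tools_choice(names: list[str], required: bool) -> dict:
    """Build a tool_choice value restricting the model to the named function tools."""
    return {
        "type": "allowed_tools",
        "mode": "required" if required else "auto",
        "tools": [{"type": "function", "name": n} for n in names],
    }


def enforce_allowlist(call_name: str, tool_choice: dict) -> None:
    """Hypothetical guard: raise if a tool call falls outside the allowlist."""
    allowed = {t["name"] for t in tool_choice["tools"]}
    if call_name not in allowed:
        raise PermissionError(f"Tool {call_name!r} is not in the allowlist {sorted(allowed)}")


choice = allowed_tools_choice(["firecrawl_search"], required=True)
print(choice)
enforce_allowlist("firecrawl_search", choice)  # allowed, so no exception
```

Checking on your side as well costs little and protects you if a request is ever sent without the tool_choice restriction.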

We will now write the code where we allow a single tool, have the model call only that tool, run it, feed the results back, and then produce a concise user-facing summary.

Note: Please create a free Firecrawl account, generate an API key, save it as an environment variable FIRECRAWL_API_KEY, and install the Firecrawl Python SDK using pip install firecrawl-py.

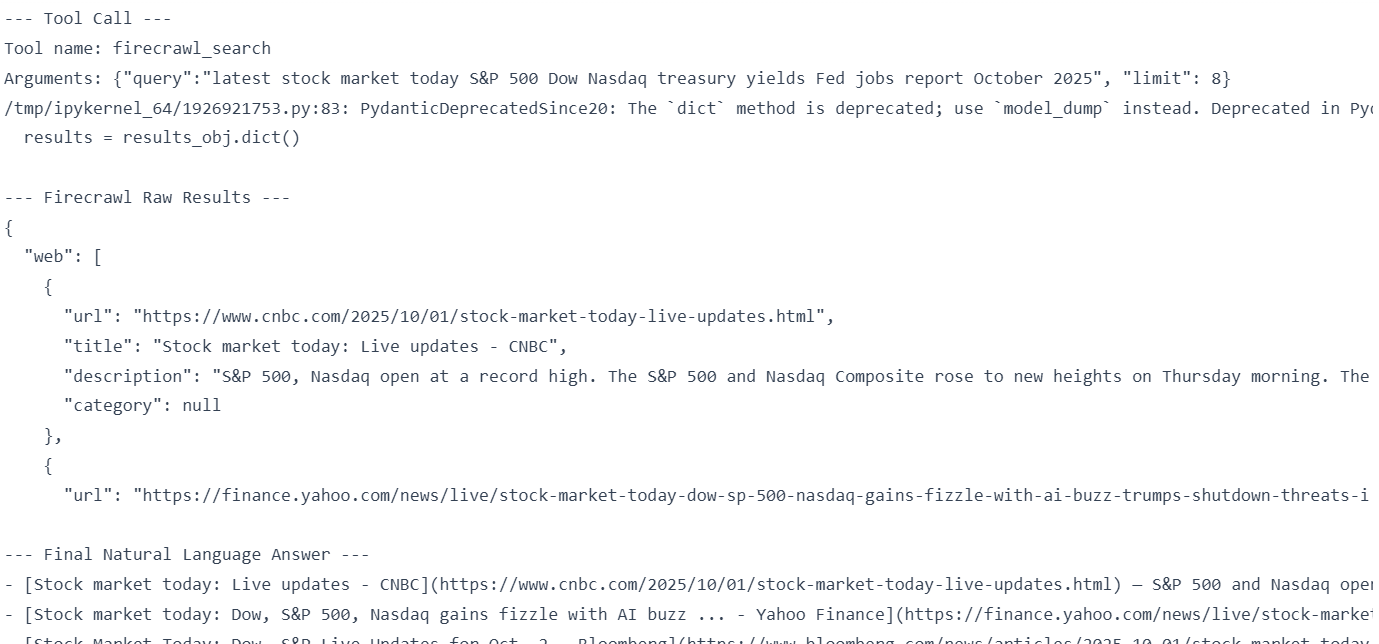

In this example code, we have:

- Defined a full toolset with both dummy_web_search and firecrawl_search.

- Restricted the model to firecrawl_search only via tool_choice = {"type": "allowed_tools", "mode": "required", "tools": [...]}.

- Issued the first model call, captured the tool call name and arguments, and printed them for visibility.

- When firecrawl_search is invoked, deserialized the arguments, executed Firecrawl, converted its response into a JSON-serializable dict, and extracted the data payload.

- Fed the tool results back to the conversation using function_call_output linked by call_id, then made a final model call to summarize them into 3–4 bullets with Markdown links.

- Included a fallback branch that would run the dummy tool (though the allowlist prevents it in this run).

- Printed the final natural-language answer.

```python
import json
import os

from firecrawl import Firecrawl
from openai import OpenAI

client = OpenAI()
firecrawl = Firecrawl(api_key=os.environ["FIRECRAWL_API_KEY"])

# --- Full toolset ---
tools = [
    {
        "type": "function",
        "name": "dummy_web_search",
        "description": "A fake web search that always returns static dummy results.",
        "parameters": {
            "type": "object",
            "properties": {
                "query": {"type": "string"},
                "limit": {"type": "integer"},
            },
            "required": ["query"],
        },
    },
    {
        "type": "function",
        "name": "firecrawl_search",
        "description": "Perform a real web search using Firecrawl.",
        "parameters": {
            "type": "object",
            "properties": {
                "query": {"type": "string"},
                "limit": {"type": "integer"},
            },
            "required": ["query"],
        },
    },
]


# --- Dummy implementation ---
def dummy_web_search(query, limit=3):
    return {
        "query": query,
        "results": [f"[Dummy] Result {i+1} for '{query}'" for i in range(limit)],
    }


# --- User asks ---
messages = [
    {"role": "user", "content": "Find me the latest info about the stock market."}
]

# --- Restrict to Firecrawl only ---
resp = client.responses.create(
    model="gpt-5",
    tools=tools,
    input=messages,
    instructions="Only the firecrawl_search tool is allowed. Do not use dummy_web_search.",
    tool_choice={
        "type": "allowed_tools",
        "mode": "required",  # force a call to an allowed tool
        "tools": [{"type": "function", "name": "firecrawl_search"}],
    },
)

for item in resp.output:
    if getattr(item, "type", "") in ("function_call", "tool_call"):
        print("\n--- Tool Call ---")
        print("Tool name:", item.name)
        print("Arguments:", item.arguments)

        if item.name == "firecrawl_search":
            args = json.loads(item.arguments)

            # Firecrawl returns a SearchData object, not a plain dict
            results_obj = firecrawl.search(
                query=args["query"],
                limit=args.get("limit", 3),
            )

            # Convert it to a JSON-serializable dict
            if hasattr(results_obj, "to_dict"):
                results = results_obj.to_dict()
            elif hasattr(results_obj, "dict"):
                results = results_obj.dict()
            else:
                results = json.loads(results_obj.json()) if hasattr(results_obj, "json") else results_obj

            print("\n--- Firecrawl Raw Results ---")
            print(json.dumps(results, indent=2)[:500])

            # Keep only the data portion for summarization (falls back to everything)
            safe_results = results.get("data", results)

            # Feed the model's output and the tool result back to GPT-5
            messages += resp.output
            messages.append({
                "type": "function_call_output",
                "call_id": item.call_id,
                "output": json.dumps(safe_results),
            })

            # Final pass
            final = client.responses.create(
                model="gpt-5",
                tools=tools,
                input=messages,
                instructions="Summarize the Firecrawl search results into 3-4 bullet points with clickable [Title](URL) links.",
            )

            print("\n--- Final Natural Language Answer ---")
            print(final.output_text)

        elif item.name == "dummy_web_search":
            args = json.loads(item.arguments)
            results = dummy_web_search(args["query"], args.get("limit", 3))

            print("\n--- Dummy Web Search Results ---")
            print(json.dumps(results, indent=2))
```

The model selected firecrawl_search and used its results to generate the final response in Markdown.

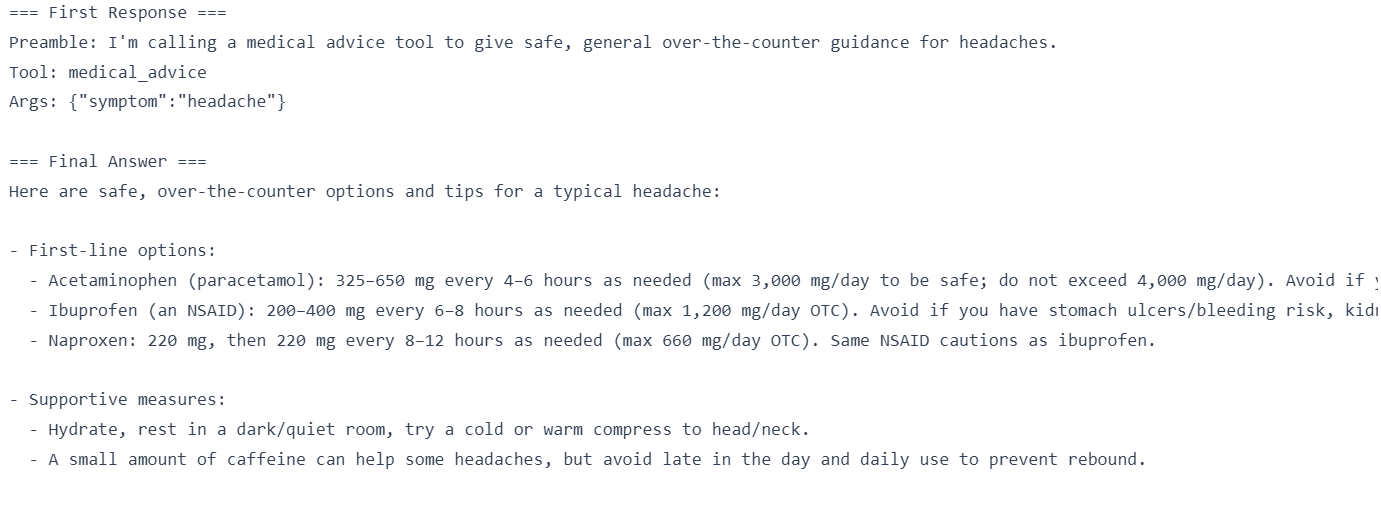

Preambles: Tell the Model How to Call Tools

Preambles are brief explanations that the model generates before invoking a tool, clarifying the reason for the call. They enhance transparency, build user trust, and improve debugging clarity in complex workflows. You can enable them by using a simple instruction like “explain before calling a tool,” typically without adding noticeable latency.
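Because the preamble arrives as ordinary message text, you may want to pull it out for logging. The split_preamble helper below is a hypothetical sketch that assumes the convention used in this section's system instruction (a single line starting with "Preamble:") and separates that line from any other text in the message.

```python
from typing import Optional, Tuple


def split_preamble(text: str, prefix: str = "Preamble:") -> Tuple[Optional[str], str]:
    """Return (preamble, remaining_text) for a model message.

    Uses the first line that starts with `prefix`; the preamble is None if absent.
    """
    preamble = None
    rest = []
    for line in text.splitlines():
        stripped = line.strip()
        if preamble is None and stripped.startswith(prefix):
            preamble = stripped[len(prefix):].strip()
        else:
            rest.append(line)
    return preamble, "\n".join(rest).strip()


# Illustrative message text, not real model output.
msg = "Preamble: Calling medical_advice to look up general remedies for a headache."
print(split_preamble(msg))
```

In the example that follows, you could call split_preamble(text) inside the message branch and send the preamble to your logs separately from user-facing text.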

We will now write the code where the model first generates a brief “Preamble:” line explaining why it’s calling a tool, then calls the tool, you execute it locally, return the result, and the model produces a clear final answer.

In this example code, we have:

- Initialized the OpenAI client and defined a strictly typed function tool (medical_advice) with JSON Schema.

- Added a system instruction telling the model to output a one-line “Preamble:” before any tool call.

- Initialized the conversation with a user’s symptom question.

- Made the first model call to obtain both the preamble and the tool call with its arguments.

- Extracted and executed the tool locally to generate advice.

- Returned the tool’s output back to the model, linked via call_id.

- Performed a final model call so GPT-5 presents the result as a concise, user-friendly answer.

```python
import json

from openai import OpenAI

client = OpenAI()


# --- Fake tool implementation ---
def medical_advice(symptom: str):
    remedies = {
        "headache": "You can take acetaminophen (paracetamol) or ibuprofen, rest in a quiet room, and stay hydrated.",
        "cough": "Drink warm fluids, use honey in tea, and consider over-the-counter cough syrup.",
        "fever": "Use acetaminophen to reduce fever, stay hydrated, and rest. See a doctor if >39°C.",
    }
    return {"advice": remedies.get(symptom.lower(), "Please consult a healthcare provider for guidance.")}


# --- Tool definition (strict JSON Schema) ---
tools = [{
    "type": "function",
    "name": "medical_advice",
    "description": "Provide safe, general over-the-counter advice for common symptoms.",
    "parameters": {
        "type": "object",
        "properties": {"symptom": {"type": "string"}},
        "required": ["symptom"],
        "additionalProperties": False,
    },
    "strict": True,
}]

# --- Messages (system instruction requesting a preamble) ---
messages = [
    {"role": "system", "content": "Before you call a tool, explain why you are calling it in ONE short sentence prefixed with 'Preamble:'."},
    {"role": "user", "content": "What should I take for a headache?"},
]

# 1) First call: expect preamble + tool call
resp = client.responses.create(model="gpt-5", input=messages, tools=tools)

print("=== First Response ===")
for item in resp.output:
    t = getattr(item, "type", None)
    if t == "message":
        # Extract just the model's text (the preamble)
        content = getattr(item, "content", None)
        text = None
        if isinstance(content, list):
            text = "".join(c.text for c in content if hasattr(c, "text"))
        elif content:
            text = str(content)
        if text:
            print(text)
    if t in ("function_call", "tool_call", "custom_tool_call"):
        print("Tool:", getattr(item, "name", None))
        print("Args:", getattr(item, "arguments", None))

# Extract the tool call
tool_call = next(x for x in resp.output if getattr(x, "type", None) in ("function_call", "tool_call", "custom_tool_call"))
messages += resp.output

# 2) Execute the tool locally
args = json.loads(getattr(tool_call, "arguments", "{}"))
symptom = args.get("symptom", "")
tool_result = medical_advice(symptom)

# 3) Return the tool result
messages.append({
    "type": "function_call_output",
    "call_id": tool_call.call_id,
    "output": json.dumps(tool_result),
})

# 4) Final model call -> natural-language answer
final = client.responses.create(model="gpt-5", input=messages, tools=tools)

print("\n=== Final Answer ===")
print(final.output_text)
```

Before calling the tool, the model outputs a one-sentence explanation of why it is calling it. This is useful for observability and debugging.

Summary

GPT-5 is OpenAI’s most advanced model to date and excels particularly at coding and agentic workflows. Its new API features make it straightforward to build production-grade, tool-using systems end to end.

In this tutorial, you learned how to:

- Return structured outputs using Function Tools and JSON Schema for reliable downstream automation.

- Enable free-form execution with Custom Tools (raw text), letting the model send code, SQL, shell scripts, or CSV text directly to your tools instead of JSON arguments.

- Restrict formats with Lark grammar constraints when precision is crucial, such as in mathematics, SQL, or domain-specific languages (DSLs).

- Constrain tool access with allowlists (or by enforcing a single tool) to enhance safety, control, and determinism.

- Improve transparency with brief preambles, so the model explains why it is calling a specific tool, aiding observability and debugging.

To learn more about what you can do with OpenAI’s various tools, I recommend taking our OpenAI Fundamentals skill track and Working with the OpenAI API course.

As a certified data scientist, I am passionate about leveraging cutting-edge technology to create innovative machine learning applications. With a strong background in speech recognition, data analysis and reporting, MLOps, conversational AI, and NLP, I have honed my skills in developing intelligent systems that can make a real impact. In addition to my technical expertise, I am also a skilled communicator with a talent for distilling complex concepts into clear and concise language. As a result, I have become a sought-after blogger on data science, sharing my insights and experiences with a growing community of fellow data professionals. Currently, I am focusing on content creation and editing, working with large language models to develop powerful and engaging content that can help businesses and individuals alike make the most of their data.