R packages are collections of functions and data sets developed by the community. They increase the power of R by improving existing base R functionalities, or by adding new ones. For example, if you regularly work with data frames, you have probably heard of dplyr or data.table, two of the most popular R packages.

But imagine that you'd like to do some natural language processing of Korean texts, extract weather data from the web, or even estimate actual evapotranspiration using land surface energy balance models: R packages have you covered! Recently, the official repository (CRAN) reached 10,000 published packages, and many more are publicly available on the internet.

If you are starting with R, this post will cover the basics of R packages and how to use them, organized around 11 frequently asked user questions.

1. What is an R Package?

Let’s start with some definitions. A package is a suitable way to organize your own work and, if you want to, share it with others. Typically, a package will include code (not only R code!), documentation for the package and the functions inside, some tests to check everything works as it should, and data sets.
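To see this structure on your own machine, you can list the contents of a package that is already installed. The minimal sketch below uses stats because it ships with R, so nothing needs to be downloaded. Keep in mind that an installed package is laid out a little differently from its source (for example, the R code is stored in a lazy-load database inside the R folder, and tests are usually not installed).

```r
# find.package() returns the folder where an installed package lives
stats_dir <- find.package("stats")
print(stats_dir)

# List the top-level files and folders of that installed package;
# expect entries such as DESCRIPTION, NAMESPACE, R and help
pkg_files <- list.files(stats_dir)
print(pkg_files)
```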

The basic information about a package is provided in the DESCRIPTION file, where you can find out what the package does, who the author is, which version of the package the documentation belongs to, the date, the license it is distributed under, and the package dependencies.

Besides reading the DESCRIPTION file on websites such as cran.r-project.org or stat.ethz.ch, you can also access it inside R with the command packageDescription("package"), via the package documentation with help(package = "package"), or online in the package's repository.

For example, for the “stats” package, you would use:

packageDescription("stats")

help(package = "stats")

2. What are R Repositories?

A repository is a place where packages are located so you can install them from it. Although you or your organization might have a local repository, typically, they are online and accessible to everyone. Three of the most popular repositories for R packages are:

- CRAN: the official repository. It is a network of FTP and web servers maintained by the R community around the world. The R Foundation coordinates it, and for a package to be published there, it needs to pass several checks that ensure it follows the CRAN policies.

- Bioconductor: this is a topic-specific repository intended for open-source software for bioinformatics. Like CRAN, it has its own submission and review processes, and its community is very active, holding several conferences and meetings per year.

- GitHub: although it is not R-specific, GitHub is probably the most popular repository for open-source projects. Its popularity comes from the unlimited space for open-source projects, its integration with git (a version control system), and how easy it makes sharing and collaborating with others. But be aware that there is no review process associated with it.
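If you are curious where the packages already on your computer came from, their DESCRIPTION fields can give a hint. The sketch below relies on two conventions: packages distributed by CRAN carry a Repository: CRAN field, and packages installed from GitHub with devtools or remotes carry a RemoteType field. Packages from other sources (including base R itself) may have neither, so treat the result as a rough guide rather than a definitive inventory.

```r
# Ask installed.packages() for two extra DESCRIPTION fields:
# "Repository" (set to "CRAN" for packages distributed by CRAN)
# "RemoteType" (added by remotes/devtools when installing from e.g. GitHub)
ip <- installed.packages(fields = c("Repository", "RemoteType"))

# Count packages per Repository value; base packages have no such field (NA)
print(table(ip[, "Repository"], useNA = "ifany"))

# Packages that were installed from GitHub via remotes/devtools, if any
github_pkgs <- rownames(ip)[which(ip[, "RemoteType"] == "github")]
print(github_pkgs)
```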

3. How to Install an R Package

Installing R Packages From CRAN

How you install an R package depends on where it is located; for publicly available packages, this means which repository it belongs to. The most common case is CRAN: you just need the name of the package and the command install.packages("package").

For example, the oldest package published on CRAN that is still online and being updated is the vioplot package, by Daniel Adler.

Can you find its date of publication? Clue: It is in the package description ;).

To install it from CRAN, you will need to use:

install.packages("vioplot")

After running this, you will see some messages on the screen. They will depend on your operating system, on the dependencies, and on whether the package was successfully installed.

Let’s take a deeper look at the output of the vioplot installation. Some of the messages you might get are:

Installing package into ‘/home/username/R/x86_64-pc-linux-gnu-library/3.3’

(as ‘lib’ is unspecified)

This indicates where the package is installed on your computer; you can choose a different folder with the lib argument.

trying URL 'https://cran.rstudio.com/src/contrib/vioplot_0.2.tar.gz'

Content type 'application/x-gzip' length 3801 bytes

==================================================

downloaded 3801 bytes

Here you receive information about the origin and size of the package. The URL depends on the CRAN mirror you have selected.

You can also change the mirror; you’ll read more about this later on in this post.

* installing *source* package ‘vioplot’ ...

** R

** preparing package for lazy loading

** help

*** installing help indices

** building package indices

** testing if installed package can be loaded

* DONE (vioplot)

These are the messages of the installation itself: the source code, the help, some tests, and finally, a message that everything went well and the package was successfully installed. Depending on your platform, these messages can differ.

The downloaded source packages are in

‘/tmp/RtmpqfWbYL/downloaded_packages’

The last message tells you where the downloaded source files of the package are stored. They are not needed to use the package, so they are kept in a temporary folder.
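The "Installing package into ..." message comes from the lib argument: when you don't set it, install.packages() uses the first folder returned by .libPaths(). The sketch below shows that folder and how you could point R at a personal library instead. The my_r_library folder is hypothetical and is created under tempdir() only so the example leaves no lasting changes on your system.

```r
# The first entry of .libPaths() is where install.packages() installs by default
print(.libPaths()[1])

# Hypothetical personal library, created in the session's temp folder for this demo
my_lib <- file.path(tempdir(), "my_r_library")
dir.create(my_lib, showWarnings = FALSE, recursive = TRUE)

# Installing there would look like this (commented out: it needs internet access)
# install.packages("vioplot", lib = my_lib)

# Put the new folder first: library() now searches it, and
# install.packages() without `lib` now defaults to it
.libPaths(c(my_lib, .libPaths()))
print(.libPaths())
```

The .libPaths() change only lasts for the current session; to make a personal library permanent, it is common to set the R_LIBS_USER variable in an .Renviron file.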

Finally, to install more than one R package at a time, just write them as a character vector in the first argument of the install.packages() function:

install.packages(c("vioplot", "MASS"))

Installing From CRAN Mirrors

Remember that CRAN is a network of servers (each of them called a “mirror”), so you can specify which one you would like to use. If you are using R through the RGui interface, you can select one from the list that appears the first time you run install.packages() in a session. In RStudio, a mirror is already selected by default.

You can also select your mirror with chooseCRANmirror(), or directly inside install.packages() with the repos argument. You can see the list of available mirrors with getCRANmirrors() or directly on the CRAN mirrors page.
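If you always use the same mirror, you can set it once per session through the repos option instead of passing it to every call. This is a minimal sketch: https://cloud.r-project.org (CRAN's automatic redirection to nearby servers) is just one possible choice, and putting the same options() line in your .Rprofile file would apply it to every session.

```r
# Set the CRAN mirror for the rest of the session via the "repos" option.
# The URL is one possible choice; getCRANmirrors() lists the alternatives.
options(repos = c(CRAN = "https://cloud.r-project.org"))
print(getOption("repos"))

# Any later call now uses that mirror without asking, e.g. (needs internet):
# install.packages("vioplot")
```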

Example: to use the Ghent University Library mirror (Belgium) to install the vioplot package, you can run the following:

install.packages("vioplot", repos = "https://lib.ugent.be/CRAN")

Installing Bioconductor Packages

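Since Bioconductor 3.8, packages from this repository are installed with the install() function of the BiocManager package (itself available from CRAN), for example BiocManager::install("GenomicFeatures"). With older R and Bioconductor versions, the standard way was to first execute the following script: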

source("https://bioconductor.org/biocLite.R")

This will install some basic functions needed to install Bioconductor packages, such as the biocLite() function. If you want to install the core packages of Bioconductor, just call it without arguments:

biocLite()

If, however, you are interested in just a few particular packages from this repository, you can type their names directly as a character vector:

biocLite(c("GenomicFeatures", "AnnotationDbi"))

Installing Packages Via devtools

As you have read above, each repository has its own way of installing packages, which can be a bit frustrating if you regularly use packages from different sources. A more efficient way is probably to use the devtools package, which simplifies this process with specific functions for each repository, including CRAN.

You can install devtools as usual with install.packages("devtools"), but you might also need to install Rtools on Windows, the Xcode command line tools on Mac, or the R development package on Linux (r-base-dev on Debian/Ubuntu, R-devel on Fedora).

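After devtools is installed, you will be able to use its utility functions to install other packages (in recent versions of devtools, these functions are provided by the remotes package, which you can also use on its own). The options are: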

- install_bioc() from Bioconductor,

- install_bitbucket() from Bitbucket,

- install_cran() from CRAN,

- install_git() from a git repository,

- install_github() from GitHub,

- install_local() from a local file,

- install_svn() from an SVN repository,

- install_url() from a URL, and

- install_version() for a specific version of a CRAN package.

For example, to install the babynames package from its Github repository, you can use:

devtools::install_github("hadley/babynames")

4. How to Update, Remove and Check Installed R Packages

As you spend more time with R, you may find yourself using install.packages() a few times per week or even per day, and given how fast R packages are developed, sooner or later you will need to update or replace your beloved packages. In this section, you will find a few functions that can help you manage your collection.
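Before looking at the individual functions, here is a small sketch of how you might answer the most common question, "is this package installed, and which version?", using only base R. vioplot is used as an example name and may or may not be installed on your machine.

```r
# installed.packages() returns a character matrix with one row per package
ip <- installed.packages()

# A compact overview: name, library folder and version
print(head(ip[, c("Package", "LibPath", "Version")]))

# Is a given package installed? (vioplot may or may not be on your machine)
pkgs <- c("stats", "vioplot")
print(setNames(pkgs %in% rownames(ip), pkgs))

# packageVersion() returns the installed version of one package
print(packageVersion("stats"))

# Versions compare sensibly, which is handy for "is it new enough?" checks
print(packageVersion("stats") >= "3.0.0")
```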

- To check what packages are installed on your computer, you can use:

installed.packages()

- Uninstalling a package is straightforward with the function remove.packages(); in your case:

remove.packages("vioplot")

- You can check which packages need an update with a call to the function:

old.packages()

- You can update all packages with:

update.packages()

- To update a specific package, just install it once again:

install.packages("vioplot")

5. Are There User Interfaces for Installing R Packages?

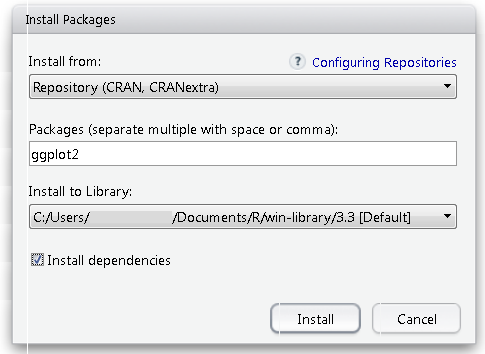

If you prefer a graphical user interface (that is, pointing and clicking) to install packages, both RStudio and the RGui include them. In RStudio, you will find it at Tools -> Install Package, and there you will get a pop-up window to type the package you want to install:

In the RGui, you will find these utilities under the Packages menu.

6. How to Load Packages

After a package is installed, you are ready to use its functionalities. If you only need a few functions or data sets from a package occasionally, you can access them with the notation packagename::functionname(). For example, since you have installed the babynames package, you can explore one of its data sets.

Do you remember how to see an overview of what functions and data are contained in a package?

Yes, help(package = "babynames") can tell you this.

To access the births dataset inside the babynames package you just type:

babynames::births

## # A tibble: 6 x 2

## year births

## <int> <int>

## 1 2009 4130665

## 2 2010 3999386

## 3 2011 3953590

## 4 2012 3952841

## 5 2013 3932181

## 6 2014 3988076

If you will make more intensive use of the package, it may be worth loading it into memory. The simplest way to do this is with the library() command:

library(babynames)

Please note that the input of install.packages() is a character vector and requires the name to be in quotes, while library() accepts either character or name and makes it possible for you to write the name of the package without quotes.

After this, you no longer need the package::function() notation, and you can access the package's functions and data directly, like any base R function or data set:

births

## # A tibble: 6 x 2

## year births

## <int> <int>

## 1 2009 4130665

## 2 2010 3999386

## 3 2011 3953590

## 4 2012 3952841

## 5 2013 3932181

## 6 2014 3988076

You may have heard about the require() function: it is indeed possible to load a package with it, but the difference is that require() does not throw an error if the package is not installed; it only issues a warning and returns FALSE.

So use this function carefully!

You can read more about library() vs require() in R in a separate article.
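The difference between library() and require() is easy to see with a package name that does not exist. In this sketch, thisPackageDoesNotExist is a made-up name used only to trigger the failure case, and the last lines show a common defensive pattern based on requireNamespace(), which checks for a package without attaching it.

```r
# "thisPackageDoesNotExist" is a hypothetical name used only to show the failure case
pkg <- "thisPackageDoesNotExist"

# library() stops with an error when the package is missing;
# tryCatch() captures the error message so the script can continue
res <- tryCatch(library(pkg, character.only = TRUE),
                error = function(e) conditionMessage(e))
print(res)

# require() only warns and returns FALSE, so execution silently continues
ok <- suppressWarnings(require(pkg, character.only = TRUE))
print(ok)

# A defensive pattern: check explicitly and decide what to do
if (!requireNamespace(pkg, quietly = TRUE)) {
  message("Package '", pkg, "' is not installed; install it before continuing.")
}
```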

7. What’s the Difference Between a Package and a Library?

Speaking of the library() function, packages and libraries are sometimes confused, and you will find people calling packages “libraries”.

Please don’t get confused: library() is the command used to load a package, while a library is the place where packages are stored, usually a folder on your computer, and a package is the collection of functions bundled conveniently.
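You can see both concepts from inside R: .libPaths() lists the libraries (folders) that R searches, and find.package() returns the folder of one installed package inside one of them. A minimal sketch using stats, which is always installed:

```r
# Libraries: the folders R searches for installed packages, in order
libs <- .libPaths()
print(libs)

# Package: find.package() returns the folder of one installed package;
# its parent folder is the library it was installed into
stats_path <- find.package("stats")
print(dirname(stats_path))

# That parent folder is one of the libraries listed above
print(normalizePath(dirname(stats_path), "/") %in% normalizePath(libs, "/"))
```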

A quote from Hadley Wickham, Chief data scientist at RStudio and instructor of the “Writing functions in R” DataCamp course, may help:

@ijlyttle a package is a like a book, a library is like a library; you use library() to check a package out of the library #rsats

— Hadley Wickham (@hadleywickham) December 8, 2014

Another good reminder of this difference is to run the function library() without arguments. It will provide you the list of packages installed in different libraries on your computer:

library()

8. How to Load More than One R Package at a Time

Although you can pass a vector of names to install.packages() to install several packages at once, this is not possible with library(). You can load a set of packages one at a time or, if you prefer, use one of the many workarounds developed by R users.

You can find examples in this Stack Overflow discussion, this R package, and this GitHub repository.
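As one example of such a workaround, here is a minimal sketch of a helper, load_packages(), which is hypothetical and not part of any package: it installs whatever is missing and then calls library() on each name. The call at the end uses packages that ship with R, so it runs without an internet connection; for your own packages, the install step would need one.

```r
# load_packages() is a hypothetical helper, not a function from any package
load_packages <- function(pkgs) {
  # Find which of the requested packages are not installed yet
  installed <- vapply(pkgs, requireNamespace, logical(1), quietly = TRUE)
  missing_pkgs <- pkgs[!installed]
  if (length(missing_pkgs) > 0) {
    install.packages(missing_pkgs)  # needs an internet connection
  }
  # library() takes one package at a time, so loop over the names;
  # character.only = TRUE makes library() treat each value as a package name
  invisible(lapply(pkgs, library, character.only = TRUE))
}

# Example with packages that ship with R, so nothing needs to be downloaded
load_packages(c("tools", "grid", "splines"))
search()
```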

9. How to Unload an R Package

To unload a given package, you can use the detach() function, for example:

detach("package:babynames", unload = TRUE)

10. What are the Alternative Sources of Documentation and Help?

As you have read in the above sections, the DESCRIPTION file contains basic information about a package, and although that information is useful, it will not show you how to use the package in your analysis. For that, you will need two more sources of documentation: help files and vignettes.

Help Files

As in base R, ? and help() are the first source of documentation when you are starting with a package. You probably remember that you can get a general overview of the package using help(package = "packagename"), but each function can also be explored individually with help("functionname"), or with help(functionname, package = "packagename") if the package has not been loaded. There you will typically find the description of the function, its parameters, and an example of application.
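As a small illustration with median() from stats (which ships with R): help() returns an object describing the matching help page, and the page itself is shown when that object is printed. The interactive() guard is only there so the sketch stays quiet when run as a script; example() is a base R function that runs the code from a page's Examples section.

```r
# help() finds the help page(s) for a topic and returns them as an object;
# the page itself is displayed when that object is printed
h <- help("median", package = "stats")
print(length(h))  # number of matching help pages

# Only open pages and run examples when someone is at the console
if (interactive()) {
  print(h)                              # same page as ?stats::median
  example("median", package = "stats")  # runs the page's Examples section
}
```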

For example, to obtain the help file of the vioplot() function from the vioplot package, you can type:

help(vioplot, package = "vioplot")

Tip: another way to see what is inside a loaded package is the ls() command:

library(babynames)

ls("package:babynames")

## [1] "applicants" "babynames" "births" "lifetables"

Vignettes

Another very useful source of help included in many packages is the vignettes: documents where the authors show some functionalities of their package in a more detailed way. Following vignettes is a great way to get your hands dirty with the common uses of a package, so it's a perfect way to start working with it before doing your own analysis.

The list of vignettes included in a given package is also shown in its documentation, both locally and online, but you can also get the list of vignettes in all your installed packages with the function browseVignettes(), and for a given package just pass its name: browseVignettes(package = "packagename"). In both cases, a browser window will open so you can easily explore and click on the vignette you want to open.

If you prefer to stay at the command line, vignette() shows the list of all vignettes, vignette(package = "packagename") shows the ones included in a given package, and once you have located the one you want to explore, just use vignette("vignettename").
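Because vignette(package = ...) returns an object rather than just printing, you can also work with the list programmatically. This sketch uses the grid package, which ships with R, and assumes your R installation includes its vignettes (standard installations do); the vignette names sit in the Item column of the object's results matrix.

```r
# vignette(package = ...) returns an object of class "packageIQR";
# its results matrix has one row per vignette in the package
v <- vignette(package = "grid")
vignette_names <- v$results[, "Item"]
print(vignette_names)

# Open the first vignette listed, but only in an interactive session
if (interactive() && length(vignette_names) > 0) {
  print(vignette(vignette_names[1], package = "grid"))
}
```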

For example, one of the most popular packages for visualization is ggplot2. You have probably installed it on your computer already, but if not, this is your chance to do it and test your new install.packages() skills.

Assuming you have already installed ggplot2, you can check what vignettes are included in it:

vignette(package = "ggplot2")

At the time of writing, two vignettes were available for ggplot2, “ggplot2-specs” and “extending-ggplot2” (newer versions include more). You can check the first one with:

vignette("ggplot2-specs")

In RStudio, this will be displayed in the Help tab on the right, while in the RGui or on the command line it will open a browser window with the vignette.

You can find more options for getting help from R on the R-Project site.

11. How to Choose the Right R Packages

At this point, you should be able to install and get the most from your R packages, but there is still one final question in the air: where do you find the packages you need?

The typical way of discovering packages is simply by learning R: many tutorials and courses mention the most popular packages. For example, the Cleaning Data in R course teaches all about tidyr.

For each topic you would like to cover in R, there is probably an interesting package you can find.

But what if you have a specific problem and don’t know where to start? For example, as I mentioned in the introduction of this post, what if you are interested in analyzing some Korean texts?

Or what if you would like to collect some weather data? Or estimate evapotranspiration?

You have reviewed several repositories, and yes, you know you could check the list of CRAN packages, but with more than 10,000 options, it is very easy to get lost.

Let's look at some alternatives!

CRAN Task View

One alternative can be to browse categories of CRAN packages, thanks to the CRAN task views. That's right! CRAN, the official repository, also gives you the option to browse through packages. The task views are basically themes or categories that group packages based on their functionality.

For example, all packages that have to do with genetics are grouped in the "Genetics" task view.

Taking the example of the Korean texts, you can easily find the package that you need by navigating to the Natural Language Processing task view. There, you can read through the text to find the package that can handle your texts, or you can do a simple CTRL+F and type in the keyword that you're looking for.

You'll have the right package in no time, guaranteed!

RDocumentation

Another alternative to finding packages can be RDocumentation, a help documentation aggregator for R packages from CRAN, BioConductor, and GitHub, which offers you a search box ready for your requests directly on the main page.

You might not know this second alternative yet, so let's dig a little bit deeper!

Let’s start with the Korean texts. One interesting feature of RDocumentation is the quick search: the first results appear while you are still typing.

But let’s go for the full search: if you enter the keyword “korean” and click “Search”, you will get two columns of results, packages on the left and functions on the right.

Focusing on the packages column, for each result you get the name of the package, linking to more detailed information; the name of the author, also linked so you can see other packages by the same author; a description of the package with the search word highlighted; and information about the popularity of the package.

Popularity matters because the search ranks the most downloaded packages first to improve the relevance of the results. If you want to know more about the search implementation of RDocumentation, there is a very detailed post on scoring and ranking.

So it seems that the KoNLP package can cover your needs. After clicking on its name, you will get the following information:

- A header with the name of the package, the author, the version, the option to select older versions, the number of downloads, and a link to its RDocumentation page.

- A description of the package.

- The list of functions included in the package, where each of them is clickable so you can get more details about the use of the function. You also get a search box where you can get fast access to the desired function.

- A plot with the evolution of the number of downloads.

- The details of the package with the information from the DESCRIPTION file.

- And finally, a badge that can be included in the README file of the package with the link to the RDocumentation.

RDocumentation Package

RDocumentation is not only a website but also an R package. It overrides the help functions so you can get the power of RDocumentation incorporated into your workflow. After this package is loaded, the help() function will open a browser window or your RStudio help panel with RDocumentation access.

Having RDocumentation directly on your R or RStudio panel gives you some advantages compared to the website use:

- Check the installed version of the package. The help pane for a package will provide you the same information as the web page (downloads, description, a list of functions, details), plus information about your installed version of the package.

Check, for example, the vioplot package you have installed previously:

install.packages("RDocumentation")

library(RDocumentation)

help(package = "vioplot")

- Ability to install or update a package directly from the help panel. I know that now you are an expert on installing packages, but here you have another alternative, doing it by clicking one button on the help panel provided by RDocumentation.

remove.packages("vioplot")

help(package = "vioplot")

- Run and propose examples. The help pane of the functions inside a package will provide you the option to run the examples again by just clicking a button. You can also propose examples that can be incorporated into the help page and tested by other R users.

install.packages("vioplot")

help(vioplot)

Conclusions

Today’s post has covered a broad range of techniques and functions to get the most from R through the use of packages. As usual, there is more than one way to do a specific task in R, and managing packages is no exception.

Hopefully, you have learned the most used and some alternative ways of discovering, installing, loading, updating, getting help, or removing an R package.

This post didn’t cover too many details about the internal structure of packages, or how to create your own. Stay tuned to DataCamp’s blog and courses for these and other related topics, but in the meantime, a good reference is the “R packages” book that you can find here.

In case you haven’t discovered them yet by searching on RDocumentation: with weatherData you can extract weather data from the internet, and if you are interested in evapotranspiration, you may want to take a look at the Evapotranspiration, water, or SPEI packages.