Curso

Linear models are simple and intuitive, but fail as soon as your data isn’t linearly separable.

And most real-world data isn’t. No matter how you tune the weights, a straight decision boundary isn’t a good fit - the classes either overlap or form patterns no line can split without mistakes. If you know the model is too simple for the job, but don’t want to jump straight to a neural network, there’s a good middle ground.

Support Vector Machines offer one “trick.” You can project your data into a higher-dimensional space, and what looked inseparable often becomes separable. The kernel trick is a computational shortcut that lets kernel-based models like SVMs operate as if the data were transformed, without ever explicitly doing the transformation.

In this article, you'll learn exactly how the kernel trick works inside SVMs, which kernel functions to know, and when kernel methods are worth going with.

But what is SVM exactly? Read our blog post on Support Vector Machines with Scikit-learn to learn all about the algorithm and how to apply it.

What Is the Kernel Trick?

The kernel trick is a method for computing inner products in a higher-dimensional feature space without explicitly mapping the data there.

So, you're not actually transforming your data points and then doing math on them. You're computing what the result of that math would be, using a kernel function that works directly on the original inputs.

What you should keep in mind is that the kernel trick only applies to models that rely on dot products between data points. It's not a general-purpose ML technique. If a model doesn't internally use dot products, the kernel trick doesn't apply. Most models don't use it.

SVMs, gaussian processes, and kernel PCA are a couple of good examples where this kernel trick will work. But don't let anyone tell you this is something "most ML models use."

Why the Kernel Trick Exists

Linear models can only learn linear decision boundaries. That's their hard constraint, and it’s what makes them easy to understand and interpret.

But most real-world datasets aren’t linearly separable. There is no straight line (or hyperplane) that will cleanly split the classes. But with the kernel trick, if you project that data into a higher-dimensional space, the same data can become separable.

The obvious way to approach this is to explicitly transform the data by creating new features, mapping each point into the higher-dimensional space, and training your model from there. It works, but the cost scales. If you're mapping to a space with thousands of dimensions, storing and computing on those transformed vectors gets expensive.

With the kernel trick, instead of computing the full transformation φ(x) for every data point, you compute K(x, x′) - a kernel function that gives you the same inner product result directly.

The Kernel Trick in Support Vector Machines

An SVM finds the decision boundary that maximizes the margin between two classes.

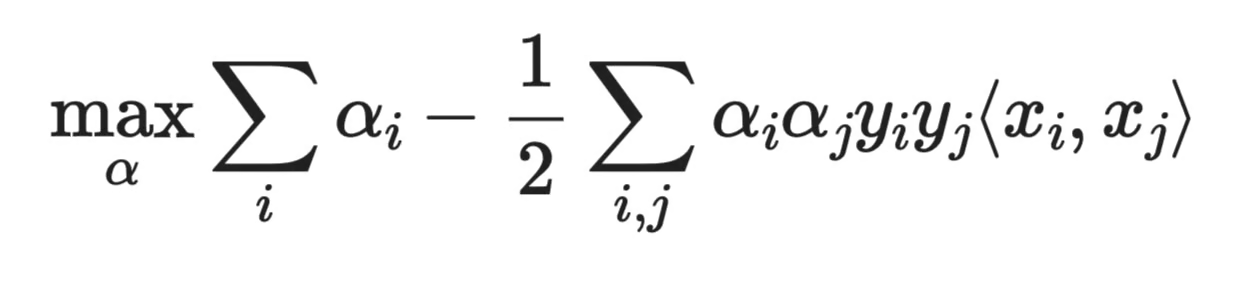

To find that boundary, the SVM solves an optimization problem. And in its dual form, the optimization depends only on dot products between data points, not on the data points themselves. The dual objective looks like this:

Dual objective function

Where α_i are the learned weights, y_i are the class labels, and ⟨x_i, x_j⟩ is the dot product between two data points. SVM just needs the pairwise similarities between the data points.

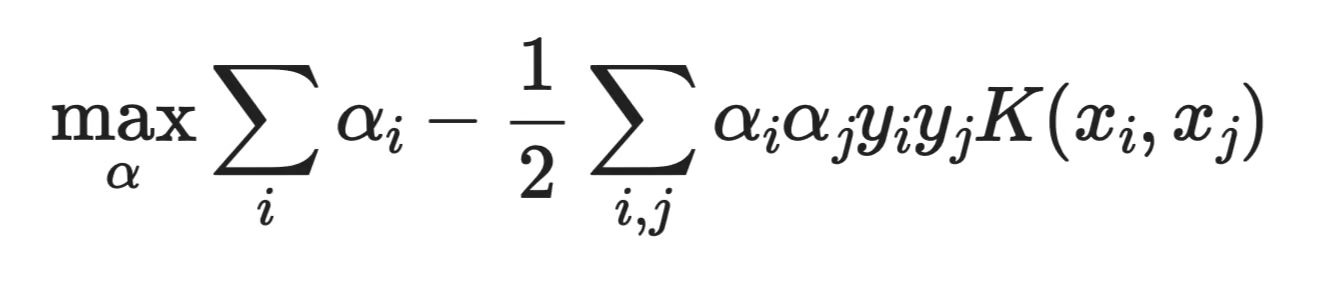

If the SVM only needs dot products, you don't have to give it dot products computed from the original space. You swap out ⟨x_i, x_j⟩ for a kernel function K(x_i, x_j):

Formula with kernel function

The SVM runs exactly the same way. It just thinks it's operating in a richer feature space.

And that’s what the kernel trick is all about.

How the Kernel Trick Works (Conceptual View)

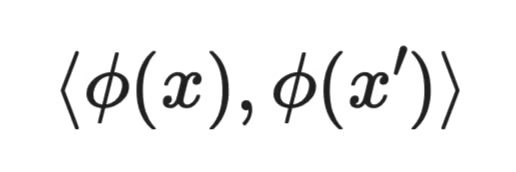

The standard approach would be to define a mapping φ(x) that transforms each data point into a higher-dimensional space, then compute dot products there:

The mapping

But computing φ(x) explicitly can be expensive, and in some cases the mapped space has thousands or even infinite dimensions.

The kernel trick skips that step.

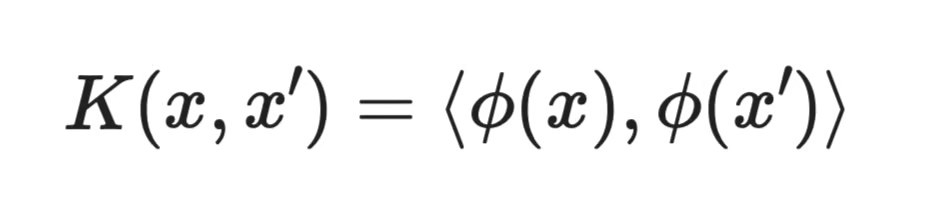

Instead of computing φ(x) and then taking the dot product, you directly compute K(x, x′) - a kernel function that satisfies:

Kernel function computation

The result is identical, but the cost is lower.

Think of K(x, x′) as a similarity function. It takes two data points in the original space and returns a number that reflects how similar they are - but in a way that corresponds to comparing them in a much richer space. The model behaves as if the data were transformed. It just never was.

Common Kernel Functions

Not all kernel functions work the same way. Each defines a different notion of similarity between data points, which means each one has a different kind of decision boundary. Let me show you a couple.

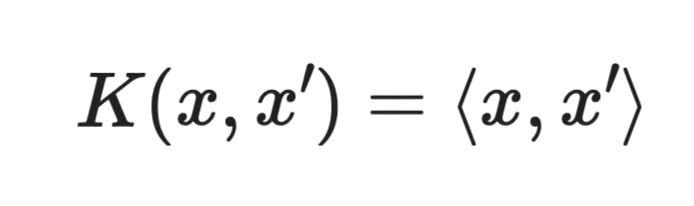

Linear kernel

Linear kernel

The linear kernel is just a standard dot product. The model stays in the original feature space and learns a linear boundary, which makes it equivalent to a standard linear SVM.

Use this kernel when your data is already linearly separable. It's the fastest option and the easiest to interpret.

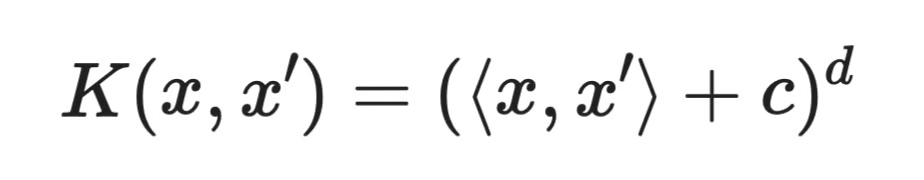

Polynomial kernel

Polynomial kernel

Where c is a constant and d is the degree of the polynomial.

The polynomial kernel captures interactions between features. A degree-2 kernel, for example, considers all pairwise feature combinations. This lets the model learn curved boundaries without you having to manually create those interaction terms.

Higher degrees mean more expressive boundaries, but also more risk of overfitting.

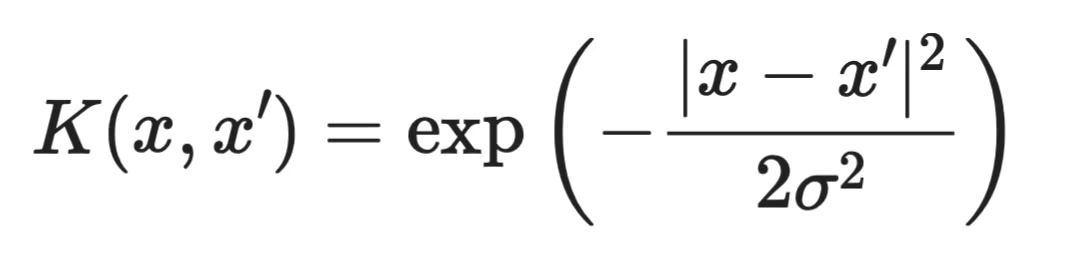

RBF (Gaussian) kernel

RBF kernel

The RBF (Radial Basis Function) kernel is the most widely used kernel in practice. It measures similarity based on distance. Two points that are close together get a high score, two points far apart get a score near zero.

What makes it interesting is that it implicitly maps data into an infinite-dimensional space. This gives it enough flexibility to understand complex, nonlinear boundaries that other kernels can't handle.

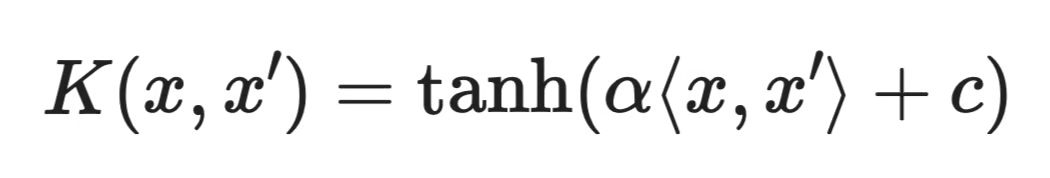

Sigmoid kernel

Sigmoid kernel

The sigmoid kernel is less commonly used than RBF or polynomial kernels, and it doesn't always satisfy the mathematical conditions required for a valid kernel function depending on the parameter choices.

It shows up occasionally in older literature, but in practice, RBF is almost always a better starting point.

Kernel Trick Beyond SVM

SVM is the most common algorithm for the kernel trick, but it’s not the only one.

A couple of other models use the same idea:

- Kernel ridge regression applies ridge regression in a higher-dimensional space using a kernel function instead of explicit features

- Gaussian processes use kernel functions to define covariance between data points. The kernel encodes assumptions about the smoothness and shape of the function you're trying to learn

- Kernel PCA extends standard PCA to nonlinear structures by finding principal components in a transformed feature space

Across all of them, the model only needs dot products, so you can swap in a kernel function and get nonlinear behavior without changing the rest of the math.

But SVM is still the clearest example, and the best place to build your intuition.

Kernel Trick vs. Feature Engineering

Both approaches solve the problem of your features not being expressive enough. But they solve it in a different way.

With feature engineering, you explicitly create new features from the existing ones. You decide what combinations matter, compute them, add them to your dataset, and train on the expanded feature set. You see exactly what went into the model.

The kernel trick implicitly operates in a higher-dimensional space without you ever defining or storing those extra features. The transformation is described by the kernel function.

The tradeoff comes down to interpretability versus flexibility.

Feature engineering keeps things transparent, as you know what every feature represents. The kernel trick gives you more expressive capabilities, but the implicit feature space is often hard to inspect or explain.

If interpretability matters for your use case, feature engineering is the safer choice. If you need to understand complex patterns and don't need to explain every decision the model makes, the kernel trick will get you there faster.

Advantages of the Kernel Trick

The most obvious one is that it lets linear models learn nonlinear boundaries. Without it, an SVM can only separate classes with a straight hyperplane. With it, the same model can handle curved, complex decision boundaries.

It also avoids the cost of explicit high-dimensional computation. You get the expressive power of a richer feature space without storing or computing those extra dimensions. For problems where the implicit feature space has thousands or infinite dimensions, this is what makes the approach possible at all.

Kernel methods also tend to work well on medium-sized datasets. When you don't have millions of examples but your data isn't linearly separable, an SVM with a good kernel is often a solid, reliable choice.

Limitations of the Kernel Trick

The biggest problem is scale. Training a kernel SVM requires computing K(x_i, x_j) for every pair of data points. That's an O(n²) operation - and it gets worse from there when you factor in memory. On large datasets, this can become a hard bottleneck.

Kernel choice also isn't trivial. RBF is a good default, but it's not always the right one. Picking the wrong kernel - or the wrong hyperparameters for a kernel - can result with worse performance then you started with.

Interpretability is another issue. With feature engineering, you know what each feature means. With the kernel trick, the implicit feature space isn’t clear. The model works, but explaining why it made a specific decision is hard.

And in many domains, deep learning has simply taken over. Neural networks handle large datasets, learn their own feature representations, and often outperform kernel methods without requiring manual kernel selection. For image classification, NLP, or any task with massive amounts of data, kernel methods are rarely the first choice anymore.

When to Use Kernel Methods

Kernel methods aren't obsolete in 2026, but they've become more specialized than they used to be.

You should go with kernel method like an SVM with an RBF kernel when:

- Your data has nonlinear structure that a linear model can't understand

- Your dataset is small to medium in size - think thousands of samples, not millions

- You don't need to explain individual predictions, so lower interpretability is an acceptable tradeoff

They're a good fit for structured, tabular data problems where you have limited data and need a model that generalizes well without a lot of tuning. In those cases, a kernel SVM can still outperform more complex models.

But if your dataset is large, or you need predictions you can explain, kernel methods aren’t the best solution.

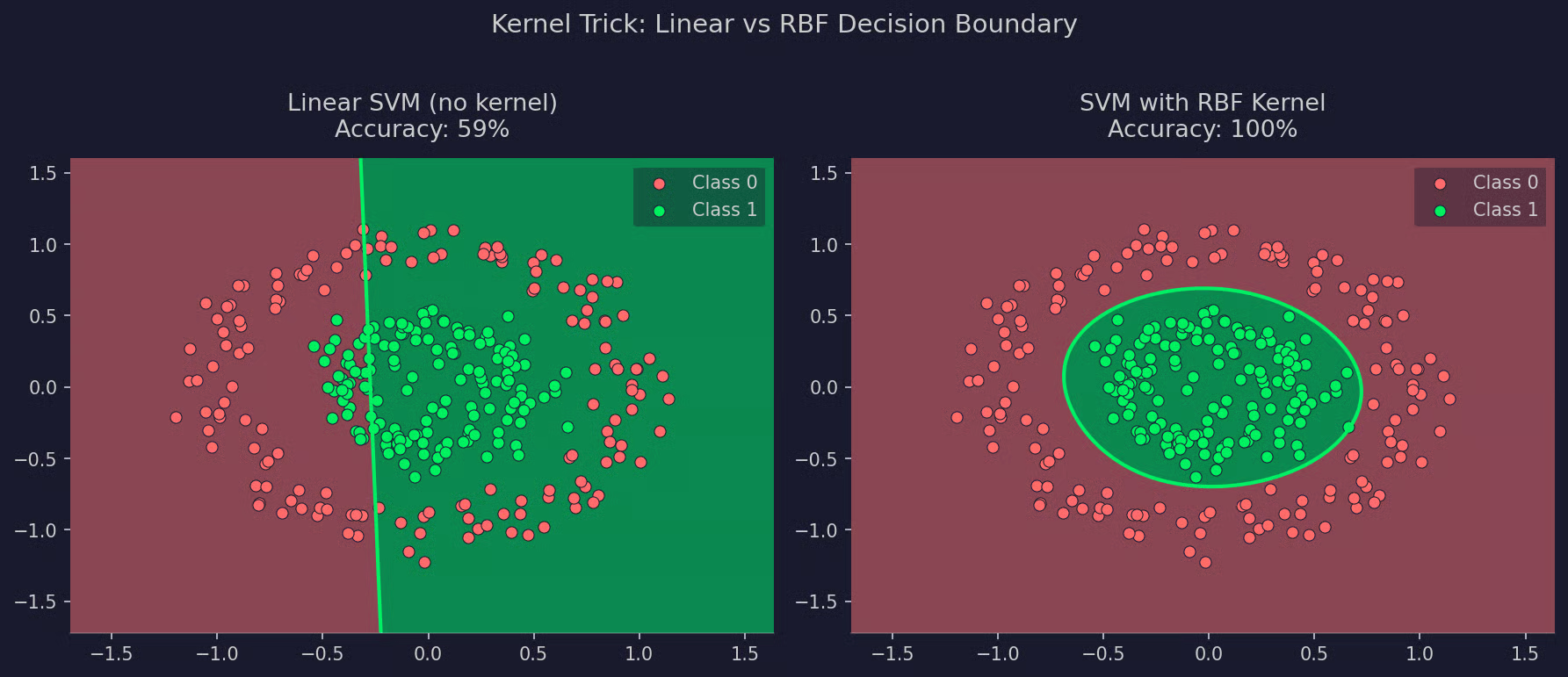

Example: SVM with and without a Kernel

The best way to see what the kernel trick actually does is to watch a linear SVM fail, and then fix it with a kernel.

In the example below, you have a simple dataset with two concentric circles, where one class forms an inner ring and the other forms an outer ring. There is no straight line that can separate them. A linear SVM will fail every time

With a RBF kernel, the same SVM will draw a circular boundary that separates the classes. The only thing that has changed is the kernel function.

Here's the full example:

import numpy as np

import matplotlib.pyplot as plt

from sklearn.svm import SVC

from sklearn.datasets import make_circles

# Generate concentric circles dataset

np.random.seed(42)

X, y = make_circles(n_samples=300, noise=0.1, factor=0.4)

# Train both SVMs

svm_linear = SVC(kernel="linear", C=1)

svm_rbf = SVC(kernel="rbf", C=1, gamma="scale")

svm_linear.fit(X, y)

svm_rbf.fit(X, y)

print(f"Linear SVM accuracy: {svm_linear.score(X, y):.0%}")

print(f"RBF SVM accuracy: {svm_rbf.score(X, y):.0%}")

Linear versus RBF SVM accuracy

The linear SVM draws a straight boundary through the middle of the data. It splits the plane in two halves, which doesn't match the actual structure of the problem at all. The RBF kernel, by contrast, produces a circular boundary that follows the shape of the data.

Linear versus RBF SVM visualized

To conclude, the model didn't learn a more complex structure - it just operated in a space where the structure was simpler to find.

Common Misconceptions About the Kernel Trick

There are a couple of misconceptions about the kernel trick that show up often enough, so let me address them here.

"The kernel trick works for all models." It doesn't. The kernel trick only applies to models that rely on dot products between data points in their optimization. Most models - decision trees, random forests, neural networks, linear regression - don't use dot products that way, so the kernel trick doesn't apply to them.

"It literally transforms the data." Not explicitly. Your original data points stay exactly as they are. The kernel function computes what the dot product would be in a higher-dimensional space, but no transformation ever happens in practice. The data is never expanded or stored differently.

"It always improves performance." It depends. On nonlinear problems with small to medium datasets, a good kernel can make a difference. On large datasets, the computational cost often outweighs the benefit. And if your data is already linearly separable, adding a kernel just adds complexity.

Why the Kernel Trick Still Matters

The kernel trick isn't the most talked-about idea in ML right now. Deep learning is on top of the charts for most benchmarks, and kernel methods rarely show up anywhere.

But it's still a foundational concept worth understanding.

SVMs and the kernel trick were central to classical ML because they work well on structured, tabular data with limited samples, and the math behind them is clean and well-understood. If you want to understand how similarity-based learning works, or why dot products matter in optimization, the kernel trick is one of the clearest examples to study.

It also still has real uses. Small datasets, specialized domains like bioinformatics or text classification with hand-made features, and problems where you need a model that generalizes well without a lot of data - these are areas where kernel methods are still relevant.

The kernel got replaced in the domains where scale and raw data volume matter most. In the right context, it's still a good tool.

Conclusion

The kernel trick solves a specific problem: how to get nonlinear behavior out of a model that only knows how to work with dot products. The answer is to replace those dot products with a kernel function that computes the same result in a richer feature space - without actually going there.

It's most useful to understand in the context of SVMs, where the dual formulation makes the substitution clean and explicit. Once you get comfortable with that, the broader family of kernel methods will start to make a lot more sense.

Deep learning gets most of the attention today, and for large-scale problems, that's fair. But the kernel trick represents a different kind of thinking - one based on geometry and similarity. It’s worth understanding, but unless you work in a specialized field, you’ll hardly use it in practice.

But why exactly did deep learning take over? Enroll in our Deep Learning in Python track to see how neural networks allow you to build complex models at scale.

FAQs

What is the kernel trick in plain English?

The kernel trick is a computational shortcut that lets certain models - like SVMs - learn nonlinear patterns without explicitly transforming the data into a higher-dimensional space. Instead of computing that transformation directly, a kernel function computes what the dot product between two data points would be in that space. You get the expressive power of a richer feature space without the computational cost of actually going there.

Which machine learning models use the kernel trick?

The kernel trick only applies to models that rely on dot products in their optimization. SVMs are the most common example, but kernel ridge regression, Gaussian processes, and kernel PCA also use it. Most modern models don't use dot products this way, so the kernel trick doesn't apply to them.

Is the kernel trick still relevant in 2026?

For large-scale problems, deep learning has largely taken over. But kernel methods still hold up on small to medium datasets, structured tabular data, and specialized domains like bioinformatics or text classification with hand-made features. Understanding the kernel trick also gives you a cleaner mental model of similarity-based learning, which is useful well beyond SVMs.

What's the difference between the RBF kernel and the linear kernel?

The linear kernel computes a standard dot product and produces a linear decision boundary - it's equivalent to training an SVM without any kernel at all. The RBF (Radial Basis Function) kernel measures similarity based on the distance between points, implicitly mapping data into an infinite-dimensional space. This makes RBF a much better fit for nonlinear problems, which is why it's the default choice when you're not sure which kernel to use.

Why does the kernel trick scale poorly with large datasets?

Training a kernel SVM requires computing K(x_i, x_j) for every pair of data points in the training set. That's an O(n²) operation. Memory scales the same way, since you need to store the full kernel matrix. This is why kernel methods are rarely used on datasets with hundreds of thousands of samples or more.