
Comparing multiple groups is easy when your data follows a normal distribution. The problem is, most real-world data doesn’t.

If ANOVA is your default test, you risk reaching the wrong conclusions, because it assumes your data follows a normal distribution. When it doesn't (think heavily skewed data, outliers, or samples too small to check normality), you need a different approach.

The Kruskal-Wallis test is that different approach. It’s a nonparametric alternative to ANOVA, and it works on ranked data instead of raw values, so a normal distribution isn't a requirement.

In this article, I'll cover the concept, the math behind it, how to run it in Python and R, and how to interpret the results.

What Is the Kruskal-Wallis Test?

The Kruskal-Wallis test is a nonparametric method for comparing three or more independent groups. It converts all observations into ranks and compares those ranks across groups instead of working with raw values.

You can think of it as an extension of the Mann-Whitney U test, which I've also written about.

The Mann-Whitney U does the same rank-based comparison, but only for two groups. The Kruskal-Wallis test scales it to three or more, so when you have multiple groups and can't use ANOVA, this is what you should use.
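To make the connection concrete, here's a quick check with SciPy, using two of the exam-score classes that appear later in this article. With exactly two groups, the Kruskal-Wallis test should give the same p-value as a two-sided Mann-Whitney U test that uses the normal approximation without a continuity correction. The `method="asymptotic"` and `use_continuity=False` settings are chosen here only to make that comparison exact; they aren't settings you need in practice.

```python
from scipy import stats

# Two of the exam-score classes used later in this article
class_a = [78, 85, 90, 72, 88]
class_b = [65, 70, 68, 74, 60]

# Kruskal-Wallis restricted to two groups
kw = stats.kruskal(class_a, class_b)

# Two-sided Mann-Whitney U, normal approximation, no continuity correction
mw = stats.mannwhitneyu(
    class_a,
    class_b,
    alternative="two-sided",
    use_continuity=False,
    method="asymptotic",
)

print(f"Kruskal-Wallis p-value: {kw.pvalue:.6f}")
print(f"Mann-Whitney U p-value: {mw.pvalue:.6f}")
```

Both lines should print the same p-value, which is why Kruskal-Wallis is often described as the multi-group version of Mann-Whitney U.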

Because it works on ranks rather than raw values, it doesn't assume your data follows any particular distribution. That's what makes it useful with real-world data, which rarely follows a textbook distribution perfectly.

When to Use the Kruskal-Wallis Test

The Kruskal-Wallis test is a great fit when you're dealing with:

- Three or more independent groups you want to compare

- Ordinal or continuous data such as Likert scale ratings or measurement data

- Non-normal distributions: skewed data, outliers, or anything else that breaks ANOVA's normality assumption

- Small sample sizes where normality is hard to verify

Here’s a simple example.

Imagine you want to compare exam scores across three different classes. The scores are skewed and the samples are small, so ANOVA isn’t a good choice. The Kruskal-Wallis test doesn't need normality, so it works here. It'll tell you whether at least one class scored differently from the others without making assumptions your data can't support.

Kruskal-Wallis Test vs. ANOVA

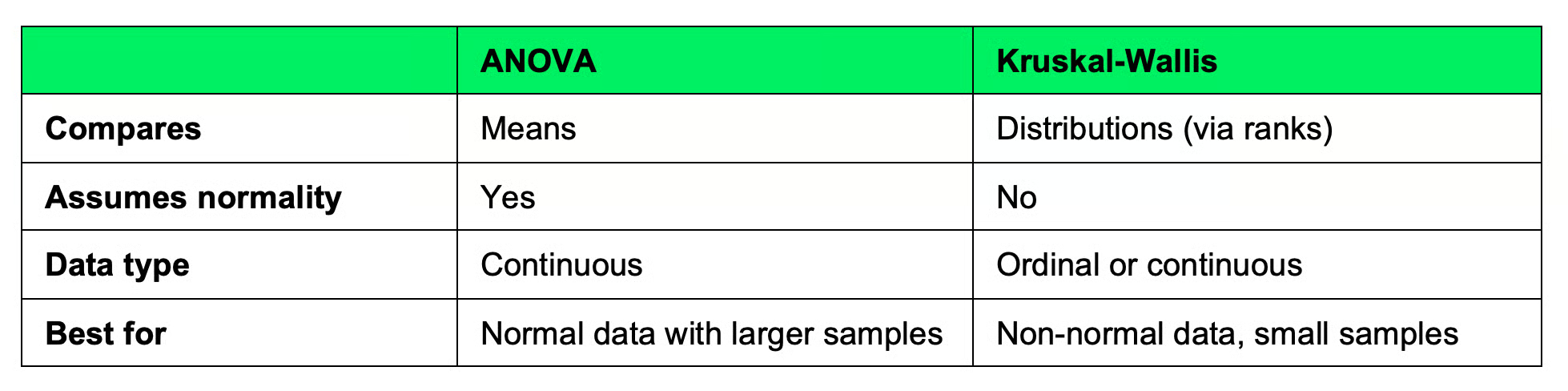

Both tests compare groups, but they do it differently.

ANOVA compares group means and assumes your data is normally distributed with roughly equal variances. When those assumptions are true, it's the better choice - it's more statistically powerful and the results are easier to interpret.

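The Kruskal-Wallis test compares group distributions using ranks. It doesn't require normality, and it's much less sensitive to outliers, since an extreme value simply gets the highest or lowest rank. That makes it more flexible, but you give up some statistical power when ANOVA's assumptions actually hold.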

Here's a quick comparison table:

ANOVA compared to Kruskal-Wallis test

If your data is normally distributed, use ANOVA. If it isn't - or you can't verify that it is - use Kruskal-Wallis.
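If you want to put that rule into code, here's one rough heuristic, sketched with SciPy: run a Shapiro-Wilk normality test on each group and Levene's test for equal variances, and only use ANOVA when neither flags a problem. The `choose_test` helper and the 0.05 cutoff are illustrative assumptions, not a standard procedure. With only a handful of observations per group these checks have little power, so passing them is weak evidence of normality, not proof.

```python
from scipy import stats

def choose_test(*groups, alpha=0.05):
    """Hypothetical helper: pick ANOVA or Kruskal-Wallis from quick assumption checks."""
    # Shapiro-Wilk: a small p-value suggests a group is not normally distributed
    looks_normal = all(stats.shapiro(g).pvalue > alpha for g in groups)
    # Levene: a small p-value suggests the group variances differ
    similar_variance = stats.levene(*groups).pvalue > alpha
    if looks_normal and similar_variance:
        return "ANOVA", stats.f_oneway(*groups)
    return "Kruskal-Wallis", stats.kruskal(*groups)

class_a = [78, 85, 90, 72, 88]
class_b = [65, 70, 68, 74, 60]
class_c = [88, 92, 95, 85, 91]

name, result = choose_test(class_a, class_b, class_c)
print(name, f"p = {result.pvalue:.4f}")
```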

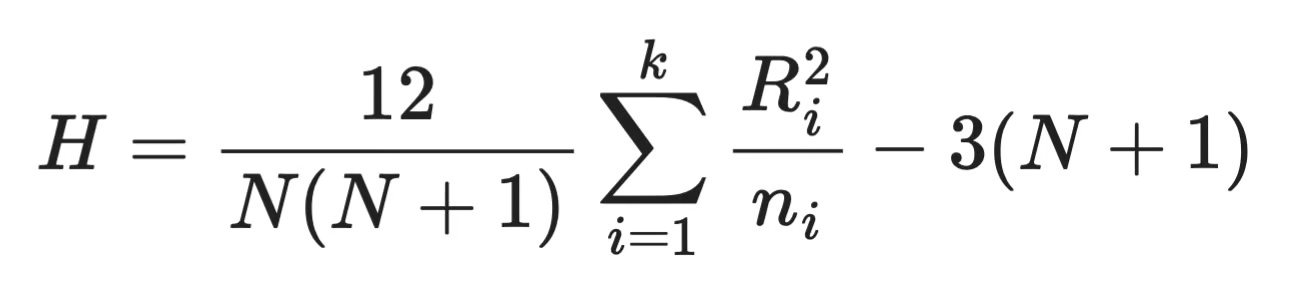

Kruskal-Wallis Test Formula

The Kruskal-Wallis test boils down to a single test statistic, H. Here's the formula:

$$
H = \frac{12}{N(N+1)} \sum_{i=1}^{k} \frac{R_i^2}{n_i} - 3(N+1)
$$

Here’s the explanation of the components:

- N: total number of observations across all groups

- k: number of groups

- n_i: number of observations in group i

- R_i: sum of ranks assigned to group i

The formula measures how far each group's rank sum deviates from what you'd expect if all groups came from the same distribution. A large H suggests at least one group differs, while a small H means the rank sums are about what chance alone would produce.
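An equivalent way to write H makes that deviation explicit. If all groups came from the same distribution, each group's mean rank $\bar{R}_i = R_i / n_i$ would sit near the overall mean rank $(N+1)/2$, and H is a size-weighted sum of squared distances from it:

$$
H = \frac{12}{N(N+1)} \sum_{i=1}^{k} n_i \left( \bar{R}_i - \frac{N+1}{2} \right)^2
$$

Expanding the square and using $\sum_{i=1}^{k} R_i = N(N+1)/2$ gives back the formula above.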

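Once you have H, you compare it against a chi-square distribution with k - 1 degrees of freedom to get a p-value. This chi-square approximation works best when each group has at least five observations; with smaller groups, exact tables or permutation methods are more accurate.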
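Here's a minimal sketch of that calculation in Python, assuming you already have the rank sums. The numbers plugged in (rank sums 43, 16, and 61 for three groups of five) are what you get by ranking the exam-score data used later in this article. `kruskal_h` is a hypothetical helper name, and this version skips the tie correction that SciPy and R apply, so their H comes out slightly larger when the data contains tied values. `chi2.sf` is SciPy's survival function, i.e. the upper-tail probability.

```python
from scipy.stats import chi2

def kruskal_h(rank_sums, sizes):
    """Hypothetical helper: H from rank sums and group sizes (no tie correction)."""
    n_total = sum(sizes)
    k = len(sizes)
    weighted = sum(r ** 2 / n for r, n in zip(rank_sums, sizes))
    h = 12 / (n_total * (n_total + 1)) * weighted - 3 * (n_total + 1)
    # Upper tail of a chi-square distribution with k - 1 degrees of freedom
    p_value = chi2.sf(h, df=k - 1)
    return h, p_value

h, p_value = kruskal_h(rank_sums=[43, 16, 61], sizes=[5, 5, 5])
print(f"H = {h:.2f}, p = {p_value:.4f}")  # H = 10.26, p = 0.0059
```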

How the Kruskal-Wallis Test Works

There are four steps needed to perform the Kruskal-Wallis test:

- Combine all groups: Take all observations from every group and combine them into a single dataset

- Rank all observations: Sort the combined data from smallest to largest and assign ranks. The smallest value gets rank 1, the next gets rank 2, and so on. If two values are equal, they share the average of the ranks they would have occupied.

- Compute rank sums: Split the ranks back into their original groups and add up the ranks for each group. These are your rank sums, R_i in the formula

- Calculate the test statistic: Plug the rank sums into the H formula. If the groups are similar, their average ranks will be close to each other and H will be small. If one group consistently gets higher or lower ranks, H grows larger
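The four steps translate almost line for line into Python. This sketch uses NumPy and SciPy's `rankdata` (which gives tied values the average of their ranks), applies the same tie correction SciPy and R use, and checks the result against `scipy.stats.kruskal`. The function name `kruskal_by_hand` is hypothetical, chosen for illustration.

```python
import numpy as np
from scipy import stats

def kruskal_by_hand(*groups):
    """Hypothetical helper: compute rank sums and tie-corrected H step by step."""
    # Step 1: combine all observations into a single dataset
    combined = np.concatenate([np.asarray(g, dtype=float) for g in groups])
    n_total = combined.size

    # Step 2: rank the combined data; tied values share the average rank
    ranks = stats.rankdata(combined, method="average")

    # Step 3: split the ranks back into their original groups and sum them
    sizes = [len(g) for g in groups]
    split_points = np.cumsum(sizes)[:-1]
    rank_sums = [float(r.sum()) for r in np.split(ranks, split_points)]

    # Step 4: plug the rank sums into the H formula
    h = 12 / (n_total * (n_total + 1)) * sum(r ** 2 / n for r, n in zip(rank_sums, sizes))
    h -= 3 * (n_total + 1)

    # Correct for ties by dividing by 1 - sum(t^3 - t) / (N^3 - N)
    _, tie_counts = np.unique(combined, return_counts=True)
    h /= 1 - np.sum(tie_counts ** 3 - tie_counts) / (n_total ** 3 - n_total)
    return rank_sums, float(h)

class_a = [78, 85, 90, 72, 88]
class_b = [65, 70, 68, 74, 60]
class_c = [88, 92, 95, 85, 91]

rank_sums, h = kruskal_by_hand(class_a, class_b, class_c)
print(rank_sums)  # [43.0, 16.0, 61.0]
print(f"By hand: H = {h:.4f}")
print(f"SciPy:   H = {stats.kruskal(class_a, class_b, class_c).statistic:.4f}")
```

The two H values should agree. Without the tie-correction line, you'd get the slightly smaller uncorrected value, because 85 and 88 each appear twice in this data.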

And that's it!

Notice that the test never looks at the actual values, only at where each one falls relative to all the others.

Kruskal-Wallis Test in Python

Python's scipy library has a built-in function for the Kruskal-Wallis test, meaning you don’t have to implement the formula by hand. Let's go through an example.

Say you're comparing exam scores across three classes. Here's how you'd run the test:

from scipy import stats

# Exam scores

class_a = [78, 85, 90, 72, 88]

class_b = [65, 70, 68, 74, 60]

class_c = [88, 92, 95, 85, 91]

# Run the test

statistic, p_value = stats.kruskal(class_a, class_b, class_c)

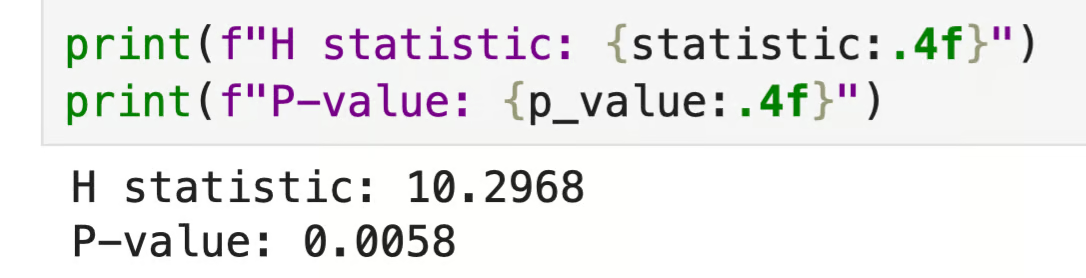

print(f"H statistic: {statistic:.4f}")

print(f"P-value: {p_value:.4f}")

Python output

The p-value is below 0.05, which means at least one class scored differently from the others. Just keep in mind the test doesn't tell you which one: you'll need a post hoc test for that, which I'll cover later in this article.

Kruskal-Wallis Test in R

Just like Python, R has a built-in function for this test. Let's use the same exam score scenario.

# Exam scores

class_a <- c(78, 85, 90, 72, 88)

class_b <- c(65, 70, 68, 74, 60)

class_c <- c(88, 92, 95, 85, 91)

# Combine

scores <- c(class_a, class_b, class_c)

groups <- factor(rep(c("A", "B", "C"), each = 5))

# Run the test

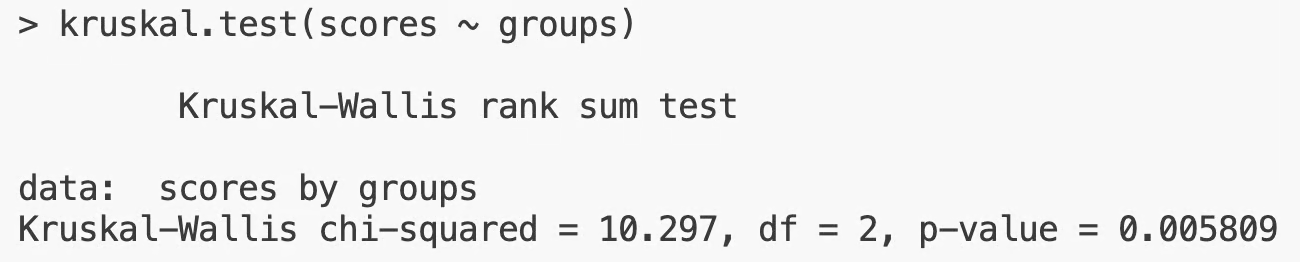

kruskal.test(scores ~ groups)

R output

The output is the same as what I got in Python - same H statistic, same p-value. With p < 0.05, you'd reject the null hypothesis and conclude that at least one group differs.

How to Interpret Kruskal-Wallis Results

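The null hypothesis of the Kruskal-Wallis test is that all groups come from the same distribution. The p-value is the probability of getting an H at least as large as yours if that null hypothesis were true, so a small p-value is evidence against it. Here’s how to interpret it: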

- p < 0.05: At least one group differs from the others, so reject the null hypothesis

- p >= 0.05: There is no strong evidence that the groups differ, so don't reject the null hypothesis

The 0.05 threshold is a convention. Depending on your field or the stakes of your analysis, you might use a stricter threshold like 0.01 or a looser one like 0.10.
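In code, the decision is just a comparison between the p-value and the threshold you picked. This sketch reuses the exam-score data and loops over the three thresholds mentioned above; `alpha` is simply the conventional name for that threshold.

```python
from scipy import stats

class_a = [78, 85, 90, 72, 88]
class_b = [65, 70, 68, 74, 60]
class_c = [88, 92, 95, 85, 91]

result = stats.kruskal(class_a, class_b, class_c)

# Compare the same p-value against several significance thresholds (alpha)
decisions = {}
for alpha in (0.01, 0.05, 0.10):
    reject = result.pvalue < alpha
    decisions[alpha] = reject
    verdict = "reject" if reject else "don't reject"
    print(f"alpha = {alpha:.2f}: p = {result.pvalue:.4f} -> {verdict} the null hypothesis")
```

With this data the p-value is small enough to reject the null hypothesis at all three thresholds.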

Keep in mind this test won’t tell you which group is different. A significant result just means the groups aren't all the same. You know something is going on, but not where. To find out which pairs are driving the difference, you need a post hoc test.

Post Hoc Tests After Kruskal-Wallis

The test tells you that at least one group differs, but not which group is actually different. If you have three groups and p < 0.05, it could be A versus B, A versus C, B versus C, or some combination. You need to perform a post hoc test to get these pairwise comparisons.

Dunn's test is the most common choice. It runs pairwise comparisons between all groups and adjusts the p-values to account for multiple comparisons - without that adjustment, you'd inflate the chance of a false positive. The more comparisons you run, the higher the risk of finding a "significant" result by chance alone.
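To see what the adjustment does, here's a hand-rolled sketch of Dunn's test with a Bonferroni correction. It uses the usual Dunn z-statistic (the difference in mean ranks divided by a standard error that includes a tie adjustment), converts it to a two-sided p-value, then multiplies by the number of comparisons, capping the result at 1. The `dunn_bonferroni` helper is hypothetical and meant for understanding only; for real work, use one of the libraries covered in the following subsections. On the exam-score data it should reproduce the adjusted p-values reported for Python below (0.167, 0.607, and 0.004).

```python
from itertools import combinations

import numpy as np
from scipy import stats

def dunn_bonferroni(groups):
    """Hypothetical helper: Dunn's test with Bonferroni-adjusted two-sided p-values."""
    labels = list(groups)
    values = np.concatenate([np.asarray(groups[g], dtype=float) for g in labels])
    n_total = values.size
    ranks = stats.rankdata(values)  # average ranks for ties

    # Mean rank and size of each group
    sizes = {g: len(groups[g]) for g in labels}
    bounds = np.cumsum([sizes[g] for g in labels])[:-1]
    mean_ranks = {g: r.mean() for g, r in zip(labels, np.split(ranks, bounds))}

    # Variance term, reduced slightly when there are tied values
    _, counts = np.unique(values, return_counts=True)
    tie_adjust = np.sum(counts ** 3 - counts) / (12 * (n_total - 1))
    variance = n_total * (n_total + 1) / 12 - tie_adjust

    pairs = list(combinations(labels, 2))
    adjusted = {}
    for a, b in pairs:
        se = np.sqrt(variance * (1 / sizes[a] + 1 / sizes[b]))
        z = abs(mean_ranks[a] - mean_ranks[b]) / se
        p = 2 * stats.norm.sf(z)  # two-sided p-value
        adjusted[(a, b)] = min(1.0, p * len(pairs))  # Bonferroni, capped at 1
    return adjusted

scores = {
    "A": [78, 85, 90, 72, 88],
    "B": [65, 70, 68, 74, 60],
    "C": [88, 92, 95, 85, 91],
}
for (a, b), p in dunn_bonferroni(scores).items():
    print(f"{a} vs {b}: adjusted p = {p:.3f}")
```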

Dunn's test in Python

You'll need the scikit_posthocs library for this. If you don't have it, install it with pip install scikit-posthocs.

From there, the calculation is simple:

import scikit_posthocs as sp

import pandas as pd

# Same exam scores as before

class_a = [78, 85, 90, 72, 88]

class_b = [65, 70, 68, 74, 60]

class_c = [88, 92, 95, 85, 91]

# Combine

scores = class_a + class_b + class_c

groups = ["A"] * 5 + ["B"] * 5 + ["C"] * 5

df = pd.DataFrame({"score": scores, "group": groups})

# Run the test

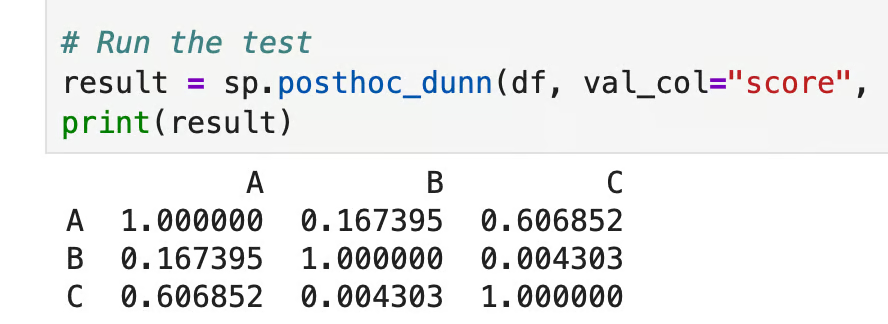

result = sp.posthoc_dunn(df, val_col="score", group_col="group", p_adjust="bonferroni")

print(result)

Dunn’s test in Python

Each cell shows the adjusted p-value for that pair. Here, only B versus C (p = 0.004) crosses the 0.05 threshold, so those two groups differ. A versus B (p = 0.167) and A versus C (p = 0.607) don't, which means class A isn't statistically different from either of the other two classes.

Dunn's test in R

To start, install the library if needed with the install.packages("dunn.test") command:

library(dunn.test)

# Same exam scores as before

class_a <- c(78, 85, 90, 72, 88)

class_b <- c(65, 70, 68, 74, 60)

class_c <- c(88, 92, 95, 85, 91)

scores <- c(class_a, class_b, class_c)

groups <- factor(rep(c("A", "B", "C"), each = 5))

# Run the test

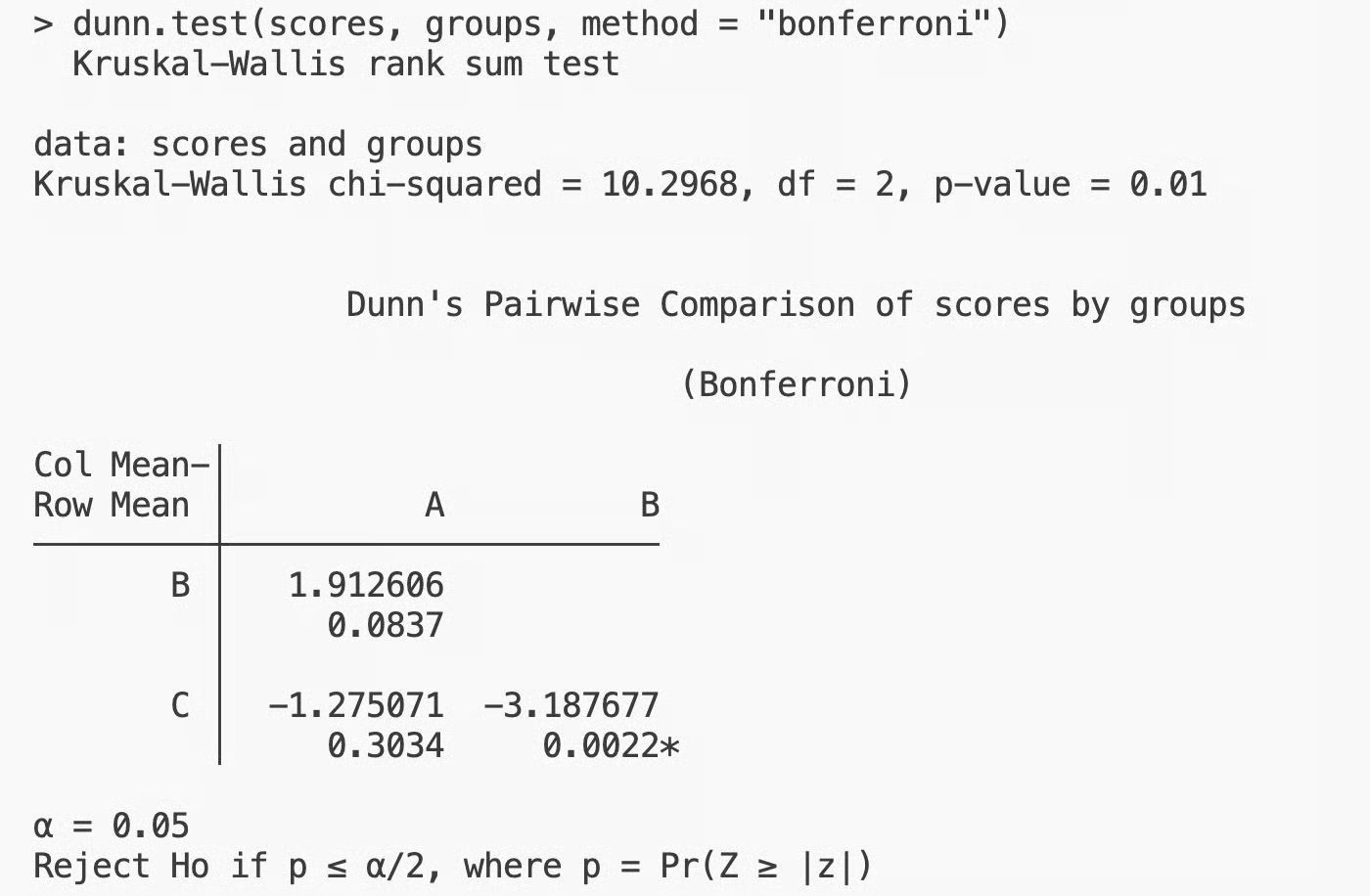

dunn.test(scores, groups, method = "bonferroni")

Dunn’s test in R

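The conclusions match Python, as you would expect. Only B versus C is significant, while A versus B and A versus C aren't. Class B and class C are the ones behind the difference detected by the Kruskal-Wallis test. One caveat: by default, dunn.test prints one-sided p-values, P(Z ≥ |z|), and flags significance at alpha/2, so its numbers are half the two-sided values scikit-posthocs reports. Pass altp = TRUE if you want the p-values themselves to line up.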

Assumptions of the Kruskal-Wallis Test

The Kruskal-Wallis test is more flexible than ANOVA, but it still has three assumptions you need to check before running it:

- Independent samples: Observations in one group don't influence observations in another. If your data is paired or repeated measures, this test isn't the right fit

- Ordinal or continuous data: The test needs data you can rank. Nominal categories (like colors or labels) can't be ranked, so they won't work here

- Similar distribution shapes: If you want to interpret the results as a comparison of medians rather than just distributions, the groups need to have roughly the same shape. If the shapes differ a lot, you can still compare distributions, but the median interpretation doesn't hold

If you violate the first two assumptions, the test results won’t be valid. The third assumption is somewhat softer, as it affects how you interpret the results, not whether you can run the test at all.

When You Should Not Use the Kruskal-Wallis Test

There are three cases where a different test would be a better fit:

- Your data is paired or repeated measures: If the same subjects appear across groups, use the Friedman test instead. It's the nonparametric equivalent designed for dependent samples. Using Kruskal-Wallis on paired data ignores the relationship between observations and can lead to wrong conclusions

- Your data meets ANOVA's assumptions: If your data is normally distributed with roughly equal variances, ANOVA is the better choice. It's more statistically powerful, which means it's better at detecting real differences when they exist

- Your sample sizes are large: With large samples, parametric methods tend to work well even when the data isn't perfectly normal. The central limit theorem does its thing, so ANOVA will usually give you reliable results that are easier to interpret, because they're about means. If you're working with hundreds or thousands of observations per group, Kruskal-Wallis often isn't necessary
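For the paired case in the first bullet, SciPy provides `scipy.stats.friedmanchisquare`. The scores below are hypothetical: five students, each measured in three different weeks, so position i in every list is the same student. Every student improves from week to week, but the students differ a lot from each other, which is exactly the situation where ignoring the pairing hurts.

```python
from scipy import stats

# Hypothetical paired data: the same five students measured in three weeks
# (position i in each list is the same student)
week_1 = [70, 65, 80, 72, 60]
week_2 = [74, 68, 83, 75, 66]
week_3 = [79, 71, 88, 77, 70]

# Friedman test: ranks within each student, so it respects the pairing
friedman = stats.friedmanchisquare(week_1, week_2, week_3)

# Kruskal-Wallis on the same numbers wrongly treats the weeks as independent groups
kw = stats.kruskal(week_1, week_2, week_3)

print(f"Friedman:       statistic = {friedman.statistic:.2f}, p = {friedman.pvalue:.4f}")
print(f"Kruskal-Wallis: H = {kw.statistic:.2f}, p = {kw.pvalue:.4f}")
```

On this made-up data, the Friedman statistic is 10.0 (p ≈ 0.0067), while Kruskal-Wallis gives p ≈ 0.33: the differences between students swamp the consistent within-student improvement once the pairing is thrown away.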

Conclusion

The Kruskal-Wallis test compares three or more independent groups when your data doesn't follow the normal distribution required by tests like ANOVA. This is possible because it works on ranks instead of raw values.

That said, it's not a replacement for ANOVA. If your data is normal, ANOVA is the better test because it has more statistical power. On the other hand, if your data is paired, use the Friedman test. As always, the right test depends on your data.

When its assumptions hold, the Kruskal-Wallis test is a reliable and straightforward choice. Run it, check the p-value, and follow up with Dunn's test if you need to know which groups are behind the difference.


Kruskal-Wallis Test FAQs

What is the Kruskal-Wallis test used for?

The Kruskal-Wallis test is used to compare three or more independent groups when you can't assume your data follows a normal distribution. It's a nonparametric alternative to ANOVA that works on ranked data instead of raw values. You'll find it useful in situations where distributions are skewed or data is ordinal.

What does a significant Kruskal-Wallis result mean?

A significant result - typically p < 0.05 - means at least one group differs from the others. It doesn't tell you which groups are different, just that they're not all the same. To find out which pairs are behind the difference, you need to follow up with a post hoc test like Dunn's test.

What are the assumptions of the Kruskal-Wallis test?

The test requires independent samples, meaning observations in one group don't influence observations in another. Your data needs to be ordinal or continuous - something you can rank. If you want to interpret results as a comparison of medians, the groups should also have similar distribution shapes.

What is the difference between the Kruskal-Wallis test and the Mann-Whitney U test?

The Mann-Whitney U test compares two independent groups, while the Kruskal-Wallis test extends that approach to three or more groups. Both work on ranked data and don't assume normality. If you only have two groups, Mann-Whitney U is the right choice - Kruskal-Wallis is its multi-group equivalent.

When should you use Dunn's test after Kruskal-Wallis?

Run Dunn's test when your Kruskal-Wallis result is significant and you need to know which specific pairs of groups differ. It performs pairwise comparisons between all groups and adjusts the p-values to reduce the chance of false positives. In Python, scikit_posthocs.posthoc_dunn() does this, and in R, the dunn.test package gives you the same functionality.