AI literacy is no longer optional. According to our State of Data & AI Literacy Report 2026, 69% of leaders believe AI literacy is important for their teams' daily tasks. The problem is that "learn AI" is an instruction so broad it's hard to unpack. Where do you actually start?

This guide is for people who are completely new to AI and want a clear, structured learning path, not a random pile of links. If you want a deeper dive, I recommend checking out our full 'How to Learn AI' guide.

Whether you're a career-switcher, a business professional trying to upskill, or someone who's been meaning to learn this stuff for two years and keeps putting it off, the resources below are chosen to take you from zero to genuine practitioner. That means covering the theory (machine learning, deep learning, neural networks), the prerequisites (Python, statistics, math), and the modern application layer (LLMs, prompt engineering, RAG, fine-tuning, agentic AI).

I've organized these into a rough sequence. You don't have to follow it rigidly, but if you're starting from scratch, working through them in order will save you a lot of confusion. Each entry includes what you'll actually learn, how long it takes, and who it's best suited for. If you're totally new, I'd say the best place to start is with our new AI-native Introduction to AI for Work course, which gives you your very own AI tutor that will tailor the course to your learning style and needs.

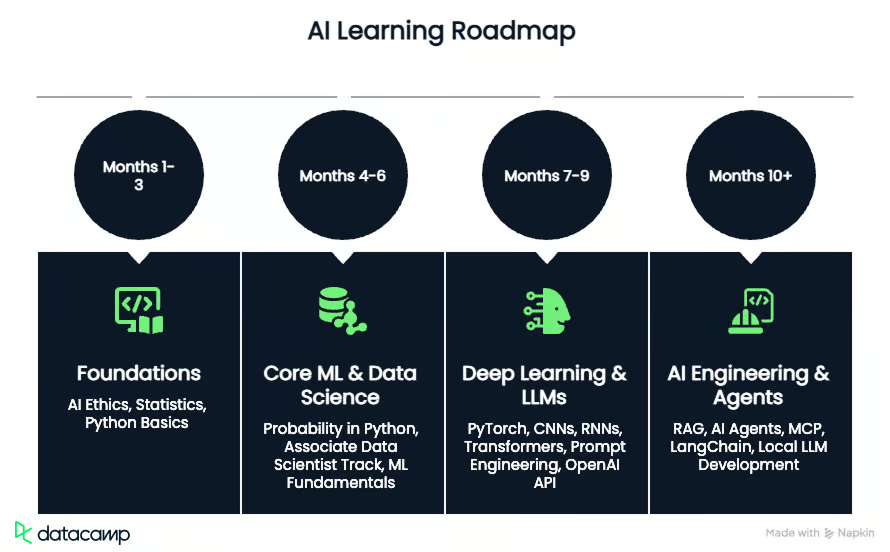

AI Roadmap: TL;DR

If you want to go from absolute beginner to building autonomous AI agents, here is the high-level path (assuming roughly 10 hours of study per week):

- Months 1-3: Foundations. Grasp the core AI concepts, ethics, and foundational math, then learn Python and data manipulation (pandas).

- Months 4-6: Core Machine Learning. Connect statistics to Python and master classical ML models (regression, classification) using scikit-learn.

- Months 7-9: Deep Learning & LLMs. Dive into neural networks and Transformers with PyTorch, then learn to integrate and prompt models programmatically via the OpenAI API and Hugging Face.

- Months 10+: AI Engineering & Agents. Move beyond static models to build production-ready systems using RAG, LangChain, and autonomous agents (MCP, Claude Code, Gemini CLI).

Suggested AI Learning Roadmap

Here's how I'd sequence a learning plan based on our resources if you're starting AI from zero. The timelines are approximate and assume roughly 10 hours of study per week.

Stage 1: Foundations (months 1-3)

Start with Introduction to AI for Work, the AI Fundamentals skill track, and AI Ethics to build your conceptual vocabulary and governance framework. These require no coding and give you the mental model you'll need to make sense of everything that follows. Run them in parallel with Introduction to Statistics and the Demystifying Mathematical Concepts for Deep Learning tutorial.

Once you have the theory, move into Python. Work through the Python Data Structures tutorial, then the Python Programming skill track, and then the Data Manipulation in Python skill track. By the end of month three, you should be comfortable writing Python, manipulating DataFrames with pandas, and understanding what a probability distribution is, all of which are crucial for a deeper understanding of AI.

Stage 2: Core ML and data science (months 4-6)

This is where the real work begins. Take Foundations of Probability in Python to connect your statistics knowledge to code, then start the Associate Data Scientist in Python career track. You don't need to complete all 90 hours in this stage, but work through the data manipulation, visualization, and supervised learning sections. The scikit-learn courses in particular are essential.

Alongside this, work through the Machine Learning Fundamentals in Python skill track. The supervised and unsupervised learning courses overlap with the career track, so you'll reinforce the same concepts from two angles. By month six, you should be able to train, evaluate, and tune a classification or regression model.

Stage 3: Deep learning and modern LLMs (months 7-9)

Now move into the Deep Learning in Python skill track. Work through it in order: PyTorch basics, CNNs, RNNs, and then the Transformer Models course. The Transformer course is the bridge between classical deep learning and modern LLMs.

Once you understand what's happening under the hood, you can start interacting with these models effectively. Of course, if you're more interested in building with AI than in training models from scratch, you can skip the deep learning math and treat LLMs as a powerful utility. Start by completing Prompt Engineering with the OpenAI API and Working with Hugging Face to learn how to get the most out of them. Then make the leap from web interfaces to actual software by completing Developing AI Systems with the OpenAI API.

Stage 4: AI Engineering and Agents (months 10 onwards)

This stage is about building complex, production-ready AI systems. Start with Retrieval-Augmented Generation (RAG) with LangChain so you can connect LLMs to your private data.

From there, shift from passive generation to active task execution with the AI Agent Fundamentals skill track and the Introduction to the Model Context Protocol (MCP) tutorial. Finally, apply these modern workflows to your own local development by walking through the Claude Code and Building with Gemini 3.1 Pro: Coding Agent tutorials.

The Best Resources for the AI Learning Roadmap

These resources are ordered to reflect a sensible learning sequence, from conceptual foundations through to hands-on model building and modern LLM applications. That said, if you already have Python experience, feel free to skip ahead.

1. Introduction to AI for Work

If you're not a developer and just want to understand what AI is and how to use it responsibly at work, start here. This 2-3 hour course covers what large language models are, how generative AI works, and how to write effective prompts using a four-component framework built around ask, requirements, context, and examples.

What I like most about this course (and what makes it stand out from other intro to AI courses) is that it uses DataCamp’s new AI-native learning experience. You don't just get static video content followed by exercises; the platform acts as a 1-on-1 AI tutor. It dynamically generates lessons, examples, and exercises tailored to your specific job role, goals, and prior knowledge.

For example, if you're a marketer, your examples will reflect marketing workflows. It also adapts to your pace, meaning the typical 2-3 hour runtime will flex depending on how quickly you grasp the material.

Beyond the tailored experience, I like the practical framing. It teaches you to identify which of four AI capabilities (Execution, Thought Partnership, Refinement, and Continuous Learning) applies to a given task. It also covers AI limitations, hallucinations, and responsible use, which is often skipped in beginner content.

- Level: Beginner

- Format: Course, 2-3 hours

- Who it's for: Business professionals, marketers, analysts, or anyone who wants to use AI tools more effectively without writing code

2. AI Fundamentals skill track

This 10-hour track is the conceptual backbone of any AI learning journey. It covers six courses: Introduction to AI for Work, Understanding ChatGPT, Understanding Machine Learning, Large Language Models Concepts, Generative AI Concepts, and AI Ethics. No coding required.

The track is designed to give you a working vocabulary for the entire AI landscape, from how machine learning algorithms learn patterns to how LLMs like ChatGPT are trained and deployed. The AI Ethics course at the end is worth taking seriously, not just skimming. Completing the track also prepares you for the AI Fundamentals certification.

- Level: Beginner

- Format: Skill track, 10 hours, 6 courses

- Who it's for: Anyone who wants a solid conceptual foundation before writing a single line of code

3. Introduction to Statistics

Statistics is the language that AI speaks. Before you can understand why a model is making predictions, you need to understand probability distributions, hypothesis testing, and measures of spread. This 4-hour course covers all of that using real-world datasets, including London crime data and online retail sales.

The course has over 8,000 reviews and a 4.8 rating, which is unusually high for a statistics course. It covers summary statistics, probability, the normal distribution, the central limit theorem, and correlation, all without requiring any coding. Think of it as the theoretical grounding that makes everything else click.

- Level: Beginner

- Format: Course, 4 hours, 56 exercises

- Who it's for: Anyone who skipped statistics in school or wants a refresher before diving into machine learning

4. Demystifying Mathematical Concepts for Deep Learning

This tutorial is the most direct answer to the question "how much math do I actually need?" It covers scalars, vectors, matrices, tensors, eigenvalues, singular value decomposition, gradient descent, and entropy, all with Python code using NumPy and SciPy. It's not a full linear algebra course, but it gives you enough to understand what's happening inside a neural network.

Gradient descent alone is worth the read. The tutorial explains all three variants (full batch, stochastic, and mini-batch) and shows how they're used to train neural networks. If you've ever wondered why training a model involves "minimizing a loss function," this is where that concept becomes concrete.

- Level: Beginner to Intermediate

- Format: Tutorial, approximately 15 minutes to read

- Who it's for: Learners who want to understand the math behind deep learning without committing to a full linear algebra course
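
To make the three gradient descent variants concrete, here is a small NumPy sketch (not code from the tutorial) that fits a straight line by minimizing mean squared error. The only thing that changes between variants is how many examples feed each update; the `gradient_descent` function and the synthetic data are hypothetical.

```python
import numpy as np


def gradient_descent(X, y, lr=0.1, epochs=100, batch_size=None, seed=0):
    """Fit y ~ X @ w + b by minimizing mean squared error (MSE).

    batch_size=None -> full-batch gradient descent (all examples per update)
    batch_size=1    -> stochastic gradient descent (one example per update)
    batch_size=k    -> mini-batch gradient descent (k examples per update)
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    size = n if batch_size is None else batch_size
    for _ in range(epochs):
        order = rng.permutation(n)  # reshuffle examples each epoch
        for start in range(0, n, size):
            idx = order[start:start + size]
            error = X[idx] @ w + b - y[idx]           # prediction minus target
            grad_w = 2 * X[idx].T @ error / len(idx)  # dMSE/dw
            grad_b = 2 * error.mean()                 # dMSE/db
            w -= lr * grad_w                          # step downhill
            b -= lr * grad_b
    return w, b


# Synthetic data: y = 3x + 2 plus a little noise
rng = np.random.default_rng(42)
X = rng.uniform(-1, 1, size=(200, 1))
y = 3 * X[:, 0] + 2 + rng.normal(0, 0.1, size=200)

results = {}
for name, bs in [("full batch", None), ("stochastic", 1), ("mini-batch", 32)]:
    w, b = gradient_descent(X, y, lr=0.1, epochs=100, batch_size=bs)
    results[name] = (w[0], b)
    print(f"{name:>10}: w={w[0]:.2f}, b={b:.2f}")
```

All three should land close to the true slope of 3 and intercept of 2; the trade-off is that full-batch steps are smooth but expensive, while stochastic and mini-batch steps are cheaper but noisier.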

5. Foundations of Probability in Python

This 5-hour course picks up where the statistics course leaves off, adding Python code to the probability concepts. It covers Bernoulli trials, binomial distributions, normal distributions, Poisson distributions, the law of large numbers, and the central limit theorem, and then connects all of it to linear and logistic regression.

The final chapter is the most useful for ML practitioners. It shows how the law of large numbers explains why sample means converge to population means, and how the central limit theorem describes the approximately normal distribution of those sample means. Together, they are part of the theoretical basis for why training on large datasets works. The course uses scipy throughout, which is the same library you'll encounter in most ML codebases.

- Level: Intermediate

- Format: Course, 5 hours, 61 exercises

- Who it's for: Learners who have completed Introduction to Statistics and want to apply probability concepts in Python
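
Here is a short sketch of the kinds of calculations the course covers, using `scipy.stats` and NumPy. The specific numbers (a fair coin, a Poisson rate of 2, samples of size 50) are arbitrary choices for illustration.

```python
import numpy as np
from scipy import stats

# Binomial: probability of exactly 7 heads in 10 fair coin flips
p_7_heads = stats.binom.pmf(7, n=10, p=0.5)

# Poisson: probability of 0 events when the average rate is 2 per interval
p_zero = stats.poisson.pmf(0, mu=2)

rng = np.random.default_rng(0)

# Law of large numbers: the running mean of fair die rolls approaches 3.5
rolls = rng.integers(1, 7, size=100_000)
running_mean = rolls.cumsum() / np.arange(1, rolls.size + 1)

# Central limit theorem: means of many samples drawn from a skewed
# (exponential) distribution are themselves approximately normal
sample_means = rng.exponential(scale=1.0, size=(10_000, 50)).mean(axis=1)

print(f"P(7 heads) = {p_7_heads:.4f}")
print(f"P(0 events) = {p_zero:.4f}")
print(f"mean after 10 rolls: {running_mean[9]:.2f}, after 100,000: {running_mean[-1]:.3f}")
print(f"sample means: mean={sample_means.mean():.3f}, sd={sample_means.std():.3f}, "
      f"skew={stats.skew(sample_means):.2f}")
```

The skew of the sample means comes out far smaller than the skew of 2 for the exponential distribution itself, which is the central limit theorem at work.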

6. Python Programming skill track

Python is the dominant language in AI, and this 19-hour track takes you beyond the basics into the kind of code that actually gets used in production. It covers context managers, decorators, writing efficient code, software engineering principles, automated testing with pytest, and object-oriented programming.

The track uses packages including pandas, NumPy, setuptools, pytest, and pycodestyle. If you're coming from a data analysis background and your Python is functional but messy, this is the track that will clean it up. Writing testable, modular code matters a lot once you start building ML pipelines.

- Level: Beginner to Intermediate

- Format: Skill track, 19 hours, 4 courses

- Who it's for: Learners who know basic Python and want to write code that's maintainable and production-ready

7. Python Data Structures tutorial

A short but essential read that covers every data structure you'll encounter in AI work: integers, floats, strings, booleans, arrays, lists, tuples, dictionaries, sets, stacks, queues, graphs, and trees. The tutorial includes working Python code for each structure and explains when to use one over another.

The section on NumPy arrays is particularly relevant. It explains why NumPy arrays are faster than Python lists for large datasets, how vectorised operations work, and how to create multi-dimensional arrays. That knowledge pays off immediately when you start working with ML libraries.

- Level: Beginner

- Format: Tutorial, approximately 15 minutes to read

- Who it's for: Beginners who want a clear reference for Python data structures before moving into data manipulation
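
To see the list-versus-array difference for yourself, here is a quick sketch. Exact timings depend on your machine, but the vectorised version is typically much faster. The last few lines show a multi-dimensional array and how `collections.deque` covers both stacks and queues.

```python
import time
from collections import deque

import numpy as np

# A list stores references to separate Python objects; a NumPy array stores
# one fixed dtype in a contiguous block, so arithmetic runs in compiled code.
values = list(range(1_000_000))
array = np.arange(1_000_000, dtype=np.int64)

start = time.perf_counter()
squared_list = [v * v for v in values]   # element-by-element Python loop
list_time = time.perf_counter() - start

start = time.perf_counter()
squared_array = array * array            # one vectorised operation
array_time = time.perf_counter() - start

print(f"list: {list_time:.4f}s, array: {array_time:.4f}s")

# Multi-dimensional arrays: a 2 x 3 matrix and its column means
matrix = np.array([[1, 2, 3], [4, 5, 6]])
print(matrix.shape, matrix.mean(axis=0))  # (2, 3) [2.5 3.5 4.5]

# deque works as a stack (last in, first out) or a queue (first in, first out)
stack = deque([1, 2, 3])
queue = deque([1, 2, 3])
print(stack.pop(), queue.popleft())       # 3 1
```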

8. Data Manipulation in Python skill track

Before you can train a model, you need to be able to clean, reshape, and analyse data. This 16-hour track covers pandas and NumPy in depth, using real-world datasets including New York City's tree census, customer purchase data, and stock market prices. It includes four courses: Data Manipulation with pandas, Reshaping Data with pandas, Joining Data with pandas, and Introduction to NumPy.

The pandas skills here are genuinely foundational. Filtering DataFrames, merging datasets, handling missing values, and reshaping from wide to long format are tasks you'll do constantly in any ML project. The NumPy course adds array operations that feed directly into scikit-learn and PyTorch workflows.

- Level: Beginner

- Format: Skill track, 16 hours, 4 courses

- Who it's for: Anyone who needs to prepare data for machine learning models
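
Here is a compact pandas sketch of the four tasks just mentioned: handling missing values, merging, filtering, and reshaping wide data to long. The customer and purchase tables are made up for illustration.

```python
import pandas as pd

# Hypothetical customer and quarterly purchase data
customers = pd.DataFrame({
    "customer_id": [1, 2, 3, 4],
    "region": ["North", "South", "North", None],
})
purchases = pd.DataFrame({
    "customer_id": [1, 2, 3, 4],
    "q1": [120.0, 80.0, None, 45.0],
    "q2": [150.0, 95.0, 60.0, None],
})

# Handle missing values: label unknown regions, treat missing spend as 0
customers["region"] = customers["region"].fillna("Unknown")
purchases[["q1", "q2"]] = purchases[["q1", "q2"]].fillna(0)

# Merge the two tables on their shared key
merged = customers.merge(purchases, on="customer_id", how="left")

# Filter rows: customers in the North region
north = merged[merged["region"] == "North"]

# Reshape wide (one column per quarter) to long (one row per customer-quarter)
long_format = merged.melt(
    id_vars=["customer_id", "region"],
    value_vars=["q1", "q2"],
    var_name="quarter",
    value_name="spend",
)
print(long_format.groupby("quarter")["spend"].sum())
```

Filling missing spend with 0 is a modelling choice for this example; in a real project, you'd decide whether a missing value means "no purchase" or "unknown".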

9. Associate Data Scientist in Python career track

This is the most comprehensive single resource on the list. At 90 hours across 23 courses, it covers the full data science workflow in Python: data manipulation, visualisation with Matplotlib and Seaborn, statistical hypothesis testing, regression with statsmodels, supervised learning with scikit-learn, unsupervised learning, and tree-based models. It also includes 10 real-world projects.

The track prepares you for the Associate Data Scientist certification. What I find most useful about it is the project work. Projects like "Predictive Modeling for Agriculture" and "Clustering Antarctic Penguin Species" give you portfolio pieces that demonstrate you can apply ML to real problems, not just complete exercises.

- Level: Beginner to Intermediate

- Format: Career track, 90 hours, 23 courses, 10 projects

- Who it's for: Learners who want a structured, end-to-end path to becoming a working data scientist
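
For a feel of what "train, evaluate, and tune" looks like in scikit-learn, here is a minimal sketch using the breast cancer dataset bundled with the library and a decision tree. The parameter grid is an arbitrary example, not a recommendation from the track.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.metrics import accuracy_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

# Hold out a test set so the final score reflects unseen data
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Tune tree depth and leaf size with 5-fold cross-validation on the training set only
search = GridSearchCV(
    DecisionTreeClassifier(random_state=42),
    param_grid={"max_depth": [2, 3, 5, None], "min_samples_leaf": [1, 5, 10]},
    cv=5,
    scoring="accuracy",
)
search.fit(X_train, y_train)

test_accuracy = accuracy_score(y_test, search.predict(X_test))
print("best params:", search.best_params_)
print(f"cross-validated accuracy: {search.best_score_:.3f}")
print(f"test accuracy: {test_accuracy:.3f}")
```

Keeping the test set out of `GridSearchCV` is the key design choice: the cross-validated score guides tuning, while the held-out score tells you how the tuned model performs on data it has never seen.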

10. Machine Learning Fundamentals in Python skill track

This 16-hour track covers the four main branches of machine learning: supervised learning with scikit-learn, unsupervised learning with scikit-learn and scipy, deep learning with PyTorch, and reinforcement learning with the Gymnasium library. It's the most direct path to understanding how ML models actually work.

The PyTorch section is where things get interesting. You build your first neural network from scratch, learn backpropagation and gradient descent in code, and apply deep learning to image classification and sentiment analysis. The reinforcement learning course at the end covers Q-learning and policy gradients, which are foundational techniques for training agents that learn by trial and error.

- Level: Intermediate

- Format: Skill track, 16 hours, 4 courses

- Who it's for: Learners who have Python and statistics foundations and are ready to build real ML models
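
The track runs reinforcement learning through Gymnasium; to show the core Q-learning update without any dependencies, here is a pure-Python sketch on a hypothetical five-cell corridor where the agent is rewarded for reaching the right-hand end. The environment and hyperparameters are invented for illustration.

```python
import random

# Hypothetical corridor: cells 0..4, the agent starts in cell 0, reaching cell 4 pays +1
N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # index 0 = move left, index 1 = move right


def step(state, action):
    """Apply a move, clamped to the corridor, and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore at random (or on ties), otherwise take the best action
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update: nudge Q(s, a) toward r + gamma * max over a' of Q(s', a')
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q = q_learning()
policy = ["left" if q[s][0] > q[s][1] else "right" for s in range(GOAL)]
print(policy)
```

After training, the greedy policy should point right in every cell, because discounting by `gamma` makes the shortest route to the reward the most valuable.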

11. Deep Learning in Python skill track

This 18-hour track goes deeper into neural network architectures using PyTorch. It covers CNNs for image classification, RNNs and LSTMs for sequential data, object detection, image segmentation, and text generation. The final course on Transformer Models with PyTorch is the one that connects everything to modern LLMs like ChatGPT.

The Transformer course is worth highlighting specifically. It explains how the attention mechanism works, why transformers replaced RNNs for most NLP tasks, and how the architecture underpins GPT-style models. If you want to understand why LLMs behave the way they do, this is where that understanding comes from.

- Level: Intermediate to Advanced

- Format: Skill track, 18 hours, 5 courses

- Who it's for: Learners who have completed the ML Fundamentals track and want to specialise in deep learning
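
The attention mechanism mentioned above boils down to one formula, softmax(QK^T / sqrt(d_k))V. Here is a single-head NumPy sketch with a causal mask of the kind GPT-style models use. The random embeddings and projection matrices stand in for learned weights, and real implementations add multiple heads, batching, and trained parameters.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def scaled_dot_product_attention(Q, K, V, causal=False):
    """softmax(Q K^T / sqrt(d_k)) V for a single attention head."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)  # (seq_len, seq_len) similarity scores
    if causal:
        # GPT-style masking: token i may only attend to tokens 0..i
        mask = np.triu(np.ones_like(scores, dtype=bool), k=1)
        scores = np.where(mask, -np.inf, scores)
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights


rng = np.random.default_rng(0)
seq_len, d_model = 4, 8
x = rng.normal(size=(seq_len, d_model))  # hypothetical token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

output, weights = scaled_dot_product_attention(x @ W_q, x @ W_k, x @ W_v, causal=True)
print(output.shape)          # (4, 8)
print(np.round(weights, 2))  # entries above the diagonal are 0 because of the mask
```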

12. Prompt Engineering with the OpenAI API

Once you understand how LLMs work conceptually, this 4-hour course teaches you how to get reliable outputs from them. It covers zero-shot, one-shot, and few-shot prompting, chain-of-thought reasoning, self-consistency prompting, multi-step prompting, and iterative refinement. All exercises use the OpenAI API in Python.

The business applications chapter is the most practical section. It covers text summarisation, tone adjustment for email marketing, customer support ticket routing, and code generation with multi-step prompts. These are tasks that come up constantly in real workflows, and the course shows you how to design prompts that produce consistent, structured outputs.

- Level: Beginner

- Format: Course, 4 hours, 55 exercises

- Who it's for: Developers and data professionals who want to build reliable LLM-powered applications
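
To illustrate few-shot prompting, here is a sketch that builds a chat-style message list from an instruction, a couple of worked examples, and the new input. The ticket-routing task and the `few_shot_messages` helper are hypothetical; with the OpenAI Python SDK (v1 or later), a list like this is what you pass as the `messages` argument to `client.chat.completions.create`.

```python
def few_shot_messages(instruction, examples, query):
    """Build a chat-style few-shot prompt: instruction, worked examples, then the new input.

    `examples` is a list of (input_text, expected_output) pairs. An empty list
    gives a zero-shot prompt; a single pair gives a one-shot prompt.
    """
    messages = [{"role": "system", "content": instruction}]
    for text, label in examples:
        messages.append({"role": "user", "content": text})
        messages.append({"role": "assistant", "content": label})
    messages.append({"role": "user", "content": query})
    return messages


# Hypothetical support-ticket routing task
messages = few_shot_messages(
    instruction="Classify each support ticket as 'billing', 'technical', or 'other'. "
                "Reply with the category only.",
    examples=[
        ("I was charged twice this month.", "billing"),
        ("The app crashes when I upload a file.", "technical"),
    ],
    query="My invoice shows the wrong company name.",
)

for m in messages:
    print(f"{m['role']:>9}: {m['content']}")
```

Dropping the examples turns this into a zero-shot prompt and keeping one gives a one-shot prompt, so the same helper lets you compare the techniques side by side.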

13. Working with Hugging Face

Hugging Face is where most open-source AI development happens, and this 2-hour course teaches you how to navigate it. You'll load pre-trained models from the Hub, download and manipulate datasets, build text classification pipelines, summarise long documents, and use AutoModel and AutoTokenizer classes for custom NLP tasks.

The course also covers the difference between running inference locally versus through Hugging Face inference providers, which is a practical decision you'll face on every project. With over 28,000 learners and a 4.8 rating, it's one of the more popular courses in the AI catalogue for good reason.

- Level: Beginner

- Format: Course, 2 hours, 26 exercises

- Who it's for: Learners who want to use open-source models for NLP tasks without building everything from scratch

14. AI Agent Fundamentals skill track

If RAG is about giving AI a memory, agents are about giving it hands. This track shifts the approach from AI as a passive responder to AI as an active worker. You'll explore the architecture of autonomous agents, learning how to combine LLMs with tool-use, multi-step reasoning, and external APIs to execute complex workflows.

The evolution from static pipelines to agentic systems is where the industry is heading right now. This track provides the conceptual and practical groundwork for building systems that don't just answer questions, but actually complete tasks independently.

- Level: Advanced

- Format: Skill track, approximately 12 hours

- Who it's for: Advanced learners ready to move beyond conversational AI and build autonomous, action-oriented systems
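
Under the hood, most agents run a loop: ask the model what to do next, execute the tool it picks, feed the result back, and repeat until it produces a final answer. Here is a dependency-free sketch of that loop. `fake_llm` is a scripted stand-in for a real model, and both tools are hypothetical stubs.

```python
import json


# Hypothetical tools with stubbed results (no real weather or messaging API)
def get_weather(city):
    return {"city": city, "forecast": "rain"}


def send_message(to, text):
    return {"status": "sent", "to": to, "length": len(text)}


TOOLS = {"get_weather": get_weather, "send_message": send_message}


def fake_llm(history):
    """Scripted stand-in for a real model. A real agent would send `history`
    plus tool descriptions to an LLM and parse the tool call it returns."""
    observations = [m for m in history if m["role"] == "tool"]
    if not observations:
        return {"tool": "get_weather", "args": {"city": "Paris"}}
    if len(observations) == 1:
        forecast = json.loads(observations[0]["content"])["forecast"]
        return {"tool": "send_message",
                "args": {"to": "team", "text": f"Forecast is {forecast}: bring umbrellas."}}
    return {"final": "Checked the weather and warned the team."}


def run_agent(task, llm, tools, max_steps=5):
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):  # hard cap so the loop always terminates
        decision = llm(history)                               # reason: pick the next step
        if "final" in decision:
            return decision["final"], history
        result = tools[decision["tool"]](**decision["args"])  # act: run the chosen tool
        history.append({"role": "tool", "name": decision["tool"],
                        "content": json.dumps(result)})       # observe: feed the result back
    return "Stopped: step limit reached.", history


answer, history = run_agent("Warn the team if it will rain in Paris.", fake_llm, TOOLS)
print(answer)
for message in history:
    print(message)
```

The `max_steps` cap is a simple guardrail: if the model keeps requesting tools without converging, the loop stops instead of running forever.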

15. AI Ethics

As AI becomes more integrated into business and software, understanding how to deploy it responsibly is no longer optional. This course covers the core principles of AI ethics, such as fairness, transparency, accountability, and privacy, and provides actionable strategies to identify and mitigate bias in your datasets.

This course is relevant to a wide range of audiences, thanks to its focus on practical governance rather than abstract philosophy. You'll learn how to establish an ethical framework and examine real-world case studies of AI deployment gone wrong, giving you the tools to build user trust and deploy models responsibly before you start building complex systems.

- Level: Beginner

- Format: Course, 2 hours

- Who it's for: Anyone involved in building, deploying, or managing AI systems who wants to ensure they are creating equitable and compliant tools
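
One common way to start checking a model for bias is to compare outcome rates across groups. Here is a small sketch computing two widely used metrics, the demographic parity difference and the disparate impact ratio, on invented approval decisions. These are general fairness checks, not necessarily the exact methods taught in the course.

```python
from collections import defaultdict

# Invented model decisions: (group, approved) pairs, where 1 means approved
decisions = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 1), ("A", 1),
    ("B", 1), ("B", 0), ("B", 0), ("B", 1), ("B", 0),
]


def selection_rates(records):
    """Share of positive outcomes per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}


rates = selection_rates(decisions)
parity_gap = max(rates.values()) - min(rates.values())
impact_ratio = min(rates.values()) / max(rates.values())

print(rates)  # {'A': 0.8, 'B': 0.4}
print(f"demographic parity difference: {parity_gap:.2f}")
# The "four-fifths rule" heuristic flags ratios below 0.8 for review
print(f"disparate impact ratio: {impact_ratio:.2f}", "(flag)" if impact_ratio < 0.8 else "")
```

The 0.8 threshold is a rule of thumb rather than a guarantee of fairness; a flagged ratio is a prompt for investigation, not a verdict.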

16. Developing AI Systems with the OpenAI API

Moving beyond simple web interfaces, this course teaches you how to programmatically integrate OpenAI's models into your own applications. You'll learn how to authenticate, make API calls, handle responses, and manage token limits using Python. In effect, the course is the bridge between model theory and actual software development.

The transition from prompting in a chat window to orchestrating API calls in code is a massive leap in capability. This course gives you the architectural understanding of how production AI systems are actually built, making it an essential stepping stone before tackling more complex data retrieval frameworks.

- Level: Intermediate

- Format: Course, 4 hours

- Who it's for: Developers who want to transition from using AI chat tools to building their own AI-powered software
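
Here is a minimal sketch of a single API call with the OpenAI Python SDK (v1 or later) that caps the reply length and checks whether it was cut off. The model name and token limit are example values, and `ask` and `FakeClient` are hypothetical helpers; passing the client in as a parameter lets you test the function offline.

```python
from types import SimpleNamespace


def ask(client, prompt, model="gpt-4o-mini", max_tokens=200):
    """Send one prompt and return the reply text.

    `client` is expected to be an `openai.OpenAI()` instance, which reads the
    OPENAI_API_KEY environment variable to authenticate. The model name and
    token cap are example values; check the API reference for your model.
    """
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
        max_tokens=max_tokens,  # cap the reply length (and cost)
    )
    choice = response.choices[0]
    if choice.finish_reason == "length":
        # The model hit max_tokens, so the reply is cut off mid-thought
        print("warning: reply truncated; consider raising max_tokens")
    return choice.message.content


class FakeClient:
    """Offline stand-in that mimics the response shape, for tests without an API key."""

    def __init__(self, text, finish_reason="stop"):
        reply = SimpleNamespace(choices=[SimpleNamespace(
            message=SimpleNamespace(content=text), finish_reason=finish_reason)])
        self.chat = SimpleNamespace(
            completions=SimpleNamespace(create=lambda **kwargs: reply))


print(ask(FakeClient("Hello!"), "Say hello"))  # Hello!

# Real usage (requires `pip install openai` and an API key):
# from openai import OpenAI
# print(ask(OpenAI(), "Explain RAG in one sentence."))
```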

17. Retrieval-Augmented Generation (RAG) with LangChain

Large language models are great, but they don't know your proprietary data. This course introduces Retrieval-Augmented Generation (RAG), the industry-standard architecture for grounding AI responses in external documents. You'll learn how to chunk data, create vector embeddings, and use LangChain to orchestrate the flow of information from your database to the LLM.

What I like about this is how thoroughly it demystifies the "magic" of enterprise AI. By building data engines and indexing pipelines, you'll see exactly how applications like customer support chatbots pull the right context to avoid hallucinations.

- Level: Intermediate to Advanced

- Format: Course, 4 hours

- Who it's for: Developers looking to build AI applications that securely query and reason over private or domain-specific data
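
To demystify the pipeline, here is a dependency-free sketch of the three RAG steps: chunk and index documents, retrieve the most relevant chunk for a question, and augment the prompt with it. It deliberately uses a bag-of-words count vector as a stand-in for a learned embedding model and a Python list as a stand-in for a vector database; it does not use LangChain's API, and the documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text, size=12, overlap=4):
    """Split text into windows of `size` words, each overlapping the last by `overlap` words."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text):
    """Toy 'embedding': lowercase word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, index, k=1):
    """Return the k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Hypothetical private documents the base model has never seen
documents = [
    "Refunds are processed within 5 business days of receiving the returned item.",
    "Our support team is available Monday to Friday, 9am to 5pm UK time.",
    "Premium customers get free shipping on all orders over 20 pounds.",
]

# 1. Index: chunk each document and store (chunk, vector) pairs
index = [(c, embed(c)) for doc in documents for c in chunk(doc)]

# 2. Retrieve: find the chunk closest to the question
question = "How long do refunds take?"
context = retrieve(question, index, k=1)

# 3. Augment: ground the prompt in the retrieved text before sending it to an LLM
prompt = ("Answer using only the context below. If the answer is not there, say so.\n\n"
          "Context:\n" + "\n".join(context) + f"\n\nQuestion: {question}")
print(prompt)
```

Swapping the toy `embed` for a real embedding model and the list for a vector store is, conceptually, what a framework like LangChain manages for you.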

18. Introduction to the Model Context Protocol (MCP)

Often described as the "USB-C for AI," the Model Context Protocol is an open-source standard for connecting AI models to external data sources and tools. This tutorial walks you through the architecture of MCP and how to deploy both managed and custom servers, allowing your AI agents to query databases like BigQuery or interact with Google Maps without writing custom adapter code for every new tool.

The standardization MCP brings to the table is a game-changer for agentic AI. Instead of building bespoke integrations, you learn how to implement a data source once as an MCP server and use it seamlessly across any compliant AI client. It drastically reduces development friction.

- Level: Intermediate to Advanced

- Format: Tutorial, approximately 15 minutes to read

- Who it's for: AI developers and system architects looking to standardize how their agents connect to enterprise data and external services
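
MCP messages are JSON-RPC 2.0 requests and responses, so a tool server is, at its core, a dispatcher for methods such as `tools/list` and `tools/call`. Here is a toy, in-process sketch of that idea with a hypothetical `lookup_store_hours` tool. It leaves out the initialization handshake, the transport (stdio or HTTP), and proper error handling; a real server would normally be built with an official MCP SDK.

```python
import json


# Hypothetical tool exposed by a toy server, with stubbed data
def lookup_store_hours(city: str) -> str:
    hours = {"London": "9am-6pm", "Paris": "10am-7pm"}
    return hours.get(city, "unknown")


TOOLS = {
    "lookup_store_hours": {
        "fn": lookup_store_hours,
        "description": "Return opening hours for a city's store.",
        "inputSchema": {"type": "object",
                        "properties": {"city": {"type": "string"}},
                        "required": ["city"]},
    }
}


def handle(raw_request: str) -> str:
    """Dispatch one JSON-RPC 2.0 request for the tools/list and tools/call methods."""
    req = json.loads(raw_request)
    if req["method"] == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its arguments
        result = {"tools": [{"name": name, "description": t["description"],
                             "inputSchema": t["inputSchema"]} for name, t in TOOLS.items()]}
    elif req["method"] == "tools/call":
        tool = TOOLS[req["params"]["name"]]
        text = tool["fn"](**req["params"]["arguments"])
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # -32601 is the standard JSON-RPC "Method not found" error code
        return json.dumps({"jsonrpc": "2.0", "id": req["id"],
                           "error": {"code": -32601, "message": "Method not found"}})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


# What a client would send (over stdio or HTTP in a real MCP setup)
print(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
print(handle(json.dumps({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                         "params": {"name": "lookup_store_hours",
                                    "arguments": {"city": "London"}}})))
```

Because any compliant client can call `tools/list` to discover the tool and its input schema, the same server can be reused across clients without custom adapter code.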

19. Claude Code Tutorial

This tutorial brings AI assistance directly into your terminal, focusing on Anthropic's Claude Code CLI. You'll learn how to set up the environment, connect to GitHub, and implement an Explore-Plan-Execute workflow for safe, multi-file code refactoring.

The highlight here is "Plan Mode." Rather than letting the AI loose to make unguided edits across your codebase, this read-only phase forces the model to generate a reviewable markdown plan first. It’s a masterclass in adding guardrails to AI coding, and it helps contain the compounding errors that plague typical AI code generation.

- Level: Intermediate

- Format: Tutorial, approximately 15 minutes to read

- Who it's for: Software engineers wanting to embed AI deeply into their local development and refactoring workflows

20. Building with Gemini 3.1 Pro: Coding Agent Tutorial

This is where everything comes together in a modern development workflow. This tutorial teaches you how to use the Gemini CLI, powered by the state-of-the-art Gemini 3.1 Pro model, to build a production-ready Next.js application from scratch. You'll cover everything from initial architectural prompting to creating custom skills, managing persistent memory, and deploying to Vercel.

What stands out is the sheer practicality of the exercise. You're not just writing toy scripts; you're using an advanced, agentic workflow to handle database migrations, authentication, and unit testing. It's the ultimate demonstration of how a developer can act as a technical director while an AI agent handles the heavy lifting.

- Level: Advanced

- Format: Tutorial, approximately 20 minutes to read

- Who it's for: Developers who want to master AI-driven development by building full-stack applications with state-of-the-art models

How to Choose the Right AI Resource to Get Started

The list above is ordered for a complete beginner who wants a deep understanding of AI and how it works, but not everyone starts at the same point. Here's a quicker decision guide.

- If you have no coding experience: Start with Introduction to AI for Work, AI Ethics, and the AI Fundamentals skill track. Don't touch Python yet.

- If you know Python but not statistics: Go straight to Introduction to Statistics, then Foundations of Probability in Python.

- If you know Python and statistics: Skip to the Machine Learning Fundamentals in Python skill track and the Associate Data Scientist in Python career track.

- If you want to build software with LLMs: Take Developing AI Systems with the OpenAI API, followed immediately by Retrieval-Augmented Generation (RAG) with LangChain.

- If you want to build autonomous systems: The AI Agent Fundamentals skill track and the Introduction to the Model Context Protocol (MCP) tutorial are your next steps.

- If you are a software engineer looking to speed up local development: Read the Claude Code tutorial and the Building with Gemini 3.1 Pro: Coding Agent tutorial to see how AI integrates into CLI and full-stack workflows.

- If you want to understand how LLMs work internally: You need the Deep Learning in Python skill track, specifically the Transformer Models course.

- If you're a business professional who just wants to use AI tools: Introduction to AI for Work is the only course you strictly need.

Final Thoughts

For most people starting from scratch, the honest answer is: begin with the AI Fundamentals skill track and Introduction to Statistics, run them in parallel, and don't skip the math. The temptation is to jump straight to building RAG applications or AI agents, and you can do that, but you'll hit a ceiling quickly if you don't understand what's happening underneath.

One caveat worth naming: this list focuses on DataCamp resources, which means it's weighted toward structured, interactive learning rather than reading research papers or building open-source projects. Both of those matter for a long-term AI career, but they're harder to recommend as starting points. The resources here give you the foundation to engage with that broader ecosystem.

If you want a single place to start that covers the conceptual landscape without overwhelming you, I'd recommend the AI Fundamentals skill track. It's 10 hours, requires no coding, and by the end, you'll have a clear picture of where you want to go next.

A senior editor in the AI and edtech space. Committed to exploring data and AI trends.