
Update: GPT-5 was released on August 7, 2025, and we have published a dedicated article on GPT-5, in which we tested the model and provided an overview of the new features. We also have a guide to the GPT-5 API.

More than two years have passed since ChatGPT launched in November 2022. When I originally wrote this article on February 14, 2024, it had been just over a year, and OpenAI remained a dominant force in AI. Since then, the field has evolved, with Google’s Gemini, Anthropic’s Claude, and Meta’s LLaMA emerging as significant competitors.

On February 12, 2025, Sam Altman posted a roadmap on X, providing details about GPT-4.5 and GPT-5 and outlining plans to simplify OpenAI’s offerings under the concept of “magic unified intelligence.” I’m updating this article based on that information.

This article examines GPT-5, combining Altman’s recent statements with the progression of OpenAI’s earlier models.

What Is GPT-5?

Generative Pre-trained Transformer, or GPT, is a series of large language models (LLMs) developed by OpenAI that have significantly influenced both the ML and AI fields.

GPT, at its core, is designed to understand and generate human-like text based on the input it receives. These models are trained on vast datasets. The GPT family of models has been instrumental in popularizing LLM-based applications, setting new benchmarks for what is possible in natural language processing, generation, and beyond.

GPT-5 represents the next iteration in the GPT series. Some of you might be wondering what the next iteration means. Let's look at the history of GPT models so far:

GPT-1

In 2018, OpenAI introduced the concept of generative pre-training with GPT-1, using a transformer architecture to enhance natural language understanding. This model, detailed in their paper "Improving Language Understanding by Generative Pre-Training," served as a proof-of-concept and was not publicly released.

GPT-2

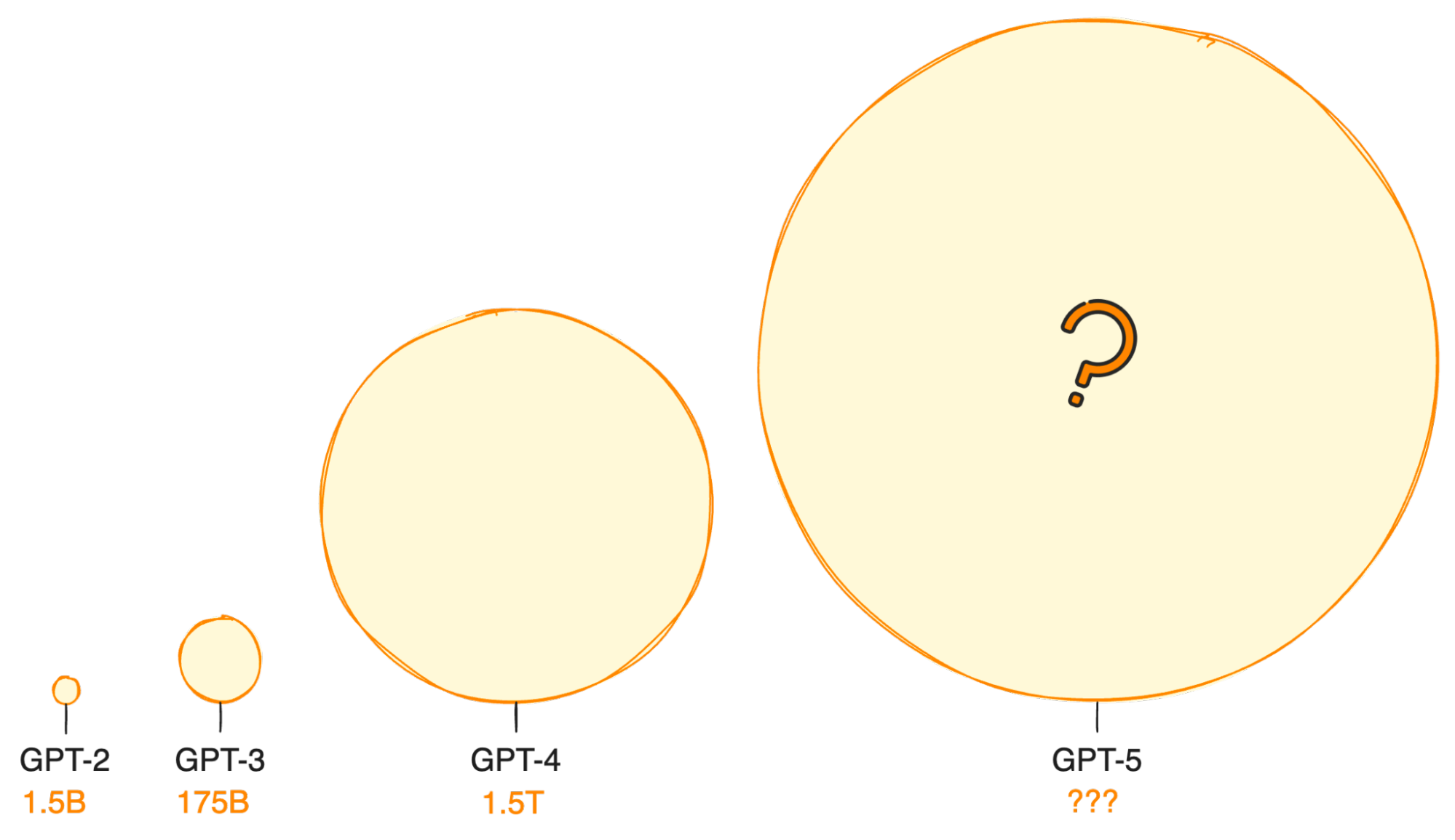

A year later, OpenAI released GPT-2, showcasing significant improvements in text generation. GPT-2 was capable of generating short passages of text, marking a notable advancement from its predecessor. It was publicly available, allowing for broader experimentation in the machine learning community.

GPT-3

With the release of GPT-3 in 2020, OpenAI scaled up its model significantly, boasting 100 times more parameters than GPT-2. This expansion enabled GPT-3 to produce much longer and more coherent text, performing impressively across various tasks. The introduction of ChatGPT, a conversation-focused iteration within the GPT-3.5 series, demonstrated the model's remarkable ability to generate human-like text, achieving rapid adoption and reaching 100 million users in just two months.
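To put the jump in scale into numbers, here is a small sketch that compares the parameter counts publicly reported for the largest model of each generation (roughly 117 million for GPT-1, 1.5 billion for GPT-2, and 175 billion for GPT-3). The dictionary and the `scale_factor` helper are illustrative names, not part of any OpenAI tooling.

```python
# Publicly reported parameter counts for the largest model in each generation.
PARAMS = {
    "GPT-1": 117_000_000,
    "GPT-2": 1_500_000_000,
    "GPT-3": 175_000_000_000,
}


def scale_factor(newer: str, older: str) -> float:
    """Return how many times more parameters `newer` has than `older`."""
    return PARAMS[newer] / PARAMS[older]


if __name__ == "__main__":
    print(f"GPT-2 vs GPT-1: {scale_factor('GPT-2', 'GPT-1'):.1f}x")
    print(f"GPT-3 vs GPT-2: {scale_factor('GPT-3', 'GPT-2'):.1f}x")
```

With these figures, the GPT-3 to GPT-2 ratio comes out slightly above 100, which matches the "100 times" description.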

GPT-4

GPT-4, released in March 2023, further refines the capabilities introduced by its predecessors. With an even larger dataset and more parameters, GPT-4 improves upon the natural language understanding and generation capabilities of GPT-3. It exhibits enhanced performance in generating coherent, contextually relevant text over extended passages and shows better understanding in complex conversation scenarios.

GPT-4's advancements include a more nuanced understanding of context, improved factuality, and a reduction in generating biased or harmful content. Its adoption spans various applications, from advanced conversational agents to sophisticated content creation tools, highlighting its versatility and the ongoing evolution of AI-driven natural language processing technologies.

In November 2023, OpenAI unveiled GPT-4 Turbo with Vision, which updated several features. Then in May 2024, GPT-4o was launched, a multimodal model that offers even faster speeds and lower costs. You can learn more about the evolution of the GPT family in our previous article regarding GPT-4.

GPT-5

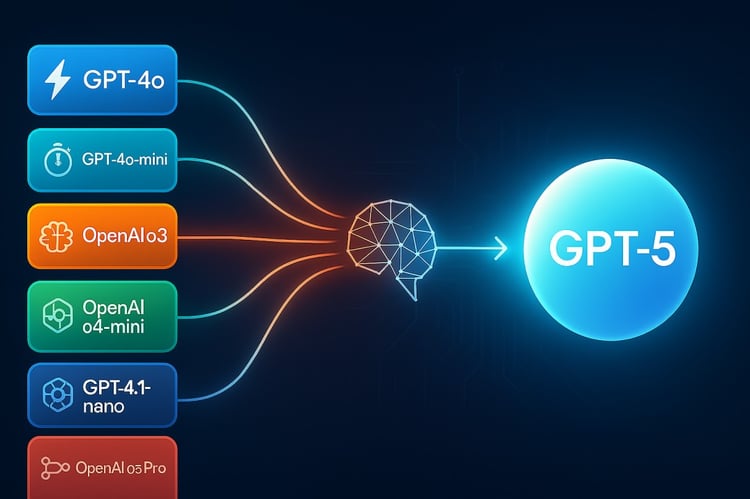

According to Altman’s February 12, 2025, X post, GPT-5 will be the next evolution of the Generative Pre-trained Transformer series. The roadmap indicates that it will not be a standalone model but a system integrating GPT-series and o-series models, such as o3.

We know that GPT-4 presented significant improvements over its predecessors, particularly in its capacity for logical reasoning. Though GPT-4o, released in May 2024, remains limited to knowledge before its training cutoff, it offers enhanced reasoning and multimodal capabilities. I expect GPT-5 to build on these advancements, incorporating o3’s reasoning and additional tools as outlined in the roadmap.

When Will GPT-5 Be Released?

In a January 2024 discussion with Bill Gates, Sam Altman confirmed that work on GPT-5 had begun, without giving any clue about when it would be released.

Altman’s February 12, 2025, X post specifies that GPT-5 will be released in "months" from that date, suggesting a summer 2025 launch. GPT-4’s development cycle, including training, development, and testing, exceeded two years, with its initial release in early 2023 following ChatGPT’s November 2022 debut. GPT-4o, launched in May 2024, marked a subsequent update.

Altman’s roadmap accelerates GPT-5’s release to mid-2025 and confirms GPT-4.5, codenamed Orion, will launch in "weeks" from February 12, 2025, likely March 2025, as a precursor.

What Features Can We Expect From GPT-5?

Before Altman’s roadmap, most predictions about GPT-5's advancements were based on trends shaped by Google and open-source AI initiatives. These developments still give us valuable insights into the future direction of the industry.

However, there are also some clues coming directly from the OpenAI core team. During his interview with Gates, Altman highlighted that OpenAI's efforts would concentrate on enhancing reasoning abilities and incorporating video processing capabilities.

So, let’s try to make a little sense of it all and discuss some key enhancements expected from GPT-5.

Parameter size

The exact parameter size of GPT-4 remains undisclosed, with estimates suggesting around 1.5 trillion. GPT-5, as a system rather than a standalone model, will integrate multiple architectures, including o3’s reasoning capabilities. I anticipate its capacity will reflect this combined approach rather than a simple parameter increase.

If this trajectory continues, GPT-5 could redefine the limits of current LLMs, with its capacity coming from how its components are combined rather than from size alone.

Multimodality

GPT-4o currently processes speech, images, and text. Altman’s roadmap confirms GPT-5 will include voice, canvas, and search features, with potential for video processing based on earlier hints from his January 2024 discussion with Bill Gates. This will enhance OpenAI’s multimodal capabilities, aligning with trends seen in competitors like Google’s Gemini.

From Chatbot to Agent

The transition from chatbots to fully autonomous agents is another exciting frontier. Imagine if you could assign menial tasks or jobs to a GPT-powered app. This could actually become a reality if OpenAI keeps integrating third-party services. We’ve already seen the introduction of Custom GPTs and Operator, and this will likely continue to develop.

This new feature would allow GPT-5 to connect to various services and perform actions in the world seamlessly, acting on behalf of users to accomplish tasks without direct human oversight. For instance, we could ask an autonomous agent to buy our groceries based on our own dietary preferences.
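The idea of an agent acting through connected services can be sketched as a small tool-dispatch loop. This is only an illustration under stated assumptions: `search_products`, `place_order`, `TOOLS`, and `run_plan` are hypothetical names, the tools return canned strings instead of calling real services, and the plan is hard-coded where a real agent would have the model generate it.

```python
from typing import Callable, Dict, List


# Hypothetical tools: in a real agent these would call third-party services.
def search_products(query: str) -> str:
    return f"found 3 products matching '{query}'"


def place_order(item: str) -> str:
    return f"order placed for {item}"


# Registry mapping tool names to the functions that implement them.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search_products": search_products,
    "place_order": place_order,
}


def run_plan(plan: List[dict]) -> List[str]:
    """Execute a list of {'tool': name, 'arg': value} steps in order.

    In a real agent the model would produce these steps; here the plan
    is hard-coded so the sketch stays self-contained.
    """
    results = []
    for step in plan:
        tool = TOOLS.get(step["tool"])
        if tool is None:
            # Refuse unknown actions instead of guessing.
            results.append(f"unknown tool: {step['tool']}")
            continue
        results.append(tool(step["arg"]))
    return results


# Illustrative plan for the grocery example, based on a dietary preference.
grocery_plan = [
    {"tool": "search_products", "arg": "gluten-free pasta"},
    {"tool": "place_order", "arg": "gluten-free pasta"},
]

if __name__ == "__main__":
    for line in run_plan(grocery_plan):
        print(line)
```

Keeping an explicit registry of allowed tools is one simple way to limit what an agent can do when it acts without direct oversight.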

Better accuracy

According to OpenAI, GPT-4 is 40% more likely to produce factual responses than its predecessor, GPT-3.5, on the company's internal evaluations. With o3’s chain-of-thought reasoning integrated into GPT-5, I predict further gains in reliability and contextual understanding, reducing errors across diverse applications.

Increased context windows

One of the limitations of current models is the size of the context window they can consider for generating responses. Given that GPT-5 might be trained with a larger amount of data, it is anticipated to have an expanded context window, allowing it to understand and reference larger portions of text, leading to more coherent and contextually relevant outputs.
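To make the context window limitation concrete, here is a minimal sketch of how an application might trim a conversation so it fits a fixed token budget. It assumes a rough rule of thumb of about four characters per token for English text rather than a real tokenizer, and `estimate_tokens` and `trim_to_window` are hypothetical helper names.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters per token for English text.

    This is a rule of thumb, not a real tokenizer.
    """
    return max(1, len(text) // 4)


def trim_to_window(messages: List[str], window_tokens: int) -> List[str]:
    """Keep the most recent messages whose estimated tokens fit the window."""
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > window_tokens:
            break
        kept.append(message)
        used += cost
    # Restore chronological order.
    return list(reversed(kept))
```

With a small window, older messages are dropped and the model loses access to them. A larger window lets more of the conversation through unchanged, which is why an expanded context window tends to produce more coherent long-form output.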

Cost-effective use of the OpenAI API

As newer models emerge, we can also anticipate a reduction in the cost of using the OpenAI API, making technologies like GPT-4o more accessible.
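Since API usage is billed per token, with separate prices for input and output, the effect of a price drop is easy to estimate. The sketch below uses placeholder prices chosen only for illustration; they are not actual OpenAI prices, and `estimate_cost_usd` is a hypothetical helper.

```python
def estimate_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the cost of a workload from token counts and per-million prices."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


if __name__ == "__main__":
    # Placeholder prices in USD per million tokens (assumptions, not real rates).
    older = estimate_cost_usd(1_000_000, 500_000, 10.0, 30.0)
    newer = estimate_cost_usd(1_000_000, 500_000, 5.0, 15.0)
    print(f"older model: ${older:.2f}, newer model: ${newer:.2f}")
```

Under these assumed prices, the same workload costs half as much on the newer model, which is the kind of shift that makes previously expensive use cases viable.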

This democratization of access could spur a wave of innovation, enabling a broader range of developers and organizations to integrate advanced AI into their applications.

Once it becomes cheaper and more accessible, the GPT models could become more proficient at performing complex tasks like coding or research. If you haven’t tried OpenAI’s API yet, I strongly recommend you follow DataCamp’s guide to the OpenAI API to get a taste of it.

Conclusion

Sam Altman’s February 12, 2025, roadmap provides specific details about GPT-5, moving beyond the speculation that shaped earlier discussions. It confirms GPT-4.5 will launch in weeks, followed by GPT-5 in months, targeting a summer 2025 release with a unified system approach. I see this as a significant step in OpenAI’s evolution, integrating advanced features and tiered access to meet diverse needs.

If you’re eager to get started exploring all that GPT models have to offer, start with our Introduction to ChatGPT course or, if you’re already familiar with the model, our webinar on Using ChatGPT’s Advanced Data Analysis.

Josep is a freelance Data Scientist specializing in European projects, with expertise in data storage, processing, advanced analytics, and impactful data storytelling.

As an educator, he teaches Big Data in the Master’s program at the University of Navarra and shares insights through articles on platforms like Medium, KDNuggets, and DataCamp. Josep also writes about Data and Tech in his newsletter Databites (databites.tech).

He holds a BS in Engineering Physics from the Polytechnic University of Catalonia and an MS in Intelligent Interactive Systems from Pompeu Fabra University.