Course

Labeling data is expensive, slow, domain-specific, and most teams don't have enough of it.

You always need labeled data to train a model, but labeling it correctly takes time and often a domain expert. For example, medical images need radiologists and legal documents need lawyers. Even simple tasks like sentiment analysis need someone to sit down and tag each example by hand. As a result, most ML teams end up with a tiny labeled dataset and a huge amount of unlabeled data they can't use.

Semi-supervised learning fixes this by training on both. It takes your small labeled dataset, combines it with your large unlabeled one, and lets the model learn patterns.

In this article, I'll break down how semi-supervised learning works, cover the most common techniques, and show you when it makes sense to use it.

But what exactly is Supervised Machine Learning? Read our blog post to learn how essential supervised learning algorithms work.

What Is Semi-Supervised Learning?

Semi-supervised learning is a machine learning approach that trains on a mix of labeled and unlabeled data.

It sits between supervised and unsupervised learning, as the name suggests. Supervised learning needs every sample labeled. Unsupervised learning works with no labels at all. Semi-supervised learning uses a small set of labeled examples alongside a larger collection of unlabeled ones.

The labeled data tells the model what to look for. The unlabeled data shows the model how the data is structured. When combined, they give the model more to work with than either type could alone.

How Semi-Supervised Learning Works

The process starts with a small labeled dataset - maybe a few hundred examples where you know the correct output.

Then you bring in a much larger unlabeled dataset. This could be thousands or even millions of samples with no labels attached. The model uses this unlabeled data to learn the underlying patterns and relationships between data points.

The labeled examples then guide that structure toward the right answers. The model already knows how the data is organized from the unlabeled samples. The labels tell it which regions of that structure map to which outputs.

Here's a quick example.

Say you're classifying emails as spam or not spam. You have 100 labeled emails and 10,000 unlabeled ones. The model first learns how emails group together based on word patterns and structure. Then it uses your 100 labeled examples to figure out which groups are spam and which aren't. The result is a model that performs better than if you'd trained on just those 100 labeled emails.

Semi-Supervised vs Supervised vs Unsupervised Learning

Let's go through each learning approach to see where semi-supervised learning fits relative to the other two.

Supervised learning

Supervised learning trains on fully labeled data. Every sample in the dataset has an input and a known output. The model learns the mapping between the two.

This works well when you have enough labeled examples. But "enough" can mean thousands or even millions of samples depending on the task. That's expensive to produce and sometimes just impossible.

Unsupervised learning

Unsupervised learning uses no labels at all. The model looks at raw data and finds structure on its own. Classic examples are clustering and dimensionality reduction.

The upside is you don't need any labeled data. The downside is the model has no idea what you actually care about. It finds patterns, sure, but those patterns might not match the problem you're trying to solve.

Semi-supervised learning

Semi-supervised learning combines the best of both. You give the model a small labeled dataset to define the task and a large unlabeled dataset to learn the data's structure.

The labeled data points the model in the right direction. The unlabeled data shows the model how different samples relate to each other. This combination often outperforms supervised learning on the same small labeled set, because the model detects patterns it would've missed without the unlabeled data.

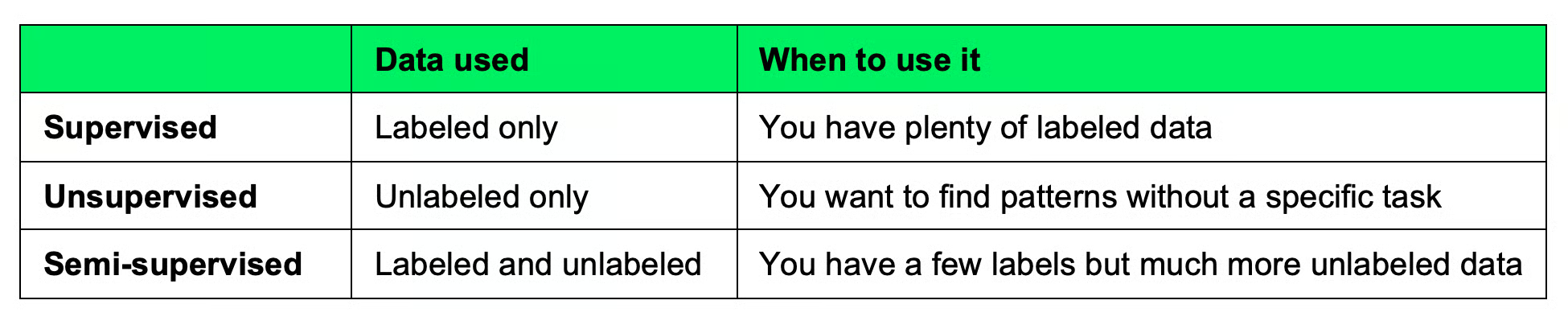

Here's a quick comparison between these three:

Machine learning approach comparison table

To recap, labeling it takes too much time and you have much unlabeled data, semi-supervised might be worth considering. Let me show you some common techniques next.

Common Semi-Supervised Learning Techniques

There are a couple of ways to implement semi-supervised learning. Each technique handles the labeled-unlabeled split differently, so let's go through the most common ones.

Self-training

This is the simplest approach. You train a model on your labeled data, then use it to predict labels for the unlabeled samples. The most confident predictions get added to the labeled set as pseudo-labels, and you retrain the model on the expanded dataset.

You repeat this process until the model stops improving or you run out of unlabeled data. It's easy to implement, but if the model makes a wrong prediction early on, that error is now a part of your dataset.

Co-training

Co-training uses two models instead of one. Each model is trained on a different "view" of the data. Think of this as a different subset of features.

For example, say you're classifying web pages. One model might look at the text on the page, while the other looks at the anchor text of links pointing to it. Each model labels unlabeled samples for the other, and they teach each other over multiple rounds.

The idea is that one model's strengths cover the other's weaknesses. If both views have enough information on their own, co-training can outperform self-training because errors from one model get corrected by the other.

Label propagation

This approach builds a graph where each data point is a node, and edges connect similar points. Labels then "spread" from labeled nodes to their neighbors.

The assumption is that similar data points should share the same label. If a labeled sample sits close to a cluster of unlabeled samples, those unlabeled samples likely belong to the same class. The labels propagate through the graph until every node has one.

This works well when your data has clear cluster structure, but can fail when the boundaries between classes are blurry.

Semi-supervised neural networks

Deep learning has its own set of semi-supervised techniques. Here are the couple of the most common ones:

- Consistency regularization: It pushes the model to produce the same output for an input even after small perturbations like noise or augmentation. The idea is that a good model shouldn't change its prediction just because you slightly altered the input

- Pseudo-labeling in neural networks: It works like self-training but at the batch level. The model generates labels for unlabeled samples during training and uses them alongside real labels in the loss function.

Methods like FixMatch and MixMatch combine these ideas, and they've shown good results on benchmarks with very few labeled examples.

Why Semi-Supervised Learning Matters

You should care about semi-supervised learning for one reason: cost.

Labeling data requires data, domain experts, time, and money. Semi-supervised learning reduces cost by getting more out of fewer labels.

Then, there’s performance. A model trained on 500 labeled samples and 50,000 unlabeled ones will often beat the same model trained on just those 500 labeled samples. The unlabeled data gives the model a more complete picture of how the data is distributed, which leads to better predictions.

And then there's the reality of most datasets. Unlabeled data is everywhere. Every company sits on logs, images, documents, and recordings that nobody has time to label. Semi-supervised learning lets you make something useful from that data.

Applications of Semi-Supervised Learning

Semi-supervised learning shows up in domains where labels are hard to get.

Image classification is one of the most common use cases. Labeling thousands of images is tedious, but collecting unlabeled images is easy. Semi-supervised methods let you train accurate classifiers with just a few hundreds of labeled images.

Text classification is the same. You might have millions of customer reviews or support tickets, but only a small batch with manual labels. Semi-supervised learning can help you build classifiers that generalize across the full dataset.

Speech recognition is another interesting area. Transcribing audio by hand takes a lot of effort, but raw audio data is abundant. The unlabeled recordings help the model learn acoustic patterns, while the transcribed samples teach it the correct mappings.

Medical data analysis is an excellent domain-specific task. Getting a doctor to label scans or patient records is expensive and slow, since they have more important things to do. But hospitals have huge amounts of unlabeled clinical data. Semi-supervised methods help build diagnostic models without needing a fully labeled dataset.

Advantages of Semi-Supervised Learning

Here's what makes semi-supervised learning a good fit for many projects:

- It needs less labeled data: You can get good results with a fraction of the labels that a fully supervised approach would require

- It improves generalization: The unlabeled data exposes the model to a wider range of examples, which helps it perform better on unseen data

- It's cost-effective: When labeling is expensive or time-consuming - which it usually is - semi-supervised learning saves time and budget by making the most of what you already have

So, if you have more data than labels, it’s time to consider semi-supervised learning.

Limitations of Semi-Supervised Learning

Semi-supervised learning has a couple of trade-offs you should know about.

- Unlabeled data quality matters: If your unlabeled data doesn't come from the same distribution as your labeled data, the model learns the wrong patterns

- Wrong pseudo-labels: In methods like self-training, a confident but incorrect prediction gets treated as ground truth. The model trains on that bad label, which reinforces the mistake and makes future predictions worse

- It's more complex than supervised learning: You have more hyperparameters to tune, more design choices to make, and more things that can go wrong. A supervised model is simpler to build and explain. Semi-supervised methods add complexity that isn't always worth it - especially if your labeled dataset is already large enough

None of these are dealbreakers, just something to know about before jumping in.

Semi-Supervised Learning in Practice (Python Example)

Let's see semi-supervised learning in action with a simple Python example using scikit-learn's LabelSpreading algorithm.

I'll create a dataset, label only a small portion of it, and let the model figure out the rest.

Setting up the data

First, let me generate a dataset and mask most of the labels to simulate a semi-supervised scenario:

import numpy as np

from sklearn.datasets import make_moons

from sklearn.semi_supervised import LabelSpreading

from sklearn.metrics import accuracy_score

# Generate a dataset with 500 samples

X, y_true = make_moons(n_samples=500, noise=0.2, random_state=42)

# Keep only 10%, mask the rest as -1

rng = np.random.RandomState(42)

labeled_idx = rng.choice(500, size=50, replace=False)

y_train = np.full(500, -1) # -1 means unlabeled in scikit-learn

y_train[labeled_idx] = y_true[labeled_idx]

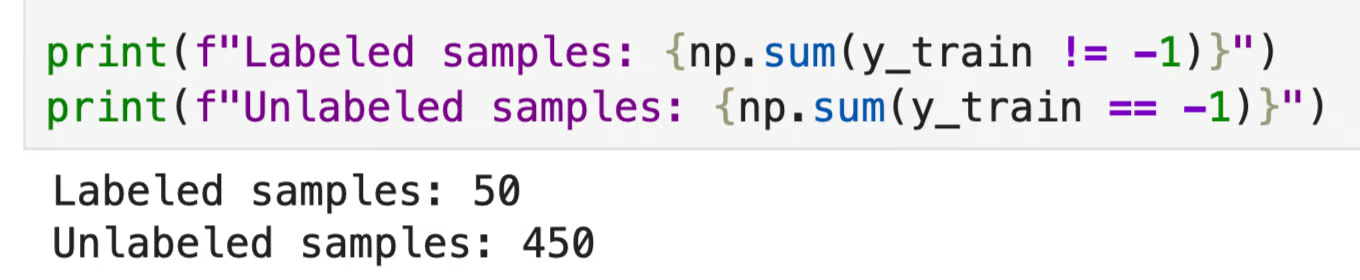

print(f"Labeled samples: {np.sum(y_train != -1)}")

print(f"Unlabeled samples: {np.sum(y_train == -1)}")

Number of labeled and unlabeled data samples

In scikit-learn, -1 marks a sample as unlabeled. Out of 500 samples, only 50 have labels. The model has to figure out the other 450.

Training and prediction

Now I’ll train a LabelSpreading model and see how well it labels the unlabeled data:

model = LabelSpreading(kernel="knn", n_neighbors=7)

model.fit(X, y_train)

y_pred = model.transduction_

# Check accuracy on the originally unlabeled samples

unlabeled_mask = y_train == -1

accuracy = accuracy_score(y_true[unlabeled_mask], y_pred[unlabeled_mask])

print(f"Accuracy on unlabeled samples: {accuracy:.2%}")

Accuracy on unlabeled samples

The model correctly labeled most of the unlabeled samples using just 10% of the data as labeled examples. That’s the core idea.

Visualizing the results

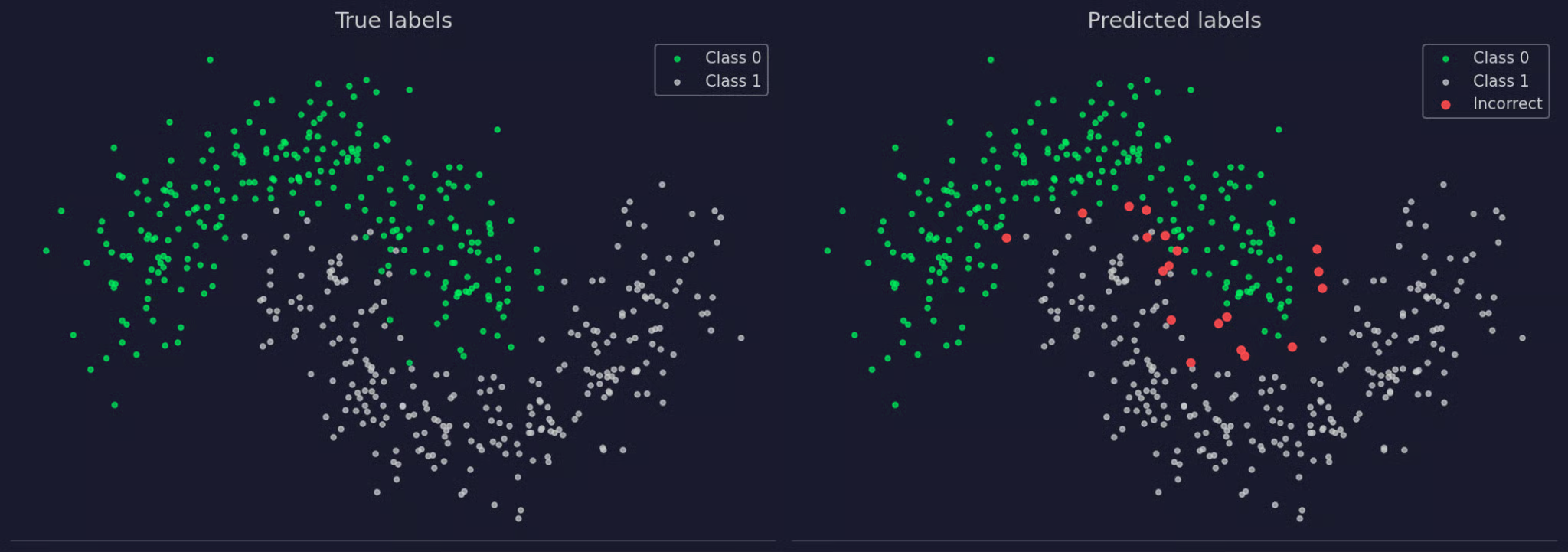

Accuracy is high - almost 96% - but let’s visually inspect which samples were misclassified:

True and predicted labels

The predicted labels near perfectly match the true labels, even though the model only saw 50 labeled examples out of 500. You can see the misclassified ones colored red in the right chart.

Common Mistakes With Semi-Supervised Learning

You’ve seen how powerful semi-supervised learning can be, but it’s not perfect for every scenario. Here are the most common mistakes and how to avoid them.

- Assuming unlabeled data always helps: It doesn't. If the unlabeled data comes from a different distribution than your labeled data, the model learns patterns that don't apply to your actual task. Always check that your labeled and unlabeled data come from the same source and represent the same problem

- Poor model initialization: Semi-supervised methods build on top of an initial supervised model. If that first model is weak - trained on too few samples or with bad hyperparameters - every step after it inherits those problems. Start with the best supervised baseline you can get before adding unlabeled data

- Over-reliance on pseudo-labels: Pseudo-labels are only as good as the model that generated them. If you trust every pseudo-label, errors can compound over training rounds. Set a high confidence threshold and only use pseudo-labels the model is sure about. Throw away the rest

- Ignoring data distribution: Semi-supervised methods assume that the structure of the unlabeled data tells you something useful about the labels. If your data doesn't have clear clusters or smooth decision boundaries, that assumption won’t hold

To get around these four mistakes, start with a strong supervised baseline, add unlabeled data gradually, and check that performance actually improves at each step.

When to Use Semi-Supervised Learning

Semi-supervised learning makes sense in these three scenarios:

- When labeled data is limited: If you only have a small labeled dataset and getting more labels is expensive or slow, semi-supervised learning helps you squeeze that extra bit of accuracy

- When unlabeled data is abundant: You need a large amount of unlabeled data for this to work. If you only have a small unlabeled set, the model won't learn enough structure to make a difference. The bigger the gap between your labeled and unlabeled set sizes, the more semi-supervised methods can help

- When your data has a clear structure: Semi-supervised learning works best when similar data points share the same label. If your data forms clusters or has smooth boundaries between classes, the unlabeled data will make those patterns that much more obvious. If the classes are mixed together with no clear separation, the unlabeled data won't add much.

If all three conditions apply, semi-supervised learning is worth trying.

Conclusion

In plain English, semi-supervised learning gives you a way to train better models without labeling everything. You take a small labeled dataset, combine it with a large unlabeled one, and let the model learn from both.

The benefits are clear: less time labeling, faster time to market, lower costs, and better performance than training on limited labels alone. And in fields like medicine, legal, and NLP - where labeling requires domain experts - that's a big deal.

If you have a huge amount of unlabeled data, try semi-supervised learning. Start with a good supervised baseline, pick a technique that fits your data's structure, and measure whether the unlabeled data actually helps. scikit-learn's LabelSpreading is a good first experiment, but experiment with methods like FixMatch and MixMatch.

If you want to become job-ready in 2026, take our Machine Learning Engineer track. It covers everything from fundamentals to MLOps.

FAQs

What is semi-supervised learning?

Semi-supervised learning is a machine learning approach that trains models on a small set of labeled data and a large set of unlabeled data. The labeled data tells the model what to look for, while the unlabeled data helps it understand the overall structure of the data. This way, you get better results than training on just the labeled data alone.

How is semi-supervised learning different from supervised and unsupervised learning?

Supervised learning uses only labeled data, where every sample has a known output. Unsupervised learning uses only unlabeled data and finds patterns on its own. Semi-supervised learning uses a small labeled dataset to define the task and a large unlabeled dataset to learn the data's structure.

When should I use semi-supervised learning?

Use it when you have a small amount of labeled data, a large amount of unlabeled data, and your data has a clear structure, like natural clusters or smooth boundaries between classes. If labeling is expensive or requires domain experts - like in medical imaging or legal text analysis - semi-supervised learning helps you build accurate models without labeling everything.

What is self-training in semi-supervised learning?

Self-training is a technique where the model trains on labeled data first, then predicts labels for the unlabeled samples. The most confident predictions get added to the labeled set as pseudo-labels, and the model retrains on the expanded dataset. The risk is that wrong predictions early on get reinforced in later rounds, so you should set a high confidence threshold.