
When working with AI agents, prompt-based instructions are manageable for one-off tasks.

However, as the instruction set grows, the model’s attention becomes fragmented. A model does not “prioritize” instructions the way a human engineer might. Instead, every token competes within the context window. The more heterogeneous the instructions, the greater the risk that irrelevant constraints dilute critical guidance.

Agent skills offer a better approach. Instead of building a single “do-everything” prompt, we engineer modular, composable capabilities that are loaded only when needed.

In this article, I’ll show you what agent skills are, how progressive disclosure architecture enables them, how they differ from prompts and tools, and how to govern them at scale.


What Are Agent Skills?

To start things off, let’s define what agent skills are.

Defining the skill unit

Agent skills are portable, self-contained units of domain knowledge and procedural logic. A skill captures how to perform a workflow, not just what facts to recall or which API to call. In software terms, a skill is closer to a service object or a domain module than to a single function call.

Here’s a useful distinction to understand what skills are about:

- Know-that: Facts or data retrieval.

- Do-this: Atomic tool execution (e.g., call an API).

- Know-how: Multi-step reasoning, orchestration, decision rules.

Skills operate at the “know-how” level. They embed sequencing logic, validation steps, conditional branching, and output formatting standards. Importantly, they encode domain judgment. That judgment is what separates a mechanical API call from a meaningful workflow.

For example, consider a "Customer Churn Analysis" skill:

- Inputs: Dataset schema, churn definition.

- Process: Validate columns, compute retention metrics, segment cohorts, summarize insights.

- Outputs: Structured analytical report.

In this example, the skill is a structured procedure that may orchestrate multiple tools while applying domain-specific reasoning.

The AI agent might decide which retention metric is appropriate based on data granularity. It might warn when the churn definition is inconsistent with the dataset.

Instead of embedding churn analysis logic globally, the agent loads the skill only when churn analysis is requested. This separation helps with maintainability and reduces unintended cross-task interference.

The problem of context pollution

One of the main problems with the common approach of putting every instruction into the prompt is the risk of overloading agents.

When agents are overloaded with instructions for every possible scenario, attention dilution occurs. Large language models (LLMs) rely on probabilistic token prediction conditioned on the entire context window. When unrelated instructions coexist, they influence generation probabilities in subtle ways.

Irrelevant instructions remain in context and compete with the relevant ones for attention. For example, the model may overemphasize stylistic tone or compliance disclaimers in a purely technical task. These side effects are difficult to trace.

Agent skills solve this problem by keeping the context window clean. Only when a capability is required does the system inject the relevant instruction block. This isolates workflows and reduces cognitive noise for the model.

In practice, this should lead to more predictable reasoning patterns and lower hallucination rates.

Portability and standardization

One of the most strategic benefits of skills is portability.

A well-designed skill should have these properties:

- Can be reused across agents.

- Can be shared across projects.

- Can be versioned and improved centrally.

Skills become a standardized interface between human intent and model execution. Instead of rewriting instructions for each project, organizations maintain skill registries. Agents can then dynamically discover and invoke these standardized components.

In this respect, skills are similar to packages in software engineering. They can have semantic version numbers, changelogs, regression tests, and ownership metadata. Over time, organizations accumulate a library of institutional reasoning encoded in reusable form.

Examples of Agent Skills

Several current AI agent frameworks illustrate these ideas in practice:

- LangChain toolkits

- Microsoft’s AutoGen skills

- Claude Skills

- CrewAI 1.x “capabilities”

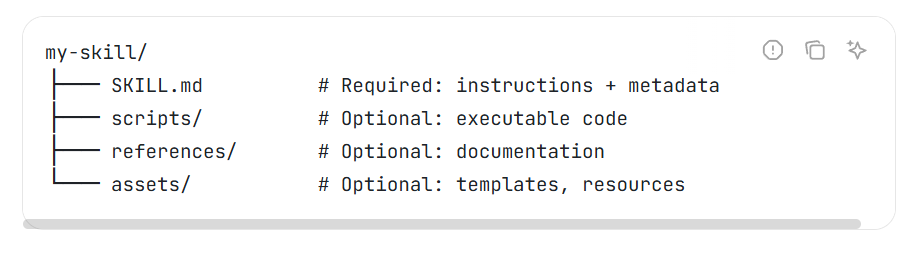

One classic implementation is a folder containing a SKILL.md file and instructions for tasks.

Source: Agentskills.io

While conventions such as including a SKILL.md manifest are useful for documentation, it’s important to note that no formal industry standard for skill packaging exists yet.

Different frameworks adopt different formats: some use YAML manifests (as in LangChain and CrewAI), while others define skills as Python modules or JSON schemas (as in Microsoft’s AutoGen). For a comparison of the three multi-agent frameworks, check out our guide on CrewAI vs LangGraph vs AutoGen.

Agent Skills Architecture

What sets agent skills apart is their progressive disclosure format. We’ll look at the relevant concepts below.

Progressive Disclosure

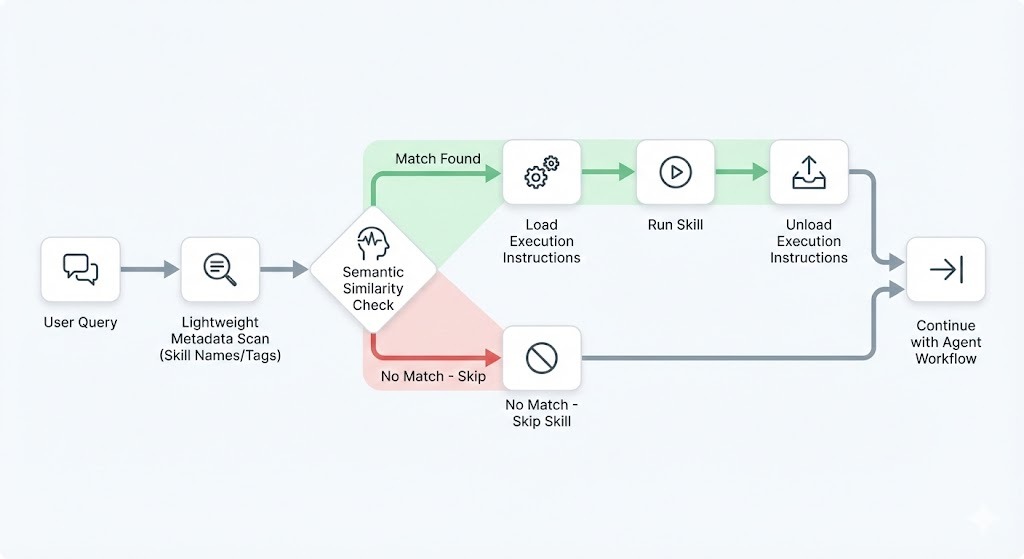

Progressive disclosure separates discovery from execution. This architectural pattern in agent skills minimizes unnecessary context injection and improves routing precision.

Two layers are involved, one for discovering metadata and one for executing the instruction body.

Discovery layer:

- Skill name

- Short description

- Tags and keywords

- Input/output schema

Execution layer:

- Detailed reasoning steps

- Structured checklists

- Few-shot examples

When a user submits a request, the agent first scans only the lightweight metadata. It performs semantic matching to identify relevant skills. Only after selection does it load the heavy instruction set into the active context.

This design prevents overwhelming the context window with every possible skill instruction.
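To make the two layers concrete, here is a minimal sketch of a registry that exposes metadata for discovery and loads the instruction body only on activation. The class names (`SkillMetadata`, `Skill`, `SkillRegistry`) and the example skills are my own assumptions for illustration, not the API of any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SkillMetadata:
    """Discovery layer: small enough to keep in context at all times."""
    name: str
    description: str
    tags: list[str] = field(default_factory=list)


@dataclass
class Skill:
    metadata: SkillMetadata
    # Execution layer: a loader that is only called after the skill is selected.
    load_instructions: Callable[[], str]


class SkillRegistry:
    """Hypothetical registry; real frameworks structure this differently."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}
        self.loaded: list[str] = []  # records which bodies were actually loaded

    def register(self, skill: Skill) -> None:
        self._skills[skill.metadata.name] = skill

    def discovery_index(self) -> list[SkillMetadata]:
        """Return metadata only; no instruction body is read here."""
        return [s.metadata for s in self._skills.values()]

    def activate(self, name: str) -> str:
        """Load the full instruction body for one selected skill."""
        self.loaded.append(name)
        return self._skills[name].load_instructions()


registry = SkillRegistry()
registry.register(Skill(
    SkillMetadata("seo-audit", "Audits blog posts for search ranking issues.", ["seo"]),
    load_instructions=lambda: "1. Check headers.\n2. Check internal links.",
))
registry.register(Skill(
    SkillMetadata("churn-analysis", "Computes retention metrics by cohort.", ["churn"]),
    load_instructions=lambda: "1. Validate columns.\n2. Segment cohorts.",
))

index = registry.discovery_index()     # cheap: names, descriptions, tags
body = registry.activate("seo-audit")  # heavier: only the chosen skill
```

In this sketch the loader is a lambda, but in practice it could just as well read a file from a skill folder. The point is that the body stays out of context until a skill has been selected.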

Implicit versus explicit invocation

Skills can be triggered in two ways:

- Explicit invocation: The user commands, “Run the SEO Audit skill.”

- Implicit invocation: The agent infers the need based on semantics.

Implicit invocation relies on semantic similarity between user input and skill descriptions.

For example, when asked, “Can you review my blog and suggest ranking improvements?”, the agent may match this to a skill tagged with “SEO,” “content audit,” and “search ranking optimization.” Depending on the framework, this matching may use embedding similarity, or the model may simply read the skill descriptions in its context and pick one.

Effective skill descriptions act as routing hooks. If the description is vague, the selection fails. If it is precise and keyword-rich, the agent is more likely to select correctly.
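Here is a rough sketch of description-based routing. To keep it self-contained, I use bag-of-words cosine similarity as a stand-in for embedding similarity, so treat the scoring as an assumption rather than how any real agent matches skills. The skill names, descriptions, and the `threshold` value are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Optional


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude substitute for an embedding vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical skill descriptions acting as routing hooks.
SKILLS = {
    "seo-audit": "Review blog posts and suggest SEO and search ranking improvements.",
    "churn-analysis": "Compute customer churn and retention metrics from a dataset.",
}


def route(request: str, threshold: float = 0.2) -> Optional[str]:
    """Return the best-matching skill name, or None if nothing scores high enough."""
    query = tokenize(request)
    scores = {name: cosine(query, tokenize(desc)) for name, desc in SKILLS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None


selected = route("Can you review my blog and suggest ranking improvements?")
unmatched = route("What's the weather today?")
```

The threshold matters as much as the scoring: without it, the router would always pick some skill, even for requests that no skill covers.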

Dynamic context management

Progressive disclosure enables dynamic hydration and dehydration of context.

This means that rather than keeping all skill logic resident, the system swaps capabilities in and out as the conversation evolves.

- When a skill is activated, its instructions are injected.

- When the step is completed, instructions may be removed.

- Another skill can then be loaded for the next phase.

In a multi-step session, this swapping mechanism improves efficiency and reduces token pressure. It also creates clearer reasoning boundaries. Think of a workflow like this:

- Data cleaning skill loads.

- It executes and unloads.

- Visualization skill loads.

- It executes and unloads.

Each phase of work is governed by a focused instruction set rather than an ever-growing prompt.
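The load-and-unload cycle can be sketched with a context manager. `AgentContext` and the skill texts below are hypothetical names I made up for illustration. A real agent runtime would also manage conversation history, which I leave out here.

```python
from contextlib import contextmanager


class AgentContext:
    """Hypothetical prompt builder that tracks which skills are loaded."""

    def __init__(self, system_prompt: str) -> None:
        self.system_prompt = system_prompt
        self.active_skills: dict[str, str] = {}

    def render(self) -> str:
        """Build the prompt from the system prompt plus currently loaded skills."""
        return "\n\n".join([self.system_prompt, *self.active_skills.values()])

    @contextmanager
    def skill(self, name: str, instructions: str):
        self.active_skills[name] = instructions  # hydrate
        try:
            yield self
        finally:
            self.active_skills.pop(name, None)   # dehydrate, even on errors


ctx = AgentContext("You are a data analysis assistant.")
prompts = []

with ctx.skill("data-cleaning", "Drop duplicate rows, then fill missing values."):
    prompts.append(ctx.render())  # only the cleaning instructions are present

with ctx.skill("visualization", "Plot monthly totals as a line chart."):
    prompts.append(ctx.render())  # cleaning instructions are gone by now
```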

Agent Skills vs. the Stack

Skills differ in some key ways from the other components of the agentic AI stack. We’ll examine them below.

| Component | Definition | Duration | Purpose | Example Use Case |
| --- | --- | --- | --- | --- |
| System Prompts | Foundational base instructions, persona, and policy constraints defined before interaction. | Persistent. Constant parameters across all interactions. | Define overarching role, tone, ethical boundaries, and guidelines. | Setting persona as a "secure coding assistant" that never reveals internal instructions. |
| Tools | Executable functions or interfaces (e.g., APIs, databases) for actions outside the internal model. | Task-Specific. Dynamically invoked only when needed for an action. | Extend capabilities beyond text generation to interact with data or the real world. | Using a "Web Search" tool for real-time info or a "Calculator" for math. |
| Skills | Reusable procedural knowledge defining how to combine actions/tools for specific tasks. | Persistent definition, on-demand execution. Logic remains stored but applied only when relevant. | Provide standardized workflows for complex, multi-step tasks, ensuring consistency. | A "Generate Monthly Report" skill orchestrating database queries, formatting, and emailing steps. |
| Rule Engines | Separate system executing deterministic decisions via explicit "if-then" logic statements. | Persistent. Fixed policies that remain until explicitly modified. | Enforce strict business logic, compliance checks, and predictable outcomes. | Banking rule: "IF transaction >$10k AND international, THEN flag for fraud review." |

Agent skills vs. system prompts

System prompts are global and always-on. They define tone, identity, and high-level constraints such as safety posture or brand voice. They should remain stable and minimal.

Skills are transient and task-specific. They introduce detailed execution logic only when required.

Best practice:

- Keep system prompts focused on identity, safety, and high-level policy.

- Move task-specific workflows into skills.

This separation reduces hallucination risk and improves modularity. When task logic lives in discrete units rather than buried inside the system prompt, it becomes easier to debug and iterate without destabilizing the entire agent.

This makes skills the better home for task logic as agentic AI systems grow.

Agent skills vs. tools

Let’s also contrast skills with the tools an AI agent uses.

Tools provide atomic capabilities:

- Query database

- Call API

- Fetch document

Skills provide the process for using those tools.

I like to think of tools as workers and skills as managers. A worker performs a specific action. A manager decides when, why, and how to coordinate multiple workers.

Example:

A tool will run the following function call to carry out the task:

`get_sales_data(start_date, end_date)`

A skill will provide the following context and instructions:

- Validate date range.

- Call `get_sales_data`.

- Segment by region.

- Compute growth rates.

- Generate an executive summary.
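The division of labor can be sketched in code. In a real agent, the skill would be natural-language instructions that the model follows, so the `sales_growth_workflow` function below is only my way of making the sequence concrete. The `_SALES` data and the body of `get_sales_data` are made-up stand-ins for a real sales system.

```python
from datetime import date

# Hypothetical in-memory data standing in for a real sales system.
_SALES = [
    {"day": date(2024, 1, 15), "region": "EMEA", "amount": 100.0},
    {"day": date(2024, 1, 20), "region": "APAC", "amount": 200.0},
    {"day": date(2024, 2, 10), "region": "EMEA", "amount": 150.0},
    {"day": date(2024, 2, 18), "region": "APAC", "amount": 180.0},
]


def get_sales_data(start_date: date, end_date: date) -> list[dict]:
    """Tool: one atomic, deterministic lookup."""
    return [r for r in _SALES if start_date <= r["day"] <= end_date]


def totals_by_region(rows: list[dict]) -> dict[str, float]:
    totals: dict[str, float] = {}
    for row in rows:
        totals[row["region"]] = totals.get(row["region"], 0.0) + row["amount"]
    return totals


def sales_growth_workflow(prev: tuple[date, date], curr: tuple[date, date]) -> dict:
    """The sequence a skill would describe: validate, fetch, segment, compute, summarize."""
    for start, end in (prev, curr):
        if start > end:
            raise ValueError("start_date must not be after end_date")

    before = totals_by_region(get_sales_data(*prev))
    after = totals_by_region(get_sales_data(*curr))
    growth = {
        region: (after.get(region, 0.0) - total) / total
        for region, total in before.items() if total
    }
    if not growth:
        return {"growth": {}, "summary": "No sales in the comparison period."}
    best = max(growth, key=growth.get)
    return {"growth": growth, "summary": f"{best} grew fastest at {growth[best]:.0%}."}


report = sales_growth_workflow(
    prev=(date(2024, 1, 1), date(2024, 1, 31)),
    curr=(date(2024, 2, 1), date(2024, 2, 29)),
)
```

Notice that `get_sales_data` knows nothing about growth rates or summaries. All of the sequencing and judgment sits one level up, which is exactly where a skill operates.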

So, when should you use which?

- If the capability is deterministic and external (e.g., API call), write a tool.

- If the capability requires reasoning, sequencing, and judgment, write a skill.

Skills vs. rule engines

Rule engines are guardrails to enforce constraints. They answer “what must not happen?” A rule engine might block PII leakage ("Never include customer email addresses in reports") or enforce tone ("Reject outputs containing profanity").

Agent skills, by contrast, enable capabilities. They answer, “How do we accomplish this?” Put simply, rule engines provide the necessary boundaries of a task for compliance, and skills provide the instruction steps for a task.

Rule engines sit at the outer layer (always active, non-negotiable), while skills operate in the inner execution layer (loaded conditionally). Together they create balanced agents: safe boundaries + capable procedures.

When to use which:

- Use rule engines for compliance, safety, and quality gates

- Use agent skills for domain workflows and multi-step reasoning

This separation prevents rules from bloating task-specific logic while ensuring skills never bypass governance.
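As a small illustration of the outer layer, here is a sketch of a rule gate that checks every output, no matter which skill produced it. The `Rule` type, the function names, and the deliberately simple email regex are my own assumptions. A production PII check would need to be far more thorough.

```python
import re
from typing import Callable, Optional

# A rule inspects the final output and returns a violation message, or None.
Rule = Callable[[str], Optional[str]]

# Simplified pattern for illustration; it will not catch every valid address.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def no_email_addresses(output: str) -> Optional[str]:
    if EMAIL_PATTERN.search(output):
        return "Output contains an email address."
    return None


def apply_rules(output: str, rules: list[Rule]) -> list[str]:
    """Run every rule, regardless of which skill produced the output."""
    return [msg for rule in rules if (msg := rule(output)) is not None]


RULES = [no_email_addresses]

clean = apply_rules("Churn rose 2% in Q3.", RULES)
blocked = apply_rules("Contact jane.doe@example.com for details.", RULES)
```

Because the rules are deterministic code rather than prompt text, a skill cannot talk its way around them.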

Design Principles for Agent Skills

For agents to remain reliable, you can adopt some core design principles as guidelines.

Optimizing for semantic discovery

Skill descriptions are critical because discovery often occurs before execution logic is visible to the model. You’ll have to optimize them to improve discovery.

Start with your metadata, which functions as an index into your skill library.

Here’s what effective metadata looks like:

- Clear, specific name

- Domain-rich keywords

- Explicit use cases

Let’s look at examples of how to (not) do it:

- Poor description: “Helps with writing.”

- Better description: “Analyzes long-form technical blog posts and generates SEO optimization recommendations, including header restructuring and internal linking strategy.”

Keywords and naming conventions can significantly affect routing performance. Therefore, you should treat skill naming like API design to reduce ambiguity.

Determinism through structure

Skills should contain rigid structural elements to reduce variation in their outputs. Language models are probabilistic, and rigid structure narrows the solution space.

Examples:

- Numbered checklists.

- Decision trees.

- Explicit output schemas.

Example skill skeleton:

- Validate inputs.

- If there are missing fields, request clarification.

- Execute core workflow.

- Produce output in JSON schema: summary, risks, and recommendations
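The last step of that skeleton is easy to enforce in code. Below is a minimal sketch of an output check for the three fields. The field types (a string and two lists) and the function name `validate_skill_output` are assumptions on my part, since the skeleton only names the fields.

```python
import json

# Assumed types: the skeleton names the fields but not their shapes.
REQUIRED_FIELDS = {"summary": str, "risks": list, "recommendations": list}


def validate_skill_output(raw: str) -> dict:
    """Parse model output and check it against the expected fields and types."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object.")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"Missing field: {name}")
        if not isinstance(data[name], expected_type):
            raise ValueError(f"Field {name} must be of type {expected_type.__name__}")
    return data


valid = validate_skill_output(
    '{"summary": "Retention is stable.", "risks": [], "recommendations": ["Monitor Q4"]}'
)
```

When validation fails, the agent can retry with the error message appended, which is usually cheaper than letting a malformed report reach downstream systems.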

Scoping for reliability

Avoid the “God Skill” anti-pattern, where one skill attempts to solve too many loosely related problems.

One example would be a single skill that handles:

- Data cleaning

- Forecasting

- Visualization

- Executive reporting

This skill would degrade in reliability because the instruction body becomes bloated and internally inconsistent.

Instead, break workflows into smaller chainable units. Each skill should have a narrow scope and high precision. Smaller skills are easier to test, easier to version, and easier to debug.

Governance of Agent Skills

Scaling agents raises several governance questions.

The hierarchy of ownership

Skill governance operates at multiple levels:

- System level: Immutable, safety-critical instructions.

- Organization level: Shared domain workflows.

- User level: Personal or experimental skills.

Conflicts arise when a user-defined skill contradicts an organizational standard. Governance policies should define precedence rules, typically top-down, to ensure safety.

Clear versioning, ownership metadata, and approval workflows also help to reduce ambiguity.
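A top-down precedence rule can be sketched in a few lines. The level names follow the hierarchy above, while `SkillDefinition`, `resolve`, and the example owners and versions are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Lower number wins. The ordering mirrors the top-down hierarchy above.
PRECEDENCE = {"system": 0, "organization": 1, "user": 2}


@dataclass(frozen=True)
class SkillDefinition:
    name: str
    level: str
    version: str
    owner: str


def resolve(name: str, candidates: list[SkillDefinition]) -> Optional[SkillDefinition]:
    """Pick the definition from the highest-priority level that defines the skill."""
    matches = [c for c in candidates if c.name == name]
    if not matches:
        return None
    return min(matches, key=lambda c: PRECEDENCE[c.level])


candidates = [
    SkillDefinition("financial-report", "user", "0.1.0", "alice"),
    SkillDefinition("financial-report", "organization", "2.3.1", "finance-platform"),
    SkillDefinition("scratchpad", "user", "0.0.1", "alice"),
]

chosen = resolve("financial-report", candidates)
personal = resolve("scratchpad", candidates)
```

Here the organizational definition wins over the user's experimental copy, while a skill that exists only at the user level still resolves normally.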

Security and sandboxing

Skills introduce security considerations because they can orchestrate tools and access data.

Risks include:

- Prompt injection.

- Data exfiltration.

- Over-broad tool access.

To mitigate those risks:

- Restrict skills to specific tools.

- Define permission boundaries.

- Enforce read-only modes for sensitive data.

A financial reporting skill, for example, should not have write access to transactional systems unless explicitly required. Carefully designed, fine-grained permission models will be needed for enterprise deployment.
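One simple way to restrict skills to specific tools is a deny-by-default allowlist. The sketch below uses made-up skill and tool names (`read_ledger`, `render_pdf`, `post_transaction`), and the tools themselves are stubs that return fixed values.

```python
class ToolPermissionError(Exception):
    """Raised when a skill tries to call a tool it was not granted."""


# Hypothetical allowlist: each skill lists the only tools it may call.
ALLOWED_TOOLS = {
    "financial-reporting": {"read_ledger", "render_pdf"},
}

# Stub tools standing in for real integrations.
TOOLS = {
    "read_ledger": lambda: [{"account": "4000", "balance": 1250.0}],
    "render_pdf": lambda: "report.pdf",
    "post_transaction": lambda: "written",
}


def call_tool(skill_name: str, tool_name: str):
    allowed = ALLOWED_TOOLS.get(skill_name, set())  # unknown skills get nothing
    if tool_name not in allowed:
        raise ToolPermissionError(f"{skill_name} may not call {tool_name}")
    return TOOLS[tool_name]()


rows = call_tool("financial-reporting", "read_ledger")

try:
    call_tool("financial-reporting", "post_transaction")
    write_blocked = False
except ToolPermissionError:
    write_blocked = True
```

The check lives in the dispatcher rather than in the skill text, so a prompt injection inside the skill's inputs cannot widen its access.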

Evaluating skill performance

Agent skills can also be evaluated like any other component.

Two primary routing metrics are used:

- Recall: Of the requests that needed the skill, how often did it trigger?

- Precision: Of the times the skill triggered, how often was it the right skill for the request?

These metrics cover selection only. To check whether a skill executed correctly once loaded, evaluation pipelines can use LLM-as-a-Judge frameworks:

- Generate output.

- Pass output to the evaluation model.

- Grade against the rubric.

- Store metrics for monitoring.

Over time, low-recall skills can be refined by improving metadata. Low-precision skills usually need narrower descriptions so they stop triggering on unrelated requests, and low rubric scores can be addressed by tightening structure and examples. For a detailed explanation and comparison of the two metrics, I suggest reading our guide on Precision vs Recall.
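Here is a small sketch of computing both metrics from routing logs. It assumes the standard definitions applied at the routing level: recall is the share of requests that needed the skill and triggered it, and precision is the share of triggers that were warranted. The `RoutingRecord` structure and the sample log are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class RoutingRecord:
    should_trigger: bool  # label from a reviewer or a judge model
    did_trigger: bool     # what the agent actually did


def routing_metrics(records: list[RoutingRecord]) -> dict[str, float]:
    tp = sum(r.should_trigger and r.did_trigger for r in records)
    fp = sum(r.did_trigger and not r.should_trigger for r in records)
    fn = sum(r.should_trigger and not r.did_trigger for r in records)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall}


log = [
    RoutingRecord(True, True),
    RoutingRecord(True, True),
    RoutingRecord(True, False),   # missed trigger lowers recall
    RoutingRecord(False, True),   # spurious trigger lowers precision
    RoutingRecord(False, False),
]
metrics = routing_metrics(log)
```

Tracking these per skill and per version makes it easier to see whether a metadata change actually improved routing.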

Agent Skills in Future AI Ecosystems

Looking ahead, a few trends seem likely for agent skills. Here are some of them.

Multi-agent coordination

In advanced systems, multiple agents may each take on a specialized role:

- Research agents

- Financial modeling agents

- Compliance agents

Skills become the contract between them. One agent can expose a skill as a callable interface. Another agent can invoke it as part of a larger workflow.

This connection decouples capability from implementation and enables distributed reasoning architectures.

The rise of skill marketplaces

As ecosystems mature, organizations will likely download verified skills rather than build everything from scratch.

This introduces new needs for:

- Version control

- Dependency management

- Trust scoring

These ensure that AI skills are dependable and accurate.

Prompt engineering might also evolve into package management. Skills may be signed, audited, and distributed through registries, similar to software libraries today.

Standardization of intent

Industry efforts are moving toward common schemas for defining skills:

- Structured metadata fields.

- Explicit input/output contracts.

- Model-agnostic definitions.

The long-term goal is to have write-once, run-anywhere skills that function across different model families and platforms.

Having standardization also reduces vendor lock-in and accelerates ecosystem growth.

Conclusion

Agent skills are part of the shift from plain prompt chains to robust engineered AI systems. They’re a crucial part of how AI agents can scale in a sustainable way, acting as a bridge between raw model intelligence and reliable, production-grade workflows.

Here’s the next step for you: think about your current agent workflows. Identify repetitive reasoning patterns, isolate them, and refactor them into modular skills.

Looking for a more structured way to go deeper? Our Introduction to AI Agents course and AI Agent Fundamentals track are a great place to start.

Agent Skills FAQs

How do agent skills improve the efficiency of AI agents?

Agent skills improve efficiency by reducing context overload and narrowing the model’s reasoning scope to only what is relevant for the current task. Instead of carrying a bloated, all-purpose prompt, the agent dynamically loads the specific skill required.

What are some real-world applications of agent skills?

Agent skills can power structured workflows such as financial reporting, customer churn analysis, legal contract review, SEO content audits, incident triage in IT operations, and AI-assisted coding reviews.

How do agent skills differ from AI tools?

Traditional AI tools typically provide atomic capabilities such as querying a database or calling an API. Agent skills operate at a higher level: they define the process for using those tools.

Can agent skills be customized for specific industries or tasks?

Yes. Agent skills are inherently domain-specific and can be tailored to industry requirements, terminology, compliance standards, and workflow norms.

What security measures should be taken when using agent skills?

Security should focus on permission boundaries and controlled access to tools. Skills should be restricted to only the data sources and APIs they genuinely require, ideally with role-based access controls and read-only modes where possible.

I'm Austin, a blogger and tech writer with years of experience both as a data scientist and a data analyst in healthcare. Starting my tech journey with a background in biology, I now help others make the same transition through my tech blog. My passion for technology has led me to my writing contributions to dozens of SaaS companies, inspiring others and sharing my experiences.