Have you ever wondered how a computer actually calculates a function like sin(x) or eˣ?

Computers can't directly evaluate most mathematical functions. They can only add, subtract, multiply, and divide. So when you call math.sin(0.5) in Python, something has to convert that into a sequence of basic arithmetic. That something is polynomial approximation, and Taylor series are the mathematical foundation behind it.

A Taylor series lets you rewrite almost any smooth function as an infinite sum of simpler terms, each built from the function's derivatives at a single point. Once you understand this idea, a lot of things in data science and machine learning start to click - from how gradient descent works to why certain activation functions behave the way they do.

In this article, I'll walk you through what Taylor series are, how they work mathematically, where they show up in data science and machine learning, and how they relate to other types of series you'll see.

Defining Taylor Series

Taylor series have been around for centuries. Brook Taylor introduced them in 1715, though James Gregory and Colin Maclaurin made significant contributions to the idea as well.

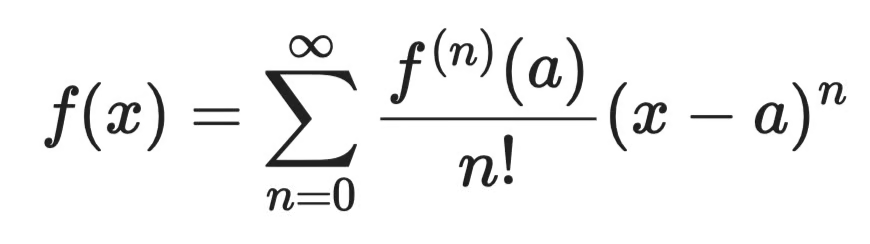

The goal was to find a way to represent complex functions using polynomials, which are much easier to work with.

A Taylor series approximates a function by expressing it as an infinite sum of terms, each derived from the function's derivatives at a single point. The more terms you include, the closer the approximation gets to the actual function.

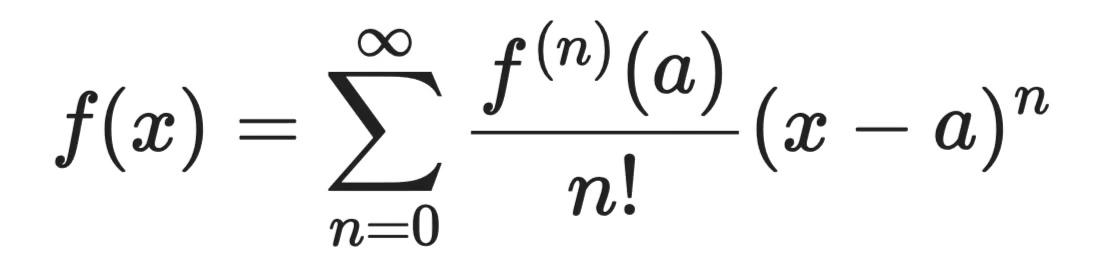

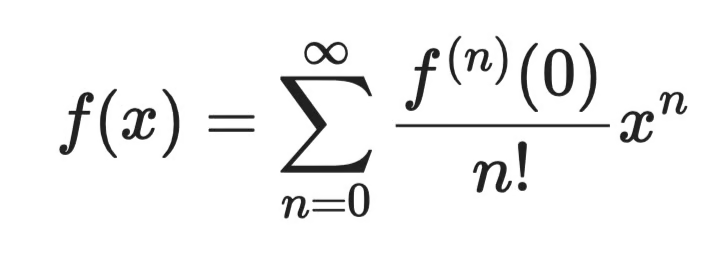

The general formula is this:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!}(x - a)^n$$

Three components make up every term in this sum:

- f⁽ⁿ⁾(a) - the nth derivative of the function evaluated at the center point a
- n! - the factorial of n, which keeps the terms from growing out of control
- (x - a)ⁿ - the expansion term, which measures how far x is from the center point

The center point a is where you anchor the series. When a = 0, you get a special case called a Maclaurin series - more on that later.

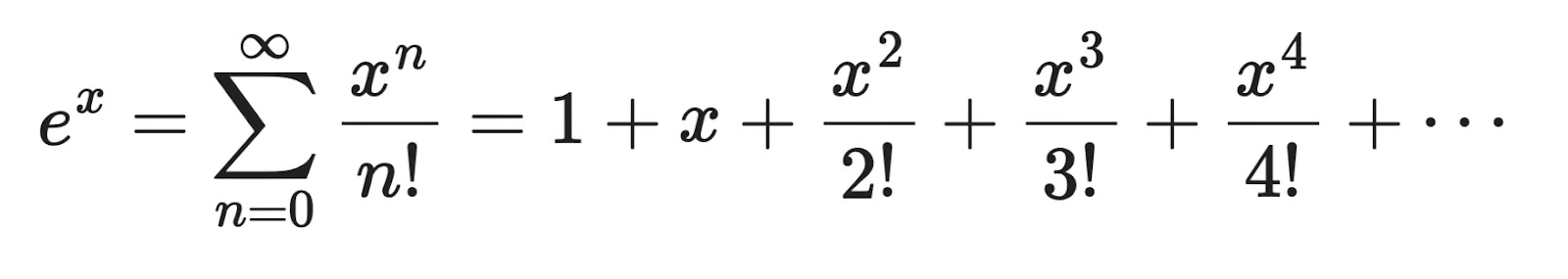

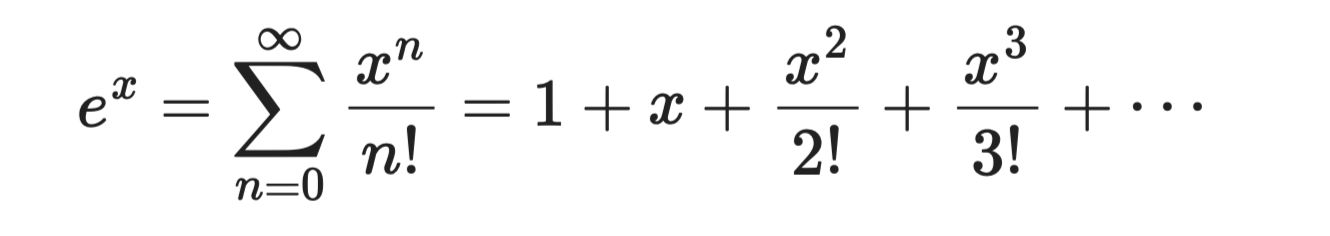

A concrete example: eˣ

The exponential function eˣ is a perfect first example. Its derivative is itself, so f⁽ⁿ⁾(0) = 1 for every n. Centered at a = 0, the Taylor series becomes:

$$e^x = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots$$

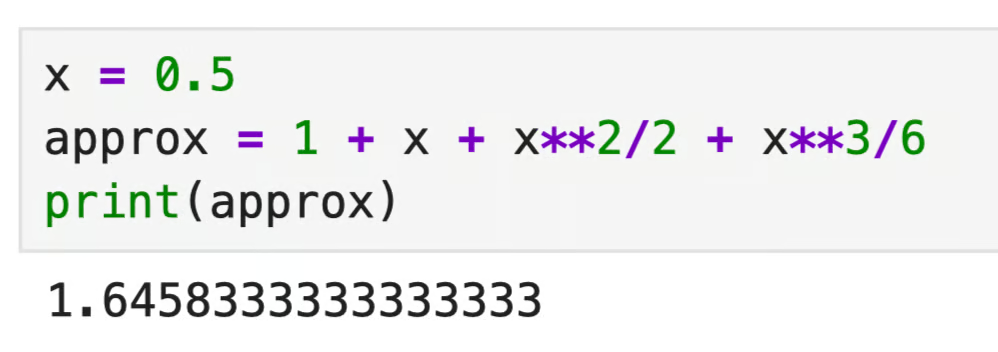

Let's say you want to approximate e⁰·⁵. Just plug x = 0.5 into the first four terms - here’s a Python example:

```python
x = 0.5

# First four terms of the series: 1 + x + x^2/2! + x^3/3!
approx = 1 + x + x**2/2 + x**3/6

print(approx)
```

This prints a value of about 1.6458.

The actual value of e⁰·⁵ is roughly 1.6487. With just four terms, you're already within 0.2% of the true answer. Add more terms, and the approximation gets tighter.
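To see how the approximation tightens as terms are added, here is a minimal sketch. The helper name `exp_taylor` is made up for this illustration, and `math.exp` serves only as the reference value:

```python
import math

def exp_taylor(x, n_terms):
    """Sum the first n_terms of the Taylor series for e**x centered at 0."""
    total = 0.0
    term = 1.0  # x**0 / 0!
    for n in range(n_terms):
        total += term
        term *= x / (n + 1)  # turn x**n / n! into x**(n+1) / (n+1)!
    return total

x = 0.5
for n_terms in (2, 4, 6, 8):
    approx = exp_taylor(x, n_terms)
    rel_error = abs(approx - math.exp(x)) / math.exp(x)
    print(f"{n_terms} terms: {approx:.8f}  relative error: {rel_error:.2e}")
```

Each new term is built from the previous one, so the loop never computes a large factorial directly.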

That's the power of Taylor series.

Functions like eˣ, sin(x), and cos(x) are difficult to directly evaluate, but their Taylor series reduce them to basic arithmetic. This is exactly what a computer can work with.

Mathematical Properties of Taylor Series

A Taylor series is only useful if it actually converges to the function you're trying to approximate. Let's look at what that means and what happens when it doesn't.

Taylor series expansion

When you expand a Taylor series, you're building a polynomial one term at a time. Each term adds more information about the function's behavior near the center point a.

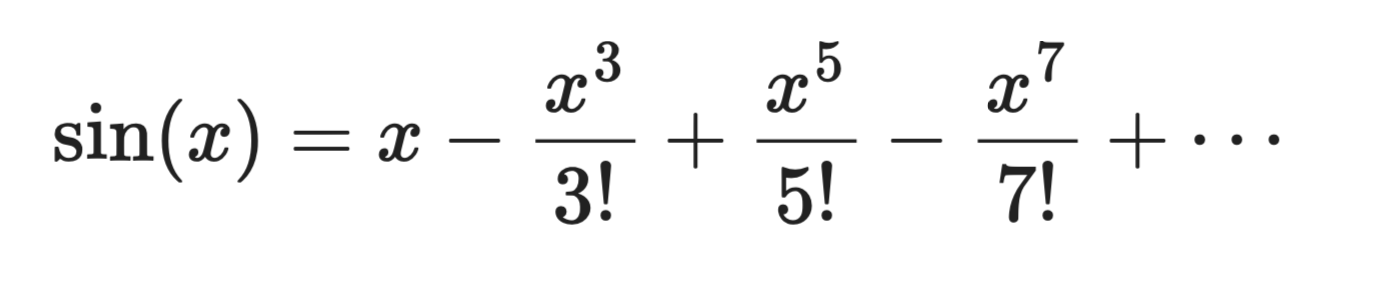

Take sin(x) centered at a = 0:

$$\sin(x) = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \cdots$$

The first term, x, is a rough linear approximation. Add the second term and the curve gets closer. Add more terms, and the polynomial becomes nearly indistinguishable from sin(x) near x = 0.

In plain English, expansion means you're trading an exact but hard-to-compute function for a polynomial you can actually work with.
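A small sketch of that term-by-term build-up for sin(x), measured at a few points. The function name `sin_taylor` is an assumption of this example, not a library call:

```python
import math

def sin_taylor(x, n_terms):
    """Sum the first n_terms nonzero terms of the series for sin(x) centered at 0."""
    total = 0.0
    for k in range(n_terms):
        power = 2 * k + 1
        total += (-1) ** k * x ** power / math.factorial(power)
    return total

for x in (0.1, 1.0, 3.0):
    errors = [abs(sin_taylor(x, n) - math.sin(x)) for n in (1, 2, 3, 4)]
    print(f"x = {x}: errors with 1-4 terms:", [f"{e:.1e}" for e in errors])
```

The errors shrink with every added term, and they are much smaller near the center point than far from it.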

Taylor series approximation

You'll never compute infinitely many terms. In practice, you stop after a few and accept a small error. The result is called a truncated Taylor series, and the error it introduces is called the truncation error.

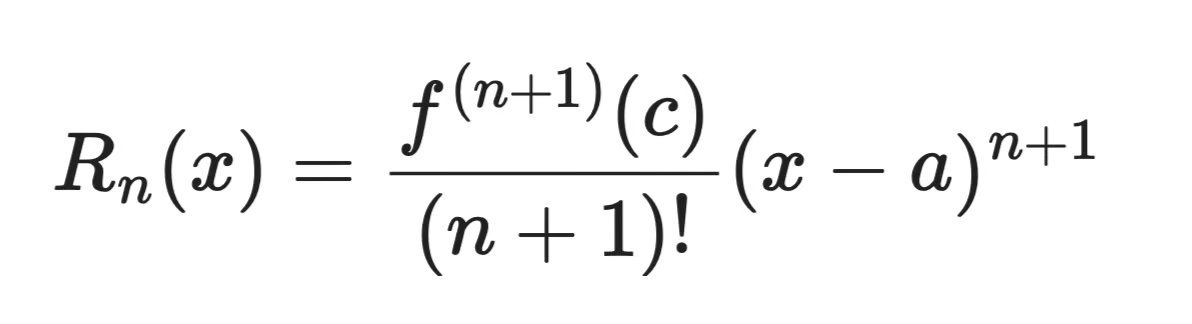

The Lagrange remainder gives you a bound on that error. For a series truncated after the degree-n term:

$$R_n(x) = \frac{f^{(n+1)}(c)}{(n+1)!}(x - a)^{n+1}$$

Where c is some point between x and a. You don't know c exactly, but you can bound f⁽ⁿ⁺¹⁾(c) if you know how large the derivatives of your function can get.

Here's how you can interpret it:

- The further x is from the center point a, the larger the error
- The more terms you include, the smaller the error
- Functions with large, fast-growing derivatives are harder to approximate accurately

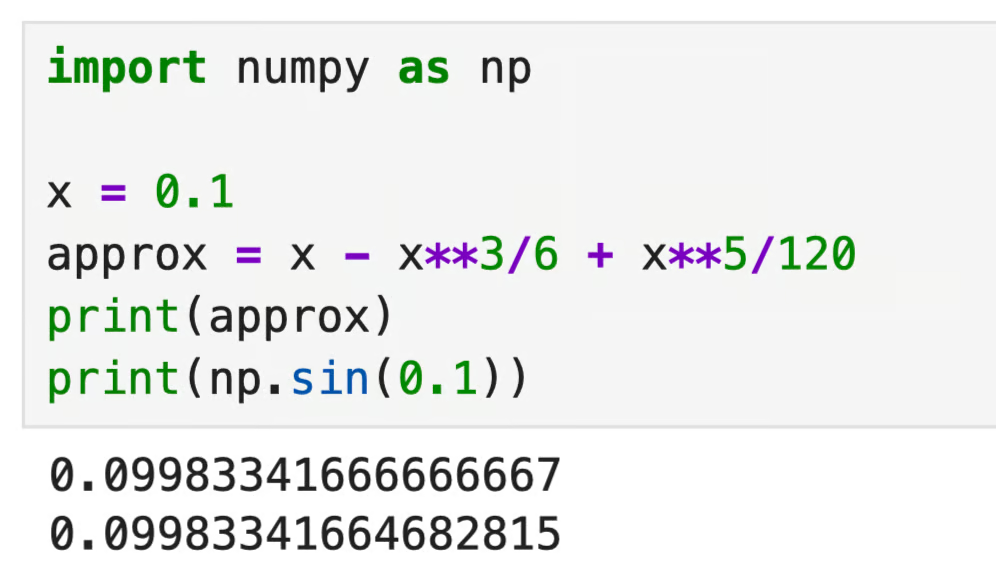

Let's say you're approximating sin(0.1) with three terms:

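```python
import numpy as np

x = 0.1

# First three nonzero terms of the sin(x) series
approx = x - x**3/6 + x**5/120

print(approx)       # Taylor approximation
print(np.sin(0.1))  # NumPy reference value
```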

Three terms get you accuracy to ten decimal places when x is close to 0. That's truncation error in action - small, but not zero.
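The Lagrange bound can be checked against that observed error. This is a sketch under one stated assumption: every derivative of sin(x) is ±sin(x) or ±cos(x), so its absolute value never exceeds 1. Because the x⁶ coefficient of sin(x) is zero, the three-term polynomial is also the degree-6 Taylor polynomial, so n = 6 is used here:

```python
import math

x = 0.1
approx = x - x**3 / 6 + x**5 / 120

n = 6                 # degree of the Taylor polynomial (the x**6 term of sin is zero)
max_derivative = 1.0  # assumed bound on |f^(n+1)(c)|, valid for sin
bound = max_derivative * abs(x) ** (n + 1) / math.factorial(n + 1)

actual_error = abs(approx - math.sin(x))
print(f"Lagrange bound: {bound:.3e}")
print(f"actual error:   {actual_error:.3e}")
```

The actual error stays below the bound, which is what the remainder formula promises.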

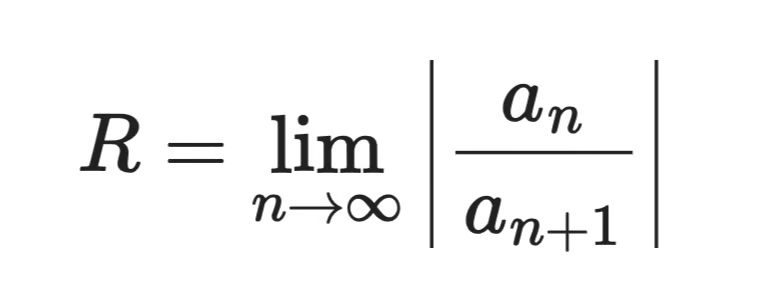

Taylor series convergence

A Taylor series converges at a point x if the partial sums get closer and closer to a fixed value as you add more terms. That fixed value should be f(x) - but that's not always guaranteed.

The radius of convergence R tells you how far from the center point the series remains valid. Inside that radius, the series converges. Outside it, the terms grow instead of shrinking, and the approximation falls apart.

Convergence formula

Different functions have different radii:

- eˣ, sin(x), and cos(x) converge for all values of x, so R = ∞
- ln(1 + x) only converges for -1 < x <= 1, so R = 1
- 1/(1 - x) converges for |x| < 1, so R = 1

A Taylor series can also have an infinite radius of convergence and still fail to equal the function away from the center point. Functions like this are smooth but non-analytic. The classic example is f(x) = e^(-1/x²) with f(0) = 0, whose series centered at 0 is identically zero. It's an edge case worth knowing about, even if you rarely see it in data science.

So, always check whether x falls within the radius of convergence before trusting a Taylor approximation.
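A quick way to see the radius of convergence in action is to evaluate the ln(1 + x) series once inside the radius and once outside it. This is a minimal sketch; `log1p_taylor` is a name invented for the example:

```python
import math

def log1p_taylor(x, n_terms):
    """Partial sum of x - x**2/2 + x**3/3 - ... for ln(1 + x)."""
    return sum((-1) ** (n + 1) * x ** n / n for n in range(1, n_terms + 1))

for x in (0.5, 2.0):
    print(f"x = {x}, exact ln(1 + x) = {math.log1p(x):.6f}")
    for n_terms in (5, 10, 20, 40):
        print(f"  {n_terms:>2} terms: {log1p_taylor(x, n_terms):.6f}")
```

At x = 0.5 the partial sums settle on the exact value. At x = 2.0 they swing further away with every added term, even though ln(3) itself is perfectly well defined.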

Taylor Series in Data Science and Machine Learning

Taylor series show up in more places than you'd expect - from physics simulations to solving differential equations. But their biggest impact in your day-to-day work as a data scientist is in optimization and model approximation.

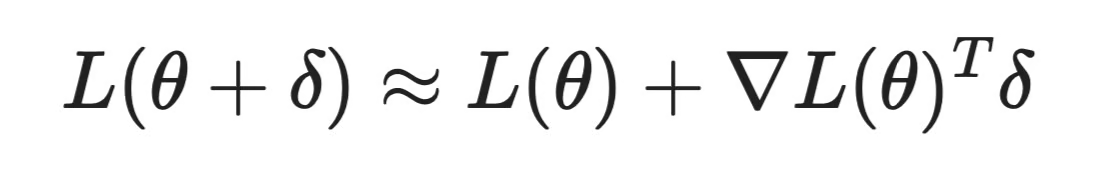

Optimization and gradient descent

Every time you train a machine learning model, you're running some form of optimization, and a Taylor approximation is often behind it.

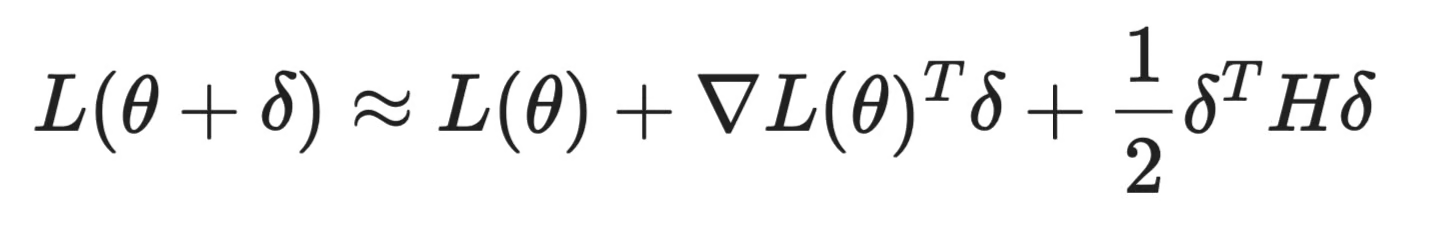

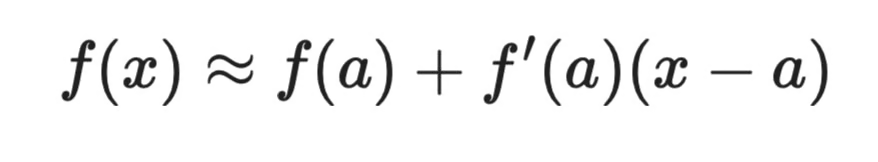

Gradient descent uses a first-order Taylor approximation. When you compute the gradient of a loss function L(θ) at the current parameters θ, you're essentially asking: "if I move a small step in this direction, how much does the loss change?" That's a first-order Taylor expansion around the current point:

$$L(\theta + \Delta\theta) \approx L(\theta) + \nabla L(\theta)^\top \Delta\theta$$

This works, but it ignores curvature. If the loss surface curves, a first-order approximation can overshoot or take inefficient steps.
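Here is a toy illustration of that limitation, assuming a one-dimensional loss L(θ) = (θ - 3)² chosen purely for this example. It compares the change in loss predicted by the first-order approximation with the change that actually happens after a gradient descent step:

```python
def loss(theta):
    return (theta - 3.0) ** 2

def grad(theta):
    return 2.0 * (theta - 3.0)

def compare(theta, lr):
    """Return (first-order predicted change, actual change) for one update."""
    step = -lr * grad(theta)         # gradient descent update
    predicted = grad(theta) * step   # first-order Taylor estimate
    actual = loss(theta + step) - loss(theta)
    return predicted, actual

for lr in (0.01, 0.1, 1.2):
    predicted, actual = compare(0.0, lr)
    print(f"lr = {lr}: predicted {predicted:+.4f}, actual {actual:+.4f}")
```

With a small learning rate the two numbers nearly match. With a large one the linear prediction still promises a decrease, but the loss actually goes up because the step overshoots the minimum.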

Newton's method fixes this by including the second-order term - the Hessian matrix H, which keeps track of the curvature:

$$L(\theta + \Delta\theta) \approx L(\theta) + \nabla L(\theta)^\top \Delta\theta + \frac{1}{2}\Delta\theta^\top H \Delta\theta$$

Setting the derivative of this expression with respect to Δθ to zero gives you the Newton step, Δθ = -H⁻¹∇L(θ). The tradeoff is that computing the full Hessian is expensive for large models. Methods like L-BFGS approximate it instead, which gets most of the benefit at a fraction of the cost.
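In one dimension the Hessian reduces to the second derivative, which makes the idea easy to sketch. The loss L(θ) = e^θ - 2θ below is an assumption picked for the example because its minimizer, θ = ln 2, is known exactly:

```python
import math

def d1(theta):
    """First derivative of L(theta) = exp(theta) - 2*theta."""
    return math.exp(theta) - 2.0

def d2(theta):
    """Second derivative, the 1-D counterpart of the Hessian."""
    return math.exp(theta)

def newton(theta, steps):
    for _ in range(steps):
        theta -= d1(theta) / d2(theta)  # minimizer of the local quadratic model
    return theta

def gradient_descent(theta, steps, lr=0.1):
    for _ in range(steps):
        theta -= lr * d1(theta)
    return theta

target = math.log(2.0)  # exact minimizer, where exp(theta) = 2
print("Newton error after 5 steps:          ", abs(newton(0.0, 5) - target))
print("gradient descent error after 5 steps:", abs(gradient_descent(0.0, 5) - target))
```

The learning rate of 0.1 is arbitrary. A better-tuned value would narrow the gap, but the curvature information is what lets Newton's method pick a sensible step size on its own.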

Activation function approximations

Some activation functions are expensive to compute. Taylor series give you cheaper alternatives that are accurate enough for most purposes.

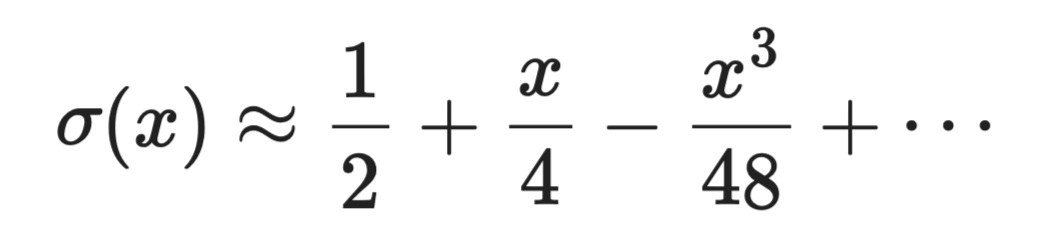

The sigmoid function σ(x) = 1 / (1 + e⁻ˣ) requires computing an exponential, which is expensive. Near x = 0, its Taylor expansion is:

$$\sigma(x) = \frac{1}{2} + \frac{x}{4} - \frac{x^3}{48} + \frac{x^5}{480} - \cdots$$

For hardware-constrained environments like edge devices or FPGAs, polynomial approximations like this can replace exact computations with a handful of multiply-add operations.
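As a rough sketch of what such a replacement looks like, the snippet below compares the exact sigmoid with its degree-5 polynomial, 1/2 + x/4 - x³/48 + x⁵/480. The function names are made up for the example:

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_taylor(x):
    """Degree-5 Taylor polynomial of the sigmoid centered at 0."""
    return 0.5 + x / 4 - x**3 / 48 + x**5 / 480

for x in (0.0, 0.5, 1.0, 2.0, 4.0):
    print(f"x = {x}: exact {sigmoid(x):.5f}, polynomial {sigmoid_taylor(x):.5f}")
```

The polynomial tracks the sigmoid closely for small inputs and drifts badly for larger ones. The underlying series only converges for |x| < π, so adding terms would not rescue it out there. In practice a polynomial like this is paired with input clipping or a piecewise scheme.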

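GELU, used in transformer models like BERT and GPT, is defined through the error function erf(x), which is an integral with no elementary closed form. Implementations often replace it with a cheaper approximation, most commonly a tanh-based formula, in the same spirit as the polynomial shortcut for the sigmoid.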
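For reference, here is a sketch comparing the exact erf-based GELU with the widely used tanh approximation. The constant 0.044715 comes from the original GELU paper, and the function names are this example's own:

```python
import math

def gelu_exact(x):
    """GELU written with the error function: x * Phi(x)."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def gelu_tanh(x):
    """tanh-based approximation of GELU."""
    inner = math.sqrt(2.0 / math.pi) * (x + 0.044715 * x**3)
    return 0.5 * x * (1.0 + math.tanh(inner))

grid = [i / 10 for i in range(-40, 41)]
max_gap = max(abs(gelu_exact(x) - gelu_tanh(x)) for x in grid)
print(f"largest gap on [-4, 4]: {max_gap:.2e}")
```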

XGBoost and second-order optimization

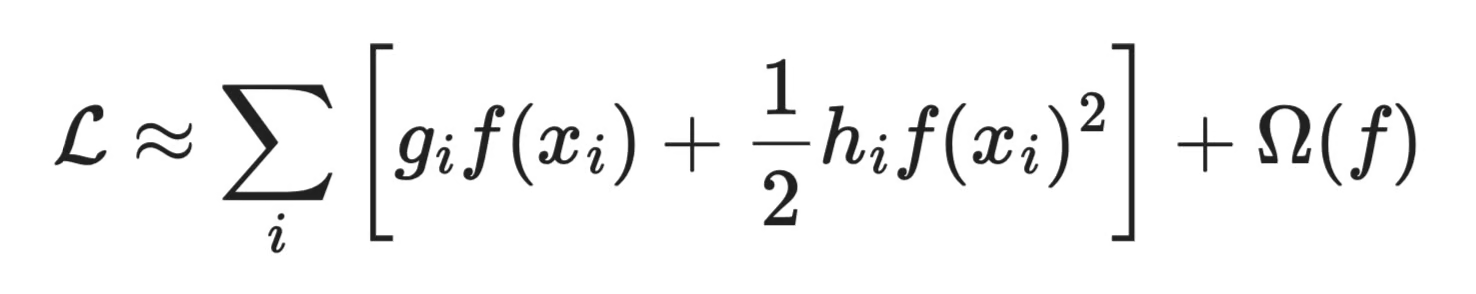

XGBoost is one of the most widely used gradient boosting libraries, and it uses a second-order Taylor expansion of the loss function to fit each new tree.

At each boosting step, XGBoost approximates the loss as:

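$$\mathcal{L}^{(t)} \approx \sum_{i=1}^{n}\left[ l\left(y_i, \hat{y}_i^{(t-1)}\right) + g_i f_t(x_i) + \frac{1}{2} h_i f_t(x_i)^2 \right] + \Omega(f_t)$$

Where g_i is the first-order gradient and h_i is the second-order gradient (Hessian) of the loss with respect to the current prediction, f_t is the tree being added at step t, and Ω(f_t) is a regularization penalty on that tree. Using both terms lets XGBoost fit trees faster and more accurately than first-order methods, which is a big part of why it performs so well on tabular data.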
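To make g_i and h_i concrete, here is a plain-Python sketch for the squared-error loss 0.5 · (y - ŷ)². It is not XGBoost's API. The function names are hypothetical, and the leaf-weight formula -G / (H + λ) is what you get by minimizing the quadratic approximation for a single leaf with an L2 penalty λ:

```python
def squared_error_grad_hess(y_true, y_pred):
    """g_i and h_i for the loss 0.5 * (y - y_hat)**2."""
    grads = [p - y for y, p in zip(y_true, y_pred)]
    hess = [1.0 for _ in y_true]
    return grads, hess

def optimal_leaf_weight(grads, hess, reg_lambda=1.0):
    """Minimizer over w of sum(g*w + 0.5*h*w**2) + 0.5*reg_lambda*w**2."""
    return -sum(grads) / (sum(hess) + reg_lambda)

y_true = [3.0, 5.0, 4.0]
y_pred = [2.0, 2.0, 2.0]  # current ensemble prediction for each row
g, h = squared_error_grad_hess(y_true, y_pred)

print(optimal_leaf_weight(g, h, reg_lambda=0.0))  # no penalty: the mean residual
print(optimal_leaf_weight(g, h, reg_lambda=1.0))  # with penalty: shrunk toward zero
```

For squared error the Hessian is constant, so the second-order term only adds the shrinkage. For losses like log loss, h_i varies per row and changes how much each row counts.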

Challenges and limitations

Taylor series show up all over data science, but that doesn't make them the right tool for every problem. A few things can go wrong:

- Approximation error accumulates: In deep networks, you're chaining many operations together. A small Taylor approximation error at one layer compounds across layers, which can affect training stability
- The radius of convergence matters: Taylor approximations are only reliable near the expansion point. If your inputs drift far from where the approximation was built - say, during inference on out-of-distribution data - the approximation can break down
- High-dimensional Hessians are expensive: Second-order methods are powerful but don't scale that well. A model with n parameters has an n × n Hessian. For a model with millions of parameters, storing and inverting that matrix is not practical without approximations.
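To put rough numbers on the Hessian point, here is a back-of-the-envelope sketch. It assumes a dense matrix stored as 32-bit floats (4 bytes per entry), and the helper name is made up for the illustration:

```python
def hessian_gigabytes(n_params, bytes_per_entry=4):
    """Memory needed for a dense n x n Hessian, in gigabytes."""
    return n_params ** 2 * bytes_per_entry / 1e9

for n_params in (1_000, 1_000_000, 100_000_000):
    print(f"{n_params:>11,} parameters: {hessian_gigabytes(n_params):,.3f} GB")
```

Storage grows with the square of the parameter count, and inverting the matrix costs even more, which is why large models rely on approximations.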

If you understand these tradeoffs, you’ll know when a Taylor-based approach is worth it and when a simpler first-order method is good enough.

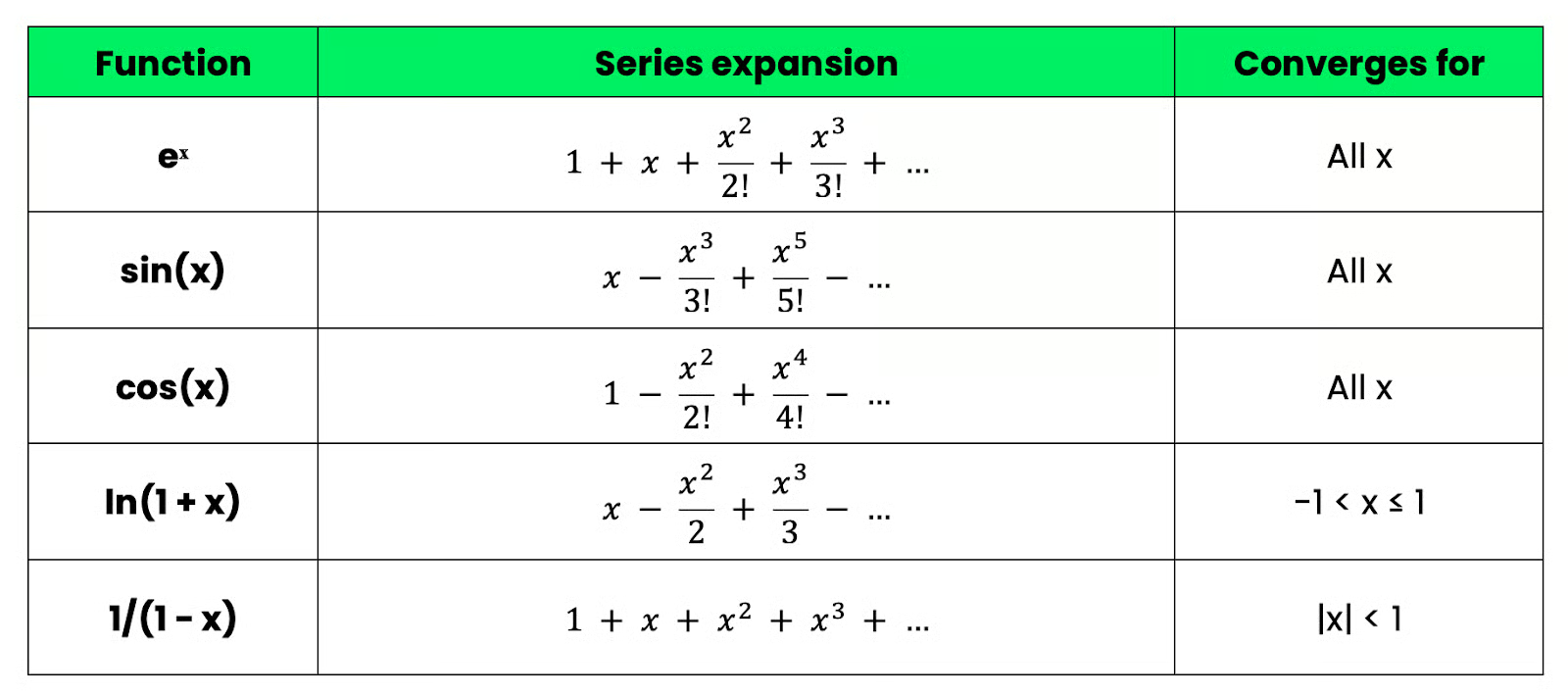

Well-Known Taylor Series

A handful of Taylor series show up everywhere in mathematics, physics, and machine learning. These are the ones worth knowing if you’re serious about data science.

Exponential function

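The exponential function eˣ has the simplest Taylor series to derive, since every derivative of eˣ is eˣ itself. Evaluated at a = 0, every derivative equals 1, so the coefficient of xⁿ is 1/n!:

$$e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots$$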

This series converges for all values of x, which makes it reliable and easy to work with. It's the foundation for the sigmoid and softmax functions used in classification models.

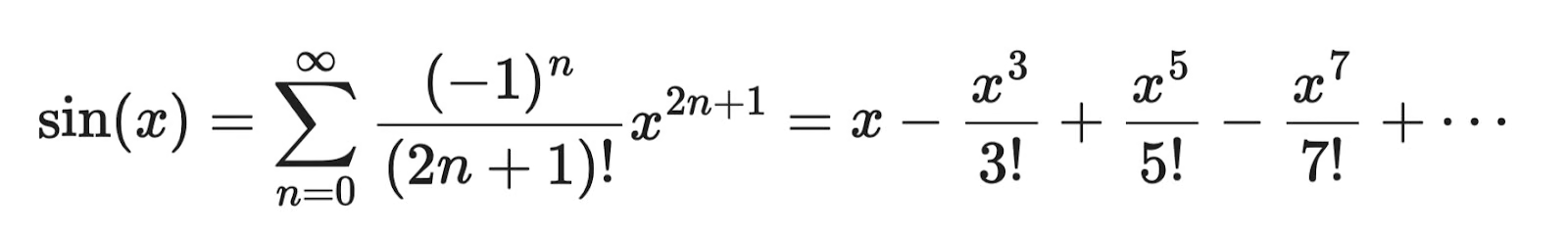

Sine function

The sine function only contains odd powers, which follows from the fact that sin(x) is an odd function - meaning sin(-x) = -sin(x):

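$$\sin(x) = \sum_{n=0}^{\infty} \frac{(-1)^n x^{2n+1}}{(2n+1)!} = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \cdots$$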

Like eˣ, this converges for all x. The alternating signs come from the fact that the derivatives of sin(x) cycle through cos(x), -sin(x), -cos(x), and back.

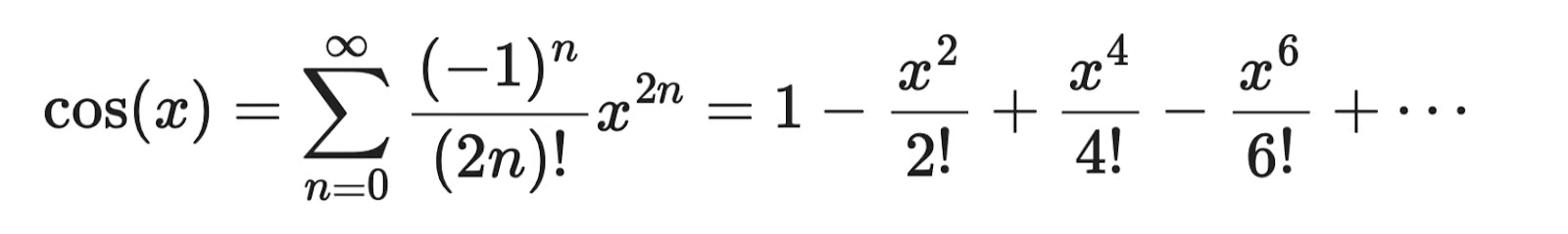

Cosine function

Cosine is the even counterpart to sine - it only contains even powers:

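$$\cos(x) = \sum_{n=0}^{\infty} \frac{(-1)^n x^{2n}}{(2n)!} = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \frac{x^6}{6!} + \cdots$$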

If you look at the sine and cosine series together, you'll notice they're complementary: one has all the odd powers, the other all the even ones. This relationship is what leads to Euler's famous formula: eⁱˣ = cos(x) + i·sin(x).
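You can check this numerically by feeding an imaginary argument into the exponential series. This is a minimal sketch using Python's built-in complex numbers; `exp_series` is a name chosen for the example:

```python
import math

def exp_series(z, n_terms=30):
    """Partial sum of the exponential series, valid for complex z as well."""
    total = 0j
    term = 1 + 0j  # z**0 / 0!
    for n in range(n_terms):
        total += term
        term *= z / (n + 1)
    return total

x = 1.2
lhs = exp_series(1j * x)                  # series for e^(ix)
rhs = complex(math.cos(x), math.sin(x))   # cos(x) + i*sin(x)
print(abs(lhs - rhs))
```

The even-power terms of the sum land in the real part and the odd-power terms in the imaginary part, which is exactly the cosine and sine series.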

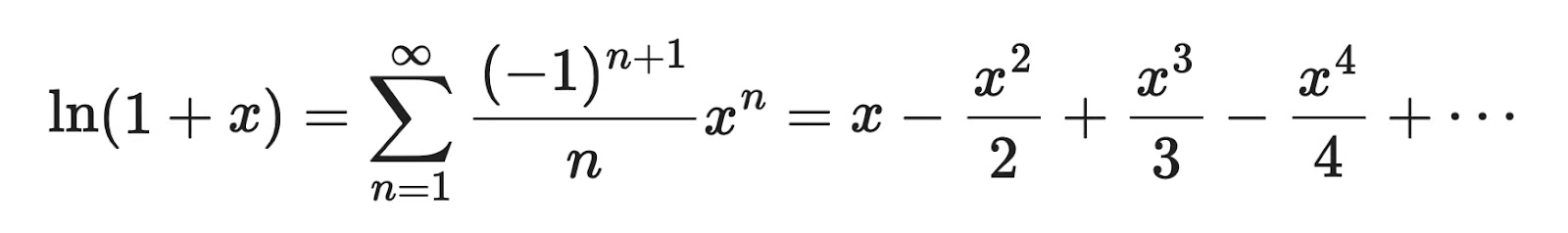

Natural logarithm

The natural logarithm ln(1 + x) has a Taylor series centered at x = 0:

$$\ln(1 + x) = \sum_{n=1}^{\infty} \frac{(-1)^{n+1} x^n}{n} = x - \frac{x^2}{2} + \frac{x^3}{3} - \frac{x^4}{4} + \cdots$$

Unlike the previous three, this one only converges for -1 < x <= 1. If you push x outside that range, the series diverges. This comes up in cross-entropy loss, where log probabilities need to stay in a valid range.

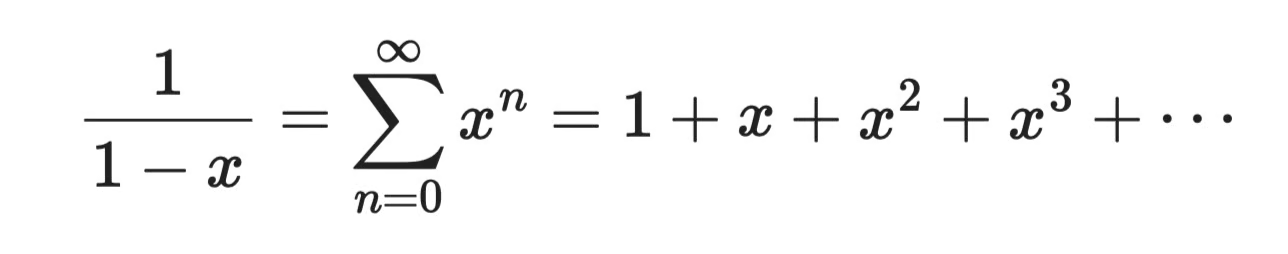

Geometric series

The geometric series is one of the oldest and most used results in mathematics:

$$\frac{1}{1 - x} = \sum_{n=0}^{\infty} x^n = 1 + x + x^2 + x^3 + \cdots$$

This only converges for |x| < 1. It's the starting point for deriving many other Taylor series, and it shows up in probability theory, signal processing, and anywhere you're summing discounted future values.
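The discounting use case is easy to sketch: summing a reward of 1 per step with a discount factor of 0.9 approaches the closed form 1 / (1 - 0.9) = 10. The discount value and function name are assumptions of this example:

```python
def geometric_partial_sum(x, n_terms):
    """1 + x + x**2 + ... with n_terms terms."""
    return sum(x ** n for n in range(n_terms))

discount = 0.9
closed_form = 1 / (1 - discount)

for n_terms in (10, 50, 500):
    print(f"{n_terms:>3} terms: {geometric_partial_sum(discount, n_terms):.6f}")
print(f"closed form: {closed_form:.6f}")
```

Try a value outside the radius, such as x = 1.5, and the partial sums grow without limit instead of settling.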

Quick reference

If you’re looking for something tangible, printable, to put on a wall next to your bed, I have you covered:

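| Function | Series (centered at 0) | Converges for |
| --- | --- | --- |
| eˣ | 1 + x + x²/2! + x³/3! + ... | all x |
| sin(x) | x - x³/3! + x⁵/5! - x⁷/7! + ... | all x |
| cos(x) | 1 - x²/2! + x⁴/4! - x⁶/6! + ... | all x |
| ln(1 + x) | x - x²/2 + x³/3 - x⁴/4 + ... | -1 < x <= 1 |
| 1/(1 - x) | 1 + x + x² + x³ + ... | -1 < x < 1 |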

These five series cover the majority of cases you'll encounter in data science and machine learning.

Taylor Series vs. Other Related Kinds of Series

Taylor, Fourier, and Maclaurin series all approximate functions, but they solve different problems and work best in different contexts.

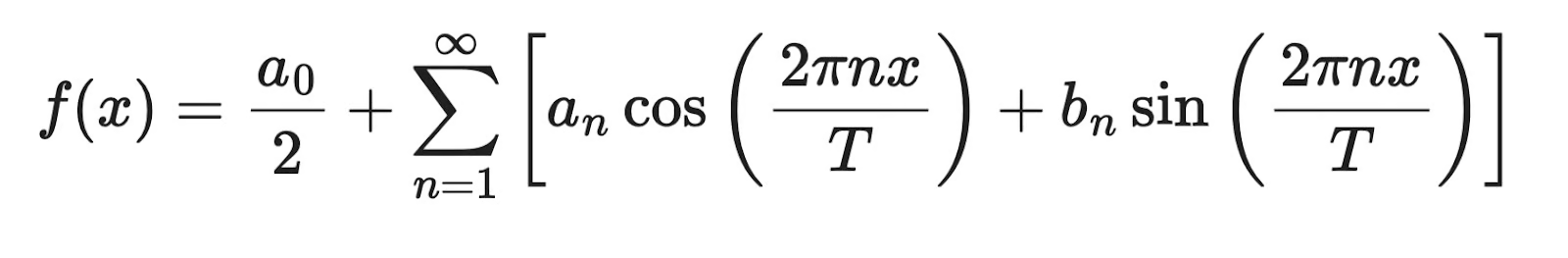

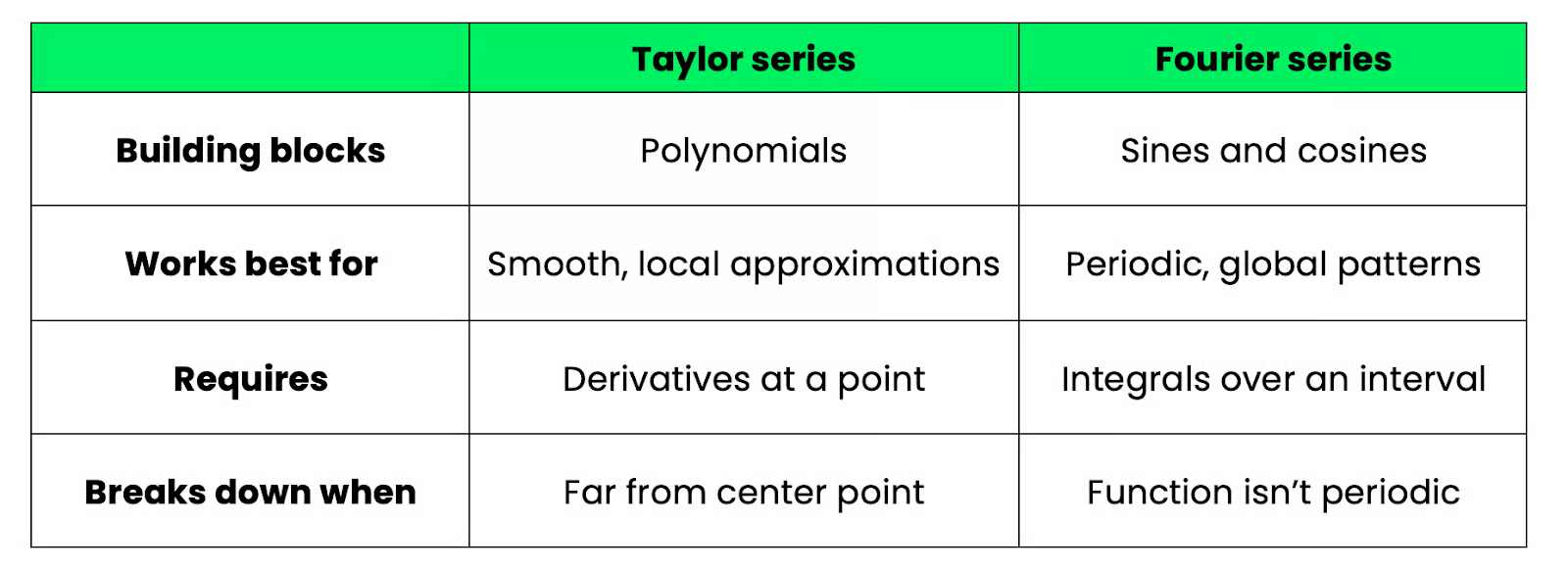

Taylor series vs. Fourier series

Taylor and Fourier series both represent functions as infinite sums, but they do it in completely different ways.

A Taylor series builds a function from polynomials - powers of (x - a). It works by zooming in on a single point and capturing the function's local behavior through its derivatives. The result is accurate near the center point a, but accuracy drops off as you move away.

A Fourier series uses sines and cosines as building blocks:

$$f(x) = \frac{a_0}{2} + \sum_{n=1}^{\infty}\left[a_n \cos(nx) + b_n \sin(nx)\right]$$

Rather than capturing local behavior at a point, Fourier series capture global periodic behavior across an entire interval. They're designed for functions that repeat - think audio signals, seasonal patterns, or anything that oscillates.
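As a small illustration, the square wave that equals +1 on (0, π) and -1 on (-π, 0) has the well-known Fourier series (4/π) · [sin(x) + sin(3x)/3 + sin(5x)/5 + ...]. This sketch sums it directly; the function name is made up for the example:

```python
import math

def square_wave_partial_sum(x, n_terms):
    """Partial Fourier sum (4/pi) * sum of sin((2k+1)x) / (2k+1)."""
    return (4 / math.pi) * sum(
        math.sin((2 * k + 1) * x) / (2 * k + 1) for k in range(n_terms)
    )

for x in (math.pi / 2, -math.pi / 2, math.pi / 2 + 2 * math.pi):
    print(f"x = {x:+.4f}: {square_wave_partial_sum(x, 200):+.4f}")
```

The same sum works at every point of every period, which a Taylor polynomial anchored at one center point cannot do. A square wave also has jumps, so it has no Taylor series at those points at all.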

Here's how the two compare side by side:

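| | Taylor series | Fourier series |
| --- | --- | --- |
| Building blocks | Powers of (x - a) | Sines and cosines |
| What it captures | Local behavior near a single point | Global periodic behavior across an interval |
| Best suited for | Smooth functions near the center point | Functions that repeat, like audio signals or seasonal patterns |
| Typical uses | Optimization and model approximation | Signal processing and time series analysis |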

Fourier series show up in signal processing and time series analysis - spectral analysis, frequency decomposition, and even some neural network architectures like FNet, which replaces attention with Fourier transforms.

If you're working with tabular data, images, or optimization, Taylor series are the more relevant tool. If you're working with audio, time series, or anything with periodic structure, Fourier series are the better fit.

Taylor series vs. Maclaurin series

This one's simple. A Maclaurin series is just a Taylor series centered at a = 0.

The general Taylor series formula is:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!}(x - a)^n$$

Set a = 0 and you get:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(0)}{n!}x^n$$

Colin Maclaurin used this specific case so often in his work that it got its own name, but mathematically it's nothing more than a Taylor series at a particular center point.

In practice, most of the series you'll see - eˣ, sin(x), cos(x), ln(1 + x) - are Maclaurin series, because centering at zero keeps the algebra clean. When you need to approximate a function near a different point, you shift the center to a ≠ 0 and get a general Taylor series.
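Here is a sketch of a series with a shifted center: the second-order Taylor polynomial of √x around a = 4, a point picked for this example because √4 and the derivatives there are easy to write down. There is no Maclaurin series for √x at all, since its derivatives blow up at 0:

```python
import math

def sqrt_taylor_at_4(x):
    """Second-order Taylor polynomial of sqrt(x) centered at a = 4."""
    a = 4.0
    f_a = 2.0         # sqrt(4)
    d1 = 1.0 / 4.0    # f'(a)  = 1 / (2 * sqrt(a))
    d2 = -1.0 / 32.0  # f''(a) = -1 / (4 * a**1.5)
    return f_a + d1 * (x - a) + d2 / 2.0 * (x - a) ** 2

for x in (4.1, 5.0, 9.0):
    print(f"x = {x}: polynomial {sqrt_taylor_at_4(x):.6f}, exact {math.sqrt(x):.6f}")
```

Close to 4 the polynomial is very accurate, and the gap widens as x moves away from the center.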

To conclude, every Maclaurin series is a Taylor series, but not every Taylor series is a Maclaurin series.

Taylor Series and Linear Models

Taylor series and linear models might seem unrelated at first, but there's a connection worth knowing about, and it starts with the first-order Taylor approximation.

When you truncate a Taylor series after the first-order term, you get a linear approximation of a function near a point a:

$$f(x) \approx f(a) + f'(a)(x - a)$$

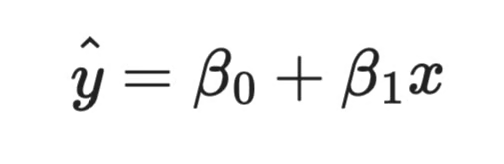

This is a straight line. It has a slope (f'(a)) and an intercept (f(a) - f'(a) ⋅ a). Sound familiar? That's the same structure as a simple linear regression model:

$$y = \beta_0 + \beta_1 x$$

The difference is where each comes from. In a Taylor approximation, the slope and intercept are determined by the function's derivatives at a single point. In linear regression, they're estimated from data to minimize prediction error. But structurally, they're doing the same thing.
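A small experiment makes the link visible. This sketch fits an ordinary least-squares line to noise-free samples of eˣ on a narrow and a wide window around a = 0, then compares the result with the tangent line, which has slope f'(0) = 1 and intercept f(0) = 1. The window sizes and helper names are assumptions of the example:

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for a single input."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def fit_exp_on_window(half_width, n_points=41):
    """Fit a line to e**x sampled evenly on [-half_width, half_width]."""
    xs = [-half_width + 2 * half_width * i / (n_points - 1) for i in range(n_points)]
    ys = [math.exp(x) for x in xs]
    return fit_line(xs, ys)

for half_width in (0.1, 2.0):
    slope, intercept = fit_exp_on_window(half_width)
    print(f"window +/-{half_width}: slope {slope:.4f}, intercept {intercept:.4f}")
```

On the narrow window the fitted line is almost the tangent line. On the wide window the regression line moves away from it, because it has to average over curvature that a local expansion ignores.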

Where this connection gets useful

It explains why linear models work well in certain situations and fail in others.

Linear regression assumes the relationship between your input and output is - or can be treated as - linear. Taylor series tell you exactly when that assumption holds - when your inputs stay close to a fixed point, and the function you're approximating is smooth. If you push your inputs far from that point, a linear approximation breaks down, which is the same reason linear regression often fails on data with strong nonlinear patterns.

Generalized linear models (GLMs) make this connection even more explicit.

Logistic regression, for example, models the log-odds of an outcome as a linear function. The link between the linear predictor and the output probability runs through the sigmoid function - and as you saw earlier, the sigmoid has a well-behaved Taylor expansion near zero.

From linear to nonlinear

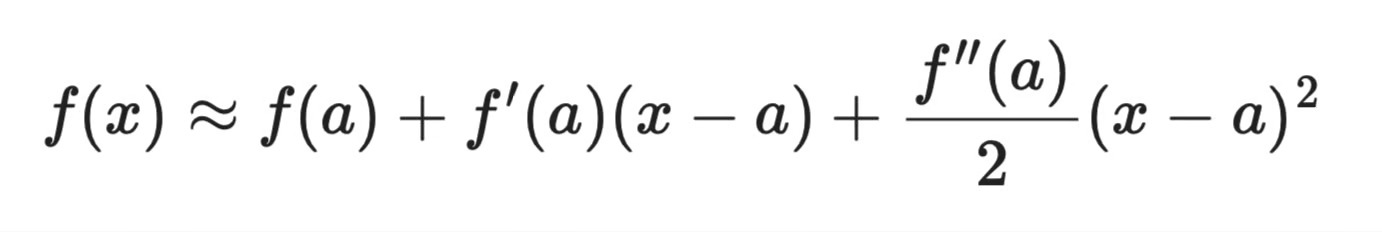

Once you understand that a first-order Taylor expansion gives you a linear model, the next step is to include more terms, and you get a polynomial model.

A second-order Taylor expansion gives you:

$$f(x) \approx f(a) + f'(a)(x - a) + \frac{f''(a)}{2}(x - a)^2$$

This is a quadratic - a polynomial regression model with a squared term. Each additional Taylor term corresponds to a higher-degree polynomial, which is how polynomial regression extends linear regression to capture curved relationships.

So Taylor series give you a principled way to think about the bias-variance tradeoff in regression. A first-order approximation (linear model) is fast and interpretable but has high bias if the true relationship is nonlinear. Higher-order approximations fit the data better near the expansion point but risk overfitting as you add terms.

If you want to go deeper on linear regression and when it works, the Essentials of Linear Regression in Python tutorial is a good next step. For R users, the Intermediate Regression in R course covers polynomial regression and model diagnostics in detail.

Conclusion

Taylor series are one of those mathematical tools that keep showing up once you know what to look for.

You've seen how they let computers evaluate functions like eˣ and sin(x) through basic arithmetic, how convergence and truncation error determine how accurate an approximation is, and how the same idea powers gradient descent, XGBoost, and activation function approximations in modern machine learning.

The five well-known series - exponential, sine, cosine, logarithm, geometric - are worth memorizing. They come up often enough that recognizing them on sight saves real time.

From here, the next step is getting comfortable with the algorithmic thinking that sits alongside this kind of math. Our Data Structures and Algorithms in Python course is a solid place to build that foundation. It will help you understand how mathematical ideas translate into code that works and scales.

Taylor Series FAQs

What is a Taylor series used for?

A Taylor series approximates complex functions as infinite sums of polynomial terms built from the function's derivatives at a single point. This makes functions like eˣ and sin(x) computable through basic arithmetic - which is how your computer evaluates them. In machine learning, Taylor series power optimization algorithms like gradient descent and boosting methods like XGBoost.

How does a Taylor series differ from a Maclaurin series?

A Maclaurin series is just a Taylor series centered at a = 0. When the center point is zero, the math simplifies, which is why most well-known series - eˣ, sin(x), cos(x) - are Maclaurin series. When you need to approximate a function near a different point, you use the general Taylor series with a ≠ 0.

What are the convergence properties of a Taylor series?

A Taylor series converges when its partial sums approach a fixed value as you add more terms. The radius of convergence tells you how far from the center point the series stays reliable. Some functions like eˣ converge for all x, while others like ln(1 + x) only converge within a specific interval.

Can Taylor series be used in machine learning?

Yes - gradient descent uses a first-order Taylor approximation to determine each update direction, and XGBoost explicitly uses first- and second-order Taylor terms to fit each boosting tree. Activation functions such as the sigmoid can also be replaced by Taylor-based polynomial approximations on constrained hardware. Most practitioners use Taylor series daily without realizing it.

What are the limitations of using Taylor series?

Truncation error grows as x moves further from the center point, so approximations become less reliable far from a. Some functions only converge within a limited range, meaning the series breaks down entirely on inputs outside that interval. Second-order methods are more accurate but computing the full Hessian scales poorly for large models.