
AI coding tools have split into two distinct paradigms: agents that work while you sleep, and assistants that work while you type. OpenAI Codex and GitHub Copilot are prime examples of each.

In this article, I will break down the core philosophical and technical differences between the two tools. We will look under the hood of both platforms, comparing them head-to-head across their agentic capabilities, IDE integrations, codebase context handling, and pricing structures.

TL;DR: OpenAI Codex vs GitHub Copilot

The quickest way to differentiate the two tools is by looking at how they expect you to work.

| Feature | OpenAI Codex | GitHub Copilot |
| --- | --- | --- |
| Type | Autonomous Coding Agent | AI Pair Programmer & Multi-Agent Platform |
| Interface | CLI, desktop app, web interface | Native IDE integration (VS Code, JetBrains, etc.), CLI, web interface |
| Model Choice | OpenAI GPT models only | Multi-model picker (GPT, Claude, Gemini) |
| Unique Edge | Sandboxed execution and parallel task processing | Zero-friction IDE workflow and MCP integration |
| Best For | Asynchronous task delegation and large-scale refactoring | Real-time code suggestions and flow-state coding |
| Pricing Tiers | $20/mo (Plus), $100/mo or $200/mo (Pro tiers); limited access with Go ($8/mo) | $10/mo and $39/mo (Individual), $19/mo (Business), $39/mo (Enterprise) |

Your primary choice should be dictated by your immediate engineering needs. Some power users even adopt a hybrid approach.

What Is OpenAI Codex?

Before we look at the product, we have to untangle the terminology. "Codex" began as a specialized model family: the original engine behind the first iteration of GitHub Copilot.

While the underlying models have advanced (transitioning through GPT-5.3-Codex and increasingly relying on GPT-5.4 and GPT-5.5), OpenAI has repurposed the "Codex" name for a standalone, autonomous agent product.

Throughout this article, we refer to OpenAI Codex as the platform, not the model family. Whether you are interacting with it via the CLI, the desktop application, or the web interface, you are accessing the same core agentic service. We treat these as access points to the same underlying intelligence.

Today, OpenAI Codex functions as a heavy-duty autonomous coding agent. It spins up secure, sandboxed cloud environments, clones your repository, writes and tests the code, and then submits a Pull Request for your review.

Codex key features and capabilities

- Sandboxed cloud execution: Codex runs your code in isolated environments. This is critical for security and testing, as it allows the agent to execute potentially destructive scripts or complex migrations without touching your local machine.

- Parallel task handling: You can delegate multiple independent, long-horizon tasks simultaneously via the open-source Codex CLI or the desktop app.

- Context compaction & memories: For long-horizon tasks, Codex dynamically compresses its context window to maintain focus without exceeding token limits. It now includes the Chronicle feature, which allows the agent to carry useful context, developer preferences, and architectural learnings from one session to another.

- Adaptive reasoning effort: You can manually dial the agent's compute allocation from minimal (for quick scripts) to extra-high (for complex architectural refactors).
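To make the context compaction idea concrete, here is a minimal sketch of the general technique. It is not Codex's actual implementation, which this article does not describe: the `Message` type, the `compact` function, the word-count token estimate, and the placeholder summary are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def compact(history: list[Message], budget: int, keep_recent: int = 2) -> list[Message]:
    """Collapse older messages into one summary message when over budget."""
    total = sum(estimate_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    # Hypothetical summary: a real agent would ask a model to summarize `older`.
    summary = Message("system", f"[summary of {len(older)} earlier messages]")
    return [summary] + recent
```

The sketch keeps the most recent messages verbatim and replaces everything older with a single summary entry. It does not re-check the budget after compacting, so a production version would likely need to loop or shrink the summary further.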

The pros and cons of Codex

- Pros: Codex offers true end-to-end autonomy. Its ability to process tasks in parallel, carry persistent context via Chronicle memories, and verify its own output in a sandboxed environment makes it especially useful for large-scale delegation. It also offers a lower barrier to entry with a newly introduced $8/month starting plan for lighter usage.

- Cons: There is no real-time inline autocomplete; it requires a distinct mental shift toward task delegation rather than co-writing. Furthermore, while the $8/mo tier is accessible, developers running heavy parallel tasks will quickly need the higher-tier ChatGPT Pro plans ($100/mo or $200/mo) to avoid hitting tight compute ceilings.

To see Codex in action, I recommend taking a look at our tutorials showcasing how to use the Codex CLI for data workflow automation or integrating MCP servers.

What Is GitHub Copilot?

GitHub Copilot has changed dramatically from its origins as a simple inline autocomplete tool. Today, it is a multi-agent platform designed to live natively where you type.

However, Copilot’s rapid development into agentic workflows has fundamentally changed its compute demands. Long-running, parallelized sessions have strained its infrastructure, forcing GitHub to make drastic changes to its individual plan structures in Q2 2026 to maintain service reliability.

GitHub Copilot key features and capabilities

- Multi-model flexibility: Copilot is no longer locked strictly to one provider. The model picker now includes access to GPT-5.4 and GPT-5.5, as well as Anthropic’s Claude (with Opus 4.7 available on Pro+ plans) and Google's Gemini.

- Copilot Workspace & plan mode: Workspace uses a plan-review-execute model. You can use "plan mode" in VS Code or the CLI to improve task efficiency and reduce token consumption before the agent starts writing.

- Agent mode & /fleet: Copilot now supports multi-file edits and parallel workflows directly in the IDE, though these commands consume significant compute.

- MCP integration: Model Context Protocol (MCP) allows Copilot to securely interface with your external local developer tools and data sources.

The pros and cons of GitHub Copilot

- Pros: It provides zero-friction IDE integration (VS Code, JetBrains, Neovim) and has the lowest learning curve of any AI coding tool. The multi-model flexibility is a massive advantage over older versions, allowing you to route specific problems to the models best suited for them. Its deep integration with GitHub (PRs, issues, code search) is unparalleled.

- Cons: The recent implementation of strict session and weekly token limits, coupled with the pausing of new individual paid sign-ups, makes it difficult for heavy users to rely on it for complex tasks without upgrading to Pro+. Its agentic capabilities also remain less mature than Codex's sandboxed approach: Copilot lacks a built-in sandbox for independent execution, iteration, and testing.

For more information, check out our GitHub Copilot CLI tutorial and see our comparison guides on how Copilot stacks up against Claude Code and Cursor.

Codex vs GitHub Copilot: Head-to-Head Comparison

Despite sharing the same underlying engineering DNA, Codex and GitHub Copilot are fundamentally different products. Here’s a detailed comparison across various critical factors:

| Feature | OpenAI Codex | GitHub Copilot |
| --- | --- | --- |
| Product Type | Autonomous coding agent | AI pair programmer & multi-agent platform |
| Interface | Multi-interface platform (CLI, Desktop, Web) | Native IDE (VS Code, JetBrains, Neovim) |
| Model Choice | GPT-5.4, GPT-5.5, etc. | Multi-model (GPT-5.4/5.5, Claude 4.7, Gemini) |
| Agentic Skills | Sandboxed execution, parallel processing | Workspace, agent mode, |
| IDE Integration | Extension-based (Sidebar chat-agent, | Deep, zero-friction integration |
| Context Handling | Repo cloning + Chronicle memory | Enterprise org-wide indexing |
| Price (Individual) | Free, $8/mo (Base), $20/mo (Plus), $100-200/mo (Pro) | Free, $10/mo (Pro), $39/mo (Pro+) |
| Price (Team) | Pay-as-you-go pricing model | $19/mo (Business), $39/mo (Enterprise) |
| Security | Sandboxed execution isolation | IP indemnification, audit logs, policy controls |
| Best For | Task delegation, complex multi-file refactors | Real-time collaboration, flow-state coding |

1. Interface and workflow

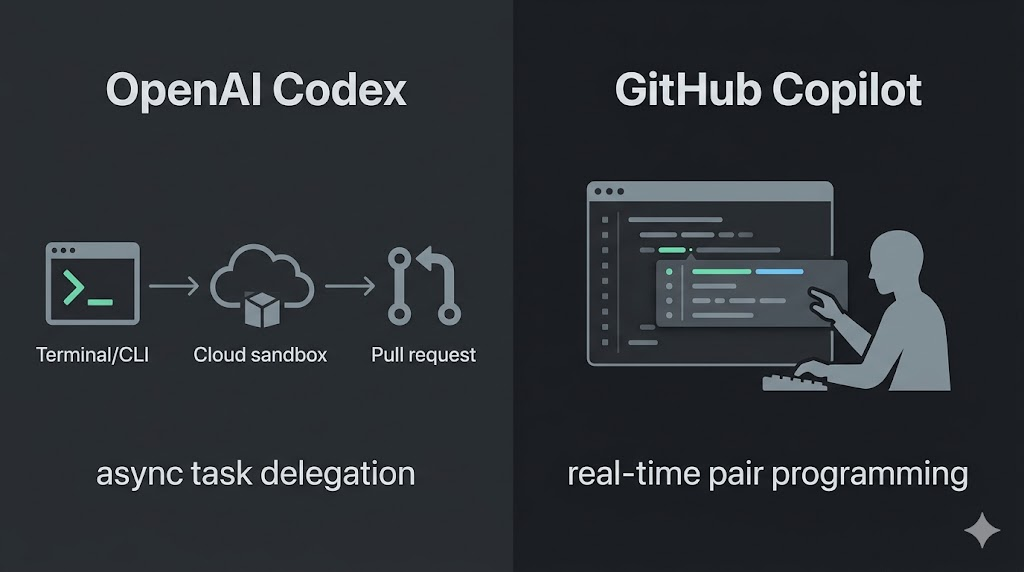

The core philosophical split between the two tools comes down to this:

- Codex is built for task delegation

- Copilot is built for real-time collaboration

To illustrate, imagine you need to refactor your application's authentication flow. With GitHub Copilot, you open your IDE, navigate to the relevant files, and begin collaborating. You use Copilot Chat when you are stuck, rely on inline suggestions as you type, and perhaps trigger Agent Mode for localized multi-file edits while you supervise.

With OpenAI Codex, the workflow looks more like managing a direct report. You describe the desired outcome via the Codex CLI interface or the desktop app, pass it the necessary repository context, and step away. Codex handles the refactoring in the background and eventually submits a Pull Request for you to review.

2. AI models and code generation

Today, Codex is heavily optimized around OpenAI’s latest GPT-5.4 and GPT-5.5 models, explicitly tuned for long-horizon software engineering tasks.

GitHub Copilot, on the other hand, embraces a "bring your own model" philosophy. Its multi-model picker allows you to switch between GPT-5.4, GPT-5.5, Google's Gemini, and Anthropic’s Claude (with Opus 4.7 available on Pro+ plans).

Both tools produce exceptionally strong code for routine tasks. The gap primarily appears on complex, multi-step problems:

- Copilot's multi-model flexibility lets you query different models to break a problem down

- Codex relies on its ability to autonomously execute and verify its own output, so the code has already been run and tested before it is presented to you

3. Agentic capabilities

This is where the divergence becomes most apparent. Codex is engineered from the ground up for true agentic autonomy. It uses secure, sandboxed cloud environments to run closed-loop test verification, and it natively supports parallel task processing so you can run multiple independent refactors simultaneously without freezing your machine.
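The delegation pattern behind parallel task processing can be sketched in a few lines. This is only an illustration of fanning out independent tasks and collecting the results, using local threads rather than cloud sandboxes. The `run_task` function is a hypothetical stand-in that does no real work, and nothing here calls an actual Codex API.

```python
from concurrent.futures import ThreadPoolExecutor


def run_task(description: str) -> str:
    # Hypothetical stand-in for handing one task to an agent;
    # it only returns a status string.
    return f"done: {description}"


def delegate_all(tasks: list[str], max_workers: int = 4) -> list[str]:
    """Run independent tasks concurrently and return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_task, tasks))
```

The key property is that the tasks are independent: none of them needs another's output, so they can run side by side and be reviewed separately afterwards.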

GitHub Copilot has aggressively entered the agentic space with Copilot Workspace (with a plan-review-execute model) and IDE-based Agent Mode with commands like /fleet.

However, as mentioned earlier, these parallel workflows consume significant compute and often push Copilot users into restrictive token limits. More importantly, Copilot does not have independent, isolated code execution. It requires your local environment to run and test its outputs, making Codex a more robust choice for safe, hands-off, multi-file autonomy.

4. IDE integration and setup

For a daily, in-the-zone coding flow, GitHub Copilot’s integration is unmatched. It is natively embedded in VS Code, JetBrains, and Neovim, and offers a frictionless tab-to-accept workflow that feels like a natural extension of your keyboard.

OpenAI Codex, meanwhile, has moved beyond its origins as a web-only agent. It now features a robust IDE extension (available for VS Code and JetBrains) that brings its autonomous capabilities directly into your editor sidebar.

While it isn't an "inline autocomplete" tool in the same sense as Copilot, it allows you to chat, reference files using @ syntax, and delegate complex tasks to run in the background without leaving your IDE. For background task delegation and multi-file reasoning, Codex’s ability to "think" while you continue working in your editor is a significant operational advantage.

5. Codebase context and understanding

Context is everything in AI-assisted coding. For large organizations with massive, complex codebases, GitHub Copilot Enterprise holds a distinct advantage with its persistent, org-wide repository indexing. It natively understands how your microservices interact simply because it has access to the entire organizational graph.

Codex handles context differently. It clones your repository fresh for each active session to build a deep understanding of the codebase it is operating on. Previously, Codex would "forget" everything once the session ended.

However, with the newly introduced memory management feature known as Chronicle, Codex can now persistently carry critical architectural context, developer preferences, and complex learnings from one session to another.

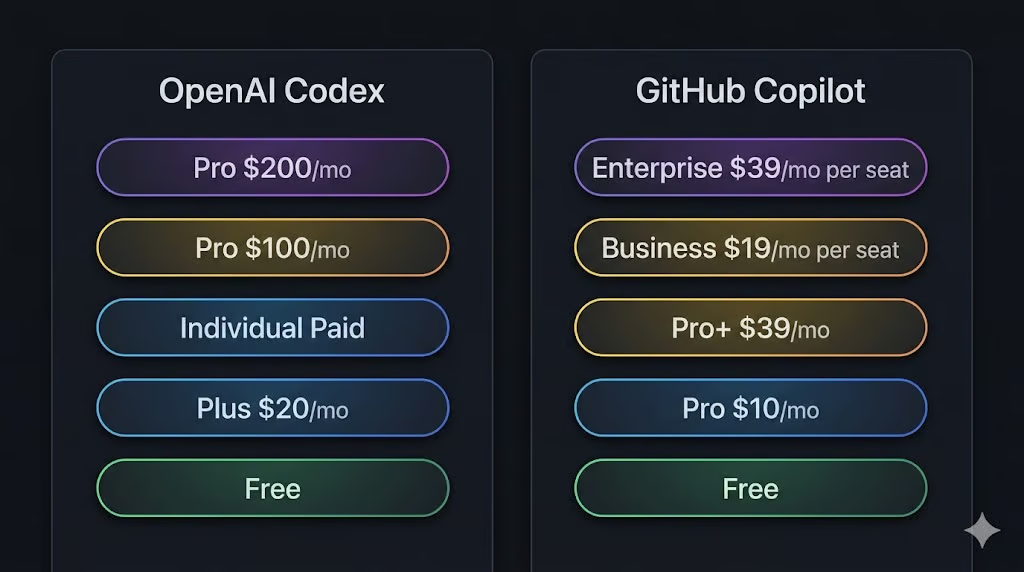

6. Pricing and plans

GitHub Copilot operates on a predictable per-seat pricing model. For individuals, there are three tiers:

- Free: $0/mo

- Pro: $10/mo

- Pro+: $39/mo

For teams, the organizational tracks are:

- Business: $19/mo

- Enterprise: $39/mo

Because agentic workflows have driven compute costs prohibitively high, often resulting in a handful of requests exceeding the price of an individual subscription, GitHub has taken aggressive action. If you are looking to sign up as an individual today, you will encounter a waitlist. New sign-ups for Copilot Pro, Pro+, and Student plans are currently paused to protect the experience of existing customers.

For those already on the platform, strict session and weekly (7-day) token limits have been implemented. These limits are dictated by token consumption and specific "model multipliers." If you run parallel workflows (like the /fleet command), you will burn through your token allotment rapidly.
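To see why multipliers matter, consider a rough sketch of how weighted usage might add up. The exact formula GitHub uses is not given here, so this assumes the simplest reading: each request's tokens are scaled by its model's multiplier and counted against one limit. The multiplier values and model labels below are hypothetical, not GitHub's published figures.

```python
def weighted_usage(requests: list[tuple[str, int]], multipliers: dict[str, float]) -> float:
    """Sum the tokens of each request, scaled by that model's multiplier."""
    return sum(tokens * multipliers[model] for model, tokens in requests)


def remaining(limit: float, requests: list[tuple[str, int]], multipliers: dict[str, float]) -> float:
    """Return the unused part of the allotment, never below zero."""
    return max(0.0, limit - weighted_usage(requests, multipliers))


# Hypothetical multipliers: a premium model counts three times as much.
example_multipliers = {"standard": 1.0, "premium": 3.0}
example_requests = [("standard", 1000), ("premium", 2000)]
```

Under these assumed numbers, 2,000 tokens on the premium model consume six times as much of the allotment as 1,000 tokens on the standard one, which is why parallel runs on expensive models exhaust a weekly limit so quickly.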

To improve transparency, VS Code and the Copilot CLI now display real-time usage warnings when you approach your limit. Users who hit their cap will default to standard models until their weekly period resets, though upgrading to Pro+ offers over 5X the limits of the standard Pro plan.

OpenAI Codex is structured around compute capacity rather than per-seat SaaS predictability. It offers a highly accessible Free tier for developers who want to test it out, followed by an $8/mo starting plan for light usage. Both are very limited and suitable only for trying out Codex.

Beyond that, it scales with standard ChatGPT subscriptions:

- Plus: $20/mo (offers limited task execution)

- Pro tiers: $100/mo and $200/mo (provide the massive compute required for serious, parallelized software engineering)

The trade-off is straightforward: Copilot is far easier to budget for across an organization, while Codex gives you more granular entry points. It is entirely free to start, but it becomes noticeably more expensive for power developers running heavy agentic workloads.
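The budgeting side of this trade-off is simple arithmetic, sketched below using the Copilot team prices quoted in this article. The function name and the team size in the usage note are illustrative assumptions. Codex team costs are not modeled because pay-as-you-go spend depends on usage figures this article does not provide.

```python
# USD per seat per month, as quoted in this article.
COPILOT_SEAT_PRICES = {"Business": 19, "Enterprise": 39}


def annual_seat_cost(plan: str, seats: int) -> int:
    """Yearly cost in USD for a flat per-seat plan."""
    return COPILOT_SEAT_PRICES[plan] * seats * 12
```

For a hypothetical 10-person team, `annual_seat_cost("Business", 10)` comes to $2,280 per year and `annual_seat_cost("Enterprise", 10)` to $4,680, figures that stay fixed regardless of how heavily the team uses the tool.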

7. Security and compliance

GitHub Copilot Enterprise leans heavily into traditional corporate compliance. It offers:

- Comprehensive IP indemnification

- Extensive audit logging

- Strict org-wide policy controls that integrate with your existing GitHub administration.

Codex approaches security through architecture rather than policy. Its sandboxed execution isolation ensures that potentially destructive operations (like database migrations or dependency overhauls) cannot damage your local environment or production servers.

However, the compliance setup, such as managing API keys and maintaining audit trails, largely falls on your engineering team rather than being handled out of the box.

Should You Choose Codex Or GitHub Copilot?

At the end of the day, picking between OpenAI Codex and GitHub Copilot is rarely a debate about which AI model is "smarter." Instead, it is a calculation of how you and your team prefer to work.

Do you need an assistant to draft boilerplate while your hands are on the keyboard, or do you need a highly capable agent to tackle heavy refactoring without you supervising it?

You should choose Codex if...

- You want to delegate entire features end-to-end. If your goal is to describe a desired outcome, hand over the necessary context, and simply review a finished Pull Request, Codex is built exactly for this. It thrives on autonomous, hands-off execution.

- You need parallel task processing. For developers who regularly juggle multiple complex issues, the ability to fire off several independent tasks simultaneously, knowing they are running safely in isolated cloud sandboxes, is a game-changer. It prevents your local machine from freezing under the weight of heavy compute tasks.

- You work in terminal/CLI-first environments. If your workflow revolves around the command line, or if you are looking to build custom developer tooling and CI/CD pipelines, Codex’s CLI interface and robust standalone desktop apps (available on both macOS and Windows) provide the exact separation you need.

- You are already paying for ChatGPT Pro. If you are already shelling out $100 or $200 a month for one of the higher-tier ChatGPT Pro subscriptions to access massive compute limits, funneling your coding tasks through Codex is the absolute best way to maximize the value of that investment. And if you aren't, the Free or $8/mo starting tiers make it quite easy to test the waters.

You should choose GitHub Copilot if...

- You want AI suggestions inside your IDE with zero setup friction. If you prioritize staying in your flow state, Copilot remains the industry standard for these workflows. Its native integration into VS Code, JetBrains, and Neovim means you get lightning-fast, tab-to-accept suggestions right where you are already typing.

- You value switching between AI model providers mid-session. Copilot’s multi-model picker is a massive advantage for developers who know that different models excel at different things. Toggling from GPT-5.5 to Anthropic’s Claude Opus 4.7 or Google’s Gemini 3.1 to get past a specific roadblock can be helpful.

- You need predictable per-seat pricing for a team. Managing software budgets is complicated enough. While individual sign-ups are currently paused, organizations can rely on Copilot’s Business ($19/mo) and Enterprise ($39/mo) tiers to scale their AI adoption without worrying about erratic, compute-based billing spikes at the end of the month.

- You are already using the GitHub ecosystem. If your organization relies exclusively on GitHub Issues, Pull Requests, and Actions, Copilot Enterprise’s ability to natively index your entire organizational codebase and integrate directly into your existing PR workflows is a major differentiator.

Final Thoughts

Choosing between OpenAI Codex and GitHub Copilot depends on how you and your engineering team actually work.

The guiding principle to remember is this:

- Codex is built for delegation.

- Copilot is built for collaboration.

If you want an intelligent pair programmer to catch your typos, unblock you with inline suggestions, and let you toggle between the world's best foundational models mid-session, GitHub Copilot has an advantage. But if you want to hand off a multi-file architecture update to an autonomous agent and simply review the Pull Request over your morning coffee, OpenAI Codex is the tool you need.

If you want to get started, I recommend enrolling in our AI for Software Engineering skill track, which teaches you how to use GitHub Copilot and similar AI tools.

Codex vs GitHub Copilot FAQs

Can I use Codex and GitHub Copilot together?

Yes, and many power users do it. Because Codex largely operates outside the IDE via its CLI, desktop apps, or web interface, it does not conflict with GitHub Copilot running locally in VS Code or JetBrains. You can have Copilot autocomplete your current function while Codex simultaneously runs a complex, multi-file database migration in a parallel cloud sandbox.

Is Codex the same model that powers GitHub Copilot?

Not anymore. This is a common point of confusion. "Codex" originally referred to the OpenAI model family that powered the first version of Copilot. Today, Codex is OpenAI’s standalone, autonomous coding agent product. Meanwhile, GitHub Copilot is a multi-agent platform that utilizes a variety of different models, including different versions of OpenAI's GPT, Anthropic’s Claude, and Google’s Gemini models.

Which tool is better for autonomous, hands-off coding tasks?

OpenAI Codex is significantly better for hands-off tasks. Its ability to spin up isolated, sandboxed cloud environments means it can independently write, execute, and verify code without risking your local setup. Paired with its ability to process tasks in parallel and retain context via its Chronicle memory feature, Codex is the premier choice for true task delegation.

Which has better pricing for teams?

GitHub Copilot is best if you want a predictable monthly bill because it uses flat per-seat pricing at $19 or $39. OpenAI Codex is more flexible since it uses a pay-as-you-go model where you only pay for what you actually use.

Why is there a waitlist for GitHub Copilot, and is OpenAI Codex a viable alternative?

As of Q2 2026, GitHub has paused new individual sign-ups to manage the extreme compute demands of agentic features like /fleet. OpenAI Codex serves as a decent alternative with a more agentic focus for developers on the waitlist.