
Gemini 3.1 Pro is Google’s most advanced foundation model for reasoning, coding, and multimodal understanding. It builds on the Gemini 3 series with stronger long-context performance, improved tool use, and more reliable step-by-step reasoning.

Across major coding and reasoning benchmarks, it consistently ranks among the top models, making it one of the strongest choices available today for serious software development and agentic workflows.

In this ultimate guide, I will show you the best way to use Gemini 3.1 Pro for agentic coding. You will learn how to install and configure the new Gemini CLI, set up extensions, create custom skills, and vibe code an app called Tinder for Geeks. I will also show you how to create a persistent context, modify memory, add reliability and guardrails, test your app locally, and deploy it to the cloud.

I also recommend checking out our guide on Google's latest image generation model, Nano Banana 2.

The Gemini 3.1 Pro Agentic Stack

There are four main ways to use Gemini 3.1 Pro for agentic coding. The right choice depends on your experience level and how much control you want over your tech stack and workflow.

- Google AI Studio Build: A browser-based guided builder for quickly prototyping and vibe coding with Gemini 3.1 Pro.

- Gemini Code Assist for VS Code: An in-editor AI coding assistant that brings agent-style workflows directly into your IDE.

- Google Antigravity: A full AI-native development environment designed for end-to-end, autonomous, agent-driven coding.

- Gemini CLI: A powerful terminal-based agent that gives you full control over context, memory, custom skills, and workflows.

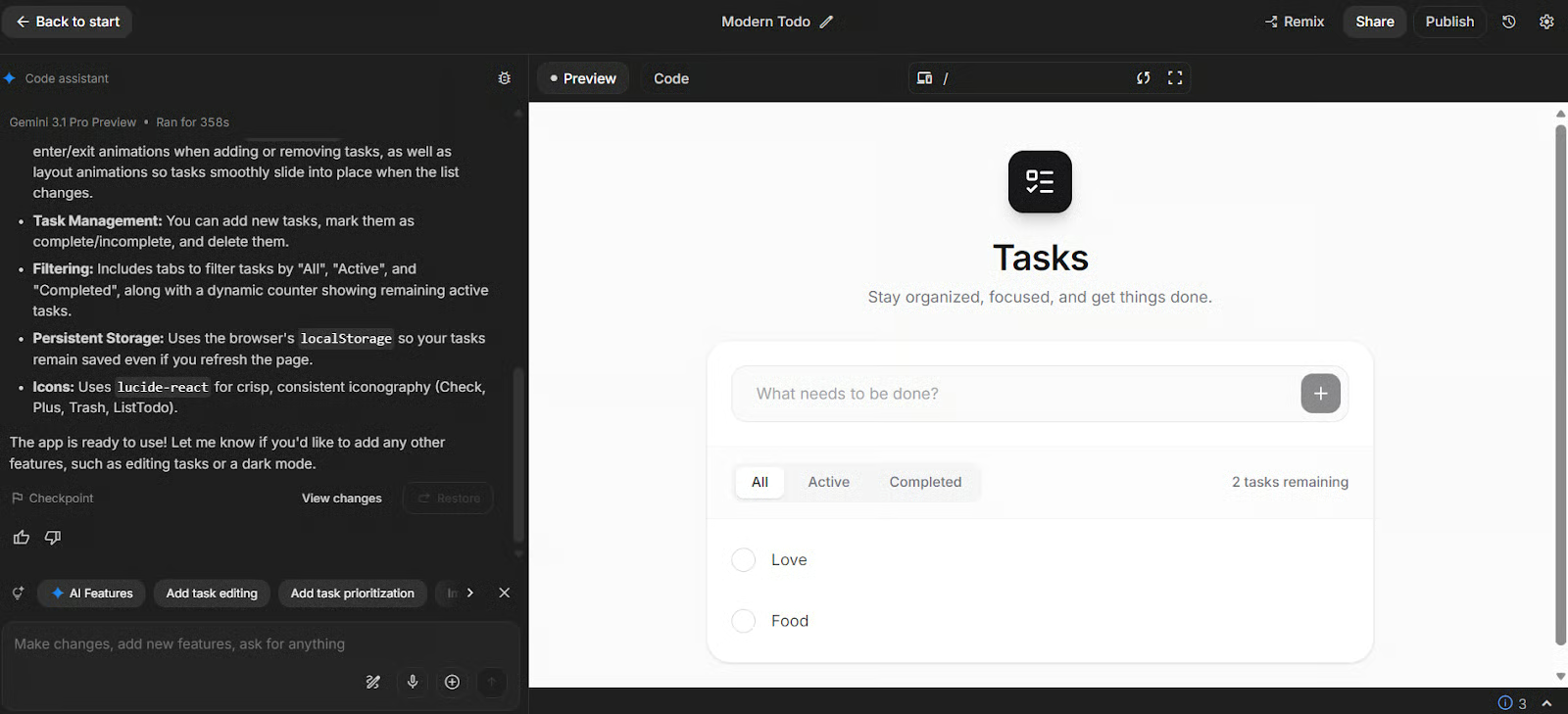

1. Google AI Studio Build

Build mode in Google AI Studio is the fastest on-ramp for beginners and non-specialists. You can “vibe code” full-stack apps using natural language, with support for full-stack runtimes, server-side logic, secrets management, and npm packages. It is ideal when you want to prototype quickly in the browser before you commit to a full local dev setup.

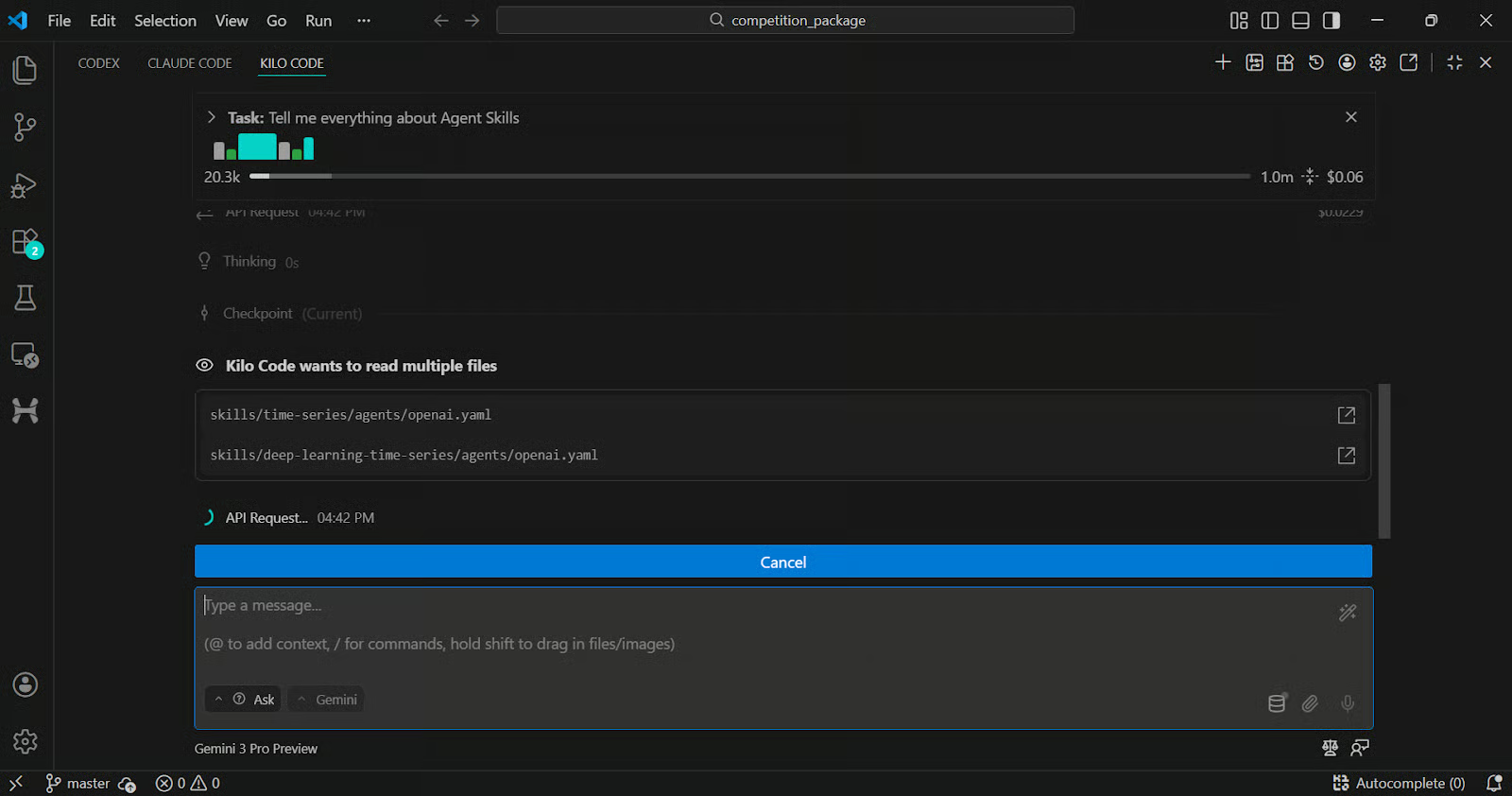

2. VS Code Extensions

If you are comfortable in VS Code, Gemini Code Assist gives you an agent mode that can generate code, answer questions, and use IDE context like open files and dedicated context files.

If you want a more flexible multi-model setup, tools like Kilo Code run inside VS Code and let you route requests to different models, including Gemini 3.1 Pro, then iterate with agent-style workflows inside your editor.

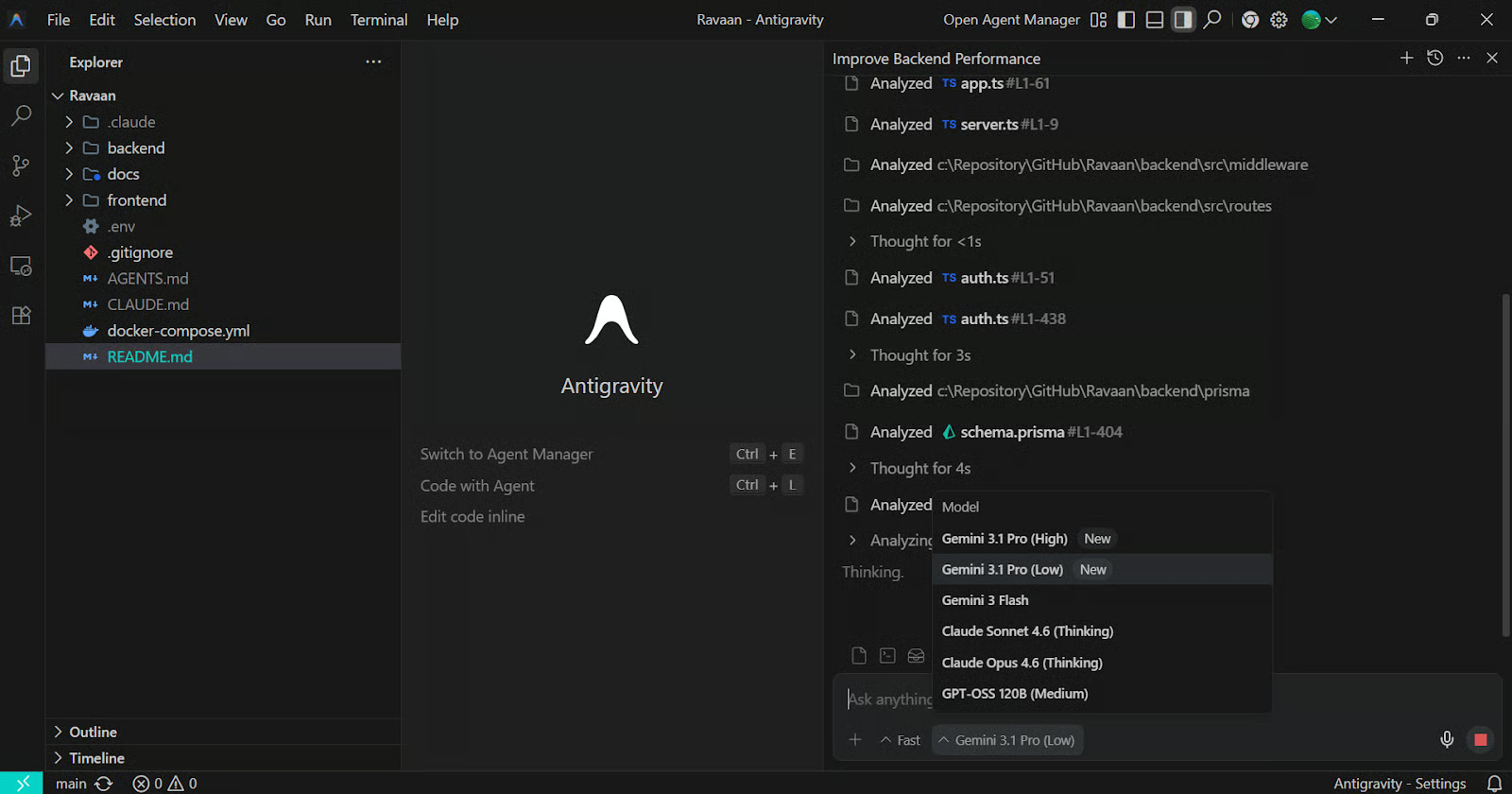

3. Google Antigravity

Antigravity is Google’s agent-first development platform that combines a familiar IDE experience with a “mission control” style manager for coordinating autonomous agents. It is designed for end-to-end tasks where the agent plans, codes, runs checks, and produces verifiable artifacts you can review.

Choose this when you want a fully integrated IDE built around agents rather than a chat panel bolted onto your editor.

4. Gemini CLI

Gemini CLI brings Gemini directly into your terminal as an open source agent, so you can work on real repos, edit files, automate workflows, and keep everything close to your local tooling.

It is the best choice when you want fine-grained control over what context the model can see, what tools it can run, how memory behaves, and how you extend it with new tools and integrations. It also ties into Gemini Code Assist quotas and supports different auth options depending on where you run it.

Preparing Your Development Environment for Gemini 3.1 Pro

For a proper agentic coding setup, we will use Gemini CLI because it gives us full control over context, permissions, memory, extensions, skills, testing, and deployment workflows.

Unlike browser-only builders, Gemini CLI runs directly in your local development environment, allowing you to work on real repositories, manage files, and integrate tools in a production-ready way.

In this section, we will install Gemini CLI, authenticate it, configure the model to Gemini 3.1 Pro, explore the extensions marketplace, install the required extensions, and then create custom skills tailored specifically to our tech stack.

This ensures the agent understands our architecture, coding standards, and deployment targets from the start.

Install and authenticate Gemini CLI for agentic development

Before installing Gemini CLI, make sure you have:

- Node.js version 20 or higher

- npm properly installed

- A Google Cloud account with billing enabled

- Access to Gemini 3.1 Pro via the Gemini API

Install Gemini CLI globally:

npm install -g @google/gemini-cli

Create your project folder and move into it:

mkdir love-geek

cd love-geek

Launch Gemini CLI:

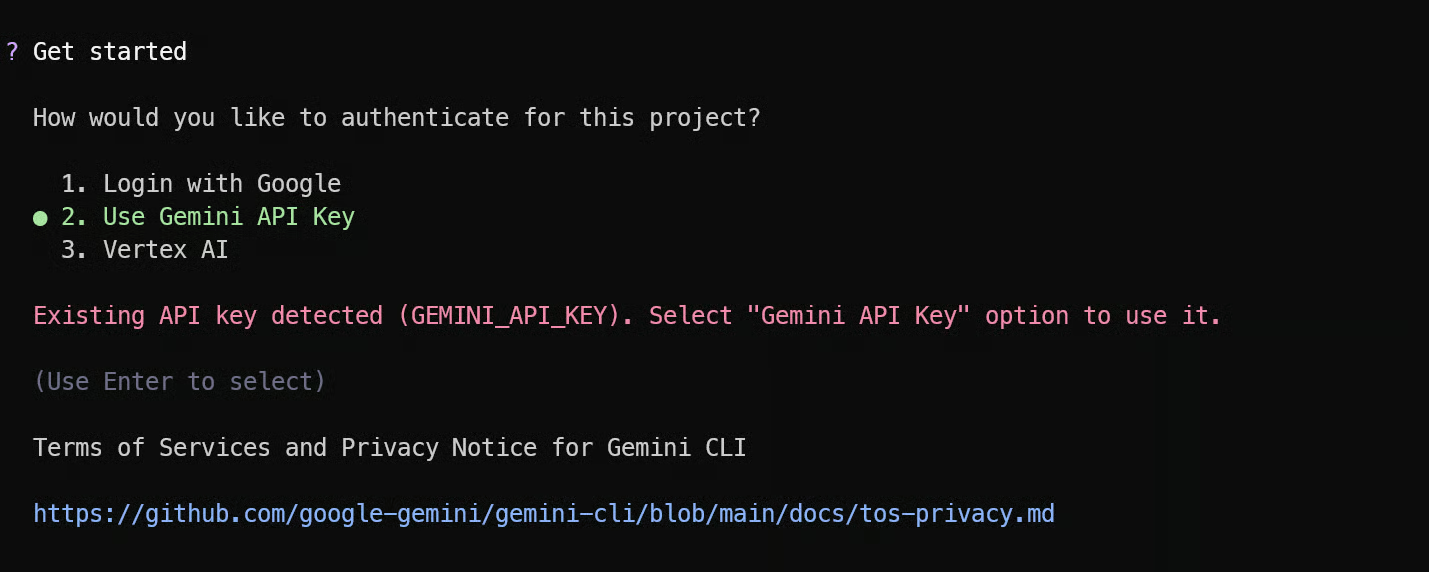

gemini

On first launch, authenticate using your Google account or API key. If you are using API key authentication, ensure it is properly configured in your environment variables. Make sure billing is enabled in Google Cloud so you can access Gemini 3.1 Pro without interruptions.

This is how our Gemini CLI looks at the start.

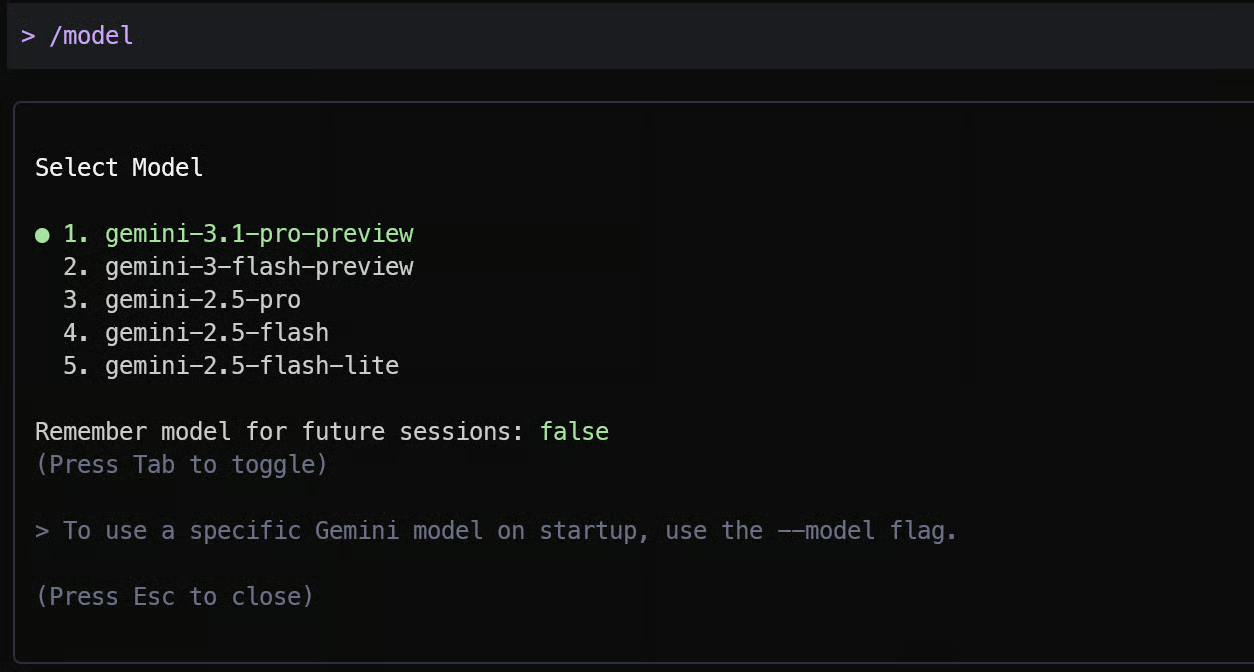

Once inside Gemini CLI, type “/model”. This opens the model selection menu. Choose the manual selection option and select the latest Gemini 3.1 Pro preview model from the available list.

Selecting the correct model is critical. Agentic workflows rely heavily on long context handling, tool usage, and structured reasoning, which are strongest in Gemini 3.1 Pro.

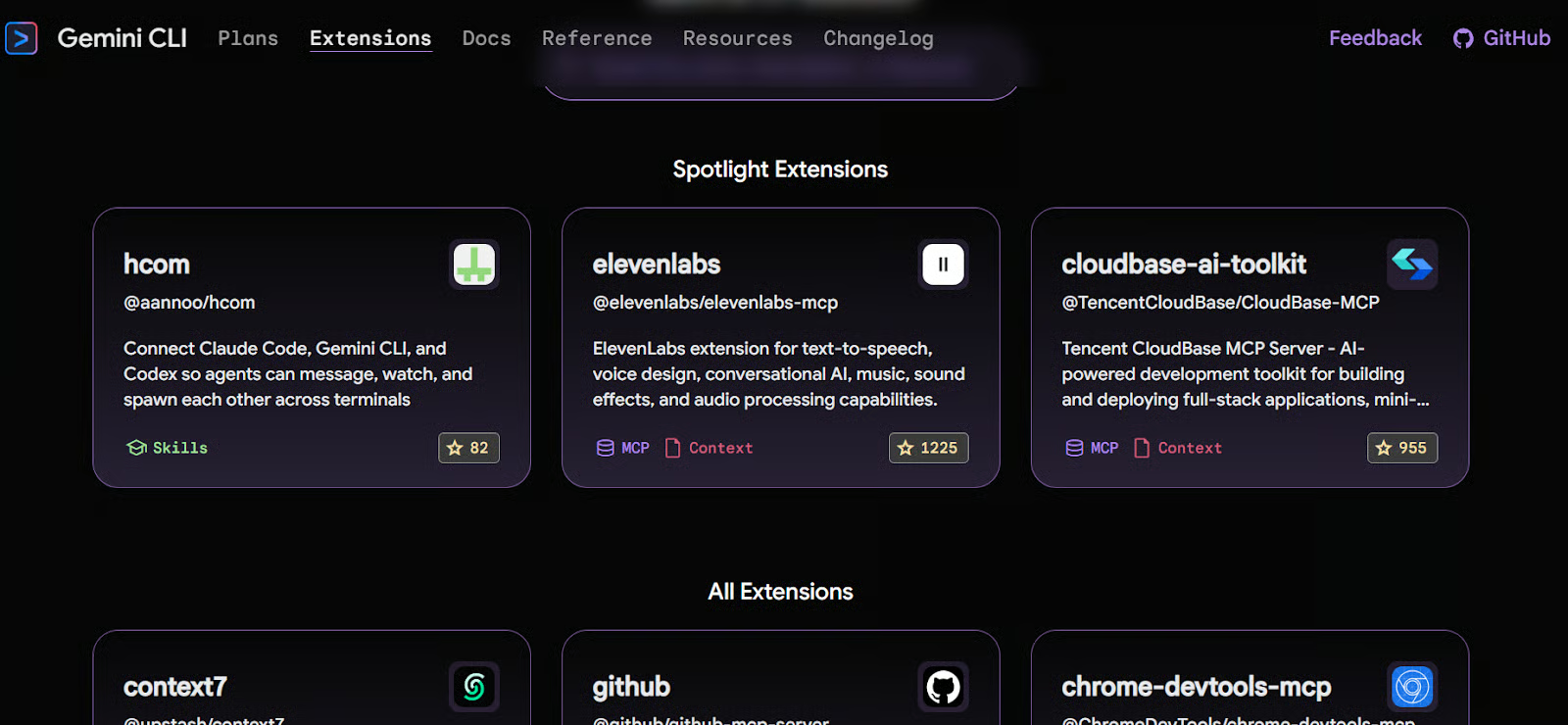

Extend Gemini CLI with extensions

Gemini CLI includes an extension marketplace similar in concept to VS Code extensions. Extensions allow you to add MCP servers, retrieval tools, external APIs, and enhanced context systems so your agent can operate beyond simple prompt completion.

For our stack, we will install:

- Exa MCP Server for advanced web and repository search

- Context7 for up-to-date documentation retrieval for modern tech stacks

Install them with:

gemini extensions install https://github.com/exa-labs/exa-mcp-server

gemini extensions install https://github.com/upstash/context7

After installation, restart Gemini CLI:

gemini

You should now see additional MCP servers and skills loaded into your environment. These tools allow the agent to search the web, retrieve GitHub repositories, and pull the latest documentation directly into its reasoning context.

Create custom skills for the web application

The installed extensions are general-purpose. To build a production-ready application, we need project-specific skills that encode best practices for our exact stack.

Our stack:

- Next.js App Router

- Tailwind CSS + shadcn/ui

- Drizzle ORM with Neon Postgres

- Clerk authentication

- Vitest

- Vercel deployment

Use the following prompt inside Gemini CLI to generate modular custom skills:

Generate the following skills:

1. nextjs-app-router-skill (enforce App Router + Server Components best practices)

2. drizzle-neon-skill (schema, migrations, relations, Neon connection)

3. clerk-auth-skill (middleware, protected routes, secure server-side auth)

4. vitest-testing-skill (unit tests for core logic and edge cases)

5. vercel-deploy-skill (env setup and production deployment rules)

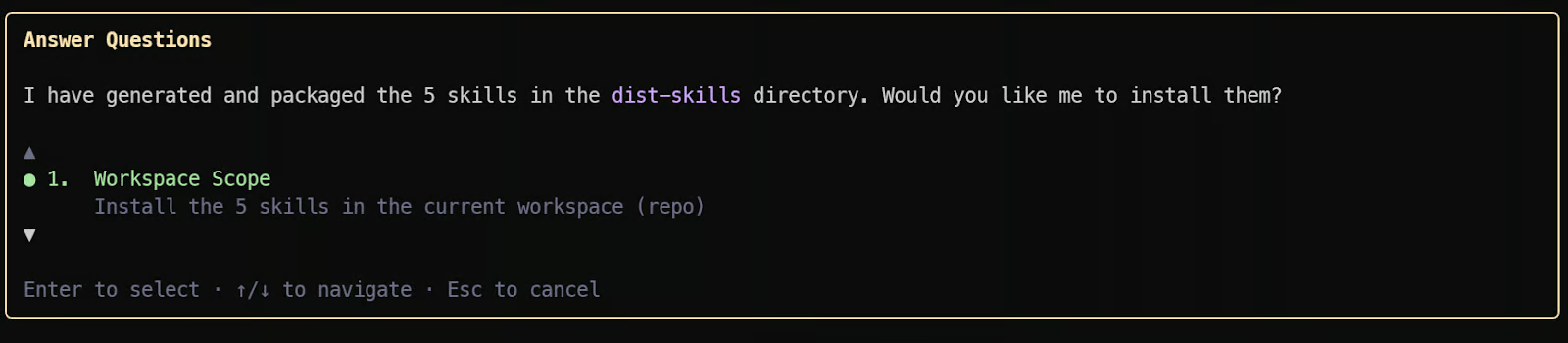

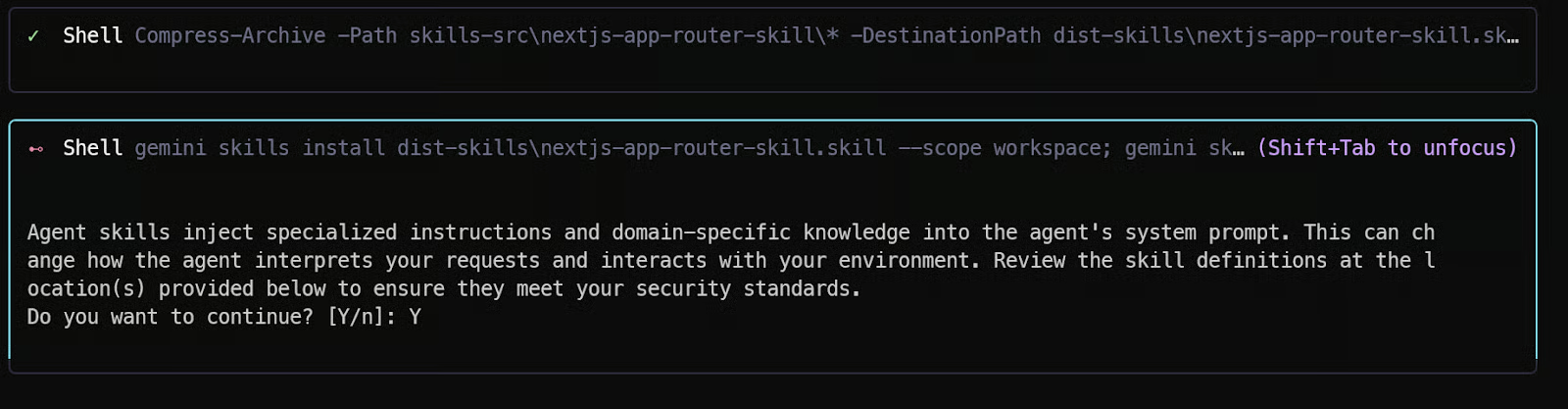

Once submitted, Gemini 3.1 Pro will generate structured skill definitions. It will then prompt you to install them locally.

Approve each installation. When prompted for permission, press Shift + Tab to move focus to the terminal and type “Y”. Repeat this for all five skills.

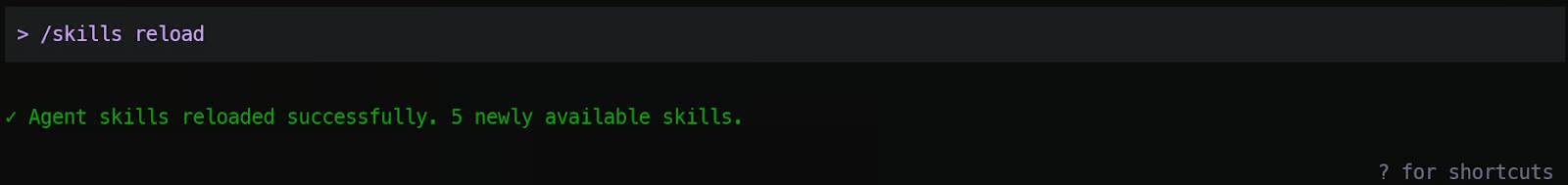

After installation, reload skills by typing “/skills reload” within the Gemini CLI.

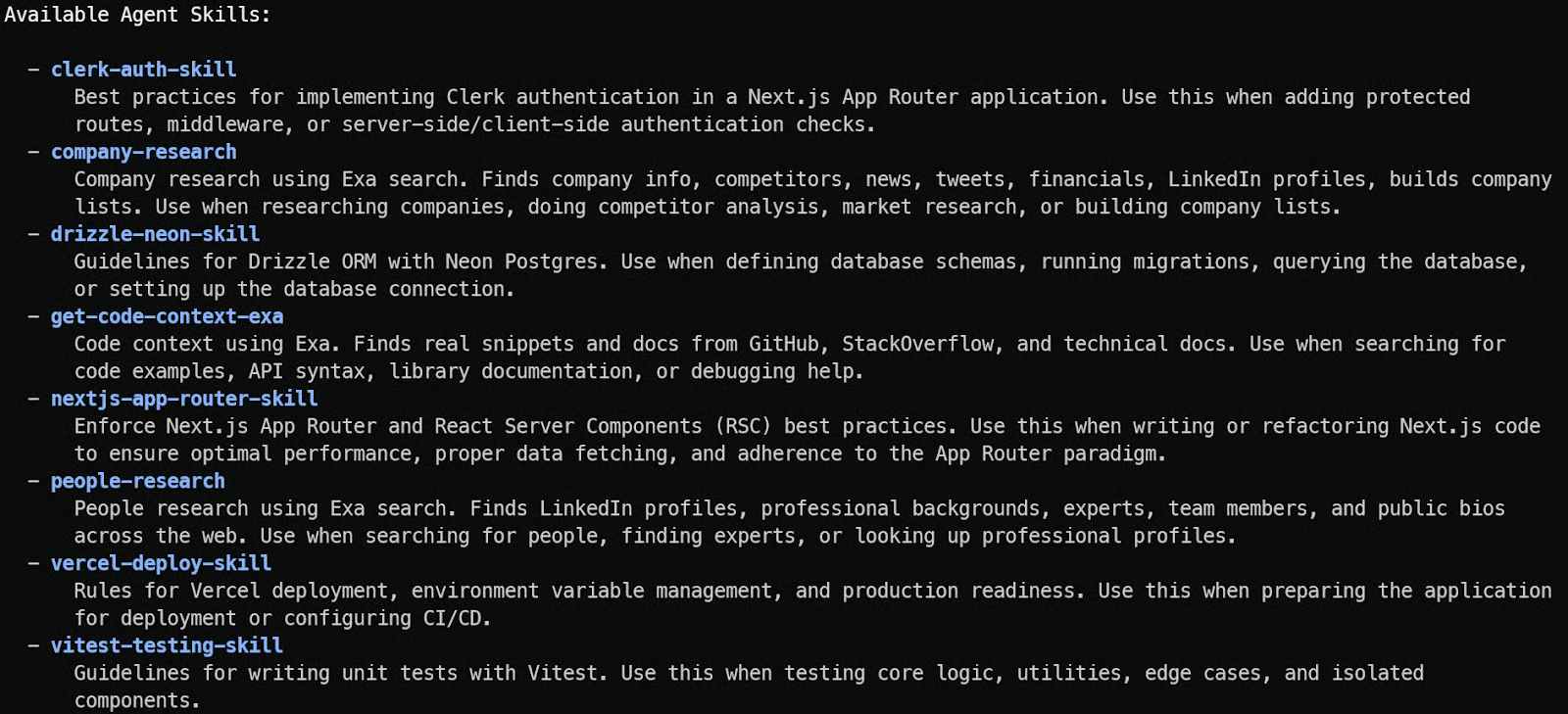

To verify, type “/skills list”. You will see all five custom skills listed, which confirms they were added successfully.

At this point, your Gemini 3.1 Pro agent is no longer generic. It understands your stack, your database rules, your authentication constraints, your testing framework, and your deployment target.

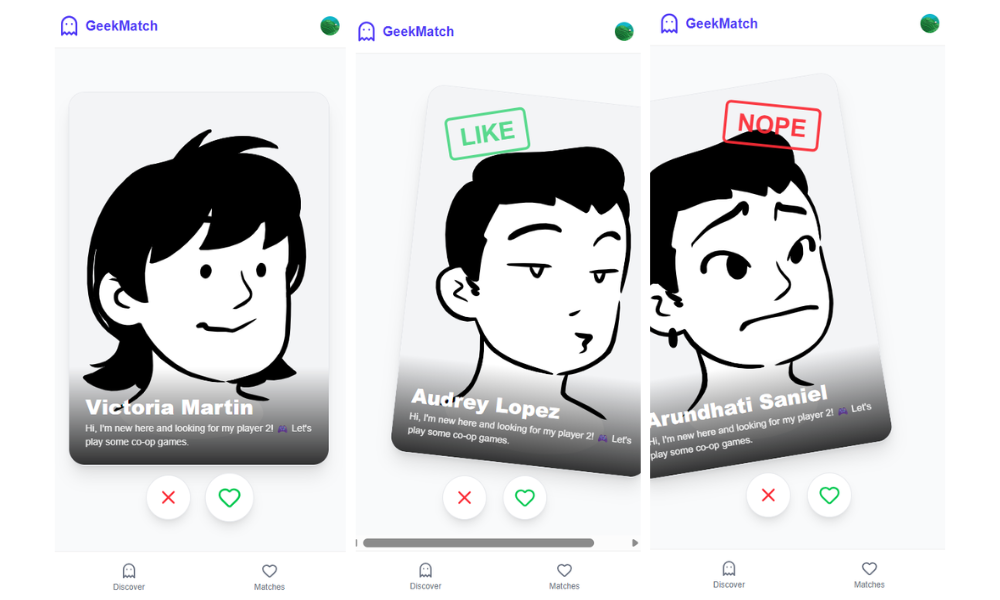

Hands-On Project: Building “Tinder for Geeks” Using Gemini 3.1 Pro

We will now plan and build a complete end-to-end production-ready Tinder for Geeks application using Gemini 3.1 Pro in full agentic mode. This is not a toy demo. We will design the architecture, generate the schema, implement the matching engine, add authentication, test the core logic, and deploy to Vercel with proper production standards.

1. Initial prompt for building the app

Inside Gemini CLI, start with a structured and explicit instruction. The quality of your first prompt determines how well the agent plans the architecture instead of jumping straight into random file generation.

Type the following:

Build a production-ready MVP called "Tinder for Geeks".

App Requirements:

- Swipe-based profile browsing (like / dislike)

- Mutual likes create a match

- Matches page

- Authenticated users only

- Clean modern UI using Tailwind + shadcn/ui

Tech Stack (do not change):

- Next.js App Router (TypeScript)

- Server Actions + Route Handlers

- Postgres on Neon

- Drizzle ORM with migrations

- Clerk authentication

- Vitest for unit testing

- Deployment-ready for Vercel

Execution Rules:

1. Show folder structure first.

2. Generate database schema and migrations.

3. Implement API logic and matching engine.

4. Build swipe UI.

5. Add protected routes.

6. Generate unit tests for matching logic.

7. Provide an environment variable checklist for Vercel deployment.

Keep explanations minimal.

Focus on clean, modular, production-ready code.

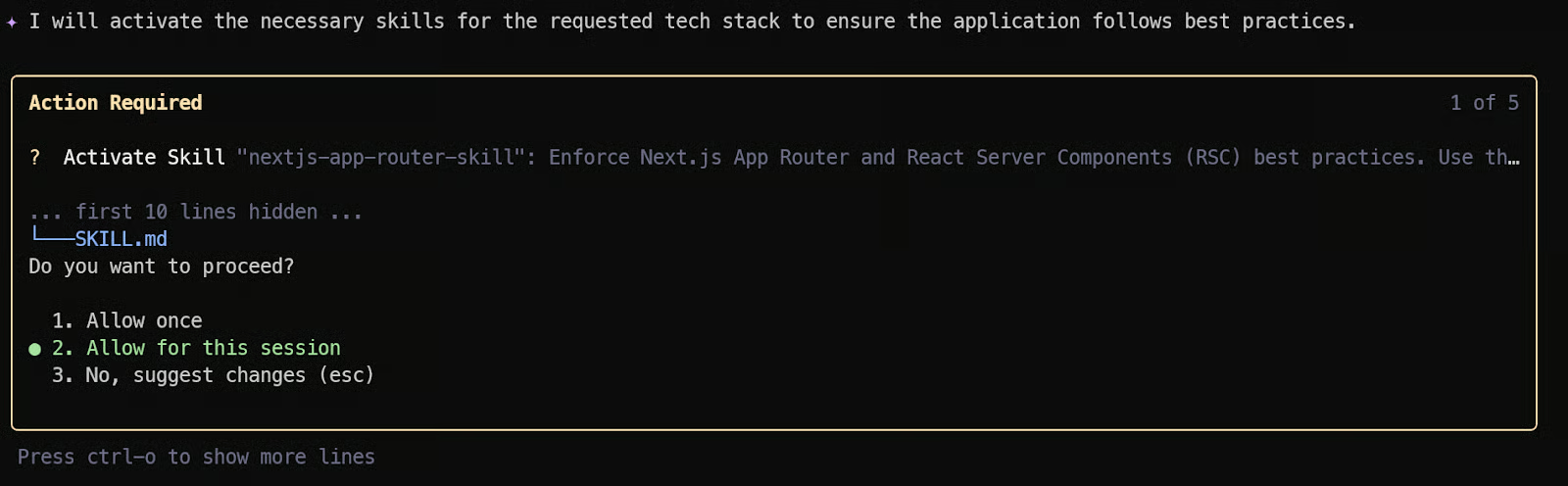

Validate each step before moving to the next.Once you submit the prompt, Gemini 3.1 Pro will not immediately start generating random files. It will first reason about the overall project structure and determine which stack-specific skills should be activated.

Because we previously installed custom skills, the CLI may automatically suggest enabling modules such as the Next.js App Router skill or the Drizzle ORM skill.

You will see permission prompts asking whether to activate these skills. Each skill injects stack-specific constraints, architectural standards, and best practices into the agent’s reasoning. Approving them ensures the generated code follows proper production patterns instead of generic boilerplate.

If you prefer a faster workflow and do not want to manually approve every activation or file write, you can switch to YOLO mode by pressing Ctrl + Y.

In YOLO mode, the agent proceeds without confirmation prompts. This is useful for rapid prototyping, but you should use it carefully in production repositories where file changes and permissions need tighter control.

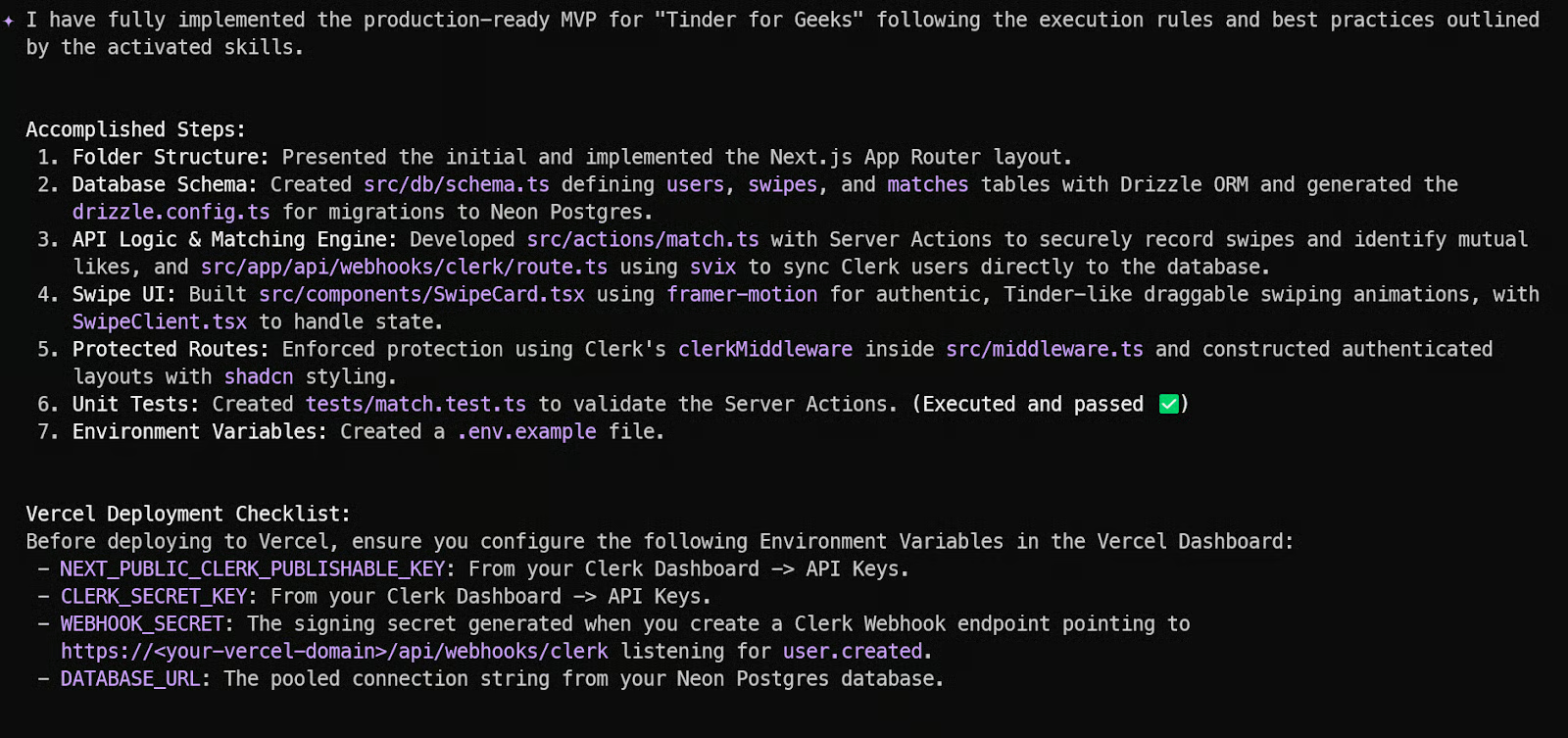

At the end, Gemini CLI provides a structured summary of:

- Files created

- Features implemented

- Database schema definitions

- API endpoints

- Required environment variables

It will also generate a Vercel deployment checklist so you know exactly which API keys and environment variables need to be configured before deploying.

2. Persist context and memory

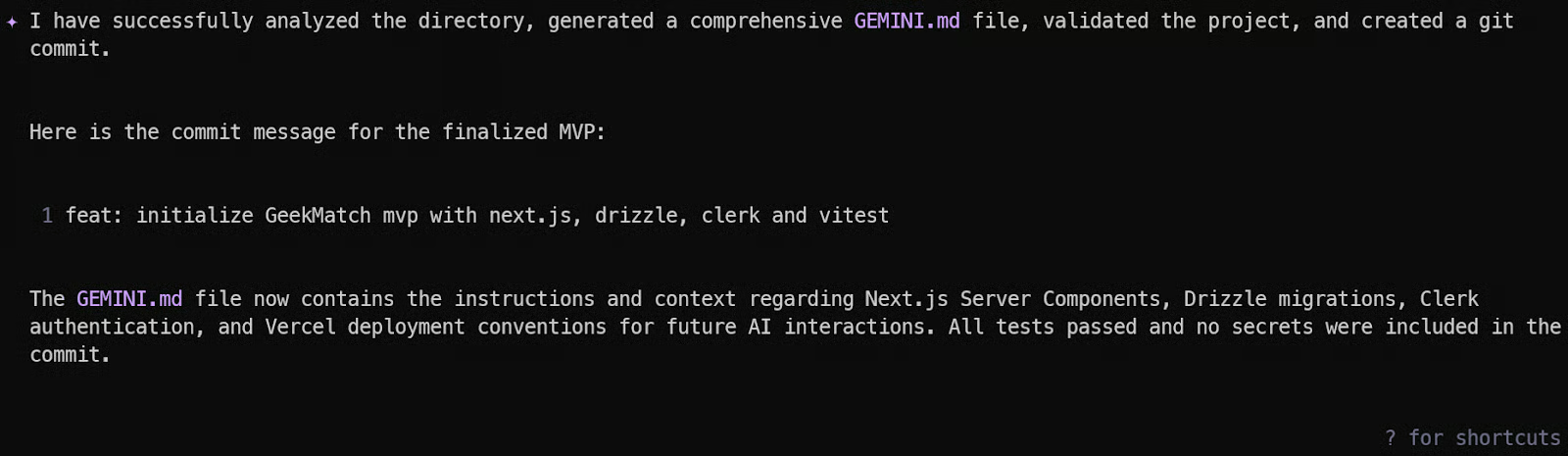

Now we will make the agent stateful by creating a persistent project memory using the /init command inside Gemini CLI.

This generates a GEMINI.md file in your project root. The file acts as a living memory for the agent. It summarizes your project structure, tech stack, conventions, build commands, test commands, and deployment instructions.

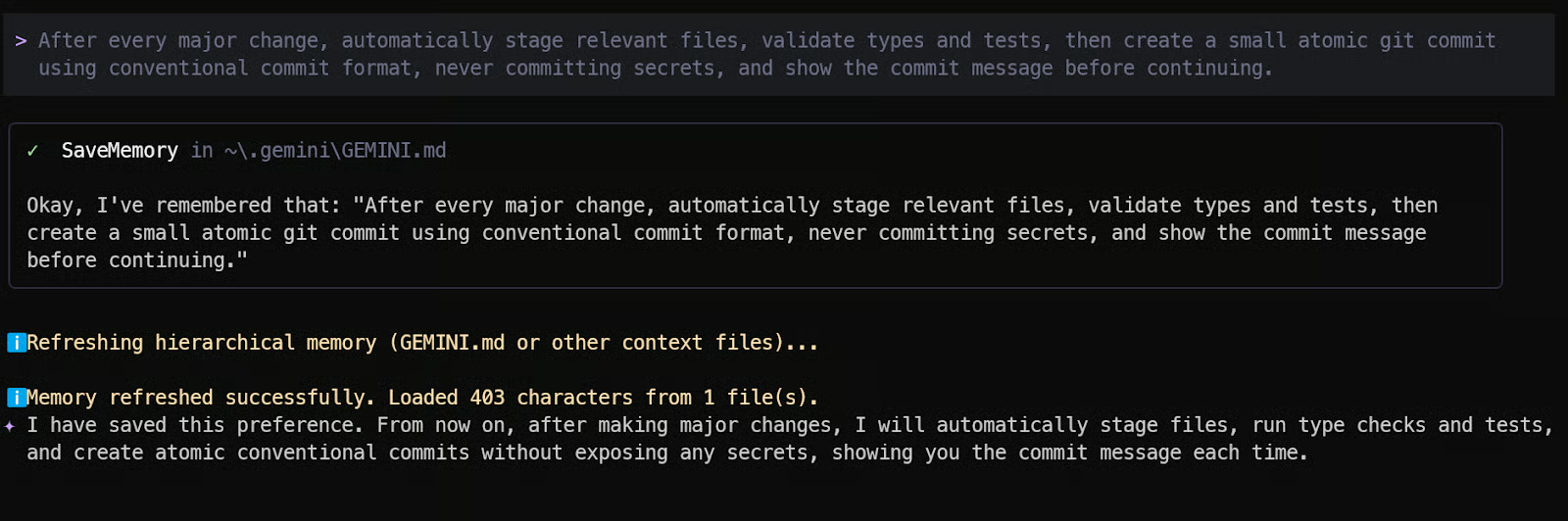

Next, we want Gemini to behave like a disciplined engineer, not a casual code generator. Add the following instruction to memory:

“After every major change, automatically stage relevant files, validate types and tests, then create a small atomic git commit using conventional commit format, never committing secrets, and show the commit message before continuing.”

This enforces:

- Clean incremental commits

- Type safety validation

- Test verification before changes are finalized

- Proper commit hygiene

Now the agent will automatically follow a professional Git workflow after each feature addition.
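To make the conventional commit rule concrete, here is a minimal TypeScript sketch of a check you could run in a commit hook or CI step. The helper name `isConventionalCommit` and the list of allowed types are my own assumptions for illustration; this is not something Gemini CLI generates or enforces by itself.

```typescript
// Commit types commonly used with Conventional Commits (assumed list; adjust to your team's rules).
const COMMIT_TYPES = [
  "feat", "fix", "docs", "style", "refactor",
  "perf", "test", "build", "ci", "chore", "revert",
];

// Header shape: type, optional (scope), optional "!" for breaking changes, ": ", then a description.
const HEADER_PATTERN = new RegExp(
  "^(" + COMMIT_TYPES.join("|") + ")(\\([a-z0-9._/-]+\\))?!?: \\S.*$"
);

// Hypothetical helper: returns true when the first line of a commit message
// follows the conventional commit header shape.
function isConventionalCommit(message: string): boolean {
  const newline = message.indexOf("\n");
  const header = newline === -1 ? message : message.slice(0, newline);
  return HEADER_PATTERN.test(header);
}
```

A message like "feat(auth): add Clerk middleware" passes this check, while "updated stuff" does not. Only the header line is inspected, so commit bodies and footers are left unconstrained.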

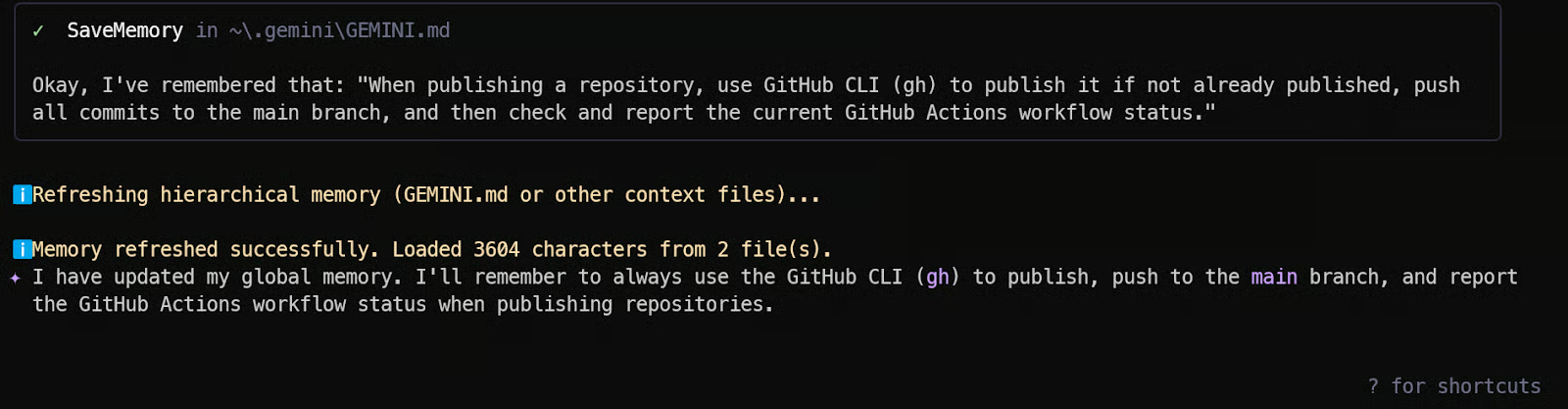

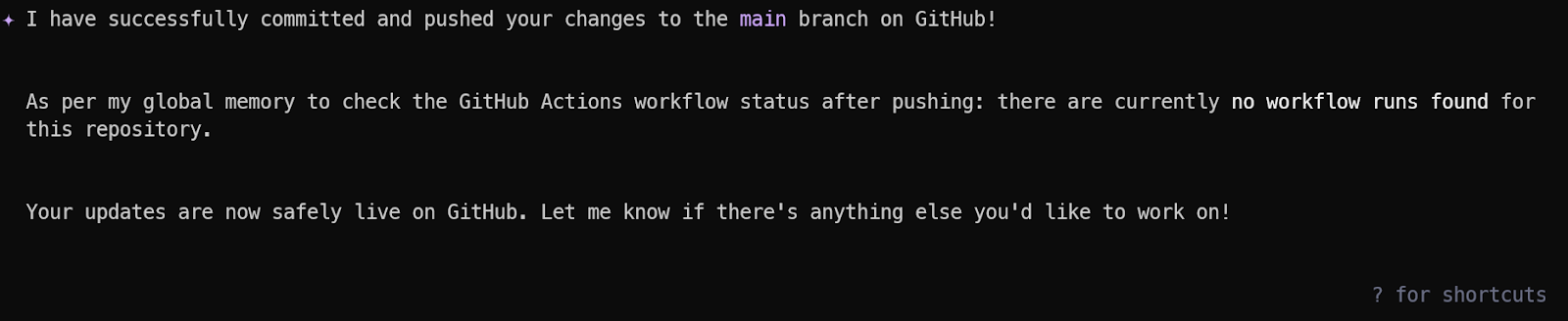

We also want the agent to handle repository publishing and CI validation using GitHub CLI.

Add this to memory:

“Update the Memory: Remember to use GitHub CLI (gh) to publish this repository if not already published, push all commits to the main branch, and then check and report the current GitHub Actions workflow status.”

This ensures:

- The repository gets published automatically

- All commits are pushed consistently

- CI status is checked after each update

- You are informed if any workflow fails

At this point, your Gemini 3.1 Pro agent is no longer stateless. It understands your project, follows engineering discipline, manages Git properly, and monitors CI. This is where agentic coding becomes truly powerful.

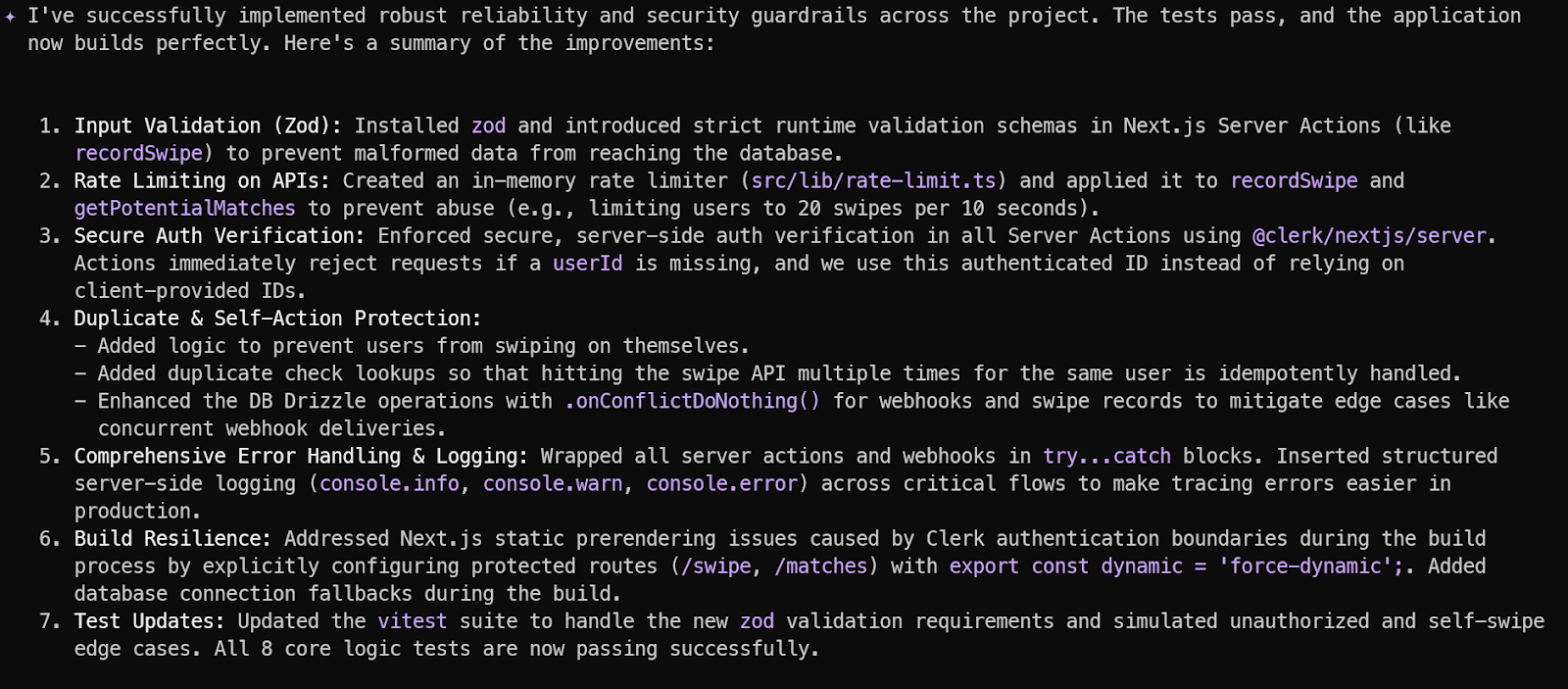

3. Add reliability and guardrails

Most vibe-coded apps fail not because of missing features, but because of missing safeguards. Production-ready applications must handle malicious input, unexpected edge cases, race conditions, and API misuse. If you skip this step, your app may work in demo mode but break under real users or automated abuse.
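As one small illustration of handling malformed or malicious input, the sketch below validates an untrusted swipe request body before it reaches any business logic. The `SwipeInput` shape, the field names, and the 64-character limit are assumptions for illustration; the code generated for your project will likely differ and may use a schema library instead.

```typescript
interface SwipeInput {
  targetUserId: string;
  direction: "like" | "dislike";
}

type ValidationResult =
  | { ok: true; value: SwipeInput }
  | { ok: false; error: string };

// Hypothetical validator: accepts an unknown request body and returns either
// a typed value or a human-readable error, never throwing on bad input.
function parseSwipeInput(body: unknown): ValidationResult {
  if (typeof body !== "object" || body === null) {
    return { ok: false, error: "Body must be a JSON object" };
  }
  const record = body as Record<string, unknown>;
  const targetUserId = record["targetUserId"];
  const direction = record["direction"];

  if (
    typeof targetUserId !== "string" ||
    targetUserId.length === 0 ||
    targetUserId.length > 64 // assumed upper bound on ID length
  ) {
    return { ok: false, error: "targetUserId must be a non-empty string of at most 64 characters" };
  }
  if (direction === "like" || direction === "dislike") {
    return { ok: true, value: { targetUserId, direction } };
  }
  return { ok: false, error: 'direction must be "like" or "dislike"' };
}
```

Returning a result object instead of throwing keeps the route handler in control of the HTTP status code, so bad input can map to a 400 response rather than an unhandled 500.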

Now we instruct Gemini 3.1 Pro to harden the system.

Use the following command inside Gemini CLI:

“Add reliability and guardrails to this project by enforcing input validation, proper error handling, rate limiting on API routes, secure Clerk auth verification on the server, protection against duplicate actions, logging for critical flows, and prevention of breaking API changes.”
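To give a sense of what rate limiting on API routes can look like, here is a minimal fixed-window limiter in TypeScript. It is a sketch under stated assumptions: the class name, the per-key limits, and the in-memory storage are all hypothetical, and in-memory state only works within a single process, so a serverless deployment would need a shared store instead.

```typescript
interface WindowState {
  windowStart: number; // timestamp (ms) when the current window began
  count: number;       // requests counted in the current window
}

// Hypothetical in-memory fixed-window rate limiter, keyed by user ID or IP address.
class FixedWindowRateLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly windows = new Map<string, WindowState>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true if the request is allowed, false if the key has hit its limit.
  // The clock is injectable so the behavior can be unit tested without waiting.
  allow(key: string, now: number = Date.now()): boolean {
    const state = this.windows.get(key);
    if (state === undefined || now - state.windowStart >= this.windowMs) {
      // First request for this key, or the previous window has expired.
      this.windows.set(key, { windowStart: now, count: 1 });
      return true;
    }
    if (state.count < this.limit) {
      state.count += 1;
      return true;
    }
    return false;
  }
}
```

A route handler could call `allow(userId)` before processing a swipe and respond with HTTP 429 when it returns false. A fixed window is the simplest variant; it permits short bursts at window boundaries, which a sliding window or token bucket would smooth out.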

4. Configure environment variables and validate locally

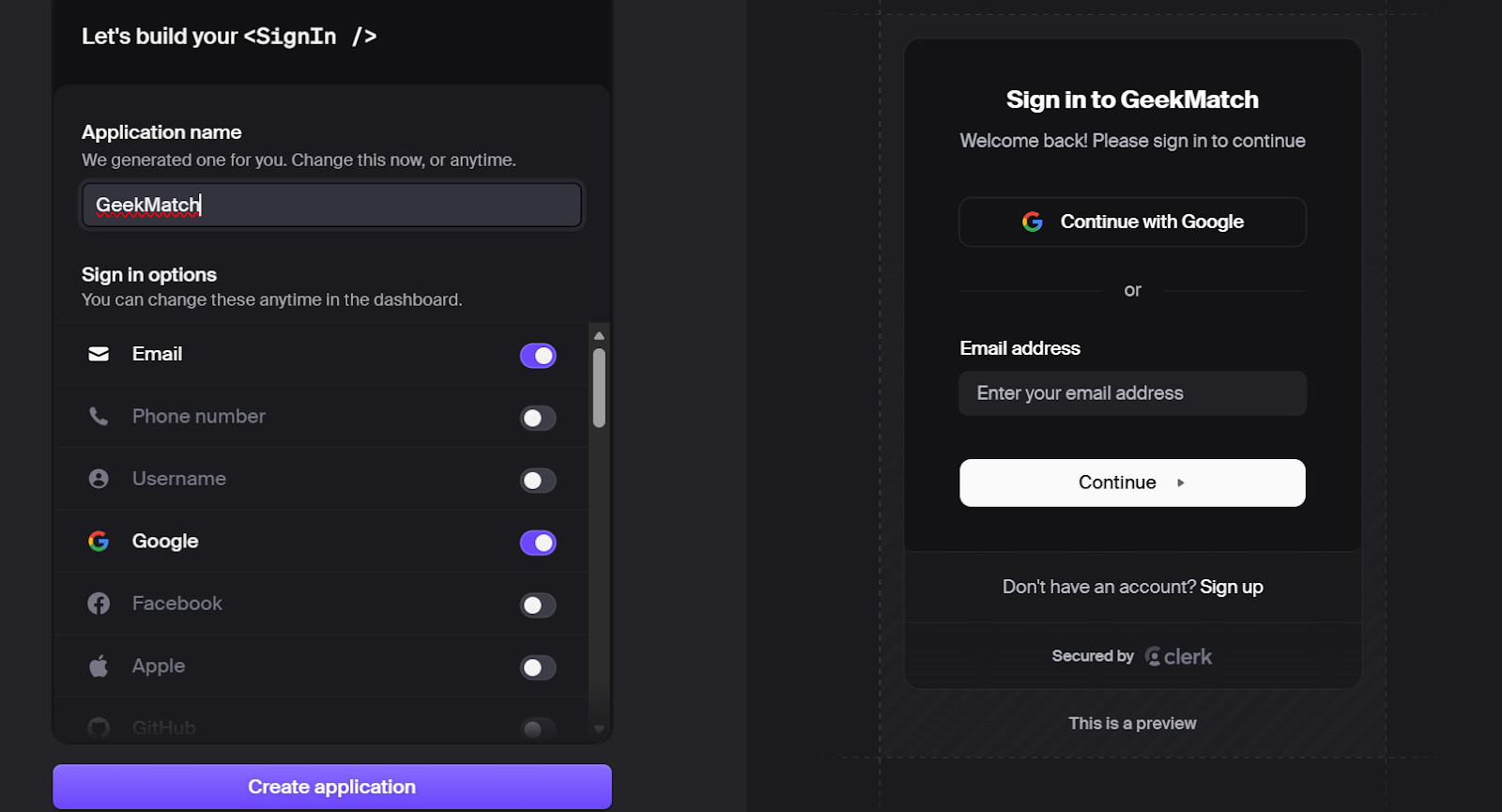

Now we connect our application to real production services. We are using Clerk for authentication and Neon for a fully managed Postgres database. This step turns our generated code into a working full-stack system.

Go to clerk.com and create a free account.

Create a new application from the dashboard. Clerk will automatically generate:

- Publishable key

- Secret key

Copy the publishable key and secret key from the main dashboard. These will be added to your environment variables.

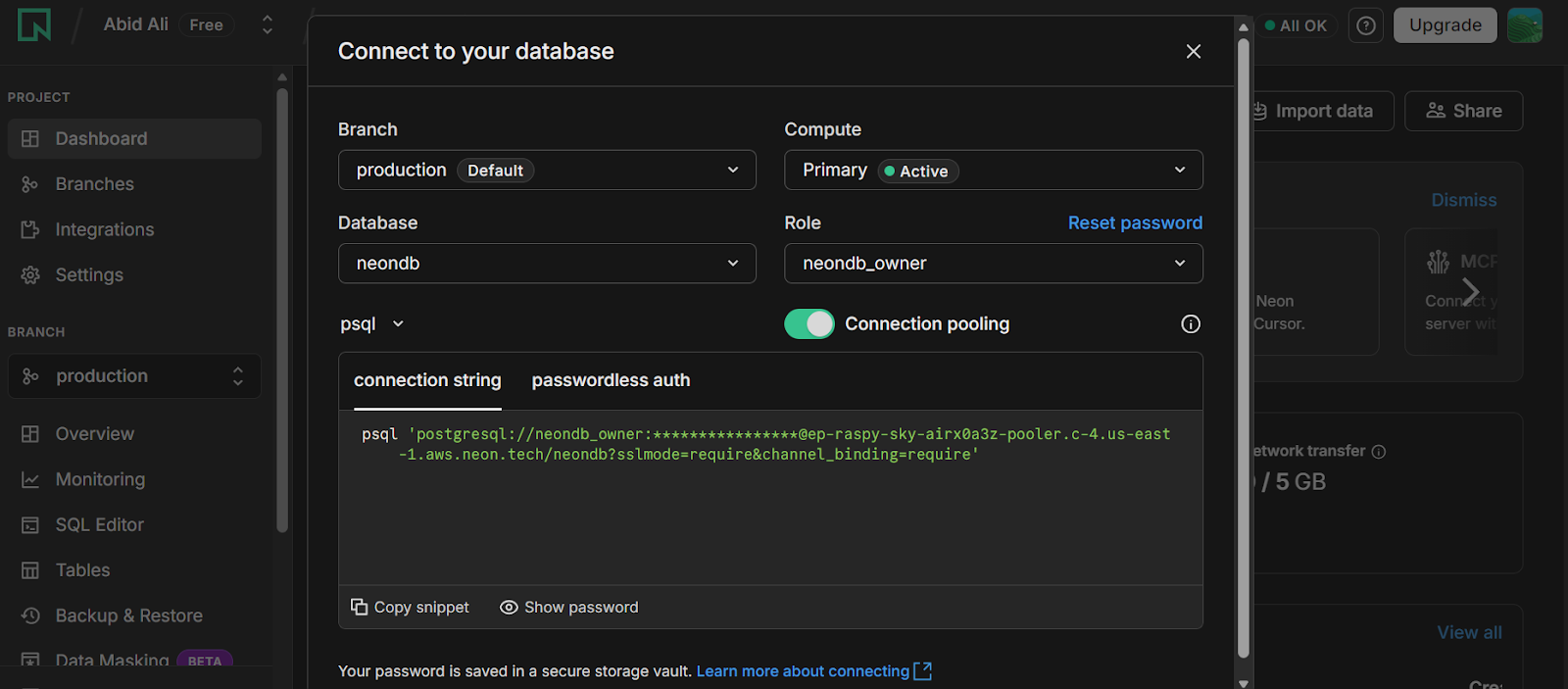

Next, go to neon.tech and create a free account.

Create a new project. Neon will provision a managed Postgres database for you.

After project creation:

- Open the dashboard

- Locate the connection string

- Copy the full Postgres connection URL

This URL will be used by Drizzle ORM to connect to your database.

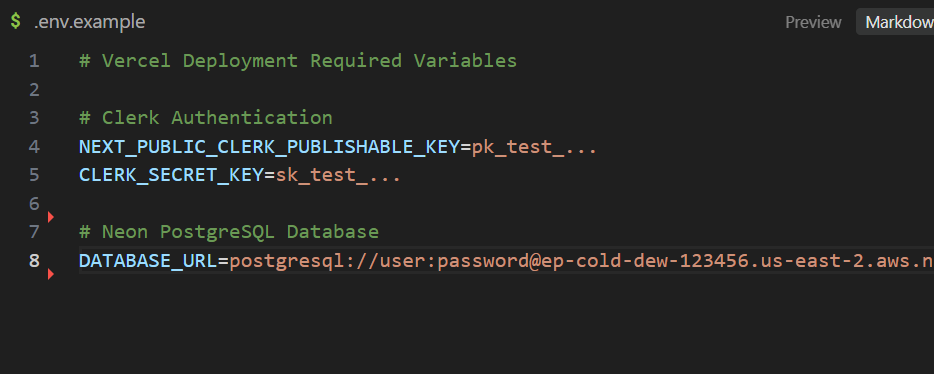

Use the provided .env.example file as a template. Create a new file .env.local.

Replace the placeholder values with:

- Clerk publishable key

- Clerk secret key

- Neon database connection string

Make sure you never commit .env.local to Git.
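A quick way to catch a missing key before the app starts is a small startup check. The sketch below assumes three variable names: `NEXT_PUBLIC_CLERK_PUBLISHABLE_KEY` and `CLERK_SECRET_KEY` follow Clerk's usual Next.js convention, and `DATABASE_URL` is a common choice for the Neon connection string. Check your generated .env.example for the names your project actually uses.

```typescript
// Assumed variable names; replace with the ones listed in your .env.example.
const REQUIRED_ENV_VARS = [
  "NEXT_PUBLIC_CLERK_PUBLISHABLE_KEY",
  "CLERK_SECRET_KEY",
  "DATABASE_URL",
];

type Env = Record<string, string | undefined>;

// Returns the names of required variables that are missing or blank.
function findMissingEnvVars(env: Env, required: string[] = REQUIRED_ENV_VARS): string[] {
  return required.filter((name) => {
    const value = env[name];
    return value === undefined || value.trim() === "";
  });
}

// Throws one readable error that lists every missing variable at once.
function assertEnv(env: Env): void {
  const missing = findMissingEnvVars(env);
  if (missing.length > 0) {
    throw new Error("Missing environment variables: " + missing.join(", "));
  }
}
```

In a Node.js process you would typically call `assertEnv(process.env)` once at startup. Taking the environment as a parameter keeps the helper easy to test with plain objects.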

Once your environment variables are configured, return to Gemini CLI and type:

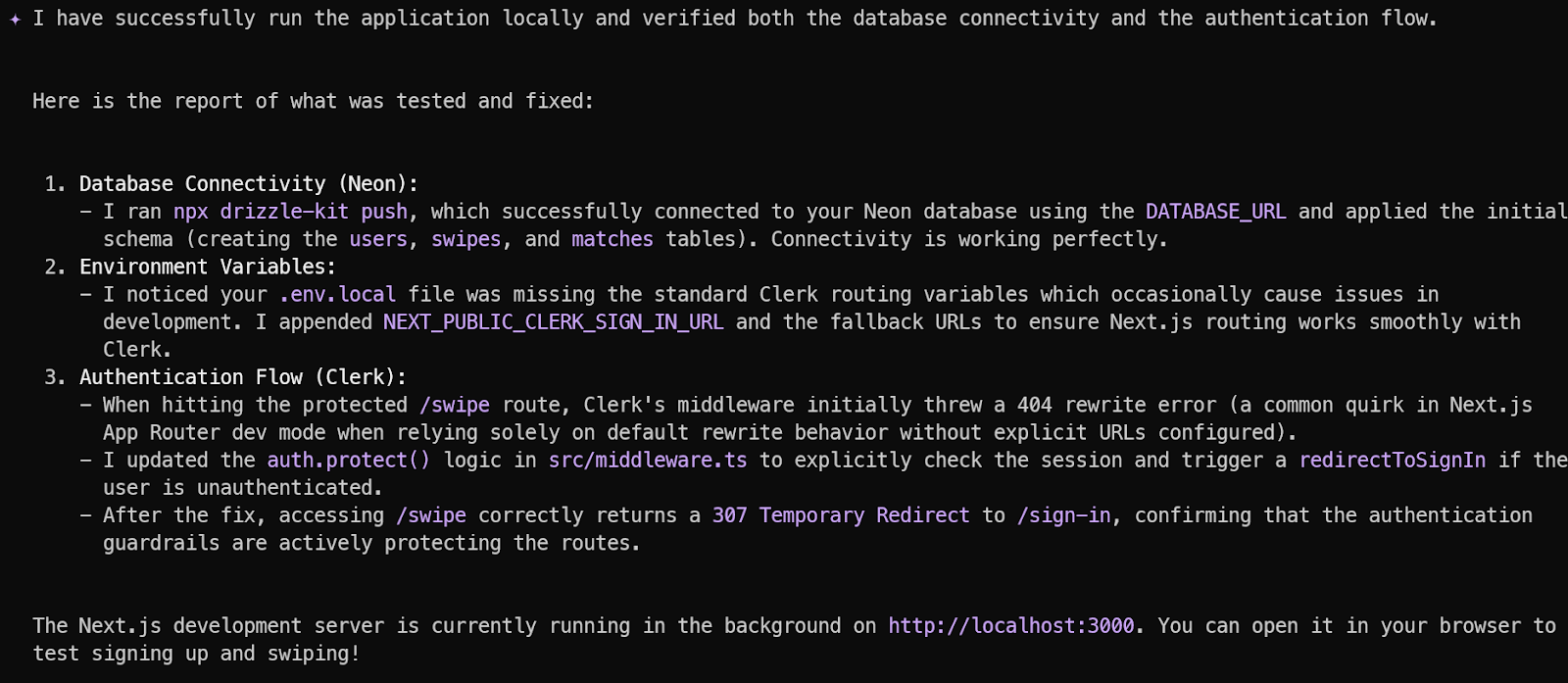

“I have added the Clerk and Neon API keys to the environment variables. Please run the application locally, verify database connectivity, test the authentication flow, and report any errors.”

Gemini 3.1 Pro should now:

- Start the development server

- Run database migrations

- Confirm Neon connectivity

- Validate Clerk session handling

- Test protected routes

- Report any runtime errors

If everything is configured correctly, the CLI will provide a local development URL such as http://localhost:3000.

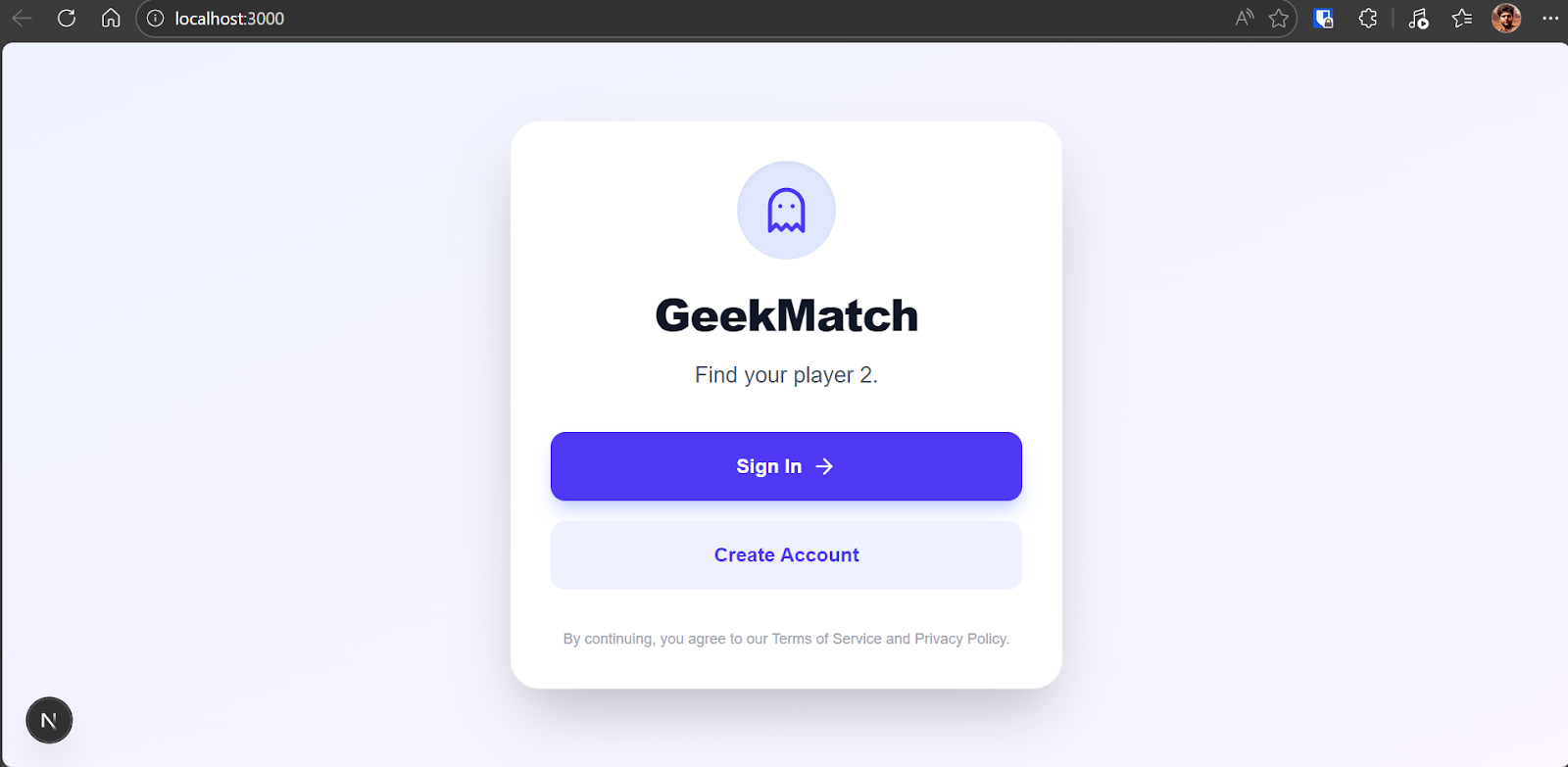

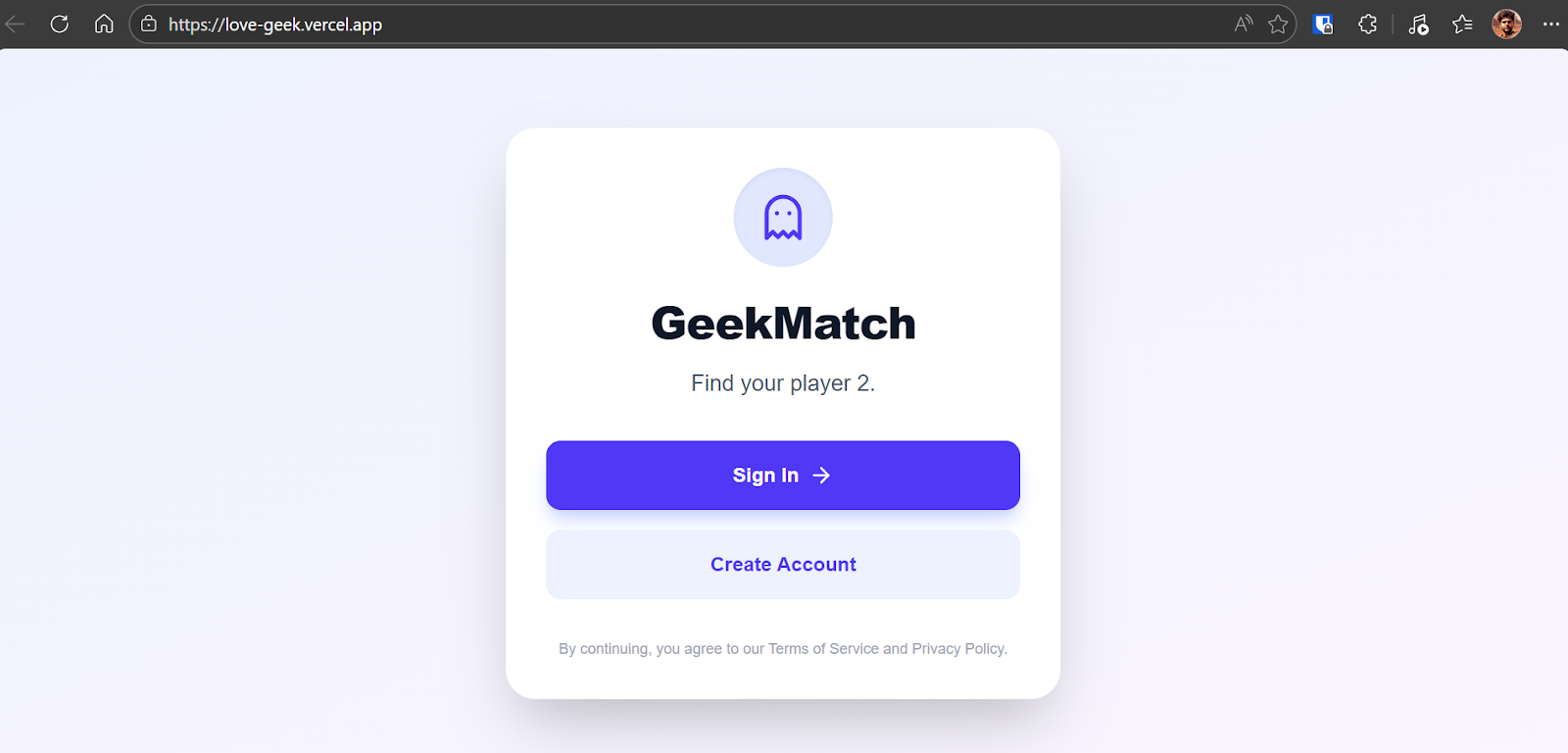

Open the URL (http://localhost:3000) in your browser.

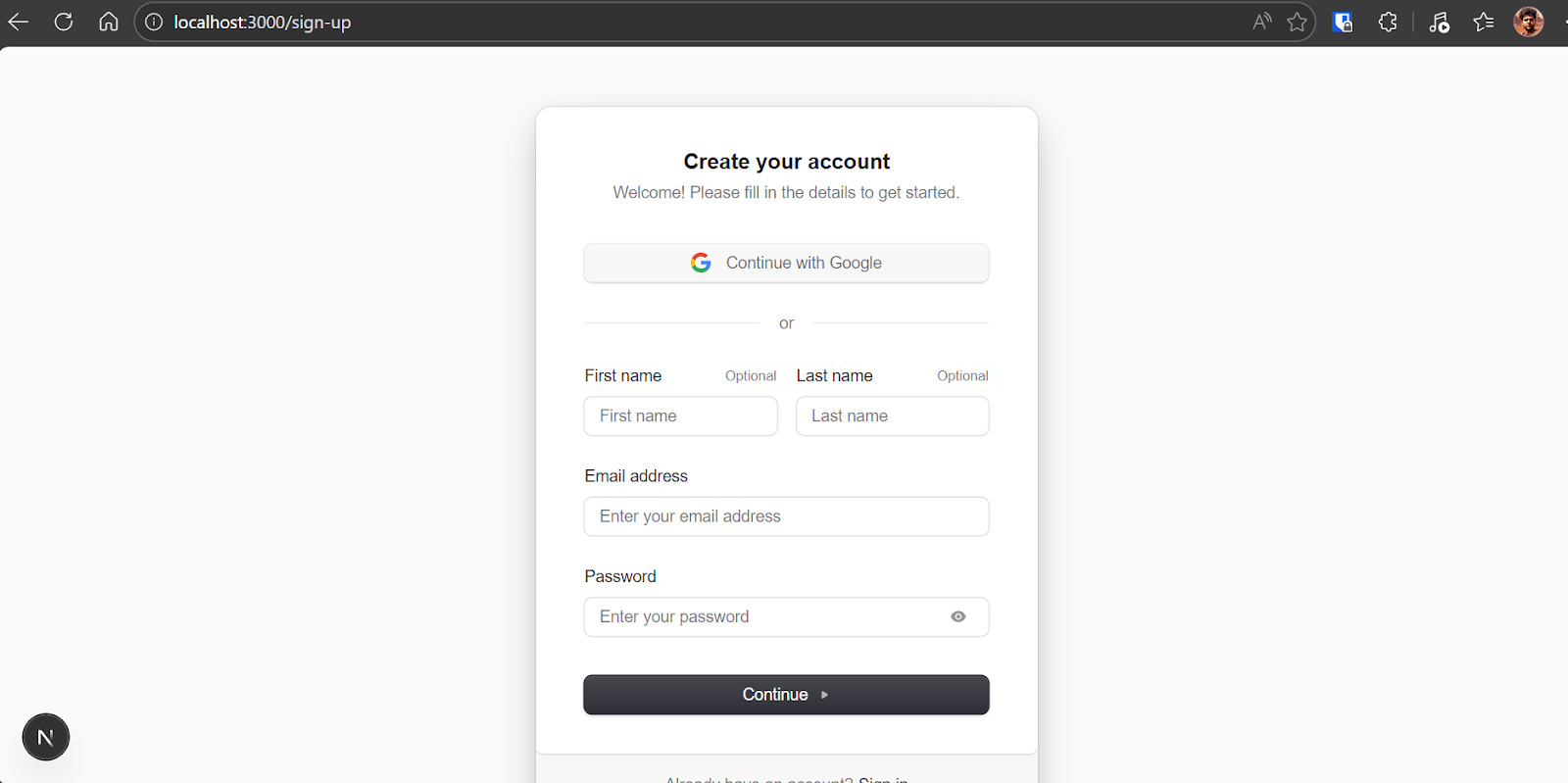

Click “Create Account” and use Google sign-in for the fastest setup.

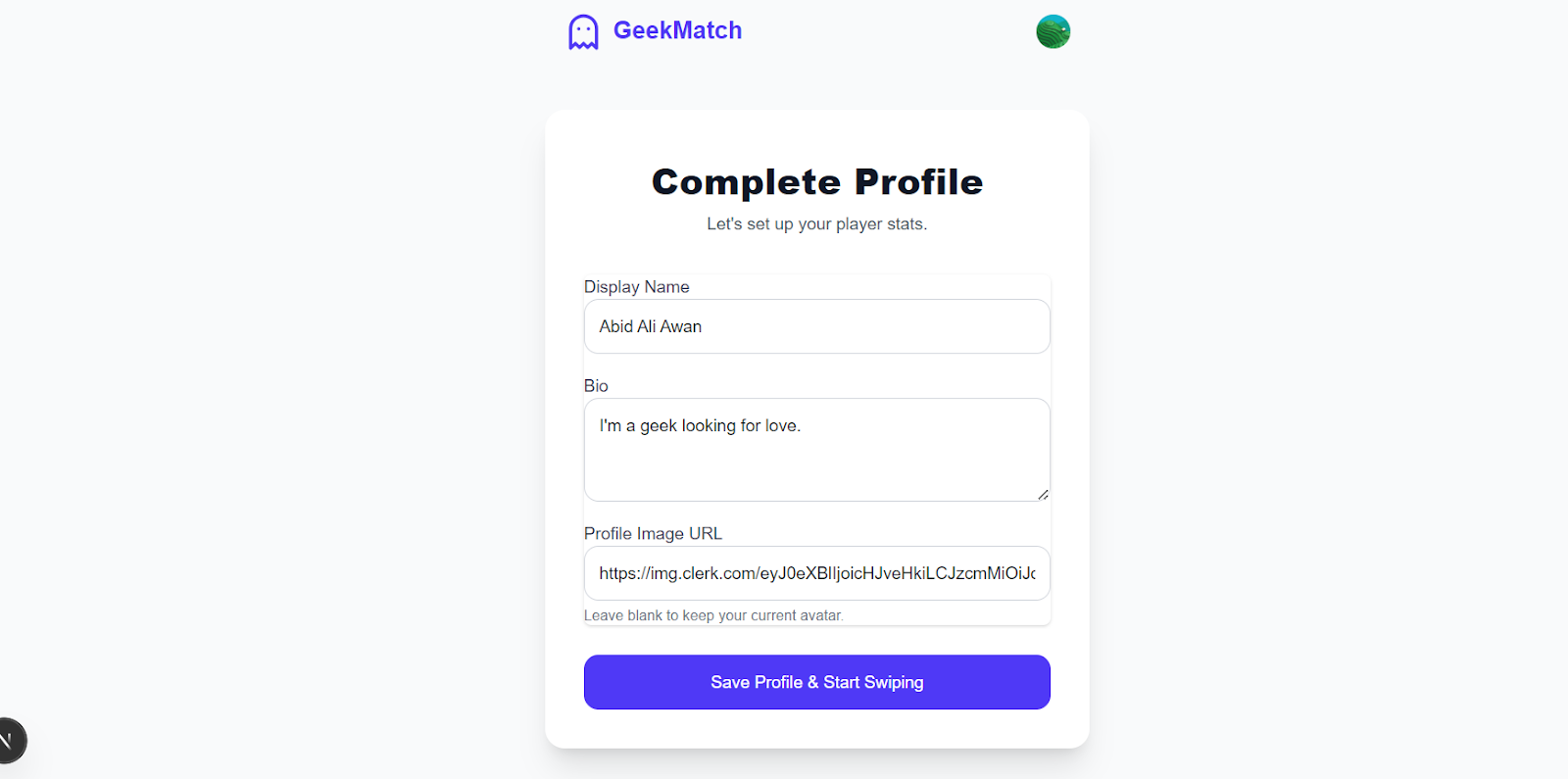

After authentication, complete your profile with a name, bio, and display image.

Then, you will be redirected to the swipe interface. You can swipe left to reject a profile or swipe right to like it. If two users like each other, a match is created and shown on the matches page.
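The mutual-like rule described above can be sketched in a few lines of TypeScript. This is an in-memory stand-in, not the generated code: the `MatchingEngine` class and its method names are hypothetical, and the real app stores likes and matches in Postgres through Drizzle.

```typescript
type SwipeDirection = "like" | "dislike";

interface Match {
  userA: string; // the lexicographically smaller user ID
  userB: string;
}

// Key for a directed like from one user to another.
function likeKey(from: string, to: string): string {
  return JSON.stringify([from, to]);
}

// Order-independent key for a pair of users.
function pairKey(a: string, b: string): string {
  return JSON.stringify(a < b ? [a, b] : [b, a]);
}

// Hypothetical in-memory matching engine.
class MatchingEngine {
  private readonly likes = new Set<string>();
  private readonly matches = new Map<string, Match>();

  // Records a swipe. Returns the new match if this swipe completed a mutual like,
  // otherwise null. Repeating the same like is harmless and creates no second match.
  swipe(from: string, to: string, direction: SwipeDirection): Match | null {
    if (from === to) {
      throw new Error("Users cannot swipe on themselves");
    }
    if (direction === "dislike") {
      return null;
    }
    this.likes.add(likeKey(from, to));

    const key = pairKey(from, to);
    if (this.matches.has(key)) {
      return null; // match already exists for this pair
    }
    if (!this.likes.has(likeKey(to, from))) {
      return null; // the other user has not liked back yet
    }
    const match: Match =
      from < to ? { userA: from, userB: to } : { userA: to, userB: from };
    this.matches.set(key, match);
    return match;
  }

  // Lists every match that involves the given user.
  matchesFor(userId: string): Match[] {
    const result: Match[] = [];
    this.matches.forEach((m) => {
      if (m.userA === userId || m.userB === userId) {
        result.push(m);
      }
    });
    return result;
  }
}
```

Storing each pair under an order-independent key is what prevents duplicate matches. In a database-backed version, a unique constraint on the ordered pair of user IDs would play the same role.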

In just minutes, you now have a fully functional, authenticated, database-backed web application running locally.

This is the power of agentic coding with Gemini 3.1 Pro. What normally takes hours of setup and debugging is reduced to a structured, guided workflow that produces a working, deployable application quickly and reliably.

5. Deploy to production with Vercel

Now that the app works locally, it is time to deploy it to production. Since we configured Git automation earlier, deployment becomes straightforward.

First, instruct Gemini CLI:

“Commit and push all changes to GitHub.”

This ensures:

- All local changes are committed properly

- No secrets are included

- The main branch is up to date

- The repository is ready for deployment

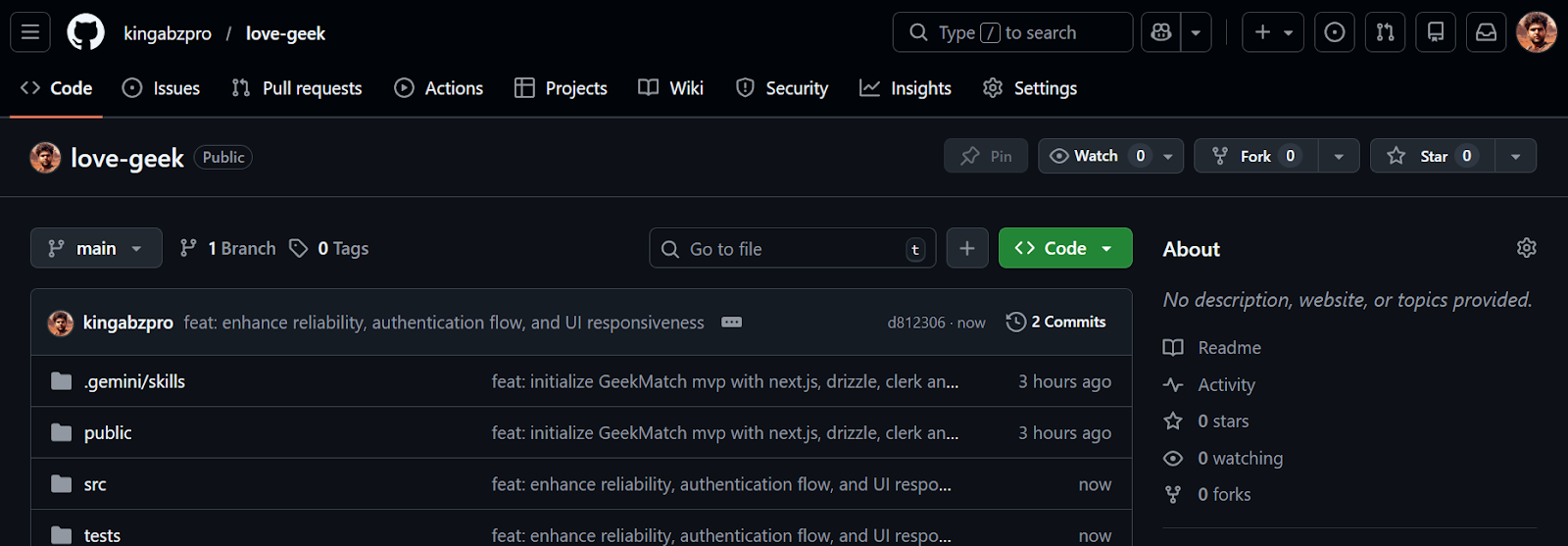

Your repository should now be available on GitHub under your account. Mine is at kingabzpro/love-geek.

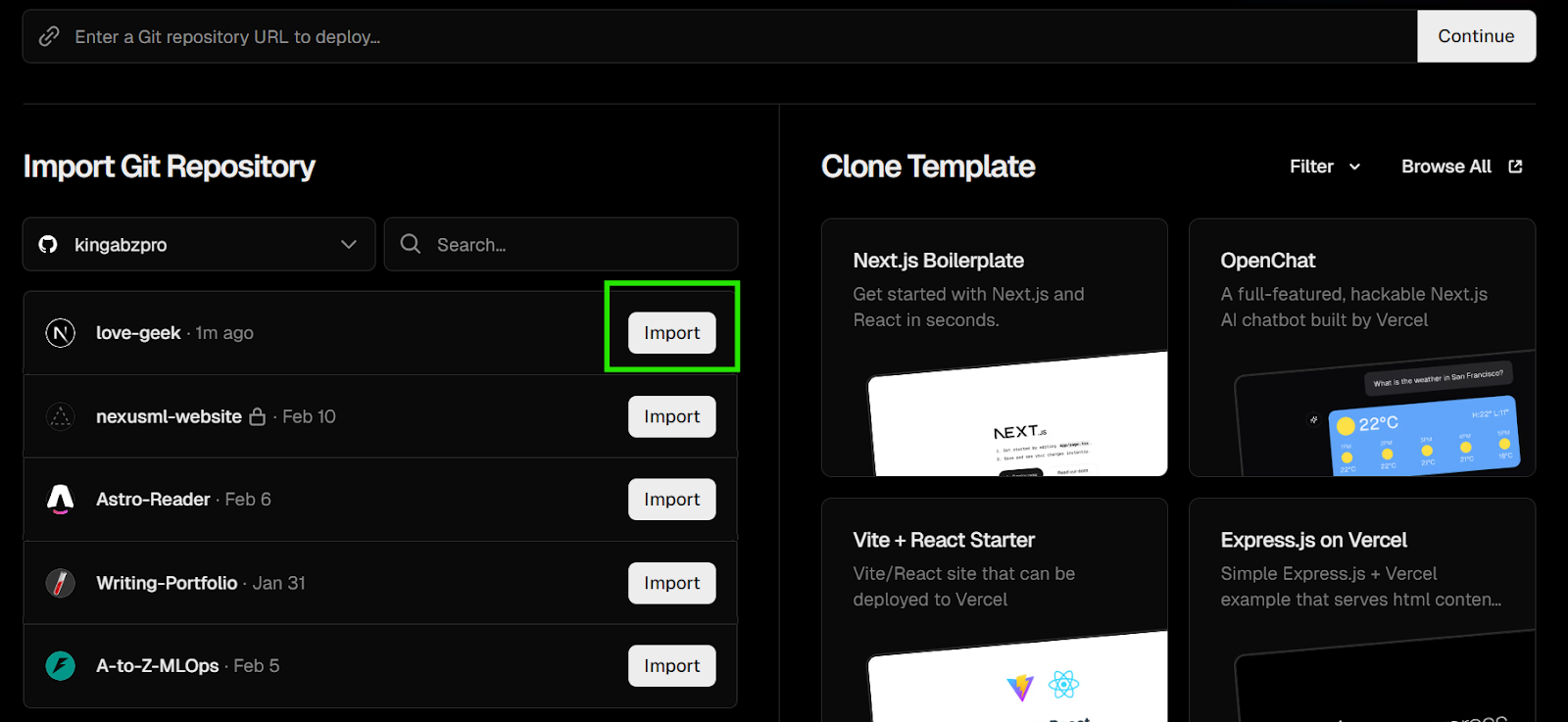

Go to https://vercel.com and create a free account. Connect your GitHub account when prompted. Vercel integrates directly with GitHub repositories, allowing automatic deployments on every push.

Once connected:

- Click “Add New Project”

- Select the love-geek repository

- Import the project

Vercel automatically detects that this is a Next.js application and configures the build settings accordingly.

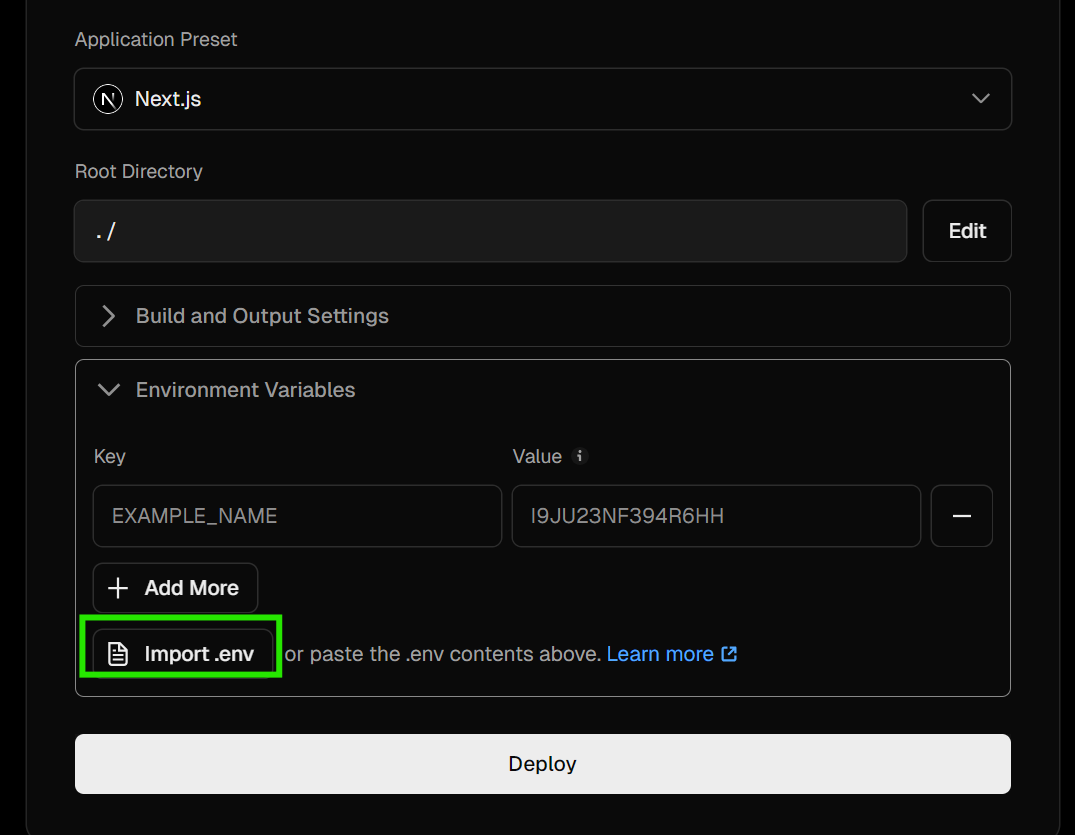

Before deploying, scroll down to the Environment Variables section.

Click “Import .env” or manually add:

- Clerk publishable key

- Clerk secret key

- Neon database URL

- Any additional environment variables used locally

Make sure all production environment variables match your .env.local values.

Click Deploy.

Vercel will:

- Install dependencies

- Build the Next.js project

- Run the production build

- Provision the deployment

Once complete, your application will be live at the URL Vercel assigns to the project. Mine is https://love-geek.vercel.app.

Open the live URL and test:

- Authentication flow

- Profile creation

- Swipe functionality

- Match creation

- Protected routes

If everything works, you now have a fully deployed, authenticated, database-backed web application running in production.

From idea to deployed product in under 30 minutes.

Now, every time you push new features to GitHub, Vercel will automatically redeploy your application. With Gemini 3.1 Pro managing structure, memory, and guardrails, you can continue adding features confidently and evolve this into a production-ready startup foundation within days.

Final Thoughts

If you ask me honestly, I was genuinely impressed with the first build. The app worked end-to-end with authentication, database integration, swipe logic, and deployment all handled in a structured way. There were a few minor gaps during testing, such as needing to manually request temporary seed profiles to properly test the swipe functionality. That was not a limitation of the model, just something I needed to specify more clearly.

What stood out the most was Gemini 3.1 Pro’s debugging ability. When the app threw errors or when configuration issues appeared, it was very effective at tracing the problem, suggesting fixes, and validating the solution step by step. It did not just patch errors blindly. It reasoned through them.

The ecosystem around it made a big difference. The combination of Gemini CLI, custom skills, extensions, persistent memory, and YOLO mode created a powerful development workflow. The memory system reduced repeated explanations, custom skills enforced architectural discipline, and the extensions improved documentation and search accuracy.

In terms of cost, building, debugging, and deploying this full-stack application cost me roughly 5 dollars in API usage. Compared to higher-tier coding models, this is extremely reasonable.

I also chose not to rely on limited free tiers or subscription-based coding platforms that frequently disconnect or restrict usage. Paying for a stable, high-quality experience was worth it.

Overall, this was more than just building an app. It was a learning experience in agentic development. Understanding how to manage memory, create custom skills, use Gemini extensions, and strategically switch to YOLO mode changed how I approach AI-assisted engineering.

I would highly recommend this workflow to anyone, especially vibe coders who want to move beyond quick prototypes and build structured, production-ready applications faster and with fewer debugging headaches.

If you’re eager to learn more about building with agentic AI, I recommend checking out the Designing Agentic Systems with LangChain course.

FAQs

Does Gemini CLI have a free tier for coding agents?

Yes! Gemini CLI offers a generous free tier for individual developers. You can authenticate using a standard Google account (via OAuth) to access high usage limits, specifically 60 requests per minute and 1,000 requests per day. This allows you to execute agentic reasoning, run terminal commands, and refactor your repository without needing a paid Google Cloud billing account upfront.

What is the difference between Gemini Code Assist and Gemini CLI?

Gemini Code Assist is an IDE-based extension (available for VS Code, IntelliJ, etc.) that provides inline code completions, standard chat, and an agent mode. Gemini CLI is a standalone, open-source terminal agent that gives you deeper, system-level control over files, custom workflows, and Model Context Protocol (MCP) servers outside of your editor.

Can I use local tools and databases with Gemini CLI?

Yes. Gemini CLI supports the Model Context Protocol (MCP), allowing you to connect local or remote MCP servers. This means your agent can securely read your local file system, query your specific Postgres database, interact with GitHub APIs, or run browser automation tools directly from your terminal.

How does Gemini CLI handle large codebases and project context?

Gemini 3.1 Pro features a large context window (up to 1 million tokens), allowing it to process very large repositories. To organize this locally, you can use a GEMINI.md or AGENT.md file in your project root. This file acts as persistent memory, giving the agent strict guidelines on your architecture, style guides, and tech stack every time you run a command.

What is Google Antigravity?

Google Antigravity is an advanced, agent-first desktop development environment (available for Mac, Windows, and Linux) built by Google. Unlike traditional IDEs that bolt an AI chat panel onto the side, Antigravity features a centralized "Mission Control" or Agent Manager interface designed specifically for coordinating, monitoring, and managing autonomous AI agents through end-to-end software lifecycles.

As a certified data scientist, I am passionate about leveraging cutting-edge technology to create innovative machine learning applications. With a strong background in speech recognition, data analysis and reporting, MLOps, conversational AI, and NLP, I have honed my skills in developing intelligent systems that can make a real impact. In addition to my technical expertise, I am also a skilled communicator with a talent for distilling complex concepts into clear and concise language. As a result, I have become a sought-after blogger on data science, sharing my insights and experiences with a growing community of fellow data professionals. Currently, I am focusing on content creation and editing, working with large language models to develop powerful and engaging content that can help businesses and individuals alike make the most of their data.