Kurs

Qwen 3.5 is a family of open-source multimodal models designed to handle both text and visual inputs. The models follow an image-text-to-text architecture, allowing them to reason over images or video frames alongside natural language prompts.

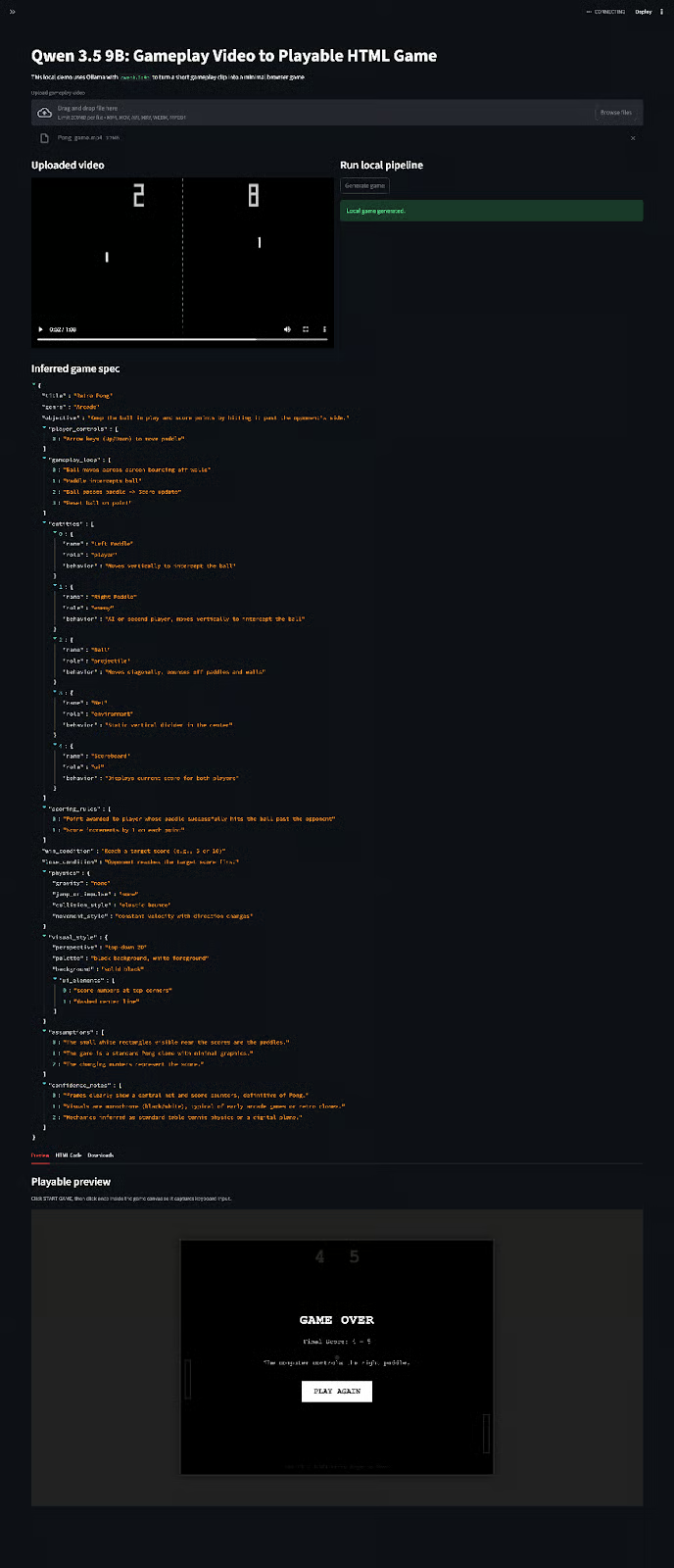

In this tutorial, we’ll build a Streamlit app that converts a gameplay video into a playable browser game using the local Qwen3.5 small, qwen3.5:9b model, hosted via Ollama. The application extracts frames from the uploaded video, infers the game mechanics, converts them into a structured specification, and then generates a complete HTML/CSS/JavaScript game that can be previewed directly inside the interface.

Along the way, you’ll learn how to:

- Extract representative frames from videos for multimodal reasoning

- Run Qwen 3.5 locally with Ollama for image-text inference

- Design a two-stage prompting pipeline for reasoning and code generation

- Convert visual gameplay observations into a structured game specification

- Generate a playable HTML5 browser game from the inferred mechanics

- Build an interactive Streamlit interface to run and preview the pipeline

By the end, you’ll have a working demo that turns a short gameplay clip into a simple, playable browser game generated entirely on a local machine. You can see an abridged version of the workflow in the video below:

What is Qwen 3.5 Small?

Qwen 3.5 is a multimodal model family designed to cover a wide range of deployment scenarios, from lightweight edge models to frontier-scale reasoning systems.

The official Qwen 3.5 lineup currently includes 0.8B, 2B, 4B, 9B, 27B, 35B-A3B, 122B-A10B, and 397B-A17B variants, along with base and quantized checkpoints for some models.

Choosing the right small model depends on the type of workflow you want to build.

- The 0.8B and 2B variants are best suited for edge devices, rapid prototyping, and constrained hardware environments.

- The 4B model provides a step up and works well for small local agents or lightweight visual reasoning tasks.

- The 9B model is better suited for local multimodal applications because it remains compact enough to run locally but powerful enough to infer gameplay mechanics and generate usable HTML game code.

For this tutorial, we used the qwen3.5:9b model, which provides a strong balance between capability, local deployability, and multimodal reasoning, making it well-suited for building a video-frames-to-browser-game pipeline without relying on external APIs.

You can also read our guide on how to run Qwen3.5 locally on a single GPU.

Why use a local small model for this?

I also tested this demo with the qwen/qwen3.5-397b-a17b model. While this model is significantly larger and generally more capable than the local 9B variant, it requires API-based inference, which introduces external dependencies and potential latency.

In practice, if runtime is not a strict constraint, the overall generation time differed by roughly ~3 minutes between the two models in my tests. The larger model did produce slightly smoother gameplay logic and a more polished preview experience, but the improvement was not dramatic.

Considering that the 9B model runs fully locally, the results are surprisingly competitive. Despite its much smaller size, it is capable of generating playable browser games with only a modest drop in quality compared to the much larger 397B model.

You can read our full guide on fine-tuning Qwen3.5 small to learn more about getting the most out of the 0.8B variant.

Qwen 3.5 Example Project: Build a Video-to-Game Generator

In this section, we’ll build a Streamlit app that:

- takes in a short gameplay clip

- extracts a small number of representative frames

- sends those frames to

qwen3.5:9bthrough Ollama - asks the model to infer a structured game specification

- asks the model to generate a single-file HTML game

- previews the generated game inside the Streamlit app

This setup highlights Qwen’s multimodal capabilities that support tasks like reverse-engineering logic from gameplay footage and turning it into code

Step 1: Install dependencies

Before building the application, we first need to prepare a local environment with the libraries required for video processing, multimodal inference, and the interactive interface.

pip install streamlit opencv-python ollama python-dotenv pydanticThe demo uses a small set of Python libraries:

Streamlitto build the interactive web interface where users upload gameplay videos and preview generated games.OpenCVto decode the uploaded video and extract representative frames.Ollamato run the local Qwen 3.5 model for multimodal reasoning and code generation.python-dotenvto manage environment variables if needed.Pydanticto enforce a structured schema for the inferred game specification before generating code.

Next, we need to download the model itself. Ollama makes this straightforward by pulling the model directly from its model registry:

ollama run qwen3.5:9bThe first run downloads the model and stores it locally. The qwen3.5:9B is approximately 6.6GB in size, supports text and image inputs, and provides a 256K token context window, which makes it well-suited for multimodal workflows.

Step 2: Imports

Now that the local environment is ready, the next step is to import the libraries we’ll use throughout the application and create a folder to store generated artifacts.

import json

import base64

import tempfile

from pathlib import Path

from typing import List

import cv2

import ollama

import streamlit as st

import streamlit.components.v1 as components

from pydantic import BaseModel, Field, ValidationError

OUTPUT_DIR = Path("outputs_local")

OUTPUT_DIR.mkdir(exist_ok=True)The above block brings together the core stack for the project. We use json to format the structured game specification, base64 to encode extracted video frames before sending them to the model, and tempfile to safely save uploaded videos during a session. Path from pathlib gives us a clean way to manage file paths, while List from typing is used for type hints in the schema definitions.

Next, we import the main application libraries like cv2 from OpenCV is responsible for reading the uploaded gameplay clip and extracting representative frames, ollama is the interface used to communicate with the locally running qwen3.5:9b model. streamlit provides the web UI, and streamlit.components.v1 lets us embed the generated HTML game directly into the app for live preview and interaction.

Finally, we import BaseModel, Field, and ValidationError from Pydantic. These are used to define and validate the structured JSON schema that represents the inferred game design. Instead of trusting the model to output loosely formatted text, we force it into a predictable schema before passing that result into the HTML game generator.

The last two lines create an outputs_local directory if it does not already exist. This folder is where we save generated artifacts such as the inferred game specification and the final HTML game file, which makes it easier to inspect or download results after each run.

In the next step, we’ll define the Pydantic schema classes that describe the game specification the model must generate from the extracted gameplay frames.

Step 3: Define the schema

At this stage, we define the structured schema that the model must follow when describing the gameplay mechanics inferred from the video frames.

Instead of asking the model to directly generate HTML code from images, we first require it to produce a structured game specification. This intermediate representation makes the pipeline much easier to debug, validate, and extend.

class Entity(BaseModel):

name: str

role: str

behavior: str

class Physics(BaseModel):

gravity: str = ""

jump_or_impulse: str = ""

collision_style: str = ""

movement_style: str = ""

class VisualStyle(BaseModel):

perspective: str = ""

palette: str = ""

background: str = ""

ui_elements: List[str] = Field(default_factory=list)

class GameSpec(BaseModel):

title: str

genre: str

objective: str

player_controls: List[str]

gameplay_loop: List[str]

entities: List[Entity]

scoring_rules: List[str]

win_condition: str

lose_condition: str

physics: Physics

visual_style: VisualStyle

assumptions: List[str]

confidence_notes: List[str]This schema defines the core structure of the game specification that the model must generate.

- The

Entityclass represents any object present in the game, such as the player, enemies, obstacles, or UI elements. Each entity includes a name, its role within the game, and a short description of its behavior. - The

Physicsclass describes how objects move and interact in the game world. These fields capture basic mechanics like gravity, impulse, jump behavior, collision handling, and movement patterns. - The

VisualStyleclass captures the visual presentation of the game. This includes camera perspective (such as top-down or side-scrolling), the color palette, the background style, and any visible UI elements like score counters or health indicators. - Finally, the

GameSpecclass brings everything together. It represents the full game design inferred from the video frames, including the objective, controls, gameplay loop, entities, scoring system, win/lose conditions, and assumptions made by the model when interpreting the frames.

By validating the model’s output against this schema, we ensure that the inferred design always follows a predictable structure. This also makes it much easier to pass the specification into the next stage of the pipeline.

Step 4: Prompts for two-stage generation

With the schema in place, the next step is to define the prompts that guide the model through the two stages of our pipeline.

The first stage focuses on reverse-engineering the gameplay mechanics from the extracted frames and producing a structured game specification.

The second stage takes that specification and generates a playable browser game using HTML, CSS, and JavaScript.

Step 4.1: Define the system prompts

The first component of our prompting strategy is the system prompts, which define the role the model should play during each stage of the pipeline.

SPEC_SYSTEM_PROMPT = """

You are an expert arcade game designer and gameplay reverse-engineering analyst.

You observe a few frames from a gameplay video and infer the smallest possible playable browser-game clone.

The gameplay is likely a simple arcade game like Pong, Breakout, Snake, or Flappy Bird.

Prefer these mechanics if uncertain.

Rules:

- Only infer mechanics that are strongly supported by visual evidence.

- Prefer a minimal playable prototype over a complex clone.

- Do not invent advanced systems unless clearly visible.

- Keep the result suitable for plain HTML5 canvas and JavaScript.

- Return strict JSON only.

- No markdown.

- No code fences.

""".strip()

CODE_SYSTEM_PROMPT = """

You are a senior JavaScript game developer.

Generate a complete single-file HTML game.

Requirements:

- Return raw HTML only.

- No markdown.

- No code fences.

- Use plain HTML, CSS, and JavaScript only.

- Use HTML5 canvas unless there is a strong reason not to.

- Keep the game simple, playable, and self-contained.

- No external libraries, no CDNs, no external assets.

- Use simple shapes/colors instead of images.

- Include score.

- Include restart support.

- Include instructions on screen.

- Keep keyboard controls simple.

- Make the game playable inside an iframe preview.

- IMPORTANT: the canvas must have tabindex="0".

- IMPORTANT: keyboard input must work inside an embedded iframe.

- IMPORTANT: focus the canvas automatically on load, on canvas click, and after pressing Start/Restart buttons.

- IMPORTANT: prevent default browser behavior for arrow keys and spacebar.

""".strip()The first system prompt instructs the model to behave like a gameplay analyst. It emphasizes minimal assumptions and encourages the model to infer only the mechanics that are strongly supported by the frames.

Since the model only sees a small number of sampled images, the prompt explicitly biases it toward simple arcade-style games that can be implemented with lightweight browser logic.

The second system prompt switches the model’s role to a JavaScript developer. At this stage, the model receives a structured game specification and is responsible for converting it into a complete HTML game.

Step 4.2: Build the game-spec prompt

Next, we define a helper function that constructs the user prompt for the first stage of the pipeline. This prompt asks the model to analyze the extracted frames and produce a structured game specification.

def build_spec_user_prompt(game_hint: str, extra_constraints: str, max_frames: int) -> str:

return f"""

You are given a few still frames extracted from a gameplay video.

From these frames, infer a minimal, playable browser game design.

Use very simple 2D arcade-style mechanics.

If you are unsure, bias toward Pong / Breakout / Snake / Flappy Bird style games.

Output valid JSON with exactly this schema:

{{

"title": "string",

"genre": "string",

"objective": "string",

"player_controls": ["string"],

"gameplay_loop": ["string"],

"entities": [

{{

"name": "string",

"role": "player|enemy|obstacle|projectile|ui|environment",

"behavior": "string"

}}

],

"scoring_rules": ["string"],

"win_condition": "string",

"lose_condition": "string",

"physics": {{

"gravity": "string",

"jump_or_impulse": "string",

"collision_style": "string",

"movement_style": "string"

}},

"visual_style": {{

"perspective": "string",

"palette": "string",

"background": "string",

"ui_elements": ["string"]

}},

"assumptions": ["string"],

"confidence_notes": ["string"]

}}

Important:

- Only infer the simplest mechanics needed for a playable clone.

- Prefer a very simple game that can be rebuilt in one HTML file.

- Avoid menus, accounts, audio, networking, cutscenes, or multi-level progression.

Optional game hint from user:

{game_hint or "None"}

Extra constraints:

{extra_constraints or "None"}

You are seeing at most {max_frames} frames from the video, so stay simple and conservative.

Return JSON only.

""".strip()This function builds the prompt that will be sent to the model along with the extracted video frames. It includes several important elements:

- A reminder that the model is analyzing still frames rather than the full video

- A bias toward simple arcade mechanics

- The full JSON schema structure, ensuring the output matches our

GameSpecmodel - Optionally, the user can add hints and constraints that allow the interface to guide the model toward a particular game style

By embedding the schema directly in the prompt, we encourage the model to produce output that can be validated by Pydantic in the next stage of the pipeline.

Step 4.3: Build the code-generation prompt

Once the game specification is generated and validated, we need a second prompt to convert that specification into an actual playable game.

def build_code_user_prompt(game_spec: dict) -> str:

spec_json = json.dumps(game_spec, indent=2)

return f"""

Using the following game specification, generate a complete single-file HTML game.

Requirements:

- One self-contained HTML file.

- Inline CSS and inline JavaScript.

- Must be playable in a browser.

- Must render correctly in an iframe.

- Show score on screen.

- Show controls on screen.

- Add restart support using a button or key.

- Keep visuals simple and robust.

- Use requestAnimationFrame.

- Use canvas for gameplay rendering.

- No external assets.

- Make the game small but genuinely playable.

Game specification:

{spec_json}

Return raw HTML only.

""".strip()The build_code_user_prompt function converts the GameSpec dictionary into formatted JSON and inserts it into the prompt. The model then uses this structured description as a design brief to generate the final HTML game.

As the specification already defines the mechanics, entities, controls, and objectives, the model’s task becomes much simpler. It only needs to translate that design into JavaScript game logic and a canvas-based rendering loop.

In the next step, we’ll implement the frame extraction pipeline using OpenCV, which converts the uploaded gameplay video into a small set of representative images that can be analyzed by the Qwen 3.5 model.

Step 5: Extract representative frames

Ollama’s Qwen 3.5 local models accept text and images, not raw video. So instead of sending the uploaded clip directly, we extract a small set of evenly spaced frames and pass those to the model.

def save_uploaded_video(uploaded_file) -> Path:

suffix = Path(uploaded_file.name).suffix or ".mp4"

with tempfile.NamedTemporaryFile(delete=False, suffix=suffix) as tmp:

tmp.write(uploaded_file.read())

return Path(tmp.name)

def extract_frames(video_path: Path, max_frames: int = 6):

cap = cv2.VideoCapture(str(video_path))

total_frames = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))

interval = max(1, total_frames // max_frames) if total_frames > 0 else 1

frames = []

frame_id = 0

while True:

ret, frame = cap.read()

if not ret:

break

if frame_id % interval == 0:

_, buffer = cv2.imencode(".jpg", frame)

frames.append(base64.b64encode(buffer).decode())

frame_id += 1

if len(frames) >= max_frames:

break

cap.release()

return frames

def extract_json_block(text: str) -> str:

start = text.find("{")

end = text.rfind("}")

if start == -1 or end == -1 or end <= start:

raise ValueError("No valid JSON object found in model response.")

return text[start : end + 1]

def cleanup_html_response(text: str) -> str:

html = text.strip()

if html.startswith("```"):

lines = html.splitlines()

if lines and lines[0].startswith("```"):

lines = lines[1:]

if lines and lines[-1].startswith("```"):

lines = lines[:-1]

html = "\n".join(lines).strip()

return html

def patch_html_for_iframe_keyboard(html: str) -> str:

if 'tabindex="0"' not in html and "<canvas" in html:

html = html.replace("<canvas", '<canvas tabindex="0"', 1)

focus_patch = """

<script>

(function() {

const canvas = document.querySelector("canvas");

if (!canvas) return;

if (!canvas.hasAttribute("tabindex")) {

canvas.setAttribute("tabindex", "0");

}

function focusGame() {

try { canvas.focus(); } catch (e) {}

}

window.addEventListener("load", focusGame);

canvas.addEventListener("click", focusGame);

document.addEventListener("keydown", function(e) {

if (["ArrowUp","ArrowDown","ArrowLeft","ArrowRight"," "].includes(e.key)) {

e.preventDefault();

}

}, { passive: false });

const buttons = document.querySelectorAll("button");

buttons.forEach(btn => {

btn.addEventListener("click", () => {

setTimeout(focusGame, 50);

});

});

})();

</script>

"""

if "</body>" in html:

html = html.replace("</body>", focus_patch + "\n</body>")

else:

html += focus_patch

return htmlThe above utilities define five key components to extract the representative frames from the uploaded video:

- Video upload handling: The

save_uploaded_video()function stores the uploaded gameplay video as a temporary file on disk. Since Streamlit uploads files in memory, writing the file to disk allows OpenCV to read it efficiently during frame extraction while preserving the original file extension. - Frame sampling from video: The

extract_frames()function loads the video using OpenCV and samples a small number of evenly spaced frames across its duration. Instead of processing every frame, the function calculates a sampling interval and extracts representative frames that capture the gameplay. Each frame is encoded as aJPEGand converted into abase64string so it can be sent directly as an image input to the multimodal Qwen model. - Structured JSON extraction: The

extract_json_block()helper function ensures that the model output can be parsed correctly. The extracted content can then be safely validated against theGameSpecschema. - HTML response cleanup: The

cleanup_html_response()function removes markdown formatting that the model may include when generating code. If the model wraps the HTML output in triple backtick code fences, this helper strips those markers so the HTML can be rendered directly inside the Streamlit preview. - Keyboard interaction for embedded games: The

patch_html_for_iframe_keyboard()function injects a small JavaScript patch into the generated HTML to ensure keyboard controls work correctly inside the Streamlit iframe. It automatically focuses the canvas element, assigns atabindexif missing, and prevents the browser from intercepting arrow keys and the spacebar so they can be used for gameplay controls.

Together, these utilities form the video preprocessing and response-handling layer of the pipeline.

Step 6: Infer game specification

Now that we have the extracted frames and the prompt templates ready, we can implement the first stage of the reasoning pipeline, i.e., converting the gameplay frames into a structured game specification.

def infer_game_spec(video_path: Path, game_hint: str, extra_constraints: str, max_frames: int) -> dict:

frames = extract_frames(video_path, max_frames=max_frames)

prompt = build_spec_user_prompt(game_hint, extra_constraints, max_frames)

response = ollama.chat(

model="qwen3.5:9b",

messages=[

{

"role": "system",

"content": SPEC_SYSTEM_PROMPT,

},

{

"role": "user",

"content": prompt,

"images": frames,

},

],

options={

"temperature": 0.1,

"num_ctx": 8192,

},

)

text = response["message"]["content"]

parsed = json.loads(extract_json_block(text))

validated = GameSpec(**parsed)

return validated.model_dump()The above function defines four key stages in the game-spec inference pipeline:

- Frame preparation: The

extract_frames()call samples a small set of representative frames from the uploaded gameplay video. These images act as the visual evidence that the model will use to infer the game mechanics. - Prompt construction: The

build_spec_user_prompt()function dynamically creates the user prompt using the optional hint, any extra constraints, and the maximum number of frames extracted. This prompt provides the model with a clear instruction to produce a schema-compliant game specification. - Multimodal model call: The

ollama.chat()request sends both text prompt and the extracted frames to the localqwen3.5:9bmodel. The generation settings use a lowtemperatureto keep the output deterministic andnum_ctx=8192to provide enough context room for the prompt and structured response. - Schema validation: Once the model responds, the function extracts the JSON block, parses it, and validates it against the

GameSpecPydantic schema. Finally, the validated specification is returned as a Python dictionary.

We now have a structured representation of the game that the model inferred from the sampled video frames. In the next step, we’ll use this validated specification as input for the HTML game generation stage.

Step 7: Generate the HTML game

At this point, the model no longer has to infer mechanics from images. Instead, we ask qwen3.5:9b to produce a single self-contained HTML file that includes the layout, styles, game loop, controls, and rendering logic.

def generate_game_html(game_spec: dict) -> str:

response = ollama.chat(

model="qwen3.5:9b",

messages=[

{

"role": "system",

"content": CODE_SYSTEM_PROMPT,

},

{

"role": "user",

"content": build_code_user_prompt(game_spec),

},

],

options={

"temperature": 0.2,

"num_ctx": 8192,

},

)

text = response["message"]["content"]

html = cleanup_html_response(text)

if "<html" not in html.lower():

raise ValueError("Model did not return HTML")

return patch_html_for_iframe_keyboard(html)The above function performs the following four steps to generate and prepare the final HTML game:

- Structured prompt delivery: The above function takes the validated

game_specdictionary and passes it into thebuild_code_user_prompt()function. This converts the game specification into a detailed implementation prompt that instructs the model to generate a complete single-file HTML game. - Code generation with Qwen model: The

ollama.chat()call sends the code-generation system prompt and the user prompt to the localqwen3.5:9bmodel. Since the task is now pure code generation rather than multimodal reasoning, the model only receives text. - HTML cleanup and validation: The model’s raw response is passed through

cleanup_html_response()to remove any markdown or extra formatting. We then perform a simple validation check to confirm that the output contains an HTML document. - Iframe keyboard patching: The final HTML is passed through

patch_html_for_iframe_keyboard(), which injects a small JavaScript fix to make the game playable inside Streamlit’s embedded iframe. This step is important because browser games often fail to capture arrow keys and spacebar correctly unless the canvas is explicitly focusable and automatically focused.

Now we have a browser-playable HTML game generated entirely from the inferred game specification.

Step 8: Streamlit UI

As the final step, let’s wrap everything inside a simple Streamlit interface so users can try the system without touching the underlying code. The interface exposes three main capabilities:

- Uploading a gameplay video

- Configuring generation parameters such as hints and frame sampling

- Previewing and downloading the generated game

st.set_page_config(page_title="Qwen 3.5 9B (Ollama) - Video to Game", layout="wide")

st.title("Qwen 3.5 9B: Gameplay Video to Playable HTML Game")

st.write(

"This local demo uses Ollama with qwen3.5:9b to turn a short gameplay clip "

"into a minimal browser game."

)

with st.sidebar:

st.header("Local generation settings")

game_hint = st.text_input("Optional game hint", value="Simple Pong-like arcade game")

extra_constraints = st.text_area(

"Extra constraints",

value="Keep the game extremely simple and easy to play with arrow keys or space bar.",

height=120,

)

max_frames = st.slider("Frames extracted from video", 3, 8, 5)

uploaded_video = st.file_uploader(

"Upload gameplay video",

type=["mp4", "mov", "avi", "mkv", "webm"],

)

if "local_game_spec" not in st.session_state:

st.session_state.local_game_spec = None

if "local_game_html" not in st.session_state:

st.session_state.local_game_html = None

if uploaded_video is not None:

video_path = save_uploaded_video(uploaded_video)

col1, col2 = st.columns([1, 1])

with col1:

st.subheader("Uploaded video")

st.video(str(video_path))

with col2:

st.subheader("Run local pipeline")

if st.button("Generate game", type="primary"):

try:

with st.spinner("Extracting frames and inferring game mechanics (local model)..."):

game_spec = infer_game_spec(

video_path=video_path,

game_hint=game_hint,

extra_constraints=extra_constraints,

max_frames=max_frames,

)

st.session_state.local_game_spec = game_spec

with st.spinner("Generating HTML game code with qwen3.5:9b..."):

game_html = generate_game_html(game_spec)

st.session_state.local_game_html = game_html

(OUTPUT_DIR / "local_game_spec.json").write_text(

json.dumps(game_spec, indent=2),

encoding="utf-8",

)

(OUTPUT_DIR / "local_generated_game.html").write_text(

game_html,

encoding="utf-8",

)

st.success("Local game generated.")

except ValidationError as e:

st.error(f"Schema validation failed: {e}")

except Exception as e:

st.error(f"Local generation failed: {e}")

if st.session_state.local_game_spec:

st.subheader("Inferred game spec")

st.json(st.session_state.local_game_spec)

if st.session_state.local_game_html:

tab1, tab2, tab3 = st.tabs(["Preview", "HTML Code", "Downloads"])

with tab1:

st.subheader("Playable preview")

st.caption(

"Click START GAME, then click once inside the game canvas so it captures keyboard input."

)

components.html(

st.session_state.local_game_html,

height=760,

scrolling=False,

)

with tab2:

st.subheader("Generated HTML (local)")

st.code(st.session_state.local_game_html, language="html")

with tab3:

st.subheader("Download files (local)")

st.download_button(

label="Download HTML game (local)",

data=st.session_state.local_game_html,

file_name="local_generated_game.html",

mime="text/html",

)

st.download_button(

label="Download JSON spec (local)",

data=json.dumps(st.session_state.local_game_spec, indent=2),

file_name="local_game_spec.json",

mime="application/json",

)The above code defines how the user uploads a gameplay clip, optionally provides a hint about the game type, and controls how many frames should be extracted from the video for analysis. Once a video is uploaded, the app displays it alongside a Generate game button that triggers the full pipeline.

When the user runs the pipeline, the app first extracts frames and infers the game mechanics using the local qwen3.5:9b model, producing a structured game specification. In the second stage, the model generates a complete HTML game from that specification. The results are stored in Streamlit session state so they persist across UI updates.

After generation finishes, the interface displays the inferred game specification, a live playable preview of the generated game, the raw HTML source, and download buttons for saving both the HTML game and the JSON specification.

To try it yourself, save the code as app.py and launch:

streamlit run app.py

Conclusion

Qwen 3.5 is one of the most interesting multimodal model families available right now. In this tutorial, we used qwen3.5:9b through Ollama to build a fully local gameplay video-to-game generator. The app extracts frames from a short clip, infers a structured game design, generates a single-file browser game, and previews it inside Streamlit.

The hosted qwen/qwen3.5-397b-a17b model is still the faster and stronger option for this task, but the local 9B result is impressive in its own right. It proves that a small multimodal model can now do meaningful visual reasoning and code generation on consumer hardware.

If you want to push this further, then compare 9B against 4B and 27B models, add a refinement loop, or turn the output into a mini game engine that supports multiple iterations from the same clip.

Qwen3.5 Small FAQs

Can I run this demo with `qwen3.5:4b` instead of 9B?

Yes, but results will usually be less reliable. The 4B model is still useful for lightweight local experiments, but the 9B variant is a better balance between speed and quality. Ollama exposes both as local text-and-image models.

Why not use `qwen3.5:0.8b` or 2B?

The 2B models are better suited for simpler multimodal tasks or edge-style deployments than this full pipeline.

What is the best local Qwen 3.5 model for this use case?

The qwen3.5:9b is the best starting point. It is still compact at 6.6GB in Ollama, supports text-and-image input, and performs much better than the very small variants for this kind of reasoning-heavy generation task.

Can I use the larger Qwen 3.5 models locally, too?

Yes. Ollama currently lists 27b, 35b, and 122b variants as well, all with text-and-image support. The tradeoff is memory and latency.

I am a Google Developers Expert in ML(Gen AI), a Kaggle 3x Expert, and a Women Techmakers Ambassador with 3+ years of experience in tech. I co-founded a health-tech startup in 2020 and am pursuing a master's in computer science at Georgia Tech, specializing in machine learning.