Track

Your RAG pipeline returns an answer to a user's question. It looks reasonable, reads well, and sounds confident. But when you compare it against the documents that were actually retrieved, you start noticing things like a date that doesn't appear anywhere in the context or a recommendation that contradicts what the source material says.

Catching those problems at scale is where things get complicated. This is precisely why metrics like BLEU and ROUGE were built for machine translation and summarization tasks, but they can’t tell you whether the model's answer is actually grounded in the retrieved context or whether it contradicts the source.

Human evaluation catches those subtleties, but is too expensive at scale (think of 10,000 queries or more). So there's a gap: Automated metrics are fast but shallow, while human review is thorough but expensive and slow.

Using an LLM as a judge sits in between. You take a capable model, hand it a rubric you've written, and ask it to evaluate the outputs of your system the way a human reviewer would. It's not a perfect solution (I'll get into the failure modes later), but it gives you a practical way to monitor quality at a scale that neither traditional metrics nor manual annotation can match on their own.

In this tutorial, I will walk you through building an LLM judge to evaluate a RAG system with working code throughout, so you can reproduce everything on your own machine.

What is LLM As a Judge?

The main idea of LLM-as-a-judge is asking a language model to evaluate text outputs based on criteria you spell out in a prompt. So basically, you are using an LLM to check if the content generated by another model is good or not.

The reason this works at all (and I remember being skeptical of it initially) is that evaluation is a fundamentally easier task than generation. When a model generates a response from scratch, it's juggling a lot of competing constraints at once:

- Accuracy

- Tone

- Instruction adherence

- Staying grounded in context

- Not over-committing on uncertain information

But when you hand that same model a finished response and ask it to check specific properties, the task becomes much more focused as it only needs to assess, not create. And models tend to perform more consistently on that narrower task.

RAG with LangChain

Comparing LLM-as-a-judge approaches

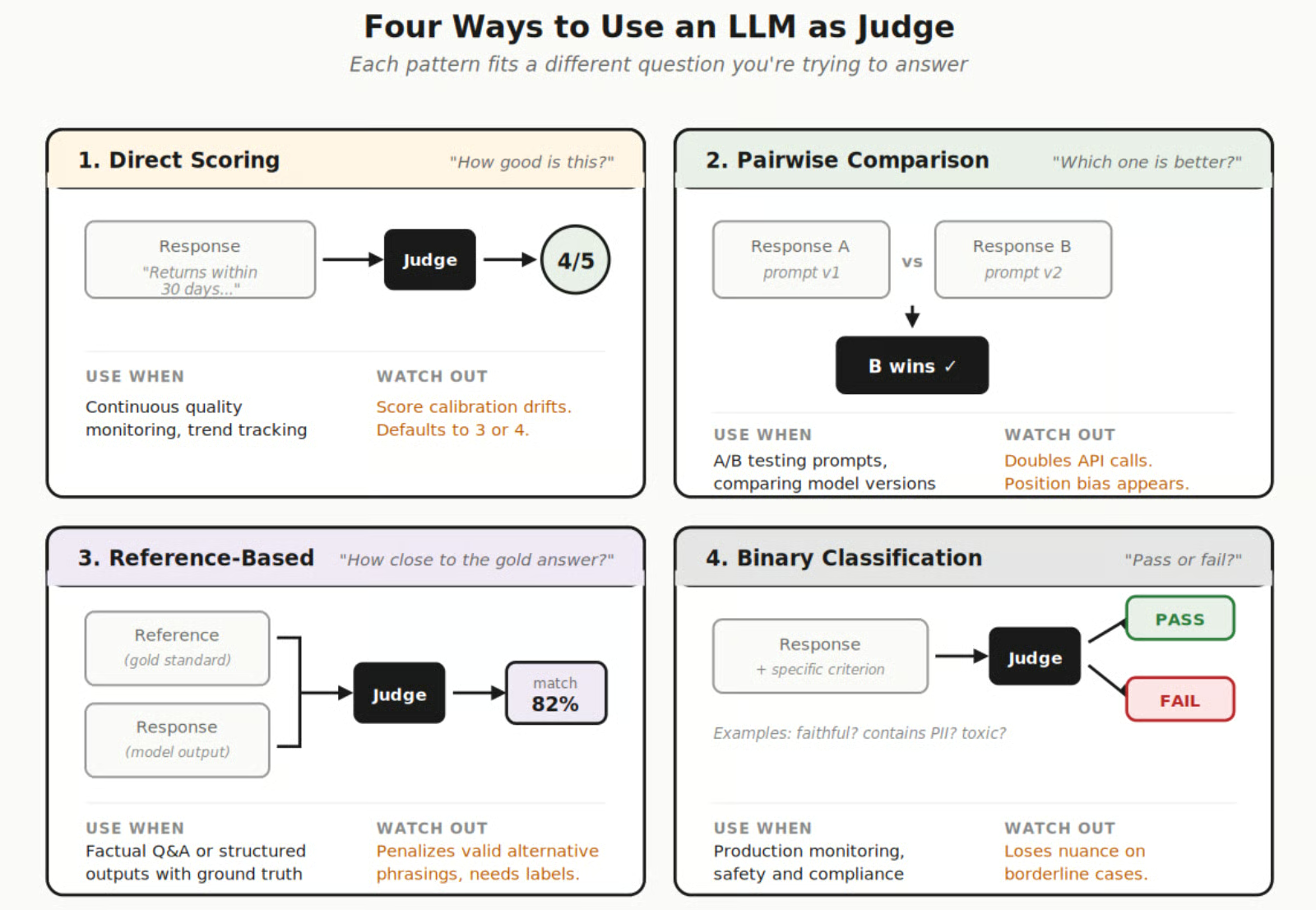

In practice, teams tend to land on one of these approaches depending on what they're trying to measure:

- Direct scoring has the judge rate a single output on a numeric scale (1 to 5, 1 to 10, whatever your rubric defines). You get a number and, if you've asked for it, a written explanation of the reasoning. This is the most common starting point, though it has a calibration problem I'll come back to.

- Pairwise comparison puts two candidate responses side by side and asks the judge to pick the better one. This gets around the numeric calibration issue entirely because the judge only needs to make a relative call, not assign an absolute number. Teams often reach for this when they're comparing prompt variants or model versions against each other.

- Reference-based evaluation provides the judge with both the model output and a gold-standard answer, and asks how well the output matches. This works best for factual Q&A and structured outputs where you already have labeled ground truth to compare against.

- Binary classification strips the judgment down to pass or fail on one specific property. Is this response faithful to the context? Does it contain personally identifiable information? Is it positive? These binary checks are faster to run, cheaper per evaluation, and tend to produce more consistent results than the numeric approaches.

Image by Author. Four different approaches of LLM as a judge.

For a direct overview of strengths, use cases, and restraints, I have compared all four approaches in the following table:

|

Approach |

When to Use |

Strengths |

Watch Out For |

|

Direct scoring |

General quality monitoring, continuous tracking |

Easy to trend over time, works with single outputs |

Judges drift in how they calibrate scores |

|

Pairwise comparison |

A/B testing models, prompt variant comparison |

More reliable rankings than absolute scores |

Doubles your API calls, doesn't give an absolute quality signal |

|

Reference-based |

Factual Q&A, structured outputs |

Clear ground truth makes evaluation straightforward |

Requires labeled data, penalizes valid alternative phrasings |

|

Binary classification |

Safety checks, hallucination detection, compliance |

Low ambiguity, easy to automate alerts |

Loses nuance on borderline cases |

For a broader look at evaluation approaches beyond the judge pattern, LLM Benchmarks Explained covers the full picture.

How Does an LLM Judge Work?

Under the hood, it's a simple API call as you just need to package up the content you want evaluated (the model's output, the original user query, and any retrieved context that was used during generation) and wrap it in a prompt that tells the judge model what you care about and how you want the results formatted.

The judge processes this and returns a structured response, usually a score along with written reasoning that explains why it assigned that score. The quality of your evaluation depends almost entirely on how well you write the rubric. I cannot overstate this.

A simple prompt that says "rate this response from 1 to 5" will give you scores that are all over the place, because the judge has no shared understanding of what a 3 means versus a 4. This is why you need to spell out, concretely, what each score level looks like and include examples if you can.

Implementing LLM As a Judge

Now for the hands-on part, we're going to build a complete evaluation pipeline from scratch: a retrieval augmented generation (RAG) system that answers questions from a small knowledge base, a set of test queries designed to trigger different failure modes, and an LLM judge that scores the outputs on faithfulness and answer relevance.

Setting up the environment

You'll need Python 3.9+ and an OpenAI API key, which you can get in the OpenAI console. Install the dependencies:

pip install openai chromadb langchain langchain-openai langchain-community deepevalSet your API key:

import os

os.environ["OPENAI_API_KEY"] = "your-key-here"We're using OpenAI for both the RAG generator and the LLM judge, though they'll be different models (GPT-4o-mini for generation, GPT-4o for judging). For embeddings, text-embedding-3-small does the job for a tutorial scope. In a production system, you'd want to benchmark a few embedding models on your specific domain data before committing to one.

If you want to build up more background on RAG before jumping into the evaluation code, our Retrieval Augmented Generation (RAG) with LangChain course walks through the fundamentals.

Preparing data and vector store

We need a small knowledge base for the RAG system to retrieve from. I'm going to use a set of text chunks about a fictional company's return policy. The content itself isn't the focus here, but having a contained set of documents makes it easier to see exactly when the model goes beyond the provided context (which is the failure mode we want the judge to catch).

from langchain_openai import OpenAIEmbeddings

from langchain_chroma import Chroma

from langchain.schema import Document

# Sample knowledge base

documents = [

Document(

page_content="All customers are eligible for a full refund within 30 days of purchase. "

"The item must be in its original packaging and unused condition. Refunds are "

"processed to the original payment method within 5-7 business days.",

metadata={"source": "return_policy.pdf", "section": "refund_eligibility"}

),

Document(

page_content="Exchanges can be requested within 45 days of purchase for items of "

"equal or lesser value. Size exchanges on clothing are free of charge. "

"For items of greater value, the customer pays the difference.",

metadata={"source": "return_policy.pdf", "section": "exchanges"}

),

Document(

page_content="Electronics have a 15-day return window due to rapid depreciation. "

"Opened software and digital downloads are non-refundable. Defective electronics "

"can be returned within 90 days with proof of defect from an authorized service center.",

metadata={"source": "return_policy.pdf", "section": "electronics"}

),

Document(

page_content="Shipping costs for returns are covered by the company for defective items. "

"For non-defective returns, the customer is responsible for return shipping. "

"Free return shipping labels are available for loyalty program members regardless of reason.",

metadata={"source": "return_policy.pdf", "section": "shipping"}

),

Document(

page_content="Gift purchases can be returned with the gift receipt for store credit only. "

"Without a gift receipt, returns are processed at the lowest sale price in the last 90 days. "

"Gift cards and prepaid cards are non-refundable and cannot be exchanged.",

metadata={"source": "return_policy.pdf", "section": "gifts"}

),

]

# Create vector store

embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

vectorstore = Chroma.from_documents(documents, embeddings, persist_directory="./chroma_db")

print(f"Indexed {len(documents)} documents")Five documents about return policies, even though that's not a lot, but it's enough to demonstrate the retrieval problems we care about: incomplete context, irrelevant chunks getting pulled in, and the hallucination opportunities that come up when the model feels pressure to give a complete answer even though the context doesn't fully support one.

Building a basic RAG pipeline

The RAG pipeline retrieves relevant chunks and generates an answer. Nothing fancy here, just the standard retrieve-then-generate pattern.

from openai import OpenAI

client = OpenAI()

def rag_query(question: str, top_k: int = 2) -> dict:

"""Run a RAG query: retrieve context, generate answer."""

# Retrieve

results = vectorstore.similarity_search(question, k=top_k)

context = "\n\n".join([doc.page_content for doc in results])

# Generate

system_prompt = """You are a helpful customer support assistant. Answer the

customer's question based ONLY on the provided context. If the context doesn't

contain enough information to answer fully, say so."""

user_prompt = f"""

Context: {context}

Question: {question}

Answer:

"""

response = client.chat.completions.create(

model="gpt-4o-mini",

messages=[

{"role": "system", "content": system_prompt},

{"role": "user", "content": user_prompt}

],

temperature=0.3

)

answer = response.choices[0].message.content

return {

"question": question,

"context": context,

"answer": answer,

"sources": [doc.metadata for doc in results]

}I went with GPT-4o-mini as the generator because it's cheap and fast, and we want the evaluation itself to be the focus. The temperature sits at 0.3, which reduces randomness but doesn't eliminate it.

And the system prompt explicitly instructs the model to only use the provided context, which is standard practice in RAG systems, though models still drift outside the context more often than you'd expect. That's precisely what we want the judge to flag.

Let's test it:

result = rag_query("Can I return opened software?")

print(f"Question: {result['question']}")

print(f"Answer: {result['answer']}")

print(f"Sources: {result['sources']}")Generating an evaluation dataset

You need test cases that exercise different parts of the pipeline. In production, you'd collect these from real user queries. For this tutorial, I'm building a mix that deliberately includes the kinds of questions where RAG systems tend to stumble.

eval_questions = [

# Straightforward questions (should be easy)

"What is the refund window for regular purchases?",

"Are exchanges free for clothing size changes?",

# Questions requiring synthesis across chunks

"What are my options if I received a defective laptop 60 days ago?",

# Edge cases likely to cause hallucination

"Can I get a refund for a digital download I purchased yesterday?",

"What happens if I return a gift without the gift receipt?",

# Questions where context might be incomplete

"Do you offer refunds for international orders?",

"Can I return an item I bought on sale?",

# Adversarial or tricky questions

"If I'm a loyalty member, do I get free return shipping even for electronics?",

]

# Generate RAG responses for all questions

eval_results = []

for q in eval_questions:

result = rag_query(q)

eval_results.append(result)

print(f"Q: {q}")

print(f"A: {result['answer'][:150]}...")

print()The mix matters. Some of these questions have clean, direct answers in the documents, while some require the model to combine information across multiple retrieved chunks (the defective laptop question needs both the general 30-day refund policy and the 90-day electronics defect window).

And a couple of them, like the international orders question, ask about things the knowledge base simply doesn't cover. Those last ones are where you'll see hallucination most frequently, because the model feels the pull to be helpful and fills in gaps with plausible-sounding information that has no grounding in the actual context.

For more on building and testing RAG systems, our selection of Top 30 RAG Interview Questions and Answers is a useful reference.

Setting up the LLM judge

This is where things get interesting. We're building two separate judges:

- Faithfulness judge: Does the answer stay within the bounds of the retrieved context?

- Relevance judge: Does the response actually address what was asked?

def judge_faithfulness(question: str, context: str, answer: str) -> dict:

"""Judge whether the answer is faithful to the retrieved context."""

eval_prompt = f"""

You are an impartial judge evaluating whether an AI assistant's

answer is faithful to the provided context.

Faithfulness means every claim in the answer can be traced back to

information in the context. The answer should not contain information

that isn't supported by or inferable from the context.

Score on a scale of 1 to 5:

1 - The answer contains multiple claims not supported by the context

2 - The answer contains at least one significant unsupported claim

3 - The answer is mostly faithful but includes minor unsupported details

4 - The answer is faithful with only trivial extrapolations

5 - Every claim in the answer is directly supported by the context

Context: {context}

Question: {question}

Answer to evaluate: {answer}

Respond in this exact JSON format: {{"score": <int 1-5>, "reason": "<one paragraph explanation>"}}

"""

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": eval_prompt}],

temperature=0.0,

response_format={"type": "json_object"}

)

import json

return json.loads(response.choices[0].message.content)

def judge_relevance(question: str, answer: str) -> dict:

"""Judge whether the answer is relevant to the question."""

eval_prompt = f"""

You are an impartial judge evaluating whether an AI assistant's answer

is relevant to the user's question.

Relevance means the answer directly addresses what the user asked.

A relevant answer may acknowledge limitations in available information,

but it should not go off-topic or provide unrelated information.

Score on a scale of 1 to 5:

1 - The answer does not address the question at all

2 - The answer partially addresses the question but misses the main point

3 - The answer addresses the question but includes significant irrelevant content

4 - The answer addresses the question well with minor tangents

5 - The answer directly and completely addresses the question

Question: {question}

Answer to evaluate: {answer}

Respond in this exact JSON format: {{"score": <int 1-5>, "reason": "<one paragraph explanation>"}}

"""

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": eval_prompt}],

temperature=0.0,

response_format={"type": "json_object"}

)

import json

return json.loads(response.choices[0].message.content)A few design choices to call out here. The temperature is at 0.0 for maximum consistency across runs. We're using response_format={"type": "json_object"} so the output is always parseable.

The rubric describes each score level concretely rather than using vague labels like "good" or "poor." Without that level of specificity, I've found that judges default to giving everything a 3 or 4, which tells you nothing useful.

Notice that GPT-4o is doing the judging even though GPT-4o-mini is doing the generation. Having the judge be more capable than the generator is a common pattern because the judge needs strong instruction-following to apply the rubric consistently.

If you use the same model for both roles, you also introduce a documented self-preference bias where the model rates its own outputs more favorably.

Running the evaluation loop

Time to run everything and collect the results.

import json

evaluation_report = []

for result in eval_results:

# Run both judges

faithfulness = judge_faithfulness(

result["question"], result["context"], result["answer"]

)

relevance = judge_relevance(

result["question"], result["answer"]

)

evaluation_report.append({

"question": result["question"],

"answer": result["answer"][:200],

"faithfulness_score": faithfulness["score"],

"faithfulness_reason": faithfulness["reason"],

"relevance_score": relevance["score"],

"relevance_reason": relevance["reason"],

})

print(f"Q: {result['question']}")

print(f" Faithfulness: {faithfulness['score']}/5 | Relevance: {relevance['score']}/5")

print(f" Faith reason: {faithfulness['reason'][:100]}...")

print()

# Summary statistics

faith_scores = [r["faithfulness_score"] for r in evaluation_report]

rel_scores = [r["relevance_score"] for r in evaluation_report]

print(f"Average faithfulness: {sum(faith_scores)/len(faith_scores):.2f}")

print(f"Average relevance: {sum(rel_scores)/len(rel_scores):.2f}")

print(f"Questions with faithfulness < 3: {sum(1 for s in faith_scores if s < 3)}")Each question triggers two API calls to GPT-4o, one per judge. For our eight test questions, that's sixteen evaluation calls total, which is manageable. In production with thousands of daily queries, you'd want to batch these, run them asynchronously, and probably only evaluate a sample rather than every single response.

Analyzing results and iterating

The numeric scores give you an overview, but it's the reasoning that tells you what to actually fix.

# Find problematic responses

print("=== LOW FAITHFULNESS (score < 4) ===")

for r in evaluation_report:

if r["faithfulness_score"] < 4:

print(f"\nQ: {r['question']}")

print(f"Score: {r['faithfulness_score']}")

print(f"Reason: {r['faithfulness_reason']}")

print(f"Answer preview: {r['answer'][:150]}...")

print("\n=== LOW RELEVANCE (score < 4) ===")

for r in evaluation_report:

if r["relevance_score"] < 4:

print(f"\nQ: {r['question']}")

print(f"Score: {r['relevance_score']}")

print(f"Reason: {r['relevance_reason']}")The international orders question and the sale items question will almost certainly score low on faithfulness, because the knowledge base doesn't address those topics, and the model is likely to have improvised an answer.

The defective laptop question is interesting too because it requires synthesizing information from the general refund policy (30 days) with the electronics defect clause (90 days for defective items with proof), and depending on which chunks get retrieved, the model may or may not have the complete picture.

What you do with the results depends on what you discover. Low faithfulness on certain question categories usually points to one of two things: either the retriever is pulling in the wrong chunks (a retrieval problem), or the generator is going beyond the context it was given (a generation problem).

Looking at the retrieved context alongside the answer tells you which one you're dealing with. Low relevance scores, on the other hand, usually mean the system prompt needs tuning, or the retrieved context is so far off-topic that the model has nothing useful to work with.

For the operational side of running evaluations as part of your deployment pipeline, the LLMOps Concepts course covers the infrastructure and workflow patterns.

Using DeepEval for structured evaluation

Writing custom judge functions works, but for production use, you probably want a framework that handles the boilerplate. DeepEval is one of the more mature options, and it comes with well-tested metric implementations that cover the most common evaluation criteria.

from deepeval import evaluate

from deepeval.test_case import LLMTestCase

from deepeval.metrics import FaithfulnessMetric, AnswerRelevancyMetric

# Configure metrics

faithfulness_metric = FaithfulnessMetric(

threshold=0.7,

model="gpt-4o",

include_reason=True

)

relevance_metric = AnswerRelevancyMetric(

threshold=0.7,

model="gpt-4o",

include_reason=True

)

# Create test cases from our RAG results

test_cases = []

for result in eval_results:

test_case = LLMTestCase(

input=result["question"],

actual_output=result["answer"],

retrieval_context=[result["context"]]

)

test_cases.append(test_case)

# Run evaluation

evaluate(

test_cases=test_cases,

metrics=[faithfulness_metric, relevance_metric]

)What you get from DeepEval that's tedious to build on your own:

- Retry logic for when the judge returns malformed JSON (which happens more often than you'd think)

- Result caching so re-running an evaluation doesn't double your API bill

- Pytest integration that lets you wire LLM evaluations directly into your CI/CD pipeline.

Each metric also generates a self-explanation, which means every score comes with a written breakdown of the judge's reasoning that you can inspect when something looks off.

For a deeper look at DeepEval's full capabilities, see Evaluate LLMs Effectively Using DeepEval.

LLM As a Judge Best Practices

Getting an LLM judge to return numbers is straightforward. Getting those numbers to actually mean something useful for your team requires more care than most people expect going in.

Evaluation frameworks and metrics

Besides DeepEval, there are several frameworks to choose from at this point, and the landscape keeps growing. Here's a practical comparison based on where each one fits best:

|

Framework |

Best For |

LLM Judge Support |

RAG-Specific Metrics |

Integration |

|

DeepEval |

Full evaluation suite, CI/CD integration |

Yes, with self-explanatory scores |

Faithfulness, contextual precision/recall, relevancy |

pytest, LangChain |

|

RAGAS |

RAG pipeline evaluation specifically |

Yes |

Faithfulness, answer relevance, context precision, context recall |

LangChain, LlamaIndex |

|

MLflow |

Experiment tracking with evaluation |

Yes (built-in; can also be combined with DeepEval/RAGAS) |

Via third-party integrations |

MLflow ecosystem |

|

Evidently |

Production monitoring and drift detection |

Yes, with continuous tracking |

Via custom evaluators |

Monitoring dashboards |

|

LangSmith |

LangChain-native tracing and evaluation |

Yes |

Via custom evaluators |

LangChain |

For RAG systems, four metrics tend to cover most of what you need in practice.

- Faithfulness tells you whether the generated answer stays grounded in the retrieved context. If the score comes back at 0.6, that means roughly 40% of the claims in the answer lack support from the provided documents. This is your primary hallucination detector, and in my experience, it's the single most important metric to track on an ongoing basis.

- Answer relevancy captures whether the response actually addresses what was asked, which is a separate issue entirely. A response can be perfectly faithful to the context (every claim checks out) and still miss the point of the question completely.

- Context precision looks at the retrieval side: are the relevant documents being ranked near the top of the results? If the document containing the answer gets retrieved at position 5 out of 5, your retrieval technically works, but the ranking is poor, and that affects generation quality because models pay more attention to what appears first in the context window.

- Context recall goes the other direction and checks how much of the information needed to answer the question was actually retrieved. Low recall is a retrieval infrastructure problem, and no amount of prompt engineering on the generation side will compensate for context that simply isn't there.

You want to run these together because different combinations of high and low scores point to different root causes.

- High faithfulness with low relevance means the model is accurately reflecting the context, but the context itself wasn't relevant to the question.

- Low faithfulness with high relevance means the model understood the question but went beyond the provided context to answer it.

Each combination tells you where to look for the fix.

For hands-on practice with evaluation tracking in MLflow, Evaluating LLMs with MLflow walks through the integration.

Production deployment best practices

Production deployment requires more thought than running evaluations in a notebook, and the practical issues that come up at scale tend to fall into five buckets that are worth addressing before you commit to the approach.

- Plan around known biases. LLM judges consistently rate longer responses higher (verbosity bias) and tend to slightly favor whichever response appears first in pairwise comparisons (position bias). The standard mitigation for position bias is to run each pairwise comparison in both orders and only count the result when the judge is consistent across both. For verbosity, you can add explicit language to your rubric stating that conciseness is acceptable or even preferred, though this reduces the effect rather than eliminating it entirely.

- Calibrate against human judgment before trusting the scores. Pull together 50 to 100 examples that human reviewers have already scored on your specific domain, run them through the judge, and check the agreement rate, with anything below 75% agreement indicating that the rubric needs more work on its criteria definitions. Remember to repeat this calibration step periodically because both the judge model and your application evolve over time, meaning an agreement rate that looked solid six months ago may have drifted as your prompts, data, or model versions changed.

- Check consistency separately from accuracy. Run the same inputs through the judge twice and compare the outputs, because if a response gets a 3 on one run and a 5 on the next, your rubric has too much room for interpretation, and the judge is reading it differently each time. In that case, binary pass/fail evaluations tend to produce more reliable results across runs than 5-point scales, so consider collapsing the numeric scores into binary for production monitoring while reserving the granular scales for offline analysis where occasional inconsistency is less costly.

- Manage cost at scale. Evaluation prompts are considerably longer than typical generation prompts because they need to include the original question, the full retrieved context, the generated answer, and the complete rubric with scoring criteria. For high-volume systems, a common cost-effective pattern is to run detailed LLM evaluations on a random 10% sample of queries while applying simpler automated checks (length, format compliance, keyword presence) to the remaining 90% of traffic.

- Keep humans in the loop. Even after the judge is working well in production, set up a regular review process where someone from the team examines a random sample of the judge's evaluations each week. Pay particular attention to responses the judge scored as perfect (5/5) because those are precisely the cases where a missed issue would create the most misplaced confidence in a wrong answer.

For more on operationalizing LLM workflows end-to-end, the Associate AI Engineer for Data Scientists track covers the full deployment picture.

Conclusion

LLM-as-a-judge fills a practical need that neither traditional automated metrics nor human review can cover at scale on their own. Automated metrics miss what actually matters for LLM applications; on the other hand, human evaluation catches what matters but can't keep up with the volume of a production system.

An LLM judge sitting in between gives you a way to continuously monitor output quality with nuance that simple metrics can't provide.

We built a complete pipeline in this tutorial: a RAG system with a small knowledge base, test queries designed to trigger different failure modes, custom evaluation judges for faithfulness and relevance, and a framework-based approach using DeepEval that's closer to what you'd run in production.

If there's one takeaway from all of this, it's that the rubric is everything. This is why you should invest time in writing specific, concrete evaluation criteria with clear examples for each score level. A well-designed rubric with a mediocre model will outperform a vague rubric with the most capable model every time.

If you want to keep building from here:

- Building a RAG System with LangChain and FastAPI takes the RAG pipeline from notebook to production service.

- Developing LLM Applications with LangChain covers the broader application development workflow, including chains, agents, and memory.

- Introduction to LLMs in Python is worth revisiting if you want to solidify the foundations before going deeper.

- 12 LLM Projects For All Levels gives you more project ideas to practice with.

- What is Retrieval Augmented Generation (RAG)? covers the conceptual foundation of RAG in more depth.

LLM As a Judge FAQs

What exactly is LLM-as-a-judge?

The short version: you prompt a capable model like GPT-4 or Claude to score or classify what another model produced, based on a rubric you write in plain English. It won't fully replace having humans review outputs, but when you're dealing with thousands of responses a day, it's the only practical way to keep tabs on quality without hiring an army of annotators.

What kinds of things can an LLM judge evaluate?

Basically, anything you can put into words as a criterion. Faithfulness to source documents, tone, safety, whether the response actually answers the question, and hallucination detection are good examples. Some teams run pairwise comparisons, too, where the judge picks which of two candidate responses it prefers. The flexibility is the whole point.

How accurate are LLM judges compared to humans?

Around 80% agreement with human evaluators is the number that keeps showing up in the research, and that's roughly what two human annotators agree with each other anyway. LLM judges do have biases, though. They tend to favor longer, more detailed responses even when a shorter answer would be better, so you have to design around that.

What frameworks support LLM-as-a-judge?

DeepEval is popular because it integrates with pytest and treats evaluations like unit tests, which fits naturally into CI/CD workflows. RAGAS was built specifically for RAG pipeline evaluation. MLflow added integrations with both of those recently, so you can pull metrics from multiple frameworks into one tracking system. LangSmith and Evidently round out the main options.

Josep is a freelance Data Scientist specializing in European projects, with expertise in data storage, processing, advanced analytics, and impactful data storytelling.

As an educator, he teaches Big Data in the Master’s program at the University of Navarra and shares insights through articles on platforms like Medium, KDNuggets, and DataCamp. Josep also writes about Data and Tech in his newsletter Databites (databites.tech).

He holds a BS in Engineering Physics from the Polytechnic University of Catalonia and an MS in Intelligent Interactive Systems from Pompeu Fabra University.