
Machine learning has shown stunning results in a wide variety of fields. Natural language processing is no exception: it is one of the fields where machine learning has come closest to showing general artificial intelligence (not entirely, but at least partially), achieving some brilliant results on genuinely complicated tasks.

Now, NLP (natural language processing) is not a new field, and neither is machine learning. But the fusion of the two fields is quite contemporary and promises continued progress. This is one of those hybrid applications that everyone (with a budget smartphone) comes across daily. For example, take keyboard word suggestions or intelligent auto-completions; these are all byproducts of the amalgamation of NLP and machine learning, and quite naturally they have become inseparable parts of our lives.

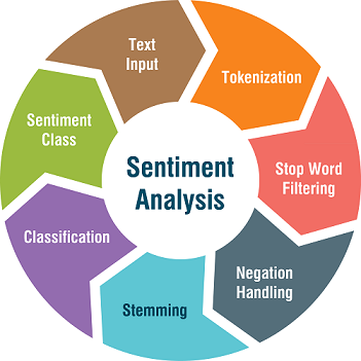

Sentiment analysis is a vital topic in the field of NLP. It has become one of the hottest topics in the field because of its relevance and the number of business problems it helps to solve. In this tutorial, you will cover this not-so-simple topic in a simple way. You will break down the little bit of mathematics behind it and study it. You will also build a simple sentiment classifier at the end of this tutorial. Specifically, you will cover:

- Understanding sentiment analysis from a practitioner's perspective

- Formulating the problem statement of sentiment analysis

- Naive Bayes classification for sentiment analysis

- A case study in Python

- How sentiment analysis is affecting several business grounds

- Further reading on the topic

Let's get started.

What is sentiment analysis - A practitioner's perspective:

Essentially, sentiment analysis or sentiment classification falls into the broad category of text classification tasks, where you are supplied with a phrase or a list of phrases and your classifier is supposed to tell whether the sentiment behind it is positive, negative or neutral. Sometimes the third class is left out to keep it a binary classification problem. In recent tasks, sentiments like "somewhat positive" and "somewhat negative" are also being considered. Let's understand this with an example now.

Consider the following phrases:

- "Titanic is a great movie."

- "Titanic is not a great movie."

- "Titanic is a movie."

The phrases correspond to short movie reviews, and each one of them conveys a different sentiment. For example, the first phrase denotes positive sentiment about the film Titanic, while the second one treats the movie as not so great (negative sentiment). Take a closer look at the third one. No word in that phrase tells you anything about the sentiment it conveys. Hence, it is an example of neutral sentiment.

Now, from a strict machine learning point of view, this task is nothing but a supervised learning task. You will supply a bunch of phrases (with the labels of their respective sentiments) to the machine learning model, and you will test the model on unlabeled phrases.

This should be good enough as an introduction to sentiment analysis, but to be able to build a sentiment classification model, you need something more. Let's proceed.

Source: SlideShare


Formulating the problem statement of sentiment analysis:

Before understanding the problem statement of a sentiment classification task, you need to have a clear idea of general text classification problem. Let's formally define the problem of a general text classification task.

- Input:
  - A document d
  - A fixed set of classes C = {c1, c2, ..., cn}

- Output: A predicted class c $\in$ C

The term document is used loosely in the text classification world. A document can mean a tweet, a phrase, part of a news article, a whole news article, a product manual, a story, etc. The reason behind this terminology is that a word is an atomic entity and small in this context, so the term document is used in general to denote larger sequences of words. A tweet is a shorter document, whereas an article is a longer one.

So, a training set of m labeled documents looks like: (d1,c1), (d2,c2),...,(dm,cm), where each label ci belongs to C, and the ultimate output is a learned classifier.

You are doing well! But one question you must have at this point is: where are the features of the documents? Genuine question! You will get to that a bit later.

Now, let's move on with the problem formulation and slowly build the intuition behind sentiment classification.

One crucial point you need to keep in mind while working on sentiment analysis is that not all the words in a phrase convey its sentiment. Words like "I", "Are", "Am", etc. do not contribute to conveying any kind of sentiment and hence are not relevant in a sentiment classification context. Consider the problem of feature selection here. In feature selection, you try to figure out the features that relate the most to the class label. The same idea applies here as well. Therefore, only a handful of words in a phrase take part in this, and identifying and extracting them from the phrases proves to be a challenging task. But don't worry, you will get to that.
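A minimal sketch of this filtering idea, assuming a tiny hand-written list of function words. The `FUNCTION_WORDS` set and the `content_words` helper are hypothetical names made up for illustration; they are not part of any library, and a real project would likely use a much larger list.

```python
# Hypothetical, hand-picked list of words that carry no sentiment.
FUNCTION_WORDS = {"i", "am", "are", "is", "a", "the", "this", "it"}

def content_words(phrase):
    """Return the lowercase words of a phrase that are not function words."""
    # Strip the two punctuation marks used in the examples, then split on spaces.
    tokens = phrase.lower().replace(".", "").replace("!", "").split()
    return [t for t in tokens if t not in FUNCTION_WORDS]

remaining = content_words("I love this movie!")
print(remaining)  # ['love', 'movie']
```

Only "love" and "movie" survive, and of those two it is "love" that actually signals the sentiment.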

Consider the following movie review to understand this better:

"I love this movie! It's sweet, but with satirical humor. The dialogs are great and the adventure scenes are fun. It manages to be romantic and whimsical while laughing at the conventions of the fairy tale genre. I would recommend it to just about anyone. I have seen it several times and I'm always happy to see it again......."

Yes, this is undoubtedly a review which carries positive sentiments regarding a particular movie. But what are those specific words which define this positivity?

Take another look at the review.

"I **love** this movie! It's **sweet**, but with **satirical** humor. The dialogs are **great** and the adventure scenes are **fun**. It manages to be **romantic** and **whimsical** while laughing at the conventions of the fairy tale genre. I would **recommend** it to just about anyone. I have seen it several times and I'm always **happy** to see it again......."

You must have a clearer picture now. The bold words in the above piece of text are the most important words, the ones which construct the positive nature of the sentiment conveyed by the text.

What to do with these words? The next step which seems natural is to create a representation similar to the following:

So what is the above representation doing? You may have guessed it already. Each row contains a word and its frequency of occurrence in the document (let's call it a document from now on). You may also be wondering why the frequency of love is 2 when it appears only once. Well, the text shown is only a part of the whole review, while the representation is for the entire review.

While formulating the problem statement of a sentiment classification task, you have just met the "bag-of-words" representation: the above representation is nothing but a bag of words. This is probably one of the most fundamental concepts in NLP and is the first step of any text classification problem. So, make sure you understand it well.

A bag-of-words representation of a document contains not only specific words but all the unique words in the document and their frequencies of occurrence. Each unique word is listed only once, together with its count, so the bag contains no duplicate entries.

But for this application, you are only interested in the bold words as mentioned earlier, so the bag-of-words for this document will only contain these words.
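As a rough sketch, a bag of words can be built with Python's `collections.Counter`. The example uses only the opening sentences of the review, so the counts differ from those of the full review, and the `sentiment_words` set is a hand-picked assumption for illustration.

```python
import re
from collections import Counter

# Opening sentences of the review quoted above.
review = ("I love this movie! It's sweet, but with satirical humor. "
          "The dialogs are great and the adventure scenes are fun.")

# Lowercase the text and pull out word tokens (apostrophes are kept).
tokens = re.findall(r"[a-z']+", review.lower())

# The bag of words: each unique word mapped to how often it occurs.
bag = Counter(tokens)
print(bag["are"])   # 2
print(bag["love"])  # 1

# Keep only a hand-picked subset of sentiment-bearing words.
sentiment_words = {"love", "sweet", "great", "fun"}
selected = {w: bag[w] for w in sorted(sentiment_words)}
print(selected)  # {'fun': 1, 'great': 1, 'love': 1, 'sweet': 1}
```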

Documents are not written in a jumbled way. Are they? The sequence of words in a document is critical. But in the context of sentiment classification, this sequence is not very important. What is more important or the most important part here is the presence of these words.

The words that you found in the bag-of-words will now construct the feature set of your document. So, consider that you have a collection of many movie reviews (documents), and you have created bag-of-words representations for each one of them and preserved their labels (i.e., sentiments - +ve or -ve in this case). Your training set should look like:

This representation is also known as Corpus.

This training set should be easy to interpret -

All the rows are independent feature vectors containing information about a specific document (movie review), the particular words it contains and its sentiment. Note that the sentiment label is often denoted as (+, -) or (+ve, -ve). Also, the features w1, w2, w3, w4, ..., wn are generated from a bag of words, and it is not necessary that every document contains each of these features/words.
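The following sketch shows one way such a training set could be laid out in Python. The three-review corpus is invented for illustration, and `to_vector` is a hypothetical helper name; the point is only that every document becomes a vector over the same vocabulary w1, ..., wn, with zeros for words it does not contain.

```python
# Toy labeled corpus: (document, sentiment label). Invented for illustration.
corpus = [
    ("great fun great", "+"),
    ("boring plot", "-"),
    ("fun plot", "+"),
]

# Vocabulary w1..wn: every unique word in the corpus, in sorted order.
vocabulary = sorted({word for text, _ in corpus for word in text.split()})

def to_vector(text):
    """Count how often each vocabulary word occurs in the text."""
    words = text.split()
    return [words.count(w) for w in vocabulary]

# One (feature vector, label) row per document.
training_set = [(to_vector(text), label) for text, label in corpus]

print(vocabulary)  # ['boring', 'fun', 'great', 'plot']
for vector, label in training_set:
    print(vector, label)
```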

You will pass these feature vectors to the classifier. So, let's study it next - Naive Bayes classification model for sentiment classification.

Naive Bayes classification for sentiment analysis:

Naive Bayes classification is nothing but the application of Bayes rule to form classification probabilities. In this section, you will study the Naive Bayes classifier in the context of sentiment classification. It's highly recommended to get some introduction to Naive Bayes classification and the Bayes rule first.

But why Naive Bayes in a world of k-NN, Decision Trees and so many others? You will get to that later.

Let's first build the notion of the general terms of a Naive Bayes classifier in the context of sentiment classification. You will start by taking a look at the Bayes rule:

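- For a document d and class c:

$$P(c \mid d) = \frac{P(d \mid c)\,P(c)}{P(d)}$$

Here $P(c \mid d)$ is the Posterior, $P(d \mid c)$ is the Likelihood, $P(c)$ is the Prior and $P(d)$ is the Normalization Constant.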

In this case, the class comprises two sentiments. Positive and negative.

Let's study each term of the above rule in detail in this context.

- The LHS term P(c|d) is read as the probability of class c given a document d. This term is also known as the Posterior.

- P(d|c) is read similarly: the probability of document d given class c.

Now, what are these Prior and Likelihood? Also, the term P(d) (probability of a document); does it sound absurd? Gems of questions! Let's find the answers now!

- The term which is shown as Prior is your original belief, i.e., how probable it is that a document is positive or negative (in terms of sentiment) before you look at its content.

- The term Likelihood is the probability of a document d given a class c.

- Now think of the term Posterior as your updated rule or updated belief obtained by multiplying Prior and Likelihood.

- But what is the Normalization Constant P(d)? The result of the multiplication is divided by this term so that the posteriors over all the classes sum to 1, i.e., so that the outcome is a proper probability distribution.
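The roles of the four terms can be checked with a few lines of arithmetic. The numbers below are made up for illustration, and P(d) is computed as the sum of the numerators over both classes (the law of total probability).

```python
# Hypothetical numbers for a single document d.
prior = {"+": 0.6, "-": 0.4}          # P(c)
likelihood = {"+": 0.02, "-": 0.005}  # P(d|c)

# Numerator of Bayes rule for each class: P(d|c) * P(c).
unnormalized = {c: likelihood[c] * prior[c] for c in prior}

# P(d) is the sum of the numerators over all classes.
p_d = sum(unnormalized.values())

# Posterior P(c|d); dividing by P(d) makes the values sum to 1.
posterior = {c: unnormalized[c] / p_d for c in prior}
print(posterior)
```

With these numbers the positive class ends up with a posterior of 6/7 and the negative class with 1/7.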

Not the finest of details till now! But stick to it. You will discover more information. But remember, you are still building your intuition as to relate Bayes rule in the context of sentiment classification.

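Let's get into more detail to find out what exactly the Bayes rule is trying to do here. The more detailed steps are:

$$c_{MAP} = \arg\max_{c \in C} P(c \mid d) = \arg\max_{c \in C} \frac{P(d \mid c)\,P(c)}{P(d)} = \arg\max_{c \in C} P(d \mid c)\,P(c)$$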

Lots of unknown terms here. Let's take it slow.

Let's start with the LHS term cMAP. It denotes the primary objective of the Bayes rule here, i.e. to find the class with the maximum posterior probability for a given document. MAP is an abbreviation of maximum a posteriori, which is a Latin term.

What is argmax? You could have used just max!

- Well, argmax returns the argument that maximizes a function rather than the maximum value itself. Suppose P(+|d) > P(-|d), where + and - denote positive and negative sentiments respectively. The terms P(+|d) and P(-|d) are probabilities, which are numeric quantities. But you are not interested in the probability itself; you are interested in the class whose probability is greater, and argmax returns that. For P(+|d) > P(-|d), argmax will return +.

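And yes, you can drop the denominator term P(d): it is the same for every class, so it does not change which class attains the maximum. Whether it is computed at all depends on the implementation.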
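The difference between max and argmax, and the fact that P(d) can be dropped, can be illustrated as follows. The scores are hypothetical values of P(d|c) * P(c) for a single document.

```python
# Hypothetical unnormalized scores P(d|c) * P(c) for one document.
scores = {"+": 0.012, "-": 0.002}

best_value = max(scores.values())         # max: the largest score itself
best_class = max(scores, key=scores.get)  # argmax: the class achieving it

# Dividing every score by the same P(d) rescales them but keeps the winner.
p_d = sum(scores.values())
normalized = {c: s / p_d for c, s in scores.items()}
best_class_normalized = max(normalized, key=normalized.get)

print(best_value, best_class, best_class_normalized)
```

Both `best_class` and `best_class_normalized` are `+`, which is why the denominator can be skipped when only the predicted class is needed.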

But how do I find out $P(d|c)$ and $P(c)$? This is exactly where bag of words will come in handy. But how?

Read on!

You already know how to convert a given document to a bag-of-words representation. More importantly, you can represent a document as a set of features x1, x2, ..., xn with this. So now, essentially, the term cMAP can be written as (ignoring the denominator term P(d)):

$$c_{MAP} = \arg\max_{c \in C} P(x_1, x_2, \ldots, x_n \mid c)\,P(c)$$

But, how do you really calculate the probabilities? Let's start with $P(c)$ first.

P(c) is basically concerned with this question : "How often does this class occur?" Let's say your document dataset contains 60% positive sentiments and 40% negative sentiments. So, $P(+) = 0.6$ and $P(-) = 0.4$.
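Estimating P(c) amounts to counting labels. A small sketch, assuming a hypothetical training set of ten labels:

```python
from collections import Counter

# Hypothetical labels of a 10-document training set.
labels = ["+", "+", "-", "+", "-", "+", "+", "-", "+", "-"]

# P(c) = (number of documents with label c) / (total number of documents)
counts = Counter(labels)
priors = {c: counts[c] / len(labels) for c in counts}
print(priors)  # {'+': 0.6, '-': 0.4}
```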

Now, how do you interpret this term: P(x1, x2,...,xn | c)?

Think of it this way: what is the probability of these words (features) occurring given the class c? For example, say you have 1000 documents, and you have only two words in the corpus - "good" and "awesome". Now, out of these 1000 documents, 500 are labeled as positive, and the remaining 500 are labeled as negative. Also, you found that out of the 500 positively labeled documents, 200 contain both "good" and "awesome" (note P(x1,x2) means P(x1 and x2)). Since the probability is conditioned on the positive class, you divide by the number of positive documents: P(good,awesome | +) = 200 / 500 = 2/5.
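The same counting idea can be sketched on a much smaller toy corpus (six invented documents, not the 1000 from the example). The `joint_likelihood` helper is a hypothetical name; note that the count is divided by the number of documents in the class, because the probability is conditioned on c.

```python
# Toy corpus of (set of words in the document, label), invented for illustration.
docs = [
    ({"good", "awesome"}, "+"),
    ({"good"}, "+"),
    ({"good", "awesome"}, "+"),
    ({"awesome"}, "+"),
    ({"good"}, "-"),
    ({"awesome"}, "-"),
]

def joint_likelihood(words, label):
    """Estimate P(all given words present | label) by counting documents."""
    in_class = [doc for doc, c in docs if c == label]
    # words <= doc is True when every word in `words` appears in the document.
    containing = [doc for doc in in_class if words <= doc]
    return len(containing) / len(in_class)

p = joint_likelihood({"good", "awesome"}, "+")
print(p)  # 0.5
```

Two of the four positive documents contain both words, so the estimate is 2/4.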

One important point to note here is that if the size of your vocabulary is $X$ and your document contains n words, then there are $X^n$ possible likelihood terms (like P(good,awesome | +)) to estimate.

Remember that you have to compute the likelihood probabilities for both classes. So, in a scenario where you have 2000 words in total and each document contains 20 words on average, the total number of combinations will be $2000^{20}$. This number is insanely large! And what if the corpus size is in the millions (which really happens in practical cases)?
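To get a feel for the size of this number, it can be computed directly, since Python integers have arbitrary precision:

```python
vocabulary_size = 2000  # X
document_length = 20    # n

# Number of possible word sequences of length n over a vocabulary of size X.
combinations = vocabulary_size ** document_length
print(combinations)
print(len(str(combinations)))  # 67 decimal digits
```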

This is called the Bayes Classifier. But it just does not work as the computations are way too many. Now, you will study some assumptions for making Bayes classifier a Naive Bayes classifier.

The assumptions that you are going to study are called Naive Bayes Independence Assumptions. They are as follows:

- Bag of words assumption: Assume position does not matter. Suppose a particular word occurs at the 10th and 20th positions; with this assumption, you only care about how often that word has occurred, which is 2. The positions 10 and 20 are irrelevant here.

- Conditional independence assumption: This is a critical assumption which makes the Bayes classifier Naive Bayes. It states: "assume the feature probabilities P(xi|cj) are independent given the class c". Take a closer look at the statement. It means that, once the class cj is known, the features x1, x2 and so on are treated as independent of each other. (It does not in any way mean that x1, x2 and so on are independent of each other when the class is not given.) Now, the term P(x1, x2,...,xn | c) can be expressed as follows:

  $$P(x_1, x_2, \ldots, x_n \mid c) = P(x_1 \mid c) \cdot P(x_2 \mid c) \cdots P(x_n \mid c)$$

So, naturally, the $X^n$ combinations get reduced to $X \cdot n$, which is exponentially fewer (if the size of your vocabulary is $X$ and your document contains n words). Defined mathematically, the Bayes classifier when reduced to the Naive Bayes classifier looks like:

$$c_{NB} = \arg\max_{c \in C} P(c) \prod_{i=1}^{n} P(x_i \mid c)$$

Naive Bayes has two advantages:

- Reduced number of parameters.

- Linear time complexity as opposed to exponential time complexity.

When the Naive Bayes classification mechanism is applied to a text classification problem, it is referred to as "Multinomial Naive Bayes" classification.
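The classifier described in this section can be sketched in a few lines of plain Python. This is a simplified illustration, not the NLTK implementation used later: the four-review training set is invented, P(x|c) is estimated as the relative frequency of a word among all words seen in class c (one common choice, assumed here), and no smoothing is applied, so a word never seen in a class gives that class a score of zero.

```python
from collections import Counter, defaultdict

# Toy training set, invented for illustration.
train = [
    ("great fun story", "+"),
    ("fun great cast", "+"),
    ("boring story", "-"),
    ("great boring plot", "-"),
]

# Count documents per class and word occurrences per class.
class_counts = Counter(label for _, label in train)
word_counts = defaultdict(Counter)
for text, label in train:
    word_counts[label].update(text.split())

def prior(c):
    """P(c): fraction of training documents with label c."""
    return class_counts[c] / len(train)

def word_likelihood(word, c):
    """P(word | c): relative frequency of the word among all words in class c."""
    return word_counts[c][word] / sum(word_counts[c].values())

def classify(text):
    """Return the argmax class and the per-class scores P(c) * prod P(x_i | c)."""
    scores = {}
    for c in class_counts:
        score = prior(c)
        for word in text.split():
            score *= word_likelihood(word, c)
        scores[c] = score
    return max(scores, key=scores.get), scores

label, scores = classify("great story")
print(label, scores)
```

For "great story" the positive score is 0.5 * (2/6) * (1/6) and the negative score is 0.5 * (1/5) * (1/5), so the sketch predicts `+`.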

Now, you have a good understanding of the mechanics of a Naive Bayes classifier, especially for a sentiment classification problem. It's high time that you implemented a sentiment classifier.

You will do that in Python! Let's get started with the case study.

A simple sentiment classifier in Python:

For this case study, you'll use the offline movie review corpus covered in the NLTK book, which can be downloaded from here. NLTK provides a version of the dataset, in which each review is categorized as positive or negative. You need to download it first as follows:

```
python -m nltk.downloader all
```

It's not recommended to run it from Jupyter Notebook. Try to run it from the command prompt (if using Windows). It will take some time. So, be patient.

For more information about NLTK datasets, make sure you visit this link.

You will be implementing a Naive Bayes (or, let's say, Multinomial Naive Bayes) classifier using NLTK, which stands for Natural Language Toolkit. It is a library dedicated to NLP and NLU related tasks, and its documentation is very good. It covers many techniques in great detail and provides free datasets for experiments as well.

This is NLTK's official website. Make sure you check it out because it has some well-written tutorials on NLP covering different NLP concepts.

After all the data is downloaded, you will start by importing the movie reviews dataset with `from nltk.corpus import movie_reviews`. Then, you will construct a list of documents, labeled with the appropriate categories.

```python
# Load and prepare the dataset
import random

import nltk
from nltk.corpus import movie_reviews

# Each document is a (list of words, category) pair
documents = [(list(movie_reviews.words(fileid)), category)
             for category in movie_reviews.categories()
             for fileid in movie_reviews.fileids(category)]

# Shuffle so that positive and negative reviews are mixed
random.shuffle(documents)
```

Next, you will define a feature extractor for documents, so the classifier will know which aspects of the data it should pay attention to. "In this case, you can define a feature for each word, indicating whether the document contains that word. To limit the number of features that the classifier needs to process, you start by constructing a list of the 2000 most frequent words in the overall corpus" - Source. You can then define a feature extractor that simply checks if each of these words is present in a given document.

```python
# Define the feature extractor
all_words = nltk.FreqDist(w.lower() for w in movie_reviews.words())

# most_common() sorts by frequency. In NLTK 3, iterating over a FreqDist
# follows insertion order, so list(all_words)[:2000] would not give the
# 2000 most frequent words.
word_features = [word for word, _ in all_words.most_common(2000)]

def document_features(document):
    # Build the set once so that each membership test below is fast
    document_words = set(document)
    features = {}
    for word in word_features:
        features['contains({})'.format(word)] = (word in document_words)
    return features
```

"The reason that you computed the set of all words in a document, document_words = set(document), rather than just checking if the word is in the document, is that checking whether a word occurs in a set is much faster than checking whether it occurs in a list" - Source.

You have defined the feature extractor. Now, you can use it to train a Naive Bayes classifier to predict the sentiments of new movie reviews. To check your classifier's performance, you will compute its accuracy on the test set. NLTK provides show_most_informative_features() to see which features the classifier found to be most informative.
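Before training on the full corpus, it can help to see what the extractor returns. The sketch below repeats the extractor with a hypothetical three-word `word_features` list standing in for the 2000 corpus words, so that it runs without downloading any data.

```python
# Hypothetical stand-in for the 2000 most frequent corpus words.
word_features = ["great", "boring", "plot"]

def document_features(document):
    document_words = set(document)  # set membership tests are fast
    return {'contains({})'.format(w): (w in document_words)
            for w in word_features}

features = document_features(["a", "great", "plot", "with", "great", "cast"])
print(features)
# {'contains(great)': True, 'contains(boring)': False, 'contains(plot)': True}
```

Each feature only records whether a word is present, so the second occurrence of "great" makes no difference.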

```python
# Train Naive Bayes classifier
featuresets = [(document_features(d), c) for (d, c) in documents]

# Hold out the first 100 reviews for testing; train on the rest
train_set, test_set = featuresets[100:], featuresets[:100]
classifier = nltk.NaiveBayesClassifier.train(train_set)

# Test the classifier
print(nltk.classify.accuracy(classifier, test_set))
```

```
0.71
```

Wow! The classifier was able to achieve an accuracy of 71% on this run without any parameter tweaking or fine-tuning. This is great for a first go! Because the documents are shuffled randomly, your exact number may differ.
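Accuracy here simply means the fraction of test reviews whose predicted label matches the true label. A hand-rolled sketch of that calculation, using made-up labels and predictions rather than the classifier's real output:

```python
# Hypothetical gold labels and classifier predictions for five test reviews.
gold = ["pos", "neg", "pos", "neg", "pos"]
predicted = ["pos", "neg", "neg", "neg", "pos"]

# Count the positions where prediction and gold label agree.
correct = sum(g == p for g, p in zip(gold, predicted))
accuracy = correct / len(gold)
print(accuracy)  # 0.8
```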

```python
# Show the most important features as interpreted by Naive Bayes
classifier.show_most_informative_features(5)
```

```
Most Informative Features
   contains(winslet) = True    pos : neg = 8.4 : 1.0
 contains(illogical) = True    neg : pos = 7.6 : 1.0
  contains(captures) = True    pos : neg = 7.0 : 1.0
    contains(turkey) = True    neg : pos = 6.5 : 1.0
    contains(doubts) = True    pos : neg = 5.8 : 1.0
```

"In the dataset, a review that mentions "illogical" is almost 8 times more likely to be negative than positive, while a review that mentions "captures" is about 7 times more likely to be positive" - Source.

Now the question - why Naive Bayes?

- You chose to study Naive Bayes because of the way it is designed and developed. Text data has some practical and sophisticated features which map well to Naive Bayes, provided you are not considering neural nets. Besides, it's easy to interpret and does not behave like a black-box model.

Naive Bayes suffers from a certain disadvantage as well:

The main limitation of Naive Bayes is the assumption of independent predictors. In real life, it is almost impossible that you get a set of predictors which are entirely independent.
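A small sketch of why this matters, using made-up likelihoods. Suppose the words "top" and "notch" always occur together, so the second word adds no new information. Naive Bayes still treats them as two independent pieces of evidence and becomes more confident than a single piece of evidence would justify.

```python
# Hypothetical per-word likelihoods P(word | class) and priors P(class).
likelihood = {
    "+": {"top": 0.3, "notch": 0.3},
    "-": {"top": 0.1, "notch": 0.1},
}
prior = {"+": 0.5, "-": 0.5}

def posterior_positive(words):
    """P(+ | words) under the Naive Bayes independence assumption."""
    scores = {}
    for c in prior:
        score = prior[c]
        for w in words:
            score *= likelihood[c][w]
        scores[c] = score
    return scores["+"] / sum(scores.values())

one_word = posterior_positive(["top"])
both_words = posterior_positive(["top", "notch"])
print(one_word, both_words)
```

The posterior rises from 0.75 to 0.9 even though, in this scenario, "notch" carries exactly the same information as "top".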

Why is sentiment analysis so important?

Sentiment analysis solves a number of genuine business problems:

- It helps to predict customer behavior for a particular product.

- It can help to test the adaptability of a product.

- It automates the task of producing customer preference reports.

- It can easily automate the process of determining how well a movie performed by analyzing the sentiments behind the movie's reviews from a number of platforms.

- And many more!

Next steps:

Congratulations! You have made it to the end. NLP is a vast and interesting topic and solves some challenging problems. Specifically, the intersection of NLP and Deep Learning has given birth to some fantastic products. It has completely revolutionized the way chatbots interact. The list is never-ending.

This tutorial hopefully gave you a head-start in one of the prime sub-fields of NLP, i.e. Sentiment Analysis. You covered one of the most fundamental topics of NLP - Bag of Words and then studied Naive Bayes Classifier in a detailed manner. You examined its shortcomings as well. You used nltk, one of the most popular Python libraries for NLP and NLU tasks. You implemented a simple Naive Bayes classifier with the movie corpus that nltk provides. Give yourself a round of applause. You deserve it!

Next are some links to some amazing resources if you want to take things further from this humble beginning:

- Study more about NLP and its different tool/techniques

- Study about text data in general

- Study Recurrent Neural Nets

- See some amazing applications of NLP with Deep Learning

The following references were used to create this tutorial:

- Text Classification and Naïve Bayes

- Predict Sentiment From Movie Reviews Using Deep Learning

- NLTK Book

- NLP Basics

If you are interested in learning the basics of NLP and applying it to real-world datasets, you can take DataCamp's "Natural Language Processing Fundamentals in Python" course.