Track

On May 5, 2026, a tiny Miami-based startup called Subquadratic released a model named SubQ. The team is small, but they’ve raised $29M in seed funding and claim the model can process up to 12 million tokens in a single pass.

They have also made other crazy-sounding claims, like their model is up to 52 times more efficient than FlashAttention at 1M tokens and achieves a coding performance similar to Claude Opus at roughly 1/20th of the cost.

These are big statements, so it makes sense to break this down and see what’s actually going on. In this piece, I’ll walk through what SubQ is, how the architecture works, and what the early details and developer communities suggest about these claims.

What is SubQ?

SubQ is Subquadratic's LLM, released on May 5, 2026, and built around a single headline feature: a 12-million-token context window. It's the first model the company has shipped, and it comes with a set of bold claims around efficiency and cost that have already sparked significant debate.

The model is not yet publicly available, with access to the API, SubQ Code, and SubQ Search currently limited to early access via waitlist only.

It is built on something called SSA, short for Subquadratic Sparse Attention.

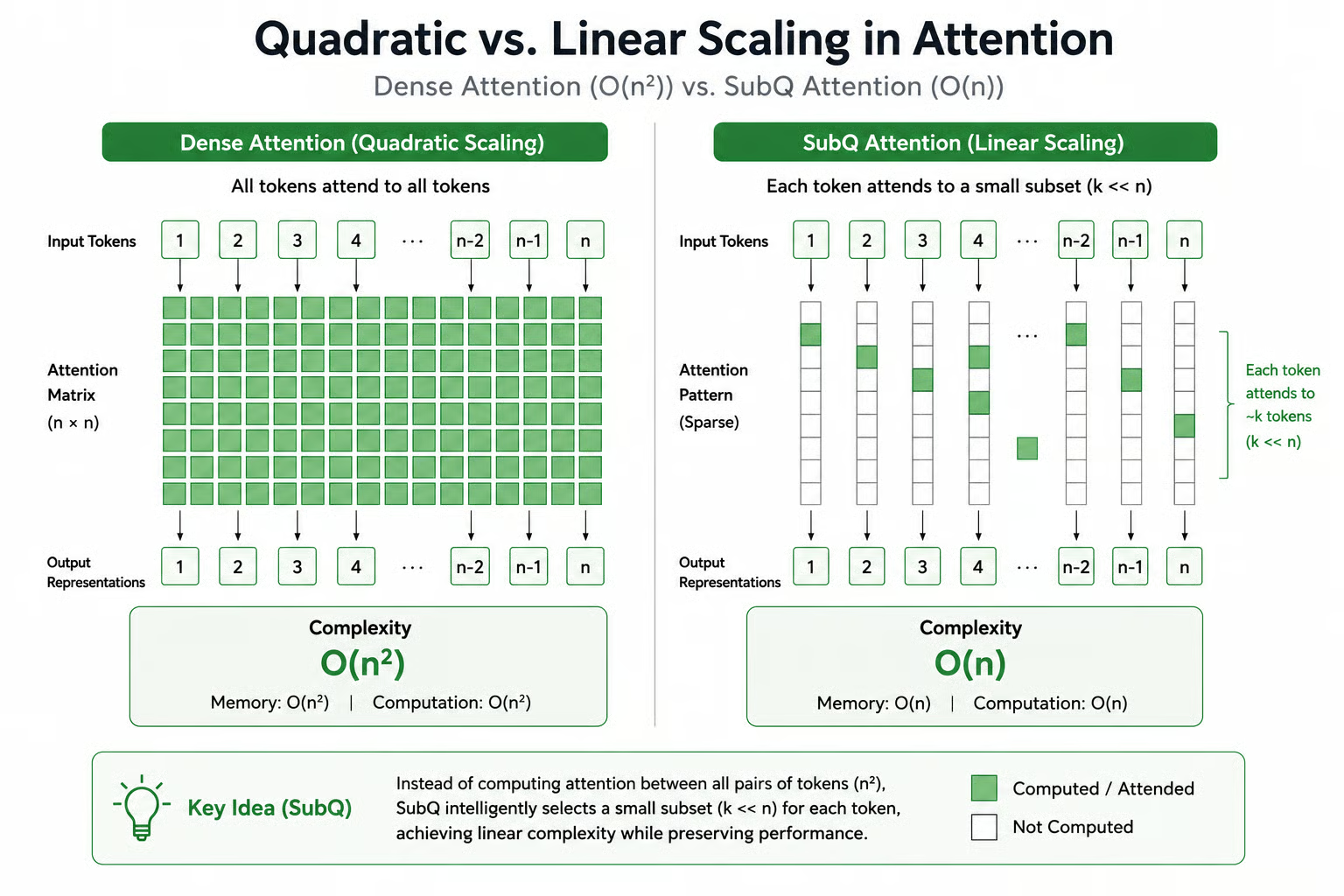

Instead of comparing every token with every other token, which is how standard dense attention works, SSA takes a selective approach. For each token, the model picks the most relevant tokens and computes relationships only within that subset.

This has two clear effects.

- First, it reduces the number of computations, which directly improves efficiency and lowers cost.

- Second, the selection is content-dependent. That means the model isn’t just looking at nearby tokens. It can pull in relevant tokens from anywhere in the sequence, even if they’re far apart.

What’s Special About SubQ?

In practice, the SSA architecture means the model focuses on what actually matters instead of processing everything equally. The intended outcome is similar accuracy to full attention, but with significantly lower compute and memory requirements.

Let’s take a closer look at dense attention first, and then compare both approaches.

The quadratic scaling problem in transformers

Today’s models like GPT, Claude, and Gemini rely on dense attention. At a high level, this means every token is compared with every other token in the input. As the input grows, the number of comparisons grows quadratically.

Take a long document and imagine the model needs to generate the last word. It looks back at every token in that document, builds relationships, and then decides what comes next. That’s why dense attention works well. It considers everything.

But that strength comes with a cost. As the input size increases, the compute and memory requirements rise sharply, and create context window limits. In simple terms, it scales at O(n²), where n is the number of tokens.

This is why most models stay within practical limits. In standard models, 128k tokens is the norm, and most powerful models like Claude Opus max out at 1M context window.

To work around this, most AI-powered systems avoid feeding the model full datasets. Instead, they rely on techniques like:

- RAG (Retrieval-Augmented Generation): Store data externally, fetch only relevant chunks, and pass them into the model.

- Chunking: Break large text into smaller pieces and send only relevant chunks to the model.

- Summarization strategies: Compress long content and feed summaries into the model, instead of the entire text.

If you want a deeper understanding of attention mechanisms, you can check out this tutorial on the Attention Mechanism in LLMs. To get hands-on experience with the building blocks of modern LLMs, I recommend taking our Transformer Models with PyTorch course.

How SubQ positions itself differently

Dense attention grows quadratically with input size, while SSA is designed to scale sub-quadratically, closer to O(n·k) instead of O(n²), where k is the number of tokens selected per step. When k is kept small relative to n, this is substantially more efficient than full attention.

Quadratic vs linear attention diagram showing dense all-to-all connections versus sparse selective connections.

The obvious concern here is accuracy. If the model is only looking at part of the input, it could miss important relationships. That trade-off is usually the reason dense attention exists in the first place.

SubQ’s claim is that this trade-off doesn’t show up in practice. They say the model attends to all the necessary tokens, even if they’re far away from the current token, and maintains similar accuracy to top models.

How Does Subquadratic Sparse Attention Work?

By now, we know that instead of comparing every token against every other token, SSA selects only the tokens that matter in the sequence and computes attention over those positions. Let’s look at how it makes that selection.

How SSA selects relevant relationships

SSA uses content-dependent routing. In simple terms, it picks tokens based on how relevant they are to the current token. It computes a similarity score between tokens, something like similarity(query_i, key_j), and keeps only the top k tokens with the highest scores for attention.

Over time, the model learns to prioritize meaningful tokens and ignore noise. That includes keywords, important entities, and tokens that carry strong contextual signals.

It not only depends on content, but SSA also preserves structural relationships in the sequence. For example, nearby tokens are always given attention through local attention patterns, while certain global tokens are designed to attend across the entire sequence.

SSA also includes hierarchical and clustering-based attention techniques. For example, it clusters similar tokens and computes the attention with the most relevant clusters. This means the model doesn't need to evaluate every token individually, but it can reason at the level of groups first, then zoom in on the tokens within those clusters, if necessary.

Training for long-context retrieval

A large context window alone doesn’t do the magic. Even if you give the model 12M tokens, it still needs to know how to use that context effectively. SSA is trained for this purpose:

- Pre-training: The base model is trained on massive, diverse datasets with long-context representations.

- Supervised fine-tuning: The model is then trained on structured tasks, including reasoning and code generation.

- Reinforcement learning: Rather than limiting the model to consider nearby tokens (local attention), this step is to make it use relevant tokens from the entire text (global attention).

The reinforcement learning stage is especially important for enterprise coding use cases. It allows the model to consider a broader context at once, the entire codebase in this case, while generating the output.

SubQ Benchmarks and Performance

Note that "SubQ 1M-Preview" refers to the version benchmarked at 1M tokens. The full 12M context window is accessible via the API.

On the three benchmarks Subquadratic chose to release, SubQ 1M-Preview trades blows with Claude Opus 4.7, GPT-5.5, and Gemini 3.1 Pro.

But the benchmark selection is narrow; exactly three tests, all focused on long-context retrieval and coding, the two things SubQ is explicitly designed to do well. Broader evaluations across general reasoning, math, multilingual performance, and safety have not been published.

The full model card is listed as "coming soon" on the website, so we can expect more benchmarks across general use cases there.

For now, here are the results across long-context retrieval and coding use cases:

|

Model |

SWE-Bench Verified |

RULER 128K |

MRCR v2 (8-needle, 1M) |

|

SubQ (Subquadratic) |

81.8% |

95.0% |

65.9% |

|

Claude Opus 4.7 (Anthropic) |

87.6% |

94.8% |

32.2% |

|

GPT-5.5 (OpenAI) |

88.7% |

Not available |

74.0% |

|

Gemini 3.1 Pro (Google DeepMind) |

80.6% |

Not available |

26.3% |

|

DeepSeek V4 Pro (DeepSeek) |

80.6% |

Not available |

83.5% |

What the numbers show

RULER 128K: SubQ scores 95% versus Opus 4.7's 94.8%. Though the accuracy difference is negligible, what actually matters here is cost: Subquadratic claims running this eval on SubQ cost around $8, versus roughly $2,600 on Opus at the same context length.

MRCR v2 (8-needle, 1M): This benchmark tests whether the model correctly retrieves and tracks 8 separate facts embedded across a 1M-token context. SubQ shows a research result of 83%, but the production score drops to 65.9%. This ~17-point gap between lab and deployed performance is notable and not fully explained. Even so, it remains competitive with GPT-5.5 (74.0%) and significantly ahead of Claude Opus 4.7 (32.2%) and Gemini 3.1 Pro (26.3%).

SWE-Bench Verified: SubQ scores 81.8%, ahead of Opus 4.6 at 80.8% but behind Opus 4.7 at 87.6%. The margins against Opus 4.6 are small and sensitive to harness setup. Against Opus 4.7 and GPT-5.5, SubQ is clearly behind.

At 12 million tokens: SubQ has been reported to score over 90% on needle-in-a-haystack tasks at 12M context, though this figure has not been verified in official benchmarks. No other frontier model has been tested at this length, so there is nothing to directly compare it to. It is the most architecturally interesting result from the launch and also the one that needs independent reproduction before drawing conclusions.

What SubQ Means for RAG, Coding Agents, and AI Costs

If SubQ’s architecture holds up at scale, it changes how LLM systems are built. Longer context becomes practical, reducing the need for aggressive chunking, retrieval, and token optimization. Let’s look at these changes in more detail.

When RAG becomes optional

For the last two years, Retrieval-Augmented Generation (RAG) has been the default answer to a fundamental limitation in LLMs: models cannot read everything at once. If your knowledge base is larger than the context window, you chunk documents, embed them, store them in a vector database, retrieve the most relevant pieces, and pass only those fragments into the model along with the prompt.

That entire ecosystem exists because context is scarce.

When a model can reliably process millions of tokens in a single pass, many of the engineering layers built around retrieval become less necessary for certain workflows. Instead of spending time deciding which chunks to retrieve, the system can ingest the raw material directly and reason over it end-to-end.

However, beyond the context window, RAG still matters for these capabilities:

- Cross-session memory: LLMs do not remember anything once a session ends. If a user comes back later, the model starts from scratch. Retrieval systems (or databases) store important information like past decisions, documents, or user data so the model can remember and use it in future interactions.

- Permissions and access control: Retrieval systems can enforce rules like “this user can only access data from their team.” This way, the model only receives documents that the user is allowed to see, which prevents accidental data leaks.

- Auditability: In enterprise settings, it’s not enough to give an answer; you need to show why it’s correct. Retrieval systems can point to the exact documents or sources used, so teams can verify results and debug issues if something goes wrong.

- Structured knowledge management: Companies constantly add, update, and remove documents. Retrieval systems use embeddings and indexing to organize this data so the model can find the latest and most relevant information quickly, without reprocessing everything every time.

For a new, interesting approach to adding persistent memory to AI agents, read our Supermemory Tutorial, in which you learn how to build an exercise trainer with short- and long-term memory.

Long-context coding workflows

Right now, most coding models can’t see the entire codebase. So we add layers on top, file search, chunking, ranking, and multi-step planning, just to keep the right context in the window and build relationships across files.

But with the SubQ 12M context, the entire codebase is loaded to the model at once. This simplifies agent design in a meaningful way.

- Planning becomes global: The model can reason about architecture, dependencies, and cross-file interactions without missing context.

- Execution is more coherent: Changes across multiple files can be coordinated in one pass, rather than through incremental updates.

- Review is more reliable: The model can verify its own changes against the entire codebase, reducing inconsistencies.

Cost economics

In standard transformer models, attention scales quadratically with sequence length. If you double the context, the cost doesn’t just double; it can make it four times more expensive.

SubQ claims to break that tradeoff.

- It reports ~1/20 the cost of comparable frontier models (Claude Opus)

- And up to ~1,000× reduction in compute at 12M-token context

If this holds in production, long-context processing stops being an expensive edge case and becomes something you can use more routinely.

However, along with a cheap context window, the model should be able to efficiently use the information we load into its context. Based on the benchmarks, SubQ claims comparable accuracy at a much lower cost, but it’s too early to make a final statement.

How Can I Access SubQ?

SubQ is not publicly available yet. All three products, the core API, SubQ Code, and SubQ Search, are currently in private beta, and access requires an early-access request through the SubQ website.

From a developer's perspective, the API is designed to be easy to integrate. It supports:

- streaming responses

- tool/function calling

- OpenAI-compatible endpoints

That means if your stack already works with OpenAI-style APIs, you typically don’t need to rewrite your integration.

SubQ Code is positioned as a command-line coding agent, while SubQ Search focuses on long-context search for deeper research workflows. Think of them as the SubQ versions of Claude Code and Perplexity.

Pricing for any of these tools is also not yet transparent. There are no publicly available per-token rates, which makes it difficult to independently validate the company’s cost claims.

Skepticism and Open Questions

Within hours of the launch, there’s already a lot of skepticism and mixed reactions on social media platforms and message boards like Hacker News. The discussion is split, with some seeing it as a real breakthrough while others compare it to an “AI Theranos” (a reference to the failed blood-testing startup that made fraudulent technology claims).

Most of the doubts focus on:

- They claim a 12M context window, but the benchmarks shared are all at 1M.

- They named the company Subquadratic, but in some places, they mention O(1)-like scaling. At O(1), you would expect much more than a 12M token context window. Even O(log n) would allow for larger limits.

A similar narrative showed up with Magic.dev in 2024. They made strong claims around extremely large context windows, up to 100M tokens, and major efficiency gains, especially for coding workflows.

The pitch was almost identical: load entire codebases, reduce retrieval complexity, and simplify agent design. But the outcome, at least publicly, has been more muted. Despite raising around $500M, there’s still limited real-world visibility or adoption as of early 2026.

What has been verified

SubQ’s claims are not purely theoretical. Subquadratic reports that benchmarks on RULER, MRCR v2, and SWE-Bench Verified were run by a third-party testing service, showing strong performance on long-context retrieval and competitive results on coding tasks.

However, these results have not yet been independently reproduced by external researchers, and the scope of the evaluation is narrow. All three benchmarks emphasize exactly the areas SubQ is built for (retrieving signals from large context and operating over code).

Another important detail is the architecture. The CTO has confirmed that SubQ does not train models from scratch, but instead builds on open-source base models (likely from families such as DeepSeek or Kimi). This is a practical choice for a small team. It speeds up iteration and reduces training cost. That also means the core innovation isn’t the base model itself, but the attention mechanism and system design around it.

Final Thoughts

SubQ is here, and the claims are pretty bold. The direction is clear: remove the context window constraint and let models handle much larger inputs. They’re positioning it as matching or beating frontier models on coding and long-context retrieval, at a much lower cost.

That said, it’s still early. We’re still waiting on the full model card for more visibility into broader capabilities and testing. The products built on top of it, SubQ Code and the API, are also not publicly available yet. I’m looking forward to getting hands-on with these tools and seeing how the claims hold up in production.

SubQ Model FAQs

Will SubQ change how prompts are written?

Potentially. If the claims hold and longer context becomes significantly cheaper, prompt design may shift toward including more raw information instead of heavily optimized or compressed inputs.

Can SubQ handle multimodal inputs like images and code?

Subquadratic's own website describes their architecture as enabling "large-context, multi-modal inference," suggesting multimodal support is part of their vision. However, no multimodal benchmarks have been published, and the current API and products in private beta are focused entirely on text and code. Whether image input is available or planned for near-term release hasn't been clearly documented.

How does SubQ perform on very short inputs?

All published benchmarks test SubQ at 128K tokens and above. How it performs on short, standard-length inputs against frontier models hasn't been disclosed. Sparse attention mechanisms can sometimes underperform dense attention at shorter sequence lengths, where the overhead of token selection outweighs the efficiency gains. Worth watching once the full model card drops.

Is the company targeting 50M or 100M tokens next?

The New Stack reported that Subquadratic has set a 50 million token context window as a Q4 target. If the architecture scales linearly, this is technically plausible.

Srujana is a freelance tech writer with the four-year degree in Computer Science. Writing about various topics, including data science, cloud computing, development, programming, security, and many others comes naturally to her. She has a love for classic literature and exploring new destinations.