Track

After months of rumors and hot on the heels of the new GPT-5.5 and Claude Opus 4.7, DeepSeek has finally released DeepSeek V4. The release comes in the form of two preview models, V4-Pro and V4-Flash, hitting the market with aggressive pricing and near-frontier performance.

DeepSeek V4-Pro boasts 1.6 trillion total parameters with a 1-million-token context window by default. DeepSeek claims it trails state-of-the-art closed models by only 3 to 6 months while costing a fraction of the price of competitors like OpenAI and Anthropic.

In this article, I’ll cover the DeepSeek V4 release, looking at its key features, benchmark performance, and how it compares to the competition. You can also see our guides to GPT-5.5 and Claude Opus 4.7. For a detailed comparison, read our articles on DeepSeek V4 vs GPT-5.5, Claude Opus 4.7 vs DeepSeek V4, and DeepSeek V4 Flash vs GPT-5.4 mini and nano.

DeepSeek V4 in a nutshell

- V4 comes in two flavors: Pro (1.6T parameters) and Flash (284B parameters).

- Both models feature a default 1-million-token context window.

- Pro costs $1.74 input / $3.48 output per million tokens, heavily undercutting GPT-5.5 and Opus 4.7.

- Available via API, web interface, and open weights (MIT License).

What is DeepSeek V4?

DeepSeek V4 is the highly anticipated new series of open-weight large language models from Chinese AI lab DeepSeek. Released on April 24, 2026, the V4 series comes in two versions: DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both models utilize a Mixture of Experts (MoE) architecture and offer a massive 1-million-token context window by default.

What makes DeepSeek V4 a major release for the industry is its combination of near-frontier performance and super-competitive pricing. The V4-Pro model boasts 1.6 trillion total parameters (49 billion active), making it the largest open-weights model currently available.

Despite its size, DeepSeek claims it trails state-of-the-art closed models by only 3 to 6 months while costing a fraction of the price of competitors like OpenAI and Anthropic.

Key Features of DeepSeek V4

Let’s look at some of the standout features of the latest release:

Structural innovation and 1M context efficiency

The standout feature of DeepSeek V4 is its highly efficient handling of long context.

According to the technical notes, the V4 series uses a Hybrid Attention Architecture that combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA).

Because of these structural changes, a 1-million-token context is now the standard across all DeepSeek services.

DeepSeek claims that, in a 1M-token context scenario, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and just 10% of the KV cache compared to its predecessor, DeepSeek-V3.2.

Three reasoning effort modes

To give users granular control over latency and performance, DeepSeek V4 includes three reasoning modes:

- Non-think: Fast, intuitive responses for routine daily tasks and low-risk decisions.

- Think High: Conscious logical analysis that is slower but highly accurate for complex problem-solving.

- Think Max: Pushes reasoning capabilities to their absolute fullest extent to explore the boundary of the model's capabilities.

Enhanced agentic capabilities

DeepSeek V4 is apparently optimized for agentic coding. The release notes claim it integrates seamlessly with leading AI agents like Claude Code, OpenClaw, and OpenCode, and it is already driving DeepSeek’s in-house agentic coding infrastructure.

Advanced training optimizations

Under the hood, DeepSeek introduced Manifold-Constrained Hyper-Connections (mHC) to strengthen residual connections and stabilize signal propagation. They also switched to the Muon Optimizer for faster convergence and greater training stability, pre-training the models on over 32 trillion diverse tokens.

DeepSeek V4 Benchmarks

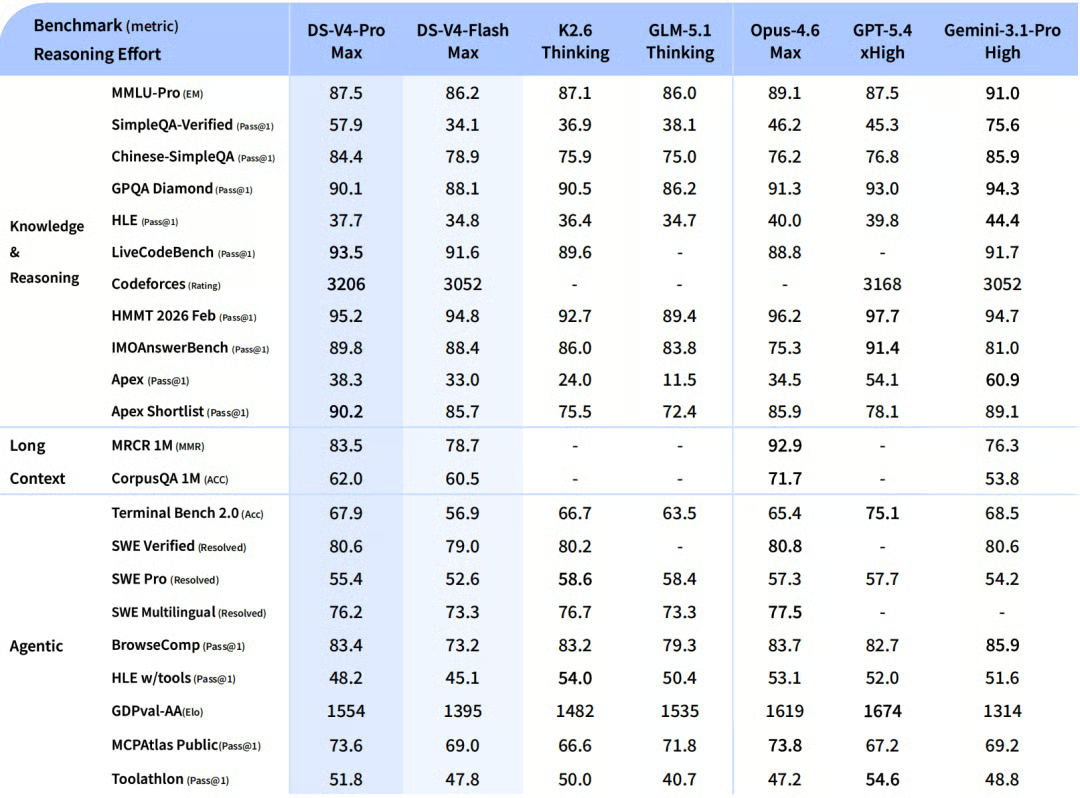

According to DeepSeek’s internal results, DeepSeek V4 demonstrates impressive performance, particularly when pushed to its maximum reasoning limits (DeepSeek-V4-Pro-Max).

According to the official release notes, here is how the model stacks up against the wider industry:

Knowledge and reasoning

Pro-Max easily outperforms other open-source models and beats older frontier models like GPT-5.2. It scores a highly competitive 87.5% on MMLU-Pro and 90.1% on GPQA Diamond, alongside a massive 92.6% on GSM8K for math. While it still trails the absolute bleeding edge (GPT-5.4 and Gemini-3.1-Pro) by a few months, it has significantly closed the knowledge gap.

Agentic tasks

Pro-Max is on par with leading open models, hitting 67.9% on Terminal Bench 2.0 and 55.4% on SWE-Bench Pro. While it falls slightly short of the newest closed models on public leaderboards, internal tests show it beating Claude Sonnet 4.5 and approaching Opus 4.5 levels.

Long context

The 1-million-token window isn't just for show. Pro-Max delivers incredibly strong results here, scoring 83.5% on MRCR 1M (MMR) needle-in-a-haystack retrieval tests. This actually surpasses Gemini-3.1-Pro on academic long-context benchmarks.

DeepSeek V4 Pro vs Flash

Because of its smaller size, Flash-Max naturally scores lower on pure knowledge and struggles with the most complex agent workflows. However, if you give it a larger "thinking budget," it achieves reasoning scores comparable to older frontier models, making it an incredibly cost-effective option for heavy workloads.

How Can I Access DeepSeek V4?

There are several ways to access DeepSeek V4 right now:

- Web interface: You can try both models immediately at chat.deepseek.com via Instant Mode or Expert Mode.

- API access: The API is available today. Developers just need to update their model parameter to

deepseek-v4-proordeepseek-v4-flash. The API maintains compatibility with both OpenAI ChatCompletions and Anthropic API formats. (Note: legacydeepseek-chatanddeepseek-reasonermodels will be retired on July 24, 2026). - Open weights: Both models are released under the MIT License. You can download the weights directly from Hugging Face or ModelScope. Pro is an 865GB download, while Flash is a much more manageable 160GB.

DeepSeek V4 vs Competitors

Over the last week, we’ve seen the release of OpenAI's GPT-5.5 and Anthropic's Claude Opus 4.7. While those models boast top-tier capabilities, especially in long-context reasoning and agentic coding, DeepSeek V4 competes heavily on value and open accessibility.

Here is how DeepSeek-V4-Pro compares to the new flagship models from OpenAI and Anthropic:

|

Feature/Benchmark |

DeepSeek V4 Pro |

GPT-5.5 |

Claude Opus 4.7 |

|

API Pricing (Input / Output per 1M) |

$1.74 / $3.48 |

$5.00 / $30.00 |

$5.00 / $25.00 |

|

Context Window |

1M tokens |

~1M tokens |

~1M tokens |

|

SWE-bench Pro (Coding) |

55.4% |

58.6% |

64.3% |

|

Terminal-Bench 2.0 (Agentic) |

67.9% |

82.7% |

69.4% |

|

Open Weights |

Yes (MIT License) |

No (Closed) |

No (Closed) |

Note: For users prioritizing budget, DeepSeek V4 Flash costs just $0.14 per 1M input tokens and $0.28 per 1M output tokens, undercutting even small models like GPT-5.4 Nano.

How Good is DeepSeek V4?

DeepSeek V4 is an incredibly disruptive release. According to DeepSeek's self-reported benchmarks, the Pro model trails state-of-the-art frontier models (like GPT-5.4 and Gemini-3.1-Pro) by only 3 to 6 months in developmental trajectory.

However, looking at the wider context of the industry, raw performance is only half the story. The big headline of DeepSeek V4 lies in its ultra-high context efficiency and rock-bottom pricing.

Delivering near-frontier capabilities, including a 1M token context window, at a fraction of the cost of GPT-5.5 or Opus 4.7 makes DeepSeek V4 the most compelling option for high-volume enterprise tasks, open-source researchers, and budget-conscious developers.

DeepSeek V4 Use Cases

With those strengths in mind, here are a few areas where I see V4 excelling:

- Automated software engineering: Strong agentic benchmarks and integration with tools like OpenClaw make V4-Pro a solid candidate for autonomous codebase refactoring and debugging.

- High-volume document processing: The reduced costs in 1M-token context computing mean financial analysts and legal teams can process mountains of PDFs, 10-Ks, and contracts for pennies.

- Local deployment and research: Because it uses an MIT license, researchers can run quantization (especially on the 160GB Flash model) to experiment with frontier-level AI locally on high-end consumer hardware.

Final Thoughts

DeepSeek V4 is a massive step forward for the open-source AI community. While GPT-5.5 and Claude Opus 4.7 might edge it out on the absolute hardest coding and reasoning benchmarks, DeepSeek V4 democratizes access to 1-million-token context windows and complex agentic workflows.

If you want to stay ahead of the curve and learn how to implement these cutting-edge models in your own workflows, I recommend checking out some of our resources. Particularly, our Understanding Prompt Engineering course to refine how you communicate with models like DeepSeek, or our AI Agent Fundamentals skill track, if you want to start building scalable agentic systems.

DeepSeek V4 FAQs

Is DeepSeek V4 open-source?

Yes. Both DeepSeek-V4-Pro and DeepSeek-V4-Flash are open-weight models released under the highly permissive MIT License. This allows developers and researchers to use, modify, and deploy the models commercially.

What is the context window for DeepSeek V4?

Both the Pro and Flash models feature a default 1-million-token context window. Thanks to its new Hybrid Attention Architecture, DeepSeek V4 handles this massive context with a fraction of the compute and memory costs of older models.

How much does the DeepSeek V4 API cost?

The pricing is highly competitive. DeepSeek-V4-Flash costs just $0.14 per 1M input tokens and $0.28 per 1M output tokens. DeepSeek-V4-Pro costs $1.74 per 1M input tokens and $3.48 per 1M output tokens.

How large are the DeepSeek V4 models?

DeepSeek uses a Mixture of Experts (MoE) architecture. The Pro model contains 1.6 trillion total parameters (49 billion active) and requires an 865GB download. The Flash model contains 284 billion parameters (13 billion active) and requires a 160GB download.

Does DeepSeek V4 beat GPT-5.5 and Claude Opus 4.7?

In pure capability, no. DeepSeek's self-reported data suggests the V4-Pro model trails state-of-the-art closed models by about 3 to 6 months on the hardest coding and reasoning benchmarks. However, it delivers near-frontier performance at roughly a third of the API cost, making it highly disruptive.

A senior editor in the AI and edtech space. Committed to exploring data and AI trends.