
Computer vision has long operated on a clean separation between models that produce images and models that understand them. Generative models handled synthesis, while discriminative architectures handled classification, segmentation, or depth regression. The standard playbook for any new vision task was to pick an architecture, attach a task-specific head, and finetune on labeled data.

That assumption is what Vision Banana from Google DeepMind sets out to break. The paper argues that image generators have already learned everything a generalist vision model needs, including segmentation, depth, surface normals, and more. In the authors’ view, all that's missing is a thin instruction-tuning layer to make those latent capabilities measurable on benchmarks.

In this post, I'll start with what Vision Banana actually is and why the paper has caught the field's attention, then walk through how the model works. I'll end with what works, what doesn't, and what practitioners should take away. Along the way, I'll surface the questions I had while reading the paper. Other readers will probably have the same ones.

Note: Vision Banana itself isn't publicly accessible. What's out is the paper and the project page. The base model, Nano Banana Pro, is available through the Gemini API and Google AI Studio.

What Is Vision Banana?

Vision Banana is a research model from Google DeepMind, built by taking Nano Banana Pro (a text-to-image generator) and applying lightweight instruction tuning on a mixture of its original training data and a small amount of computer vision task data.

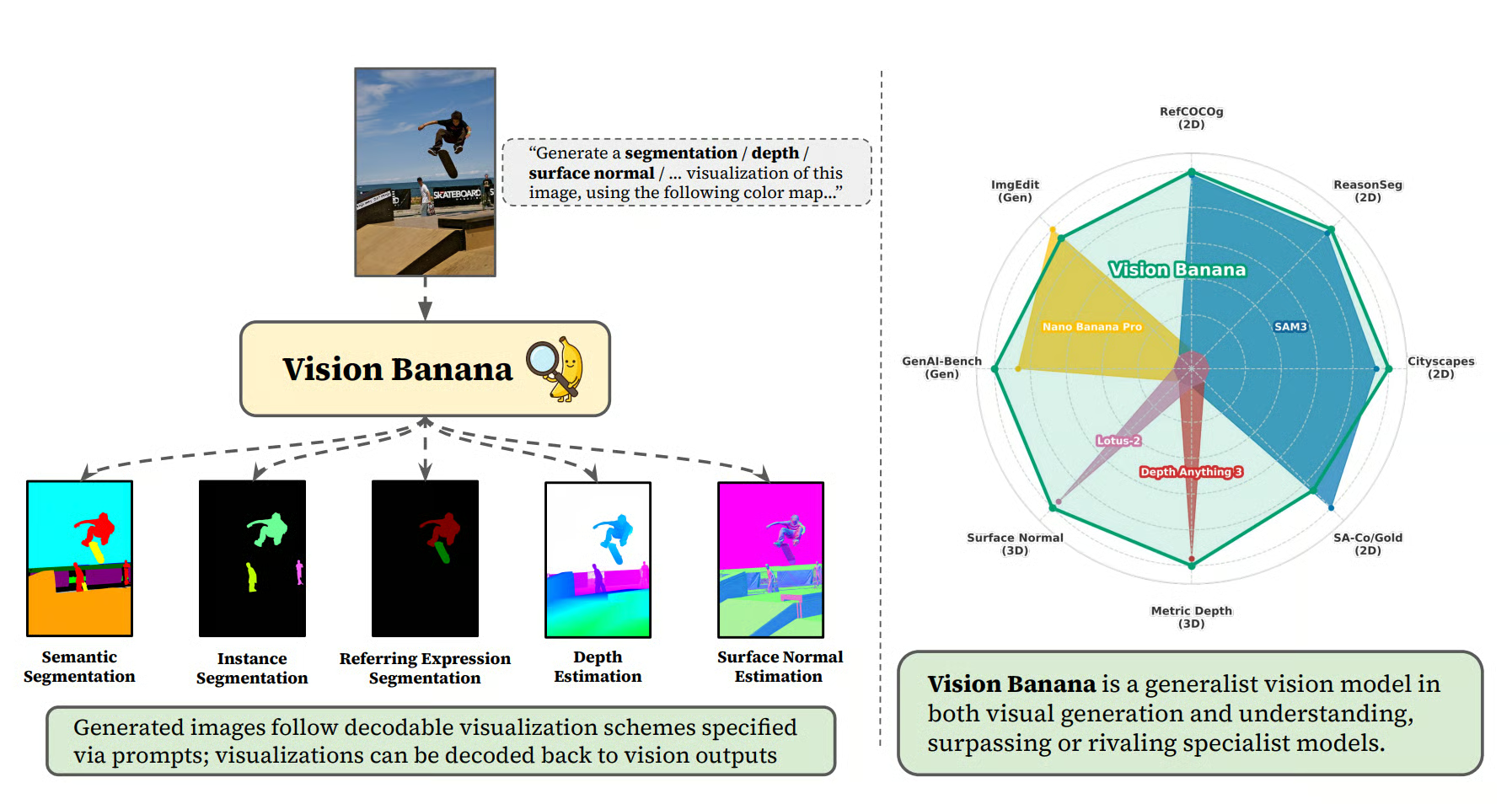

Figure 1: Hidden visual understanding capabilities of image generators by instruction-tuning Nano Banana Pro (Source)

The recipe is to:

- Keep the base model architecture unchanged

- Mix segmentation, depth, and surface normal data into the training distribution at a low ratio

- Instruction-tune

The entire training methodology requires no new architecture, no custom heads, no auxiliary losses, and no specialized decoders.

The resulting model:

- Beats SAM 3 on three segmentation benchmarks

- Beats Depth Anything V3 on metric depth estimation

- Beats Lotus-2 on surface normal estimation

- Retains its base model's image generation quality (statistical tie on GenAI-Bench and ImgEdit)

All of this is achieved under a strict zero-shot transfer protocol, meaning the model has never seen the training splits of the benchmarks it's evaluated on.

Why does this matter?

Vision representation learning has tried many pretraining objectives over the years. Common ones include:

- Supervised classification (ImageNet, JFT)

- Contrastive learning (CLIP, SigLIP)

- Self-distillation (DINO, DINOv2)

- Masked autoencoding (MAE, BEiT)

What’s important is that none of them are generative. Image generation has historically been treated as a downstream capability, not a foundation for understanding. Early generative pretraining attempts (iGPT, LVM) consistently lagged contrastive methods on representation quality benchmarks.

The scaling of generative autoregressive objectives via next-token prediction catalyzed the emergence of zero-shot generalist capabilities in models like GPT-3. Complex downstream behaviors were found to be latent within the high-dimensional weight space, subsequently surfaced through lightweight supervised fine-tuning and instruction alignment. Examples include:

- Neural machine translation

- Polyglot code synthesis

- Abstractive summarization

- Arithmetic reasoning

Vision Banana is making the case that we're at the same moment for vision. The paper explicitly draws an analogy between image generation pretraining and language model pretraining, and between instruction tuning and the alignment step.

If the analogy holds, we’ll stop building task-specific pipelines and treat a single large image generator as the foundation layer, specifying tasks via prompts.

How Does Vision Banana Work?

The mechanism is simple: every vision task is reframed as "generate an RGB image with these properties," and at decode time, you turn that RGB image back into task predictions deterministically.

Here's how each task maps:

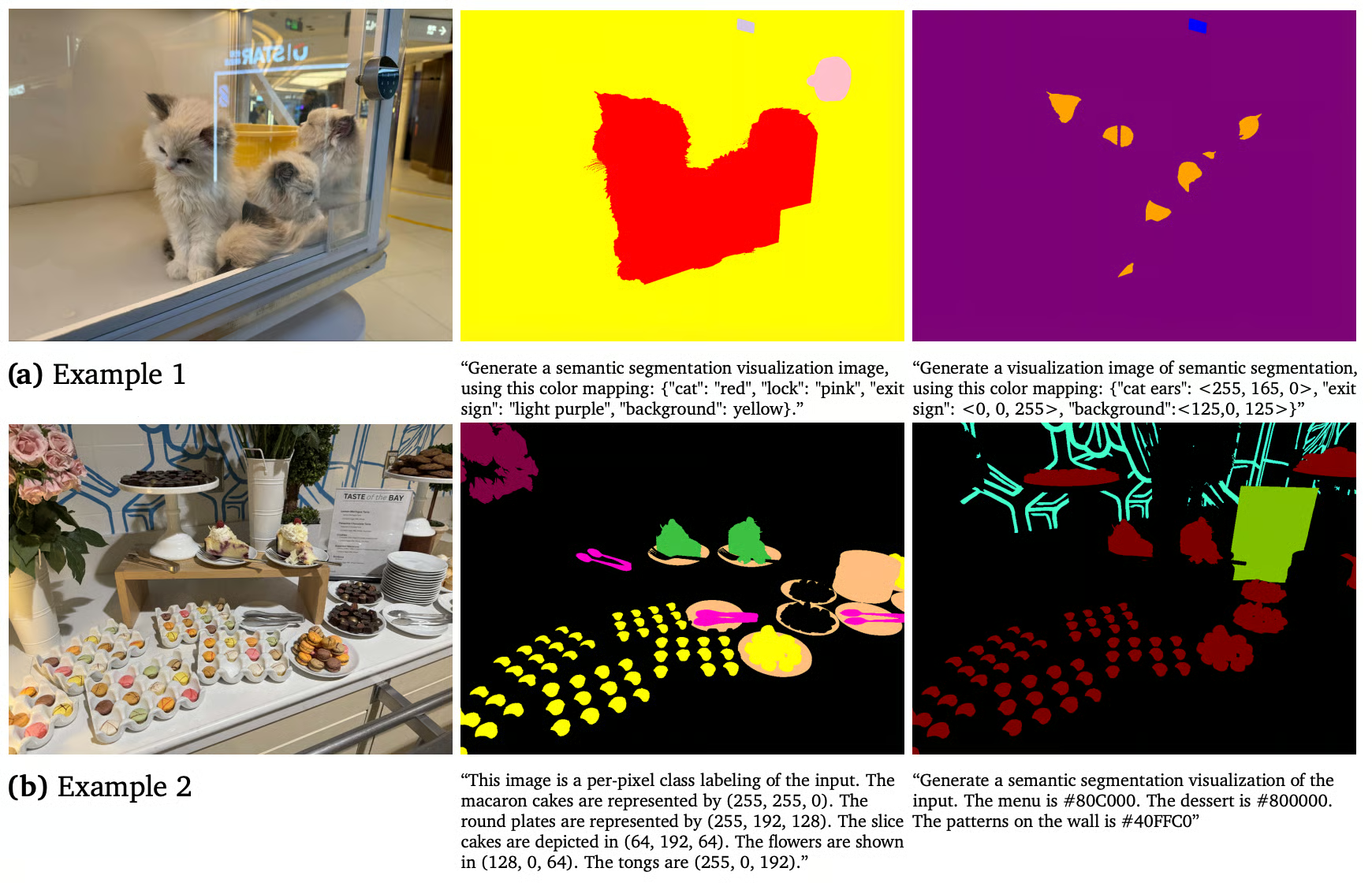

Semantic segmentation

Prompt the model with per-class color assignments, and at decode time, assign each pixel to the nearest specified color. The vocabulary is whatever you put in the prompt, so the approach is open-vocabulary by construction.

It will become clearer what I mean when we look at an example. Here is a prompt from the paper:

This image is a per-pixel class labeling of the input. The macaron cakes are represented by (255, 255, 0). The round plates are represented by (255, 192, 128). The slice cakes are depicted in (64, 192, 64). The flowers are shown in (128, 0, 64). The tongs are (255, 0, 192).

Figure 2: Semantic segmentation (Source)
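The decode step for a prompt like the one above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the function names `nearest_class` and `decode_semantic` are hypothetical, squared Euclidean distance in RGB is an assumed metric, and the tiny "generated" image is made up for the example. Only the class colors come from the prompt.

```python
# Class -> RGB assignments, copied from the example prompt.
PALETTE = {
    "macaron cakes": (255, 255, 0),
    "round plates": (255, 192, 128),
    "slice cakes": (64, 192, 64),
    "flowers": (128, 0, 64),
    "tongs": (255, 0, 192),
}


def nearest_class(pixel, palette=PALETTE):
    """Return the class whose prompt color is closest to `pixel`.

    ASSUMPTION: squared Euclidean distance in RGB is used as the metric.
    """
    def sq_dist(color):
        return sum((p - c) ** 2 for p, c in zip(pixel, color))

    return min(palette, key=lambda name: sq_dist(palette[name]))


def decode_semantic(image, palette=PALETTE):
    """Map an H x W grid of RGB tuples to an H x W grid of class names."""
    return [[nearest_class(px, palette) for px in row] for row in image]


# A made-up 2 x 2 output with slightly off colors, as a generator might produce.
generated = [
    [(250, 251, 6), (130, 4, 60)],
    [(66, 190, 70), (255, 190, 125)],
]
labels = decode_semantic(generated)
print(labels)
```

Because the palette is just a dictionary built from the prompt, changing the vocabulary means changing the prompt and the dictionary, nothing else. The sketch does not handle pixels that belong to none of the listed classes; that would need a background color or a distance threshold.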

Instance segmentation

Instance segmentation is trickier than semantic segmentation, because the number of instances isn't known in advance. So, you can't pre-assign colors.

Vision Banana's solution to this is to do one class per inference, let the model dynamically assign distinct colors to distinct instances, then cluster pixels to color modes at decode time.
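To make "cluster pixels to color modes" concrete, here is a rough sketch under stated assumptions. The paper does not spell out its clustering procedure in the text above, so everything here is illustrative: the coarse-histogram approach, the function names, the bin size, and the near-black background are all assumptions.

```python
import math
from collections import Counter


def find_color_modes(pixels, bin_size=32, min_count=2):
    """Find dominant colors by histogramming pixels into coarse RGB bins.

    ASSUMPTION: a coarse histogram stands in for whatever clustering is
    actually used; bin_size and min_count are hypothetical knobs.
    """
    def bin_of(px):
        return tuple(c // bin_size for c in px)

    counts = Counter(bin_of(px) for px in pixels)
    modes = []
    for key, count in counts.items():
        if count < min_count:
            continue  # too few pixels to count as an instance color
        members = [px for px in pixels if bin_of(px) == key]
        # Mode center = mean color of the pixels that fell into this bin.
        modes.append(tuple(sum(ch) / len(members) for ch in zip(*members)))
    return modes


def decode_instances(pixels, background=(0, 0, 0), bg_tol=30.0):
    """Assign each pixel an instance id, or -1 for background.

    ASSUMPTION: background pixels are rendered near `background`.
    """
    modes = [m for m in find_color_modes(pixels)
             if math.dist(m, background) > bg_tol]
    ids = []
    for px in pixels:
        if math.dist(px, background) <= bg_tol:
            ids.append(-1)
        else:
            ids.append(min(range(len(modes)),
                           key=lambda i: math.dist(px, modes[i])))
    return ids, modes


# Made-up flat pixel list: two reddish pixels, two greenish, two dark, one reddish.
pixels = [(250, 10, 12), (252, 8, 9), (10, 240, 15), (12, 238, 11),
          (3, 2, 1), (0, 0, 0), (249, 12, 10)]
ids, modes = decode_instances(pixels)
print(ids, len(modes))
```

A histogram like this splits a color that straddles a bin boundary into two modes, which hints at why dense scenes with many similar colors are hard for this kind of decoding.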

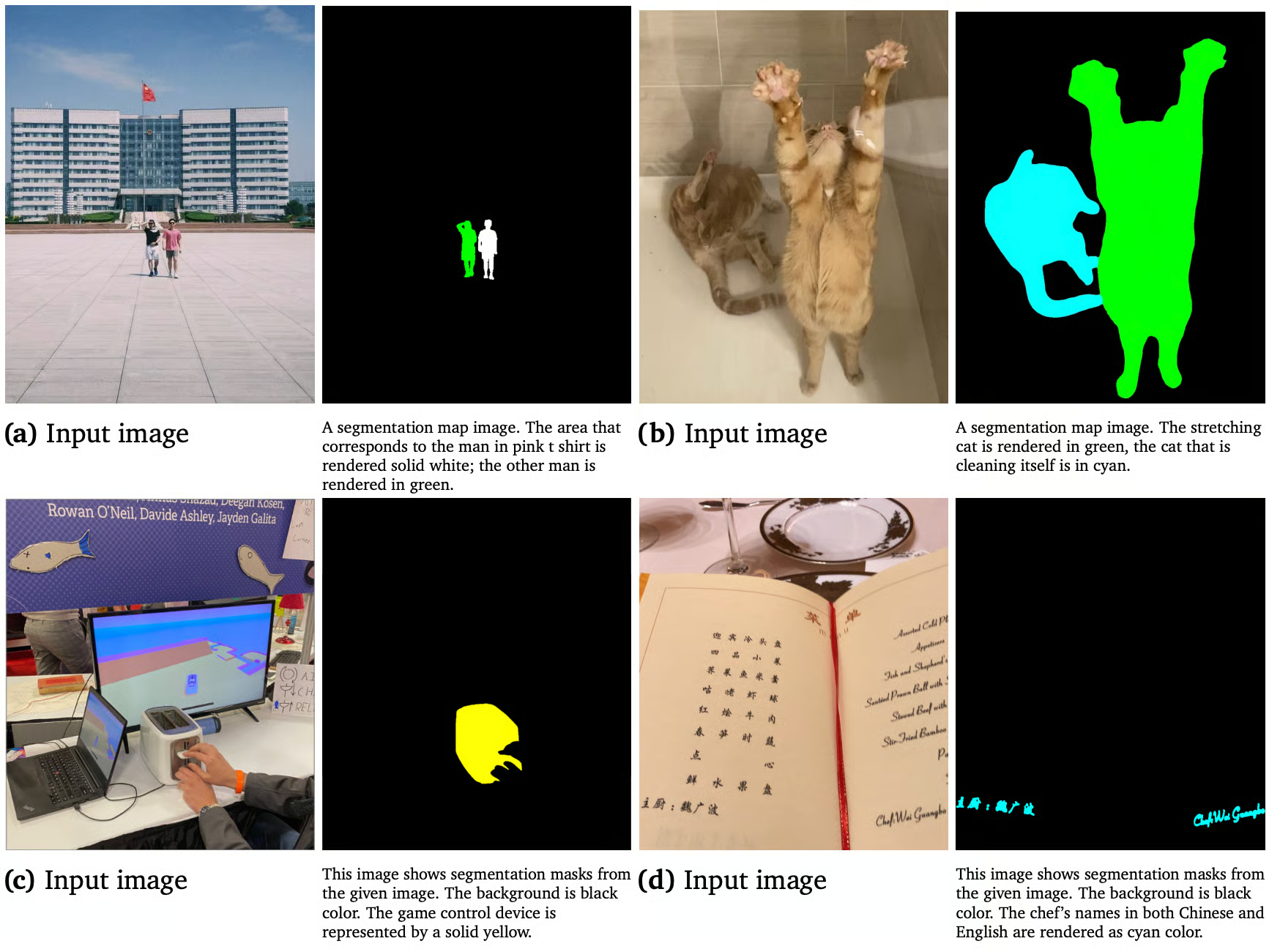

Expression segmentation

Vision Banana can take in a prompt with a natural-language description of what to segment, and return a mask. Here is an example prompt from the paper:

A segmentation map image. The stretching cat is rendered in green, the cat that is cleaning itself is in cyan.

This is where multimodal reasoning baked into generative pretraining shines. Discriminative models struggle with referring expressions because the task requires joint linguistic and visual reasoning. Generative models trained on billions of caption-image pairs handle it naturally.

Figure 3: Vision Banana can understand natural language prompts and reason about them (Source)

Depth and surface normals

Both depth and surface normals are projected into the RGB space via bijective mappings, ensuring that the latent geometric properties are preserved with zero information loss during the transformation. We will dissect the specifics of the depth-to-RGB encoding in the following section.

This unified inference paradigm treats every computer vision task as a deterministic image-generation problem. By maintaining a consistent RGB interface throughout the pipeline, the model avoids task-specific architectural branches, relying entirely on prompt-driven conditioning to define the output semantics.

Now, let's understand depth in a bit more detail. It's where the whole approach could most easily fall apart.

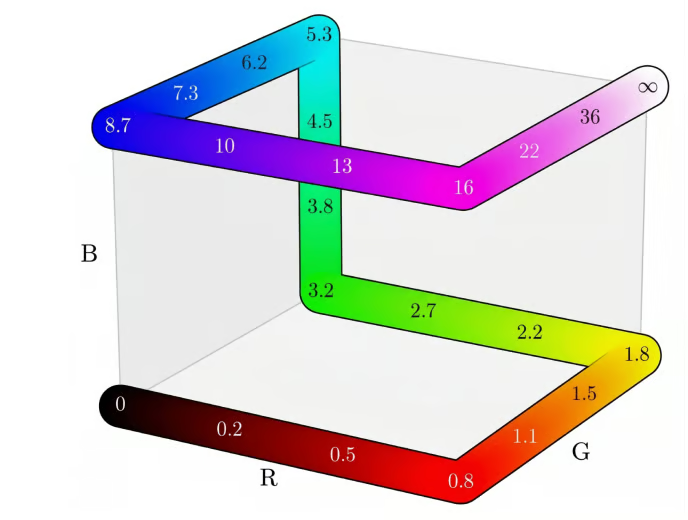

Encoding Unbounded Depth into RGB

If the encoding isn't bijective, you can't recover metric distances at decode time, and the entire "image generation as universal interface" claim collapses with it. This is worth understanding because it tells you whether the approach is principled or hacky.

The problem here is that depth values are unbounded real numbers in [0, ∞), while each RGB channel is bounded to [0, 1]. So, to use "generate the depth map" as a training signal, you need a bijective mapping in which every metric depth corresponds to exactly one RGB value and every such RGB value can be inverted back to meters at decode time.

Vision Banana's approach has two stages:

Stage 1: Compress unbounded depth onto [0, 1)

The authors use Barron's (2025) power transform with shape parameter λ = -3, which gives near-field regions more resolution than far-field. An object 2 meters away gets more precision than one 200 meters away. This matches what most applications care about, i.e., graspable objects, not distant ones.

Stage 2: Map [0, 1) onto RGB cube edges

Next, they interpolate along a piecewise-linear path that traces the edges of the RGB cube, essentially the first iteration of a 3D Hilbert curve. This produces smooth, perceptually sensible color transitions with no ambiguity about which color corresponds to which depth.

Since both stages are strictly invertible, the composition is a bijection. Then they train on RGB-encoded ground truth, and at inference, project the predicted RGB onto the nearest cube edge and invert back to meters.

Figure 4: A visualization of our bijection between scalar metric distances 𝑑 ≥ 0 and RGB color values (Source)
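The two stages can be sketched as a round trip in plain Python. This is a sketch under several assumptions, not the paper's code: the compressive function `1 - (1 + d / SCALE) ** LAMBDA` is a stand-in with the same qualitative behavior rather than the exact transform from Barron (2025), `SCALE` is a hypothetical length scale, and the corner order in `PATH` is one valid walk along cube edges that may differ from the one the authors use. Only λ = -3 and the "project onto the nearest cube edge" decode step come from the text.

```python
# ASSUMPTION: one possible walk through all 8 RGB cube corners along 7 edges.
PATH = [
    (0, 0, 0), (0, 0, 1), (0, 1, 1), (0, 1, 0),
    (1, 1, 0), (1, 1, 1), (1, 0, 1), (1, 0, 0),
]
LAMBDA = -3.0  # shape parameter quoted in the text
SCALE = 4.0    # ASSUMPTION: hypothetical length scale in meters


def compress(depth_m):
    """Stage 1 (stand-in transform): map depth in [0, inf) to t in [0, 1)."""
    return 1.0 - (1.0 + depth_m / SCALE) ** LAMBDA


def expand(t):
    """Inverse of `compress`; t is clamped just below 1 to avoid dividing by zero."""
    t = min(t, 1.0 - 1e-9)
    return SCALE * ((1.0 - t) ** (1.0 / LAMBDA) - 1.0)


def t_to_rgb(t):
    """Stage 2: walk a fraction t of the way along the piecewise-linear edge path."""
    pos = t * (len(PATH) - 1)
    i = min(int(pos), len(PATH) - 2)
    frac = pos - i
    a, b = PATH[i], PATH[i + 1]
    return tuple(a[k] + frac * (b[k] - a[k]) for k in range(3))


def rgb_to_t(rgb):
    """Project an RGB value onto the nearest path edge and return its position t."""
    best_t, best_d2 = 0.0, float("inf")
    for i in range(len(PATH) - 1):
        a, b = PATH[i], PATH[i + 1]
        # Edges are axis-aligned with unit length, so a dot product gives the fraction.
        frac = sum((rgb[k] - a[k]) * (b[k] - a[k]) for k in range(3))
        frac = min(1.0, max(0.0, frac))
        point = [a[k] + frac * (b[k] - a[k]) for k in range(3)]
        d2 = sum((rgb[k] - point[k]) ** 2 for k in range(3))
        if d2 < best_d2:
            best_t, best_d2 = (i + frac) / (len(PATH) - 1), d2
    return best_t


def encode_depth(depth_m):
    return t_to_rgb(compress(depth_m))


def decode_depth(rgb):
    return expand(rgb_to_t(rgb))


for d in (0.0, 0.5, 2.0, 13.71, 200.0):
    rgb = encode_depth(d)
    print(d, [round(c, 4) for c in rgb], decode_depth(rgb))

# A slightly noisy prediction still snaps to the nearest edge before inversion.
noisy_depth = decode_depth((0.98, 0.97, 0.93))
```

The projection step is what makes the decoder tolerant of small color errors: a predicted pixel does not need to land exactly on the path, only closer to the correct edge than to any other.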

For robustness, training augments with alternative colormaps like Plasma, Inferno, Viridis, and grayscale, so the model can handle whichever visualization style you ask for in the prompt.

Surface normals are easier because they are already unit vectors with components in [-1, 1], which maps directly to RGB with the standard camera-space convention (+x right, +y up, +z out of the image plane). So, light green means facing up, pinkish red means facing left, and light blue/purple means facing the camera. No warp needed.
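For comparison with the depth case, the normal-to-RGB mapping fits in two short functions. This is a minimal sketch: the affine map `(n + 1) / 2` is the common way to fit [-1, 1] into [0, 1] and is assumed here, the function names are hypothetical, and the per-axis sign convention is also an assumption (a renderer that flips an axis only changes a sign in both functions).

```python
import math


def normal_to_rgb(normal):
    """Map a unit normal with components in [-1, 1] to RGB in [0, 1]."""
    return tuple((c + 1.0) / 2.0 for c in normal)


def rgb_to_normal(rgb):
    """Invert the mapping, then re-normalize.

    Predicted colors rarely decode to an exactly unit-length vector,
    so the result is rescaled to length 1.
    """
    v = [2.0 * c - 1.0 for c in rgb]
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("mid-gray (0.5, 0.5, 0.5) does not encode a direction")
    return tuple(c / norm for c in v)


facing_camera = (0.0, 0.0, 1.0)  # +z out of the image plane
facing_up = (0.0, 1.0, 0.0)      # +y up

print(normal_to_rgb(facing_camera))  # (0.5, 0.5, 1.0), a light blue/purple
print(normal_to_rgb(facing_up))      # (0.5, 1.0, 0.5), a light green
print(rgb_to_normal(normal_to_rgb(facing_up)))
```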

Note: Vision Banana uses no camera intrinsics anywhere, neither in training nor in inference. Most SOTA depth methods (Depth Anything V3, MoGe-2, UniK3D, DepthLM) use intrinsics somewhere in the loop because they help disambiguate monocular scale.

Vision Banana inferring absolute scale from visual priors alone, and still winning, is the strongest piece of evidence in the paper that the generative pretraining is doing geometric work and not just pattern-matching visualizations it saw during training.

Specialist Models vs Vision Banana: A Comparison

The cleanest way to see what's actually different is to compare the two paradigms directly on a single task. Let’s compare depth estimation with the specialist approach (Marigold, Lotus, Depth Anything V3) and Vision Banana.

The two paradigms produce different artifacts. Marigold is a specialized tool, while Vision Banana is a generalist whose breadth comes from a single training step.

The differences cascade into how the model gets used in practice:

| Aspect | Specialist (Marigold-style) | Vision Banana |
| --- | --- | --- |
| Output format | Task-specific tensor | RGB image |
| Vocabulary | Fixed at training | Defined in prompt |
| Multi-task | One model per task | One model, many tasks |
| Camera intrinsics | Often required | Not used |
| Generation capability | Lost in finetuning | Preserved |
| Inference cost | Low | High (full image generator) |

The benchmarks show several clear wins and one honest loss, all under zero-shot transfer, i.e., the model has never seen the evaluation benchmarks' training splits.

Superior performance profiles:

- Semantic segmentation (Cityscapes): Achieved 0.699 mean Intersection over Union (mIoU), surpassing the specialized Segment Anything Model (SAM 3) at 0.652.

- Referring segmentation (RefCOCOg): Demonstrated a 0.738 cIoU (cumulative IoU), exceeding the SAM 3 Agent baseline of 0.734.

- Complex visual reasoning (ReasonSeg): When jointly leveraged with Gemini 2.5 Pro, attained a 0.793 gIoU, outperforming the SAM 3 Agent's 0.770 and establishing a new zero-shot state-of-the-art, even against non-zero-shot, fully supervised methodologies.

- Metric depth estimation: Registered an average δ (threshold accuracy) of 0.929 across four diverse datasets, improving upon Depth Anything V3's 0.918.

- Surface normal estimation: Recorded a lower average mean angular error of 15.549° across three indoor datasets, demonstrating superior geometric fidelity compared to Lotus-2 (16.558°).

Performance deficit:

- Instance segmentation (SA-Co/Gold): Performance was 0.540 pmF1 (evaluated on a 500-query sample of SA-Co/Gold, not the full benchmark), trailing the non-zero-shot SAM 3 (0.661) and on par with the zero-shot DINO-X (0.552). This result likely stems from the constraint of discrete color assignment for instance cardinality, which restricts resolution in highly dense scenes.
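For readers less familiar with the two geometry metrics in the list above, here is a small sketch of how they are commonly computed. The threshold of 1.25 is the conventional choice for depth threshold accuracy and is an assumption here, since the text does not state which threshold the paper reports. The function names and sample values are made up for illustration.

```python
import math


def threshold_accuracy(pred, gt, threshold=1.25):
    """Fraction of pixels where max(pred/gt, gt/pred) is below `threshold`.

    ASSUMPTION: 1.25 is the conventional threshold; higher scores are better.
    """
    hits = sum(1 for p, g in zip(pred, gt) if max(p / g, g / p) < threshold)
    return hits / len(gt)


def mean_angular_error_deg(pred_normals, gt_normals):
    """Mean angle in degrees between predicted and true unit normals (lower is better)."""
    total = 0.0
    for p, g in zip(pred_normals, gt_normals):
        cos = sum(a * b for a, b in zip(p, g))
        cos = max(-1.0, min(1.0, cos))  # guard against rounding outside [-1, 1]
        total += math.degrees(math.acos(cos))
    return total / len(gt_normals)


# Made-up depths in meters: three of the four predictions fall within the ratio.
pred_depth = [1.0, 2.1, 5.0, 13.71]
true_depth = [1.0, 2.0, 8.0, 12.87]
print(threshold_accuracy(pred_depth, true_depth))  # 0.75

# Made-up normals: one exact match and one prediction off by a right angle.
pred_n = [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0)]
true_n = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0)]
print(mean_angular_error_deg(pred_n, true_n))
```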

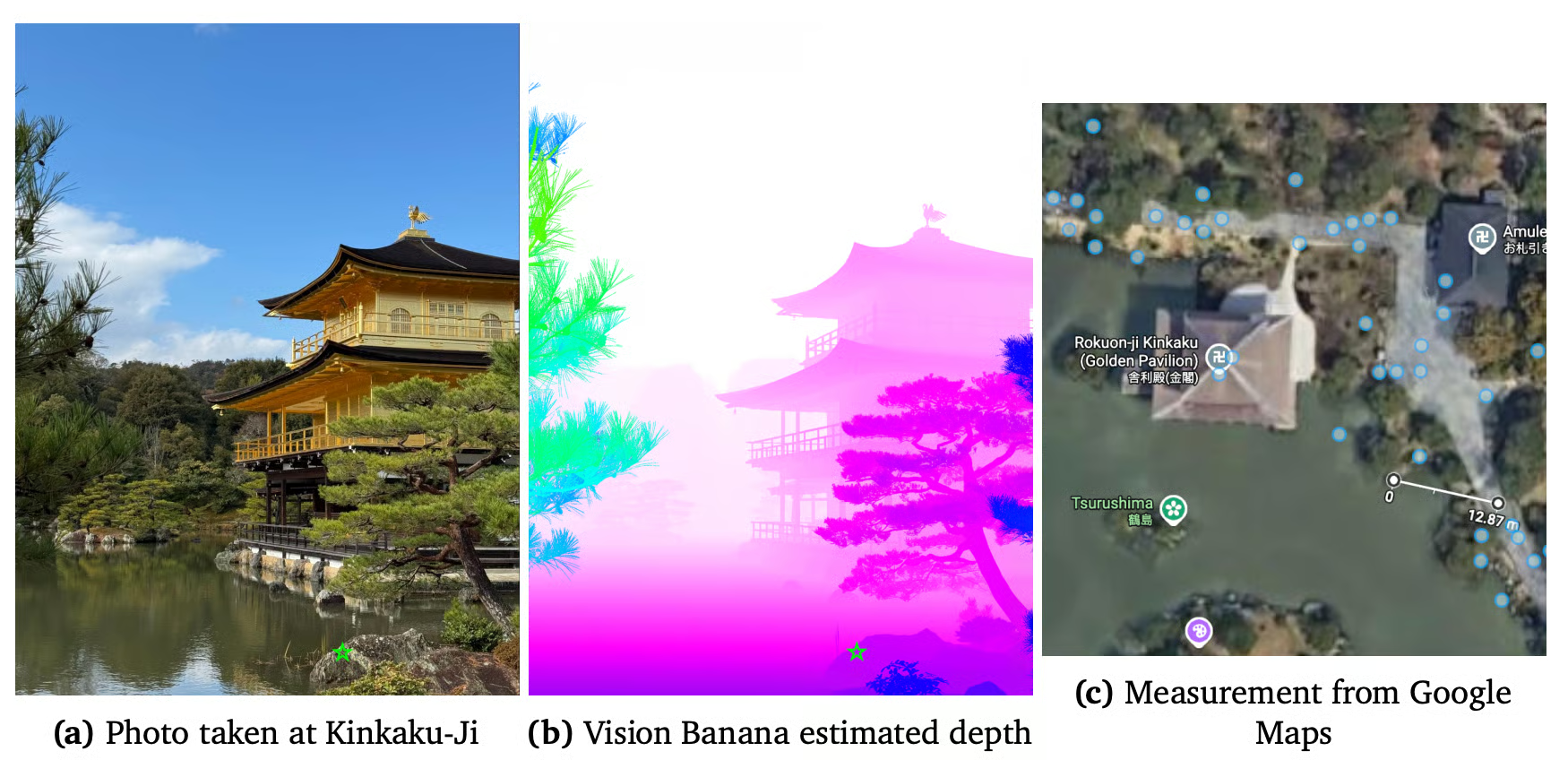

Generation quality is preserved as well: on GenAI-Bench, Vision Banana wins 53.5% of human comparisons against the base Nano Banana Pro.

Here is an example of an in-the-wild grounding test from the paper:

Figure 5: Vision Banana depth estimation (Source)

An author took a smartphone photo near Kinkaku-Ji. Vision Banana predicted a specific point at 13.71 meters. Google Maps says 12.87, which is 6.5% absolute relative error on a phone photo with no calibration, no intrinsics, no setup. That's the kind of test that predicts deployment behavior more reliably than benchmark numbers.
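The 6.5% figure can be checked directly. The helper below is a hypothetical name for the standard absolute relative error, with the Google Maps distance treated as the reference:

```python
def abs_rel_error(predicted, reference):
    """Absolute relative error: |predicted - reference| / reference."""
    return abs(predicted - reference) / reference


err = abs_rel_error(13.71, 12.87)
print(f"{err:.1%}")  # 6.5%
```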

Limitations of Vision Banana

Vision Banana has four constraints that are worth flagging. These include:

- Cost: Running a Nano Banana Pro-scale model for every inference is dramatically more expensive than running a distilled depth specialist. The paper acknowledges this directly in the Future Work section. For 10K video frames, this approach is not economically competitive today. Distillation into smaller specialists is the obvious next step.

- Instance-heavy scenes: SA-Co/Gold is the clear loss. Per-class color assignment runs out of headroom when many small objects need to be counted precisely. SAM 3 still wins here.

- Single-image: The current model implementation is fundamentally constrained to static, single-view image inputs, lacking the capacity for spatio-temporal or multi-view processing. Temporal reasoning is designated for future work by the authors.

- Black-box deployment: You can't probe, modify, or run the model privately. This matters in regulated or IP-sensitive contexts where running a frontier API isn't an option.

Final Thoughts

Vision Banana is a concrete demonstration that image generators are already doing the heavy lifting for visual understanding, and that surfacing those capabilities takes alignment rather than new architectures.

The paper's specific contributions are:

- Bijective RGB encoding that makes depth and segmentation invertible image-generation problems

- Lightweight instruction-tuning recipe that adds vision skills without burning the generation prior

- Metric depth estimation without camera intrinsics that beats specialists that rely on them

- Strict zero-shot transfer protocol that makes the generalist claim measurable on standard benchmarks

The paper also pushes a generation-first mental model where tasks are specified by prompt and outputs are decoded back from RGB images, and where the same weights handle both producing and parsing visual content.

You can test the project page's prompts against base Nano Banana Pro or Nano Banana 2 to see what's already accessible without instruction tuning. You can also build reasoning-segmentation features with NBP-class models and a multimodal LLM router rather than training custom pipelines directly.

Vision Banana FAQs

Can I use Vision Banana today?

Not directly. The paper and project page are out, but the model itself isn't publicly accessible. The base model, Nano Banana Pro, is available in the Gemini API and AI Studio, and the paper's own thesis suggests that prompting NBP directly with the project page's prompts should already produce meaningful results.

Can Vision Banana replace SAM 3 or Depth Anything?

Not today, mostly because of cost. For applications where you need to run vision inference cheaply at scale (video, real-time, mobile), specialists still win on economics.

How does this compare to MLLMs like GPT-5V or Gemini 2.5?

Multimodal LLMs take an image and produce text. Vision Banana takes an image and a prompt to produce an image. They're solving different problems.

Why doesn't Vision Banana use camera intrinsics for depth?

Most specialists use intrinsics to disambiguate monocular scale (a small object close up and a large object far away can produce identical pixels). Vision Banana instead infers scale from visual priors learned during generative pretraining: object sizes, scene context, and world knowledge.

I am a Google Developers Expert in ML (Gen AI), a Kaggle 3x Expert, and a Women Techmakers Ambassador with 3+ years of experience in tech. I co-founded a health-tech startup in 2020 and am pursuing a master's in computer science at Georgia Tech, specializing in machine learning.