Track

Just last week, OpenAI's GPT-Realtime-2 raised the bar for voice AI when it launched with GPT-5-class reasoning and a 128K context window. Now, Mira Murati's Thinking Machines Lab is making a different argument: that responsiveness and intelligence should be trained into the same model from scratch, not bolted together with voice-activity-detection harnesses and dialog management components.

The lab calls this type of new model an "Interaction Model."

Their research preview, TML-Interaction-Small, is the first result of this approach. It is a 276B parameter Mixture-of-Experts model with 12B active parameters. It processes audio, video, and text in continuous 200ms micro-turns, meaning it perceives and responds at the same time rather than waiting for a speaker to finish.

In this article, I'll cover what TML-Interaction-Small is, walk through its key architectural features, compare it directly to GPT-Realtime-2, and look at the benchmark results in detail.

What Are Interaction Models?

Thinking Machines Lab describes an interaction model as a system where interactivity is part of the model itself, not implemented in a surrounding harness. The core principle is that responsiveness and intelligence should be trained together from scratch, on continuous audio and video streams, rather than bolted onto a text-based model after the fact.

Most existing real-time voice AI systems stitch together voice-activity-detection components, separate encoders, and dialog management layers to simulate responsiveness. Thinking Machines Lab argues this approach will always lag behind models that handle interaction natively because of artificial turn boundaries that limit what the non-interactive model can do.

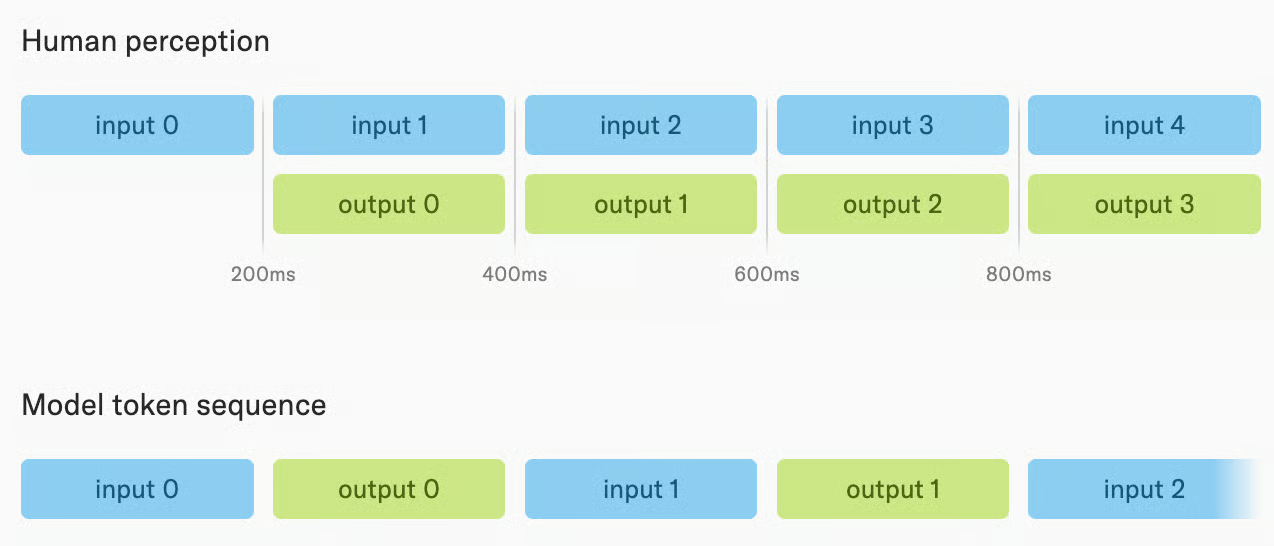

Instead of sequentially consuming user input and then generating a complete response, the lab's interaction models are designed to be closer to human perception. They treat both input and output tokens as streams, and both are interleaved in each of the 200-millisecond-long micro-turns.

In turn, an interaction model perceives and responds at the same time, processing input and output in parallel rather than waiting for a speaker to finish. This enables a couple of neat abilities:

In turn, an interaction model perceives and responds at the same time, processing input and output in parallel rather than waiting for a speaker to finish. This enables a couple of neat abilities:

- Speaking while listening

- Reacting to visual cues without being prompted

- Tracking elapsed time directly

These are all things that turn-based models with external harnesses cannot replicate, regardless of how much reasoning ability they have.

What Is TML-Interaction-Small?

TML-Interaction-Small is Thinking Machines Lab's first public model release and the first implementation of their interaction model architecture.

It is a 276B parameter Mixture-of-Experts model with 12B active parameters, trained from scratch on continuous audio and video streams using the multi-stream micro-turn design I described earlier, where input and output are processed in 200ms chunks.

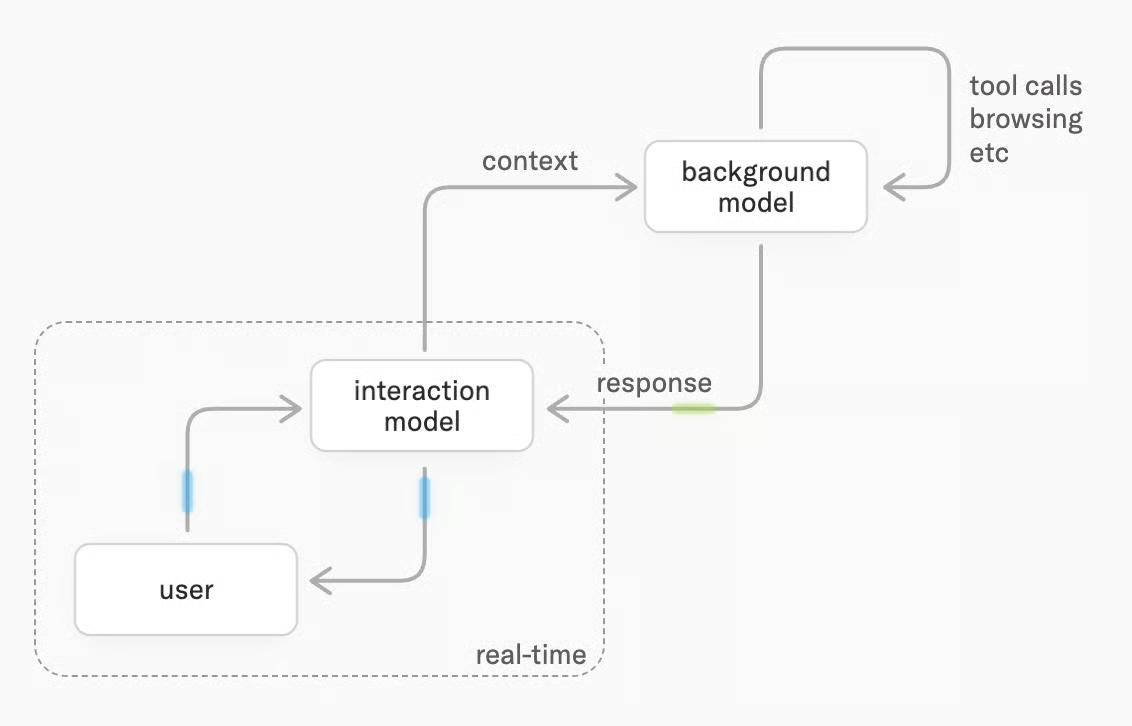

The combination of two models with a shared context offers both responsiveness and intelligence. Users receive answers from the interaction model in real-time, while planning, tool-use, and deeper reasoning are delegated to the background model, which runs asynchronously.

The interaction model then integrates background results into the conversation as they arrive, without dropping out of the conversation.

TML-Interaction-Small Features

Where existing voice AI models take turns (you speak, they respond), TML-Interaction-Small works more like a human conversation partner. Here are the four capabilities that set it apart.

Speak and listen at the same time

TML-Interaction-Small can produce speech while the user is still talking. This makes simultaneous translation possible: you speak in one language, and the model begins translating before you finish your sentence. It also means the model can interject mid-sentence when it catches an error, or give verbal cues ("got it," "go on") while you're still explaining something.

This is also useful for custom real-time responses whenever a specific prompted event occurs. One clip in the release notes, for instance, shows how the model converts EUR amounts and tells the corresponding USD amounts whenever a payment is mentioned by the user.

See and react to video without being asked

TML-Interaction-Small processes video alongside audio and can initiate speech based on what it sees, without any verbal prompt.

If you're doing pushups on camera, it can count reps out loud as they happen. If a relevant object appears in a video stream, it can call it out at the moment it becomes visible. However, this is a feature that can still be improved, as visible from the internal RepCount-A score, in which only a third (33.4%) of instances were within one rep of the ground truth.

One release clip (which looks a bit quirky, in my opinion) demonstrates this in action: When prompted to pay attention to the user's posture, the model detected slouching at the laptop instantly and reminded her to correct it.

Existing commercial real-time APIs are audio-only. They respond to spoken turns but have no way to proactively react to visual changes. This is a capability that simply does not exist in GPT-Realtime-2 or Gemini Live today.

Handle interruptions and self-corrections naturally

If you start a sentence, change your mind, and correct yourself mid-thought, TML-Interaction-Small tracks the correction and responds to what you actually meant. It handles backchanneling (you saying "uh-huh" or "right" while it talks) and distinguishes between someone talking to it versus talking to someone else in the room.

These are scenarios where turn-based models frequently break. They either stop speaking when they shouldn't, or respond to the wrong part of what was said. It will be interesting to see if TML-Interaction-Small can handle it in everyday situations as well as in curated demo videos.

Run complex tasks in the background while staying present

The background model is what makes the interaction model not only fast, but also intelligent. You can keep asking follow-up questions or change the topic while the background task runs. When results are ready, the model weaves them back into the conversation at a natural moment rather than interrupting you with an abrupt context switch.

This means you get both fast conversational responses and the ability to handle multi-step tasks that would normally require the model to go silent for several seconds. In a quiz demo clip, this works quite well: Three users ask trivia questions at a high pace, and the model can mostly keep up with their speed.

TML-Interaction-Small Benchmarks

Thinking Machines reports results across two categories: streaming benchmarks that measure interactivity, and turn-based benchmarks that measure intelligence. The model's strongest results are on the streaming side, which is where its architectural choices are most directly tested.

Interactivity

FD-bench v1.5 gives the model prerecorded audio and measures its behavior across four scenarios:

- User interruption

- User backchannel

- Talking to others

- Background speech

TML-Interaction-Small scores 77.8, compared to 54.3 for Gemini-3.1-flash-live-preview at minimal settings and 46.8 for GPT-Realtime-2.0 at minimal settings. Even GPT-Realtime-2.0 at its highest reasoning setting (xhigh) scores only 47.8.

This is the benchmark that most directly measures what Thinking Machines is building toward. A 30-point gap over the nearest competitor is not a marginal difference. The question is whether FD-bench v1.5 captures the full range of interactivity that matters in practice, which Thinking Machines themselves acknowledge is an open research question.

Turn-taking latency

TML-Interaction-Small achieves a turn-taking latency of 0.40 seconds in FD-bench v1, the fastest of any model compared. Gemini-3.1-flash-live-preview comes closest with 0.57 seconds. Even at minimal settings, GPT-Realtime-2.0 takes about three times as long (1.18 seconds); at xhigh reasoning, GPT-Realtime-2.0 reaches 1.63 seconds.

Latency matters for voice interaction in a way that it does not for text. A 1.2-second gap between when a user finishes speaking and when the model begins responding is not only perceptible but also disruptive. The 0.40-second result puts TML-Interaction-Small closer to human conversational response times.

Intelligence and instruction following

Audio MultiChallenge measures intelligence and instruction following in audio. TML-Interaction-Small scores 43.4%, above GPT-Realtime-1.5 (34.7%) and Gemini-3.1-flash-live-preview (26.8%), but below GPT-Realtime-2.0 at xhigh (48.5%). This is the benchmark where the intelligence-interactivity tradeoff is visible.

The gap between TML-Interaction-Small and GPT-Realtime-2.0 at xhigh is 5.1 percentage points. That is a significant, but not a huge gap, and it comes with a significant latency cost on the GPT-Realtime-2.0 side (1.63 seconds versus 0.40 seconds). Whether that tradeoff is worth it depends on the application.

Response quality and tool-use

FD-bench v3 measures response quality and tool-call accuracy in audio-plus-tools scenarios. TML-Interaction-Small scores 82.8% response quality and 68.0% pass@1 with background agent enabled, compared to 80.0% / 52.0% for GPT-Realtime-2.0 at minimal settings and 81.0% / 58.0% at xhigh.

The pass@1 gap (68.0% versus 58.0%) is the most meaningful number here, as it measures whether the model actually completes tool-dependent tasks correctly. It looks like the dual architecture that separates tool calls from user interactions pays off.

New interactivity benchmarks: TimeSpeak, CueSpeak, and visual proactivity

Thinking Machines created two internal benchmarks and adapted three less widely used benchmarks to measure interactivity capabilities directly. These are worth examining carefully because no competing model performs meaningfully on any of them.

- TimeSpeak (timed speech initiation): TML-Interaction-Small scores 64.7% macro-accuracy.

- CueSpeak (verbal cue-triggered speech): TML-Interaction-Small scores 81.7% macro-accuracy.

- RepCount-A (visual action counting): TML-Interaction-Small scores 33.4% off-by-one accuracy.

- ProactiveVideoQA (visual cue-triggered speech): TML-Interaction-Small scores 31.5 PAUC (no response baseline = 25.0%).

- Charades temporal localization (visual action timing): TML-Interaction-Small scores 30.4 mIoU.

On most of these new benchmarks, GPT realtime-2.0 completely fails, with a result close to zero, or even zero (on the Charades benchmark, which requires the model to say "start" and "stop" at the right moments during a video).

It's hard for me to say how meaningful these results are, since those benchmarks are new and not yet independently validated, but they follow the general picture of the architectural differences and comparable benchmark results.

How Can I Access TML-Interaction-Small?

TML-Interaction-Small is currently in a limited research preview, and no pricing details have been announced. Thinking Machines plans to open broader access later in 2026. Interested researchers and developers can contact the team at interaction@thinkingmachines.ai to request access.

For comparison, GPT-Realtime-2 is priced at $32 per million audio input tokens and $64 per million audio output tokens, as we covered in our GPT-Realtime-2 overview. TML-Interaction-Small's pricing will likely be announced alongside the wider release.

As you probably noticed, the model has the suffix "-Small", and you're right to expect that Thinking Machines will follow up with larger models. They are still too slow to serve, but a release is planned for late 2026.

TML-Interaction-Small vs GPT-Realtime-2

The more interesting gap between the two models is in interactivity benchmarks. On FD-bench v1.5, which measures behavior across user interruption, backchanneling, talking to others, and background speech, TML-Interaction-Small scores 77.8. GPT-Realtime-2.0 at minimal settings scores 46.8, and at its highest reasoning setting (xhigh) scores 47.8. That is a 30-point gap on the benchmark most directly measuring what Thinking Machines is optimizing for.

There is an intelligence trade-off, but the gap here is much smaller than for interactivity. GPT-Realtime-2.0 at xhigh scores 48.5% on Audio MultiChallenge versus TML-Interaction-Small's 43.4%. On BigBench Audio, GPT-Realtime-2.0 at high scores 96.6% versus 75.7% for TML-Interaction-Small (though TML-Interaction-Small reaches 96.5% with background agent enabled).

The general picture that emerges is that TML-Interaction-Small leads on responsiveness and interactivity, while GPT-Realtime-2.0 at high reasoning settings leads on raw intelligence benchmarks.

| Benchmark | TML-Interaction-Small | GPT-Realtime-2.0 (minimal) | GPT-Realtime-2.0 (xhigh) | Gemini-3.1-flash-live (minimal) |

|---|---|---|---|---|

| FD-bench v1 turn-taking latency (s) | 0.40 | 1.18 | 1.63 | 0.57 |

| FD-bench v1.5 average | 77.8 | 46.8 | 47.8 | 54.3 |

| FD-bench v3 response quality (%) | 82.8* | 80.0 | 81.0 | 68.5 |

| Audio MultiChallenge APR (%) | 43.4 | 37.6 | 48.5 | 26.8 |

| BigBench Audio accuracy (%) | 75.7 / 96.5* | 71.8 | 96.6 | 71.3 |

| IFEval (VoiceBench) accuracy (%) | 82.1 | 81.7 | 83.2 | 67.6 |

| IFEval text accuracy (%) | 89.7 | 89.6 | 95.2 | 85.8 |

* With background agent enabled.

To see OpenAI's audio model family in action, check out our GPT-Realtime-2 API tutorial.

Final Thoughts

TML-Interaction-Small looks promising. If it lives up to the claims in the release notes, the new model brings significantly improved interactivity with a short latency, without sacrificing response quality or reasoning power. The ability to simultaneously speak, listen, and respond to visual cues is unique so far and offers lots of possibilities. I am curious to see what the pricing will look like when the model is publicly released.

The intelligence gap to GPT-Realtime-2 is real but narrower than the interactivity gap. For applications where the conversation needs to feel natural, that latency difference matters more than the intelligence gap. For applications where accuracy on hard reasoning tasks is the priority, GPT-Realtime-2.0 at high reasoning settings is still ahead.

If you want to get up to speed on the broader landscape of AI models and how to work with them effectively, I recommend starting with our AI Fundamentals skill track.

TML-Interaction-Small FAQs

What is an interaction model?

An interaction model is a voice AI system where interactivity is built into the model itself, rather than added through external components like voice-activity detection and dialog management. It processes input and output simultaneously, so it can listen and speak at the same time instead of waiting for turns.

Who is behind Thinking Machines Lab?

Thinking Machines Lab is led by Mira Murati, formerly CTO of OpenAI. TML-Interaction-Small is the lab's first public model release, described as a research preview of their interaction model architecture.

How does TML-Interaction-Small compare to OpenAI's GPT-Realtime-2?

TML-Interaction-Small leads on interactivity, scoring 77.8 on FD-bench v1.5 versus 47.8 for GPT-Realtime-2 at its highest setting, with a faster turn-taking latency of 0.40 seconds versus 1.63 seconds. GPT-Realtime-2 leads on raw intelligence benchmarks like Audio MultiChallenge (48.5% vs. 43.4%)

Can TML-Interaction-Small process video?

Yes. It processes video alongside audio and can react to visual events without any verbal prompt from the user, for example, counting exercise reps on camera or calling out objects as they appear. This visual proactivity does not exist in GPT-Realtime-2 or Gemini Live.

Tom is a data scientist and technical educator. He writes and manages DataCamp's data science tutorials and blog posts. Previously, Tom worked in data science at Deutsche Telekom.