Share this webinar

Close your data and AI skills gap

We're the only platform uniquely engineered to advance data and AI skills across your entire organization. Let's explore a tailored program.

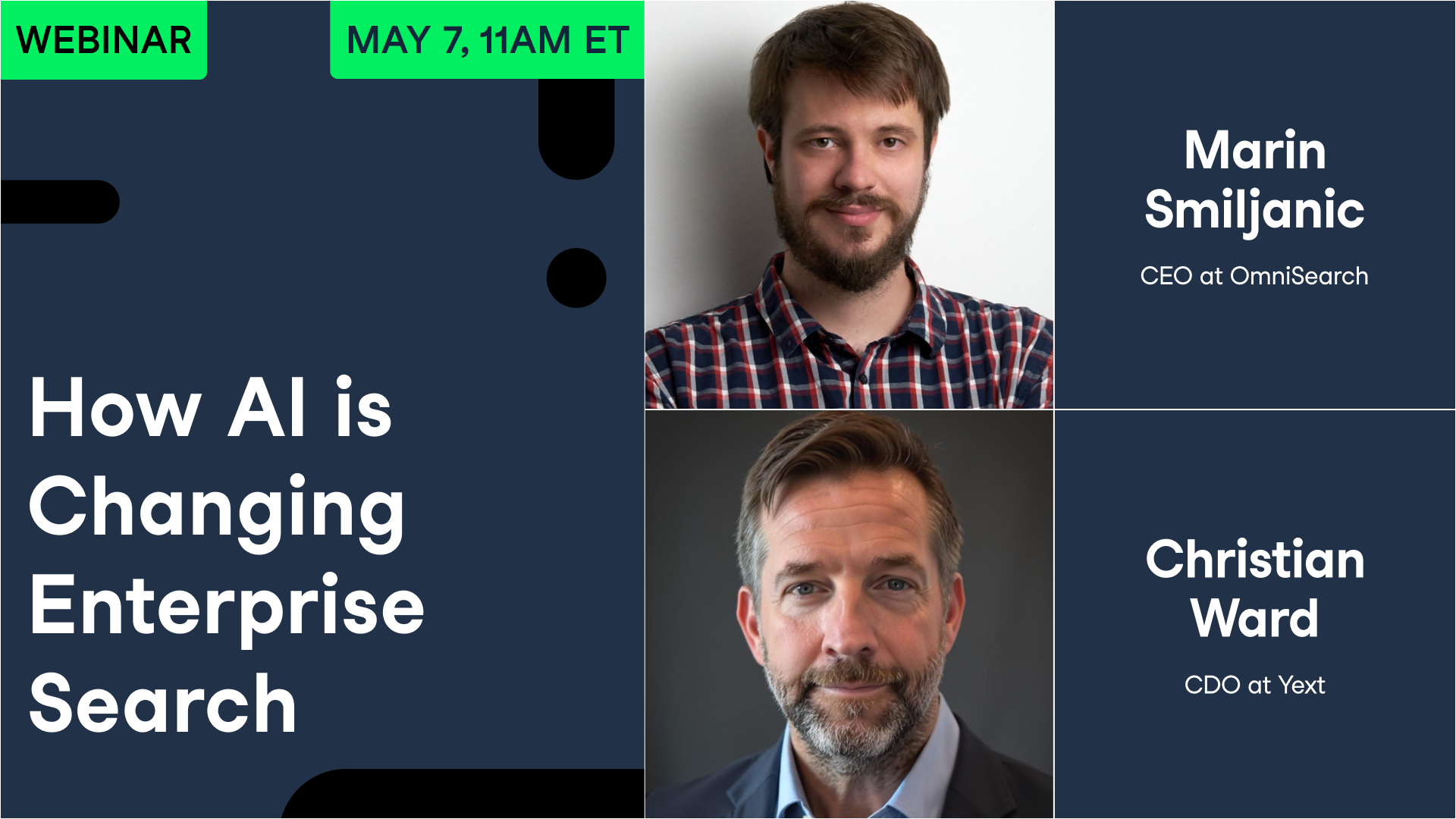

How AI is Changing Enterprise Search

May 2026

Your Presenter(s)

Marin Smiljanic

CEO at Omnisearch

Building a multimodal search company. Previously a software engineer at Amazon. Early Apache Spark developer.

Christian Ward

CDO at Yext

Previously CDO at SourceMedia and InfoGroup. Author of "Data Leverage".

Summary

The original PageRank paper, published in 1998, warned in its appendix that a search engine funded by advertising would eventually stop serving users well. Its authors saw this coming years before Google built an advertising business of its own.

Christian Ward, Chief Data Officer at brand visibility platform Yext, opens with this observation to set up a bigger argument: search wasn't solved, it was delayed. Ward and Marin Smiljanic, CEO of multimodal search company Omnisearch, join DataCamp host Richie Cotton to explain how AI is forcing a rethink of search across three domains — enterprise AI search, external brand visibility, and website product discovery — and what teams should actually do about it.

On the enterprise side, Smiljanic explains why internal knowledge retrieval has always lagged consumer search: corporate data is siloed, multimodal, and messy in ways that PageRank-style indexing was never built for. Expanding context windows and improved RAG pipelines are changing what's possible. On the external side, Ward argues that declining Google traffic is a metric problem, not a brand problem: AI-mediated discovery sends fewer but higher-intent visitors, and most organizations aren't measuring the right things to see it. Both guests are direct about what companies need to do to improve their position in AI-generated answers — structure their data, expose it, and stop assuming their website is only read by humans.

The session closes with audience questions on measuring success, small business AI visibility, and whether hallucination rates are still a practical concern. Anyone working on enterprise search strategy, internal knowledge systems, or brand visibility in AI search engines will find specific, actionable positions from both guests throughout.

Key Takeaways

- Search has always been sequential — query, response, refine, repeat — and AI's conversational model is architecturally different, not just faster at the same task.

- Enterprise data is messy in ways consumer web data never was: siloed across systems, spread across formats (documents, spreadsheets, video, physical archives), and lacking the clean link structure that made early indexing tractable.

- Expanding LLM context windows have changed what RAG can do. Entire documents or video transcripts now fit in a single context window, reducing the need for the chunk-and-fragment approach that made early enterprise RAG brittle.

- AI-generated answers reduce search traffic volume while increasing its quality — visitors arriving via AI chat are further down the decision funnel. Tracking citations and conversion velocity matters more than tracking page views.

- Smiljanic's team has cut zero-result query rates in enterprise deployments from 40% to between 5% and 10% by adding semantic search on top of existing data stores, a direct productivity gain that maps to hours saved per employee per week.

- Ward's "query fan-out" approach: ask an AI model hundreds of follow-on questions about your brand, pull all citations from the API, and map where you appear, misappear, or are absent entirely.

- Almost none of a 350MB restaurant website is useful to an AI agent. The 200KB of structured facts (menu, location, hours, offers) is what gets cited. Structured data exposure is the new determinant of brand discoverability.

- AI memory will reshape advertising: a model that knows a user's preferences, allergies, and purchase history won't surface irrelevant offers, making precise audience data more valuable than broad ad reach.

Deep Dives

Why Search Was Never Actually Solved

Ward's opening provocation is historical. In the appendix to the original PageRank paper, Page and Brin wrote that any search engine built on advertising revenue would eventually be pulled away from serving users. More than twenty-five years later, he argues, that prediction played out in slow motion, and the frustrations that built up along the way created the opening that AI chat interfaces are now filling.

The structural limitation Ward identifies goes beyond business incentives. "The biggest thing that has changed is that search was sequential," he says. "It's like a chess match. I'd move and then Google would move. And then I'd move and then Google would move. Conversations are ongoing and developing over time. And that presents a phenomenally richer experience than what we've seen so far."

Smiljanic frames the enterprise version of this problem differently. Early web search worked as well as it did partly because the internet was architecturally uniform: HTML pages with links that could be indexed and ranked. Enterprise data is nothing like that. It exists across disconnected systems, in formats that resist standard indexing, and often without the metadata that would make it retrievable at all.

"The data is always messy," Smiljanic says. "And you can't really build algorithms on top of that neat abstraction." The problem wasn't just that enterprise search was hard to build — it was that the data had never been prepared for search the way web content had. Video archives, decade-old PDFs, spreadsheets buried in shared drives, even physical records: none of it was designed with retrieval in mind.

Smiljanic's company works primarily in media and education, two sectors with deep legacy content. A media archive built up over fifty years might have employed full-time archivists whose only job was labeling footage. AI-powered indexing changes that calculation entirely: content that once required a five-person team to label can now be indexed automatically, and queried within seconds regardless of when it was created.

RAG, Context Windows, and the Infrastructure Shift Behind Enterprise AI Search

Retrieval-augmented generation was the early answer to enterprise AI search: connect a language model to your documents, let it answer questions grounded in what you actually have. The early implementations were frustrating. Context windows were small — measured in a few thousand tokens — forcing engineers to fragment documents into chunks, which broke the reasoning chain and degraded answer quality.

Smiljanic identifies the change that shifted the calculus: "The really cool thing in the past year, year and a half has been the massive improvement in context windows. You all remember RAG probably, and there was a huge debate about whether RAG is alive or dead... Nowadays, you can fill in whole books into the context window. It's massive. And this vastly changes what you can actually do and how powerful these paradigms are."

When an entire document — or an entire video transcript — fits in a single context window, chunking strategies become less critical. Answer quality improves because the model has full context for the section it's reasoning about. The failure mode of losing key information across arbitrary chunk boundaries disappears.
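The trade-off described above can be sketched as a simple rule: send the whole document when it fits the window, and fall back to overlapping chunks only when it does not. This is a minimal sketch, not Omnisearch's pipeline; the 4-characters-per-token estimate, the 2,000-token reserve, the 10% overlap, and all function names are assumptions for illustration.

```python
# Assumption: roughly 4 characters per token for English prose.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count; a real system would use the model's tokenizer."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def chunk_text(text: str, chunk_chars: int, overlap_chars: int) -> list[str]:
    """Split text into overlapping fixed-size windows (the early-RAG fallback)."""
    if not 0 <= overlap_chars < chunk_chars:
        raise ValueError("overlap must be >= 0 and smaller than the chunk size")
    step = chunk_chars - overlap_chars
    # Stop once the previous window already reaches the end of the text.
    last_start = max(len(text) - overlap_chars, 1)
    return [text[i:i + chunk_chars] for i in range(0, last_start, step)]


def build_context(document: str, context_limit_tokens: int,
                  reserved_tokens: int = 2_000) -> list[str]:
    """Return [document] if it fits the window, else window-sized chunks.

    reserved_tokens (hypothetical default) leaves room for instructions,
    the user's question, and the model's answer.
    """
    budget = context_limit_tokens - reserved_tokens
    if budget <= 0:
        raise ValueError("context window is smaller than the reserved tokens")
    if estimate_tokens(document) <= budget:
        # Whole document fits: nothing is lost at arbitrary chunk boundaries.
        return [document]
    chunk_chars = budget * CHARS_PER_TOKEN
    # Chunks would then be ranked by a retriever; 10% overlap is an assumption.
    return chunk_text(document, chunk_chars, overlap_chars=chunk_chars // 10)
```

In the chunked branch a retriever still has to rank the pieces and choose which to send; with a large window, most documents never reach that branch.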

Ward adds a parallel development: the move toward real-time grounding. Traditional indexing is always historical by definition: it reflects the state of data at the time the index was built. Retrieving context at query time lets a system draw on live data instead, which matters when information is changing fast. "If I've just designed a new cure at a biotech company, and the company salespeople are asking questions, you better not be answering from the indexed information. You want to answer as real time as possible."

For teams building enterprise search systems now, Smiljanic's practical starting point is deliberately unglamorous: figure out where the data actually lives. Build a crawler for proprietary sources (documents, wikis, video) that tracks additions, deletions, and updates. Sync to an index. Attach the question-answering layer. Decide how to expose it: an MCP (Model Context Protocol) server, an API, or a locally hosted model if data privacy is a concern. "Just start with one important source and make sure you have the end-to-end flow running."
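The crawl-and-sync step Smiljanic describes (tracking additions, deletions, and updates) can be sketched as a diff between a fresh crawl snapshot and the content hashes stored for the index. This is a minimal sketch under assumptions: documents arrive as a hypothetical `doc_id -> text` mapping, change detection uses SHA-256 content hashes, and the comments mark where a real system would embed, upsert, or delete entries.

```python
import hashlib
from dataclasses import dataclass, field


def content_hash(text: str) -> str:
    """Fingerprint a document's content so edits can be detected."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class SyncResult:
    added: list[str] = field(default_factory=list)
    updated: list[str] = field(default_factory=list)
    deleted: list[str] = field(default_factory=list)


def sync_source(crawl: dict[str, str], index: dict[str, str]) -> SyncResult:
    """Diff a crawl snapshot (doc_id -> text) against the index (doc_id -> hash).

    The index dict is updated in place; comments mark where a real system
    would embed, upsert, or delete entries in its search index.
    """
    result = SyncResult()
    for doc_id, text in crawl.items():
        digest = content_hash(text)
        if doc_id not in index:
            result.added.append(doc_id)      # new document: embed and insert
            index[doc_id] = digest
        elif index[doc_id] != digest:
            result.updated.append(doc_id)    # changed document: re-embed and upsert
            index[doc_id] = digest
    for doc_id in list(index):
        if doc_id not in crawl:
            result.deleted.append(doc_id)    # gone from the source: remove from index
            del index[doc_id]
    return result
```

Hashing content rather than relying on modification timestamps catches edits in sources that report timestamps unreliably, at the cost of reading every document on each crawl.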

Brand Visibility in AI Search: The Metrics Shift

Google search traffic is falling for many businesses, and most marketing teams are treating this as a crisis. Ward's argument is that they're measuring the wrong thing.

The traffic that AI-mediated discovery removes tends to be high-volume and low-intent. Someone who asks a conversational AI a specific coverage question and is directed to your insurance company is far closer to a purchase decision than someone who clicked a blue link while vaguely researching. "There's about 15 to 20% of people that really don't want to engage with you... And so that starts to get away. So in many ways, if the traffic is down, it's not the same thing as the new traffic or the traffic coming from a dialogue. [That traffic] is far further down the funnel, like, miraculously so."

Ward's diagnostic tool for understanding how AI systems perceive your brand is what he calls query fan-out: ask an AI model not just one question about your business, but hundreds of follow-on questions across categories, locations, and customer types. Pull all the citations from the API response. "You will have an instantaneous behind-the-scenes look into how this thing figured out what it figured out about you." The output shows which sources the model trusts, which contexts surface your brand, and which questions return competitors instead of you.
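A query fan-out run can be sketched as generating many question variants from categories, locations, and customer types, then tallying which domains the answers cite. This is a hypothetical sketch, not Yext's tooling: `ask_model` stands in for whatever model API you call and is assumed to return the answer text plus a list of cited URLs; the templates are invented for illustration.

```python
from collections import Counter
from itertools import product
from typing import Callable
from urllib.parse import urlparse

# Hypothetical signature: query -> (answer text, list of cited URLs).
AskModel = Callable[[str], tuple[str, list[str]]]

TEMPLATES = [
    "What is the best {category} in {location} for {customer}?",
    "Is {brand} a good {category} choice for {customer} in {location}?",
]


def fan_out_queries(brand: str, categories: list[str], locations: list[str],
                    customer_types: list[str]) -> list[str]:
    """Expand every template over every category, location, and customer type."""
    return [
        t.format(brand=brand, category=c, location=loc, customer=cust)
        for t, c, loc, cust in product(TEMPLATES, categories, locations, customer_types)
    ]


def map_citations(queries: list[str], ask_model: AskModel,
                  brand_domain: str) -> tuple[Counter, list[str]]:
    """Count cited domains across answers and list queries that never cite the brand."""
    domain_counts: Counter = Counter()
    absent: list[str] = []
    for query in queries:
        _answer, citations = ask_model(query)
        domains = {urlparse(url).netloc.removeprefix("www.") for url in citations}
        domain_counts.update(domains)  # each domain counted once per answer
        if brand_domain not in domains:
            absent.append(query)
    return domain_counts, absent
```

Queries in the `absent` list are the ones to inspect first: they show where the model answers questions in your category without citing you, and the domain counts show which sources it relies on instead.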

The deeper, longer-term shift is memory. Current AI chat systems maintain context across a session. Future systems with persistent memory will know user preferences, dietary restrictions, and purchase history. Ward makes the advertising implication explicit: "If I have a tree nut allergy, then all of the ads for the restaurants that specialize in that cannot be shown anymore. This is why search never had memory, but dialogue and these chat systems do. Memory is going to fundamentally change the entire way we view or engage in that ongoing dialogue."

When a model knows your full preference history, generic top-of-funnel discovery becomes less relevant. Whether your brand shows up when the context is actually right — because your structured data is accurate, complete, and accessible to the model — is what matters instead.

The Structured Data Imperative

Ward's sharpest claim of the session is about where brand discoverability will come from in the next several years: "Your website is going to absolutely explode in structured knowledge in the next year. The companies that get there first will be not only training, they'll become in the source files by which the models understand [your category]... there's a big opportunity, but it's one you've almost got to take that same concept and that same diligence behind the firewall, and you better start doing it in front of the firewall."

The argument rests on how language models construct answers. When an AI responds to a question about a business, it draws on machine-readable information it can access. A 350MB restaurant webpage heavy with photos, navigation menus, and CSS is nearly useless to a model. The 200KB of structured facts — menu items, location, hours, pricing, loyalty program details — is what gets cited. Businesses that haven't made that structured layer available to AI crawlers are absent from the answers those models generate.
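One common way to expose that structured layer is schema.org markup embedded in the page as JSON-LD. The sketch below builds it for a hypothetical restaurant using the schema.org `Restaurant`, `PostalAddress`, and `Offer` types; the business details are made up, and which properties a given AI crawler actually reads is an assumption, not something the speakers specified.

```python
import json


def restaurant_jsonld(name: str, street: str, city: str, postal_code: str,
                      opening_hours: list[str], cuisine: str, menu_url: str,
                      offers: list[tuple[str, str]]) -> str:
    """Build schema.org Restaurant markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "postalCode": postal_code,
        },
        "openingHours": opening_hours,  # e.g. "Mo-Fr 11:00-22:00"
        "servesCuisine": cuisine,
        "hasMenu": menu_url,
        "makesOffer": [
            {"@type": "Offer", "name": offer_name, "price": price, "priceCurrency": "USD"}
            for offer_name, price in offers
        ],
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap the markup in the script tag conventionally used for JSON-LD in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'
```

The resulting block is a small fraction of a typical page's weight, which is the asymmetry Ward describes.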

The same logic applies inside organizations. Smiljanic and Ward both note that enterprise knowledge is often locked in forms that neither humans nor AI can easily retrieve: sales call recordings, presentation decks, RFP documents, and product specs that live in one team's shared drive and nowhere else. Ward: "Some of the absolute best content is on a sales call from three months ago when they launched a new business, and no one's written about it yet online."

Ward's practical test for understanding this concretely: photograph something in your workplace — a conference room, a retail shelf, a trade show booth — and ask a capable vision model to extract all the structured data it can see. The output, which will include dimensions, people counts, brands, text, and topic inferences, reveals how much latent structured knowledge exists in physical and digital environments that has never been captured in a retrievable form.

Measuring Success: Time Saved, Zero Results, and Conversion Velocity

Both guests converge on time as the foundational success metric — saved on the enterprise side, accelerated on the consumer side.

For internal enterprise search, Smiljanic uses zero-result query rate as the primary diagnostic: the percentage of queries that return nothing useful, forcing users to escalate, call a colleague, or give up. "We've had customers where they had 40% zero-result queries, where queries just aren't able to find anything. And then we reduce it to 10 or 5%." A 30- to 35-point drop in zero-result rates maps directly to hours recovered across the workforce. Industry estimates routinely put the share of working time spent searching for information at around 20%, so any meaningful reduction produces measurable output gains.
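Zero-result rate is straightforward to compute from a search log, and the link to time saved can be made explicit with a simple cost model. This is a minimal sketch; the log format, the 50 searches per employee per week, and the 10 minutes lost per failed search are hypothetical figures, not numbers from the webinar.

```python
def zero_result_rate(query_log: list[dict]) -> float:
    """Share of logged queries that returned no results."""
    if not query_log:
        return 0.0
    zero_hits = sum(1 for entry in query_log if entry["result_count"] == 0)
    return zero_hits / len(query_log)


def hours_recovered_per_week(rate_before: float, rate_after: float,
                             queries_per_week: float,
                             minutes_per_failed_query: float) -> float:
    """Hours per employee per week no longer lost to failed searches (assumed cost model)."""
    avoided_failures = (rate_before - rate_after) * queries_per_week
    return avoided_failures * minutes_per_failed_query / 60


# Hypothetical inputs: 50 searches a week, 10 minutes lost per failed search.
saved = hours_recovered_per_week(0.40, 0.05, queries_per_week=50,
                                 minutes_per_failed_query=10)
```

With those assumed inputs, a drop from 40% to 5% avoids 17.5 failed searches per employee per week, or about 2.9 hours.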

Ward's framing for external search success is revenue velocity: shorter cycles from first AI mention to completed transaction, even if total mention volume falls. The challenge is that most AI systems don't yet provide the citation analytics that keyword search did. "Right now, there's very little information coming out of these systems." His practical bridge, until reporting APIs improve, is to run your own query fan-out analysis regularly and track which citations appear over time.
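Conversion velocity can be tracked per acquisition channel as the median number of days from first touch to purchase. This is a hypothetical sketch: it assumes you can tag a customer's first visit as AI-referred (for example, from the referrer), and the record layout and channel names are illustrative.

```python
from collections import defaultdict
from datetime import date
from statistics import median
from typing import Iterable, Optional

# Hypothetical journey record: (channel, first_touch, converted_on or None).
Journey = tuple[str, date, Optional[date]]


def conversion_velocity(journeys: Iterable[Journey]) -> dict[str, float]:
    """Median days from first touch to purchase per channel; non-converters are skipped."""
    days_by_channel: dict[str, list[int]] = defaultdict(list)
    for channel, first_touch, converted_on in journeys:
        if converted_on is not None:
            days_by_channel[channel].append((converted_on - first_touch).days)
    return {channel: median(days) for channel, days in days_by_channel.items()}
```

Because non-converters are skipped, velocity should be read alongside conversion rate; a channel can look fast simply because few of its visitors buy.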

On hallucinations, Ward offers a perspective calibrated to current model performance: "Gemini 3.0 hallucinates under 3% of the time. Can I offer? Your intern this summer hallucinates more than that." The more useful frame, both guests suggest, is to distinguish between simple and complex queries — simple factual lookups have improved dramatically and continue to improve, while highly complex multi-step reasoning still requires more vigilance. The structural improvement over the past two years has been large enough that hallucination is no longer the primary practical obstacle for most enterprise deployments.
