Track

Large Language Model (LLM) API providers give developers access to powerful AI models without needing to manage GPUs, model deployment, scaling, or inference infrastructure. Instead of self-hosting a model, developers can connect to an API, choose a model, send a request, and receive a response.

In this article, we will look at the top 10 LLM API providers based on model quality, developer experience, pricing, scalability, ecosystem support, and production readiness. For each provider, we will cover what it is best for, its key advantages, and the main drawbacks developers should consider.

This guide breaks down the top LLM API providers into four categories:

- Native LLM providers

- Open-source LLM API providers

- LLM routing providers

- Cloud LLM providers

Associate AI Engineer

Native LLM Providers

Native LLM providers build, train, and serve their own model families through APIs. They are often the strongest option for developers who want high model quality, reliable documentation, advanced reasoning, multimodal support, and production-ready tooling.

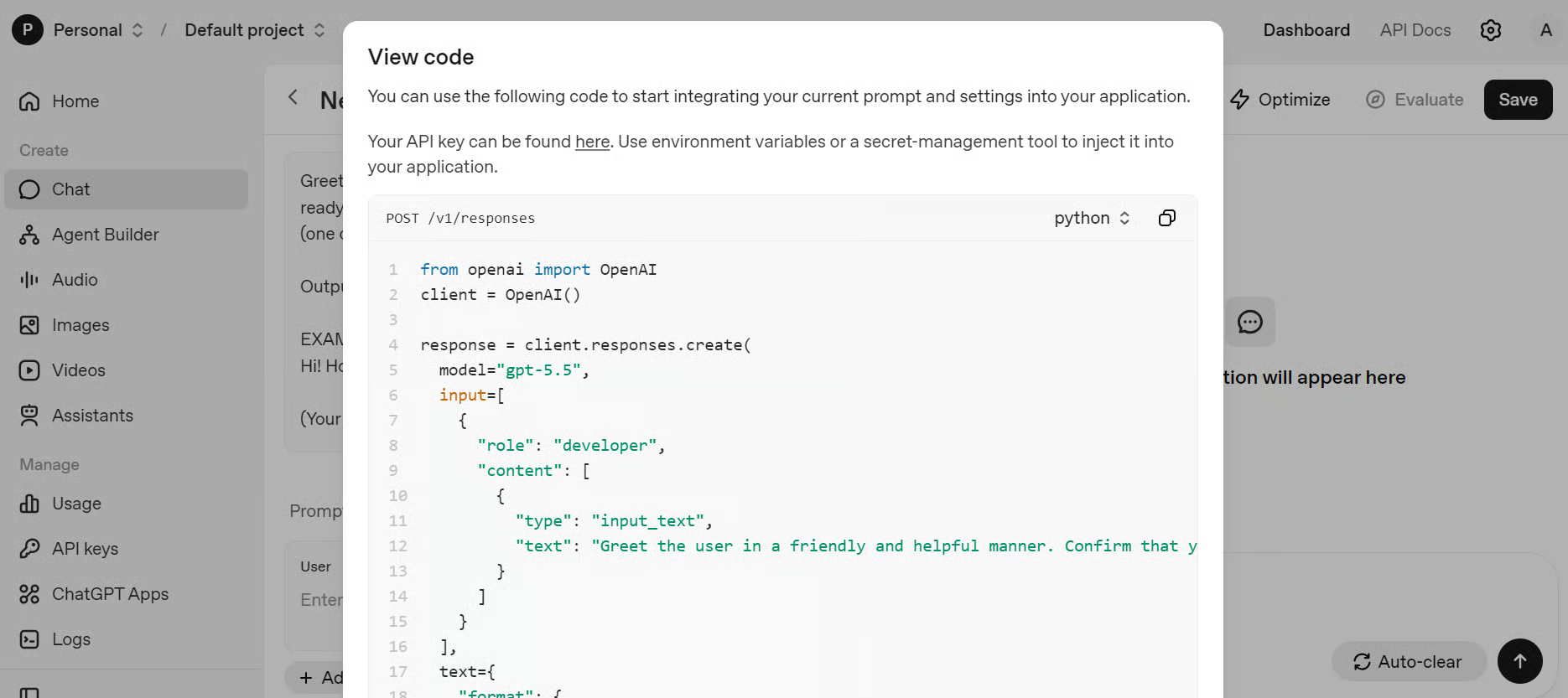

1. OpenAI

OpenAI is one of the most widely used LLM API providers. Its API Platform offers access to:

- Frontier reasoning models like GPT-5.5

- Real-time voice generation and translation via GPT-Realtime-2

- Image generation via the GPT-Images-2.0 image generation model

- Agent-based development through the OpenAI Agents SDK

Its API supports streaming, real-time interfaces, and structured outputs.

OpenAI is a strong choice for teams building AI assistants, coding tools, customer support agents, internal copilots, multimodal apps, and agent-based systems.

Source: OpenAI

One drawback is that OpenAI can become expensive at scale, especially for high-volume or reasoning-heavy applications. It is also a closed API provider, which means developers have less control over model internals, hosting, and customization compared with open-source or self-hosted models.

Best for: general-purpose AI apps, reasoning, coding, multimodal workflows, and production-ready AI products.

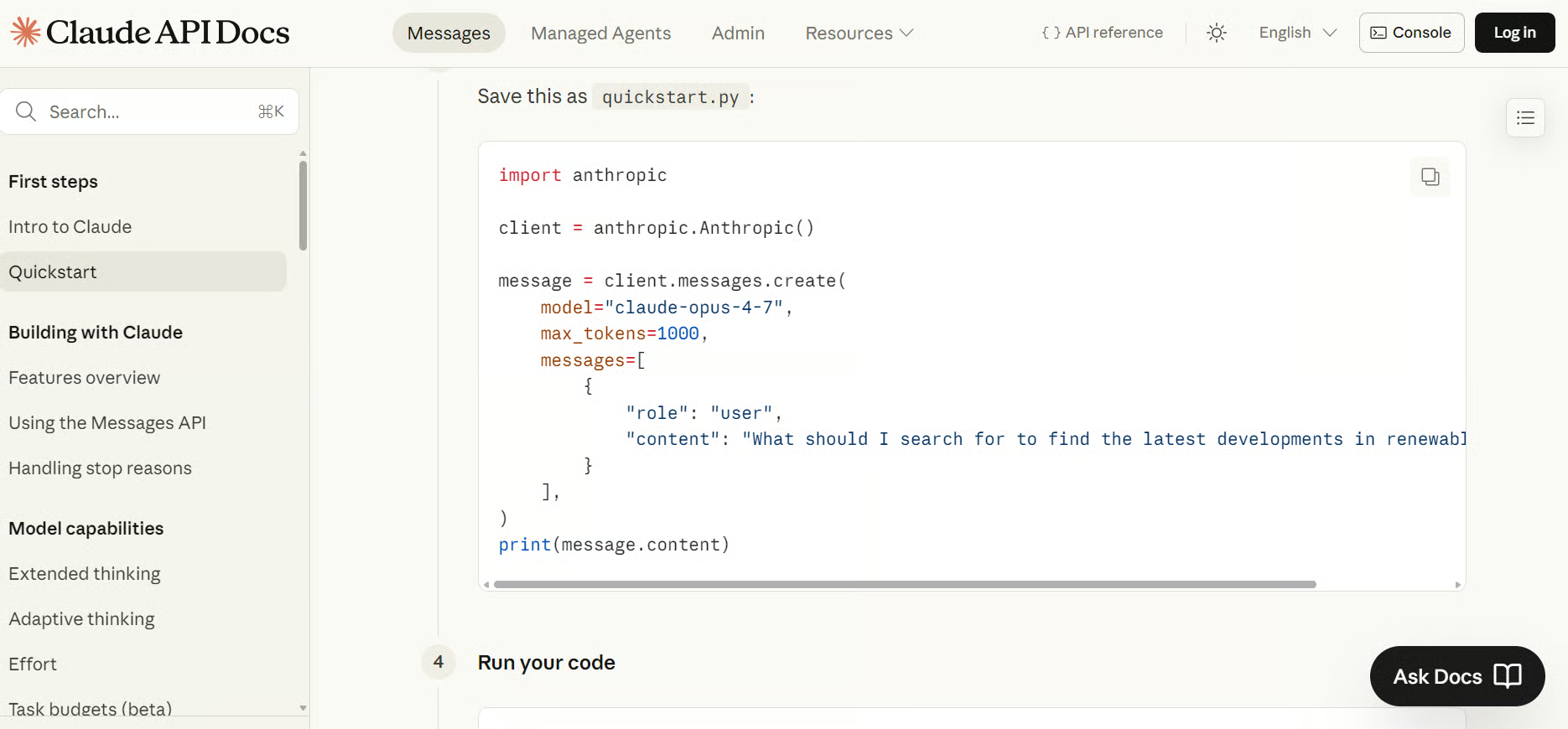

2. Anthropic

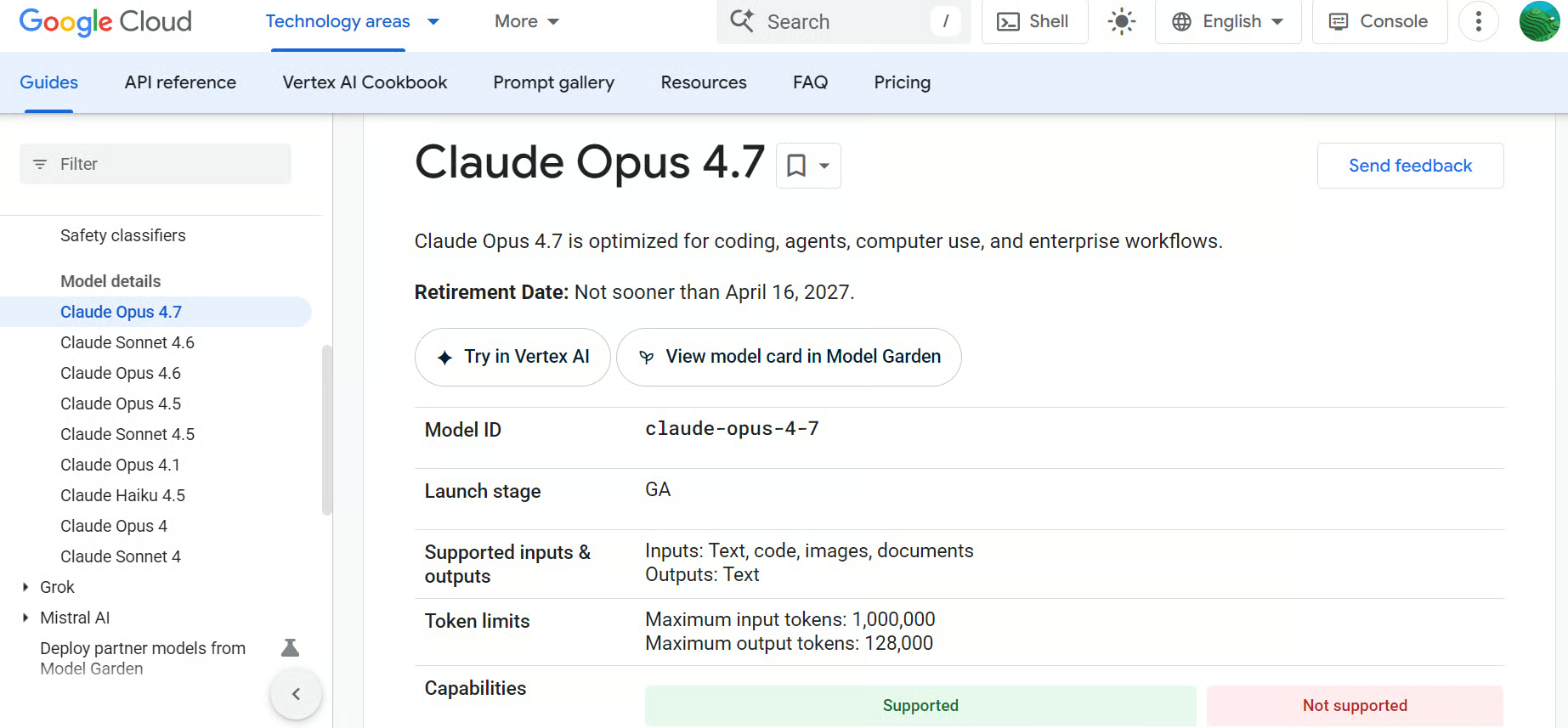

Anthropic provides the Claude model family through its API, e.g., its latest flagship model, Claude Opus 4.7. Claude is designed for language, reasoning, analysis, coding, long-context work, and agentic workflows. Anthropic’s developer platform gives teams direct model access through APIs, SDKs, and developer documentation.

Claude is especially useful for applications that need careful instruction-following, strong writing quality, document analysis, and reliable handling of complex prompts.

Source: Get started with Claude

A key drawback is cost, especially when using Claude for large documents, long-context prompts, or high-volume applications. Since Claude is accessed through a hosted API, teams also have limited control over where and how the model runs.

For privacy-sensitive use cases, developers should carefully review data handling, retention, and compliance requirements before sending confidential business or customer data through the API.

Best for: coding assistants, enterprise AI, long-context analysis, document workflows, and AI agents.

For a detailed comparison of the two AI giants, check out our guide on Anthropic vs OpenAI.

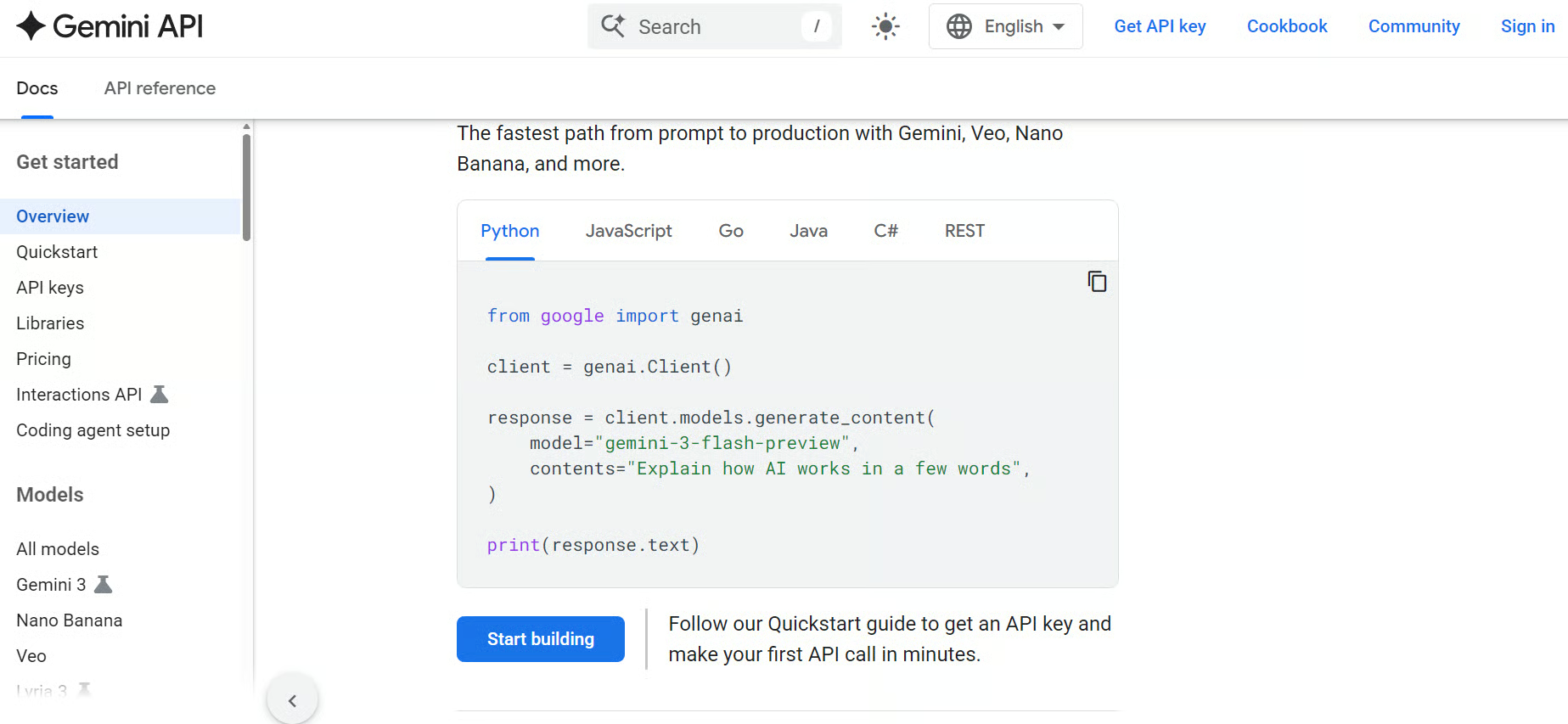

3. Google Gemini

Google Gemini is Google’s native LLM family, including Gemini 3.1 Pro, available through the Gemini API for developers. The Gemini API supports standard, streaming, and real-time APIs, with documentation for model details, SDKs, pricing, setup, and API references.

With Nano Banana 2 for image generation and Veo 3.1 integrated, Gemini is a strong option for developers building multimodal applications. It’s also a good choice for AI assistants (especially those relying on search experiences), coding tools, and products that connect with Google’s broader AI ecosystem.

Source: Gemini API

However, Gemini can feel more tied to Google’s ecosystem, which may not suit teams that want a provider-neutral setup. Pricing, model availability, and feature support can also vary across tools and regions.

For privacy-sensitive applications, teams should review data handling, retention, and compliance requirements before sending confidential business or customer data through the API.

Best for: multimodal AI apps, Google ecosystem integration, long-context workflows, coding, and general-purpose AI applications.

Open-Source LLM API Providers

Open-source LLM API providers give developers hosted API access to open-source and open-weight models. Instead of downloading models and running them on your own GPUs, these platforms host the models and make them available through simple APIs.

These providers are useful for teams that want lower costs, more model flexibility, faster experimentation, and access to popular open models such as Llama, DeepSeek, Qwen, Mistral, Gemma, and others.

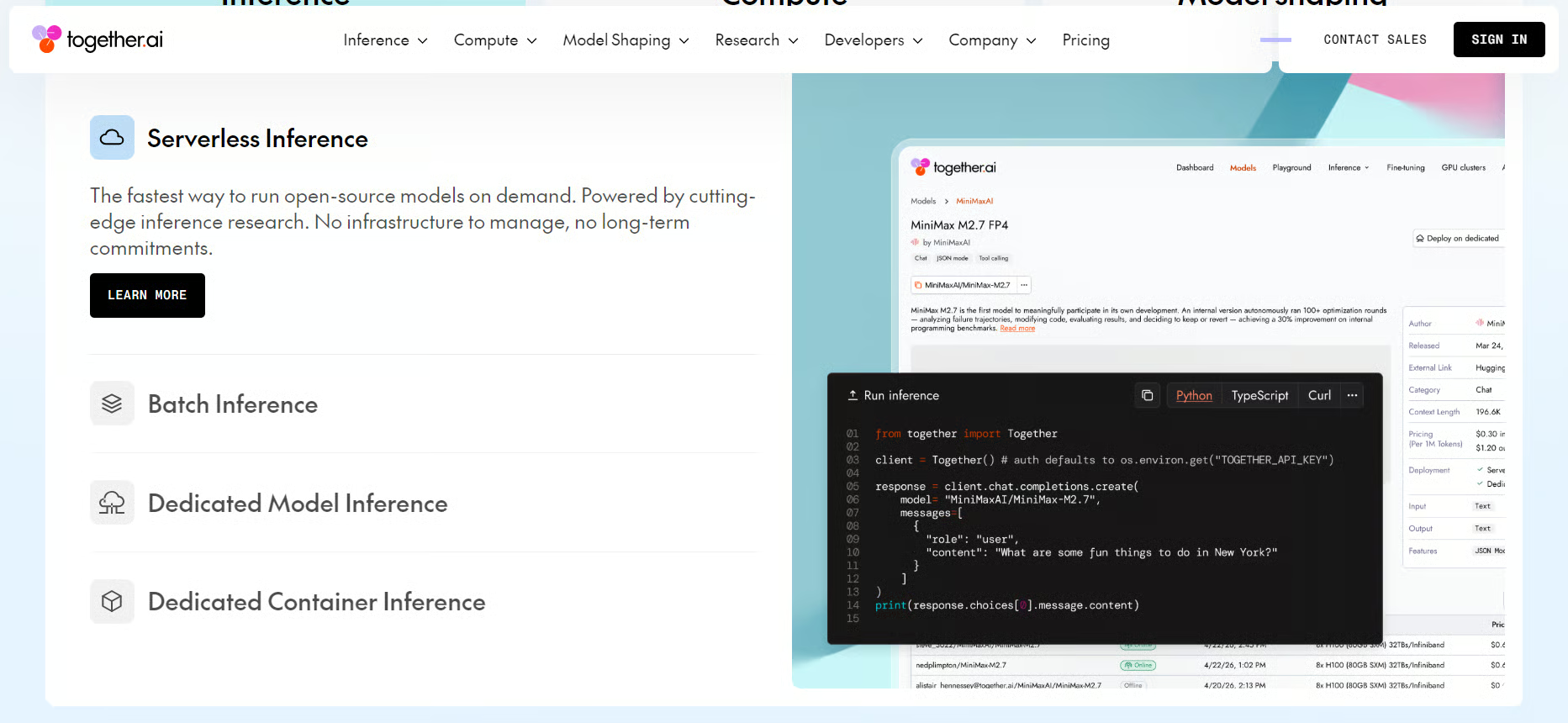

4. Together AI

Together AI provides hosted access to open-source and specialized models through a unified API. Its model catalog includes 200+ models across text, image, video, code, and audio, with support for serverless inference, batch inference, dedicated endpoints, fine-tuning, evaluations, and GPU clusters.

Together AI is a strong option for developers who want to build with open models without managing their own infrastructure. It is especially useful for teams testing multiple models, fine-tuning custom models, or scaling inference workloads.

Source: Together AI

That being said, model quality, speed, and reliability can vary depending on the model selected. Teams may need more testing, benchmarking, and evaluation before using it in production.

For sensitive workloads, teams should check whether they need serverless access, dedicated endpoints, or private deployment options to meet their security and compliance requirements.

Best for: open-source model inference, fine-tuning, scalable AI workloads, and experimentation across many models.

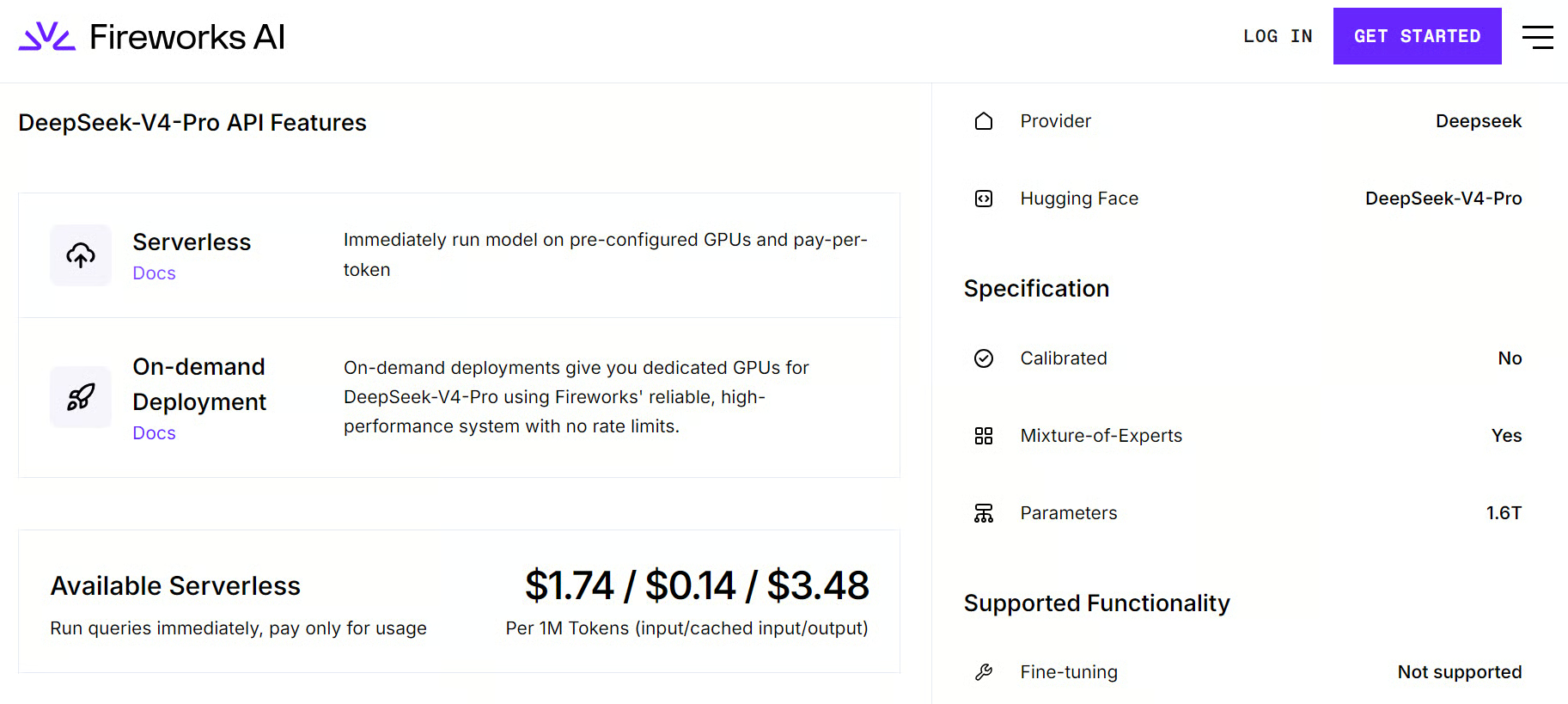

5. Fireworks AI

Fireworks AI focuses on fast inference for open-source LLMs and generative AI models. Its platform supports serverless inference, on-demand deployments, fine-tuning, and production-ready APIs for popular open models.

Fireworks AI is a strong option for teams that want open-model flexibility with faster inference and lower latency. It is especially useful for conversational AI, coding assistants, search, multimodal apps, and enterprise RAG systems.

Source: Fireworks AI

A key drawback is that Fireworks AI is more focused on inference and deployment than being a broad, all-in-one AI platform. Teams may still need separate tools for orchestration, evaluations, monitoring, or complex agent workflows.

Teams should also consider whether serverless inference is enough for sensitive workloads, or whether dedicated deployments are needed for stronger control over performance and compliance.

Best for: fast open-source model inference, fine-tuning, production AI apps, and low-latency open-model deployments.

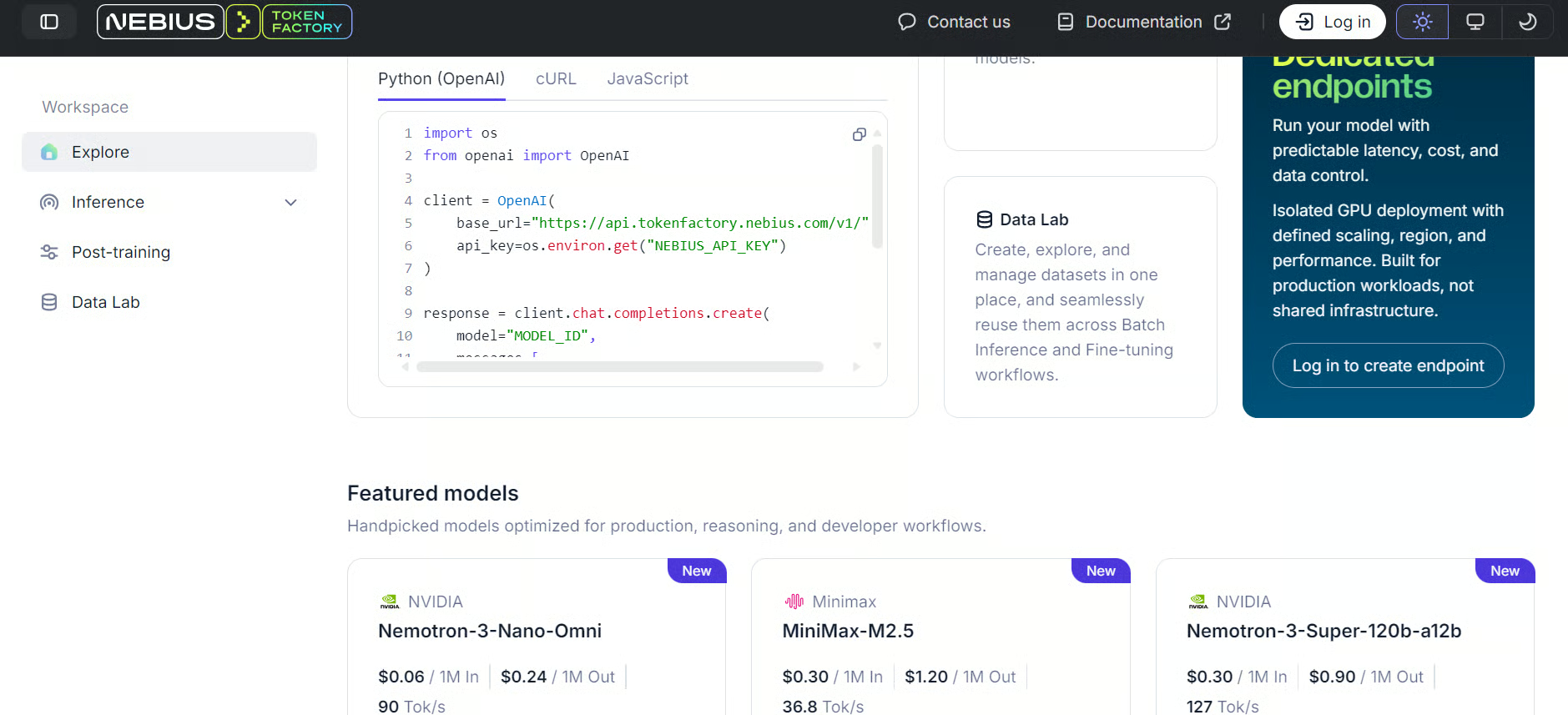

6. Nebius AI

Nebius AI is an AI cloud provider built for GPU-backed AI workloads, hosted inference, model serving, and scalable AI infrastructure. Its Token Factory inference service supports open models through an OpenAI-compatible API, with options for serverless inference, fine-tuning, and dedicated AI cloud infrastructure.

Nebius AI is a strong option for teams that want more infrastructure control than a basic API provider, while still avoiding the complexity of fully managing their own GPU environments. It is especially useful for teams building with open and custom models that need scalable compute, fast inference, and production-ready deployment options.

Source: Nebius Token Factory

Nebius Token Factory offers two inference speed flavors: Fast and Base. Fast is designed for lower-latency, interactive workloads, while Base is designed for more cost-efficient, high-volume inference or background processing.

Nebius is certainly more infrastructure-focused than simple plug-and-play LLM API platforms. This means that teams may need more cloud, deployment, and workload management knowledge to get the most value from it.

For sensitive workloads, teams should consider whether managed inference is enough or whether dedicated infrastructure is needed for stronger control over security, compliance, and data governance.

Best for: AI cloud infrastructure, hosted inference, GPU-backed workloads, fast inference, and teams that want more deployment control.

LLM Routing Providers

LLM routing providers give developers access to multiple models and providers through one API. Instead of integrating separately with OpenAI, Anthropic, Google, Mistral, DeepSeek, and other providers, developers can use one routing layer to manage model access from a single place.

These platforms are useful for model comparison, fallback routing, cost optimization, provider redundancy, observability, and switching between models without rewriting your application.

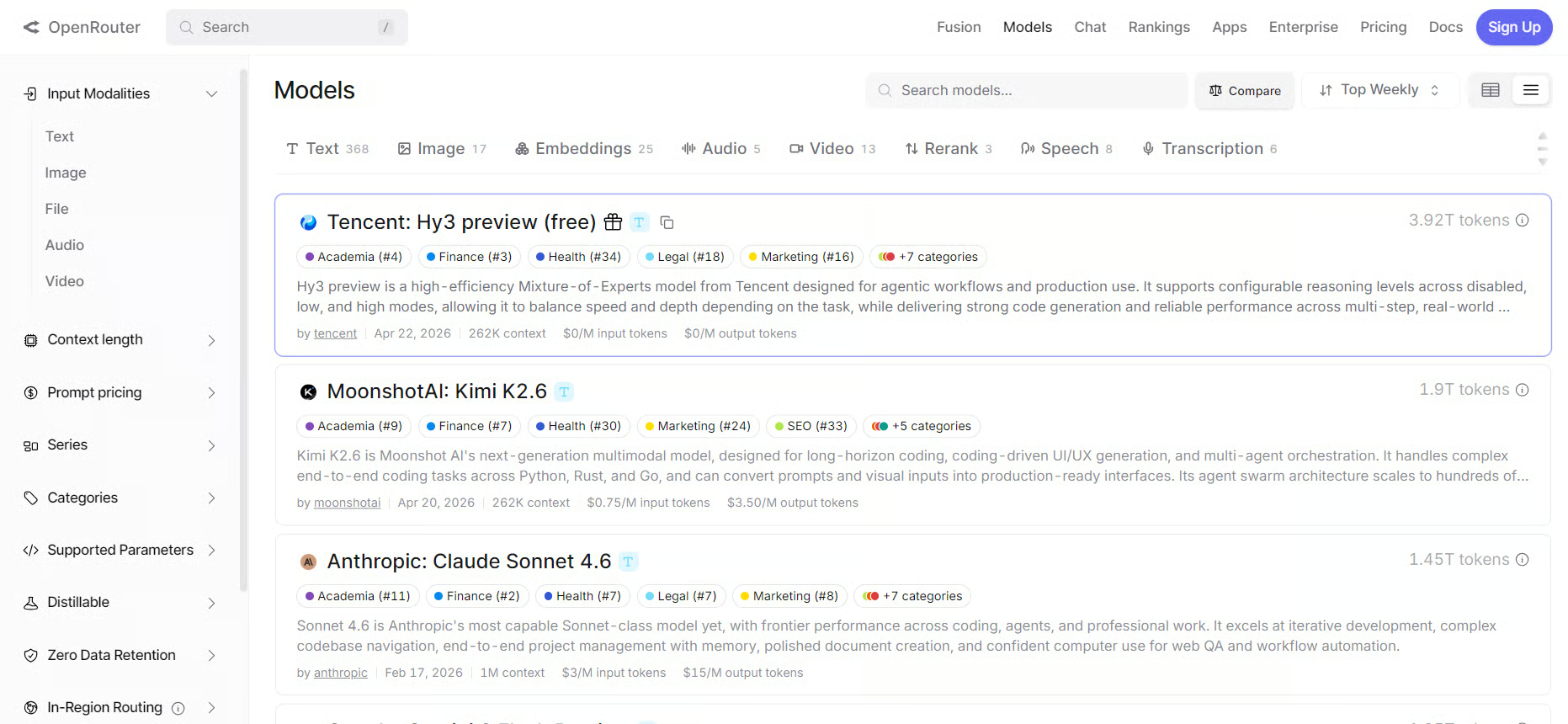

7. OpenRouter

OpenRouter is one of the most popular LLM routing providers. It gives developers access to many models through a single OpenAI-compatible API, making it easier to work with different providers using one integration.

As we have covered in several tutorials, OpenRouter is a strong option for developers who want to compare models, test new releases quickly, route requests across providers, or avoid being locked into one model vendor.

Source: OpenRouter

One drawback is that OpenRouter adds another layer between your application and the model provider. This can introduce extra dependency, variable latency, and provider-specific behavior that still needs testing.

For production workloads, teams should review routing settings, fallback behavior, provider preferences, and privacy controls before sending sensitive or business-critical traffic through the platform.

Best for: multi-model apps, model comparison, fallback routing, provider flexibility, and fast experimentation.

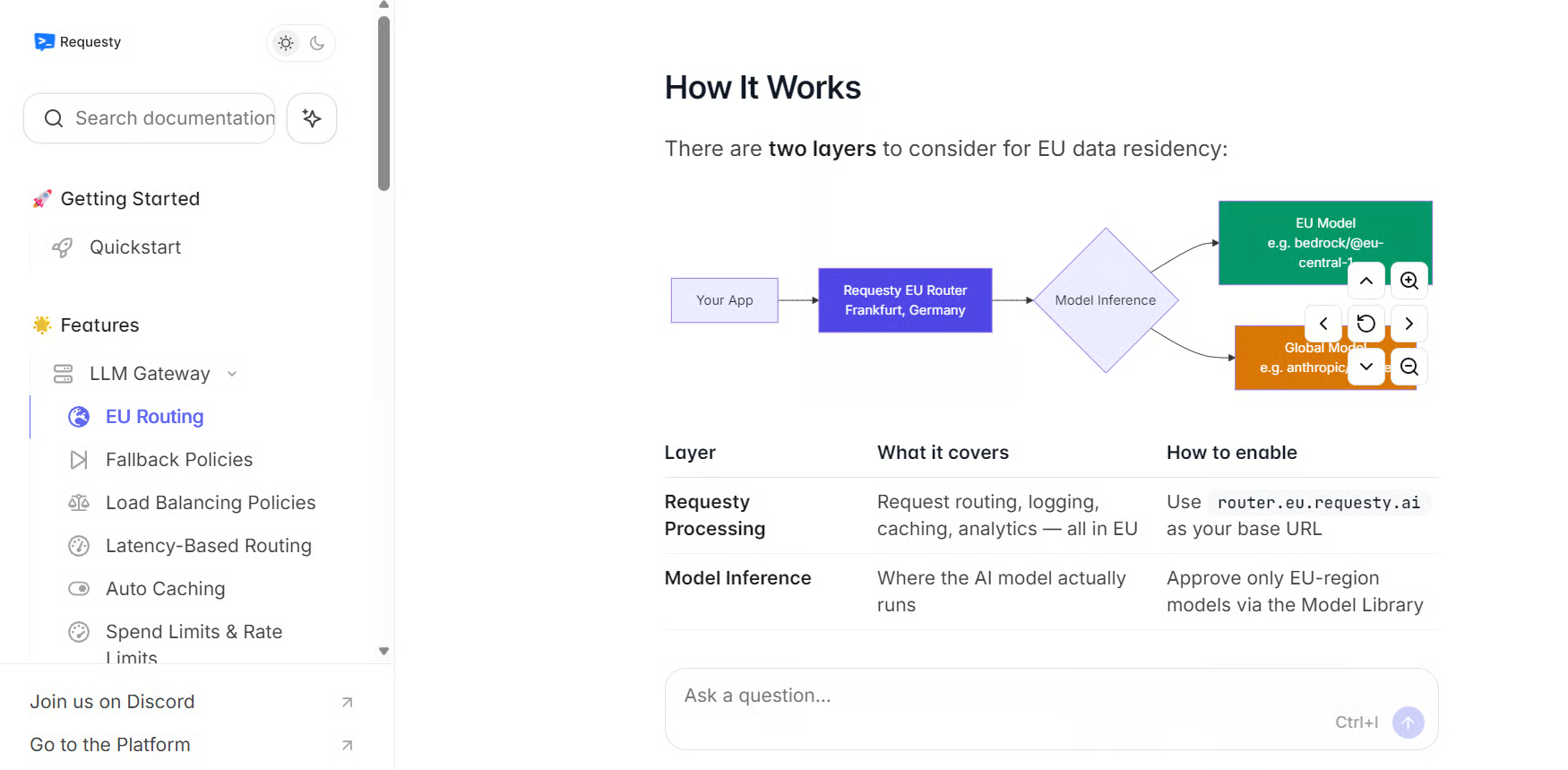

8. Requesty.ai

Requesty.ai is an LLM routing and gateway platform that helps teams connect to multiple LLM providers through one OpenAI-compatible API. It supports 400+ models, with features for routing, fallback, caching, cost management, observability, and governance.

Requesty.ai is a strong option for teams building production AI applications that rely on more than one model provider. It is especially useful for managing routing policies, tracking usage, controlling spend, and improving reliability when providers fail, time out, or hit rate limits.

Source: Requesty AI Documentation

Like for OpenRouter, the key drawback is that Requesty.ai adds another gateway layer to the application stack. Teams need to configure routing, fallback, logging, and governance carefully to avoid unexpected cost, latency, or provider behavior.

For sensitive workloads, Requesty.ai includes controls such as PII detection, scrubbing, content guardrails, audit logs, and governance features, but teams should still decide what data can pass through the gateway and how logs should be managed.

Best for: LLM routing, cost control, observability, provider fallback, governance, and production AI gateway workflows.

Cloud LLM Providers

Cloud LLM providers are large cloud platforms that give developers and enterprises managed access to foundation models. These platforms often support their own models as well as models from third-party providers.

They are especially useful for enterprises that already use cloud infrastructure and need security, governance, compliance, data integration, deployment controls, and model management in one place.

9. Google Vertex AI

Google Vertex AI is Google Cloud’s machine learning and generative AI platform. It gives developers and enterprises access to Gemini models, Model Garden, agent tools, and managed infrastructure for building, deploying, and managing AI applications.

Vertex AI also supports leading partners and open models through Model Garden, including Anthropic Claude, xAI Grok, Mistral AI models, and open-source models such as GLM 5 and Gemma 4, alongside Google’s own Gemini models.

Source: Generative AI on Vertex AI

Google Vertex AI is a strong option for teams that already use Google Cloud and want cloud-native model deployment, governance, security controls, and integration with Google’s broader data and AI ecosystem.

On the other hand, Vertex AI can be more complex than using a simple model API. Teams may need Google Cloud experience to manage projects, permissions, billing, deployment settings, and integrations properly.

For sensitive workloads, Vertex AI is useful for teams that need cloud-level access controls, governance, and managed infrastructure, but setup decisions around logging, data access, and deployment regions still need careful review.

Best for: Google Cloud users, Gemini access, enterprise AI apps, multimodal workflows, and cloud-native model deployment.

10. Amazon Bedrock

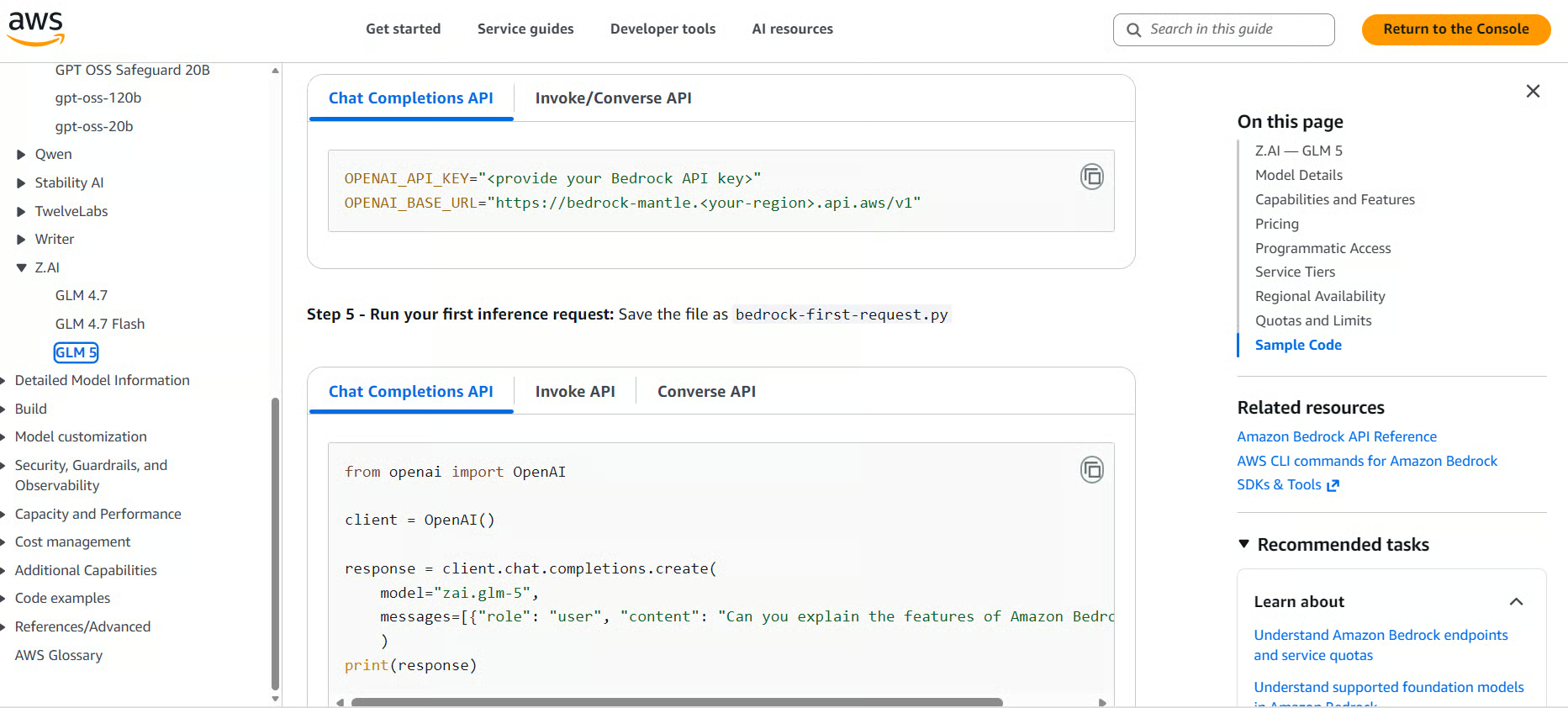

Amazon Bedrock is AWS’s managed generative AI platform. It gives developers access to foundation models from Amazon and third-party providers through one managed AWS service.

Bedrock supports models from providers such as Amazon, Anthropic, Meta, Mistral AI, Cohere, DeepSeek, and others, making it useful for teams that want model choice inside the AWS ecosystem.

Amazon Bedrock is a strong choice for companies already building on AWS because it brings model access, cloud infrastructure, security controls, and enterprise integration into one platform.

Source: Amazon Bedrock

A key drawback is that Bedrock can be more complex than a simple LLM API. Teams may need AWS experience to manage permissions, regions, model access, pricing, networking, and deployment settings properly.

For sensitive workloads, Bedrock is useful because prompts and outputs are not used to train base models or shared with model providers, and teams can use AWS controls such as encryption, IAM, and PrivateLink.

Best for: AWS users, enterprise AI, managed foundation model access, security-focused deployments, and cloud-native generative AI applications.

LLM API Providers Comparison Table

The table below compares the top LLM API providers based on their key advantages and main drawbacks.

|

Provider |

Key advantages |

Main drawbacks |

|

OpenAI |

Strong model quality, mature APIs, multimodal tools, structured outputs, embeddings, speech, image generation, and agentic workflows |

Can become expensive at scale and offers less control than open-source or self-hosted models |

|

Anthropic |

Strong writing quality, careful instruction-following, reasoning, coding, and long-context support |

Costs can rise with large documents or high-volume usage, and teams have limited control over hosting and model internals |

|

Google Gemini |

Strong multimodal capabilities, real-time APIs, long-context support, and close integration with Google’s AI ecosystem |

Can feel tied to Google’s ecosystem, and pricing, availability, and features may vary by tool or region |

|

Together AI |

Hosted access to many open and specialized models, with serverless inference, dedicated endpoints, fine-tuning, evaluations, and GPU infrastructure |

Model quality, speed, and reliability can vary depending on the model selected |

|

Fireworks AI |

Fast inference, low latency, serverless access, on-demand deployments, and production-ready APIs for open models |

More focused on inference and deployment than being a full all-in-one AI development platform |

|

Nebius AI |

GPU-backed infrastructure, hosted inference, OpenAI-compatible API, and speed tiers such as Fast and Base |

More infrastructure-focused, so teams may need stronger cloud and deployment knowledge |

|

OpenRouter |

Access to many models through one OpenAI-compatible API, with easier provider switching and fallback options |

Adds another layer between the app and provider, which can affect latency, reliability, or debugging |

|

Requesty.ai |

One API for multiple providers, with routing, fallback, caching, cost tracking, observability, and governance features |

Requires careful setup of routing, logging, fallback, and cost controls to avoid unexpected behavior |

|

Google Vertex AI |

Managed access to Gemini, Anthropic Claude, xAI Grok, Mistral AI, and open models through Model Garden, plus Google Cloud security and governance tools |

More complex than a simple API and may require Google Cloud experience |

|

Amazon Bedrock |

Managed access to models from Amazon and third-party providers such as Anthropic, Meta, Mistral AI, Cohere, and others inside AWS |

More complex than a basic LLM API, especially around permissions, regions, pricing, and model access |

Choosing the Best LLM API Provider For Your Use Case

Choosing the right LLM API provider depends on three factors:

- What you are building

- How much control you need

- How much you are willing to spend

For startups and small teams, open-source LLM API providers are often the best place to start. Platforms like Together AI, Fireworks AI, and Nebius AI give you access to powerful open models without managing GPUs or infrastructure yourself. They can be faster, cheaper, and more flexible for experimentation and early-stage product development.

If you want to test different models quickly, LLM routing providers are a strong option. Tools like OpenRouter and Requesty.ai let you compare closed-source and open-source models through one API, manage fallbacks, and switch providers without rewriting your application.

For workflows where model quality matters more than cost, native providers like OpenAI and Anthropic are still among the strongest choices. They are especially useful for production AI assistants, coding tools, reasoning-heavy workflows, multimodal apps, and agentic systems where reliability and model performance matter most.

Finally, if your company already runs on AWS or Google Cloud, it often makes sense to stay within that cloud ecosystem. Amazon Bedrock and Google Vertex AI give you managed model access, security controls, governance, and integration with the tools your team already uses.

Final Thoughts

For technical work, coding assistants, and vibe coding tools, I would not over-experiment at the start. In my experience, OpenAI and Anthropic are usually the safest choices because of their strong coding, reasoning, and developer tooling.

Open-source LLM API providers and LLM routing providers are strong alternatives for more advanced experimentation.

Big Cloud LLM providers usually only make sense in a corporate environment. In this case, go with the one whose ecosystem you are already using.

LLM API Provider FAQs

What is an LLM API provider?

A company or platform that hosts large language models and exposes them via an API, so developers can send requests and receive AI-generated responses without managing any GPU or inference infrastructure themselves.

What's the difference between a native LLM provider and a cloud LLM provider?

Native providers (like OpenAI and Anthropic) build and serve their own models directly. Cloud providers (like AWS Bedrock and Google Vertex AI) offer managed access to multiple third-party models bundled into an existing cloud ecosystem with enterprise security and governance tools.

When should I use an LLM routing provider like OpenRouter or Requesty.ai?

When you need to work with more than one model or provider, want automatic fallback if a provider goes down, or need to compare models and control costs, all through a single API integration.

Are open-source LLM API providers less capable than native providers like OpenAI?

Not necessarily; it depends on the task. Platforms like Together AI and Fireworks AI host state-of-the-art open models such as Llama, DeepSeek, and Mistral, which can be competitive with closed models, often at significantly lower cost.

Which LLM API provider is best for enterprises with strict compliance requirements?

Amazon Bedrock and Google Vertex AI are typically the strongest choices, as they offer cloud-native security controls, IAM, encryption, audit logging, and governance features that integrate with existing enterprise infrastructure.

As a certified data scientist, I am passionate about leveraging cutting-edge technology to create innovative machine learning applications. With a strong background in speech recognition, data analysis and reporting, MLOps, conversational AI, and NLP, I have honed my skills in developing intelligent systems that can make a real impact. In addition to my technical expertise, I am also a skilled communicator with a talent for distilling complex concepts into clear and concise language. As a result, I have become a sought-after blogger on data science, sharing my insights and experiences with a growing community of fellow data professionals. Currently, I am focusing on content creation and editing, working with large language models to develop powerful and engaging content that can help businesses and individuals alike make the most of their data.