Share this webinar

Close your data and AI skills gap

We're the only platform uniquely engineered to advance data and AI skills across your entire organization. Let's explore a tailored program.

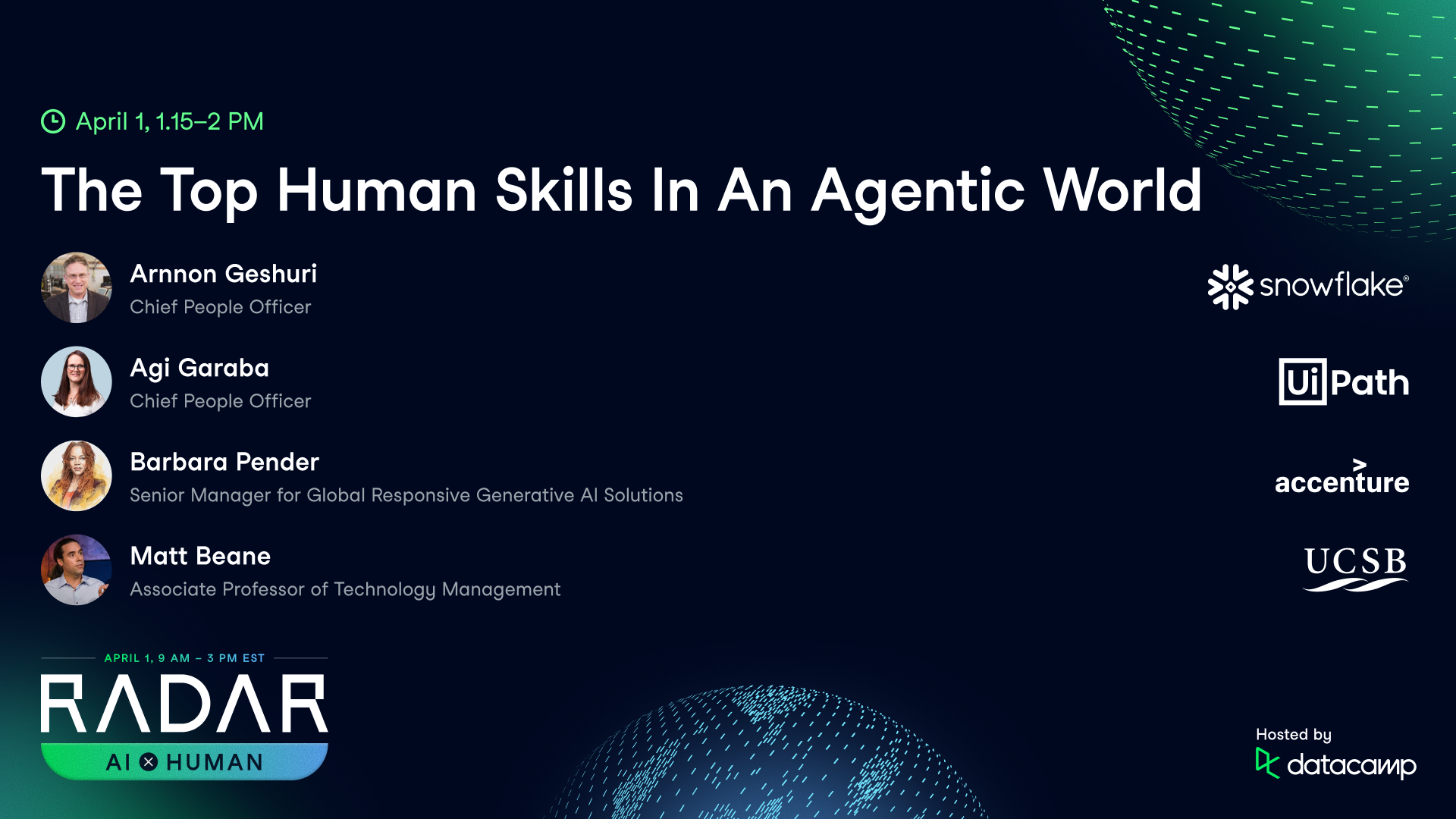

[RADAR AI x Human] The Top Human Skills In An Agentic World

April 2026

Your Presenter(s)

Arnnon Geshuri

Chief People Officer at Snowflake

Arnnon runs the people department at Snowflake, the AI data cloud platform. He has three decades of experience as a technology-focused people leader. Previously, Arnnon was CPO at Teladoc Health, and held senior HR positions at Tesla, Google, and Morgan Stanley.

Agi Garaba

Chief People Officer at UiPath

Agi runs the people department at UiPath, an AI automation company. She is an expert in technology talent acquisition and people strategy. Previously, Agi was VP of People at Vancity and COO at SAP Labs Canada.

Barbara Pender

Senior Manager for Global Responsive Generative AI Solutions

Barbara helps clients implement AI solutions. She has two decades of experience as an executive program manager, trainer and consultant with a focus on responsible AI. Barbara also runs the Blanton Techknowledge IT consultancy. Previously, she was a Principal Consultant at Gartner and a manager at United Airlines.

Matt Beane

Associate Professor of Technology Management at University of California, Santa Barbara

Matt researches how we build skill in work involving AI. He is the author of "The Skill Code". Matt is also a Digital Fellow with the Stanford Digital Economy Lab and MIT’s Initiative on the Digital Economy. Previously, Matt founded the Tacitly education startup.

Summary

A candid, practical conversation for people adapting to AI-driven change—whether they’re trying to protect a role, earn a promotion, or pivot into work that will still matter as automation and AI agents spread.

The central argument was less about “job loss” than job redesign: AI is already stripping out repetitive tasks and raising the premium on distinctly human contributions—judgment, accountability, and relationship-based work. Panelists debated which roles may evolve more slowly (teachers, nurses, some legal and policy jobs) and why accountability still tends to pull humans back “in the loop.” From there, the discussion turned to what employers are actually hiring for in an agentic era: curiosity you can show with real experiments, communication skills that translate into better prompting and clearer intent, and systems thinking—the ability to connect specialized work to a larger operating model. The panel also offered concrete learning strategies that fit into busy workdays, from deliberately attempting “impossible” tasks with the latest models to daily fifteen-minute audits of where AI could remove friction. Finally, the group addressed breaking into AI from both early- and mid-career vantage points, emphasizing adaptable career narratives, network-driven learning, and a growing opening for responsible AI as a durable, cross-disciplinary lane.

Key Takeaways:

- Most roles are evolving, not disappearing; AI is more likely to replace tasks (drafting, summarizing, scheduling, first-pass analysis) than entire jobs (managing, teaching, nursing, leading).

- The top human skills in an AI world are increasingly clear: curiosity (try tools and learn fast), communication (clear intent and prompts), judgment (decide what “good” is), and systems thinking (see second-order effects across teams and customers).

- Becoming AI fluent works best “in the flow of work”: small, consistent experiments (10–15 minutes a day) beat occasional marathon upskilling sessions.

- Human accountability still matters: decision-making domains like law and policy may adopt AI more cautiously because someone must own the decision, explain it, and take responsibility when it goes wrong.

- With abundant AI output, checking AI output quality (and protecting attention) becomes a career-defining skill: review loops, source checks, and knowing when not to use AI.

Deep Dives

1) From job replacement to job evolution: what “safe” really means

The conversation began with the question hanging over nearly every workplace: are any jobs safe from AI? The panel’s answer was a reframing. “I really see jobs as more evolving,” said Arnnon Geshuri, arguing that AI is most predictably applied where work is repetitive, standardized, or filled with “friction points.” That’s not reassurance so much as a diagnosis: job descriptions are being rewritten from the inside out, as routines are automated and human attention is redirected to higher-order decisions.

Still, the panel offered concrete examples where full automation remains unlikely in the near term. Barbara Pender pointed to teaching and nursing, and even musicians—roles where emotional intelligence and real-time human responsiveness are core to the work. The point wasn’t that these professions are untouched; it was that they are harder to fully substitute because the work includes tacit knowledge, embodied presence, and trust. Agi Garaba added another category: roles that require complex judgment and explicit accountability—judges, policy makers, certain legal functions—where an AI system may serve as a “sparring partner,” but the human remains responsible for the decision.

Matt Beane sharpened the economic logic. The real risk is highest where “your core tasks can be substituted for by AI,” and where increased efficiency does not expand demand. He used the Uber driver as a contemporary example, invoking Waymo’s scaling pace. The analogy to agriculture landed as a warning: automation may not eliminate a sector, but it can drastically shrink the number of people needed to do the work. That’s a different kind of disruption—one that shifts the competitive baseline, not just a few job titles.

The practical implication for viewers is to map your own role into tasks, then ask which are predictable, repeatable, and information-only. Those are most vulnerable to automation—and also the best targets for you to automate first, reclaiming time for the parts of the job where humans still outperform: judgment, empathy, negotiation, and the ability to carry responsibility when the answer is contested.

2) The human skill stack employers want: curiosity, communication, judgment, systems thinking

When the discussion turned from macro trends to hiring reality, the panelists—two chief people officers among them—described a “human skill stack” that sounds familiar but is taking on new meaning in an agentic AI world. Garaba emphasized systems thinking: the capacity to see how specialized work connects to the broader machine of an organization. In a workplace where tools can generate outputs quickly, disconnected excellence can be less valuable than integrated judgment. A candidate who can explain downstream consequences—how an automation affects customers, compliance, or a handoff to another team—has a structural advantage.

Curiosity came up repeatedly, but not as a slogan. Geshuri described how his recruiting team tries to test it: “we ask people, have you experimented?” The goal is to find proof that candidates are already trying new versions of tools, stress-testing them, and learning their limits. This is less about being an AI zealot than about showing adaptive behavior in a fast-moving environment—and it’s a key hiring signal for the skills needed in an agentic AI world.

Communication was treated as newly instrumental. As Geshuri put it, natural language interfaces are closing the gap between the technical and nontechnical, and the ability to express intent clearly has become operational—not merely interpersonal. Prompting is, in practice, a communication discipline: specifying context, constraints, tone, and success criteria well enough that a model can collaborate effectively. This is one reason humanities-trained workers may find more doors open than they expect; language competence is turning into interface competence.

Judgment, meanwhile, was the boundary condition—where the panel drew a line between using AI and outsourcing responsibility. Geshuri gave a vivid people-management example: you do not want an AI to “write” a promotion conversation or performance review in a way that erodes trust. Beane’s emphasis was complementary: as outputs become cheap and plentiful, organizations are starved for reliable evaluation. Deciding when to rely on AI, when to override it, and how to explain that decision to others becomes a durable form of professional capital.

In other words, technical fluency matters—but employers are also selecting for the ability to steer tools responsibly, communicate intent clearly, and understand the system you’re changing when you automate any single piece of work.

3) How to build these skills without “going back to school”: impossible tasks, daily friction hunts, learning in the flow

The panel’s most actionable advice was about learning mechanics—how people actually develop new capability while holding a full-time job. Beane advocated a deliberately visceral approach: take “the latest model,” give yourself an “impossible task” and a tight time box, and try anyway. The purpose is calibration. Many workers and executives alike still lack a felt sense of what these systems can do, where they fail, and what it takes to steer them. Without that personal experience, organizations drift into abstract debates—either inflated fear or inflated hype—rather than grounded experimentation. Practically, this can be run as an Impossible Task Sprint: pick one task you assume AI can’t do, time-box 30–60 minutes, iterate prompts, and write down what worked, what didn’t, and what human review was required.

Geshuri offered a complementary method that is easier to apply across a team: a daily, fifteen-minute scan for friction points. The question he asks is simple—what did you do today that could have been “augmented” or “amplified” by AI? This shifts learning away from a major reskilling program and toward habit formation. It also creates a portfolio of small wins: the task that once took six hours now takes minutes, freeing time for work that is more meaningful and less mechanical. He also emphasized reinforcement—sharing examples at all-hands meetings and acknowledging people who redesign their workflows well. As a repeatable routine, think of it as a Friction Point Audit (15 minutes a day): list one bottleneck, try AI on it, and keep the best prompt and checklist for next time.

Garaba grounded the same idea in a sports metaphor: consistency beats intensity. Waiting for a free weekend to “really learn AI” is a recipe for delay; micro-opportunities—messy, imperfect, daily—create compounding returns. Importantly, she framed this as learning “in the flow of work,” not as a separate, formal track. That distinction matters because the highest-leverage use cases tend to be specific to one’s context: the email backlog, the draft report, the planning document, the meeting notes.

Pender added a pragmatic warning against juggling too many tools. The market offers “more than 31” options, she noted, and the right starting point is simply what works for you. Her line was blunt: AI will not be spoon-fed to you; “it’s gonna find you, so you should go and find it first.” The meta-lesson across panelists is that skill-building now looks less like credentialing and more like iterative practice: trying, failing, refining prompts, learning where judgment must remain human, and building a repeatable personal workflow. A simple Quality Checklist helps: verify facts, check sources where possible, test edge cases, confirm tone/audience fit, and decide what must be approved by a human.

4) Breaking into AI (or pivoting mid-career): adaptable paths, responsible AI, and the new premium on quality

On career entry—especially for younger workers—the panel struck a note of realism about shifting ladders. Garaba urged early-career professionals not to stay “too attached” to a single, predetermined path. In an environment where junior roles may be reshaped or reduced, breadth becomes a hedge: learn widely, weave AI literacy into coursework and projects, and use conversations with people in the field to understand jobs that may not even exist yet. Networking came up as a practical tactic—not as favoritism, but as information infrastructure. When employers screen heavily for mindset traits like curiosity and learning agility, referrals and human context can help signal what’s hard to prove on a résumé. For students and new grads asking “how to break into AI with no experience,” the implied playbook is: ship small experiments, document what you learned, and show how you think.

Pender made a case for responsible AI as an accessible on-ramp and a durable specialization. Her argument was that every AI project—agents included—needs an “inclusive approach,” and that responsible AI cannot be tacked on at the end without risk. She described using a Jenga exercise to dramatize what happens when governance and stakeholder inclusion are treated as afterthoughts: the structure eventually collapses. For job seekers, this is also a strategic observation. Roles in responsible AI, risk, governance, and evaluation are often less saturated than model-building tracks, but increasingly central as AI moves into regulated or high-stakes domains. For people searching for “responsible AI jobs,” portfolio proof can be simple: create a lightweight risk checklist, run bias/quality tests on a sample workflow, and write a short memo explaining tradeoffs and mitigations in plain language.

For mid-career professionals, Beane’s framing was less about identity (“become an AI person”) and more about capability: attention and quality control. He argued that “the new problem for the economy is quality,” because AI can produce plausible work quickly while hiding subtle flaws. The differentiator becomes the ability to supervise AI output—starting tasks well, managing iterations, and “landing” work responsibly. In practice, that means developing a reliable review loop, learning what good looks like in your domain, and scaling your judgment without flooding colleagues with more output than they can absorb. For career switchers asking “how do I start or switch into an AI career,” this points to a practical path: combine your domain expertise with AI-assisted delivery, then show outcomes (time saved, error reduction, better decisions), not tool knowledge alone.

For viewers considering a pivot, the incentive is clear: watch how the panel connects hiring signals (curiosity, communication, judgment) to concrete daily behaviors. The path into AI is less a single credential than a track record—of experiments run, workflows redesigned, risks anticipated, and quality safeguarded when the tools are fast but imperfect.
