Making AI Work in Healthcare

March 2026

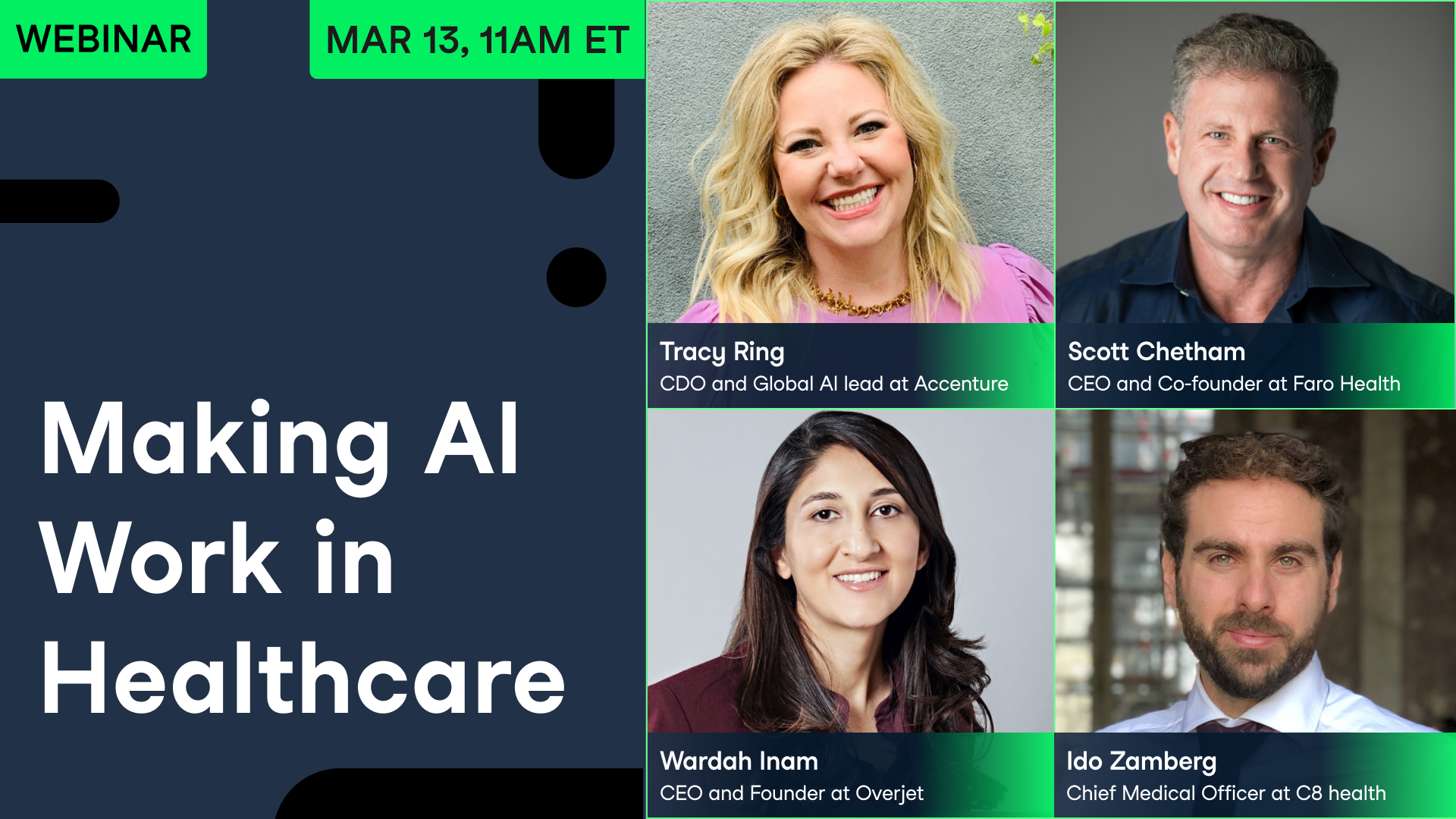

Your Presenter(s)

Tracy Ring

CDO and Global Generative AI lead for Life Sciences at Accenture

Tracy Ring leads Accenture’s Applied Intelligence Products Category Group. In this role she has leadership across Consumer and Industrial Products, Automotive, Life Sciences, Retail, and Aerospace and Defense. As the CDO and Global Generative AI lead for Life Sciences, she personally anchors the NA Applied Intelligence Life Sciences practice of more than 500 practitioners. Tracy has created solutions for Generative AI, data-led transformation, Artificial Intelligence, Data and Cloud Modernization, Analytics, and the organization and operating model strategies for next-generation adoption and AI fluency.

Scott Chetham

CEO and Co-founder at Faro Health

Scott runs Faro Health, an AI platform for accelerated clinical development. He has two decades of experience as a clinical research executive. Previously, Scott was Head of Clinical Operations at Verily Life Sciences, CTO at Intersection Medical, and a Venture Partner at Versant.

Wardah Inam

CEO and Founder at Overjet

Wardah runs Overjet, an AI-powered dentistry platform. Previously, she worked as a machine learning researcher focused on human physiology, including time at Q Bio. She holds a PhD from MIT.

Ido Zamberg

Chief Medical Officer at C8 Health

Summary

A project-style session for data and healthcare professionals who want to build, validate, and ship AI responsibly in one of the highest-stakes domains.

Healthcare AI is already embedded in daily clinical work—sometimes so smoothly that clinicians don’t realize a separate AI system is running in the background—yet that invisibility raises the bar for trust, transparency, and monitoring. The discussion moved from “coolest use cases” (stroke imaging support, multimodal longitudinal dentistry data, compressed clinical-trial design cycles, and brain-mapping research) to the constraints that determine whether systems succeed: HIPAA and related privacy requirements (including access controls, audit trails, and vendor BAAs), uneven data quality across institutions, and workflow variability by specialty and setting. Panelists emphasized that the biggest risks aren’t only headline-grabbing chatbot failures, but “silent” errors and drift that go unnoticed without continuous evaluation. In regulated environments, success depends on rigorous validation, strong guardrails (including retrieval-based approaches to limit hallucinations), and clear evidence that clinicians perform better with AI than without it—often the kind of evidence expected for FDA software as a medical device (SaMD) submissions and ongoing post-market monitoring. Just as important is adoption: organizations must retrain teams for augmented workflows and abandon the old “go-live, then forget” mindset in favor of product thinking, measurable outcomes, and continuous iteration. For anyone building in this space, the conversation makes a compelling case that domain expertise, careful scoping, and ongoing measurement—not novelty—are what ultimately make AI work in healthcare.

Key Takeaways:

- Trust and transparency are prerequisites for adoption in healthcare; “good enough” is rarely good enough when decisions affect patient outcomes.

- Data challenges are not just clinical—they’re institutional: different hospitals, workflows, and clinician intents can change what a “correct” answer looks like.

- Validation is the bulk of the work, and it doesn’t end at launch; monitoring for drift and edge cases is continuous, with clear metrics (e.g., sensitivity/specificity, precision/recall) and human review for high-risk outputs.

- Regulated paths (e.g., FDA software-as-a-medical-device expectations, quality management systems, and GxP-style documentation where applicable) can be an enabler, forcing proof that AI improves clinician performance.

- Winning teams pair domain experts with technical builders and adopt a product mindset—tight scoping, guardrails, and rapid iteration without skipping quality steps.

Deep Dives

1) AI that “disappears” into clinical care—and why that matters

Some of the most consequential healthcare AI is the least visible. Ido Zamberg described working in a stroke unit where AI-processed CT imaging was so integrated into the workflow that it felt like a native part of the scanner; only later did he learn it was a separate AI product. That detail is more than an anecdote. When AI fades into the background, it can reduce friction and speed decisions, but it also raises a basic question: how do clinicians calibrate trust in a tool they barely notice, especially when the stakes are high?

“The most impressive use is when you don't know it's even AI.” — Ido Zamberg

The panel’s “coolest use cases” covered the spectrum of how AI enters healthcare. Wardah Inam’s dentistry example focused on multimodal, longitudinal understanding, moving beyond single images toward a fuller picture of patient health over time. Scott Chetham brought the lens of clinical development: AI-assisted protocol design can compress weeks of cross-functional negotiation into an hour by automating research, surfacing tradeoffs, and documenting rationale. That compression is not just productivity; it’s a shift from opinion-driven stalemates toward more explicit, auditable decision-making that can support downstream compliance reviews. Tracy Ring pointed to frontier research (mapping the fruit fly brain in the FlyWire project) as a reminder that healthcare AI is also propelled by foundational science, not only enterprise workflows.

Yet the common thread wasn’t novelty. It was fit: AI earns its place when it slots into existing clinical and operational realities, and when teams can explain what the system is for (and what it is not for). That includes communicating appropriately to different users—patients, nurses, specialists—without flattening nuance, and being clear about when a clinician must override or confirm the output. One of the webinar’s recurring undercurrents is that healthcare AI cannot be designed as a generic “assistant” and dropped into a hospital; it must be built as a set of carefully scoped capabilities that match clinical intent, context, and accountability. If you want the full texture of how these use cases play out—and where they can mislead—the panel’s concrete examples are worth hearing in full.

2) Data realities: HIPAA, multimodal history, and institutional variability

Healthcare AI is constrained less by algorithmic imagination than by the stubbornness of data. Inam named an immediate barrier: “dealing with HIPAA data,” especially when the goal is to understand disease progression across time and demographics at scale. Healthcare data is both sensitive and structurally fragmented—split across systems, organizations, and formats, with governance requirements that can slow experimentation and complicate evaluation. In practice, teams have to decide early how they will handle de-identification, minimum-necessary access, consent constraints, retention rules, and vendor risk management—because those choices shape what can be built and how it can be validated.

But privacy is only half the story. Zamberg added a second dimension of variability: the hospital itself. Institutional knowledge—protocols, best practices, localized clinical guidance—differs widely, meaning an assistant that performs well in one setting may misfire in another unless it can ingest and reason over local context. Even the same question can mean different things depending on who asks it. An anesthesiologist asking about a drug may be concerned with pre-surgical timing and interactions; a cardiologist asking the same question may be focused on contraindications or comorbidities. That implies that “correctness” in healthcare is not purely factual; it’s also intent-aware and workflow-aware.

This variability also shapes how teams should think about model design. Large, general-purpose language models can be powerful, but they tend to regress to the mean of what they’ve seen. In clinical settings, “average” is often not safe. The panel’s discussion implicitly argues for architectures that can (a) control what knowledge is used (for example, retrieval over approved guidelines and local policies), (b) adapt outputs to user role and workflow, and (c) remain auditable—so a clinician can see where an answer came from, what sources were used, and decide whether it belongs in a decision chain.
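The three properties above (controlled knowledge, role-aware output, auditability) can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the document IDs, the "drug x" texts, the role names, and the naive word-overlap scoring are hypothetical stand-ins, not anything the panelists described building. The sketch stops at assembling sourced context and deliberately does not call a language model.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    roles: FrozenSet[str]  # roles this approved document is written for


# Hypothetical approved corpus: only these documents may inform an answer.
APPROVED: List[Document] = [
    Document("local-anes-012",
             "drug x timing before surgery and interactions",
             frozenset({"anesthesiologist"})),
    Document("local-card-044",
             "drug x contraindications and comorbidities",
             frozenset({"cardiologist"})),
]


def tokenize(text: str) -> Set[str]:
    return set(text.lower().split())


def retrieve(question: str, role: str, corpus: List[Document],
             min_overlap: int = 2) -> List[Document]:
    """Return approved documents for this role, best word overlap first."""
    query = tokenize(question)
    scored = []
    for doc in corpus:
        if role not in doc.roles:
            continue  # role-aware: skip guidance written for other roles
        overlap = len(query & tokenize(doc.text))
        if overlap >= min_overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]


def build_context(question: str, role: str,
                  corpus: List[Document] = APPROVED) -> Dict[str, object]:
    """Assemble sourced context, or refuse when no approved source matches."""
    docs = retrieve(question, role, corpus)
    if not docs:
        return {"status": "no_approved_source", "sources": [], "context": []}
    return {
        "status": "ok",
        "sources": [d.doc_id for d in docs],  # auditable: IDs shown to user
        "context": [d.text for d in docs],
    }


question = "when to stop drug x before surgery"
for role in ("anesthesiologist", "cardiologist", "nurse"):
    result = build_context(question, role)
    print(role, result["status"], result["sources"])
```

In this toy setup the same question yields different sources for the anesthesiologist and the cardiologist, and a role with no approved guidance gets an explicit refusal rather than a guess. A production system would replace the word-overlap scoring with a proper retrieval method and add access controls.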

For practitioners, the implication is sobering: data work is not an early-phase nuisance that disappears once a model is trained. It is an ongoing product requirement. The more healthcare AI aims to be longitudinal, multimodal, and context-sensitive, the more it must be engineered around data provenance, access rules, and institutional differences. The webinar’s real value here is not abstract caution, but practical examples of why “just use the data” is rarely an option in healthcare.

3) Validation, guardrails, and regulation: what “trustworthy” looks like in practice

Across panelists, validation emerged as the center of gravity—less a milestone than a permanent operating mode. Chetham made the point bluntly: building agents is often the easier part; proving they behave safely, reliably, and consistently is the long game. For high-stakes clinical tasks, that proof has to translate into concrete evaluation plans: clear intended use, defined cohorts, explicit acceptance criteria, and metrics that match clinical risk (often prioritizing sensitivity/NPV for “rule-out” scenarios and precision/PPV for alerting scenarios).
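As a rough illustration of matching metrics to clinical risk, the sketch below computes sensitivity, specificity, PPV, and NPV from confusion-matrix counts and checks them against acceptance criteria. The counts and thresholds are made-up values chosen for the example; real acceptance criteria would come from the intended use and clinical risk assessment.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # true positives
    fp: int  # false positives
    tn: int  # true negatives
    fn: int  # false negatives


def _ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None if the denominator is zero."""
    return numerator / denominator if denominator else None


def clinical_metrics(c: ConfusionCounts) -> Dict[str, Optional[float]]:
    return {
        "sensitivity": _ratio(c.tp, c.tp + c.fn),  # also called recall
        "specificity": _ratio(c.tn, c.tn + c.fp),
        "ppv": _ratio(c.tp, c.tp + c.fp),          # also called precision
        "npv": _ratio(c.tn, c.tn + c.fn),
    }


def meets_criteria(metrics: Dict[str, Optional[float]],
                   criteria: Dict[str, float]) -> bool:
    """True only if every named metric is defined and at or above its floor."""
    return all(
        metrics[name] is not None and metrics[name] >= floor
        for name, floor in criteria.items()
    )


# Illustrative counts and thresholds (assumptions, not from the webinar).
counts = ConfusionCounts(tp=90, fp=30, tn=870, fn=10)
metrics = clinical_metrics(counts)

RULE_OUT_CRITERIA = {"sensitivity": 0.90, "npv": 0.98}  # missing disease is costly
ALERT_CRITERIA = {"ppv": 0.80}                          # false alarms are costly

print(metrics)
print("rule-out use acceptable:", meets_criteria(metrics, RULE_OUT_CRITERIA))
print("alerting use acceptable:", meets_criteria(metrics, ALERT_CRITERIA))
```

With these example counts the same model clears the rule-out criteria but fails the alerting criteria, which is why the intended use has to be fixed before the metrics are chosen.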

“Validation work is really two thirds of it, and it never stops.” — Scott Chetham

In regulated healthcare software, Wardah Inam described a two-part standard that is both intuitive and demanding: first, show that the AI performs as well as or better than clinicians on a defined ground truth; second, show that clinicians using AI outperform clinicians without it. That framing pulls the conversation away from “Is the model smart?” and toward “Does the system improve care when embedded in real workflows?” It also clarifies why evaluation design matters as much as model design: establishing ground truth requires multiple clinicians, structured annotation, and study design elements such as washout periods to reduce recall effects. It’s also where the work starts to resemble what FDA SaMD submissions expect: documentation, change control, and evidence that performance holds across sites, subpopulations, and edge cases.
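The second half of that standard can be sketched as a paired comparison: each reader reads the same cases unaided and, after a washout period, aided by AI. The toy example below builds ground truth by majority vote among annotators and reports each reader's change in accuracy. All case labels and reads are invented for illustration, and accuracy with a simple mean is an assumed simplification; a real reader study would use a pre-specified statistical analysis.

```python
from collections import Counter
from statistics import mean
from typing import Dict, List, Optional


def majority_label(votes: List[int]) -> Optional[int]:
    """Most common annotator label; None on a tie (case needs adjudication)."""
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


def accuracy(reads: Dict[str, int], truth: Dict[str, Optional[int]]) -> float:
    """Share of adjudicated cases where the read matches ground truth."""
    scored = [reads[case] == label
              for case, label in truth.items() if label is not None]
    if not scored:
        raise ValueError("no cases with established ground truth")
    return sum(scored) / len(scored)


# Hypothetical annotations: several clinicians label each case (1 = finding).
annotations = {
    "case1": [1, 1, 0],
    "case2": [0, 0, 0],
    "case3": [1, 1, 1],
    "case4": [1, 0],  # tie: excluded until adjudicated
}
truth = {case: majority_label(votes) for case, votes in annotations.items()}

# Hypothetical reads of the same cases, before and after the washout period.
unaided = {
    "reader_a": {"case1": 0, "case2": 0, "case3": 1, "case4": 1},
    "reader_b": {"case1": 1, "case2": 1, "case3": 0, "case4": 0},
}
aided = {
    "reader_a": {"case1": 1, "case2": 0, "case3": 1, "case4": 1},
    "reader_b": {"case1": 1, "case2": 0, "case3": 1, "case4": 0},
}

deltas = {
    reader: accuracy(aided[reader], truth) - accuracy(unaided[reader], truth)
    for reader in unaided
}
print(deltas)
print("mean improvement:", mean(deltas.values()))
```

Pairing each reader with themselves is the design choice that matters here: it separates the effect of the AI from differences in skill between readers.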

“Clinicians when using AI are better than without using AI.” — Wardah Inam

The panel also addressed failure modes that standard demos rarely capture. Hallucinations and bias are not abstract concerns: Chetham noted how training on historical clinical trial protocols can reproduce inadvertent exclusion patterns—bias baked into prior practice rather than anyone’s intent. Zamberg discussed limiting hallucinations by restricting the knowledge available to the model (a retrieval-focused approach) and by increasing transparency—so users can see sources and rationale rather than receiving opaque assertions. Several comments pointed back to a practical theme: guardrails are not only prompt rules; they include data controls, allowed actions, fallback behaviors, human-in-the-loop escalation, and logging that supports audits and incident review.
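To make the point that guardrails go beyond prompt rules, here is a minimal sketch of a release gate that combines an action allowlist, a fallback when no source is cited, human-in-the-loop escalation, and an audit log entry for every decision. The action names, decision labels, and log fields are hypothetical and chosen only for the example.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Hypothetical policy: what the agent may do, and what always needs review.
ALLOWED_ACTIONS = {"summarize_guideline", "draft_patient_message"}
HUMAN_REVIEW_ACTIONS = {"draft_patient_message"}


@dataclass
class Proposal:
    action: str                # what the agent wants to do
    text: str                  # the generated output
    sources: List[str] = field(default_factory=list)  # cited document IDs


AUDIT_LOG: List[str] = []      # stand-in for durable, append-only storage


def gate(proposal: Proposal, user_id: str) -> str:
    """Decide what happens to an agent output and log the decision."""
    if proposal.action not in ALLOWED_ACTIONS:
        decision = "blocked"                # action is outside the allowlist
    elif not proposal.sources:
        decision = "fallback_no_source"     # do not show unsourced output
    elif proposal.action in HUMAN_REVIEW_ACTIONS:
        decision = "needs_human_review"     # escalate before release
    else:
        decision = "released"
    AUDIT_LOG.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "action": proposal.action,
        "sources": proposal.sources,
        "decision": decision,
    }))
    return decision


print(gate(Proposal("order_medication", "...", ["g1"]), "u1"))
print(gate(Proposal("summarize_guideline", "...", []), "u1"))
print(gate(Proposal("draft_patient_message", "...", ["g1"]), "u1"))
print(gate(Proposal("summarize_guideline", "...", ["g1"]), "u1"))
```

Logging blocked and fallback decisions alongside released ones is intentional: incident review needs to see what the system refused to do, not only what it did.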

Regulation, in this telling, is not merely a hurdle; it is a forcing function for rigor. Tracy Ring emphasized that many life sciences organizations already operate in validated environments with established documentation and process discipline (for example, QMS and GxP-style validation where required). The difficult shift is not learning what compliance is, but applying it to systems that drift, update frequently, and need continuous monitoring, including clear plans for retraining, versioning, and post-deployment performance checks. If you’re deciding what “responsible AI” actually entails beyond checklists, the webinar’s emphasis on evaluation frameworks, guardrails, and post-deployment monitoring provides the clearest guide.
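One simple form of post-deployment performance check is a rolling-window monitor that compares recent sensitivity against the validated baseline. The sketch below is a toy version under stated assumptions: the baseline, tolerance, and window size are invented numbers, and it presumes that confirmed outcomes eventually become available for comparison.

```python
from collections import deque
from typing import Optional


class SensitivityMonitor:
    """Track sensitivity over the most recent confirmed-positive cases."""

    def __init__(self, baseline: float, tolerance: float, window: int) -> None:
        self.baseline = baseline      # sensitivity measured at validation
        self.tolerance = tolerance    # allowed drop before flagging drift
        self.outcomes = deque(maxlen=window)  # True = model caught the case

    def record(self, predicted_positive: bool, confirmed_positive: bool) -> None:
        # Only confirmed positives contribute to sensitivity.
        if confirmed_positive:
            self.outcomes.append(predicted_positive)

    def sensitivity(self) -> Optional[float]:
        # Wait for a full window so a few early cases cannot trigger alarms.
        if len(self.outcomes) < self.outcomes.maxlen:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        current = self.sensitivity()
        return current is not None and current < self.baseline - self.tolerance


# Illustrative values (assumptions): baseline 0.90, flag below 0.85.
monitor = SensitivityMonitor(baseline=0.90, tolerance=0.05, window=20)

for caught in [True] * 19 + [False]:
    monitor.record(predicted_positive=caught, confirmed_positive=True)
print(monitor.sensitivity(), monitor.drifted())

for _ in range(4):  # a run of missed cases enters the window
    monitor.record(predicted_positive=False, confirmed_positive=True)
print(monitor.sensitivity(), monitor.drifted())
```

A check like this catches the "silent" degradation the panel warned about only if someone owns the alert; in practice it would be tied to a model version and a documented response plan.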

4) Adoption at scale: product mindset, workflow fit, and the new healthcare AI skill set

Even high-performing models fail if people don’t use them—or if organizations deploy them with the wrong mental model. Tracy Ring described a common failure pattern: treating AI like legacy enterprise software, where a “go live” marks the end of intense work and the start of light support. With AI, especially agentic systems, that lifecycle breaks down because models change, workflows evolve, and performance can degrade silently unless teams monitor drift and feedback in production.

“‘Go live’ is sort of an idea of the past.” — Tracy Ring

Her remedy is a product mindset: continuous improvement, horizon scanning, and a willingness to deprecate internally built capabilities when off-the-shelf tools catch up. That can be culturally difficult—teams are naturally “precious” about what they’ve built—but it may be essential for keeping pace as model capabilities commoditize. It also aligns with how regulated teams already work: defined ownership, documented changes, and clear accountability when a model update affects risk.

Adoption also runs into human concerns that are easy to underplay: anxiety about job security, and uncertainty about what an “augmented” role entails. Ring noted that education systems often train for older workflows, leaving professionals unprepared for a job where AI does the first 70% and humans are responsible for the final 30%—the part that requires judgment, context, and accountability. Chetham added a practical lesson from building agents: the best way to move fast is to spend more time upfront on scoping and guardrails, because retrofitting constraints after deployment is costly and can create “uncontrolled chains” of agent behavior. In healthcare settings, that upfront work also reduces downstream compliance risk and makes it easier to defend decisions to QA, privacy, and clinical leadership.

On skills, the panel converged on a hybrid answer. Technical ability is becoming more accessible—low-code and natural-language tooling is improving rapidly—but domain expertise and system-level thinking remain differentiators. Teams increasingly blend software engineers, data scientists, clinicians, and subject-matter experts; in some roles, entry points like clinical labeling can become pathways into deeper AI product work. The webinar’s subtext is clear: healthcare AI is not a contest of clever prompts. It is a discipline of workflow design, measurement, and change management—best understood by listening to builders who live with the constraints every day.
